- 🍨 This post is a study log from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊

Set up the GPU

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    gpu0 = gpus[0]  # If several GPUs are present, use only GPU 0
    tf.config.experimental.set_memory_growth(gpu0, True)  # Allocate GPU memory on demand
    tf.config.set_visible_devices([gpu0], "GPU")

Import the data

import os, pathlib

import PIL.Image  # import the submodule explicitly so PIL.Image.open is always available

import matplotlib.pyplot as plt

import numpy as np

from tensorflow import keras

from tensorflow.keras import layers,models

data_dir = r"C:\Users\11054\Desktop\kLearning\p1_data"

data_dir = pathlib.Path(data_dir)

image_count = len(list(data_dir.glob('*/*.jpg')))

print("Total number of images:", image_count)

Total number of images: 1125

# Preview the first image of the 'sunrise' class
sunrise_images = list(data_dir.glob('sunrise/*.jpg'))

PIL.Image.open(str(sunrise_images[0]))
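The glob pattern `'*/*.jpg'` used above matches every `.jpg` file exactly one directory below `data_dir`, so each match's parent folder name is its class. A minimal sketch of counting images per class with the same pattern follows; the helper `count_images_per_class` and the miniature temporary dataset are hypothetical and only serve the illustration.

```python
import pathlib
import tempfile
from collections import Counter


def count_images_per_class(data_dir, pattern="*/*.jpg"):
    """Count files matching `pattern`, grouped by parent folder name."""
    data_dir = pathlib.Path(data_dir)
    return Counter(path.parent.name for path in data_dir.glob(pattern))


# Hypothetical miniature dataset: two class folders with empty .jpg files.
with tempfile.TemporaryDirectory() as tmp:
    root = pathlib.Path(tmp)
    for class_name, n_files in [("cloudy", 2), ("rain", 1)]:
        (root / class_name).mkdir()
        for i in range(n_files):
            (root / class_name / f"{i}.jpg").touch()
    counts = count_images_per_class(root)

print(dict(counts))
print("total:", sum(counts.values()))
```

Pointing the same helper at the real `data_dir` would show how the 1125 images are distributed over the class folders.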

Load the data

batch_size = 32

img_height = 180

img_width = 180

"""
For a detailed introduction to image_dataset_from_directory(), see: https://mtyjkh.blog.csdn.net/article/details/117018789
"""

train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="training",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 1125 files belonging to 4 classes.

Using 900 files for training.

"""
For a detailed introduction to image_dataset_from_directory(), see: https://mtyjkh.blog.csdn.net/article/details/117018789
"""

val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="validation",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 1125 files belonging to 4 classes.

Using 225 files for validation.
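The file counts reported above follow from `validation_split=0.2`, and together with `batch_size = 32` they also explain the `29/29` steps per epoch that appear in the training log. The sketch below reproduces that arithmetic; the rounding rule (validation gets `int(total * split)`, training gets the rest) is an assumption that happens to match the printed numbers.

```python
import math

total_images = 1125       # "Found 1125 files belonging to 4 classes."
validation_split = 0.2
batch_size = 32

# Assumption: validation takes int(total * split) files, training takes the rest.
num_val = int(total_images * validation_split)
num_train = total_images - num_val

# The last batch may be smaller than batch_size, so round up.
train_batches = math.ceil(num_train / batch_size)
val_batches = math.ceil(num_val / batch_size)

print(num_train, num_val)          # 900 225
print(train_batches, val_batches)  # 29 8
```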

class_names = train_ds.class_names

print(class_names)

['cloudy', 'rain', 'shine', 'sunrise']

plt.figure(figsize=(20, 10))

# Show the first 20 images of one training batch with their class names
for images, labels in train_ds.take(1):
    for i in range(20):
        ax = plt.subplot(5, 10, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        plt.title(class_names[labels[i]])
        plt.axis("off")

Check the data

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

(32, 180, 180, 3)

(32,)

Configure the dataset

AUTOTUNE = tf.data.AUTOTUNE

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)

val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

Build the network

num_classes = 4

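"""
If the convolution arithmetic is unclear, see: https://blog.csdn.net/qq_38251616/article/details/114278995

layers.Dropout(0.3) helps prevent overfitting and improves the model's ability to generalize.
In the previous post on flower recognition, the large gap between training accuracy and validation accuracy was caused by overfitting.
For more on the Dropout layer, see: https://mtyjkh.blog.csdn.net/article/details/115826689
"""

model = models.Sequential([
    layers.Conv2D(16, (3, 3), activation='relu', input_shape=(img_height, img_width, 3)),  # Conv layer 1, 3x3 kernel
    layers.AveragePooling2D((2, 2)),               # Pooling layer 1, 2x2 downsampling
    layers.Conv2D(32, (3, 3), activation='relu'),  # Conv layer 2, 3x3 kernel
    layers.AveragePooling2D((2, 2)),               # Pooling layer 2, 2x2 downsampling
    layers.Conv2D(64, (3, 3), activation='relu'),  # Conv layer 3, 3x3 kernel
    layers.Dropout(0.3),                           # Randomly drops units during training to reduce overfitting
    layers.Flatten(),                              # Flatten layer, connects the conv layers to the dense layers
    layers.Dense(128, activation='relu'),          # Fully connected layer, further feature extraction
    layers.Dense(num_classes)                      # Output layer, one logit per class
])

model.summary()  # Print the network structure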

C:\Users\11054\.conda\envs\py311\Lib\site-packages\keras\src\layers\convolutional\base_conv.py:107: UserWarning: Do not pass an `input_shape`/`input_dim` argument to a layer. When using Sequential models, prefer using an `Input(shape)` object as the first layer in the model instead.

super().__init__(activity_regularizer=activity_regularizer, **kwargs)

Model: "sequential"

┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━┓
┃ Layer (type)                         ┃ Output Shape                ┃         Param # ┃
┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━┩
│ conv2d (Conv2D)                      │ (None, 178, 178, 16)        │             448 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ average_pooling2d (AveragePooling2D) │ (None, 89, 89, 16)          │               0 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ conv2d_1 (Conv2D)                    │ (None, 87, 87, 32)          │           4,640 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ average_pooling2d_1                  │ (None, 43, 43, 32)          │               0 │
│ (AveragePooling2D)                   │                             │                 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ conv2d_2 (Conv2D)                    │ (None, 41, 41, 64)          │          18,496 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ dropout (Dropout)                    │ (None, 41, 41, 64)          │               0 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ flatten (Flatten)                    │ (None, 107584)              │               0 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ dense (Dense)                        │ (None, 128)                 │      13,770,880 │
├──────────────────────────────────────┼─────────────────────────────┼─────────────────┤
│ dense_1 (Dense)                      │ (None, 4)                   │             516 │
└──────────────────────────────────────┴─────────────────────────────┴─────────────────┘

Total params: 13,794,980 (52.62 MB)

Trainable params: 13,794,980 (52.62 MB)

Non-trainable params: 0 (0.00 B)
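The output shapes and parameter counts in the summary can be checked by hand. The sketch below assumes what the layer definitions imply by default: 3x3 kernels with stride 1 and no padding, non-overlapping 2x2 pooling, and one bias per output channel or unit. The helper names are hypothetical.

```python
def conv_out(size, kernel=3):
    # No padding, stride 1: each side shrinks by kernel - 1.
    return size - kernel + 1


def pool_out(size, pool=2):
    # Non-overlapping pooling: integer division drops the leftover row/column.
    return size // pool


def conv_params(kernel, in_channels, out_channels):
    # kernel*kernel*in_channels weights per filter, plus one bias per filter.
    return kernel * kernel * in_channels * out_channels + out_channels


def dense_params(in_units, out_units):
    return in_units * out_units + out_units


size = 180
size = conv_out(size)    # 178
size = pool_out(size)    # 89
size = conv_out(size)    # 87
size = pool_out(size)    # 43
size = conv_out(size)    # 41
flat = size * size * 64  # 107584 features after Flatten

params = [
    conv_params(3, 3, 16),    # conv2d
    conv_params(3, 16, 32),   # conv2d_1
    conv_params(3, 32, 64),   # conv2d_2
    dense_params(flat, 128),  # dense
    dense_params(128, 4),     # dense_1
]
total = sum(params)

print(params)  # [448, 4640, 18496, 13770880, 516]
print(total)   # 13794980
print(round(total * 4 / 1024**2, 2), "MB as float32")  # 52.62 MB as float32
```

Almost all of the parameters sit in the first Dense layer, because Flatten hands it 107,584 inputs.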

Compile

# Set the optimizer
opt = tf.keras.optimizers.Adam(learning_rate=0.001)

model.compile(optimizer=opt,
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
              metrics=['accuracy'])
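`from_logits=True` is needed because the output layer `layers.Dense(num_classes)` has no softmax, so the model emits raw scores and the loss must apply the softmax itself. The NumPy sketch below shows what that computation amounts to for integer labels, averaged over the batch. It is an illustration of the formula under that assumption, not the Keras implementation; the function name and the example logits are made up.

```python
import numpy as np


def sparse_crossentropy_from_logits(logits, labels):
    """Mean of -log(softmax(logits)[label]) over the batch."""
    logits = np.asarray(logits, dtype=np.float64)
    labels = np.asarray(labels)
    # Subtract the row maximum for numerical stability (softmax is shift-invariant).
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


# Hypothetical logits for a batch of two images over the 4 weather classes.
confident_loss = sparse_crossentropy_from_logits([[4.0, 0.0, 0.0, 0.0]], [0])
uniform_loss = sparse_crossentropy_from_logits([[0.0, 0.0, 0.0, 0.0]], [3])

print(confident_loss < uniform_loss)  # True
print(round(uniform_loss, 4))         # 1.3863, i.e. ln(4) for a uniform guess
```

A loss near ln(4) ≈ 1.386 therefore corresponds to guessing uniformly among the four classes.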

Train the model

epochs = 10

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Epoch 1/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 6s 152ms/step - accuracy: 0.4130 - loss: 594.6376 - val_accuracy: 0.6444 - val_loss: 0.8201

Epoch 2/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 144ms/step - accuracy: 0.7569 - loss: 0.7675 - val_accuracy: 0.6889 - val_loss: 0.7538

Epoch 3/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 142ms/step - accuracy: 0.9237 - loss: 0.3134 - val_accuracy: 0.7733 - val_loss: 0.6527

Epoch 4/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 142ms/step - accuracy: 0.9228 - loss: 0.2068 - val_accuracy: 0.7644 - val_loss: 0.8386

Epoch 5/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 143ms/step - accuracy: 0.9287 - loss: 0.2245 - val_accuracy: 0.8044 - val_loss: 0.6802

Epoch 6/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 141ms/step - accuracy: 0.9833 - loss: 0.0794 - val_accuracy: 0.8000 - val_loss: 0.7839

Epoch 7/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 143ms/step - accuracy: 0.9932 - loss: 0.0422 - val_accuracy: 0.7778 - val_loss: 0.9634

Epoch 8/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 142ms/step - accuracy: 0.9816 - loss: 0.1061 - val_accuracy: 0.8089 - val_loss: 0.9127

Epoch 9/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 143ms/step - accuracy: 0.9967 - loss: 0.0190 - val_accuracy: 0.8044 - val_loss: 1.1308

Epoch 10/10

29/29 ━━━━━━━━━━━━━━━━━━━━ 4s 146ms/step - accuracy: 0.9934 - loss: 0.0234 - val_accuracy: 0.8178 - val_loss: 0.9809

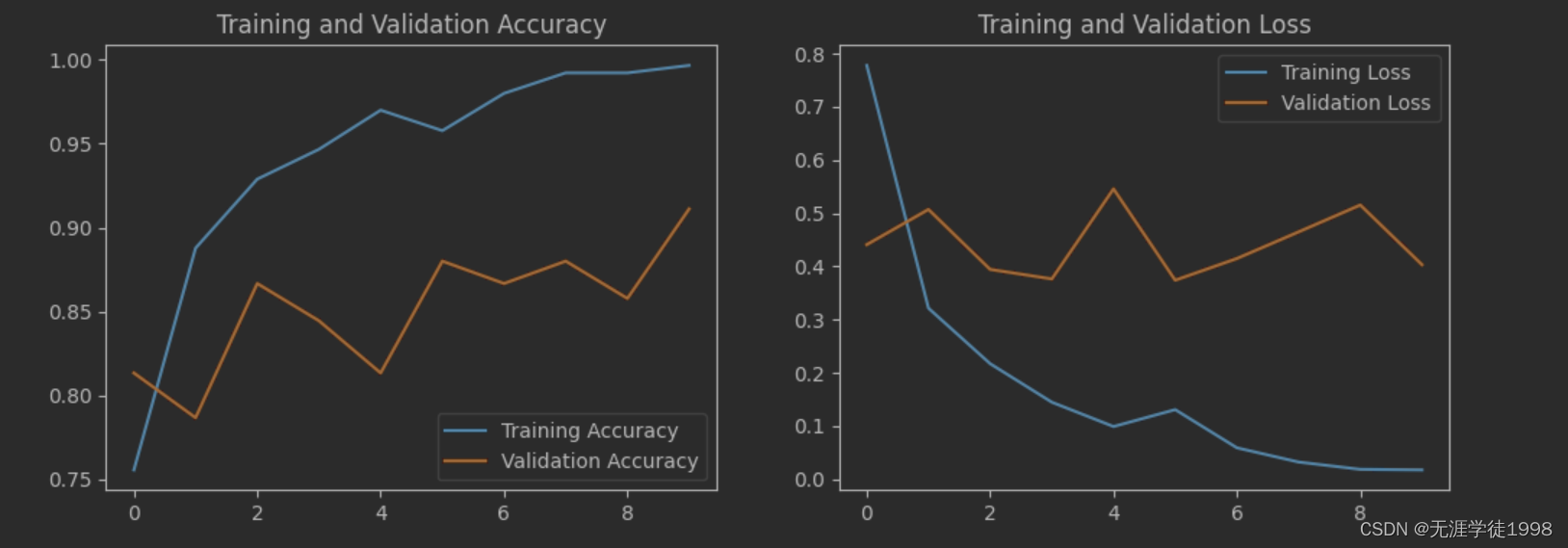

Evaluate the model

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()
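The curves can also be summarized numerically. The sketch below hard-codes the per-epoch values copied from the training log in this post (in a live session they would come from `history.history`) and reports the epoch with the lowest validation loss, the epoch with the highest validation accuracy, and the final gap between training and validation accuracy. The variable names are illustrative.

```python
# Values copied from the training log above, one entry per epoch.
train_acc = [0.4130, 0.7569, 0.9237, 0.9228, 0.9287, 0.9833, 0.9932, 0.9816, 0.9967, 0.9934]
val_acc = [0.6444, 0.6889, 0.7733, 0.7644, 0.8044, 0.8000, 0.7778, 0.8089, 0.8044, 0.8178]
val_loss = [0.8201, 0.7538, 0.6527, 0.8386, 0.6802, 0.7839, 0.9634, 0.9127, 1.1308, 0.9809]

# Epochs are reported 1-based, matching the "Epoch n/10" lines.
best_val_loss_epoch = val_loss.index(min(val_loss)) + 1
best_val_acc_epoch = val_acc.index(max(val_acc)) + 1
final_gap = train_acc[-1] - val_acc[-1]

print("lowest val_loss at epoch", best_val_loss_epoch)      # 3
print("highest val_accuracy at epoch", best_val_acc_epoch)  # 10
print("final train/val accuracy gap:", round(final_gap, 4)) # 0.1756
```

Validation loss bottoms out at epoch 3 and drifts upward afterwards while training accuracy approaches 1.0, which is the usual signature of overfitting.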

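Personal summary

I ran into this error at runtime:

AttributeError: module 'keras._tf_keras.keras.layers' has no attribute 'experimental'

After deleting the corresponding layers.experimental.preprocessing.Rescaling line, the code ran successfully. The Rescaling layer normalizes the input pixel values from [0, 255] to [0, 1]. The map-based operation below can stand in for it; note that this particular function scales pixels to [-1, 1] rather than [0, 1]: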

import tensorflow as tf

from tensorflow.keras import layers

def mean_std_normalize(image):
    # Center at 127.5 and divide by 127.5: maps [0, 255] to [-1, 1]
    return (image - 127.5) / 127.5

train_ds = train_ds.map(lambda x, y: (mean_std_normalize(x), y))

val_ds = val_ds.map(lambda x, y: (mean_std_normalize(x), y))
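To make the difference between the two scalings concrete, the NumPy sketch below applies both to the extreme and middle pixel values. `rescale_to_unit` is a hypothetical helper that mirrors what a Rescaling layer with scale 1/255 computes. In recent Keras versions the layer lives directly under `layers` (as `layers.Rescaling(1./255)`) rather than under `layers.experimental.preprocessing`, which is one way to keep it in the model instead of deleting it.

```python
import numpy as np


def mean_std_normalize(image):
    # Same formula as in the post: maps [0, 255] to [-1, 1].
    return (image - 127.5) / 127.5


def rescale_to_unit(image):
    # Hypothetical helper: maps [0, 255] to [0, 1], like Rescaling(1./255).
    return image / 255.0


pixels = np.array([0.0, 127.5, 255.0])

print(mean_std_normalize(pixels).tolist())  # [-1.0, 0.0, 1.0]
print(rescale_to_unit(pixels).tolist())     # [0.0, 0.5, 1.0]
```

Either range is workable for training as long as the same function is applied to the training data, the validation data, and any images used later for prediction.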
