目录

实验所需

实验环境

python=3.6.8

python库:d2l

pytorch=1.5.0

torchtext=0.6.0

import collections

import os

import io

import math

import torch

from torch import nn

import torch.nn.functional as F

import torchtext.vocab as Vocab

import torch.utils.data as Data

import sys

# sys.path.append("..")

import d2lzh_pytorch as d2l

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

print(torch.__version__, device)数据集

一个较小的法语--英语数据集

elle est vieille . she is old .

elle est tranquille . she is quiet .

elle a tort . she is wrong .

elle est canadienne . she is canadian .

elle est japonaise . she is japanese .

ils sont russes . they are russian .

ils se disputent . they are arguing .

ils regardent . they are watching .

ils sont acteurs . they are actors .

elles sont crevees . they are exhausted .

il est mon genre ! he is my type !

il a des ennuis . he is in trouble .

c est mon frere . he is my brother .

c est mon oncle . he is my uncle .

il a environ mon age . he is about my age .

elles sont toutes deux bonnes . they are both good .

elle est bonne nageuse . she is a good swimmer .

c est une personne adorable . he is a lovable person .

il fait du velo . he is riding a bicycle .

ils sont de grands amis . they are great friends .数据预处理

构造序列中出现词的词典,并用<pad>将序列补至等长,并为每句加上结束标记

使用词典将每句序列转换为对应索引

# 将一个序列中所有的词记录在all_tokens中以便之后构造词典,然后在该序列后面添加PAD直到序列

# 长度变为max_seq_len,然后将序列保存在all_seqs中

PAD, BOS, EOS = '<pad>', '<bos>', '<eos>'

def process_one_seq(seq_tokens, all_tokens, all_seqs, max_seq_len):

all_tokens.extend(seq_tokens)

seq_tokens += [EOS] + [PAD] * (max_seq_len - len(seq_tokens) - 1)

all_seqs.append(seq_tokens)

# 使用所有的词来构造词典。并将所有序列中的词变换为词索引后构造Tensor

def build_data(all_tokens, all_seqs):

vocab = Vocab.Vocab(collections.Counter(all_tokens),

specials=[PAD, BOS, EOS])

indices = [[vocab.stoi[w] for w in seq] for seq in all_seqs]

return vocab, torch.tensor(indices)分别建立法语和英语词典,并利用对应数据创建数据集类为模型生成输入数据

def read_data(max_seq_len):

# in和out分别是input和output的缩写

in_tokens, out_tokens, in_seqs, out_seqs = [], [], [], []

with io.open('fr-en-small.txt') as f:

lines = f.readlines()

for line in lines:

in_seq, out_seq = line.rstrip().split('\t')

in_seq_tokens, out_seq_tokens = in_seq.split(' '), out_seq.split(' ')

if max(len(in_seq_tokens), len(out_seq_tokens)) > max_seq_len - 1:

continue # 如果加上EOS后长于max_seq_len,则忽略掉此样本

process_one_seq(in_seq_tokens, in_tokens, in_seqs, max_seq_len)

process_one_seq(out_seq_tokens, out_tokens, out_seqs, max_seq_len)

in_vocab, in_data = build_data(in_tokens, in_seqs)

out_vocab, out_data = build_data(out_tokens, out_seqs)

return in_vocab, out_vocab, Data.TensorDataset(in_data, out_data)max_seq_len = 7

in_vocab, out_vocab, dataset = read_data(max_seq_len)其中一组数据的样子,前是法语输入,后是英语标签

dataset[0]![]()

使用 torch中的DataLoader类以dataset为数据,为训练模型创建一个迭代器

data_iter = DataLoader(dataset, batch_size, shuffle=True)至此,数据预处理部分结束

模型实现

编码器(Encoder)的实现

class Encoder(nn.Module):

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

drop_prob=0, **kwargs):

super(Encoder, self).__init__(**kwargs)

self.embedding = nn.Embedding(vocab_size, embed_size)

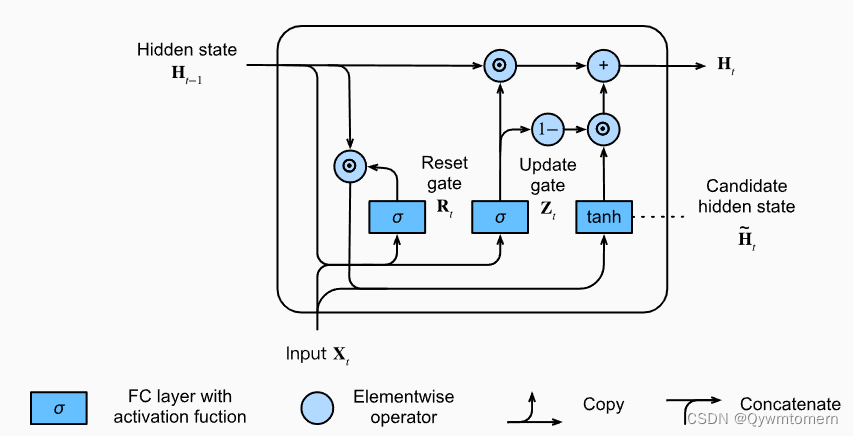

self.rnn = nn.GRU(embed_size, num_hiddens, num_layers, dropout=drop_prob)

def forward(self, inputs, state):

# 输入形状是(批量大小, 时间步数)。将输出互换样本维和时间步维

embedding = self.embedding(inputs.long()).permute(1, 0, 2) # (seq_len, batch, input_size)

return self.rnn(embedding, state)

def begin_state(self):

return Noneencoder = Encoder(vocab_size=10, embed_size=8, num_hiddens=16, num_layers=2)

output, state = encoder(torch.zeros((4, 7)), encoder.begin_state())包含一个词嵌入(向量化输入)和一个GRU门控循环单元

词嵌入将输入转换为向量

GRU对输入上下文实现长期依赖和记忆功能,输出隐藏状态

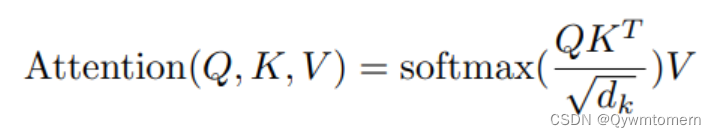

实现注意力机制

def attention_model(input_size, attention_size):

model = nn.Sequential(nn.Linear(input_size, attention_size, bias=False),

nn.Tanh(),

nn.Linear(attention_size, 1, bias=False))

return model

def attention_forward(model, enc_states, dec_state):

"""

enc_states: (时间步数, 批量大小, 隐藏单元个数)

dec_state: (批量大小, 隐藏单元个数)

"""

# 将解码器隐藏状态广播到和编码器隐藏状态形状相同后进行连结

dec_states = dec_state.unsqueeze(dim=0).expand_as(enc_states)

enc_and_dec_states = torch.cat((enc_states, dec_states), dim=2)

e = model(enc_and_dec_states) # 形状为(时间步数, 批量大小, 1)

alpha = F.softmax(e, dim=0) # 在时间步维度做softmax运算

return (alpha * enc_states).sum(dim=0) # 返回背景变量

解码器(Decoder)的实现

class Decoder(nn.Module):

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

attention_size, drop_prob=0):

super(Decoder, self).__init__()

self.embedding = nn.Embedding(vocab_size, embed_size)

self.attention = attention_model(2*num_hiddens, attention_size)

# GRU的输入包含attention输出的c和实际输入, 所以尺寸是 num_hiddens+embed_size

self.rnn = nn.GRU(num_hiddens + embed_size, num_hiddens,

num_layers, dropout=drop_prob)

self.out = nn.Linear(num_hiddens, vocab_size)

def forward(self, cur_input, state, enc_states):

"""

cur_input shape: (batch, )

state shape: (num_layers, batch, num_hiddens)

"""

# 使用注意力机制计算背景向量

c = attention_forward(self.attention, enc_states, state[-1])

# 将嵌入后的输入和背景向量在特征维连结, (批量大小, num_hiddens+embed_size)

input_and_c = torch.cat((self.embedding(cur_input), c), dim=1)

# 为输入和背景向量的连结增加时间步维,时间步个数为1

output, state = self.rnn(input_and_c.unsqueeze(0), state)

# 移除时间步维,输出形状为(批量大小, 输出词典大小)

output = self.out(output).squeeze(dim=0)

return output, state

def begin_state(self, enc_state):

# 直接将编码器最终时间步的隐藏状态作为解码器的初始隐藏状态

return enc_state使用注意力机制和Encoder的输出作为Decoder的输入,将编码器最终时间步的隐藏状态作为解码器的初始隐藏状态

训练模型

定义损失函数

def batch_loss(encoder, decoder, X, Y, loss):

batch_size = X.shape[0]

enc_state = encoder.begin_state()

enc_outputs, enc_state = encoder(X, enc_state)

# 初始化解码器的隐藏状态

dec_state = decoder.begin_state(enc_state)

# 解码器在最初时间步的输入是BOS

dec_input = torch.tensor([out_vocab.stoi[BOS]] * batch_size)

# 我们将使用掩码变量mask来忽略掉标签为填充项PAD的损失, 初始全1

mask, num_not_pad_tokens = torch.ones(batch_size,), 0

l = torch.tensor([0.0])

for y in Y.permute(1,0): # Y shape: (batch, seq_len)

dec_output, dec_state = decoder(dec_input, dec_state, enc_outputs)

l = l + (mask * loss(dec_output, y)).sum()

dec_input = y # 使用强制教学

num_not_pad_tokens += mask.sum().item()

# EOS后面全是PAD. 下面一行保证一旦遇到EOS接下来的循环中mask就一直是0

mask = mask * (y != out_vocab.stoi[EOS]).float()

return l / num_not_pad_tokens使用强制学习,将真实的目标序列中的每个时间步的真实输出作为Decoder的输入,而不是将前一个时间步生成的输出作为当前时间步的输入。会使收敛更快,但在训练和推断时的不一致性会导致泛化能力不强

训练

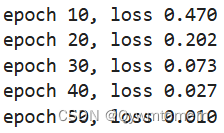

使用交叉熵损失函数,自适应动量的随机优化方法(Adam)

def train(encoder, decoder, dataset, lr, batch_size, num_epochs):

enc_optimizer = torch.optim.Adam(encoder.parameters(), lr=lr)

dec_optimizer = torch.optim.Adam(decoder.parameters(), lr=lr)

loss = nn.CrossEntropyLoss(reduction='none')

data_iter = Data.DataLoader(dataset, batch_size, shuffle=True)

for epoch in range(num_epochs):

l_sum = 0.0

for X, Y in data_iter:

enc_optimizer.zero_grad()

dec_optimizer.zero_grad()

l = batch_loss(encoder, decoder, X, Y, loss)

l.backward()

enc_optimizer.step()

dec_optimizer.step()

l_sum += l.item()

if (epoch + 1) % 10 == 0:

print("epoch %d, loss %.3f" % (epoch + 1, l_sum / len(data_iter)))embed_size, num_hiddens, num_layers = 64, 64, 2

attention_size, drop_prob, lr, batch_size, num_epochs = 10, 0.5, 0.01, 2, 50

encoder = Encoder(len(in_vocab), embed_size, num_hiddens, num_layers,

drop_prob)

decoder = Decoder(len(out_vocab), embed_size, num_hiddens, num_layers,

attention_size, drop_prob)

train(encoder, decoder, dataset, lr, batch_size, num_epochs)

模型性能检测

序列预测输出

使用贪婪搜索

def translate(encoder, decoder, input_seq, max_seq_len):

in_tokens = input_seq.split(' ')

in_tokens += [EOS] + [PAD] * (max_seq_len - len(in_tokens) - 1)

enc_input = torch.tensor([[in_vocab.stoi[tk] for tk in in_tokens]]) # batch=1

enc_state = encoder.begin_state()

enc_output, enc_state = encoder(enc_input, enc_state)

dec_input = torch.tensor([out_vocab.stoi[BOS]])

dec_state = decoder.begin_state(enc_state)

output_tokens = []

for _ in range(max_seq_len):

dec_output, dec_state = decoder(dec_input, dec_state, enc_output)

pred = dec_output.argmax(dim=1)

pred_token = out_vocab.itos[int(pred.item())]

if pred_token == EOS: # 当任一时间步搜索出EOS时,输出序列即完成

break

else:

output_tokens.append(pred_token)

dec_input = pred

return output_tokensinput_seq = 'ils regardent .'

translate(encoder, decoder, input_seq, max_seq_len)![]()

使用bleu评价翻译结果

评价机器翻译结果通常使用BLEU(Bilingual Evaluation Understudy)[1]。对于模型预测序列中任意的子序列,BLEU考察这个子序列是否出现在标签序列中。

具体来说,设词数为𝑛的子序列的精度为。它是预测序列与标签序列匹配词数为𝑛的子序列的数量与预测序列中词数为𝑛的子序列的数量之比。举个例子,假设标签序列为𝐴、𝐵、𝐶、𝐷、𝐸、𝐹,预测序列为𝐴、𝐵、𝐵、𝐶、𝐷,那么𝑝1=4/5, 𝑝2=3/4, 𝑝3=1/3, 𝑝4=0 。设

和

分别为标签序列和预测序列的词数,那么,BLEU的定义为

其中𝑘是我们希望匹配的子序列的最大词数。可以看到当预测序列和标签序列完全一致时,BLEU为1。

因为匹配较长子序列比匹配较短子序列更难,BLEU对匹配较长子序列的精度赋予了更大权重。例如,当固定在0.5时,随着𝑛的增大,

。

另外,模型预测较短序列往往会得到较高值。因此,上式中连乘项前面的系数是为了惩罚较短的输出而设的。举个例子,当𝑘=2时,假设标签序列为𝐴、𝐵、𝐶、𝐷、𝐸、𝐹,而预测序列为𝐴、𝐵。虽然𝑝1=𝑝2=1,但惩罚系数exp(1−6/2)≈0.14,因此BLEU也接近0.14。

def bleu(pred_tokens, label_tokens, k):

len_pred, len_label = len(pred_tokens), len(label_tokens)

score = math.exp(min(0, 1 - len_label / len_pred))

for n in range(1, k + 1):

num_matches, label_subs = 0, collections.defaultdict(int)

for i in range(len_label - n + 1):

label_subs[''.join(label_tokens[i: i + n])] += 1

for i in range(len_pred - n + 1):

if label_subs[''.join(pred_tokens[i: i + n])] > 0:

num_matches += 1

label_subs[''.join(pred_tokens[i: i + n])] -= 1

score *= math.pow(num_matches / (len_pred - n + 1), math.pow(0.5, n))

return scoredef score(input_seq, label_seq, k):

pred_tokens = translate(encoder, decoder, input_seq, max_seq_len)

label_tokens = label_seq.split(' ')

print('bleu %.3f, predict: %s' % (bleu(pred_tokens, label_tokens, k),

' '.join(pred_tokens)))score('ils regardent .', 'they are watching .', k=2)![]()

score('ils sont canadienne .', 'they are canadian .', k=2)![]()

使用更大的数据集进行训练

数据来源

Tatoeba Project. Tab-delimited Bilingual Sentence Pairs from the Tatoeba Project (Good for Anki and Similar Flashcard Applications)

Hi. 嗨。 CC-BY 2.0 (France) Attribution: tatoeba.org #538123 (CM) & #891077 (Martha)

Hi. 你好。 CC-BY 2.0 (France) Attribution: tatoeba.org #538123 (CM) & #4857568 (musclegirlxyp)

Run. 你用跑的。 CC-BY 2.0 (France) Attribution: tatoeba.org #4008918 (JSakuragi) & #3748344 (egg0073)

Stop! 住手! CC-BY 2.0 (France) Attribution: tatoeba.org #448320 (CM) & #448321 (GlossaMatik)

Wait! 等等! CC-BY 2.0 (France) Attribution: tatoeba.org #1744314 (belgavox) & #4970122 (wzhd)

Wait! 等一下! CC-BY 2.0 (France) Attribution: tatoeba.org #1744314 (belgavox) & #5092613 (mirrorvan)

Begin. 开始! CC-BY 2.0 (France) Attribution: tatoeba.org #6102432 (mailohilohi) & #5094852 (Jin_Dehong)

Hello! 你好。 CC-BY 2.0 (France) Attribution: tatoeba.org #373330 (CK) & #4857568 (musclegirlxyp)

I try. 我试试。 CC-BY 2.0 (France) Attribution: tatoeba.org #20776 (CK) & #8870261 (will66)

I won! 我赢了。 CC-BY 2.0 (France) Attribution: tatoeba.org #2005192 (CK) & #5102367 (mirrorvan)

Oh no! 不会吧。 CC-BY 2.0 (France) Attribution: tatoeba.org #1299275 (CK) & #5092475 (mirrorvan)

Cheers! 乾杯! CC-BY 2.0 (France) Attribution: tatoeba.org #487006 (human600) & #765577 (Martha)

Got it? 知道了没有? CC-BY 2.0 (France) Attribution: tatoeba.org #455353 (CM) & #455357 (GlossaMatik)

Got it? 懂了吗? CC-BY 2.0 (France) Attribution: tatoeba.org #455353 (CM) & #2032276 (ydcok)

Got it? 你懂了吗? CC-BY 2.0 (France) Attribution: tatoeba.org #455353 (CM) & #7768205 (jiangche)

He ran. 他跑了。 CC-BY 2.0 (France) Attribution: tatoeba.org #672229 (CK) & #5092389 (mirrorvan)

Hop in. 跳进来。 CC-BY 2.0 (France) Attribution: tatoeba.org #1111548 (Scott) & #5092444 (mirrorvan)

I know. 我知道。 CC-BY 2.0 (France) Attribution: tatoeba.org #319990 (CK) & #3378015 (GlossaMatik)

I quit. 我退出。 CC-BY 2.0 (France) Attribution: tatoeba.org #731636 (Eldad) & #5102253 (mirrorvan)

I quit. 我不干了。 CC-BY 2.0 (France) Attribution: tatoeba.org #731636 (Eldad) & #9569182 (MiracleQ)

……代码修正

加入下列代码,读取你下载的数据集并做简单切分

df = pd.read_csv('./en-zh.txt', sep='\\t', engine='python', header=None)

trainen = df[0].values.tolist()#[:10000]

trainzh = df[1].values.tolist()#[:10000]更改下列循环项

def read_data(max_seq_len):

# in和out分别是input和output的缩写

in_tokens, out_tokens, in_seqs, out_seqs = [], [], [], []

for i in range(len(df)):

in_seq, out_seq = trainen[i], trainzh[i]

in_seq_tokens, out_seq_tokens = in_seq.split(' '), out_seq.split(' ')

if max(len(in_seq_tokens), len(out_seq_tokens)) > max_seq_len - 1:

continue # 如果加上EOS后长于max_seq_len,则忽略掉此样本

process_one_seq(in_seq_tokens, in_tokens, in_seqs, max_seq_len)

process_one_seq(out_seq_tokens, out_tokens, out_seqs, max_seq_len)

in_vocab, in_data = build_data(in_tokens, in_seqs)

out_vocab, out_data = build_data(out_tokens, out_seqs)

return in_vocab, out_vocab, Data.TensorDataset(in_data, out_data)for i in range(len(df)):

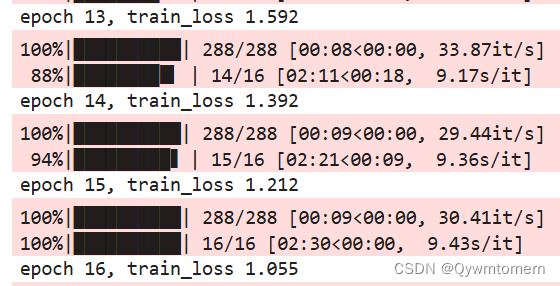

in_seq, out_seq = trainen[i], trainzh[i]参数设定

embed_size, num_hiddens, num_layers = 128, 128, 2

attention_size, drop_prob, lr, batch_size, num_epochs = 10, 0.5, 0.001, 64, 16

encoder = Encoder(len(in_vocab), embed_size, num_hiddens, num_layers,

drop_prob)

decoder = Decoder(len(out_vocab), embed_size, num_hiddens, num_layers,

attention_size, drop_prob)

encoder = encoder.to(device)

decoder = decoder.to(device)

train(encoder, decoder, dataset, lr, batch_size, num_epochs)训练结果

翻译

input_seq = 'I thought Tom and Mary were crazy.'

input_seq = input_seq

translate(encoder, decoder, input_seq, max_seq_len)![]()

评估

score('I thought Tom and Mary were crazy.', '我本以为汤姆和玛丽疯了呢。', k=2) ![]()

score('I visited my friend Tom yesterday.', '我昨天拜訪了我的朋友湯姆。', k=2)![]()

828

828

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?