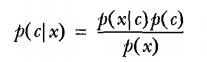

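Bayes' rule:

Bayes' rule tells us how to swap the condition and the outcome of a conditional probability: if P(x|c) is known, P(c|x) can be obtained as

$$P(c \mid x) = \frac{P(x \mid c)\,P(c)}{P(x)}$$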
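The rule can be checked with a few numbers. The sketch below assumes a binary setting (c versus not c) and obtains P(x) from the law of total probability. The function name `bayes_posterior` and the input values are illustrative, not taken from the original.

```python
def bayes_posterior(p_x_given_c, p_c, p_x_given_not_c):
    """Return P(c|x) via Bayes' rule for a binary class (c / not c)."""
    # Law of total probability: P(x) = P(x|c)P(c) + P(x|not c)P(not c)
    p_x = p_x_given_c * p_c + p_x_given_not_c * (1.0 - p_c)
    # Bayes' rule: P(c|x) = P(x|c)P(c) / P(x)
    return p_x_given_c * p_c / p_x

# Hypothetical inputs: P(x|c) = 0.8, P(c) = 0.3, P(x|not c) = 0.2
print(bayes_posterior(0.8, 0.3, 0.2))  # about 0.632 (= 0.24 / 0.38)
```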

Naive Bayes makes two assumptions:

1: Features are independent of each other.

2: Every feature is equally important.

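For a document classification problem: given a document x, we want the probability P(c|x) that x belongs to class c.

From the training set,

we can easily compute the probability of class c: P(c) = number of documents in class c / total number of documents.

Within class c, the independence assumption lets the probability of the features x factor as P(x|c) = P(x1|c)P(x2|c)P(x3|c)…P(xn|c), i.e. the product of the probabilities of each attribute xi,

where P(xi|c) = number of occurrences of xi in class c / total number of elements (words) in class c.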
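Both estimates are plain counting. The sketch below computes P(c) and P(xi|c) on a small hypothetical training set (the documents, labels, and the helper name `estimate` are made up for illustration), without any smoothing.

```python
from collections import Counter

# Hypothetical toy training set: (tokenized document, class label)
docs = [
    (["stupid", "dog"], 1),
    (["my", "dog", "is", "cute"], 0),
    (["my", "stupid", "garbage"], 1),
    (["love", "my", "dog"], 0),
]

def estimate(docs):
    """Estimate P(c) and P(x_i|c) by simple counting (no smoothing)."""
    class_counts = Counter(label for _, label in docs)
    word_counts = {c: Counter() for c in class_counts}
    for words, label in docs:
        word_counts[label].update(words)

    # P(c) = documents in class c / all documents
    priors = {c: n / len(docs) for c, n in class_counts.items()}
    # P(x_i|c) = occurrences of x_i in class c / all words in class c
    cond = {}
    for c, counts in word_counts.items():
        total = sum(counts.values())
        cond[c] = {w: k / total for w, k in counts.items()}
    return priors, cond

priors, cond = estimate(docs)
print(priors[0], priors[1])  # 0.5 0.5
print(cond[0]["dog"])        # 2 occurrences out of 7 words in class 0
```

A word never seen in class c simply has no entry here, i.e. probability 0; the improvements discussed later in the article address exactly this.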

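P(x) does not depend on c: it is the same for every class, so when we only need to compare classes it can be dropped instead of computed.

We can therefore compute P(c|x) up to this common factor, i.e. P(c|x) ∝ P(x|c)P(c),

and assign the document to the class with the highest probability.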
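The decision rule can be sketched as follows with made-up probability tables: each class is scored by P(c) times the product of the P(xi|c), and P(x) is never computed because it rescales every score by the same positive factor. Normalizing the scores by their sum recovers the posteriors, since under the model that sum equals P(x). The names `classify`, `priors`, and `cond` are illustrative assumptions.

```python
# Hypothetical estimates for two classes (0 = normal, 1 = abusive)
priors = {0: 0.5, 1: 0.5}
cond = {
    0: {"my": 0.2, "dog": 0.1, "stupid": 0.0},
    1: {"my": 0.05, "dog": 0.1, "stupid": 0.3},
}

def classify(words, priors, cond):
    """Pick the class maximizing P(c) * prod_i P(x_i|c).

    P(x) is omitted: it is the same positive number for every class,
    so dividing by it cannot change which score is largest.
    """
    scores = {}
    for c, prior in priors.items():
        score = prior
        for w in words:
            score *= cond[c].get(w, 0.0)  # unseen word -> 0 (no smoothing)
        scores[c] = score
    best = max(scores, key=scores.get)
    # Dividing by the sum of scores (= P(x) under the model) gives P(c|x)
    total = sum(scores.values())
    posteriors = {c: s / total for c, s in scores.items()} if total > 0 else None
    return best, posteriors

print(classify(["my", "dog"], priors, cond))      # class 0 wins (0.01 vs 0.0025)
print(classify(["stupid", "dog"], priors, cond))  # class 1 wins (0.0 vs 0.015)
```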

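Problem description:

For the comments posted in a comment section, we want to block spam comments that contain abusive words, i.e. classify the documents into two classes:

0: normal comment

1: comment containing abusive words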

Training data:

| Document | Words | Label (0 = normal, 1 = abusive) |
|---|---|---|
| 1 | 'my', 'dog', 'has', 'flea', 'problems', 'help', 'please' | 0 |
| 2 | 'maybe', 'not', 'take', 'him', 'to', 'dog', 'park', 'stupid' | 1 |
| 3 | 'my', 'dalmation', 'is', 'so', 'cute', 'I', 'love', 'him' | 0 |
| 4 | 'stop', 'posting', 'stupid', 'worthless', 'garbage' | 1 |
| 5 | 'mr', 'licks', 'ate', 'my', 'steak', 'how', 'to', 'stop', 'him' | 0 |
| 6 | 'quit', 'buying', 'worthless', 'dog', 'food', 'stupid' | 1 |

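Implementation:

(1) Feature representation of the data:

Text data must first be converted into numerical data.

In natural language processing, the simplest way to handle text is to convert each document into a corresponding word vector.

First, all the words in the training set form a vocabulary; suppose it has n words.

Word vector: represents one document as a list of length n, where each element corresponds to one vocabulary word and records whether that word occurs in the document (1 if it does, 0 otherwise) or, alternatively, how many times it occurs.

1.1: Load the training set postingList and its labels classVec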

```python
from numpy import *

def loadDataSet():
    # Six tokenized comments from the training set
    postingList = [['my', 'dog', 'has', 'flea', 'problems', 'help', 'please'],
                   ['maybe', 'not', 'take', 'him', 'to', 'dog', 'park', 'stupid'],
                   ['my', 'dalmation', 'is', 'so', 'cute', 'I', 'love', 'him'],
                   ['stop', 'posting', 'stupid', 'worthless', 'garbage'],
                   ['mr', 'licks', 'ate', 'my', 'steak', 'how', 'to', 'stop', 'him'],
                   ['quit', 'buying', 'worthless', 'dog', 'food', 'stupid']]
    classVec = [0, 1, 0, 1, 0, 1]  # 1 is abusive, 0 not
    return postingList, classVec
```

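1.2: Build a vocabulary list containing every word

```python
def createVocabList(dataSet):
    vocabSet = set()  # start with an empty set
    for document in dataSet:
        vocabSet = vocabSet | set(document)  # union with this document's words
    # Set iteration order is arbitrary, so the word order may differ between runs
    return list(vocabSet)
```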

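1.3: Convert a text (a list of words) into a 0/1 word vector

For each word in the text, look up its index in the vocabulary and set that position to 1; all other positions stay 0.

```python
def setOfWord2Vec(vocabList, inputSet):
    returnVec = [0] * len(vocabList)  # one slot per vocabulary word
    for word in inputSet:
        if word in vocabList:
            returnVec[vocabList.index(word)] = 1  # mark the word as present
        else:
            print("{} is not in my Vocabulary".format(word))
    return returnVec
```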
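The word-vector definition earlier also allows recording how many times each word occurs. A count-based variant of `setOfWord2Vec` differs only in incrementing the slot instead of setting it to 1. The sketch below assumes unknown words are silently skipped, and the name `bagOfWords2Vec` is hypothetical.

```python
def bagOfWords2Vec(vocabList, inputSet):
    """Count-based word vector: each slot holds how often that word occurs."""
    returnVec = [0] * len(vocabList)
    for word in inputSet:
        if word in vocabList:
            returnVec[vocabList.index(word)] += 1  # count, not just mark
    return returnVec

vocab = ["dog", "my", "stupid"]
print(bagOfWords2Vec(vocab, ["stupid", "dog", "stupid"]))  # [1, 0, 2]
```

Because the training and classification code only adds up vector entries, such count vectors can be fed to it in place of 0/1 vectors.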

(2)从词向量计算概率

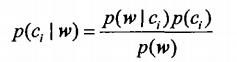

w为一个文档词向量,由每个词出现与否构成,则文档w所属类别概率为:

其中:

P(ci)=类别i中文档数目/文档总数

P(w|ci) = P(w0|ci)P(w1|ci)P(w2|ci)…P(wn|ci), 且P(wj|ci)=在类别i文档中wi单词出现的数目/类别i中的文档单词的总数

P(w)=1

```python
def trainNB0(trainMatrix, trainCategory):
    numTrainDocs = len(trainMatrix)
    numWords = len(trainMatrix[0])
    pAbusive = sum(trainCategory) / float(numTrainDocs)  # P(c1): probability of class 1
    # p0Num / p1Num: occurrence count of each word in class 0 / class 1
    p0Num = zeros(numWords); p1Num = zeros(numWords)
    # p0Denom / p1Denom: total number of words in class 0 / class 1
    p0Denom = 0.0; p1Denom = 0.0
    for i in range(numTrainDocs):
        if trainCategory[i] == 1:
            p1Num += trainMatrix[i]
            p1Denom += sum(trainMatrix[i])
        else:
            p0Num += trainMatrix[i]
            p0Denom += sum(trainMatrix[i])
    p1Vect = p1Num / p1Denom  # P(wi|c1)
    p0Vect = p0Num / p0Denom  # P(wi|c0)
    return p0Vect, p1Vect, pAbusive
```

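Therefore, dropping the common factor P(w):

P(c1|w) ∝ (product of the entries of p1Vect for the words that occur in w) * pAbusive

P(c0|w) ∝ (product of the entries of p0Vect for the words that occur in w) * (1 - pAbusive)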
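As a small illustration of these two expressions, the sketch below uses made-up values for p1Vect, p0Vect and pAbusive over a 4-word vocabulary and keeps only the entries of words that occur in the document (via a boolean mask) before multiplying. The scores are proportional to the posteriors, which is all the comparison needs.

```python
import numpy as np

# Hypothetical P(w_j|c1), P(w_j|c0) for a 4-word vocabulary, and P(c1)
p1Vect = np.array([0.1, 0.4, 0.3, 0.2])
p0Vect = np.array([0.3, 0.1, 0.2, 0.4])
pAbusive = 0.5

vec = np.array([0, 1, 1, 0])  # 0/1 word vector: words 1 and 2 occur
present = vec == 1

score1 = np.prod(p1Vect[present]) * pAbusive        # proportional to P(c1|w)
score0 = np.prod(p0Vect[present]) * (1 - pAbusive)  # proportional to P(c0|w)
print(1 if score1 > score0 else 0)  # 1 (0.06 vs 0.01)
```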

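Improvements:

Since P(w|c1) is the product of the P(wi|c1), if any single P(wi|c1) = 0, i.e. word wi never appeared in the class-1 training documents, then the whole P(w|c1) becomes 0. In addition, multiplying many small probabilities easily underflows.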
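To make the underflow problem concrete, the sketch below multiplies 500 hypothetical word probabilities of 0.01 each. The true product, 1e-1000, is far below the smallest positive double (about 4.9e-324), so the running product collapses to 0.0, while the sum of the logarithms remains an ordinary finite number.

```python
import math

# 500 hypothetical per-word probabilities, each 0.01
probs = [0.01] * 500

product = 1.0
for p in probs:
    product *= p  # eventually drops below the smallest double and becomes 0.0

log_sum = sum(math.log(p) for p in probs)  # log of the product, computed as a sum

print(product)  # 0.0
print(log_sum)  # roughly -2302.6
```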

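So we make two changes:

1: Initialize every word count to 1 and each class's total word count to 2 (a simplified form of Laplace smoothing; textbook add-one smoothing adds the vocabulary size to the denominator instead of 2):

```python
p0Num = ones(numWords); p1Num = ones(numWords)
p0Denom = 2.0; p1Denom = 2.0
```

2: Take the logarithm of the probabilities. Since log is monotonically increasing, the ordering of the class probabilities is unchanged, and the product of probabilities becomes a sum of log-probabilities.

```python
p1Vect = log(p1Num / p1Denom)
p0Vect = log(p0Num / p0Denom)
```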
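The effect of the first change can be seen on a tiny hypothetical count matrix: two class-0 documents over a 3-word vocabulary in which word 2 never occurs. Without smoothing that word gets probability 0, which would zero out any product it enters; starting counts at 1 and the total at 2, as in the code above, keeps every estimate positive. The matrix is made up for illustration.

```python
import numpy as np

# Hypothetical 0/1 word vectors of two class-0 documents (3-word vocabulary);
# word 2 never occurs in class 0
class0_docs = np.array([[1, 1, 0],
                        [1, 0, 0]])

counts = class0_docs.sum(axis=0)  # per-word counts: [2, 1, 0]
total = counts.sum()              # all words in class 0: 3

unsmoothed = counts / total              # word 2 gets probability 0
smoothed = (counts + 1) / (total + 2.0)  # counts start at 1, total starts at 2

print(unsmoothed)  # word 2 -> 0.0
print(smoothed)    # [0.6 0.4 0.2]
```

With the fixed offset of 2, the smoothed values here sum to 1.2 rather than 1; adding the vocabulary size (3 in this example) instead would give the normalized add-one estimates 3/6, 2/6, 1/6. Either choice removes the zeros; the article's code uses the simpler +2.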

The complete code is then:

```python
def trainNB0(trainMatrix, trainCategory):
    numTrainDocs = len(trainMatrix)
    numWords = len(trainMatrix[0])
    pAbusive = sum(trainCategory) / float(numTrainDocs)  # P(c1)
    # Start every word count at 1 and every class total at 2 so no probability is 0
    p0Num = ones(numWords); p1Num = ones(numWords)
    p0Denom = 2.0; p1Denom = 2.0
    for i in range(numTrainDocs):
        if trainCategory[i] == 1:
            p1Num += trainMatrix[i]
            p1Denom += sum(trainMatrix[i])
        else:
            p0Num += trainMatrix[i]
            p0Denom += sum(trainMatrix[i])
    # Store log-probabilities so that products become sums and do not underflow
    p1Vect = log(p1Num / p1Denom)  # log P(wi|c1)
    p0Vect = log(p0Num / p0Denom)  # log P(wi|c0)
    return p0Vect, p1Vect, pAbusive
```
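The loop in `trainNB0` can also be expressed with NumPy boolean masks that select all rows of a class at once. The sketch below is an alternative formulation, not the article's code; the name `train_nb_vectorized` is hypothetical, and for 0/1 labels it is meant to return the same smoothed log-probabilities and P(c1) as the complete `trainNB0` above.

```python
import numpy as np

def train_nb_vectorized(trainMatrix, trainCategory):
    """Smoothed log P(w_j|c0), log P(w_j|c1) and P(c1), computed without a Python loop."""
    X = np.asarray(trainMatrix, dtype=float)
    y = np.asarray(trainCategory)
    pAbusive = y.mean()                      # P(c1) for 0/1 labels
    p1Num = 1.0 + X[y == 1].sum(axis=0)      # per-word counts in class 1, starting at 1
    p0Num = 1.0 + X[y == 0].sum(axis=0)      # per-word counts in class 0, starting at 1
    p1Denom = 2.0 + X[y == 1].sum()          # total words in class 1, starting at 2
    p0Denom = 2.0 + X[y == 0].sum()          # total words in class 0, starting at 2
    return np.log(p0Num / p0Denom), np.log(p1Num / p1Denom), pAbusive

p0V, p1V, pAb = train_nb_vectorized([[1, 1, 0], [1, 0, 0], [0, 1, 1]], [0, 0, 1])
print(np.exp(p0V), np.exp(p1V), pAb)  # about [0.6 0.4 0.2], [0.25 0.5 0.5], 1/3
```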

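(3) Naive Bayes classifier

Classifier: assigns the word vector of an input test document to a class.

```python
def classifyNB(vec2Classify, p0Vec, p1Vec, pClass1):
    # log P(w|c) + log P(c) for each class; P(w) is omitted since it is shared
    p1 = sum(vec2Classify * p1Vec) + log(pClass1)
    p0 = sum(vec2Classify * p0Vec) + log(1 - pClass1)
    if p1 > p0:
        return 1
    else:
        return 0
```

vec2Classify * p1Vec: multiplying element-wise by the 0/1 vector keeps the log-probabilities of the words that occur in the document being classified and sets all others to 0, so the sum gives log P(w|c1).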
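A tiny numeric check of this masking trick, using made-up log-probabilities for a 3-word vocabulary: multiplying by the 0/1 vector zeroes the entry of the absent word, so the sum equals the log of the product of the present words' probabilities.

```python
import numpy as np

# Hypothetical log P(w_j|c1) for a 3-word vocabulary
p1Vec = np.log(np.array([0.25, 0.5, 0.125]))
vec2Classify = np.array([1, 0, 1])  # the document contains words 0 and 2

picked = vec2Classify * p1Vec  # entry for the absent word 1 becomes 0
log_likelihood = picked.sum()  # log P(w0|c1) + log P(w2|c1)

# Same value as the log of the product of the present words' probabilities
print(np.isclose(log_likelihood, np.log(0.25 * 0.125)))  # True
```

With count vectors, a word occurring k times contributes k times its log-probability, matching a product with that factor repeated k times. The trick relies on the smoothing step: an unsmoothed zero probability gives log 0 = -inf, and NumPy evaluates 0 * -inf as nan.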

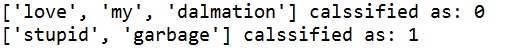

Test with two documents:

['love', 'my', 'dalmation']

['stupid', 'garbage']

```python
def testingNB():
    listOposts, listClasses = loadDataSet()
    myVocabList = createVocabList(listOposts)
    # Convert every training document into a 0/1 word vector
    trainMat = []
    for postinDoc in listOposts:
        trainMat.append(setOfWord2Vec(myVocabList, postinDoc))
    p0V, p1V, pAb = trainNB0(array(trainMat), array(listClasses))

    testEntry = ['love', 'my', 'dalmation']
    thisDoc = array(setOfWord2Vec(myVocabList, testEntry))
    print("{} classified as: {}".format(testEntry, classifyNB(thisDoc, p0V, p1V, pAb)))

    testEntry = ['stupid', 'garbage']
    thisDoc = array(setOfWord2Vec(myVocabList, testEntry))
    print("{} classified as: {}".format(testEntry, classifyNB(thisDoc, p0V, p1V, pAb)))

if __name__ == "__main__":
    testingNB()
```

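Result:

['love', 'my', 'dalmation'] is classified as 0 (normal comment), and ['stupid', 'garbage'] is classified as 1 (abusive comment).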
