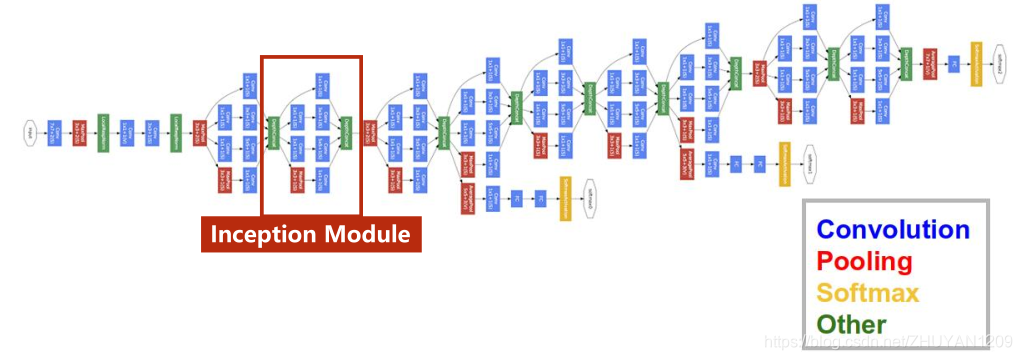

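In general, the most reliable way to improve a network's performance is to make it wider and deeper, but doing so has side effects. First, deeper and wider networks usually have a very large number of parameters, so when training data is scarce the trained network overfits easily. Second, very deep networks are prone to vanishing gradients. These two side effects limit how far deep, wide convolutional neural networks can be pushed, and the Inception network addresses both of them well.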
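To make the parameter-count concern concrete, here is a minimal sketch that counts the learnable parameters of a convolution layer. The helper name `conv2d_params` and the channel counts 64, 128 and 88 are illustrative assumptions; the widths 16 and 24 are the ones used by the `InceptionA` code in section 2. It only counts weights and biases and is not a measurement of any trained model.

```python
def conv2d_params(c_in, c_out, k):
    """Learnable parameters of a k x k 2D convolution with bias."""
    return k * k * c_in * c_out + c_out


# Widening a layer: doubling both channel counts roughly quadruples the weights.
narrow = conv2d_params(64, 64, 3)     # 36928
wide = conv2d_params(128, 128, 3)     # 147584

# A 5x5 convolution applied directly to an assumed 88-channel input,
# versus a 1x1 reduction to 16 channels followed by the 5x5 convolution.
direct = conv2d_params(88, 24, 5)                                # 52824
reduced = conv2d_params(88, 16, 1) + conv2d_params(16, 24, 5)    # 11048

print(narrow, wide, direct, reduced)
```

Under these assumed sizes, the 1x1 reduction cuts the 5x5 branch to roughly a fifth of the parameters of the direct version, which is one way an Inception-style block keeps a wide layer affordable.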

1 Inception network structure

2 Implementation

    import torch
    import torch.nn.functional as F


    class InceptionA(torch.nn.Module):
        """Inception block with four parallel branches concatenated along channels."""

        def __init__(self, in_channels):
            super().__init__()
            # 1x1 branch: 16 output channels
            self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
            # 5x5 branch: 1x1 reduction, then 5x5 (padding keeps the spatial size)
            self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
            self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)
            # 3x3 branch: 1x1 reduction, then two 3x3 convolutions
            self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
            self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
            self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)
            # Pooling branch: 1x1 convolution applied after average pooling
            self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)

        def forward(self, x):
            branch1x1 = self.branch1x1(x)

            branch5x5 = self.branch5x5_1(x)
            branch5x5 = self.branch5x5_2(branch5x5)

            branch3x3 = self.branch3x3_1(x)
            branch3x3 = self.branch3x3_2(branch3x3)
            branch3x3 = self.branch3x3_3(branch3x3)

            branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
            branch_pool = self.branch_pool(branch_pool)

            # Concatenate along the channel dimension: 24 + 16 + 24 + 24 = 88
            outputs = [branch_pool, branch1x1, branch3x3, branch5x5]
            return torch.cat(outputs, dim=1)


    class Net(torch.nn.Module):

        def __init__(self):
            super().__init__()
            self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
            # conv2 takes the 88 channels produced by incep1
            self.conv2 = torch.nn.Conv2d(88, 20, kernel_size=5)
            self.incep1 = InceptionA(in_channels=10)
            self.incep2 = InceptionA(in_channels=20)
            self.mp = torch.nn.MaxPool2d(2)
            # 88 channels * 4 * 4 spatial positions = 1408 features
            self.fc = torch.nn.Linear(1408, 10)

        def forward(self, x):
            in_size = x.size(0)  # batch size
            x = F.relu(self.mp(self.conv1(x)))
            x = self.incep1(x)
            x = F.relu(self.mp(self.conv2(x)))
            x = self.incep2(x)
            x = x.view(in_size, -1)  # flatten to (batch, 1408)
            x = self.fc(x)
            return x

3 Results

The dataset is MNIST.
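Because MNIST images are 1 x 28 x 28, the two sizes hard-coded in `Net` (88 input channels for `conv2` and 1408 input features for `fc`) can be traced by hand. The sketch below does that arithmetic in plain Python using the standard output-size formula for convolution and pooling; the helper name `conv_out` is an assumption introduced for illustration and is not part of the original code.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output height/width of a convolution or pooling layer (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


# InceptionA concatenates its pool, 1x1, 3x3 and 5x5 branches along channels.
inception_channels = 24 + 16 + 24 + 24       # 88

size = 28                                    # MNIST input height and width
size = conv_out(size, kernel=5)              # conv1       -> 24
size = conv_out(size, kernel=2, stride=2)    # MaxPool2d(2) -> 12
# incep1 keeps the 12 x 12 spatial size (its branches are padded), 88 channels
size = conv_out(size, kernel=5)              # conv2       -> 8
size = conv_out(size, kernel=2, stride=2)    # MaxPool2d(2) -> 4
# incep2 keeps the 4 x 4 spatial size and again outputs 88 channels

fc_in_features = inception_channels * size * size
print(inception_channels, size, fc_in_features)   # 88 4 1408
```

If the input resolution or any kernel size changes, the value passed to `torch.nn.Linear` has to be recomputed the same way.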
