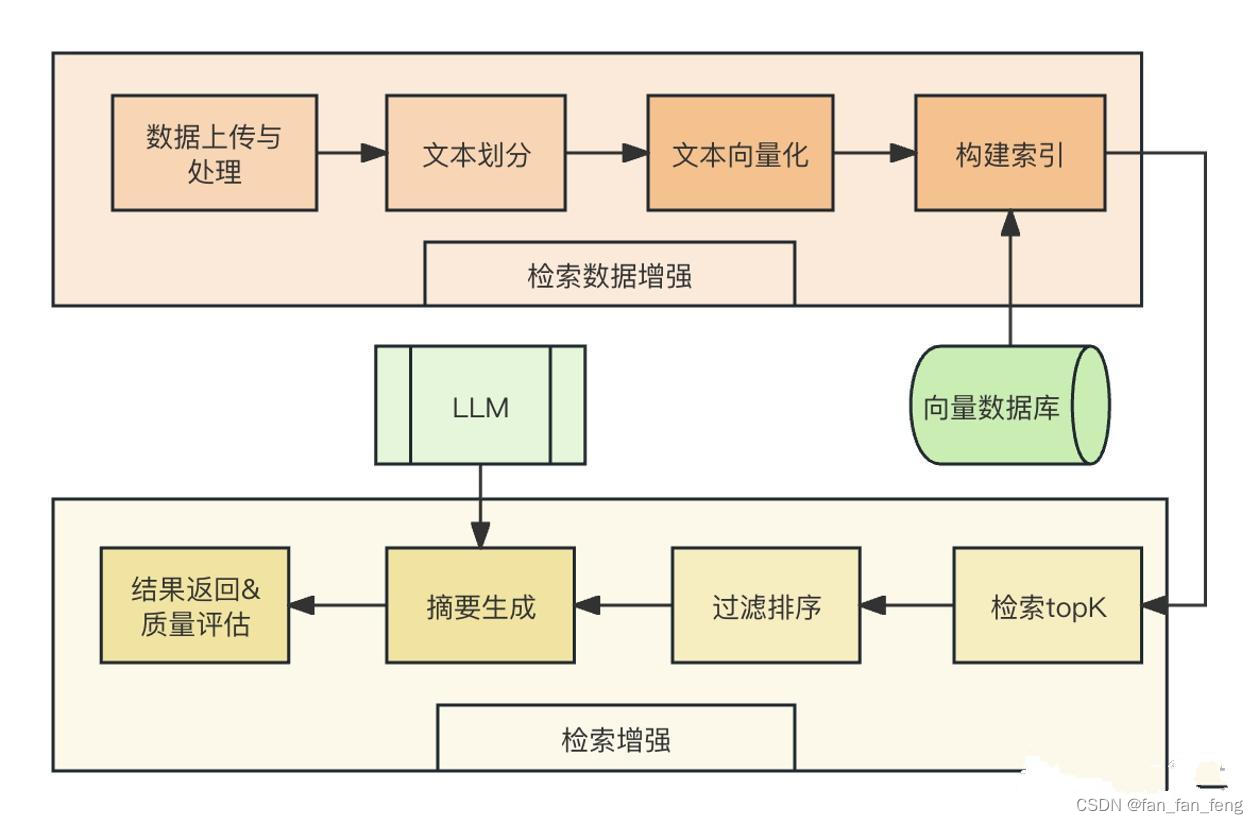

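1. Overall Architecture

The RAG retrieval pipeline consists of the following modules, as shown in the architecture diagram: data upload and processing, text splitting, text vectorization, index construction, retrieval, filtering and ranking, summary generation, and result processing and return.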
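To make the stages concrete, here is a minimal, dependency-free sketch of the splitting, vectorization, indexing, retrieval, and filtering steps. It is an illustration only, not the implementation used in this article: the function names (`split_text`, `embed`, `build_index`, `retrieve`, `build_prompt`) are hypothetical, and a bag-of-words count vector stands in for a real embedding model and vector store.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def split_text(text: str, chunk_size: int = 40) -> List[str]:
    """Text splitting: cut the document into chunks of at most chunk_size words."""
    words = text.split()
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), chunk_size)]


def embed(text: str) -> Counter:
    """Toy vectorization: bag-of-words counts (assumption: stands in for an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(chunks: List[str]) -> List[Tuple[str, Counter]]:
    """Index construction: store each chunk next to its vector."""
    return [(chunk, embed(chunk)) for chunk in chunks]


def retrieve(query: str, index: List[Tuple[str, Counter]],
             top_k: int = 2, min_score: float = 0.1) -> List[Tuple[float, str]]:
    """Retrieval, then filtering (min_score) and ranking (highest score first)."""
    q = embed(query)
    scored = [(cosine(q, vec), chunk) for chunk, vec in index]
    kept = [pair for pair in scored if pair[0] >= min_score]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return kept[:top_k]


def build_prompt(query: str, hits: List[Tuple[float, str]]) -> str:
    """Assemble the retrieved context into the prompt handed to the LLM."""
    context = "\n".join(chunk for _, chunk in hits)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


document = (
    "LangChain provides building blocks for LLM applications. "
    "A vector index stores embeddings of text chunks. "
    "Retrieval finds the chunks most similar to the query."
)
index = build_index(split_text(document, chunk_size=8))
question = "How does retrieval find similar chunks?"
print(build_prompt(question, retrieve(question, index)))
```

In a real system each function would be replaced by the corresponding component (document loader, text splitter, embedding model, vector store, reranker), but the data flow between the stages stays the same.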

2. Building RAG with LangChain

2.1 Customizing the LLM Module

As an example, this section implements a custom LLM component that supports the Zhipu AI API:

    from typing import Any, Dict, List, Mapping, Optional

    from langchain_core.callbacks.manager import CallbackManagerForLLMRun
    from langchain_core.language_models.llms import LLM
    from zhipuai import ZhipuAI


    # Inherits from langchain_core.language_models.llms.LLM
    class ZhipuAILLM(LLM):
        # Default model: Zhipu AI's GLM-4
        model: str = "glm-4"
        # Sampling temperature
        temperature: float = 0.1
        # API key
        api_key: Optional[str] = None

        def _call(self, prompt: str, stop: Optional[List[str]] = None,
                  run_manager: Optional[CallbackManagerForLLMRun] = None,
                  **kwargs: Any) -> str:
            def gen_glm_params(prompt):
                '''
                Build the `messages` request parameter for the GLM model.

                Args:
                    prompt: the user's prompt text
                '''
                messages = [{"role": "user", "content": prompt}]
                return messages

            client = ZhipuAI(api_key=self.api_key)
            messages = gen_glm_params(prompt)
            # Note: `stop` and `kwargs` are accepted for interface
            # compatibility but are not forwarded to the API.
            response = client.chat.completions.create(
                model=self.model,
                messages=messages,
                temperature=self.temperature,
            )
            if len(response.choices) > 0:
                return response.choices[0].message.content
            return "generate answer error"

        # Property that returns the default call parameters
        @property
        def _default_params(self) -> Dict[str, Any]:
            """Get the default parameters for calling the Zhipu AI API."""
            normal_params = {
                "temperature": self.temperature,
            }
            return {**normal_params}

        @property
        def _llm_type(self) -> str:
            # Identifier for this LLM type (the original "Wenxin" was a
            # leftover from a Baidu ERNIE wrapper)
            return "zhipuai"

        @property
        def _identifying_params(self) -> Mapping[str, Any]:
            """Get the identifying parameters."""
            return {**{"model": self.model}, **self._default_params}
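The request and response handling inside `_call` can be exercised offline, without an API key or network access. The sketch below is one way to do that under stated assumptions: `extract_answer`, `FakeCompletions`, and `fake_client` are hypothetical names introduced here for illustration, and the fake client only imitates the shape of the `client.chat.completions.create(...)` call used above. It is not part of the `zhipuai` SDK.

```python
from types import SimpleNamespace
from typing import Any, Dict, List


def gen_glm_params(prompt: str) -> List[Dict[str, str]]:
    """Same message construction as in `_call`: a single user turn."""
    return [{"role": "user", "content": prompt}]


def extract_answer(response: Any, fallback: str = "generate answer error") -> str:
    """Mirror the response handling in `_call`: first choice, else the fallback string."""
    if len(response.choices) > 0:
        return response.choices[0].message.content
    return fallback


class FakeCompletions:
    """Hypothetical stand-in for `client.chat.completions`; echoes the last user message."""

    def create(self, model: str, messages: List[Dict[str, str]], temperature: float) -> Any:
        reply = SimpleNamespace(content=f"[{model}] echo: {messages[-1]['content']}")
        return SimpleNamespace(choices=[SimpleNamespace(message=reply)])


# Assemble an object with the same attribute path as the real client.
fake_client = SimpleNamespace(chat=SimpleNamespace(completions=FakeCompletions()))

response = fake_client.chat.completions.create(
    model="glm-4", messages=gen_glm_params("Hello"), temperature=0.1
)
print(extract_answer(response))  # prints "[glm-4] echo: Hello"
```

With the real class, `ZhipuAILLM(api_key=...).invoke("Hello")` follows the same path, except that the request goes to the Zhipu AI service and therefore needs a valid key.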
