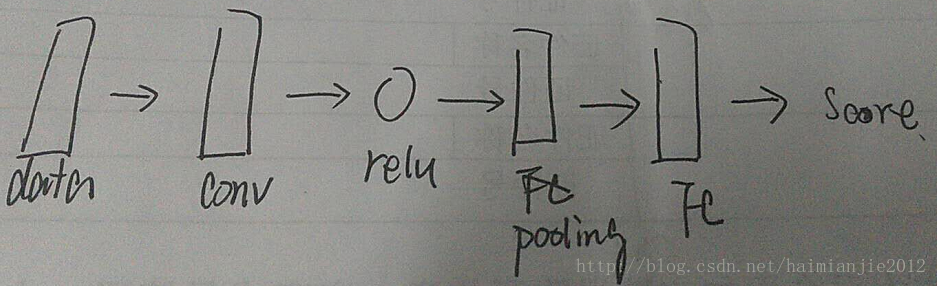

The CNN model defined in this example is shown below:

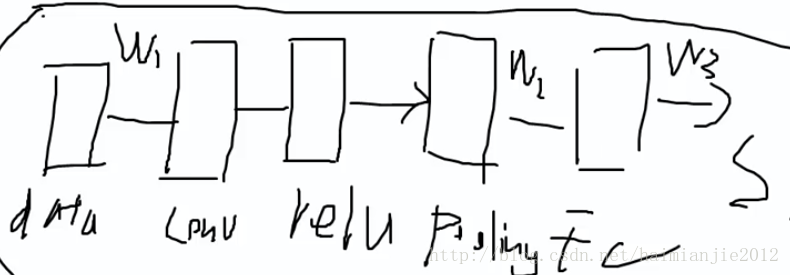

The __init__() function in cnn.py initializes the network's parameters (W1, W2, W3 and the corresponding biases). Here is its code:

```python
import numpy as np

class ThreeLayerConvNet(object):
    def __init__(self, input_dim=(3, 32, 32), num_filters=32, filter_size=7,
                 hidden_dim=100, num_classes=10, weight_scale=1e-3, reg=0.0,
                 dtype=np.float32):
        self.params = {}
        self.reg = reg
        self.dtype = dtype

        # Initialize weights and biases
        C, H, W = input_dim
        # W1: conv filters, shape (num_filters, C, filter_size, filter_size)
        self.params['W1'] = weight_scale * np.random.randn(num_filters, C, filter_size, filter_size)
        self.params['b1'] = np.zeros(num_filters)
        # W2: pooled feature map (num_filters * H/2 * W/2 values) -> hidden layer
        self.params['W2'] = weight_scale * np.random.randn(int(num_filters*H*W/4), hidden_dim)
        self.params['b2'] = np.zeros(hidden_dim)
        # W3: hidden layer -> class scores
        self.params['W3'] = weight_scale * np.random.randn(hidden_dim, num_classes)
        self.params['b3'] = np.zeros(num_classes)

        # Cast every parameter to the requested dtype (float32 by default)
        for k, v in self.params.items():
            self.params[k] = v.astype(dtype)
```

Frameworks such as Caffe and TensorFlow typically store data as float32, which is why the last parameter of __init__() defaults to dtype=np.float32.
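To check what __init__() produces with its default arguments, the sketch below repeats the same initialization as a standalone function and prints each parameter's shape and dtype. The name init_params is hypothetical (it is not part of cnn.py), and the last lines simply compare the memory used by W2 as float32 versus NumPy's default float64.

```python
import numpy as np

def init_params(input_dim=(3, 32, 32), num_filters=32, filter_size=7,
                hidden_dim=100, num_classes=10, weight_scale=1e-3,
                dtype=np.float32):
    """Hypothetical standalone copy of the weight setup in ThreeLayerConvNet.__init__."""
    C, H, W = input_dim
    params = {
        'W1': weight_scale * np.random.randn(num_filters, C, filter_size, filter_size),
        'b1': np.zeros(num_filters),
        'W2': weight_scale * np.random.randn(num_filters * H * W // 4, hidden_dim),
        'b2': np.zeros(hidden_dim),
        'W3': weight_scale * np.random.randn(hidden_dim, num_classes),
        'b3': np.zeros(num_classes),
    }
    # np.random.randn and np.zeros return float64, so cast to the requested dtype
    return {k: v.astype(dtype) for k, v in params.items()}

params = init_params()
for name, p in params.items():
    print(name, p.shape, p.dtype)

# float32 stores 4 bytes per element, float64 stores 8
w2_64 = params['W2'].astype(np.float64)
print(params['W2'].nbytes, w2_64.nbytes)  # 3276800 6553600
```

With the defaults, W2 alone holds 8192 × 100 weights, so storing it as float32 halves its memory footprint compared with float64.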

cnn.py also contains a second function, loss(); together, these two functions define the structure of the CNN. Here is the code of loss():

```python
import numpy as np
# Layer helpers from the CS231n assignment code
from cs231n.layers import *       # affine_forward/backward, softmax_loss, ...
from cs231n.layer_utils import *  # conv_relu_pool_*, affine_relu_*

# (method of ThreeLayerConvNet in cnn.py)
def loss(self, X, y=None):
    W1, b1 = self.params['W1'], self.params['b1']
    W2, b2 = self.params['W2'], self.params['b2']
    W3, b3 = self.params['W3'], self.params['b3']

    # pass conv_param to the forward pass for the convolutional layer
    filter_size = W1.shape[2]
    conv_param = {'stride': 1, 'pad': (filter_size - 1) // 2}

    # pass pool_param to the forward pass for the max-pooling layer
    pool_param = {'pool_height': 2, 'pool_width': 2, 'stride': 2}

    # compute the forward pass: conv - relu - 2x2 max pool - affine - relu - affine
    a1, cache1 = conv_relu_pool_forward(X, W1, b1, conv_param, pool_param)
    a2, cache2 = affine_relu_forward(a1, W2, b2)
    scores, cache3 = affine_forward(a2, W3, b3)

    # test mode: no labels given, so just return the class scores
    if y is None:
        return scores

    # compute the backward pass
    data_loss, dscores = softmax_loss(scores, y)
    da2, dW3, db3 = affine_backward(dscores, cache3)
    da1, dW2, db2 = affine_relu_backward(da2, cache2)
    dX, dW1, db1 = conv_relu_pool_backward(da1, cache1)

    # Add L2 regularization: the gradient of 0.5 * reg * sum(W**2) is reg * W
    dW1 += self.reg * W1
    dW2 += self.reg * W2
    dW3 += self.reg * W3
    reg_loss = 0.5 * self.reg * sum(np.sum(W * W) for W in [W1, W2, W3])

    loss = data_loss + reg_loss
    grads = {'W1': dW1, 'b1': db1, 'W2': dW2, 'b2': db2, 'W3': dW3, 'b3': db3}
    return loss, grads
```

From loss() we can see that the convolutional layer's parameters are conv_param = {'stride': 1, 'pad': (filter_size - 1) // 2}.

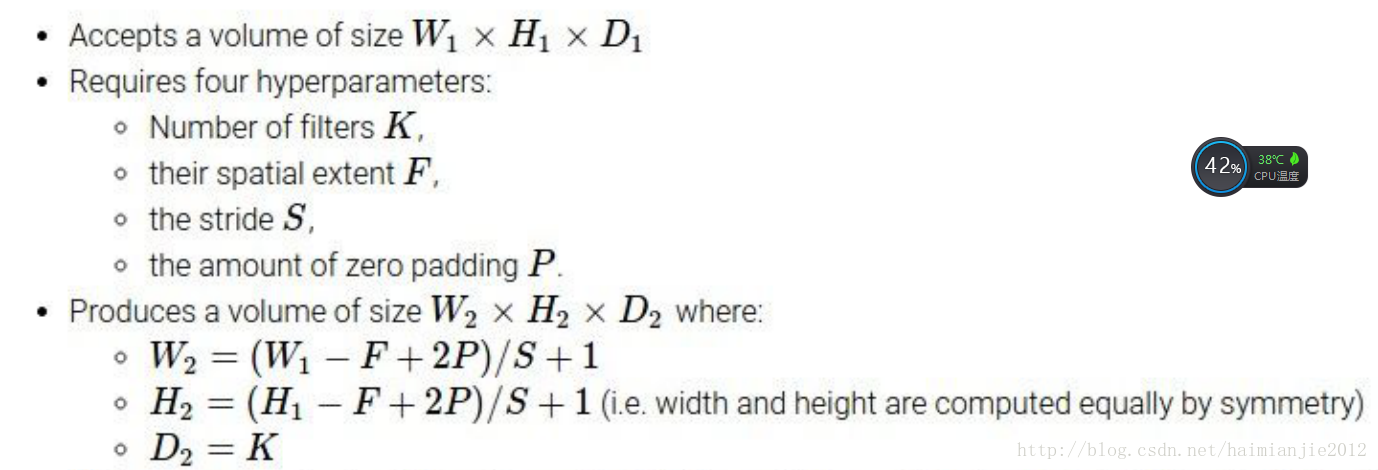

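filter_size defaults to 7 in __init__(), so pad = (7 - 1) / 2 = 3. By Formula 1, the output height and width of the conv layer are (32 - 7 + 2*3)/1 + 1 = 32, so the conv layer's output is 32*32*32, where the three 32s are the number of filters, the height, and the width.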
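The arithmetic above can be wrapped in a small helper. This sketch assumes that Formula 1 in the figure is the standard output-size relation (W - F + 2P)/S + 1, which is what the numbers in the text use; the function name conv_output_size is hypothetical.

```python
def conv_output_size(in_size, filter_size, pad, stride):
    """Output height/width of a conv or pooling layer: (W - F + 2P) / S + 1."""
    numerator = in_size - filter_size + 2 * pad
    if numerator % stride != 0:
        raise ValueError("filter size, pad and stride do not tile the input evenly")
    return numerator // stride + 1

filter_size = 7
pad = (filter_size - 1) // 2           # same rule as conv_param in loss()
out = conv_output_size(32, filter_size, pad, stride=1)
print(pad, out)                        # 3 32

num_filters = 32
print((num_filters, out, out))         # (32, 32, 32)

# With stride 1, pad = (F - 1) // 2 keeps the spatial size unchanged for any odd F
for f in (3, 5, 7):
    assert conv_output_size(32, f, (f - 1) // 2, 1) == 32
```

This is why loss() derives pad from filter_size instead of hard-coding it: for odd filter sizes and stride 1 the convolution preserves the 32×32 spatial size.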

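In loss(), the pooling layer's parameters are pool_param = {'pool_height': 2, 'pool_width': 2, 'stride': 2}.

Likewise, by Formula 1 the output height and width of the pooling layer are (32-2+2*0)/2+1=16, so the pooling layer's output is 32*16*16. In other words, pooling halves both the height and the width.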
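A naive max-pooling loop makes the halving concrete. This is an illustrative re-implementation under the pool_param values above (2×2 window, stride 2), not the assignment's own pooling function; the name max_pool_naive is hypothetical.

```python
import numpy as np

def max_pool_naive(x, pool_height=2, pool_width=2, stride=2):
    """Naive max pooling over an input of shape (N, C, H, W)."""
    N, C, H, W = x.shape
    H_out = (H - pool_height) // stride + 1
    W_out = (W - pool_width) // stride + 1
    out = np.empty((N, C, H_out, W_out), dtype=x.dtype)
    for i in range(H_out):
        for j in range(W_out):
            hs, ws = i * stride, j * stride
            window = x[:, :, hs:hs + pool_height, ws:ws + pool_width]
            out[:, :, i, j] = window.max(axis=(2, 3))  # max over each 2x2 window
    return out

# One image after conv + ReLU: 32 filters, 32x32 spatial size
conv_out = np.random.randn(1, 32, 32, 32).astype(np.float32)
pooled = max_pool_naive(conv_out)
print(pooled.shape)  # (1, 32, 16, 16)

# Small example: each 2x2 block of a 4x4 grid is reduced to its maximum
x = np.arange(16).reshape(1, 1, 4, 4)
print(max_pool_naive(x)[0, 0])  # maxima 5, 7, 13, 15
```

Because the window and stride are both 2, the windows do not overlap, and each output pixel summarizes exactly one 2×2 block of the input.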

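Therefore, when __init__() initializes W2, the weights between the pooling layer and the fully connected (FC) layer, it uses self.params['W2'] = weight_scale * np.random.randn(int(num_filters*H*W/4), hidden_dim). Here num_filters*H*W/4 = 32*32*32/4 = 8192, which is exactly the size of the pooling layer's output, 32*16*16.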
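The sketch below checks that W2's first dimension matches the flattened pooling output and runs the affine + ReLU step it feeds. The mini-batch size N = 5 and the random inputs are arbitrary assumptions for illustration; the reshape to (N, -1) is what turns each 32×16×16 feature map into one 8192-element row.

```python
import numpy as np

num_filters, H, W = 32, 32, 32
hidden_dim = 100
fan_in = int(num_filters * H * W / 4)        # same expression as in __init__()
print(fan_in, 32 * 16 * 16)                  # 8192 8192

N = 5                                        # hypothetical mini-batch size
pooled = np.random.randn(N, num_filters, H // 2, W // 2)   # pooling output (N, 32, 16, 16)
W2 = 1e-3 * np.random.randn(fan_in, hidden_dim)
b2 = np.zeros(hidden_dim)

# Flatten each example's feature map into one row, then apply affine + ReLU
flat = pooled.reshape(N, -1)                 # (5, 8192)
hidden = np.maximum(0, flat.dot(W2) + b2)    # (5, 100)
print(flat.shape, hidden.shape)
```

If H or W were odd, H*W/4 would no longer equal the pooled size, so this initialization implicitly assumes even input dimensions.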
