This section walks through the code for the ModulatedLinear modulation module of the VITGAN generative adversarial network.

Contents (links will be added once the articles are published):

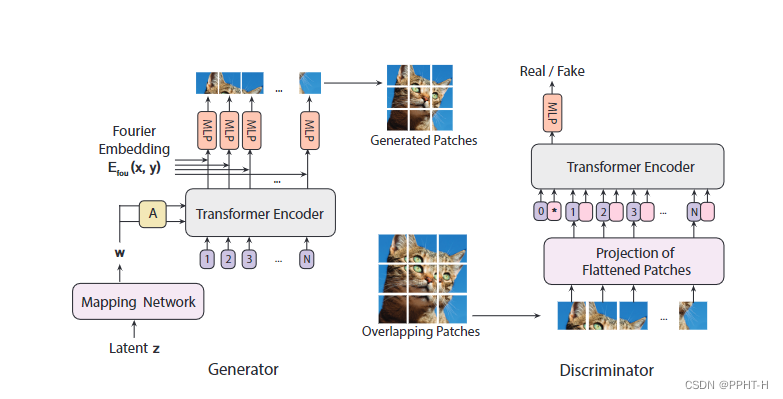

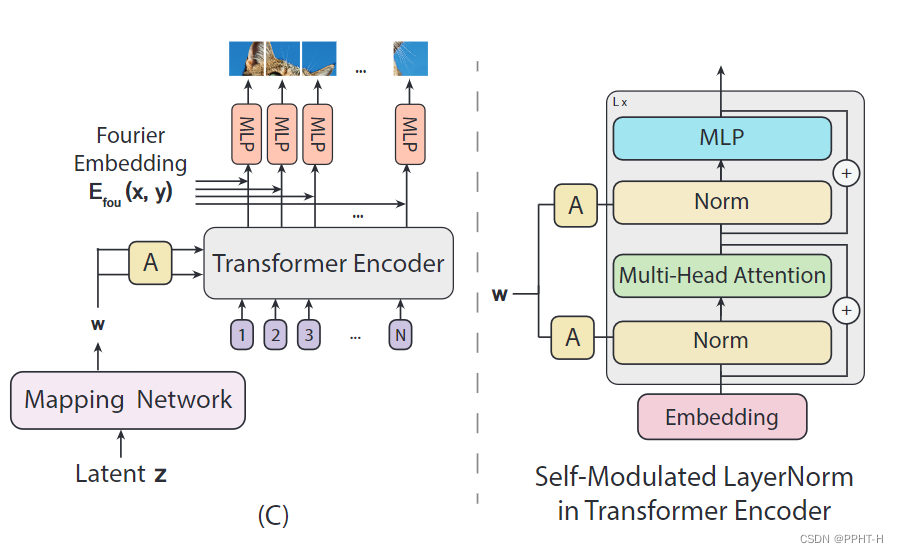

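- Network architecture overview

- Mapping Network implementation

- PositionalEmbedding implementation

- MLP implementation

- MSA (multi-head attention) implementation

- SLN (self-modulation) implementation

- CoordinatesPositionalEmbedding implementation

- ModulatedLinear implementation

- Siren implementation

- Generator implementation

- PatchEmbedding implementation

- ISN implementation

- Discriminator implementation

- VITGAN implementation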

ModulatedLinear modulation module: overview

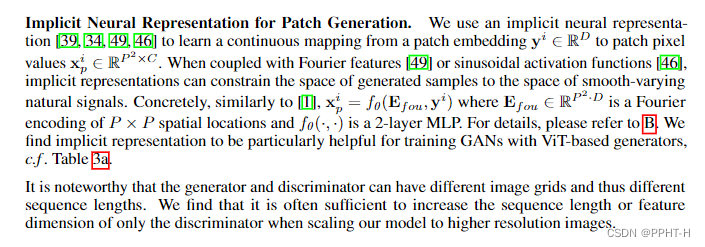

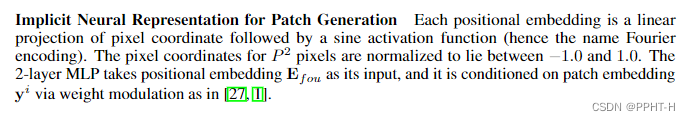

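The ModulatedLinear module maps 2D positional information (the Fourier embedding $E_{fou}$ of the pixel coordinates) into the model's features, with its weights modulated by the patch embedding $y^i$, which adds fine detail to the output. It implements the $f_\theta(E_{fou}, y^i)$ term, which corresponds to the Fourier Embedding part of the figure.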
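For reference, the weight computation in `call` in the code below can be written out as follows. This is a sketch of what that code computes (StyleGAN2-style weight modulation and demodulation), not a formula quoted from the paper. The symbols are introduced here for illustration: $d$ is `hidden_dim`, $W_s$ is the `style_to_mod` Dense layer, $w$ is the shared weight `self.weight`, $p$ indexes the $P \times P$ pixel positions, and $\epsilon = 10^{-8}$.

$$
s^i = W_s\, y^i \in \mathbb{R}^{d}, \qquad
w'_{jk} = \frac{1}{\sqrt{d}}\, w_{jk}\, s^i_j, \qquad
w''_{jk} = \frac{w'_{jk}}{\sqrt{\sum_{j'} \left(w'_{j'k}\right)^2} + \epsilon}
$$

$$
o^i_{p,k} = \sum_{j} \left(E_{fou}\right)_{p,j}\, w''_{jk}
$$

Because demodulation divides each output column $k$ by its own norm, the constant $1/\sqrt{d}$ cancels (up to $\epsilon$) whenever demodulation is enabled; it only affects the output when `demodulation=False`.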

Note: this code may contain mistakes. Corrections in the comments are very welcome!

Code implementation

```python
import math

import tensorflow as tf


class ModulatedLinear(tf.keras.layers.Layer):
    """Linear layer whose shared weight is modulated (and optionally
    demodulated) per sample by a style vector, as in StyleGAN2."""

    def __init__(
        self,
        hidden_dim,
        output_dim,
        demodulation=True,
        use_bias=False,
        kernel_initializer="glorot_uniform",
        **kwargs,
    ):
        super().__init__(**kwargs)
        self.hidden_dim = hidden_dim
        self.output_dim = output_dim
        self.demodulation = demodulation
        self.use_bias = use_bias  # only used by the style projection below
        self.kernel_initializer = tf.keras.initializers.get(kernel_initializer)

    def build(self, inputs_shape):
        # 1/sqrt(fan_in) scaling; it cancels out when demodulation is on
        self.scale = 1 / math.sqrt(self.hidden_dim)
        # Shared base weight, broadcast over the batch: (1, hidden_dim, output_dim)
        self.weight = self.add_weight(
            name="w",
            shape=[1, self.hidden_dim, self.output_dim],
            dtype=tf.float32,
            initializer=self.kernel_initializer,
        )
        # Projects each patch's style vector y^i to one scale per input channel
        self.style_to_mod = tf.keras.layers.Dense(
            self.hidden_dim,
            use_bias=self.use_bias,
            kernel_initializer=self.kernel_initializer,
        )

    def call(self, inputs):
        """
        inputs: (x, style)
            x:     (B*L, P*P, hidden_dim)  Fourier embedding E_fou
            style: (B, L, E), E = P*P*C    patch embeddings y^i
        returns:   (B*L, P*P, output_dim)
        """
        # Keras may turn a tuple of inputs into a list, so accept both
        assert isinstance(inputs, (tuple, list)) and len(inputs) == 2
        x, style = inputs
        batch_size = tf.shape(x)[0]  # B*L

        # Modulation: scale the input channels of the shared weight per sample
        style = self.style_to_mod(style)  # (B, L, hidden_dim)
        style = tf.reshape(style, [batch_size, self.hidden_dim, 1])  # (B*L, hidden_dim, 1)
        weight = self.scale * self.weight * style  # (B*L, hidden_dim, output_dim)

        # Demodulation: give each output column unit L2 norm (previously
        # applied unconditionally, ignoring the `demodulation` flag)
        if self.demodulation:
            weight = weight / (
                tf.math.reduce_euclidean_norm(weight, axis=1, keepdims=True) + 1e-8
            )

        # Batched matmul with one weight matrix per sample
        return tf.matmul(x, weight)  # (B*L, P*P, output_dim)


if __name__ == "__main__":
    output_dim = 3    # RGB channels per pixel
    hidden_dim = 768  # 16*16*3

    kernel_initializer = tf.random_uniform_initializer(
        -1 / output_dim,
        1 / output_dim,
    )

    layer = ModulatedLinear(
        hidden_dim=hidden_dim,
        output_dim=output_dim,
        kernel_initializer=kernel_initializer,  # was defined but never passed
    )

    e_fou = tf.random.uniform([2 * 196, 256, 768], dtype=tf.float32)  # (B*L, P*P, hidden_dim)
    y = tf.random.uniform([2, 196, 768], dtype=tf.float32)            # (B, L, E)

    o1 = layer((e_fou, y))
    tf.print("o1:", tf.shape(o1))  # (B*L, P*P, output_dim) = (392, 256, 3)
```
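As a sanity check on the weight computation in `call`, the NumPy sketch below reproduces the layer's math with a fixed weight and compares it with an equivalent formulation: scale the inputs by the style, run one shared matmul, then divide the outputs by the demodulation factor. This is not part of the original code, and the function names `modulated_linear_batched` and `modulated_linear_fused` are hypothetical. The second form avoids materialising one `(hidden_dim, output_dim)` matrix per sample (392 copies of a 768×3 weight in the test above), which is the same idea as the activation-scaling path found in some StyleGAN2 implementations.

```python
import numpy as np


def modulated_linear_batched(x, s, w, eps=1e-8):
    """Mirrors the TF layer: one modulated weight matrix per sample.
    x: (N, P, H) inputs, s: (N, H) modulation vectors, w: (H, O) shared weight."""
    scale = 1.0 / np.sqrt(w.shape[0])
    weight = scale * w[None, :, :] * s[:, :, None]               # (N, H, O)
    norm = np.sqrt(np.sum(weight ** 2, axis=1, keepdims=True))   # (N, 1, O) column norms
    weight = weight / (norm + eps)                               # demodulate
    return np.matmul(x, weight)                                  # (N, P, O)


def modulated_linear_fused(x, s, w, eps=1e-8):
    """Same result without building N weight matrices: scale the inputs
    by s, share a single matmul, then rescale each output column."""
    scale = 1.0 / np.sqrt(w.shape[0])
    out = scale * np.matmul(x * s[:, None, :], w)                # (N, P, O)
    # Column norm of the modulated weight: scale * sqrt(sum_j s_j^2 * w_jk^2)
    norm = scale * np.sqrt(np.matmul(s ** 2, w ** 2))            # (N, O)
    return out / (norm[:, None, :] + eps)


rng = np.random.default_rng(0)
N, P, H, O = 4, 16, 32, 3  # small stand-ins for B*L, P*P, hidden_dim, output_dim
x = rng.standard_normal((N, P, H))
s = rng.standard_normal((N, H))
w = rng.standard_normal((H, O))

a = modulated_linear_batched(x, s, w)
b = modulated_linear_fused(x, s, w)
print(a.shape, np.allclose(a, b))  # (4, 16, 3) True
```

Whether the fused form is actually faster in TensorFlow depends on the shapes and hardware; it is shown here only to make the equivalence explicit.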
