The model and data are as follows:

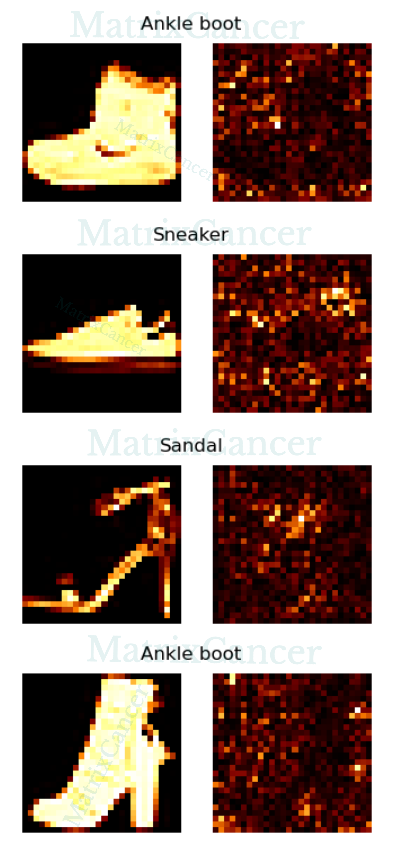

Dataset: Fashion-MNIST

Initialization: torch.nn.init.kaiming_uniform_

Loss function: torch.nn.CrossEntropyLoss

Optimizer: torch.optim.AdamW
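To make the initialization concrete, the range that `kaiming_uniform_` samples from can be worked out by hand. The sketch below assumes the function's default arguments (`a=0`, `mode='fan_in'`, `nonlinearity='leaky_relu'`), which the post does not state, and uses layer shapes taken from the network listed below. The helper names `kaiming_uniform_bound` and `conv_fan_in` are made up for this illustration and are not part of PyTorch.

```python
import math


def kaiming_uniform_bound(fan_in: int, a: float = 0.0) -> float:
    """Half-width of U(-bound, bound) for a leaky-ReLU gain with slope a."""
    gain = math.sqrt(2.0 / (1.0 + a ** 2))
    return gain * math.sqrt(3.0 / fan_in)


def conv_fan_in(in_channels: int, groups: int, kernel_size: int) -> int:
    """fan_in of a grouped 2D conv: each filter sees in_channels / groups inputs."""
    return (in_channels // groups) * kernel_size * kernel_size


# fan_in per layer, using the shapes from the Net class in this post
layers = {
    "conv1": conv_fan_in(1, 1, 3),    # 9
    "conv2": conv_fan_in(16, 4, 3),   # 36
    "conv3": conv_fan_in(32, 4, 3),   # 72
    "fc1": 128 * 3 * 3,               # 1152
    "fc5": 64,
}

bounds = {name: kaiming_uniform_bound(f) for name, f in layers.items()}

for name, bound in bounds.items():
    print(f"{name}: fan_in={layers[name]}, bound={bound:.4f}")
```

With `groups=4`, the grouped convolutions have a smaller fan_in than an ungrouped layer of the same width, so their initial weights are drawn from a wider range.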

Network structure:

    import torch.nn as nn


    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            # Three 3x3 convolutions; padding=1 keeps the spatial size unchanged.
            self.conv1 = nn.Conv2d(
                in_channels=1, out_channels=16, kernel_size=3,
                padding=1, padding_mode='zeros', stride=1, dilation=1,
                groups=1, bias=True,
            )
            self.conv2 = nn.Conv2d(
                in_channels=16, out_channels=32, kernel_size=3,
                padding=1, padding_mode='circular', stride=1, dilation=1,
                groups=4, bias=True,
            )
            self.conv3 = nn.Conv2d(
                in_channels=32, out_channels=128, kernel_size=3,
                padding=1, padding_mode='circular', stride=1, dilation=1,
                groups=4, bias=True,
            )
            # self.pool = nn.MaxPool2d(
            #     kernel_size=2, padding=0, stride=2, dilation=1,
            #     return_indices=False, ceil_mode=False,
            # )
            # 2x2 average pooling halves the spatial size (rounding down).
            self.pool = nn.AvgPool2d(
                kernel_size=2, padding=0, stride=2,
                count_include_pad=True, ceil_mode=False,
            )
            # Fully connected classifier head: 1152 -> 512 -> 256 -> 128 -> 64 -> 10.
            self.fc1 = nn.Linear(128*3*3, 512)
            self.fc2 = nn.Linear(512, 256)
            self.fc3 = nn.Linear(256, 128)
            self.fc4 = nn.Linear(128, 64)
            self.fc5 = nn.Linear(64, 10)
            self.relu = nn.ReLU()
            self.dropout = nn.Dropout(p=0.6)

        def forward(self, x):
            x = x.view(x.size(0), 1, 28, 28)
            # shape: (N, 1, 28, 28)
            x = self.conv1(x)
            x = self.relu(x)
            x = self.pool(x)
            # shape: (N, 16, 14, 14)
            x = self.conv2(x)
            x = self.relu(x)
            x = self.pool(x)
            x = self.dropout(x)
            # shape: (N, 32, 7, 7)
            x = self.conv3(x)
            x = self.relu(x)
            x = self.pool(x)
            # shape: (N, 128, 3, 3)
            x = x.view(x.size(0), 128*3*3)
            x = self.fc1(x)
            x = self.relu(x)
            x = self.fc2(x)
            x = self.relu(x)
            x = self.dropout(x)
            x = self.fc3(x)
            x = self.relu(x)
            x = self.dropout(x)
            x = self.fc4(x)
            x = self.relu(x)
            x = self.dropout(x)
            x = self.fc5(x)
            # Note: this ReLU clamps the logits passed to CrossEntropyLoss
            # to be non-negative.
            x = self.relu(x)
            return x
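The shape comments in `forward` can be checked with plain arithmetic. The following is a minimal sketch using the standard output-size formulas for convolution and pooling (floor rounding, since `ceil_mode=False`); the helper names `conv_out`, `pool_out`, and `conv_params` are hypothetical and exist only for this illustration. It also counts the parameters of the grouped convolutions.

```python
def conv_out(size, kernel=3, padding=1, stride=1, dilation=1):
    """Spatial output size of a conv layer (zero and circular padding behave alike here)."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


def pool_out(size, kernel=2, padding=0, stride=2):
    """Spatial output size of a pooling layer with ceil_mode=False."""
    return (size + 2 * padding - kernel) // stride + 1


def conv_params(in_ch, out_ch, kernel, groups, bias=True):
    """Weight and bias count of a grouped 2D convolution."""
    return out_ch * (in_ch // groups) * kernel * kernel + (out_ch if bias else 0)


# Follow a 28x28 input through the three conv + pool stages.
size = 28
trace = []
for out_channels in (16, 32, 128):
    size = pool_out(conv_out(size))
    trace.append((out_channels, size, size))

# Number of features fed into fc1 after flattening.
flat_features = trace[-1][0] * trace[-1][1] * trace[-1][2]

params = {
    "conv1": conv_params(1, 16, 3, groups=1),
    "conv2": conv_params(16, 32, 3, groups=4),
    "conv3": conv_params(32, 128, 3, groups=4),
}

print(trace)
print(flat_features)
print(params)
```

The last pooling step maps 7 to 3 because the size is rounded down, which is why `fc1` takes `128*3*3` inputs.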
