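Chapter 5: Classic Neural Networks

1. Fully connected layers

Fully connected NN: every neuron is connected to every neuron in the adjacent layers before and after it. The input is the features and the output is the prediction.

Number of parameters: Σ over all layers of (previous-layer neurons × next-layer neurons for the weights w + next-layer neurons for the biases b)

In practice this often yields too many parameters, so features are first extracted from the raw image and the extracted features are then fed to the fully connected network.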
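The parameter formula can be checked with a few lines of plain Python. This is a minimal sketch: the helper name `dense_param_count` and the layer sizes (a 28x28 grayscale input, one hidden layer of 128 neurons, 10 classes) are assumptions chosen for illustration.

```python
def dense_param_count(layer_sizes):
    """Count weights and biases of a fully connected network.

    layer_sizes lists the neuron count of every layer, input layer first.
    """
    total = 0
    for prev, nxt in zip(layer_sizes, layer_sizes[1:]):
        total += prev * nxt + nxt  # weights w + biases b of one layer
    return total


# Hypothetical network: 784 inputs -> 128 hidden -> 10 outputs
print(dense_param_count([28 * 28, 128, 10]))  # 101770
```

Even this small network needs about 100,000 parameters, almost all of them in the first layer, which is why feature extraction is done before the fully connected part.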

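2. Convolutional networks (CBAPD)

Basics

- Convolution is an effective way to extract image features. A kernel, usually square, slides over the input feature map with a given stride until it has visited every pixel. At each step the kernel overlaps a region of the input feature map; the overlapping elements are multiplied pairwise, summed, and the bias is added, which gives one pixel of the output feature map.

- The depth (number of channels) of the input feature map determines the depth of the kernels in the current layer.

The number of kernels in the current layer determines the depth of that layer's output feature map.

Using more kernels increases the feature extraction capacity of the layer.

- Receptive field: the size of the region of the original input image that each pixel of an output feature map maps to.

① Two 3*3 filters: 5*5------>3*3------>1*1

② One 5*5 filter: 5*5------>1*1

Both have the same receptive field (5), so they have the same feature extraction capability.

Which one to choose depends on the parameter count and the amount of computation.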
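The trade-off can be made concrete by counting parameters and multiplications for both options. The sketch below assumes a single-channel x-by-x input, stride 1, no padding, and ignores biases; the function names are made up for this illustration.

```python
def stacked_3x3_cost(x):
    """(parameters, multiplications) for two stacked 3x3 kernels on an x-by-x map."""
    params = 9 + 9
    # The first kernel produces (x-2)^2 outputs, the second (x-4)^2, each costing 9 multiplications
    mults = 9 * (x - 2) ** 2 + 9 * (x - 4) ** 2
    return params, mults


def single_5x5_cost(x):
    """(parameters, multiplications) for one 5x5 kernel on an x-by-x map."""
    params = 25
    mults = 25 * (x - 4) ** 2  # (x-4)^2 outputs, 25 multiplications each
    return params, mults


for x in (8, 10, 32):
    print(x, stacked_3x3_cost(x), single_5x5_cost(x))
```

Under these assumptions the two options cost the same number of multiplications at x = 10; for larger feature maps the two stacked 3*3 kernels are cheaper, and they always use fewer parameters (18 versus 25).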

- Zero padding (padding): padding='SAME' | 'VALID'
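The sliding-window computation and the effect of the two padding modes can be sketched in plain Python. This is an illustrative single-channel version on nested lists, not how TensorFlow implements it; `conv2d` and its arguments are names assumed here. With 'same' padding the output width is ceil(input / stride); with 'valid' it is floor((input - kernel) / stride) + 1.

```python
import math


def conv2d(image, kernel, bias=0.0, stride=1, padding="valid"):
    """Single-channel 2D convolution (no kernel flip, as in deep learning frameworks)."""
    k = len(kernel)
    h, w = len(image), len(image[0])
    if padding == "same":
        out_h, out_w = math.ceil(h / stride), math.ceil(w / stride)
        pad_h = max((out_h - 1) * stride + k - h, 0)
        pad_w = max((out_w - 1) * stride + k - w, 0)
        top, left = pad_h // 2, pad_w // 2
        # Copy the image into a larger all-zero canvas
        padded = [[0.0] * (w + pad_w) for _ in range(h + pad_h)]
        for r in range(h):
            for c in range(w):
                padded[r + top][c + left] = image[r][c]
        image = padded
    else:
        out_h = (h - k) // stride + 1
        out_w = (w - k) // stride + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = bias  # multiply overlapping elements, sum, add the bias
            for u in range(k):
                for v in range(k):
                    acc += image[i * stride + u][j * stride + v] * kernel[u][v]
            row.append(acc)
        out.append(row)
    return out


img = [[1.0] * 5 for _ in range(5)]
ker = [[1.0] * 3 for _ in range(3)]
print(len(conv2d(img, ker, padding="valid")))  # 3: the 5x5 map shrinks to 3x3
print(len(conv2d(img, ker, padding="same")))   # 5: the 5x5 map keeps its size
```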

Main building blocks of a convolutional neural network:

C: Conv2D

B: BatchNormalization

A: Activation

P: Pooling

D: Dropout

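C: Convolution layer

    tf.keras.layers.Conv2D(
        filters=number_of_kernels,
        kernel_size=kernel_size,  # an integer for a square kernel, or (kernel height h, kernel width w)
        strides=stride,  # an integer if equal in both directions, or (vertical stride h, horizontal stride w); default 1
        padding='same' | 'valid',  # 'same' pads with zeros, 'valid' (default) does not
        activation='relu' | 'sigmoid' | 'tanh' | 'softmax' | ...,  # leave out if a BN layer follows
        input_shape=(height, width, channels)  # shape of the input feature map; optional
    )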

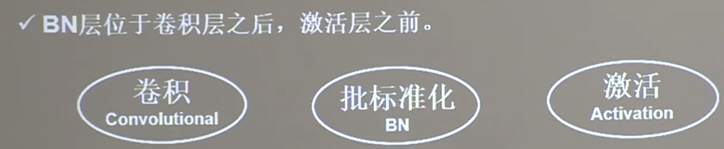

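B: Batch normalization (BN)

Standardization: transform the data so that it follows a distribution with mean 0 and standard deviation 1.

Batch normalization: standardize over a mini-batch of data.

It is applied to the i-th pixel of the output feature map of the k-th kernel, using the mean and standard deviation of that kernel's feature maps over the batch.

Usage

    tf.keras.layers.BatchNormalization()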
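As a rough illustration of what the layer computes in training mode, the sketch below standardizes the values of one channel over a batch and then applies a scale and an offset. The names `gamma`, `beta` and `eps` are assumptions of this sketch (the Keras layer has learnable scale and offset parameters and a small epsilon for numerical stability); the moving averages used at inference time are left out.

```python
import math


def batch_norm(values, gamma=1.0, beta=0.0, eps=1e-3):
    """Standardize one channel's values over a batch, then scale and shift."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in values]


out = batch_norm([1.0, 2.0, 3.0, 4.0])
print(out)  # values centred on 0 with a variance close to 1
```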

A: Activation layer (Activation)

    # 'relu', 'softmax', 'tanh', etc.
    tf.keras.layers.Activation('relu')

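P: Pooling layer (Pooling)

Pooling reduces the number of features. Max pooling extracts image texture, while average pooling preserves background features.

- Max pooling

      tf.keras.layers.MaxPool2D(
          pool_size=pool_size,  # an integer for a square window, or (window height h, window width w)
          strides=stride,  # an integer, or (vertical h, horizontal w); defaults to pool_size
          padding='valid' | 'same'  # default is 'valid'
      )

- Average pooling

      tf.keras.layers.AveragePooling2D(
          pool_size=pool_size,  # an integer for a square window, or (window height h, window width w)
          strides=stride,  # an integer, or (vertical h, horizontal w); defaults to pool_size
          padding='valid' | 'same'  # default is 'valid'
      )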
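The difference between the two pooling modes is easy to see on a small feature map. The following is a plain-Python sketch with 'valid' padding on a single channel; `pool2d` and the sample values are made up for this illustration.

```python
def pool2d(feature_map, size=2, stride=2, mode="max"):
    """Pool a single-channel feature map (nested lists) without padding."""
    h, w = len(feature_map), len(feature_map[0])
    out = []
    for i in range(0, h - size + 1, stride):
        row = []
        for j in range(0, w - size + 1, stride):
            window = [feature_map[i + u][j + v]
                      for u in range(size) for v in range(size)]
            row.append(max(window) if mode == "max" else sum(window) / len(window))
        out.append(row)
    return out


fmap = [[1, 1, 2, 4],
        [5, 6, 7, 8],
        [3, 2, 1, 0],
        [1, 2, 3, 4]]
print(pool2d(fmap, mode="max"))  # [[6, 8], [3, 4]]
print(pool2d(fmap, mode="avg"))  # [[3.25, 5.25], [2.0, 2.0]]
```

A 2x2 window with stride 2 keeps one value per window, so the 4x4 map shrinks to 2x2 in both modes.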

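D: Dropout

During training, a fraction of the neurons is temporarily dropped from the network with a given probability. When the network is used for inference, the dropped neurons are reconnected.

Usage

    tf.keras.layers.Dropout(drop_probability)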
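The behaviour can be sketched in plain Python as follows. This is an illustrative version, not the Keras implementation: the function name and the `rng` argument are assumptions. It follows the documented Keras convention that during training the kept values are scaled by 1 / (1 - rate), so that the expected sum stays the same, and that nothing is dropped at inference time.

```python
import random


def dropout(values, rate, training, rng=random):
    """Zero each value with probability `rate` while training; identity otherwise."""
    if not training or rate == 0.0:
        return list(values)
    keep = 1.0 - rate
    # Kept values are scaled up so the expected activation is unchanged
    return [v / keep if rng.random() >= rate else 0.0 for v in values]


activations = [1.0] * 10
print(dropout(activations, 0.2, training=True, rng=random.Random(0)))
print(dropout(activations, 0.2, training=False))  # unchanged at inference time
```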

Summary of usage

    model = tf.keras.models.Sequential([
        Conv2D(filters=6, kernel_size=(5, 5), padding='same'),  # convolution layer
        BatchNormalization(),  # batch normalization layer
        Activation('relu'),  # activation layer
        MaxPool2D(pool_size=(2, 2), strides=2, padding='same'),  # pooling layer
        Dropout(0.2),  # dropout layer
    ])

Example

    import os

    import numpy as np
    import tensorflow as tf
    from matplotlib import pyplot as plt
    from tensorflow.keras import Model
    from tensorflow.keras.layers import Conv2D, BatchNormalization, Activation, MaxPool2D, Dropout, Flatten, Dense

    np.set_printoptions(threshold=np.inf)

    # Load CIFAR-10 and scale the pixel values to [0, 1]
    cifar10 = tf.keras.datasets.cifar10
    (x_train, y_train), (x_test, y_test) = cifar10.load_data()
    x_train, x_test = x_train / 255.0, x_test / 255.0

    class Baseline(Model):
        def __init__(self):
            super(Baseline, self).__init__()
            self.c1 = Conv2D(filters=6, kernel_size=(5, 5), padding='same')  # convolution layer
            self.b1 = BatchNormalization()  # BN layer
            self.a1 = Activation('relu')  # activation layer
            self.p1 = MaxPool2D(pool_size=(2, 2), strides=2, padding='same')  # pooling layer
            self.d1 = Dropout(0.2)  # dropout layer

            self.flatten = Flatten()
            self.f1 = Dense(128, activation='relu')
            self.d2 = Dropout(0.2)
            self.f2 = Dense(10, activation='softmax')

        def call(self, x):
            x = self.c1(x)
            x = self.b1(x)
            x = self.a1(x)
            x = self.p1(x)
            x = self.d1(x)

            x = self.flatten(x)
            x = self.f1(x)
            x = self.d2(x)
            y = self.f2(x)
            return y

    model = Baseline()

    model.compile(optimizer='adam',
                  loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=False),
                  metrics=['sparse_categorical_accuracy'])

    checkpoint_save_path = "./checkpoint/Baseline.ckpt"
    if os.path.exists(checkpoint_save_path + '.index'):
        print('-------------load the model-----------------')
        model.load_weights(checkpoint_save_path)

    cp_callback = tf.keras.callbacks.ModelCheckpoint(filepath=checkpoint_save_path,
                                                     save_weights_only=True,
                                                     save_best_only=True)

    history = model.fit(x_train, y_train, batch_size=32, epochs=5,
                        validation_data=(x_test, y_test), validation_freq=1,
                        callbacks=[cp_callback])
    model.summary()

    # print(model.trainable_variables)
    with open('./weights.txt', 'w') as file:
        for v in model.trainable_variables:
            file.write(str(v.name) + '\n')
            file.write(str(v.shape) + '\n')
            file.write(str(v.numpy()) + '\n')

    ############### show ##############

    # Plot the accuracy and loss curves for the training and validation sets
    acc = history.history['sparse_categorical_accuracy']
    val_acc = history.history['val_sparse_categorical_accuracy']
    loss = history.history['loss']
    val_loss = history.history['val_loss']

    plt.subplot(1, 2, 1)
    plt.plot(acc, label='Training Accuracy')
    plt.plot(val_acc, label='Validation Accuracy')
    plt.title('Training and Validation Accuracy')
    plt.legend()

    plt.subplot(1, 2, 2)
    plt.plot(loss, label='Training Loss')
    plt.plot(val_loss, label='Validation Loss')
    plt.title('Training and Validation Loss')
    plt.legend()
    plt.show()
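The parameter counts that `model.summary()` prints for the Baseline network can be estimated by hand. The sketch below assumes 32x32x3 CIFAR-10 inputs and that each BN channel holds two trainable values (scale and offset) plus two non-trainable moving statistics; the helper names are made up for this illustration.

```python
def conv2d_params(kernel, in_ch, out_ch):
    """Weights plus one bias per kernel."""
    return kernel * kernel * in_ch * out_ch + out_ch


def dense_params(n_in, n_out):
    """Weights plus one bias per output neuron."""
    return n_in * n_out + n_out


conv = conv2d_params(5, 3, 6)   # c1: 5x5 kernels, 3 input channels, 6 kernels
bn_trainable = 2 * 6            # b1: scale and offset per channel
bn_frozen = 2 * 6               # b1: moving mean and variance per channel
flat = 16 * 16 * 6              # 'same' conv keeps 32x32, the 2x2 stride-2 pool halves it
f1 = dense_params(flat, 128)
f2 = dense_params(128, 10)

trainable = conv + bn_trainable + f1 + f2
total = trainable + bn_frozen
print(trainable, total)  # 198494 198506
```

Almost all of the parameters sit in the first Dense layer, which is the same effect described for fully connected networks at the start of the chapter.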

3. LeNet

    class LeNet5(Model):
        def __init__(self):
            super(LeNet5, self).__init__()
            self.c1 = Conv2D(filters=6, kernel_size=(5, 5), activation='sigmoid')
            self.p1 = MaxPool2D(pool_size=(2, 2), strides=2)

            self.c2 = Conv2D(filters=16, kernel_size=(5, 5), activation='sigmoid')
            self.p2 = MaxPool2D(pool_size=(2, 2), strides=2)

            self.flatten = Flatten()
            self.f1 = Dense(120, activation='sigmoid')  # LeNet-5 uses 120 units here
            self.f2 = Dense(84, activation='sigmoid')
            self.f3 = Dense(10, activation='softmax')  # softmax so the outputs form a probability distribution

        def call(self, x):
            x = self.c1(x)
            x = self.p1(x)

            x = self.c2(x)
            x = self.p2(x)

            x = self.flatten(x)
            x = self.f1(x)
            x = self.f2(x)
            y = self.f3(x)
            return y
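To see what reaches the Dense layers, the feature-map size can be traced through the two convolution and pooling stages. This sketch assumes 32x32 inputs (as in CIFAR-10) and the default 'valid' padding that the layers above use; `out_size` and the `layers` table are names made up for this illustration.

```python
def out_size(size, kernel, stride):
    """Output width of a 'valid' convolution or pooling layer."""
    return (size - kernel) // stride + 1


# (name, kernel size, stride, output channels) for the layers before Flatten
layers = [("c1", 5, 1, 6), ("p1", 2, 2, 6), ("c2", 5, 1, 16), ("p2", 2, 2, 16)]

size = 32  # assumed input width and height
trace = []
for name, kernel, stride, channels in layers:
    size = out_size(size, kernel, stride)
    trace.append((name, size, channels))

flat = trace[-1][1] ** 2 * trace[-1][2]
print(trace)  # [('c1', 28, 6), ('p1', 14, 6), ('c2', 10, 16), ('p2', 5, 16)]
print(flat)   # 400 features enter the first Dense layer
```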

4. AlexNet

    class AlexNet8(Model):
        def __init__(self):
            super(AlexNet8, self).__init__()
            self.c1 = Conv2D(filters=96, kernel_size=(3, 3))
            self.b1 = BatchNormalization()
            self.a1 = Activation('relu')
            self.p1 = MaxPool2D(pool_size=(3, 3), strides=2)

            self.c2 = Conv2D(filters=256, kernel_size=(3, 3))
            self.b2 = BatchNormalization()
            self.a2 = Activation('relu')
            self.p2 = MaxPool2D(pool_size=(3, 3), strides=2)

            # c3 to c5 have no BN layer, so the activation is set on the convolution itself
            self.c3 = Conv2D(filters=384, kernel_size=(3, 3), padding='same', activation='relu')
            self.c4 = Conv2D(filters=384, kernel_size=(3, 3), padding='same', activation='relu')
            self.c5 = Conv2D(filters=256, kernel_size=(3, 3), padding='same', activation='relu')  # AlexNet's fifth convolution has 256 kernels
            self.p3 = MaxPool2D(pool_size=(3, 3), strides=2)

            self.flatten = Flatten()
            self.f1 = Dense(2048, activation='relu')
            self.d1 = Dropout(0.5)
            self.f2 = Dense(2048, activation='relu')
            self.d2 = Dropout(0.5)
            self.f3 = Dense(10, activation='softmax')

        def call(self, x):
            x = self.c1(x)
            x = self.b1(x)
            x = self.a1(x)
            x = self.p1(x)

            x = self.c2(x)
            x = self.b2(x)
            x = self.a2(x)
            x = self.p2(x)

            x = self.c3(x)
            x = self.c4(x)
            x = self.c5(x)
            x = self.p3(x)

            x = self.flatten(x)
            x = self.f1(x)
            x = self.d1(x)
            x = self.f2(x)
            x = self.d2(x)
            y = self.f3(x)
            return y

5. InceptionNet

    # The Conv + BN + ReLU unit used inside an Inception block
    '''
    C: (kernels: ch * kernel_size * kernel_size, strides: 1, padding: same)
    B: (yes)
    A: (relu)
    P: (none)
    D: (none)
    '''
    class ConvBNRelu(Model):
        def __init__(self, ch, kernelsz=3, strides=1, padding='same'):
            super(ConvBNRelu, self).__init__()
            self.model = tf.keras.models.Sequential([
                Conv2D(ch, kernelsz, strides=strides, padding=padding),
                BatchNormalization(),
                Activation('relu')
            ])

        def call(self, x):
            # With training=False, BN normalizes with the mean and variance accumulated over the
            # training set (moving averages); with training=True it uses the mean and variance of
            # the current batch. training=False works better for inference.
            # Note that hard-coding training=False here also applies during fit().
            x = self.model(x, training=False)
            return x

    # Block class that implements one Inception block
    class InceptionBlk(Model):
        def __init__(self, ch, strides=1):
            super(InceptionBlk, self).__init__()
            self.ch = ch
            self.strides = strides
            # Branch 1: 1x1 convolution
            self.c1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
            # Branch 2: 1x1 convolution followed by 3x3 convolution
            self.c2_1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
            self.c2_2 = ConvBNRelu(ch, kernelsz=3, strides=1)
            # Branch 3: 1x1 convolution followed by 5x5 convolution
            self.c3_1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
            self.c3_2 = ConvBNRelu(ch, kernelsz=5, strides=1)
            # Branch 4: 3x3 max pooling followed by 1x1 convolution
            self.p4_1 = MaxPool2D(3, strides=1, padding='same')
            self.c4_2 = ConvBNRelu(ch, kernelsz=1, strides=strides)

        def call(self, x):
            x1 = self.c1(x)
            x2_1 = self.c2_1(x)
            x2_2 = self.c2_2(x2_1)
            x3_1 = self.c3_1(x)
            x3_2 = self.c3_2(x3_1)
            x4_1 = self.p4_1(x)
            x4_2 = self.c4_2(x4_1)
            # concat along axis=channel
            x = tf.concat([x1, x2_2, x3_2, x4_2], axis=3)
            return x

    # Implements the Inception network
    from tensorflow.keras.layers import GlobalAveragePooling2D  # not in the import list of the earlier example

    class Inception10(Model):
        def __init__(self, num_blocks, num_classes, init_ch=16, **kwargs):
            super(Inception10, self).__init__(**kwargs)
            self.in_channels = init_ch
            self.out_channels = init_ch
            self.num_blocks = num_blocks
            self.init_ch = init_ch
            self.c1 = ConvBNRelu(init_ch)
            self.blocks = tf.keras.models.Sequential()
            for block_id in range(num_blocks):
                for layer_id in range(2):
                    if layer_id == 0:
                        block = InceptionBlk(self.out_channels, strides=2)
                    else:
                        block = InceptionBlk(self.out_channels, strides=1)
                    self.blocks.add(block)
                # enlarge out_channels per block
                self.out_channels *= 2
            self.p1 = GlobalAveragePooling2D()
            self.f1 = Dense(num_classes, activation='softmax')

        def call(self, x):
            x = self.c1(x)
            x = self.blocks(x)
            x = self.p1(x)
            y = self.f1(x)
            return y

    model = Inception10(num_blocks=2, num_classes=10)
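The feature-map sizes that Inception10 produces can be traced without building the model. The sketch below assumes 32x32 inputs and relies on two properties of the code above: every branch uses 'same' padding, so a stride of s gives ceil(size / s), and the four branches of a block are concatenated, so a block built with `ch` outputs 4 * ch channels. The function name is made up for this illustration.

```python
def inception10_shapes(num_blocks=2, init_ch=16, size=32):
    """Return (layer name, feature-map width, channels) for each stage of Inception10."""
    shapes = [("c1", size, init_ch)]  # stem: stride 1, 'same' padding
    ch = init_ch
    for block_id in range(num_blocks):
        for layer_id in range(2):
            stride = 2 if layer_id == 0 else 1
            size = -(-size // stride)  # ceil(size / stride) for 'same' padding
            shapes.append((f"block{block_id}_{layer_id}", size, 4 * ch))
        ch *= 2  # channels double after every pair of blocks
    return shapes


for name, width, channels in inception10_shapes():
    print(name, width, channels)
```

With the defaults, the last block yields 8x8 feature maps with 128 channels, so global average pooling passes 128 features to the final Dense layer.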

6. ResNet

    class ResnetBlock(Model):
        def __init__(self, filters, strides=1, residual_path=False):
            super(ResnetBlock, self).__init__()
            self.filters = filters
            self.strides = strides
            self.residual_path = residual_path

            self.c1 = Conv2D(filters, (3, 3), strides=strides, padding='same', use_bias=False)
            self.b1 = BatchNormalization()
            self.a1 = Activation('relu')

            self.c2 = Conv2D(filters, (3, 3), strides=1, padding='same', use_bias=False)
            self.b2 = BatchNormalization()

            # When residual_path is True, downsample the input with a 1x1 convolution so that
            # x has the same shape as F(x) and the two can be added
            if residual_path:
                self.down_c1 = Conv2D(filters, (1, 1), strides=strides, padding='same', use_bias=False)
                self.down_b1 = BatchNormalization()

            self.a2 = Activation('relu')

        def call(self, inputs):
            residual = inputs  # residual is the input itself, i.e. residual = x
            # Pass the input through the convolution, BN and activation layers to compute F(x)
            x = self.c1(inputs)
            x = self.b1(x)
            x = self.a1(x)

            x = self.c2(x)
            y = self.b2(x)

            if self.residual_path:
                residual = self.down_c1(inputs)
                residual = self.down_b1(residual)

            out = self.a2(y + residual)  # the output is the sum F(x) + x or F(x) + Wx, passed through the activation
            return out

    class ResNet18(Model):
        def __init__(self, block_list, initial_filters=64):  # block_list gives the number of ResnetBlocks in each stage
            super(ResNet18, self).__init__()
            self.num_blocks = len(block_list)  # number of stages
            self.block_list = block_list
            self.out_filters = initial_filters
            self.c1 = Conv2D(self.out_filters, (3, 3), strides=1, padding='same', use_bias=False)
            self.b1 = BatchNormalization()
            self.a1 = Activation('relu')
            self.blocks = tf.keras.models.Sequential()
            # Build the ResNet structure
            for block_id in range(len(block_list)):  # index of the stage
                for layer_id in range(block_list[block_id]):  # index of the ResnetBlock within the stage
                    if block_id != 0 and layer_id == 0:  # downsample the input of every stage except the first
                        block = ResnetBlock(self.out_filters, strides=2, residual_path=True)
                    else:
                        block = ResnetBlock(self.out_filters, residual_path=False)
                    self.blocks.add(block)  # add the finished block to the network
                self.out_filters *= 2  # the next stage uses twice as many kernels as the previous one
            self.p1 = tf.keras.layers.GlobalAveragePooling2D()
            self.f1 = tf.keras.layers.Dense(10, activation='softmax', kernel_regularizer=tf.keras.regularizers.l2())

        def call(self, inputs):
            x = self.c1(inputs)
            x = self.b1(x)
            x = self.a1(x)
            x = self.blocks(x)
            x = self.p1(x)
            y = self.f1(x)
            return y
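The "18" in the class name can be recovered by counting the weighted layers, and the stage loop can be traced to see where downsampling happens. The sketch assumes the network is built with `block_list=[2, 2, 2, 2]` (an instantiation not shown above) on 32x32 inputs, and follows the usual convention of not counting the 1x1 shortcut convolutions; the function names are made up for this illustration.

```python
def resnet_depth(block_list):
    """1 stem convolution + 2 convolutions per residual block + 1 Dense classifier."""
    return 1 + 2 * sum(block_list) + 1


def resnet_stage_shapes(block_list, initial_filters=64, size=32):
    """Return (feature-map width, channels) at the output of each stage."""
    shapes = []
    filters = initial_filters
    for block_id in range(len(block_list)):
        if block_id != 0:
            size = -(-size // 2)  # the first block of later stages uses stride 2
        shapes.append((size, filters))
        filters *= 2  # the kernel count doubles from stage to stage
    return shapes


print(resnet_depth([2, 2, 2, 2]))         # 18
print(resnet_stage_shapes([2, 2, 2, 2]))  # [(32, 64), (16, 128), (8, 256), (4, 512)]
```

Each time the width halves and the channel count doubles, the input x no longer has the same shape as F(x), which is why those blocks are created with `residual_path=True`.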
