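I have recently been working on pedestrian detection. OpenCV ships an implementation of the method that Navneet Dalal and Bill Triggs presented at CVPR 2005, which extracts HOG features and classifies them with a linear SVM. This post uses Ubuntu 14.04 and OpenCV 2.4.10 to train a custom SVM pedestrian classifier.

HOG basics

The header file that declares HOGDescriptor defines the following constructors: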

    CV_WRAP HOGDescriptor() : winSize(64,128), blockSize(16,16), blockStride(8,8),
        cellSize(8,8), nbins(9), derivAperture(1), winSigma(-1),
        histogramNormType(HOGDescriptor::L2Hys), L2HysThreshold(0.2), gammaCorrection(true),
        nlevels(HOGDescriptor::DEFAULT_NLEVELS)
    {}

    CV_WRAP HOGDescriptor(Size _winSize, Size _blockSize, Size _blockStride,
                  Size _cellSize, int _nbins, int _derivAperture=1, double _winSigma=-1,
                  int _histogramNormType=HOGDescriptor::L2Hys,
                  double _L2HysThreshold=0.2, bool _gammaCorrection=false,
                  int _nlevels=HOGDescriptor::DEFAULT_NLEVELS)
    : winSize(_winSize), blockSize(_blockSize), blockStride(_blockStride), cellSize(_cellSize),
      nbins(_nbins), derivAperture(_derivAperture), winSigma(_winSigma),
      histogramNormType(_histogramNormType), L2HysThreshold(_L2HysThreshold),
      gammaCorrection(_gammaCorrection), nlevels(_nlevels)
    {}

    CV_WRAP HOGDescriptor(const String& filename)
    {
        load(filename);
    }

    HOGDescriptor(const HOGDescriptor& d)
    {
        d.copyTo(*this);
    }

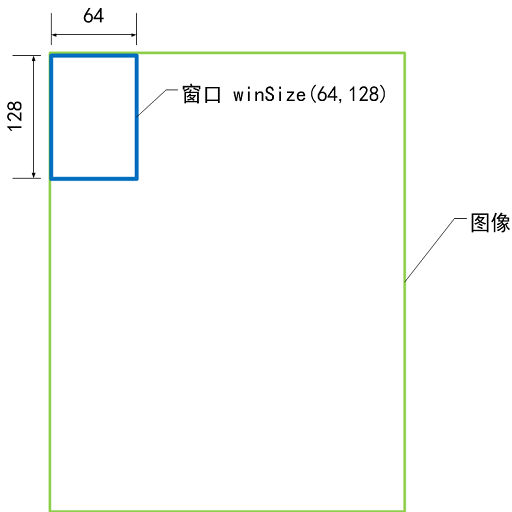

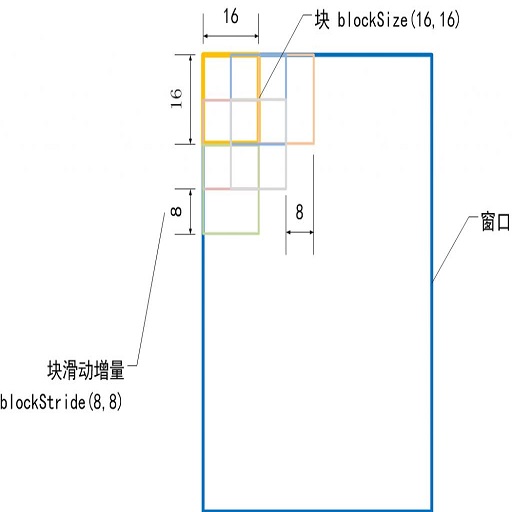

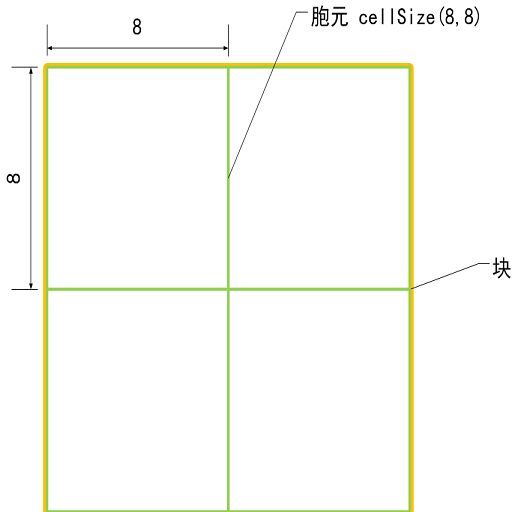

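The main parameters are winSize(64,128), blockSize(16,16), blockStride(8,8), cellSize(8,8), and nbins(9). Their meanings are:

Detection window size: winSize

Block size: blockSize

Block stride (the step between neighboring blocks): blockStride

Cell size: cellSize

Number of gradient orientation bins: nbins

nbins is the number of orientations into which the gradients of one cell are binned. For example, with nbins=9 each cell gets a gradient histogram with 9 orientation bins, and each bin covers 180/9 = 20 degrees.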
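To make the 20-degree binning concrete, here is a minimal sketch that maps an unsigned gradient orientation to a bin index. The function name `orientationBin` is hypothetical and not part of OpenCV, and the sketch uses hard assignment to a single bin; practical HOG implementations usually spread each vote between neighboring bins.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical helper for illustration only (not an OpenCV function).
// Maps a gradient orientation in degrees to one of `nbins` bins that
// together cover the unsigned range [0, 180).
int orientationBin(double angleDeg, int nbins)
{
    assert(nbins > 0);
    const double binWidth = 180.0 / nbins;   // 20 degrees when nbins == 9

    // Fold the angle into [0, 180): opposite directions share a bin.
    double a = std::fmod(angleDeg, 180.0);
    if (a < 0.0) {
        a += 180.0;
    }

    const int bin = static_cast<int>(a / binWidth);
    // Guard against floating-point rounding at the upper edge.
    return bin < nbins ? bin : nbins - 1;
}
```

With nbins = 9, an orientation of 45 degrees falls into bin 2, which covers 40 to 60 degrees.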

HOG descriptor dimension

OpenCV computes the length of the HOG descriptor as follows (from the library source):

    size_t HOGDescriptor::getDescriptorSize() const
    {
        CV_Assert(blockSize.width % cellSize.width == 0 &&
            blockSize.height % cellSize.height == 0);
        CV_Assert((winSize.width - blockSize.width) % blockStride.width == 0 &&
            (winSize.height - blockSize.height) % blockStride.height == 0 );

        return (size_t)nbins*
            (blockSize.width/cellSize.width)*
            (blockSize.height/cellSize.height)*
            ((winSize.width - blockSize.width)/blockStride.width + 1)*
            ((winSize.height - blockSize.height)/blockStride.height + 1);
    }

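In OpenCV, one HOG descriptor describes one detection window. With the default parameters, a detection window contains ((128-16)/8+1)*((64-16)/8+1) = 15*7 = 105 blocks, each block contains 4 cells, and each cell contributes a histogram of length 9, so the HOG descriptor of one detection window has 105*4*9 = 3780 dimensions.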
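The arithmetic above can be checked without OpenCV. The sketch below mirrors the formula in getDescriptorSize() using a hypothetical stand-in type `Size2` and a hypothetical function name `hogDescriptorLength`; neither belongs to the library.

```cpp
#include <cassert>
#include <cstddef>

// Minimal stand-in for cv::Size so the sketch compiles without OpenCV
// (hypothetical type, for illustration only).
struct Size2 {
    int width;
    int height;
};

// Number of floats in the HOG descriptor of one detection window.
std::size_t hogDescriptorLength(Size2 win, Size2 block, Size2 stride,
                                Size2 cell, int nbins)
{
    // Same preconditions that the library checks with CV_Assert.
    assert(block.width % cell.width == 0 && block.height % cell.height == 0);
    assert((win.width - block.width) % stride.width == 0 &&
           (win.height - block.height) % stride.height == 0);

    const int cellsPerBlock = (block.width / cell.width) * (block.height / cell.height);
    const int blocksX = (win.width - block.width) / stride.width + 1;
    const int blocksY = (win.height - block.height) / stride.height + 1;

    return static_cast<std::size_t>(nbins) * cellsPerBlock * blocksX * blocksY;
}
```

Calling it with a 64x128 window, 16x16 blocks, an 8x8 stride, 8x8 cells, and 9 bins gives 9 * 4 * 7 * 15 = 3780, matching the figure in the text.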

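Training your own SVM classifier

Take the positive and negative samples from INRIAPerson/train_64x128_H96/ and run

$ ls pos/ > pos.lst

$ ls neg/ > neg.lst

to store the names of all images in the pos and neg directories in pos.lst and neg.lst respectively.

The following program trains the classifier and saves the resulting detector in hogSVMDetector.txt.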

    #include "opencv2/imgproc/imgproc.hpp"
    #include "opencv2/objdetect/objdetect.hpp"
    #include "opencv2/highgui/highgui.hpp"
    #include "opencv2/ml/ml.hpp"

    #include <cstdlib>
    #include <iostream>
    #include <fstream>
    #include <string>
    #include <vector>

    using namespace cv;
    using namespace std;

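    // CvSVM keeps its decision function in a protected member,
    // so a subclass is needed to read alpha and rho after training.
    class MySVM : public CvSVM
    {
    public:
        // Coefficients (alpha) of the support vectors in the decision function.
        double * get_alpha_vector()
        {
            return this->decision_func->alpha;
        }

        // Bias term (rho) of the decision function.
        float get_rho()
        {
            return this->decision_func->rho;
        }
    };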

    int main(int argc, char** argv)
    {
        if (argc < 5) {
            cout << "USAGE: " << argv[0]
                 << " <pos_img_path> <pos_img_list>.txt <neg_img_path> <neg_img_list>.txt" << endl;
            return 1;
        }

        // Directory prefix (it is concatenated with each file name, so it must end with '/')
        // and list file of the positive samples.
        string posimgPath_fname(argv[1]);
        ifstream imgPosList_file(argv[2], ios::in);
        if (!imgPosList_file.good()) {
            cout << "Cannot open positive image list file!" << endl;
            return 1;
        }

        // Directory prefix and list file of the negative samples.
        string negimgPath_fname(argv[3]);
        ifstream imgNegList_file(argv[4], ios::in);
        if (!imgNegList_file.good()) {
            cout << "Cannot open negative image list file!" << endl;
            return 1;
        }

        // 64x128 window, 16x16 blocks, 8x8 stride, 8x8 cells, 9 bins, gamma correction disabled.
        HOGDescriptor hog(Size(64,128), Size(16,16), Size(8,8), Size(8,8), 9, 1, -1,
                          HOGDescriptor::L2Hys, 0.2, false, HOGDescriptor::DEFAULT_NLEVELS);
        size_t hogLength = hog.getDescriptorSize();

        vector < string > img_path;   // full path of every sample image
        vector < int > img_catg;      // label of every sample: +1 positive, -1 negative
        string buf;

        // Loop on getline() itself so that a failed read is never processed.
        while ( getline( imgPosList_file, buf ) ) {
            if ( buf.empty() ) continue;
            string imgpath = posimgPath_fname + buf;
            img_path.push_back(imgpath);
            img_catg.push_back(1);
        }
        imgPosList_file.close();

        while ( getline( imgNegList_file, buf ) ) {
            if ( buf.empty() ) continue;
            string imgpath = negimgPath_fname + buf;
            img_path.push_back(imgpath);
            img_catg.push_back(-1);
        }
        imgNegList_file.close();

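        // One row per sample: HOG features in sampleFeatureMat, label in sampleLabelMat.
        Mat sampleFeatureMat((int)img_path.size(), (int)hogLength, CV_32FC1);
        Mat sampleLabelMat((int)img_path.size(), 1, CV_32FC1);

        cout << "*****************************************" << endl;
        cout << "Start to load pos and neg image, Compute its feature..." << endl;

        double t = (double)getTickCount();

        Mat src;
        int row = 0;   // next free row; images that fail to load do not consume a row
        for (size_t i = 0; i < img_path.size(); i++) {
            src = imread(img_path[i]);
            if(!src.data) {
                cout << "Can not load the image: " << img_path[i] << endl;
                continue;
            }
            resize(src, src, Size(64, 128));

            vector < float > descriptors;
            hog.compute(src, descriptors, Size(8,8));
            for (size_t j = 0; j < descriptors.size(); j++) {
                sampleFeatureMat.at<float>(row, (int)j) = descriptors[j];
            }
            // The label is written once per sample, not once per feature.
            sampleLabelMat.at<float>(row, 0) = (float)img_catg[i];
            row++;
        }
        if (row == 0) {
            cout << "No image could be loaded!" << endl;
            return 1;
        }
        // Keep only the rows that were actually filled.
        sampleFeatureMat = sampleFeatureMat.rowRange(0, row);
        sampleLabelMat = sampleLabelMat.rowRange(0, row);

        cout << "end of loading and computing !" << endl;
        cout << "*****************************************" << endl;
        cout << "Start to train for SVM classifier..." << endl;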

        CvSVMParams params;
        params.svm_type = CvSVM::C_SVC;
        params.kernel_type = CvSVM::LINEAR;
        params.term_crit = cvTermCriteria(CV_TERMCRIT_ITER, 100, 1e-6);

        // Train the SVM
        MySVM svm;
        svm.train(sampleFeatureMat, sampleLabelMat, Mat(), Mat(), params);
        svm.save("SVM_HOG.xml");

        cout << "end of training for SVM !!!" << endl;
        cout << "*****************************************" << endl;

        // The timer was started before feature extraction, so it covers both steps.
        t = (double)getTickCount() - t;
        cout << "Feature extraction + training time = " << t*1000./cv::getTickFrequency() << "ms" << endl;

        // Collapse the support vectors of the linear SVM into a single weight vector
        // in the layout expected by HOGDescriptor::setSVMDetector().
        int DescriptorDim = svm.get_var_count();
        int supportVectorNum = svm.get_support_vector_count();

        Mat alphaMat = Mat::zeros(1, supportVectorNum, CV_32FC1);
        Mat supportVectorMat = Mat::zeros(supportVectorNum, DescriptorDim, CV_32FC1);
        Mat resultMat = Mat::zeros(1, DescriptorDim, CV_32FC1);

        for(int i=0; i<supportVectorNum; i++) {
            const float * pSVData = svm.get_support_vector(i);
            for(int j=0; j<DescriptorDim; j++) {
                supportVectorMat.at<float>(i,j) = pSVData[j];
            }
        }

        double * pAlphaData = svm.get_alpha_vector();
        for(int i=0; i<supportVectorNum; i++) {
            alphaMat.at<float>(0,i) = (float)pAlphaData[i];
        }

        // Weight vector: negated sum of the support vectors weighted by alpha.
        resultMat = -1 * alphaMat * supportVectorMat;

        vector<float> myDetector;
        for(int i=0; i<DescriptorDim; i++) {
            myDetector.push_back(resultMat.at<float>(0,i));
        }
        // The bias rho is appended as the last element.
        myDetector.push_back(svm.get_rho());

        // Write one value per line, without a trailing newline after the last value.
        ofstream out("hogSVMDetector.txt", ios::out);
        for (size_t i = 0; i + 1 < myDetector.size(); i++) {
            out << myDetector[i] << '\n';
        }
        out << myDetector[myDetector.size() - 1];
        out.close();

        return 0;
    }
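The last part of the training program turns the trained model into the flat vector that setSVMDetector() consumes. The sketch below isolates that step with plain standard-library containers so it can be followed without OpenCV. The function name `buildDetector` and its container types are assumptions made for illustration, and the sign convention simply follows the `-1 * alphaMat * supportVectorMat` line of the program above.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper for illustration only. Collapses the support vectors of
// a linear SVM into one weight vector and appends the bias as the last element:
//   detector[j]   = -sum_i alpha[i] * supportVectors[i][j]
//   detector[dim] = rho
std::vector<float> buildDetector(const std::vector<std::vector<float> >& supportVectors,
                                 const std::vector<double>& alpha,
                                 double rho)
{
    assert(supportVectors.size() == alpha.size());
    const std::size_t dim = supportVectors.empty() ? 0 : supportVectors[0].size();

    // Accumulate in double precision, then narrow to float at the end.
    std::vector<double> weights(dim, 0.0);
    for (std::size_t i = 0; i < supportVectors.size(); ++i) {
        assert(supportVectors[i].size() == dim);
        for (std::size_t j = 0; j < dim; ++j) {
            weights[j] -= alpha[i] * supportVectors[i][j];
        }
    }

    std::vector<float> detector(dim + 1);
    for (std::size_t j = 0; j < dim; ++j) {
        detector[j] = static_cast<float>(weights[j]);
    }
    detector[dim] = static_cast<float>(rho);
    return detector;
}
```

For a 3780-dimensional HOG descriptor the returned vector has 3781 elements, which is the count the detection program prints after loading hogSVMDetector.txt.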

Pedestrian detection with the trained classifier

    #include "opencv2/imgproc/imgproc.hpp"
    #include "opencv2/objdetect/objdetect.hpp"
    #include "opencv2/highgui/highgui.hpp"
    #include "opencv2/ml/ml.hpp"

    #include <cstdio>
    #include <cstdlib>
    #include <iostream>
    #include <fstream>
    #include <string>
    #include <vector>

    using namespace cv;
    using namespace std;

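    int main(int argc, char** argv)
    {
        Mat img;

        if (argc < 4) {
            cout << "USAGE: " << argv[0]
                 << " <hog_svm_detector>.txt <image_path> <image_list>.txt" << endl;
            return 1;
        }

        ifstream filein(argv[1], ios::in);
        if (!filein.good()) {
            cout << "Cannot open detector file!" << endl;
            return 1;
        }

        // Directory prefix (must end with '/') and list file of the test images.
        string imgPath_fname(argv[2]);
        ifstream imgList_file(argv[3], ios::in);
        if (!imgList_file.good()) {
            cout << "Cannot open image list file!" << endl;
            return 1;
        }

        // Read one coefficient per line. Testing the extraction itself avoids
        // pushing a stale value when the end of the file is reached.
        vector< float > detector;
        float val = 0.0f;
        while (filein >> val) {
            detector.push_back(val);
        }
        filein.close();

        cout << "detector: " << detector.size() << endl;

        // Use the same parameters as in training. The default constructor enables
        // gamma correction, whereas the training program disabled it.
        HOGDescriptor hog(Size(64,128), Size(16,16), Size(8,8), Size(8,8), 9, 1, -1,
                          HOGDescriptor::L2Hys, 0.2, false, HOGDescriptor::DEFAULT_NLEVELS);
        hog.setSVMDetector(detector);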

        namedWindow("people detector", 1);

        string buf;
        string imgpath;

        // Loop on getline() itself so the last image is not processed a second
        // time after the final read fails.
        while (getline(imgList_file, buf)) {
            if (buf.empty()) continue;
            imgpath = imgPath_fname + buf;
            img = imread(imgpath);
            cout << imgpath << " : " << flush;
            if (!img.data) {
                cout << "cannot load image" << endl;
                continue;
            }

            vector<Rect> found, found_filtered;
            double t = (double)getTickCount();
            hog.detectMultiScale(img, found, 0, Size(8,8), Size(32,32), 1.05, 2);
            t = (double)getTickCount() - t;
            cout << "detection time = " << t*1000./cv::getTickFrequency() << "ms" << endl;

            // Drop every rectangle that lies completely inside another one.
            size_t i, j;
            for( i = 0; i < found.size(); i++ ) {
                Rect r = found[i];
                for( j = 0; j < found.size(); j++ )
                    if( j != i && (r & found[j]) == r)
                        break;
                if( j == found.size() )
                    found_filtered.push_back(r);
            }

            for( i = 0; i < found_filtered.size(); i++ ) {
                Rect r = found_filtered[i];
                // the HOG detector returns slightly larger rectangles than the real objects.
                // so we slightly shrink the rectangles to get a nicer output.
                r.x += cvRound(r.width*0.1);
                r.width = cvRound(r.width*0.8);
                r.y += cvRound(r.height*0.07);
                r.height = cvRound(r.height*0.8);
                rectangle(img, r.tl(), r.br(), cv::Scalar(0,255,0), 3);
            }

            imshow("people detector", img);
            waitKey(100);
        }

        cout << "Done!" << endl;
        return 0;
    }
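The detection loop above discards every rectangle that lies entirely inside another detection, using the test `(r & found[j]) == r`. The following sketch shows the same idea without OpenCV. `Box`, `isInside`, and `dropNested` are hypothetical names introduced for illustration, and the containment test is written out by hand instead of using the rectangle intersection operator.

```cpp
#include <cstddef>
#include <vector>

// Minimal stand-in for cv::Rect (hypothetical type, for illustration only).
struct Box {
    int x;
    int y;
    int width;
    int height;
};

// True when `inner` lies entirely inside `outer` (shared borders count as inside).
bool isInside(const Box& inner, const Box& outer)
{
    return inner.x >= outer.x &&
           inner.y >= outer.y &&
           inner.x + inner.width <= outer.x + outer.width &&
           inner.y + inner.height <= outer.y + outer.height;
}

// Keeps only the boxes that are not nested inside any other box.
// As in the loop above, two identical boxes contain each other,
// so both of them are dropped.
std::vector<Box> dropNested(const std::vector<Box>& found)
{
    std::vector<Box> kept;
    for (std::size_t i = 0; i < found.size(); ++i) {
        bool nested = false;
        for (std::size_t j = 0; j < found.size(); ++j) {
            if (j != i && isInside(found[i], found[j])) {
                nested = true;
                break;
            }
        }
        if (!nested) {
            kept.push_back(found[i]);
        }
    }
    return kept;
}
```

This is a simple quadratic filter, which is adequate for the handful of detections a single image typically yields. It does not merge partially overlapping boxes.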
