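1. Classification

1.1 Decision trees

Base data

Column key: 数值 = row index, 武器 = weapon, 子弹 = ammo, 血量 = health, 身边队友 = teammates nearby (有 = yes, 没 = no), 行为 = behavior (战斗 = fight, 逃跑 = flee, 躲藏 = hide). Levels: 少 = low, 中 = medium, 多 = high.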

数值,武器,子弹,血量,身边队友,行为

0,手枪,少,少,没,逃跑

1,机枪,中,中,有,战斗

2,,多,多,,躲藏

3,98K,多,中,有,战斗

4,mp,中,少,有,躲藏

5,m4,少,多,有,躲藏

6,AK47,少,多,有,战斗

7,巴雷特,中,多,没,战斗

8,AWM,少,中,有,躲藏

9,MP4,中,多,没,逃跑

10,MG,多,多,没,战斗

11,机枪,少,中,没,逃跑

12,巴雷特,少,少,没,逃跑

13,AK47,多,多,有,战斗

14,AWM,多,多,没,战斗

15,AK47,多,中,有,战斗

16,手枪,多,少,没,躲藏

decision_tree_t

'''

Convert the data manually.

Example from the slides (removed). This version still has some problems; come back to it if the need arises.

'''

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.tree import DecisionTreeClassifier as DTC

from sklearn import metrics

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import LabelEncoder

'''

数值 武器类型 子弹 血量 身边是否有队友 行为类别

0 手枪 少 少 没 逃跑

1 机枪 多 多 有 战斗

'''

#names = ['zidan','wuqi','xueliang','do_what','duiyou']

#df = pd.read_csv('fightrun.csv',encoding='utf-8',names=names) # 指定列名

# df = pd.read_csv(r'..\csv\fightrun.csv')

df = pd.read_csv('fightrun2.csv')

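#df = df.loc[1:,['武器','子弹','血量','身边队友','行为']]  # .ix was removed from pandas; use .loc

# Rename the columns in CSV order: index, weapon (武器), ammo (子弹), health (血量), teammates (身边队友), behavior (行为)

df.columns = ['#', 'wuqi', 'zidan', 'hp', 'duiyou', 'do']

df = df.drop('#', axis=1)

# Manual conversion

#df[(df=='手枪')|(df=='少')|(df=='没')|(df=='逃跑')] = 0

#df[(df=='机枪')|(df=='中')|(df=='有')|(df=='战斗')] = 1

#df[(df=='多')|(df=='躲藏')] = 2

#df = df.astype(int)

# Encode with LabelEncoder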

classle = LabelEncoder()

df['zidan'] = classle.fit_transform(df['zidan'].values)

df['wuqi'] = classle.fit_transform(df['wuqi'].values)

df['hp'] = classle.fit_transform(df['hp'].values)

df['duiyou'] = classle.fit_transform(df['duiyou'].values)

df['do'] = classle.fit_transform(df['do'].values)

X = df[['zidan','wuqi','hp','duiyou']]

y = df['do']

#print(y)

X_train, X_test, y_train, y_test = train_test_split(X, y, train_size=0.5, test_size=0.5)

model = DTC().fit(X_train, y_train)

# Prediction

# Predict a single feature vector

y_pred = model.predict([(1,1,0,1)])

# Predict n feature vectors

y_pred = model.predict(X_test) # predicted labels

# Evaluate the predictions

print(metrics.classification_report(y_test, y_pred))

print(metrics.confusion_matrix(y_test, y_pred))

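# Notes

# precision recall f1-score support

#

# 0 0.73 0.89 0.80 9

# 1 0.95 0.87 0.91 23

#

#avg / total 0.89 0.88 0.88 32

# The table above is sample output of metrics.classification_report for a two-class run

#[[ 8 1]

# [ 3 20]]

# The two lines above are the matching metrics.confusion_matrix output (rows = true class, columns = predicted class)

# For class 0: precision = 8 / (8 + 3)

# recall = 8 / (8 + 1)

# f1-score = 2 * (precision * recall) / (precision + recall)

# support is the number of true samples in each class; it serves as the weight in the averaged row

# avg / total is the support-weighted mean, e.g. recall = (0.89*9+0.87*23)/(9+23)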

probas_ = model.predict_proba(X_test)

# roc_curve handles one positive class at a time; here class 1 is treated as the positive class

fpr, tpr, thresholds = metrics.roc_curve(y_test, probas_[:, 1], pos_label=1)

#

## Compute the AUC

auc = metrics.auc(fpr, tpr)  # the reorder argument was removed from scikit-learn

print('auc is ', auc)

#

## Plot the ROC curve

plt.plot(fpr, tpr, linewidth=2, label = 'ROC', color = 'green') # draw the ROC curve

plt.xlabel('False Positive Rate') # axis label

plt.ylabel('True Positive Rate') # axis label

plt.ylim(0, 1.05) # axis range

plt.xlim(0, 1.05) # axis range

plt.show()
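
The precision, recall and f1-score arithmetic described in the comments of this script can be checked by hand. The following is a minimal sketch in plain Python, not part of the original script: the helper name `per_class_metrics` is hypothetical, and the matrix is the sample `confusion_matrix` output quoted in the comments (rows = true class, columns = predicted class).

```python
# Sketch: recompute classification_report numbers from a confusion matrix.
# per_class_metrics is a hypothetical helper, not a scikit-learn function.

def per_class_metrics(cm):
    """Return a list of (precision, recall, f1, support), one tuple per class."""
    n = len(cm)
    rows = []
    for k in range(n):
        tp = cm[k][k]
        predicted_k = sum(cm[i][k] for i in range(n))  # column sum
        support = sum(cm[k])                           # row sum = true samples
        precision = tp / predicted_k if predicted_k else 0.0
        recall = tp / support if support else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        rows.append((precision, recall, f1, support))
    return rows

cm = [[8, 1],
      [3, 20]]
rows = per_class_metrics(cm)
total = sum(r[3] for r in rows)
# Support-weighted average, as in the "avg / total" row of the report.
weighted_recall = sum(r[1] * r[3] for r in rows) / total

for label, (p, r, f1, s) in enumerate(rows):
    print(label, round(p, 2), round(r, 2), round(f1, 2), s)
print("weighted recall:", round(weighted_recall, 2))
```

The support-weighted recall equals (8 + 20) / 32, which is also the overall accuracy; that is why the averaged recall and the accuracy coincide for single-label problems.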

decision_tree_t2

'''

Convert the data manually.

Three possible behaviors: fight (战斗), flee (逃跑), hide (躲藏).

The data contains missing values.

'''

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.tree import DecisionTreeClassifier as DTC # decision tree classifier

from sklearn import metrics

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import LabelEncoder

'''

数值 武器类型 子弹 血量 身边队友 行为类别

0 手枪 少 少 没 逃跑

1 机枪 中 中 有 战斗

2 多 多 躲藏

'''

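# (1) Read the data

# df = pd.read_csv(r'..\csv\fightrun2.csv')

df = pd.read_csv('fightrun2.csv')

# .ix was removed from pandas; .loc selects the same rows (label 1 onward) and columns

df = df.loc[1:,['子弹','武器','血量','身边队友','行为']] # each selected column should hold only strings or only numbers

df = df.dropna()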

print(df)

# 子弹 武器 血量 身边队友 行为

# 1 中 机枪 中 有 战斗

# 3 多 98K 中 有 战斗

# 4 中 mp 少 有 躲藏

# 5 少 m4 多 有 躲藏

# (2) Encode with LabelEncoder

classle = LabelEncoder()

df['武器'] = classle.fit_transform(df['武器'].values)

df['子弹'] = classle.fit_transform(df['子弹'].values)

df['血量'] = classle.fit_transform(df['血量'].values)

df['身边队友'] = classle.fit_transform(df['身边队友'].values)

df['行为'] = classle.fit_transform(df['行为'].values)

print("****************")

print(classle.classes_) # ['战斗' '躲藏' '逃跑'] -> 0 1 2; classle is refit on every column, so it now holds only the encoding of the last column (行为)

# (3) Decision tree model (DecisionTreeClassifier as DTC)

X = df[['武器','子弹','血量','身边队友']]

print(X)

# 武器 子弹 血量 身边队友

# 1 9 0 0 0

# 3 0 1 0 0

# 4 6 0 2 0

# 5 5 2 1 0

y = df['行为']#.astype(int)

print(y)

# 1 0

# 3 0

# 4 1

# 5 1

# 6 0

# 7 0

# 8 1

# 9 2

X_train, X_test, y_train, y_test = train_test_split(X, y, train_size=0.6, test_size=0.4)

model = DTC()

model.fit(X_train, y_train)

# (4) Predict

y_pred = model.predict(X_test) # predicted labels

# Print the evaluation report

print(metrics.classification_report(y_test, y_pred))

# precision recall f1-score support

#

# 0 1.00 0.25 0.40 4

# 1 0.25 1.00 0.40 1

# 2 0.00 0.00 0.00 1

#

# micro avg 0.33 0.33 0.33 6

# macro avg 0.42 0.42 0.27 6

# weighted avg 0.71 0.33 0.33 6

print("*******************************")

# Confusion matrix

print(metrics.confusion_matrix(y_test, y_pred))

# [[1 2 1]

# [0 1 0]

# [0 1 0]]

# Predict new samples (feature order: 武器, 子弹, 血量, 身边队友)

n = model.predict([[0, 0, 0, 1]])

print(n) # 0

print(classle.inverse_transform(n)) # ['战斗']

n = model.predict([[1, 0, 0, 0,]])

print(n) # 0; inverse_transform maps the label back to its original value

print(classle.inverse_transform(n)) # ['战斗']
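
Because the script reuses one `LabelEncoder` for every column, only the mapping of the last column survives, which is why `inverse_transform` works for 行为 but not for the features. A minimal sketch of keeping one mapping per column follows. It is written in plain Python under the assumption that it should mimic the sorted-unique-value ordering that `LabelEncoder` uses; `fit_encoder`, `encoders` and `decode_behavior` are illustrative names, not scikit-learn API, and the four rows are taken from the base data.

```python
# Sketch: one encoder per column, mimicking LabelEncoder's sorted-unique mapping.
# fit_encoder / encoders are illustrative names, not scikit-learn API.

def fit_encoder(values):
    """Map each distinct value to its index in sorted order."""
    classes = sorted(set(values))
    return {value: index for index, value in enumerate(classes)}

rows = [
    {"武器": "机枪", "子弹": "中", "行为": "战斗"},
    {"武器": "98K", "子弹": "多", "行为": "战斗"},
    {"武器": "mp", "子弹": "中", "行为": "躲藏"},
    {"武器": "MP4", "子弹": "中", "行为": "逃跑"},
]

# Fit a separate mapping for every column, then encode all rows.
encoders = {col: fit_encoder([row[col] for row in rows]) for col in rows[0]}
encoded = [{col: encoders[col][row[col]] for col in row} for row in rows]

# Each column keeps its own mapping, so any column can be decoded later.
decode_behavior = {index: value for value, index in encoders["行为"].items()}
print(encoders["行为"])
print(decode_behavior[encoded[2]["行为"]])
```

With scikit-learn itself, the equivalent design choice is to create a separate `LabelEncoder` instance per column and keep them in a dict.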

1.2 Naive Bayes

1.2.1 Case 1

Data source 1

数值,武器,子弹,血量,身边队友,行为

0,手枪,少,少,没,逃跑

1,机枪,中,中,有,战斗

2,,多,多,,躲藏

3,98K,多,中,有,战斗

4,mp,中,少,有,躲藏

5,m4,少,多,有,躲藏

6,AK47,少,多,有,战斗

7,巴雷特,中,多,没,战斗

8,AWM,少,中,有,躲藏

9,MP4,中,多,没,逃跑

10,MG,多,多,没,战斗

11,机枪,少,中,没,逃跑

12,巴雷特,少,少,没,逃跑

13,AK47,多,多,有,战斗

14,AWM,多,多,没,战斗

15,AK47,多,中,有,战斗

16,手枪,多,少,没,躲藏

bayes1

'''

from decision_tree_t2

'''

from itertools import product

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.preprocessing import LabelEncoder

from sklearn.naive_bayes import GaussianNB

from sklearn import metrics

from sklearn.model_selection import train_test_split

# Naive Bayes estimates the probability of each class and predicts the most probable one

'''

数值 武器类型 子弹 血量 身边队友 行为类别

0 手枪 少 少 没 逃跑

1 机枪 中 中 有 战斗

2 多 多 躲藏

'''

# df = pd.read_csv(r'..\csv\fightrun2.csv')

df = pd.read_csv('fightrun2.csv')

df = df.loc[:,['子弹','武器','血量','身边队友','行为']] # select the needed columns (.ix was removed from pandas)

df = df.dropna()

# Manual conversion

#df[(df=='手枪')|(df=='少')|(df=='没')|(df=='逃跑')] = 0

#df[(df=='机枪')|(df=='中')|(df=='有')|(df=='战斗')] = 1

#df[(df=='多')|(df=='躲藏')] = 2

#df = df.astype(int)

# Encode with LabelEncoder

classle = LabelEncoder()

df['武器'] = classle.fit_transform(df['武器'].values)

df['子弹'] = classle.fit_transform(df['子弹'].values)

df['血量'] = classle.fit_transform(df['血量'].values)

df['身边队友'] = classle.fit_transform(df['身边队友'].values)

df['行为'] = classle.fit_transform(df['行为'].values)

X = df[['武器','子弹','血量','身边队友']]

y = df['行为']

#print(y)

X_train, X_test, y_train, y_test = train_test_split(X, y, train_size=0.6, test_size=0.4)

model = GaussianNB()

m = model.fit(X_train, y_train)

# Predict

y_pred = model.predict(X_test) # predicted labels; y_test holds the expected labels

# Print the evaluation report

print(metrics.classification_report(y_test, y_pred))

# precision recall f1-score support

#

# 0 1.00 0.50 0.67 6

# 2 0.25 1.00 0.40 1

#

# micro avg 0.57 0.57 0.57 7

# macro avg 0.62 0.75 0.53 7

# weighted avg 0.89 0.57 0.63 7

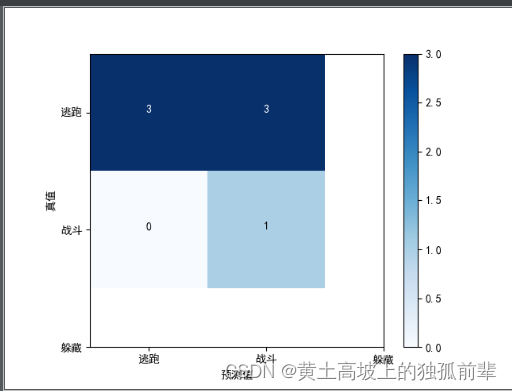

# Confusion matrix

cm = metrics.confusion_matrix(y_test, y_pred)

## Normalize each row so the values lie between 0 and 1

#cm = cm.astype('float') / cm.sum(axis=1)[:, np.newaxis]

print(cm)

# [[3 3]

# [0 1]]

# Plot the confusion matrix

# Class names in LabelEncoder order (0, 1, 2); if a class is missing from the test split, cm is smaller than this list

classes = ['战斗','躲藏','逃跑']

length = range(len(classes)) # side length of the confusion matrix

plt.rcParams['font.sans-serif'] = ['SimHei'] # render Chinese characters correctly

plt.imshow(cm, cmap = plt.cm.Blues)

thresh = cm.max() / 2.

for i, j in product(range(cm.shape[0]), range(cm.shape[1])):

    plt.text(j, i, cm[i, j],

             horizontalalignment="center",

             # dark text on light cells, light text on dark cells

             color="white" if cm[i, j] > thresh else "black")

plt.xticks(length, classes)

plt.yticks(length, classes)

plt.colorbar()

plt.ylabel('真值')

plt.xlabel('预测值')

plt.savefig("bayes.png")

plt.show()
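
The printed matrix in this script is 2x2 although there are three behaviors, because one class did not appear in that test split; the tick labels then no longer line up with the cells. One way around this is to build the matrix against a fixed label list. The sketch below shows the idea in plain Python; `confusion_with_labels` is a hypothetical helper, and the sample `y_true`/`y_pred` values are made up so that they reproduce the 2x2 output quoted above.

```python
# Sketch: a confusion matrix with a fixed label list, so its shape always
# matches the tick labels. confusion_with_labels is a hypothetical helper.

def confusion_with_labels(y_true, y_pred, labels):
    index = {label: i for i, label in enumerate(labels)}
    cm = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        cm[index[t]][index[p]] += 1  # row = true class, column = predicted class
    return cm

# Labels 0, 1, 2 stand for 战斗, 躲藏, 逃跑; class 1 is absent from this split.
y_true = [0, 0, 0, 0, 0, 0, 2]
y_pred = [0, 0, 0, 2, 2, 2, 2]
cm3 = confusion_with_labels(y_true, y_pred, labels=[0, 1, 2])
for row in cm3:
    print(row)
```

scikit-learn's `metrics.confusion_matrix` accepts a `labels` argument that serves the same purpose, so passing `labels=[0, 1, 2]` there should give a 3x3 matrix as well.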

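1.2.2 Case 2

Data source

Column key: 喜欢吃萝卜 = likes carrots, 喜欢吃鱼 = likes fish, 喜欢捉耗子 = likes catching mice, 喜欢啃骨头 = likes gnawing bones, 短尾巴 = short tail, 长耳朵 = long ears, 分类 = class (猫 = cat, 兔子 = rabbit, 狗 = dog). Values: 是 = yes, 否 = no.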

喜欢吃萝卜,喜欢吃鱼,喜欢捉耗子,喜欢啃骨头,短尾巴,长耳朵,分类

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

否,是,是,否,是,是,猫

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,否,是,是,猫

否,是,是,否,否,否,猫

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

是,否,否,否,否,否,兔子

是,否,否,否,是,否,兔子

是,是,是,是,是,否,狗

是,是,是,是,否,是,狗

否,是,是,是,否,否,狗

否,是,否,是,否,否,狗

否,否,否,是,是,否,狗

是,否,是,否,是,否,狗

否,是,否,是,否,是,狗

否,是,否,是,是,是,狗

是,否,否,是,否,否,狗

是,否,否,是,是,否,狗

否,是,否,是,否,是,狗

否,否,否,是,是,是,狗

否,否,是,是,否,是,狗

否,否,是,是,是,否,狗

否,否,否,否,否,否,狗

否,否,否,否,是,否,狗

否,否,否,否,是,是,狗

否,否,否,否,否,是,狗

否,否,否,是,否,否,狗

否,否,是,是,否,否,狗

是,否,否,否,是,是,兔子

是,否,否,否,否,是,兔子

否,是,是,否,否,是,猫

否,是,是,是,是,是,猫

是,是,是,是,是,是,兔子

bayes2

'''

Cats, dogs and rabbits

Example from the slides

'''

from itertools import product

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from sklearn.naive_bayes import GaussianNB, MultinomialNB

from sklearn import metrics

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import LabelEncoder

'''

Manual encoding:

是 (yes) 1

否 (no) 0

狗 (dog) 1 猫 (cat) 2 兔子 (rabbit) 3

'''

# df = pd.read_csv(r'..\csv\catdograbbit.csv')

df = pd.read_csv('category.csv')

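# 1. Feature encoding: pick one of three options

# (1) Manual conversion

# df[df == '是'] = 1

# df[df == '否'] = 0

# df[df == '狗'] = 1

# df[df == '猫'] = 2

# df[df == '兔子'] = 3

# df = df.astype(np.int8)

# print(df)

# Linear regression predicts continuous values; classification predicts discrete ones: given these attributes, which animal is it?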

# 喜欢吃萝卜 喜欢吃鱼 喜欢捉耗子 喜欢啃骨头 短尾巴 长耳朵 分类

# 0 0 1 1 0 1 1 2

# 1 1 0 0 0 0 1 3

# 2 0 1 1 0 0 1 2

# 3 0 1 1 0 1 1 2

# 4 0 1 1 0 0 0 2

# 5 1 0 0 0 1 1 3

## Select the feature columns and the target

X = df[['喜欢吃萝卜', '喜欢吃鱼', '喜欢捉耗子', '喜欢啃骨头', '短尾巴', '长耳朵']]

y = df['分类']

# (2) Encode with LabelEncoder

classle = LabelEncoder()

# df['喜欢吃萝卜'] = classle.fit_transform(df['喜欢吃萝卜'].values)

# df['喜欢吃鱼'] = classle.fit_transform(df['喜欢吃鱼'].values)

# df['喜欢捉耗子'] = classle.fit_transform(df['喜欢捉耗子'].values)

# df['喜欢啃骨头'] = classle.fit_transform(df['喜欢啃骨头'].values)

# df['短尾巴'] = classle.fit_transform(df['短尾巴'].values)

# df['长耳朵'] = classle.fit_transform(df['长耳朵'].values)

# This for loop is equivalent to the statements above

# for column_name in df.columns:

#     df[column_name] = classle.fit_transform(df[column_name].values)

# print(classle.classes_)

# print(df.head())

## Select the feature columns

# X = df[['喜欢吃萝卜','喜欢吃鱼','喜欢捉耗子','喜欢啃骨头','短尾巴','长耳朵']]

# y = classle.fit_transform(df['分类'].values)

# (3) Encode with dummy variables (one-hot)

# classle = LabelEncoder()

# X = pd.get_dummies(df.drop('分类', axis=1))

# y = classle.fit_transform(df['分类'].values)

# Features can be converted manually or with LabelEncoder, whichever suits the data

# Manual conversion

df[df == '否'] = 0

df[df == '是'] = 1

# LabelEncoder

classle = LabelEncoder()

df['分类'] = classle.fit_transform(df['分类'])

print(df)

# 喜欢吃萝卜 喜欢吃鱼 喜欢捉耗子 喜欢啃骨头 短尾巴 长耳朵 分类 (分类 is encoded as 0, 1, 2 = 兔子, 狗, 猫)

# 0 0 1 1 0 1 1 1

# 1 1 0 0 0 0 1 2

# 2 0 1 1 0 0 1 1

# 3 0 1 1 0 1 1 1

# 4 0 1 1 0 0 0 1

# 5 1 0 0 0 1 1 2

# print(df.head())

# print(classle.classes_) # ['兔子' '狗' '猫']

# 2. Choose the feature set

X = df.drop('分类', axis=1)

y = df['分类']

# 3. Hold-out split: scoring on data the model has not seen exposes overfitting (this is a single train/test split, not k-fold cross-validation)

X_train, X_test, y_train, y_test = train_test_split(X, y, train_size=0.7, test_size=0.3)

print(X.shape) # (442, 6)

print(X_train.shape) # (309, 6)

print(X_test.shape) # (133, 6)

# 4. Train (naive Bayes)

model = GaussianNB()

# model = MultinomialNB()

model.fit(X_train, y_train)

# 5. Check prediction quality -------------------start--------------------------------

print("5分数********************")

# Score on the test set; the split is random, so results differ from run to run

print(model.score(X_test, y_test)) # 0.8120300751879699; score is the mean accuracy (the micro avg row of the report), not an f1-score

# Classification report

y_pred = model.predict(X_test)

report = metrics.classification_report(y_test, y_pred)

print("report****************")

print(report) # per-class precision, recall and f1-score on the test set

# precision recall f1-score support

#

# 0 1.00 0.68 0.81 79

# 1 0.91 1.00 0.95 21

# 2 0.59 1.00 0.74 33

#

# micro avg 0.81 0.81 0.81 133

# macro avg 0.83 0.89 0.84 133

# weighted avg 0.88 0.81 0.82 133

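# Classes 0, 1, 2 are 兔子 (rabbit), 狗 (dog), 猫 (cat)

# Ranked by precision: 兔子 > 狗 > 猫

# Precision of a class = samples correctly predicted as that class / all samples predicted as that class

# 6. Confusion matrix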

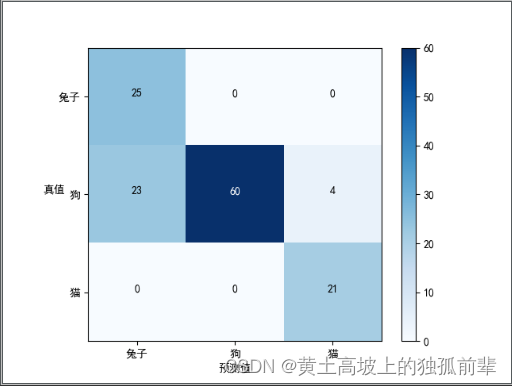

cm = metrics.confusion_matrix(y_test, y_pred)

print("cm********************")

print(cm)

# [[54 2 23]

# [ 0 21 0]

# [ 0 0 33]]

# Plot the confusion matrix

plt.rcParams['font.sans-serif'] = ['SimHei'] # render Chinese characters correctly

plt.imshow(cm, cmap=plt.cm.Blues) # draw the matrix

half = cm.max() / 2

# classes = ['狗','猫','兔子'] # class names

classes = classle.classes_

# print(classes)

length = range(len(classes)) # side length of the confusion matrix

plt.xticks(length, classes, rotation=0)

plt.yticks(length, classes)

plt.colorbar()

plt.ylabel('真值', rotation=0)

plt.xlabel('预测值')

# Show the count in every cell

for i, j in product(range(cm.shape[0]), range(cm.shape[1])):

    plt.text(j, i, cm[i, j],

             # center the text

             horizontalalignment="center",

             # dark text on light cells, light text on dark cells

             color="white" if cm[i, j] > half else "black",

             # size=15 sets the font size

             )

plt.savefig('bayes2_rcParams.png')

plt.show()

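# 7. Check prediction quality ------------------end---------------------------------

# Nothing printed? (note that plt.show() blocks until the figure window is closed)

print("7**********************")

# Predict a single sample: once trained, the model can assign a new object with these features to a class

# 喜欢吃萝卜 喜欢吃鱼 喜欢捉耗子 喜欢啃骨头 短尾巴 长耳朵 分类 (分类 is encoded as 0, 1, 2 = 兔子, 狗, 猫)

n = model.predict([[0, 0, 0, 1, 1, 1]])

print(n) # 1

print(classle.inverse_transform(n)) # ['狗']

n = model.predict([[1, 0, 0, 0, 0, 1]])

print(n) # 0; inverse_transform maps the label back to its original value

print(classle.inverse_transform(n)) # ['兔子']

# How to read a confusion matrix (rows = true class, columns = predicted class)

#             predicted

#             猫   狗   兔子

# true 猫     5    3    0

# true 狗     2    3    1

# true 兔子   0    2    11

# The diagonal holds the correctly classified counts

# True totals (row sums): 8 cats, 6 dogs, 13 rabbits

# Of the 8 cats, 3 were predicted as dogs

# Of the 6 dogs, 2 were predicted as cats and 1 as a rabbit

# Predicted totals (column sums): 7 cats, 8 dogs, 12 rabbits

# Of the 7 predicted cats, 5 are real cats and 2 are dogs

# Of the 12 predicted rabbits, 11 are real rabbits and 1 is a dog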
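
The row and column totals quoted in the comments above follow mechanically from the matrix. A short sketch in plain Python, using the same example numbers, makes the bookkeeping explicit; the variable names are illustrative only.

```python
# Sketch: row sums, column sums and diagonal of the example matrix above.
labels = ["猫", "狗", "兔子"]
cm = [[5, 3, 0],
      [2, 3, 1],
      [0, 2, 11]]
size = len(labels)

# Row sums: how many samples truly belong to each class.
true_totals = {labels[i]: sum(cm[i]) for i in range(size)}
# Column sums: how many samples were predicted as each class.
predicted_totals = {labels[j]: sum(cm[i][j] for i in range(size)) for j in range(size)}
# Diagonal: samples whose predicted class equals the true class.
correct = sum(cm[i][i] for i in range(size))
accuracy = correct / sum(map(sum, cm))

print(true_totals)
print(predicted_totals)
print(correct, round(accuracy, 3))
```

Here 19 of the 27 samples sit on the diagonal, so the accuracy of this example is 19/27.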

1.3 Ensemble learning

'''

With so many classifiers available, which one should we choose?

Use an ensemble classifier.

'''

import numpy as np

import pandas as pd

from sklearn.naive_bayes import GaussianNB, MultinomialNB # naive Bayes classifiers (Gaussian, multinomial)

from sklearn.neighbors import KNeighborsClassifier # k-nearest neighbors classifier

from sklearn.tree import DecisionTreeClassifier # decision tree classifier

from sklearn.linear_model import LogisticRegression # logistic regression classifier

from sklearn.model_selection import train_test_split, cross_val_score

from sklearn.preprocessing import LabelEncoder

from sklearn.ensemble import VotingClassifier # voting classifier

'''

Manual encoding:

是 (yes) 1

否 (no) 0

狗 (dog) 1 猫 (cat) 2 兔子 (rabbit) 3

'''

#names = ['luobo','yu','haozi','gutou','dwb','ced','fenlei']

## Custom column names when reading the CSV

#df = pd.read_csv(r'D:\csv\catdograbbit.csv', names = names)

# df = pd.read_csv(r'..\csv\catdograbbit.csv')

df = pd.read_csv('category.csv')

# Encode with LabelEncoder

classle = LabelEncoder()

df['喜欢吃萝卜'] = classle.fit_transform(df['喜欢吃萝卜'].values)

df['喜欢吃鱼'] = classle.fit_transform(df['喜欢吃鱼'].values)

df['喜欢捉耗子'] = classle.fit_transform(df['喜欢捉耗子'].values)

df['喜欢啃骨头'] = classle.fit_transform(df['喜欢啃骨头'].values)

df['短尾巴'] = classle.fit_transform(df['短尾巴'].values)

df['长耳朵'] = classle.fit_transform(df['长耳朵'].values)

# Select the feature columns

X = df[['喜欢吃萝卜','喜欢吃鱼','喜欢捉耗子','喜欢啃骨头','短尾巴','长耳朵']]

y = classle.fit_transform(df['分类'].values)

X_train, X_test, y_train, y_test = train_test_split(X, y, train_size=0.7, test_size=0.3)

model1 = GaussianNB()

model2 = MultinomialNB()

model3 = KNeighborsClassifier()

model4 = DecisionTreeClassifier()

model5 = LogisticRegression()

models = [model1,model2,model3,model4,model5]

labels = ['GaussianNB','MultinomialNB','KNeighborsClassifier','DecisionTreeClassifier','LogisticRegression']

# estimators: the (name, model) pairs that cast votes in the ensemble

eclf = VotingClassifier(estimators=list(zip(labels, models)))

models.append(eclf)

labels.append('VotingClassifier')

for model, label in list(zip(models, labels)):

    scores = cross_val_score(model, X_train, y_train)

    #print(scores)

    print("Accuracy: {0:.2f} (+/- {1:.2f}) -{2}".format(scores.mean(), scores.std(), label))

'''

-----------------------------------------------------------------------------------------------------

'''

# In the first line, 0.83 is scores.mean(), 0.04 is scores.std() and GaussianNB is the label

# Accuracy: 0.83 (+/- 0.04) -GaussianNB # Gaussian naive Bayes

# Accuracy: 0.94 (+/- 0.01) -MultinomialNB

# Accuracy: 0.98 (+/- 0.03) -KNeighborsClassifier # k-nearest neighbors

# Accuracy: 1.00 (+/- 0.00) -DecisionTreeClassifier # decision tree

# Accuracy: 0.92 (+/- 0.03) -LogisticRegression # logistic regression

# Accuracy: 1.00 (+/- 0.00) -VotingClassifier # voting classifier

# scores.mean(): a higher mean score is better

# scores.std(): a smaller standard deviation means more stable results

# On this run the decision tree and the voting classifier score best
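
`VotingClassifier` uses hard (majority) voting by default: each base model predicts a label and the most frequent label wins. The sketch below illustrates that rule in plain Python. The per-model predictions are hypothetical numbers chosen for illustration, `hard_vote` is not a scikit-learn function, and breaking ties in favor of the smallest label is an assumption modeled on scikit-learn's documented ascending-order tie rule.

```python
from collections import Counter

def hard_vote(predictions):
    """Return the majority label; ties go to the smallest label."""
    counts = Counter(predictions)
    best = max(counts.values())
    return min(label for label, c in counts.items() if c == best)

# Hypothetical per-model predictions for three samples (0 = 兔子, 1 = 狗, 2 = 猫).
per_model = {
    "GaussianNB": [2, 1, 0],
    "KNeighborsClassifier": [1, 1, 0],
    "DecisionTreeClassifier": [1, 1, 2],
}

# Regroup so that each tuple holds every model's vote for one sample.
votes_per_sample = list(zip(*per_model.values()))
ensemble = [hard_vote(votes) for votes in votes_per_sample]
print(ensemble)
```

A single model's mistake (the first and third samples here) is outvoted as long as the majority of models agree, which is the motivation for combining classifiers.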

2. Regression

Data source

"Id","TV","Radio","Newspaper","Sales"

"1",230.1,37.8,69.2,22.1

"2",44.5,39.3,45.1,10.4

"3",17.2,45.9,69.3,9.3

"4",151.5,41.3,58.5,18.5

"5",180.8,10.8,58.4,12.9

"6",8.7,48.9,75,7.2

"7",57.5,32.8,23.5,11.8

"8",120.2,19.6,11.6,13.2

"9",8.6,2.1,1,4.8

"10",199.8,2.6,21.2,10.6

"11",66.1,5.8,24.2,8.6

"12",214.7,24,4,17.4

"13",23.8,35.1,65.9,9.2

"14",97.5,7.6,7.2,9.7

"15",204.1,32.9,46,19

"16",195.4,47.7,52.9,22.4

"17",67.8,36.6,114,12.5

"18",281.4,39.6,55.8,24.4

"19",69.2,20.5,18.3,11.3

"20",147.3,23.9,19.1,14.6

"21",218.4,27.7,53.4,18

"22",237.4,5.1,23.5,12.5

"23",13.2,15.9,49.6,5.6

"24",228.3,16.9,26.2,15.5

"25",62.3,12.6,18.3,9.7

"26",262.9,3.5,19.5,12

"27",142.9,29.3,12.6,15

"28",240.1,16.7,22.9,15.9

"29",248.8,27.1,22.9,18.9

"30",70.6,16,40.8,10.5

"31",292.9,28.3,43.2,21.4

"32",112.9,17.4,38.6,11.9

"33",97.2,1.5,30,9.6

"34",265.6,20,0.3,17.4

"35",95.7,1.4,7.4,9.5

"36",290.7,4.1,8.5,12.8

"37",266.9,43.8,5,25.4

"38",74.7,49.4,45.7,14.7

"39",43.1,26.7,35.1,10.1

"40",228,37.7,32,21.5

"41",202.5,22.3,31.6,16.6

"42",177,33.4,38.7,17.1

"43",293.6,27.7,1.8,20.7

"44",206.9,8.4,26.4,12.9

"45",25.1,25.7,43.3,8.5

"46",175.1,22.5,31.5,14.9

"47",89.7,9.9,35.7,10.6

"48",239.9,41.5,18.5,23.2

"49",227.2,15.8,49.9,14.8

"50",66.9,11.7,36.8,9.7

"51",199.8,3.1,34.6,11.4

"52",100.4,9.6,3.6,10.7

"53",216.4,41.7,39.6,22.6

"54",182.6,46.2,58.7,21.2

"55",262.7,28.8,15.9,20.2

"56",198.9,49.4,60,23.7

"57",7.3,28.1,41.4,5.5

"58",136.2,19.2,16.6,13.2

"59",210.8,49.6,37.7,23.8

"60",210.7,29.5,9.3,18.4

"61",53.5,2,21.4,8.1

"62",261.3,42.7,54.7,24.2

"63",239.3,15.5,27.3,15.7

"64",102.7,29.6,8.4,14

"65",131.1,42.8,28.9,18

"66",69,9.3,0.9,9.3

"67",31.5,24.6,2.2,9.5

"68",139.3,14.5,10.2,13.4

"69",237.4,27.5,11,18.9

"70",216.8,43.9,27.2,22.3

"71",199.1,30.6,38.7,18.3

"72",109.8,14.3,31.7,12.4

"73",26.8,33,19.3,8.8

"74",129.4,5.7,31.3,11

"75",213.4,24.6,13.1,17

"76",16.9,43.7,89.4,8.7

"77",27.5,1.6,20.7,6.9

"78",120.5,28.5,14.2,14.2

"79",5.4,29.9,9.4,5.3

"80",116,7.7,23.1,11

"81",76.4,26.7,22.3,11.8

"82",239.8,4.1,36.9,12.3

"83",75.3,20.3,32.5,11.3

"84",68.4,44.5,35.6,13.6

"85",213.5,43,33.8,21.7

"86",193.2,18.4,65.7,15.2

"87",76.3,27.5,16,12

"88",110.7,40.6,63.2,16

"89",88.3,25.5,73.4,12.9

"90",109.8,47.8,51.4,16.7

"91",134.3,4.9,9.3,11.2

"92",28.6,1.5,33,7.3

"93",217.7,33.5,59,19.4

"94",250.9,36.5,72.3,22.2

"95",107.4,14,10.9,11.5

"96",163.3,31.6,52.9,16.9

"97",197.6,3.5,5.9,11.7

"98",184.9,21,22,15.5

"99",289.7,42.3,51.2,25.4

"100",135.2,41.7,45.9,17.2

"101",222.4,4.3,49.8,11.7

"102",296.4,36.3,100.9,23.8

"103",280.2,10.1,21.4,14.8

"104",187.9,17.2,17.9,14.7

"105",238.2,34.3,5.3,20.7

"106",137.9,46.4,59,19.2

"107",25,11,29.7,7.2

"108",90.4,0.3,23.2,8.7

"109",13.1,0.4,25.6,5.3

"110",255.4,26.9,5.5,19.8

"111",225.8,8.2,56.5,13.4

"112",241.7,38,23.2,21.8

"113",175.7,15.4,2.4,14.1

"114",209.6,20.6,10.7,15.9

"115",78.2,46.8,34.5,14.6

"116",75.1,35,52.7,12.6

"117",139.2,14.3,25.6,12.2

"118",76.4,0.8,14.8,9.4

"119",125.7,36.9,79.2,15.9

"120",19.4,16,22.3,6.6

"121",141.3,26.8,46.2,15.5

"122",18.8,21.7,50.4,7

"123",224,2.4,15.6,11.6

"124",123.1,34.6,12.4,15.2

"125",229.5,32.3,74.2,19.7

"126",87.2,11.8,25.9,10.6

"127",7.8,38.9,50.6,6.6

"128",80.2,0,9.2,8.8

"129",220.3,49,3.2,24.7

"130",59.6,12,43.1,9.7

"131",0.7,39.6,8.7,1.6

"132",265.2,2.9,43,12.7

"133",8.4,27.2,2.1,5.7

"134",219.8,33.5,45.1,19.6

"135",36.9,38.6,65.6,10.8

"136",48.3,47,8.5,11.6

"137",25.6,39,9.3,9.5

"138",273.7,28.9,59.7,20.8

"139",43,25.9,20.5,9.6

"140",184.9,43.9,1.7,20.7

"141",73.4,17,12.9,10.9

"142",193.7,35.4,75.6,19.2

"143",220.5,33.2,37.9,20.1

"144",104.6,5.7,34.4,10.4

"145",96.2,14.8,38.9,11.4

"146",140.3,1.9,9,10.3

"147",240.1,7.3,8.7,13.2

"148",243.2,49,44.3,25.4

"149",38,40.3,11.9,10.9

"150",44.7,25.8,20.6,10.1

"151",280.7,13.9,37,16.1

"152",121,8.4,48.7,11.6

"153",197.6,23.3,14.2,16.6

"154",171.3,39.7,37.7,19

"155",187.8,21.1,9.5,15.6

"156",4.1,11.6,5.7,3.2

"157",93.9,43.5,50.5,15.3

"158",149.8,1.3,24.3,10.1

"159",11.7,36.9,45.2,7.3

"160",131.7,18.4,34.6,12.9

"161",172.5,18.1,30.7,14.4

"162",85.7,35.8,49.3,13.3

"163",188.4,18.1,25.6,14.9

"164",163.5,36.8,7.4,18

"165",117.2,14.7,5.4,11.9

"166",234.5,3.4,84.8,11.9

"167",17.9,37.6,21.6,8

"168",206.8,5.2,19.4,12.2

"169",215.4,23.6,57.6,17.1

"170",284.3,10.6,6.4,15

"171",50,11.6,18.4,8.4

"172",164.5,20.9,47.4,14.5

"173",19.6,20.1,17,7.6

"174",168.4,7.1,12.8,11.7

"175",222.4,3.4,13.1,11.5

"176",276.9,48.9,41.8,27

"177",248.4,30.2,20.3,20.2

"178",170.2,7.8,35.2,11.7

"179",276.7,2.3,23.7,11.8

"180",165.6,10,17.6,12.6

"181",156.6,2.6,8.3,10.5

"182",218.5,5.4,27.4,12.2

"183",56.2,5.7,29.7,8.7

"184",287.6,43,71.8,26.2

"185",253.8,21.3,30,17.6

"186",205,45.1,19.6,22.6

"187",139.5,2.1,26.6,10.3

"188",191.1,28.7,18.2,17.3

"189",286,13.9,3.7,15.9

"190",18.7,12.1,23.4,6.7

"191",39.5,41.1,5.8,10.8

"192",75.5,10.8,6,9.9

"193",17.2,4.1,31.6,5.9

"194",166.8,42,3.6,19.6

"195",149.7,35.6,6,17.3

"196",38.2,3.7,13.8,7.6

"197",94.2,4.9,8.1,9.7

"198",177,9.3,6.4,12.8

"199",283.6,42,66.2,25.5

"200",232.1,8.6,8.7,13.4

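2.1 Simple linear regression

'''

(1) Simple linear regression: pay attention to the correlation coefficient, the regression coefficient and the coefficient of determination.

The usual procedure is to compute the correlation coefficient r and test its significance first; only if r is significant and a regression analysis is warranted do we fit the regression equation.

(3) Correlation coefficient and coefficient of determination in regression, with a Python implementation: http://www.cnblogs.com/python-frog/p/8988030.html

Verified against the results: it holds for the simple linear regression model.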

'''

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.linear_model import LinearRegression

import joblib # model persistence (sklearn.externals.joblib was removed from scikit-learn)

from sklearn import metrics

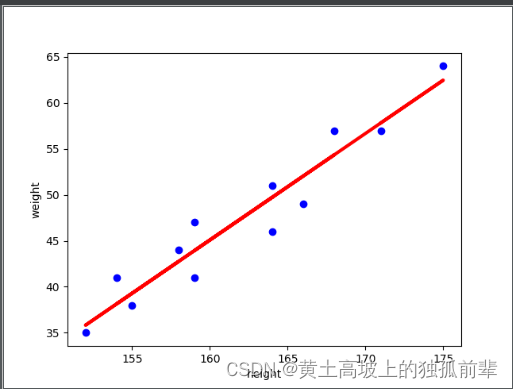

height_weight = {

    'height': (171, 175, 159, 155, 152, 158, 154, 164, 168, 166, 159, 164),

    'weight': (57, 64, 41, 38, 35, 44, 41, 51, 57, 49, 47, 46)

}

height = (171, 175, 159, 155, 152, 158, 154, 164, 168, 166, 159, 164)

weight = (57, 64, 41, 38, 35, 44, 41, 51, 57, 49, 47, 46)

# (1) Dict of key: value pairs, here string: tuple

dct = {'height': height, 'weight': weight}

# Scatter plot

# scatter(x, y): the first argument goes on the x axis (axis 0), the second on the y axis (axis 1)

# Machine learning: let the machine find the pattern for us

# plt.scatter(height, weight)

# plt.show()

# (2) Build a DataFrame

# df = pd.DataFrame(fangjia)

df = pd.DataFrame(height_weight)

# height weight

# 0 171 57

# 1 175 64

# '' ''' '''

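# (3) x holds the feature; in reshape(-1, 1) the -1 lets NumPy infer the number of rows

x = df["height"].values.reshape(-1, 1)

# A 1-D column cannot be used directly; x must be 2-D: [[171],[175],...]

# x = df["height"].values.reshape(-1, 1)

# 0 171

# 1 175

# ... ...

# (4) y holds the target values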

y = df["weight"]

# weight

# 0 57

# 1 64

# '' '''

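# Model persistence: save the model to disk (.pkl is the conventional extension); 'lr.pkl' is a relative path, i.e. the same folder

# model = LinearRegression()

# model.fit(x, y)

# joblib.dump(model, 'lr.pkl')

# (5) Linear regression results are only reliable when the correlation is high (this is the correlation coefficient between the two columns)

print(df.height.corr(df.weight)) # 0.9593031405705869

# Training features (must be a 2-D array)

X_train = df.height.values.reshape(-1, 1)

# Training targets

y_train = df.weight

# (6)_1 Fit the model on the training set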

model = LinearRegression()

model.fit(X_train, y_train)

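# Correlation between the true and the fitted values; its square is the coefficient of determination R^2

y_pre=model.predict(X_train)

print("Correlation between y and fitted y ***************")

# df['weight'] holds the true values; pd.Series(y_pre) holds the weights predicted from height

print(df['weight'].corr(pd.Series(y_pre))) # 0.9593031405705865

# (6)_2 Save the trained model

# lr.pkl is a binary file, meant for the machine rather than for people to read

joblib.dump(model, 'lr.pkl')

# Load the persisted model

model = joblib.load('lr.pkl')

# (7) Predict

# print(model.predict(170)) fails: x must be a 2-D array, not a scalar or a 1-D array

print(model.predict([[170]])) # [56.67589406]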

x_pred = [[171], [175]]

y_pred = model.predict(x_pred)

print(y_pred) # [57.83495436 62.47119557]

# (8) Slope and intercept of the fitted line

a, b = model.coef_, model.intercept_

print("Slope: {}, intercept: {}".format(a, b)) # Slope: [1.1590603], intercept: -140.36435732455482

# (9)_1 Plot

# Scatter plot

plt.scatter(df['height'], df['weight'], color='blue')

# Fitted line

plt.plot(X_train, model.predict(X_train), color='red', linewidth=3)

# plt.plot(df['height'], a*df['height']+b, color='red', linewidth=3)

plt.xlabel("height")

plt.ylabel('weight')

# (9)_2 Save and show the figure

plt.savefig('linear_model.png')

plt.show()

# The part below can be skipped for now......

#### Summary statistics

def get_lr_stats(x, y, model):

    from scipy import stats

    n = len(x)

    # y is 1-D here, so coef_ is a 1-D array and intercept_ is a scalar

    slope, intercept = model.coef_[0], model.intercept_

    message0 = 'Simple linear regression equation: ' + '\ty' + '=' + str(intercept) + ' + ' + str(slope) + '*x'

    y_prd = model.predict(x)

    Regression = np.sum((y_prd - np.mean(y)) ** 2) # regression sum of squares (SSR)

    Residual = np.sum((y - y_prd) ** 2) # residual sum of squares (SSE)

    R_square = Regression / (Regression + Residual) # coefficient of determination R^2

    F = (Regression / 1) / (Residual / (n - 2)) # F statistic

    pf = stats.f.sf(F, 1, n - 2)

    message1 = ('Coefficient of determination (R^2): ' + str(R_square) + ';' + '\n' +

                'Regression sum of squares (SSR): ' + str(Regression) + ';' + '\tResidual sum of squares (SSE): ' + str(Residual) + ';' + '\n' +

                ' F : ' + str(F) + ';' + '\t' + 'pf : ' + str(pf))

    ## t statistic for the slope

    L_xx = n * np.var(x)

    sigma = np.sqrt(Residual / (n - 2)) # the residual standard error uses n - 2 degrees of freedom

    t = slope * np.sqrt(L_xx) / sigma

    pt = stats.t.sf(t, n - 2) # one-sided p-value

    message2 = ' t : ' + str(t) + ';' + '\t' + 'pt : ' + str(pt)

    # Return the text so that the caller can print it (returning print(...) would yield None)

    return message0 + '\n' + message1 + '\n' + message2

print(get_lr_stats(df.height.values.reshape(-1, 1), df.weight, model))
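
The slope, intercept and correlation reported by this script can be reproduced from the textbook least-squares formulas. The following is a minimal sketch in plain Python using the same height/weight data; it is a hand calculation offered for checking, not the way `LinearRegression` is implemented internally, and the variable names are illustrative.

```python
import math

height = (171, 175, 159, 155, 152, 158, 154, 164, 168, 166, 159, 164)
weight = (57, 64, 41, 38, 35, 44, 41, 51, 57, 49, 47, 46)

n = len(height)
mean_x = sum(height) / n
mean_y = sum(weight) / n

# Sums of squares and cross products around the means.
s_xx = sum((x - mean_x) ** 2 for x in height)
s_yy = sum((y - mean_y) ** 2 for y in weight)
s_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(height, weight))

slope = s_xy / s_xx                    # regression coefficient
intercept = mean_y - slope * mean_x
r = s_xy / math.sqrt(s_xx * s_yy)      # Pearson correlation coefficient

# Coefficient of determination from the residuals of the fitted line.
fitted = [intercept + slope * x for x in height]
ss_res = sum((y - f) ** 2 for y, f in zip(weight, fitted))
r_squared = 1 - ss_res / s_yy

print(slope, intercept)
print(r, r_squared, r ** 2)
```

In simple linear regression with an intercept, the coefficient of determination equals the square of the correlation coefficient, which the last line lets you compare directly.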

2.2 Multiple linear regression

'''

Multiple linear regression

Reference: http://blog.csdn.net/lulei1217/article/details/49386295

'''

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.linear_model import LinearRegression

from sklearn.model_selection import train_test_split

from sklearn import metrics

import seaborn as sns

# Advertising.csv comes from http://www-bcf.usc.edu/~gareth/ISL/Advertising.csv

# "","TV","Radio","Newspaper","Sales"

# df = pd.read_csv(r'..\csv\Advertising.csv')

# (1) Read the samples

df = pd.read_csv('Advertising.csv',encoding='utf-8')

#print(df)

#

#print(df.Sales.corr(df.TV))

#print(df.Sales.corr(df.Radio))

#print(df.Sales.corr(df.Newspaper))

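# (2) Select

# Feature columns

X = df[['TV', 'Radio', 'Newspaper']]

#print(x)

# Target: Sales (the model measures how TV, Radio and Newspaper advertising affect sales)

y = df['Sales']

# (3) Hold-out split

# Train on one part of the data and validate on the other; the two parts must not overlap (a single split, not k-fold cross-validation)

X_train, X_test, y_train, y_test = train_test_split(X, y, train_size=0.6, test_size=0.4)

# The left-hand side is tuple unpacking: train_test_split returns (X_train, X_test, y_train, y_test); train_size=0.6 puts 60% of the rows into X_train and y_train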

print("3************")

print(X_train.shape)

print(y_train.shape)

print(X_test.shape) #print(y_test.shape)

# (4) Train the model

print("4************")

model = LinearRegression()

model.fit(X_train, y_train)

a, b = model.coef_, model.intercept_

print("Coefficients: {}, intercept: {}".format(a, b)) # one regression coefficient per feature (TV, Radio, Newspaper)

#y=2.668+0.0464*TV+0.192*Radio-0.00349*Newspaper

# (5) Predict with the trained model

print("5************")

y_pred = model.predict(X_test)

print(y_pred) # values predicted by the model

print(y_test) # true values of the test set

# Try three rows by hand

# y_pred = model.predict([[230.1,37.8,69.2],[44.5,39.3,45.1],[17.2,45.9,69.3]])

#print(metrics.accuracy_score(y_test, y_pred))

# The line above raises "continuous is not supported": accuracy is a classification metric and does not apply to regression

#
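Since `accuracy_score` does not apply to continuous targets, regression metrics are the usual alternative. A minimal sketch, using the five (y_test, y_pred) pairs printed further down in this section as input (the `_demo` names are illustrative):

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score

# Five (true, predicted) pairs copied from the printed comparison table in this section
y_true_demo = np.array([24.7, 10.3, 17.4, 8.8, 7.2])
y_pred_demo = np.array([22.072041, 9.483712, 17.118617, 9.991983, 5.764625])

mse = mean_squared_error(y_true_demo, y_pred_demo)
rmse = np.sqrt(mse)                                  # same unit as Sales
mae = mean_absolute_error(y_true_demo, y_pred_demo)
r2 = r2_score(y_true_demo, y_pred_demo)              # 1.0 would be a perfect fit
print(mse, rmse, mae, r2)
```

In the script itself the same calls would take `y_test` and `y_pred` directly.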

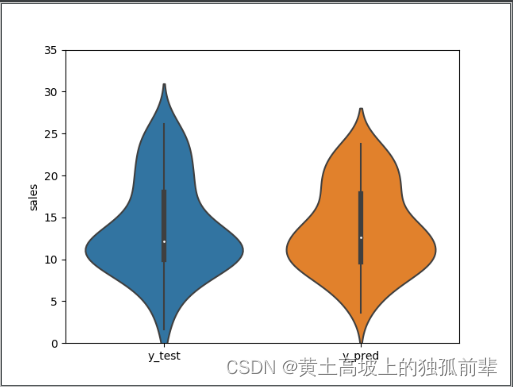

# (5)_2 Compare the predictions with the true values

# Wrap both in a dict

dct = {

'y_test':y_test,

'y_pred': y_pred

}

df = pd.DataFrame(dct)

#

print(df.y_test.corr(df.y_pred))

# 0.9531363896456168

print(df)

# y_test y_pred

# 128 24.7 22.072041

# 145 10.3 9.483712

# 11 17.4 17.118617

# 72 8.8 9.991983

# 106 7.2 5.764625

# ... ... ...

print(df.describe()) # per-column summary statistics of the df above

# y_test y_pred

# count 80.000000 80.000000

# mean 14.371250 14.419086

# std 5.070745 4.874209

# min 5.300000 3.705385

# 25% 10.775000 10.145408

# 50% 13.300000 14.407218

# 75% 17.700000 18.360683

# max 25.400000 23.269870

# (6) Draw a violin plot
# df is the DataFrame built above
sns.violinplot(data = df)

plt.ylim(0,35) # y-axis range

plt.ylabel('sales')

plt.savefig('linear_model_violinplot.png')

plt.show()

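3. Recommendation

Content-based recommendation

3.1 Case 1

'''
Uses the data scraped by anjuke_zufang.py in chapter8.

A rental-listing recommender: based on the listings a user has browsed,
recommend listings with a similar district, rent, layout, etc. (content-based recommendation).

One function receives the listings the user visits often:
def accept()
Another function recommends listings similar to those received by the first:
def recommend()

Initial plan:
1. Cluster the listings first to produce labelled data.
2. Train a KNN (k-nearest neighbors) model on that labelled data.
3. Use the model to predict the cluster of each listing in the browsing history
   and find the cluster that occurs most often.
4. recommend() returns about 5 listings from that cluster.

For simplicity only rent, decoration level and area are considered:
#1. Cluster the listings by rent, decoration level and area.
#2. Obtain the model.
#3. Recommend similar listings from each user's existing data (rent, decoration level, area).
'''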

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.cluster import KMeans, DBSCAN

from sklearn.preprocessing import LabelEncoder, StandardScaler, MinMaxScaler

from sklearn.neighbors import KNeighborsClassifier

from random import choice

df = pd.read_csv(r'..\csv\anjuke.csv', encoding='gbk')

#print(df.tail(10))

#print(df.columns)

#X = df.loc[:, ['租金', '租赁方式', '装修', '面积', '年代']]

X = df.loc[:, ['租金', '装修', '面积']]

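# Feature cleaning
def get_mianji(mianji):
    # e.g. '85平米' -> 85.0
    return float(mianji.replace('平米', ''))

#def get_niandai(niandai):
#    if niandai == "暂无":
#        rtn = 2000
#    else:
#        rtn = niandai.replace('年', '')
#    return int(rtn) - 1980

# Encode the decoration level by hand so the codes run from bare (1) to luxury (5);
# LabelEncoder assigns codes in sorted label order, not in this order, so it is not used.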

zx = X['装修'].copy()

zx[zx=='毛坯'] = 1

zx[zx=='简单装修'] = 2

zx[zx=='中等装修'] = 3

zx[zx=='精装修'] = 4

zx[zx=='豪华装修'] = 5

X['装修'] = zx

X['面积'] = X['面积'].apply(get_mianji)

#X['年代'] = X['年代'].apply(get_niandai)

#X['租赁方式'] = LabelEncoder().fit_transform(X['租赁方式'].values)

#X = X.dropna()

#print(X.head(50), X.shape)
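The manual encoding of 装修 and the cleaning of 面积 above can also be written with `Series.map` and the `.str` accessor. This is a minimal sketch on made-up rows; the `demo` frame and the `level` dict are illustrative names, not part of the article's script.

```python
import pandas as pd

# Hypothetical rows shaped like the 装修 / 面积 columns used above
demo = pd.DataFrame({
    '装修': ['毛坯', '精装修', '简单装修', '豪华装修', '中等装修'],
    '面积': ['45平米', '120平米', '60平米', '200平米', '88平米'],
})

# Ordinal codes from bare (1) to luxury (5)
level = {'毛坯': 1, '简单装修': 2, '中等装修': 3, '精装修': 4, '豪华装修': 5}
demo['装修'] = demo['装修'].map(level)  # labels missing from the dict become NaN

# Strip the unit suffix and convert to a number
demo['面积'] = demo['面积'].str.replace('平米', '', regex=False).astype(float)
print(demo)
```

Unlike the boolean-mask assignments, `map` turns unknown labels into NaN, which makes unexpected values easy to spot with `isna()`.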

ss = StandardScaler()

X2 = ss.fit_transform(X)

kmeans = KMeans(n_clusters=15, n_init=50)

kmeans.fit(X2)

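# Count the samples in each cluster
#r1 = pd.Series(kmeans.labels_).value_counts()
## Cluster centers (in scaled units)
##r2 = pd.DataFrame(kmeans.cluster_centers_)
## Cluster centers in original units
#r2 = pd.DataFrame(ss.inverse_transform(kmeans.cluster_centers_))
## Concatenate side by side (axis=0 would stack vertically) to pair each center with its cluster size
#r = pd.concat([r2, r1], axis = 1)
## Rename the columns
#r.columns = list(X.columns) + ['类别数目']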

#print(r)

y_pred = kmeans.predict(X2)

ydata = pd.DataFrame(y_pred, columns=['分类'])

# Training data for KNN
data = pd.concat([X, ydata], axis=1)

# Full listing data plus the cluster label, used when recommending
data_recommend = pd.concat([df, ydata], axis=1)

#print(data.head(30), data.shape[0])

X = data.drop('分类', axis=1)

y = data['分类']

knn = KNeighborsClassifier(n_neighbors=7)

knn.fit(X, y)

def _random_choice(lst, n):
    '''Pick n distinct elements from lst at random.'''
    if n > len(lst):
        return lst
    choiced_elements = []
    lst = lst.copy()
    while n > 0:
        element = choice(lst)
        choiced_elements.append(element)
        lst.remove(element) # avoid picking the same element twice
        n -= 1
    return choiced_elements

#rc = _random_choice([1,2,3,4,5,6,7], 2)

#print(rc)
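The standard library already offers sampling without replacement, so the helper above could be reduced to one call to `random.sample`. A minimal sketch (the name `random_choice_sample` is illustrative):

```python
import random

def random_choice_sample(lst, n):
    """Return up to n distinct elements of lst, chosen at random."""
    # random.sample raises ValueError if n > len(lst), so cap n first
    return random.sample(lst, min(n, len(lst)))

picked = random_choice_sample([1, 2, 3, 4, 5, 6, 7], 2)
print(picked)
```

One difference from `_random_choice`: when n exceeds the list length, this version returns the elements in random order rather than the original list.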

# Pretend this is a user's recent browsing history

viewed = [

[9900, 1, 150],

[9000, 4, 150],

[9000, 4, 150],

[9000, 3, 150],

[9200, 4, 160],

[9400, 4, 180],

[9000, 4, 150],

[9000, 4, 160],

[92000, 4, 1120],

[9000, 4, 190],

[9500, 2, 127],

]

def history_view():
    # Pick 20 random rows from the CSV and pretend they are a user's recent browsing history
    choosed_idx = _random_choice(list(df.index), 20)
    # .ix was removed in pandas 1.0; .loc selects by index label
    choosed_rows = df.loc[choosed_idx, :]
    #print(choosed_rows)
    X = choosed_rows.loc[:, ['租金', '装修', '面积']].copy()
    print('-----------------------------Browsing history----------------------------------------------')
    print(X)
    zx = X['装修'].copy()
    zx[zx=='毛坯'] = 1
    zx[zx=='简单装修'] = 2
    zx[zx=='中等装修'] = 3
    zx[zx=='精装修'] = 4
    zx[zx=='豪华装修'] = 5
    X['装修'] = zx
    X['面积'] = X['面积'].apply(get_mianji)
    return X

#history_view()

def recommend(viewed):
    '''
    Recommend listings from the cluster that appears most often
    in the user's recent browsing history.
    '''
    viewed_types = knn.predict(viewed)
    #print(viewed_types)
    value_counts = pd.Series(viewed_types).value_counts()
    #print(value_counts)
    most_view = value_counts.index[0] # the most-viewed cluster
    #print(most_view)
    recommended = data_recommend[data_recommend['分类'] == most_view]
    n = 5
    # Pick n rows at random from this cluster (all of them if there are fewer than n)
    choiced_idx = _random_choice(list(recommended.index), n)
    #print(choiced_idx)
    # .ix was removed in pandas 1.0; .loc selects by index label
    recommended = recommended.loc[choiced_idx, :]
    print('-----------------------------Recommended----------------------------------------------')
    print(recommended)
    return recommended

#recommend(viewed)

#recommend(history_view())
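The four-step plan of Case 1 (cluster, train KNN on the cluster labels, classify the browsing history, recommend from the most frequent cluster) can be tried end to end without the scraped CSV. The sketch below runs on synthetic listings; the column names, value ranges and cluster count are assumptions made for the demo. Unlike the script above, it also scales the features inside the KNN pipeline, which is a swapped-in choice so that rent does not dominate the distance.

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical listings: rent, decoration level (1-5), area
listings = pd.DataFrame({
    'rent': rng.integers(2000, 12000, size=300),
    'decoration': rng.integers(1, 6, size=300),
    'area': rng.integers(30, 200, size=300),
})

# Step 1: cluster the scaled listings
scaled = StandardScaler().fit_transform(listings)
listings_cluster = KMeans(n_clusters=8, n_init=10, random_state=0).fit_predict(scaled)

# Step 2: KNN learns to reproduce the cluster labels
knn = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=7))
knn.fit(listings, listings_cluster)

# Step 3: classify the browsing history and find the most frequent cluster
viewed = pd.DataFrame([[9000, 4, 150], [9200, 4, 160], [9500, 2, 127]],
                      columns=listings.columns)
most_viewed = pd.Series(knn.predict(viewed)).value_counts().index[0]

# Step 4: recommend up to 5 random listings from that cluster
candidates = listings[listings_cluster == most_viewed]
recommended = candidates.sample(n=min(5, len(candidates)), random_state=0)
print(recommended)
```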

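3.2 Case 2

'''
Uses the data scraped by anjuke_zufang.py in chapter8.

A rental-listing recommender: based on the listings a user has browsed,
recommend listings with a similar district, rent, layout, etc. (content-based recommendation).

One function receives the listings the user visits often:
def accept()
Another function recommends listings similar to those received by the first:
def recommend()

1. Cluster the listings first to produce labelled data.
2. Look up the clusters of the recently browsed listings and find the most frequent one.
3. recommend() returns about 5 listings from that cluster.

For simplicity only rent, decoration level and area are considered.
'''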

import copy

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.cluster import KMeans, DBSCAN

from sklearn.preprocessing import LabelEncoder, StandardScaler, MinMaxScaler

from random import choice

area = ['浦东新区', '闵行区', '松江区',

'徐汇区', '普陀区', '长宁区',

'青浦区', '静安区', '上海周边',

'杨浦区', '虹口区', '宝山区',

'嘉定区', '黄浦区', '奉贤区',

'崇明区', '金山区']

# Coordinates looked up at http://www.gpsspg.com/maps.htm (Baidu Maps GPS)

gps = (

(31.2274065041,121.5505840120), (31.1189141643,121.3886803785), (31.0383289332,121.2330541677),

(31.1946458680,121.4433055580), (31.2553119532,121.4035442489), (31.2265243725,121.4304185175),

(31.1555447438,121.1308224101), (31.2296614952,121.4624372609), (31.2363429624,121.4803295328),

(31.2656839054,121.5326577316), (31.2703244262,121.5118910226), (31.4109435502,121.4959742660),

(31.3805628349,121.2727914784), (31.2374294453,121.4912966392), (30.9239497878,121.4806129429),

(31.6288408354,121.4038337007), (30.7479665497,121.3489176446)

)

area_gps = dict(zip(area, gps))

#print(area_gps)

df = pd.read_csv(r'..\csv\anjuke.csv', encoding='gbk')

#print(list(df['区域1'].unique()))

#print(df['区域1'].value_counts())

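def history_viewed(viewed_index):
    '''Select rows by index label and pretend they are
    the most recent entries in a user's browsing history.'''
    # .ix was removed in pandas 1.0; pass a list so .loc does not treat the tuple as a single key
    return df.loc[list(viewed_index), :]

#viewed_index = 3, 4, 5, 6, 22, 40, 43 # 40-50 square meters, rent 2000-3000
#viewed_index = 12, 18, 39, 46 # medium decoration
#viewed_index = 1, 8, 17, 20, 27, 30, 37 # over 100 square meters
viewed_index = 0, 1, 6, 13, 15, 17,18,19 # Pudong New Area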

viewed = history_viewed(viewed_index)

#print('-----------------------------Browsed----------------------------------------------')

#print(viewed)

#print(df.tail(10))

#print(df.columns)

#X = df.loc[:, ['租金', '租赁方式', '装修', '面积', '年代']]

X = df.loc[:, ['租金', '装修', '面积', '区域1']]

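# Feature cleaning
def get_mianji(mianji):
    return mianji.replace('平米', '')

def get_area_gps(area):
    return area_gps[area]

# Encode the decoration level by hand so the codes run from bare (1) to luxury (5);
# LabelEncoder assigns codes in sorted label order, not in this order, so it is not used.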

zx = X['装修'].copy()

zx[zx=='毛坯'] = 1

zx[zx=='简单装修'] = 2

zx[zx=='中等装修'] = 3

zx[zx=='精装修'] = 4

zx[zx=='豪华装修'] = 5

X['装修'] = zx

X['面积'] = X['面积'].apply(get_mianji)

# Convert the district name to (latitude, longitude) and split it into two columns

X['区域1'] = X['区域1'].apply(get_area_gps)

X['area_x'] = X['区域1'].apply(lambda x:x[0])

X['area_y'] = X['区域1'].apply(lambda x:x[1])

X = X.drop('区域1', axis=1)

#print(X.head())

X = X.astype(float)

X_filtered = X[(X['面积']<300) & (X['租金']<20000)]

df_filtered = df[df.index.isin(X_filtered.index)]

#print(df_filtered.shape[0])

#print(X_filtered.shape[0])

ss = StandardScaler()

X2 = ss.fit_transform(X_filtered)

model = KMeans(n_clusters=15, n_init=50)

# Elbow method
#ine = [[],[]] # coordinates for the plot
#for n in range(2, 50):
#    inertia = KMeans(n_clusters=n, n_init=20).fit(X2).inertia_
#    ine[0].append(n)
#    ine[1].append(inertia)
#plt.plot(ine[0], ine[1])
#plt.show()

#model = DBSCAN(eps = 0.1, min_samples=10)

#model.fit(X2)

y_pred = model.fit_predict(X2)

y_data = pd.DataFrame(y_pred, columns=['分类'], index=df_filtered.index)

#print(y_data.index)

#print(y_data.shape[0])

# Full listing data plus the cluster label, used when recommending

data_recommend = pd.concat([df_filtered, y_data], axis=1)

#print(data_recommend.shape[0])

print(y_pred == model.labels_)

print(data_recommend.shape)

def _random_choice(lst, n=5):
    '''Pick n distinct elements from lst at random.'''
    if n > len(lst):
        return lst
    choiced_elements = []
    lst = lst.copy()
    while n > 0:
        element = choice(lst)
        choiced_elements.append(element)
        lst.remove(element) # avoid picking the same element twice
        n -= 1
    return choiced_elements

#rc = _random_choice([1,2,3,4,5,6,7], 2)

#print(rc)

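def recommend():
    '''
    Recommend listings from the cluster that appears most often
    in the user's recent browsing history.
    '''
    # Look the clusters up by index label: y_pred is positional over the filtered rows,
    # so y_pred[viewed_index] points at the wrong rows once any row has been filtered out
    viewed_types = data_recommend.loc[data_recommend.index.isin(viewed_index), '分类']
    value_counts = viewed_types.value_counts()
    most_view = value_counts.index[0] # the most-viewed cluster
    #print(most_view)
    recommended = data_recommend[data_recommend['分类'] == most_view]
    n = 5
    # Pick n rows at random from this cluster
    choiced_idx = _random_choice(list(recommended.index), n)
    #print(choiced_idx)
    # .ix was removed in pandas 1.0; .loc selects by index label
    recommended = recommended.loc[choiced_idx, :]
    print('-----------------------------Recommended----------------------------------------------')
    print(recommended)
    return recommended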

def recommend2():
    viewed2 = data_recommend[data_recommend.index.isin(viewed_index)]
    print(viewed2['分类'])
    # Count on the label-aligned column; model.labels_ is positional over the filtered rows only
    view_counts = viewed2['分类'].value_counts()
    print(view_counts)
    most_view = view_counts.index[0]
    print(most_view)
    sametype = data_recommend[data_recommend['分类']==most_view]
    #print(sametype.head())
    choosed_index = _random_choice(list(sametype.index))
    #print(data_recommend.loc[choosed_index,:])
    # .ix was removed in pandas 1.0; .loc selects by index label
    return data_recommend.loc[choosed_index,:]

recommend2()
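Case 2 filters rows before clustering, so the label array returned by the model is positional while `viewed_index` holds index labels of the original frame. The toy example below (all values made up) shows how the two lookups diverge after filtering.

```python
import numpy as np
import pandas as pd

df_demo = pd.DataFrame({'rent': [3000, 25000, 4000, 5000, 30000, 6000]})
kept = df_demo[df_demo['rent'] < 20000]        # index labels 0, 2, 3, 5 survive
labels = np.array([0, 1, 1, 0])                # one made-up cluster label per kept row
clusters = pd.Series(labels, index=kept.index) # same labels, aligned to the index

viewed_demo = [2, 3]                           # index labels of the original frame
by_position = labels[np.array(viewed_demo)]    # positions 2 and 3 -> rows labelled 3 and 5
by_label = clusters.loc[viewed_demo]           # rows labelled 2 and 3
print(by_position.tolist(), by_label.tolist())
```

Keeping the cluster labels in a Series or DataFrame that shares the filtered frame's index avoids the mismatch.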

4. Clustering

Sample data

ID,R,F,M

1,27,6,232.61

2,3,5,1507.11

3,4,16,817.62

4,3,11,232.81

5,14,7,1913.05

6,19,8,220.07

7,5,2,615.83

8,26,2,1059.66

9,21,9,304.82

10,2,21,1227.96

11,15,2,521.02

12,26,3,438.22

13,17,11,1744.55

14,30,16,1957.44

15,5,7,1713.79

16,4,21,1768.11

17,93,2,1016.34

18,16,3,950.36

19,4,1,754.93

20,27,1,294.23

21,5,1,195.3

22,17,3,1845.34

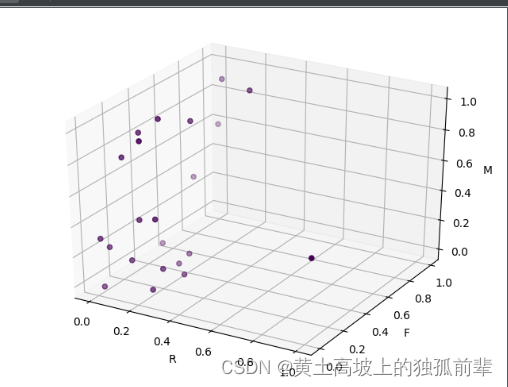

4.1 DBSCAN

# Cluster the consumption-behavior features with DBSCAN
#
# Picking two features makes 2D plotting possible

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.cluster import KMeans, DBSCAN

from sklearn.preprocessing import StandardScaler, MinMaxScaler

from mpl_toolkits.mplot3d import Axes3D # 3D plotting

# Two-feature variant, convenient for 2D analysis
# df = pd.read_csv('consumption_data.csv', encoding='utf-8')
# print(df)
# df = df.loc[:, ['F', 'M']] # keep F and M only (.ix was removed in pandas 1.0)
# print(df)
# # Remove extreme outliers
# df = df[(df.F<50) & (df.M<8400)]
# # Normalize the data
# df = (df - df.mean())/df.std()

df = pd.read_csv('consumption_data.csv', encoding='utf-8')

df = df.drop('ID', axis=1) # drop the ID column

# 1. Remove extreme outliers
# A row is kept only if all three conditions hold, hence & rather than |
df = df[(df.R < 80) & (df.F < 50) & (df.M < 8400)]

# 2. Normalize the features to a common range
# Min-max normalization with sklearn's MinMaxScaler maps R, F and M to [0, 1],
# which also puts the DBSCAN eps below on a 0-1 scale
ss = MinMaxScaler()
scaled_df = ss.fit_transform(df)
df2 = pd.DataFrame(scaled_df, columns=list('RFM'))

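# 3. Clustering
# Parameters
# eps: maximum distance between two points for them to count as neighbors
# min_samples: number of neighbors (the point itself included) a point needs to be a core point
# model = DBSCAN(eps=0.5, min_samples=10)
# On 0-1 scaled data eps=0.5 is so large that most points are neighbors of each other,
# so everything may end up in a single cluster.
model = DBSCAN(eps=0.1)

# Cluster the normalized data; fit_predict fits the model and returns the labels
# (noise points get the label -1). eps is on the 0-1 scale, e.g. 0.05 is another option.
y_pred = model.fit_predict(df2)

# DBSCAN has no predict() method, so new points cannot be assigned like this:
# print(model.predict([[1,25, 2535]]))

# Scatter plots
# 2D version: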

# plt.scatter(df2.R,df2.F) # 未分类散点图

# plt.scatter(df2.R, df2.F, c=y_pred) # 分类

# plt.show()

sd = plt.figure().add_subplot(111, projection='3d')

sd.set_xlabel('R')

sd.set_ylabel('F')

sd.set_zlabel('M')

sd.scatter(df2.R, df2.F, df2.M, c=y_pred)

plt.show()
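DBSCAN marks points that belong to no dense region with the label -1, so the number of clusters and the number of noise points can be read off the label array. A minimal sketch on synthetic 0-1 scaled points (the blobs and the `min_samples=5` value are assumptions for the demo):

```python
import numpy as np
from sklearn.cluster import DBSCAN

rng = np.random.default_rng(0)
# Two tight synthetic blobs plus one isolated point, already on a 0-1 scale
points = np.vstack([
    rng.normal(0.2, 0.01, size=(20, 2)),
    rng.normal(0.8, 0.01, size=(20, 2)),
    [[0.5, 0.5]],
])

labels = DBSCAN(eps=0.1, min_samples=5).fit_predict(points)

# -1 is the noise label and does not count as a cluster
n_clusters = len(set(labels)) - (1 if -1 in labels else 0)
n_noise = int(np.sum(labels == -1))
print(n_clusters, n_noise)
```

The same two lines applied to `y_pred` above show how sensitive the result is to `eps`.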

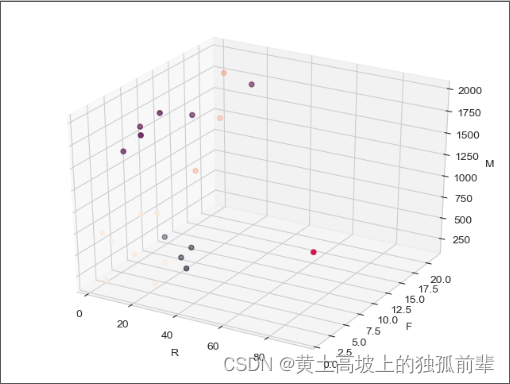

4.2 K-Means

# Cluster the consumption-behavior features with K-Means
# Example from the slides

import matplotlib.pyplot as plt # 2D plotting
from mpl_toolkits.mplot3d import Axes3D # 3D plotting
import numpy as np # array handling
import pandas as pd # data handling
from sklearn.cluster import KMeans, DBSCAN # clustering algorithms
from sklearn.preprocessing import MinMaxScaler, StandardScaler # two ways to scale features
import seaborn as sns # statistical plots

sns.set_style("whitegrid")

df = pd.read_csv('consumption_data.csv', encoding='utf-8')

print(df)

# df = df.loc[:, ['R', 'F', 'M']] # drop ID (.ix was removed in pandas 1.0)

df = df.drop('ID', axis=1) # drop ID, same effect

print(df)

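# Scatter plot and box plots (e.g. F on the x-axis, M on the y-axis) to spot outliers
# plt.scatter(df.F, df.M)
# plt.show()

# Inspect the data for abnormal values; a box plot makes them easy to see
# sns.boxplot(data=df.R)
# plt.ylim(0, df.R.max())
# plt.ylim(0, 130)
# plt.show()
# Result: one outlier with R > 80

# sns.boxplot(data=df.F)
# plt.ylim(0, df.F.max())
# plt.ylim(0, 100)
# plt.show()
# With so little data, no outlier shows up here

# sns.boxplot(data=df.M)
# plt.ylim(0, df.M.max())
# plt.ylim(0, 20000)
# plt.show()

# 1. Remove extreme outliers
# NOTE: with | a row is dropped only if it fails all three conditions, so the R = 93 sample
# stays in (the outputs recorded below still cover all 22 rows); combine the conditions
# with & to actually drop it.
df = df[(df.R < 80) | (df.F < 50) | (df.M < 8400)]

# 2. Normalize the features to a common range (pick one method)
# 2.1 Z-score standardization: mean 0, standard deviation 1
# df2 = (df - df.mean())/df.std() # df2 is the normalized data set
# With sklearn's StandardScaler
ss = StandardScaler()
scaled_df = ss.fit_transform(df) # puts R, F and M on comparable scales
df2 = pd.DataFrame(scaled_df, columns=list('RFM'))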

# 2.2 Min-max normalization: values are limited to [0, 1]
# df2 = (df - df.min())/(df.max() - df.min())
# With sklearn's MinMaxScaler every axis becomes 0-1

# scaled_df = MinMaxScaler().fit_transform(df)

# df2 = pd.DataFrame(scaled_df, columns=list('RFM'))
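The manual formulas in the comments above and the sklearn scalers agree, with one caveat: `StandardScaler` divides by the population standard deviation (ddof=0), whereas pandas' `std()` defaults to the sample standard deviation (ddof=1). A small check on the first five rows of the sample data listed earlier (the `rfm` name is illustrative):

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import MinMaxScaler, StandardScaler

# First five rows of the R, F, M sample data
rfm = pd.DataFrame({
    'R': [27, 3, 4, 3, 14],
    'F': [6, 5, 16, 11, 7],
    'M': [232.61, 1507.11, 817.62, 232.81, 1913.05],
})

z_sklearn = StandardScaler().fit_transform(rfm)
z_manual = ((rfm - rfm.mean()) / rfm.std(ddof=0)).to_numpy()   # ddof=0 matches sklearn
z_pandas_default = ((rfm - rfm.mean()) / rfm.std()).to_numpy() # ddof=1, slightly smaller values

mm_sklearn = MinMaxScaler().fit_transform(rfm)
mm_manual = ((rfm - rfm.min()) / (rfm.max() - rfm.min())).to_numpy()

print(np.allclose(z_sklearn, z_manual), np.allclose(z_sklearn, z_pandas_default))
print(np.allclose(mm_sklearn, mm_manual))
```

For clustering the ddof difference only rescales all axes by the same factor, so it does not change the cluster assignment.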

# 2.3 Whichever of the two methods is chosen, plot the result
# Look at the 3D scatter plot of the scaled data

# sd = plt.figure().add_subplot(111, projection='3d')

# sd.set_xlabel('R')

# sd.set_ylabel('F')

# sd.set_zlabel('M')

# sd.scatter(df2.R, df2.F, df2.M)

# plt.show()

# 3. Clustering
# n_clusters: number of clusters; n_init: number of runs with different initial centroids
# model = KMeans(n_clusters=9, n_init=5)

# 3.1 n_clusters clusters, at most 500 iterations per run. How many clusters are best?
# model = KMeans(n_clusters=5, max_iter=500)
model = KMeans(n_clusters=5)

# (1) Fit on the scaled data
model.fit(df2)

# (2) scaled_df and df2 hold the same values; df2 only adds the column names
y_pred = model.predict(df2)
print(y_pred) # e.g. [3 1 2 3 1 3 0 0 3 2 0 0 1 2 1 2 4 0 0 0 0 1], one cluster label per row
print(df2.shape, y_pred.shape)
# df2.shape (22, 3): 22 samples with the 3 features R, F, M
# y_pred.shape (22,)
# print(model.predict([[0.1, 0.1, 0.1]]))

# (3) Inertia: sum of squared distances from each point to its nearest cluster center
# (it shrinks as the number of clusters grows)
print(model.inertia_) # 10.834820247097994

# (3)_1 Elbow method: the bend ("elbow") of the curve suggests a suitable number of clusters
# Plot coordinates: x = number of clusters, y = inertia for that number of clusters
ine = [[], []]

# Choose a range that suits the data size, e.g. range(2, 31) for 940 samples
for n in range(2, 10):
    # inertia = KMeans(n_clusters=n, n_init=5).fit(df2).inertia_
    inertia = KMeans(n_clusters=n).fit(df2).inertia_
    ine[0].append(n)
    ine[1].append(inertia)

print(ine)

plt.plot(ine[0], ine[1])

plt.show()
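The elbow plot has to be read by eye. As a complementary check, the silhouette score gives one number per candidate cluster count (higher is better). This is a different technique from the elbow method, sketched here on synthetic blobs; `make_blobs` and its parameters are assumptions for the demo, not the consumption data.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

# Synthetic data with four blobs
X_demo, _ = make_blobs(n_samples=300, centers=4, cluster_std=0.5, random_state=0)

scores = {}
for k in range(2, 8):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(X_demo)
    scores[k] = silhouette_score(X_demo, labels)  # mean silhouette over all samples, in [-1, 1]

best_k = max(scores, key=scores.get)
print(scores, best_k)
```

On the 22-row sample the score is noisy, so it is best treated as one hint alongside the elbow curve.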

# print(model.labels_) # cluster label of each sample
# print(model.cluster_centers_) # cluster centers

# 3.2 Summary values of the clustering
# (1) Count the samples in each cluster
r1 = pd.Series(model.labels_).value_counts()
print(r1)
# cluster count

# 0 9

# 1 5

# 4 4

# 2 3

# 3 1

# (2) Center of each cluster (in scaled units)

r2 = pd.DataFrame(model.cluster_centers_)

print(r2)

# 0 1 2

# 0 0.162393 0.066667 0.208362

# 1 0.101099 0.280000 0.879310

# 2 0.109890 0.916667 0.826194

# 3 1.000000 0.050000 0.465933

# 4 0.107143 0.500000 0.112664

# # (3) Cluster centers in original units
# # Reuse the scaler fitted above; a fresh StandardScaler() is unfitted and cannot invert
# r2 = pd.DataFrame(ss.inverse_transform(model.cluster_centers_))

# print(r2)
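Mapping cluster centers back to original units only works with the scaler instance that was fitted on the data. A minimal sketch on the first eight rows of the sample data (the `rfm`, `scaler` and `km` names and the choice of two clusters are assumptions for the demo):

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.preprocessing import StandardScaler

# First eight rows of the R, F, M sample data
rfm = pd.DataFrame({
    'R': [27, 3, 4, 3, 14, 19, 5, 26],
    'F': [6, 5, 16, 11, 7, 8, 2, 2],
    'M': [232.61, 1507.11, 817.62, 232.81, 1913.05, 220.07, 615.83, 1059.66],
})

scaler = StandardScaler()
scaled = scaler.fit_transform(rfm)   # the fitted scaler remembers mean and scale
km = KMeans(n_clusters=2, n_init=10, random_state=0).fit(scaled)

# Invert with the SAME fitted scaler to get centers in original R, F, M units
centers = pd.DataFrame(scaler.inverse_transform(km.cluster_centers_), columns=rfm.columns)
print(centers)
```

Centers in original units are easier to interpret, for example as "recent, frequent, high-spend" customers.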

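# (4) Put r1 and r2 into one table
# Concatenate side by side (axis=0 would stack vertically); rows are aligned on the cluster label
r = pd.concat([r2, r1], axis=1)

print(r) # the Series column is named 0 by default and is renamed below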

# 0 1 2 0

# 0 0.162393 0.066667 0.208362 9

# 1 0.101099 0.280000 0.879310 5

# 2 0.109890 0.916667 0.826194 3

# 3 0.107143 0.500000 0.112664 4

# 4 1.000000 0.050000 0.465933 1

# Rename the columns

r.columns = list(df.columns) + ['类别数目']

print(r)

# R F M 类别数目

# 0 0.162393 0.066667 0.208362 9

# 1 0.101099 0.280000 0.879310 5

# 2 0.109890 0.916667 0.826194 3

# 3 0.107143 0.500000 0.112664 4

# 4 1.000000 0.050000 0.465933 1

# 3.3 3D scatter plot (one color per cluster)
# model.fit_predict(df2) # == model.fit(df2).predict(df2)
# y_pred = model.predict(df2) # the model is already fitted, so predict is enough

sd = plt.figure().add_subplot(111, projection='3d')
sd.set_xlabel('R')
sd.set_ylabel('F')
sd.set_zlabel('M')
sd.scatter(df.R, df.F, df.M, c=y_pred)

# The original df is plotted so the axes show real units; y_pred and model.labels_ hold the same values
# sd.scatter(df.R, df.F, df.M, c=model.labels_)

plt.show()

'''

# sns.barplot(x="sex", y="survived", hue="class", data=r2)

# plt.show()

# ----------------Bar chart of the cluster centers----------------------

# r3 = r.drop('类别数目', axis=1)

##plt.bar(range(3), r3.loc[0], label='0')

##plt.legend()

# sns.barplot(data=r3)

# plt.show()

# --------------------------------------

## 3D scatter plot with a legend (the plot above already covers this)

# y_pred = model.predict(df2) # the model is already fitted, so predict is enough

# sd = plt.figure().add_subplot(111, projection = '3d')

# sd.set_xlabel('R')

# sd.set_ylabel('F')

# sd.set_zlabel('M')

## Build one coordinate set per cluster

# type0 = ([], [], []) # lists of (x, y, z) coordinates, here R, F, M

# type1 = ([], [], [])

# type2 = ([], [], [])

# type3 = ([], [], [])

# type4 = ([], [], [])

# types = tuple(set(y_pred))

# length = len(y_pred)

# for i in range(length):

# if y_pred[i] == types[0]:

# type0[0].append(df.R[i])

# type0[1].append(df.F[i])

# type0[2].append(df.M[i])

# elif y_pred[i] == types[1]:

# type1[0].append(df.R[i])

# type1[1].append(df.F[i])

# type1[2].append(df.M[i])

# elif y_pred[i] == types[2]:

# type2[0].append(df.R[i])

# type2[1].append(df.F[i])

# type2[2].append(df.M[i])

# elif y_pred[i] == types[3]:

# type3[0].append(df.R[i])

# type3[1].append(df.F[i])

# type3[2].append(df.M[i])

# elif y_pred[i] == types[4]:

# type4[0].append(df.R[i])

# type4[1].append(df.F[i])

# type4[2].append(df.M[i])

#

# t0 = sd.scatter(type0[0], type0[1],type0[2], marker='x', color='b')

# t1 = sd.scatter(type1[0], type1[1],type1[2], marker='x', color='c')

# t2 = sd.scatter(type2[0], type2[1],type2[2], marker='o', color='r')

# t3 = sd.scatter(type3[0], type3[1],type3[2], marker='o', color='y')

# t4 = sd.scatter(type4[0], type4[1],type4[2], marker='o', color='g')

# plt.legend((t0, t1, t2, t3, t4),

# ('type0', 'type1', 'type2', 'type3', 'type4'),

# scatterpoints=1,

# loc=2, # legend position

# ncol=2, # number of columns

# fontsize=8)

# plt.show()

'''

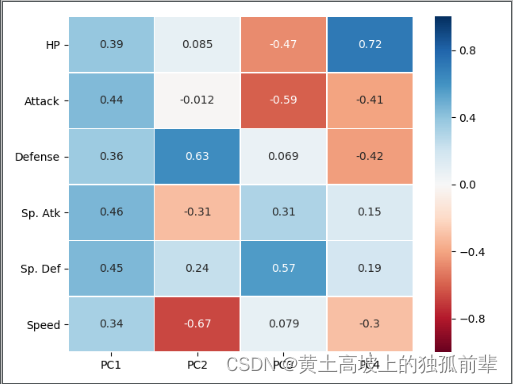

5. Dimensionality reduction

Data source

#,Name,Type 1,Type 2,Total,HP,Attack,Defense,Sp. Atk,Sp. Def,Speed,Generation,Legendary

1,Bulbasaur,Grass,Poison,318,45,49,49,65,65,45,1,False

2,Ivysaur,Grass,Poison,405,60,62,63,80,80,60,1,False

3,Venusaur,Grass,Poison,525,80,82,83,100,100,80,1,False

3,VenusaurMega Venusaur,Grass,Poison,625,80,100,123,122,120,80,1,False

4,Charmander,Fire,,309,39,52,43,60,50,65,1,False

5,Charmeleon,Fire,,405,58,64,58,80,65,80,1,False

6,Charizard,Fire,Flying,534,78,84,78,109,85,100,1,False

6,CharizardMega Charizard X,Fire,Dragon,634,78,130,111,130,85,100,1,False

6,CharizardMega Charizard Y,Fire,Flying,634,78,104,78,159,115,100,1,False

7,Squirtle,Water,,314,44,48,65,50,64,43,1,False

8,Wartortle,Water,,405,59,63,80,65,80,58,1,False

9,Blastoise,Water,,530,79,83,100,85,105,78,1,False

9,BlastoiseMega Blastoise,Water,,630,79,103,120,135,115,78,1,False

10,Caterpie,Bug,,195,45,30,35,20,20,45,1,False

11,Metapod,Bug,,205,50,20,55,25,25,30,1,False

12,Butterfree,Bug,Flying,395,60,45,50,90,80,70,1,False

13,Weedle,Bug,Poison,195,40,35,30,20,20,50,1,False

14,Kakuna,Bug,Poison,205,45,25,50,25,25,35,1,False

15,Beedrill,Bug,Poison,395,65,90,40,45,80,75,1,False

15,BeedrillMega Beedrill,Bug,Poison,495,65,150,40,15,80,145,1,False

16,Pidgey,Normal,Flying,251,40,45,40,35,35,56,1,False

17,Pidgeotto,Normal,Flying,349,63,60,55,50,50,71,1,False

18,Pidgeot,Normal,Flying,479,83,80,75,70,70,101,1,False

18,PidgeotMega Pidgeot,Normal,Flying,579,83,80,80,135,80,121,1,False

19,Rattata,Normal,,253,30,56,35,25,35,72,1,False

20,Raticate,Normal,,413,55,81,60,50,70,97,1,False

21,Spearow,Normal,Flying,262,40,60,30,31,31,70,1,False

22,Fearow,Normal,Flying,442,65,90,65,61,61,100,1,False

23,Ekans,Poison,,288,35,60,44,40,54,55,1,False

24,Arbok,Poison,,438,60,85,69,65,79,80,1,False

25,Pikachu,Electric,,320,35,55,40,50,50,90,1,False

26,Raichu,Electric,,485,60,90,55,90,80,110,1,False

27,Sandshrew,Ground,,300,50,75,85,20,30,40,1,False

28,Sandslash,Ground,,450,75,100,110,45,55,65,1,False

29,Nidoran♀,Poison,,275,55,47,52,40,40,41,1,False

30,Nidorina,Poison,,365,70,62,67,55,55,56,1,False

31,Nidoqueen,Poison,Ground,505,90,92,87,75,85,76,1,False

32,Nidoran♂,Poison,,273,46,57,40,40,40,50,1,False

33,Nidorino,Poison,,365,61,72,57,55,55,65,1,False

34,Nidoking,Poison,Ground,505,81,102,77,85,75,85,1,False

35,Clefairy,Fairy,,323,70,45,48,60,65,35,1,False

36,Clefable,Fairy,,483,95,70,73,95,90,60,1,False

37,Vulpix,Fire,,299,38,41,40,50,65,65,1,False

38,Ninetales,Fire,,505,73,76,75,81,100,100,1,False

39,Jigglypuff,Normal,Fairy,270,115,45,20,45,25,20,1,False

40,Wigglytuff,Normal,Fairy,435,140,70,45,85,50,45,1,False

41,Zubat,Poison,Flying,245,40,45,35,30,40,55,1,False

42,Golbat,Poison,Flying,455,75,80,70,65,75,90,1,False

43,Oddish,Grass,Poison,320,45,50,55,75,65,30,1,False

44,Gloom,Grass,Poison,395,60,65,70,85,75,40,1,False

45,Vileplume,Grass,Poison,490,75,80,85,110,90,50,1,False

46,Paras,Bug,Grass,285,35,70,55,45,55,25,1,False

47,Parasect,Bug,Grass,405,60,95,80,60,80,30,1,False

48,Venonat,Bug,Poison,305,60,55,50,40,55,45,1,False

49,Venomoth,Bug,Poison,450,70,65,60,90,75,90,1,False

50,Diglett,Ground,,265,10,55,25,35,45,95,1,False

51,Dugtrio,Ground,,405,35,80,50,50,70,120,1,False

52,Meowth,Normal,,290,40,45,35,40,40,90,1,False

53,Persian,Normal,,440,65,70,60,65,65,115,1,False

54,Psyduck,Water,,320,50,52,48,65,50,55,1,False

55,Golduck,Water,,500,80,82,78,95,80,85,1,False

56,Mankey,Fighting,,305,40,80,35,35,45,70,1,False

57,Primeape,Fighting,,455,65,105,60,60,70,95,1,False

58,Growlithe,Fire,,350,55,70,45,70,50,60,1,False

59,Arcanine,Fire,,555,90,110,80,100,80,95,1,False

60,Poliwag,Water,,300,40,50,40,40,40,90,1,False

61,Poliwhirl,Water,,385,65,65,65,50,50,90,1,False

62,Poliwrath,Water,Fighting,510,90,95,95,70,90,70,1,False

63,Abra,Psychic,,310,25,20,15,105,55,90,1,False

64,Kadabra,Psychic,,400,40,35,30,120,70,105,1,False

65,Alakazam,Psychic,,500,55,50,45,135,95,120,1,False

65,AlakazamMega Alakazam,Psychic,,590,55,50,65,175,95,150,1,False

66,Machop,Fighting,,305,70,80,50,35,35,35,1,False

67,Machoke,Fighting,,405,80,100,70,50,60,45,1,False

68,Machamp,Fighting,,505,90,130,80,65,85,55,1,False

69,Bellsprout,Grass,Poison,300,50,75,35,70,30,40,1,False

70,Weepinbell,Grass,Poison,390,65,90,50,85,45,55,1,False

71,Victreebel,Grass,Poison,490,80,105,65,100,70,70,1,False

72,Tentacool,Water,Poison,335,40,40,35,50,100,70,1,False

73,Tentacruel,Water,Poison,515,80,70,65,80,120,100,1,False

74,Geodude,Rock,Ground,300,40,80,100,30,30,20,1,False

75,Graveler,Rock,Ground,390,55,95,115,45,45,35,1,False

76,Golem,Rock,Ground,495,80,120,130,55,65,45,1,False

77,Ponyta,Fire,,410,50,85,55,65,65,90,1,False

78,Rapidash,Fire,,500,65,100,70,80,80,105,1,False

79,Slowpoke,Water,Psychic,315,90,65,65,40,40,15,1,False

80,Slowbro,Water,Psychic,490,95,75,110,100,80,30,1,False

80,SlowbroMega Slowbro,Water,Psychic,590,95,75,180,130,80,30,1,False

81,Magnemite,Electric,Steel,325,25,35,70,95,55,45,1,False

82,Magneton,Electric,Steel,465,50,60,95,120,70,70,1,False

83,Farfetch'd,Normal,Flying,352,52,65,55,58,62,60,1,False

84,Doduo,Normal,Flying,310,35,85,45,35,35,75,1,False

85,Dodrio,Normal,Flying,460,60,110,70,60,60,100,1,False

86,Seel,Water,,325,65,45,55,45,70,45,1,False

87,Dewgong,Water,Ice,475,90,70,80,70,95,70,1,False

88,Grimer,Poison,,325,80,80,50,40,50,25,1,False

89,Muk,Poison,,500,105,105,75,65,100,50,1,False

90,Shellder,Water,,305,30,65,100,45,25,40,1,False

91,Cloyster,Water,Ice,525,50,95,180,85,45,70,1,False

92,Gastly,Ghost,Poison,310,30,35,30,100,35,80,1,False

93,Haunter,Ghost,Poison,405,45,50,45,115,55,95,1,False

94,Gengar,Ghost,Poison,500,60,65,60,130,75,110,1,False

94,GengarMega Gengar,Ghost,Poison,600,60,65,80,170,95,130,1,False

95,Onix,Rock,Ground,385,35,45,160,30,45,70,1,False

96,Drowzee,Psychic,,328,60,48,45,43,90,42,1,False

97,Hypno,Psychic,,483,85,73,70,73,115,67,1,False

98,Krabby,Water,,325,30,105,90,25,25,50,1,False

99,Kingler,Water,,475,55,130,115,50,50,75,1,False

100,Voltorb,Electric,,330,40,30,50,55,55,100,1,False

101,Electrode,Electric,,480,60,50,70,80,80,140,1,False

102,Exeggcute,Grass,Psychic,325,60,40,80,60,45,40,1,False

103,Exeggutor,Grass,Psychic,520,95,95,85,125,65,55,1,False

104,Cubone,Ground,,320,50,50,95,40,50,35,1,False

105,Marowak,Ground,,425,60,80,110,50,80,45,1,False

106,Hitmonlee,Fighting,,455,50,120,53,35,110,87,1,False

107,Hitmonchan,Fighting,,455,50,105,79,35,110,76,1,False

108,Lickitung,Normal,,385,90,55,75,60,75,30,1,False

109,Koffing,Poison,,340,40,65,95,60,45,35,1,False

110,Weezing,Poison,,490,65,90,120,85,70,60,1,False

111,Rhyhorn,Ground,Rock,345,80,85,95,30,30,25,1,False

112,Rhydon,Ground,Rock,485,105,130,120,45,45,40,1,False

113,Chansey,Normal,,450,250,5,5,35,105,50,1,False

114,Tangela,Grass,,435,65,55,115,100,40,60,1,False

115,Kangaskhan,Normal,,490,105,95,80,40,80,90,1,False

115,KangaskhanMega Kangaskhan,Normal,,590,105,125,100,60,100,100,1,False

116,Horsea,Water,,295,30,40,70,70,25,60,1,False

117,Seadra,Water,,440,55,65,95,95,45,85,1,False

118,Goldeen,Water,,320,45,67,60,35,50,63,1,False

119,Seaking,Water,,450,80,92,65,65,80,68,1,False

120,Staryu,Water,,340,30,45,55,70,55,85,1,False

121,Starmie,Water,Psychic,520,60,75,85,100,85,115,1,False

122,Mr. Mime,Psychic,Fairy,460,40,45,65,100,120,90,1,False

123,Scyther,Bug,Flying,500,70,110,80,55,80,105,1,False

124,Jynx,Ice,Psychic,455,65,50,35,115,95,95,1,False

125,Electabuzz,Electric,,490,65,83,57,95,85,105,1,False

126,Magmar,Fire,,495,65,95,57,100,85,93,1,False

127,Pinsir,Bug,,500,65,125,100,55,70,85,1,False

127,PinsirMega Pinsir,Bug,Flying,600,65,155,120,65,90,105,1,False

128,Tauros,Normal,,490,75,100,95,40,70,110,1,False

129,Magikarp,Water,,200,20,10,55,15,20,80,1,False

130,Gyarados,Water,Flying,540,95,125,79,60,100,81,1,False

130,GyaradosMega Gyarados,Water,Dark,640,95,155,109,70,130,81,1,False

131,Lapras,Water,Ice,535,130,85,80,85,95,60,1,False

132,Ditto,Normal,,288,48,48,48,48,48,48,1,False

133,Eevee,Normal,,325,55,55,50,45,65,55,1,False

134,Vaporeon,Water,,525,130,65,60,110,95,65,1,False

135,Jolteon,Electric,,525,65,65,60,110,95,130,1,False

136,Flareon,Fire,,525,65,130,60,95,110,65,1,False

137,Porygon,Normal,,395,65,60,70,85,75,40,1,False

138,Omanyte,Rock,Water,355,35,40,100,90,55,35,1,False

139,Omastar,Rock,Water,495,70,60,125,115,70,55,1,False

140,Kabuto,Rock,Water,355,30,80,90,55,45,55,1,False

141,Kabutops,Rock,Water,495,60,115,105,65,70,80,1,False

142,Aerodactyl,Rock,Flying,515,80,105,65,60,75,130,1,False

142,AerodactylMega Aerodactyl,Rock,Flying,615,80,135,85,70,95,150,1,False

143,Snorlax,Normal,,540,160,110,65,65,110,30,1,False

144,Articuno,Ice,Flying,580,90,85,100,95,125,85,1,True

145,Zapdos,Electric,Flying,580,90,90,85,125,90,100,1,True

146,Moltres,Fire,Flying,580,90,100,90,125,85,90,1,True

147,Dratini,Dragon,,300,41,64,45,50,50,50,1,False

148,Dragonair,Dragon,,420,61,84,65,70,70,70,1,False

149,Dragonite,Dragon,Flying,600,91,134,95,100,100,80,1,False

150,Mewtwo,Psychic,,680,106,110,90,154,90,130,1,True

150,MewtwoMega Mewtwo X,Psychic,Fighting,780,106,190,100,154,100,130,1,True

150,MewtwoMega Mewtwo Y,Psychic,,780,106,150,70,194,120,140,1,True

151,Mew,Psychic,,600,100,100,100,100,100,100,1,False

152,Chikorita,Grass,,318,45,49,65,49,65,45,2,False

153,Bayleef,Grass,,405,60,62,80,63,80,60,2,False

154,Meganium,Grass,,525,80,82,100,83,100,80,2,False

155,Cyndaquil,Fire,,309,39,52,43,60,50,65,2,False

156,Quilava,Fire,,405,58,64,58,80,65,80,2,False

157,Typhlosion,Fire,,534,78,84,78,109,85,100,2,False

158,Totodile,Water,,314,50,65,64,44,48,43,2,False

159,Croconaw,Water,,405,65,80,80,59,63,58,2,False

160,Feraligatr,Water,,530,85,105,100,79,83,78,2,False

161,Sentret,Normal,,215,35,46,34,35,45,20,2,False

162,Furret,Normal,,415,85,76,64,45,55,90,2,False

163,Hoothoot,Normal,Flying,262,60,30,30,36,56,50,2,False

164,Noctowl,Normal,Flying,442,100,50,50,76,96,70,2,False

165,Ledyba,Bug,Flying,265,40,20,30,40,80,55,2,False

166,Ledian,Bug,Flying,390,55,35,50,55,110,85,2,False

167,Spinarak,Bug,Poison,250,40,60,40,40,40,30,2,False

168,Ariados,Bug,Poison,390,70,90,70,60,60,40,2,False

169,Crobat,Poison,Flying,535,85,90,80,70,80,130,2,False

170,Chinchou,Water,Electric,330,75,38,38,56,56,67,2,False

171,Lanturn,Water,Electric,460,125,58,58,76,76,67,2,False

172,Pichu,Electric,,205,20,40,15,35,35,60,2,False

173,Cleffa,Fairy,,218,50,25,28,45,55,15,2,False

174,Igglybuff,Normal,Fairy,210,90,30,15,40,20,15,2,False

175,Togepi,Fairy,,245,35,20,65,40,65,20,2,False

176,Togetic,Fairy,Flying,405,55,40,85,80,105,40,2,False

177,Natu,Psychic,Flying,320,40,50,45,70,45,70,2,False

178,Xatu,Psychic,Flying,470,65,75,70,95,70,95,2,False

179,Mareep,Electric,,280,55,40,40,65,45,35,2,False

180,Flaaffy,Electric,,365,70,55,55,80,60,45,2,False

181,Ampharos,Electric,,510,90,75,85,115,90,55,2,False

181,AmpharosMega Ampharos,Electric,Dragon,610,90,95,105,165,110,45,2,False

182,Bellossom,Grass,,490,75,80,95,90,100,50,2,False

183,Marill,Water,Fairy,250,70,20,50,20,50,40,2,False

184,Azumarill,Water,Fairy,420,100,50,80,60,80,50,2,False

185,Sudowoodo,Rock,,410,70,100,115,30,65,30,2,False

186,Politoed,Water,,500,90,75,75,90,100,70,2,False

187,Hoppip,Grass,Flying,250,35,35,40,35,55,50,2,False

188,Skiploom,Grass,Flying,340,55,45,50,45,65,80,2,False

189,Jumpluff,Grass,Flying,460,75,55,70,55,95,110,2,False

190,Aipom,Normal,,360,55,70,55,40,55,85,2,False

191,Sunkern,Grass,,180,30,30,30,30,30,30,2,False

192,Sunflora,Grass,,425,75,75,55,105,85,30,2,False

193,Yanma,Bug,Flying,390,65,65,45,75,45,95,2,False

194,Wooper,Water,Ground,210,55,45,45,25,25,15,2,False

195,Quagsire,Water,Ground,430,95,85,85,65,65,35,2,False

196,Espeon,Psychic,,525,65,65,60,130,95,110,2,False

197,Umbreon,Dark,,525,95,65,110,60,130,65,2,False

198,Murkrow,Dark,Flying,405,60,85,42,85,42,91,2,False

199,Slowking,Water,Psychic,490,95,75,80,100,110,30,2,False

200,Misdreavus,Ghost,,435,60,60,60,85,85,85,2,False

201,Unown,Psychic,,336,48,72,48,72,48,48,2,False

202,Wobbuffet,Psychic,,405,190,33,58,33,58,33,2,False

203,Girafarig,Normal,Psychic,455,70,80,65,90,65,85,2,False

204,Pineco,Bug,,290,50,65,90,35,35,15,2,False

205,Forretress,Bug,Steel,465,75,90,140,60,60,40,2,False

206,Dunsparce,Normal,,415,100,70,70,65,65,45,2,False

207,Gligar,Ground,Flying,430,65,75,105,35,65,85,2,False

208,Steelix,Steel,Ground,510,75,85,200,55,65,30,2,False

208,SteelixMega Steelix,Steel,Ground,610,75,125,230,55,95,30,2,False

209,Snubbull,Fairy,,300,60,80,50,40,40,30,2,False

210,Granbull,Fairy,,450,90,120,75,60,60,45,2,False

211,Qwilfish,Water,Poison,430,65,95,75,55,55,85,2,False

212,Scizor,Bug,Steel,500,70,130,100,55,80,65,2,False

212,ScizorMega Scizor,Bug,Steel,600,70,150,140,65,100,75,2,False

213,Shuckle,Bug,Rock,505,20,10,230,10,230,5,2,False

214,Heracross,Bug,Fighting,500,80,125,75,40,95,85,2,False

214,HeracrossMega Heracross,Bug,Fighting,600,80,185,115,40,105,75,2,False

215,Sneasel,Dark,Ice,430,55,95,55,35,75,115,2,False

216,Teddiursa,Normal,,330,60,80,50,50,50,40,2,False

217,Ursaring,Normal,,500,90,130,75,75,75,55,2,False

218,Slugma,Fire,,250,40,40,40,70,40,20,2,False

219,Magcargo,Fire,Rock,410,50,50,120,80,80,30,2,False

220,Swinub,Ice,Ground,250,50,50,40,30,30,50,2,False

221,Piloswine,Ice,Ground,450,100,100,80,60,60,50,2,False

222,Corsola,Water,Rock,380,55,55,85,65,85,35,2,False

223,Remoraid,Water,,300,35,65,35,65,35,65,2,False

224,Octillery,Water,,480,75,105,75,105,75,45,2,False

225,Delibird,Ice,Flying,330,45,55,45,65,45,75,2,False

226,Mantine,Water,Flying,465,65,40,70,80,140,70,2,False

227,Skarmory,Steel,Flying,465,65,80,140,40,70,70,2,False

228,Houndour,Dark,Fire,330,45,60,30,80,50,65,2,False

229,Houndoom,Dark,Fire,500,75,90,50,110,80,95,2,False

229,HoundoomMega Houndoom,Dark,Fire,600,75,90,90,140,90,115,2,False

230,Kingdra,Water,Dragon,540,75,95,95,95,95,85,2,False

231,Phanpy,Ground,,330,90,60,60,40,40,40,2,False

232,Donphan,Ground,,500,90,120,120,60,60,50,2,False

233,Porygon2,Normal,,515,85,80,90,105,95,60,2,False

234,Stantler,Normal,,465,73,95,62,85,65,85,2,False

235,Smeargle,Normal,,250,55,20,35,20,45,75,2,False

236,Tyrogue,Fighting,,210,35,35,35,35,35,35,2,False

237,Hitmontop,Fighting,,455,50,95,95,35,110,70,2,False

238,Smoochum,Ice,Psychic,305,45,30,15,85,65,65,2,False

239,Elekid,Electric,,360,45,63,37,65,55,95,2,False

240,Magby,Fire,,365,45,75,37,70,55,83,2,False

241,Miltank,Normal,,490,95,80,105,40,70,100,2,False

242,Blissey,Normal,,540,255,10,10,75,135,55,2,False

243,Raikou,Electric,,580,90,85,75,115,100,115,2,True

244,Entei,Fire,,580,115,115,85,90,75,100,2,True

245,Suicune,Water,,580,100,75,115,90,115,85,2,True

246,Larvitar,Rock,Ground,300,50,64,50,45,50,41,2,False

247,Pupitar,Rock,Ground,410,70,84,70,65,70,51,2,False

248,Tyranitar,Rock,Dark,600,100,134,110,95,100,61,2,False

248,TyranitarMega Tyranitar,Rock,Dark,700,100,164,150,95,120,71,2,False

249,Lugia,Psychic,Flying,680,106,90,130,90,154,110,2,True

250,Ho-oh,Fire,Flying,680,106,130,90,110,154,90,2,True

251,Celebi,Psychic,Grass,600,100,100,100,100,100,100,2,False

252,Treecko,Grass,,310,40,45,35,65,55,70,3,False

253,Grovyle,Grass,,405,50,65,45,85,65,95,3,False

254,Sceptile,Grass,,530,70,85,65,105,85,120,3,False

254,SceptileMega Sceptile,Grass,Dragon,630,70,110,75,145,85,145,3,False

255,Torchic,Fire,,310,45,60,40,70,50,45,3,False

256,Combusken,Fire,Fighting,405,60,85,60,85,60,55,3,False

257,Blaziken,Fire,Fighting,530,80,120,70,110,70,80,3,False

257,BlazikenMega Blaziken,Fire,Fighting,630,80,160,80,130,80,100,3,False

258,Mudkip,Water,,310,50,70,50,50,50,40,3,False

259,Marshtomp,Water,Ground,405,70,85,70,60,70,50,3,False

260,Swampert,Water,Ground,535,100,110,90,85,90,60,3,False

260,SwampertMega Swampert,Water,Ground,635,100,150,110,95,110,70,3,False

261,Poochyena,Dark,,220,35,55,35,30,30,35,3,False

262,Mightyena,Dark,,420,70,90,70,60,60,70,3,False

263,Zigzagoon,Normal,,240,38,30,41,30,41,60,3,False

264,Linoone,Normal,,420,78,70,61,50,61,100,3,False

265,Wurmple,Bug,,195,45,45,35,20,30,20,3,False

266,Silcoon,Bug,,205,50,35,55,25,25,15,3,False

267,Beautifly,Bug,Flying,395,60,70,50,100,50,65,3,False

268,Cascoon,Bug,,205,50,35,55,25,25,15,3,False

269,Dustox,Bug,Poison,385,60,50,70,50,90,65,3,False

270,Lotad,Water,Grass,220,40,30,30,40,50,30,3,False

271,Lombre,Water,Grass,340,60,50,50,60,70,50,3,False

272,Ludicolo,Water,Grass,480,80,70,70,90,100,70,3,False

273,Seedot,Grass,,220,40,40,50,30,30,30,3,False

274,Nuzleaf,Grass,Dark,340,70,70,40,60,40,60,3,False

275,Shiftry,Grass,Dark,480,90,100,60,90,60,80,3,False

276,Taillow,Normal,Flying,270,40,55,30,30,30,85,3,False

277,Swellow,Normal,Flying,430,60,85,60,50,50,125,3,False

278,Wingull,Water,Flying,270,40,30,30,55,30,85,3,False

279,Pelipper,Water,Flying,430,60,50,100,85,70,65,3,False

280,Ralts,Psychic,Fairy,198,28,25,25,45,35,40,3,False

281,Kirlia,Psychic,Fairy,278,38,35,35,65,55,50,3,False

282,Gardevoir,Psychic,Fairy,518,68,65,65,125,115,80,3,False

282,GardevoirMega Gardevoir,Psychic,Fairy,618,68,85,65,165,135,100,3,False

283,Surskit,Bug,Water,269,40,30,32,50,52,65,3,False

284,Masquerain,Bug,Flying,414,70,60,62,80,82,60,3,False

285,Shroomish,Grass,,295,60,40,60,40,60,35,3,False

286,Breloom,Grass,Fighting,460,60,130,80,60,60,70,3,False

287,Slakoth,Normal,,280,60,60,60,35,35,30,3,False

288,Vigoroth,Normal,,440,80,80,80,55,55,90,3,False

289,Slaking,Normal,,670,150,160,100,95,65,100,3,False

290,Nincada,Bug,Ground,266,31,45,90,30,30,40,3,False

291,Ninjask,Bug,Flying,456,61,90,45,50,50,160,3,False

292,Shedinja,Bug,Ghost,236,1,90,45,30,30,40,3,False

293,Whismur,Normal,,240,64,51,23,51,23,28,3,False