Using SIFT in OpenCV 3.1

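OpenCV 3 reorganized its modules. Modules still under development and the nonfree modules (including SIFT) were moved into opencv_contrib, and the official GitHub project was split into two repositories, opencv and opencv_contrib. To use the SIFT interface, you therefore need to build and install opencv_contrib on top of OpenCV 3.1. This post records how to install opencv_contrib, how to configure Xcode, and how to use the SIFT interface. Environment: OS X + Xcode + OpenCV 3.1.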

$ cd <opencv_build_directory>

$ cmake -DOPENCV_EXTRA_MODULES_PATH=<opencv_contrib>/modules <opencv_source_directory>

$ make -j5

$ sudo make install

Here <opencv_build_directory> and <opencv_source_directory> are the build and source directories from your OpenCV 3.1 installation tutorial, such as "How to develop OpenCV with Xcode".

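Next, configure the Xcode project: add /usr/local/lib to Library Search Paths and /usr/local/include to Header Search Paths, then add the following to Other Linker Flags: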

-lopencv_stitching -lopencv_superres -lopencv_videostab -lopencv_aruco -lopencv_bgsegm -lopencv_bioinspired -lopencv_ccalib -lopencv_dnn -lopencv_dpm -lopencv_fuzzy -lopencv_line_descriptor -lopencv_optflow -lopencv_plot -lopencv_reg -lopencv_saliency -lopencv_stereo -lopencv_structured_light -lopencv_rgbd -lopencv_surface_matching -lopencv_tracking -lopencv_datasets -lopencv_text -lopencv_face -lopencv_xfeatures2d -lopencv_shape -lopencv_video -lopencv_ximgproc -lopencv_calib3d -lopencv_features2d -lopencv_flann -lopencv_xobjdetect -lopencv_objdetect -lopencv_ml -lopencv_xphoto -lippicv -lopencv_highgui -lopencv_videoio -lopencv_imgcodecs -lopencv_photo -lopencv_imgproc -lopencv_core

A sample can be found in (source_dir)/samples/cpp/tutorial_code/xfeatures2D/LATCH_match.cpp; the basic usage is shown below:

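#include "opencv2/xfeatures2d.hpp"

// In OpenCV 3.x, SIFT lives in the xfeatures2d module of opencv_contrib
cv::Ptr<cv::Feature2D> f2d = cv::xfeatures2d::SIFT::create();

std::vector<cv::KeyPoint> keypoints_1, keypoints_2;  // detected keypoints
std::vector<cv::DMatch> matches;                     // descriptor matches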

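SIFT descriptors are 128-element float vectors, and `cv::BFMatcher` compares float descriptors with its default `NORM_L2` norm. To make that step concrete, here is a minimal stdlib-only sketch of what `match` computes in that mode: for each query descriptor, the train descriptor at the smallest Euclidean distance. It is not OpenCV's implementation, and the `Match` struct and function names are hypothetical stand-ins (for `cv::DMatch` and the matcher).

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical stand-in for cv::DMatch: query index, train index, distance.
struct Match {
    int queryIdx;
    int trainIdx;
    float distance;
};

using Descriptor = std::vector<float>;  // e.g. 128 floats per SIFT keypoint

// Euclidean (L2) distance between two descriptors of equal length.
float l2Distance(const Descriptor& a, const Descriptor& b) {
    float sum = 0.0f;
    for (std::size_t k = 0; k < a.size(); ++k) {
        float diff = a[k] - b[k];
        sum += diff * diff;
    }
    return std::sqrt(sum);
}

// Brute-force matching: for each query descriptor, keep the nearest train descriptor.
std::vector<Match> bruteForceMatch(const std::vector<Descriptor>& query,
                                   const std::vector<Descriptor>& train) {
    std::vector<Match> matches;
    for (std::size_t q = 0; q < query.size(); ++q) {
        Match best{static_cast<int>(q), -1, std::numeric_limits<float>::max()};
        for (std::size_t t = 0; t < train.size(); ++t) {
            float d = l2Distance(query[q], train[t]);
            if (d < best.distance) {
                best.trainIdx = static_cast<int>(t);
                best.distance = d;
            }
        }
        if (best.trainIdx >= 0) matches.push_back(best);  // skip if train set is empty
    }
    return matches;
}
```

Every query gets exactly one match, even a poor one, which is why the experiment code sorts the matches by distance and keeps only the best few before estimating a homography.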

References:

- https://github.com/Itseez/opencv_contrib
- https://github.com/Itseez/opencv
- http://blog.csdn.net/lijiang1991/article/details/50756065
- http://docs.opencv.org/3.1.0/d5/d3c/classcv_1_1xfeatures2d_1_1SIFT.html#gsc.tab=0

Experiment code:

Without further ado, here is the code:

#include <algorithm>
#include <iostream>
#include <vector>
#include "opencv2/core.hpp"
#include "opencv2/imgcodecs.hpp"
#include "opencv2/highgui.hpp"
#include "opencv2/imgproc.hpp"
#include "opencv2/features2d.hpp"
#include "opencv2/calib3d.hpp"
#include "opencv2/xfeatures2d.hpp"

using namespace cv;
using namespace std;
using namespace cv::xfeatures2d;

int main()
{
    // Object image and scene image, both loaded as grayscale
    Mat a = imread("box.png", IMREAD_GRAYSCALE);
    Mat b = imread("box_in_scene.png", IMREAD_GRAYSCALE);
    if (a.empty() || b.empty()) {
        cout << "Error reading images." << endl;
        return -1;
    }

    // SURF (also in xfeatures2d) with Hessian threshold 800;
    // xfeatures2d::SIFT::create() can be used in exactly the same way
    Ptr<SURF> surf = SURF::create(800);
    Mat c, d;                       // descriptors
    vector<KeyPoint> key1, key2;    // keypoints
    surf->detectAndCompute(a, Mat(), key1, c);
    surf->detectAndCompute(b, Mat(), key2, d);

    // Brute-force matching, then keep the best 15% of matches (at most 50)
    BFMatcher matcher;
    vector<DMatch> matches;
    matcher.match(c, d, matches);
    sort(matches.begin(), matches.end());   // DMatch compares by distance
    int ptsPairs = std::min(50, (int)(matches.size() * 0.15));
    cout << ptsPairs << endl;
    vector<DMatch> good_matches(matches.begin(), matches.begin() + ptsPairs);

    Mat outimg;
    drawMatches(a, key1, b, key2, good_matches, outimg, Scalar::all(-1), Scalar::all(-1),
                vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS);

    // Matched point pairs: object image -> scene image
    vector<Point2f> obj, scene;
    for (size_t i = 0; i < good_matches.size(); i++) {
        obj.push_back(key1[good_matches[i].queryIdx].pt);
        scene.push_back(key2[good_matches[i].trainIdx].pt);
    }
    if (obj.size() < 4) {           // findHomography needs at least 4 point pairs
        cout << "Not enough matches." << endl;
        return -1;
    }

    // Estimate the homography with RANSAC and project the object's corners into the scene
    Mat H = findHomography(obj, scene, RANSAC);
    if (H.empty()) {
        cout << "Homography estimation failed." << endl;
        return -1;
    }
    vector<Point2f> obj_corners(4), scene_corners(4);
    obj_corners[0] = Point2f(0, 0);
    obj_corners[1] = Point2f((float)a.cols, 0);
    obj_corners[2] = Point2f((float)a.cols, (float)a.rows);
    obj_corners[3] = Point2f(0, (float)a.rows);
    perspectiveTransform(obj_corners, scene_corners, H);

    // drawMatches puts the scene to the right of the object, so shift x by a.cols
    Point2f offset((float)a.cols, 0);
    for (int i = 0; i < 4; i++)
        line(outimg, scene_corners[i] + offset, scene_corners[(i + 1) % 4] + offset,
             Scalar(0, 255, 0), 2, LINE_AA);

    imshow("aaaa", outimg);
    waitKey(0);
    return 0;
}
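`perspectiveTransform` maps each object corner through the 3×3 homography `H` from `findHomography` in homogeneous coordinates: x' = (h00·x + h01·y + h02)/w and y' = (h10·x + h11·y + h12)/w, with w = h20·x + h21·y + h22. Because `drawMatches` places the scene image to the right of the object image, the code above then adds `a.cols` to each x coordinate before drawing. The sketch below reproduces that arithmetic with the standard library only; `Pt`, `Homography`, `projectPoint` and `sceneOutline` are hypothetical stand-ins for `cv::Point2f`, the `Mat H`, and the OpenCV calls, not OpenCV code.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical stand-ins for cv::Point2f and a row-major 3x3 homography.
struct Pt { float x, y; };
using Homography = std::array<std::array<double, 3>, 3>;

// Apply H to one point: (x, y, 1) -> (x'/w, y'/w).
Pt projectPoint(const Homography& H, Pt p) {
    double xh = H[0][0] * p.x + H[0][1] * p.y + H[0][2];
    double yh = H[1][0] * p.x + H[1][1] * p.y + H[1][2];
    double w  = H[2][0] * p.x + H[2][1] * p.y + H[2][2];
    if (std::fabs(w) < 1e-12) return {0.0f, 0.0f};  // point at infinity: this sketch returns (0, 0)
    return {static_cast<float>(xh / w), static_cast<float>(yh / w)};
}

// Project the four corners of a cols x rows object image into the scene and
// shift them right by the object width, matching the layout drawMatches produces.
std::vector<Pt> sceneOutline(const Homography& H, int cols, int rows) {
    const std::vector<Pt> corners = {
        {0.0f, 0.0f},
        {static_cast<float>(cols), 0.0f},
        {static_cast<float>(cols), static_cast<float>(rows)},
        {0.0f, static_cast<float>(rows)}};
    std::vector<Pt> out;
    for (const Pt& c : corners) {
        Pt s = projectPoint(H, c);
        out.push_back({s.x + static_cast<float>(cols), s.y});
    }
    return out;
}
```

For a pure translation H (identity plus an offset in the last column), the outline is simply the object rectangle moved by that offset and then by the object width.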

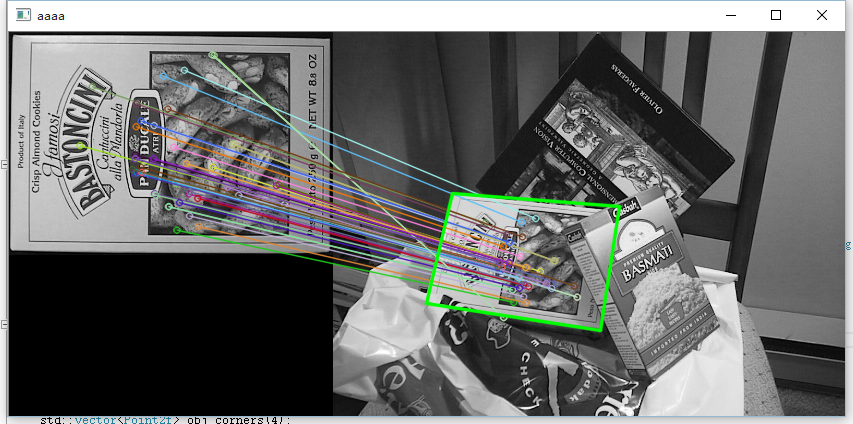

运行图:

A second fragment matches ORB features with an LSH-based FLANN matcher and outlines the object the same way. It is taken from a larger program: `yimage`, `h` and `w` are defined in surrounding code that is not shown.

// Load the object image; the scene image comes from yimage (defined elsewhere)
cv::Mat img_object = cv::imread("/storage/emulated/0/ApplePearFace/imgTemp.jpg");
cv::Mat img_scene(yimage);
if (img_object.empty() || img_scene.empty()) {
    cout << "Error reading images." << endl;
    return 0;
}

// ORB keypoints and binary descriptors (OpenCV 3.x API; FeatureDetector::create("ORB") is 2.4-only)
cv::Ptr<cv::ORB> orb = cv::ORB::create();
vector<cv::KeyPoint> kp_object, kp_scene;
cv::Mat desp_object, desp_scene;
orb->detectAndCompute(img_object, cv::noArray(), kp_object, desp_object);
orb->detectAndCompute(img_scene, cv::noArray(), kp_scene, desp_scene);

// FLANN with an LSH index (table_number 20, key_size 10, multi_probe_level 2) for binary descriptors
cv::FlannBasedMatcher matcher(cv::makePtr<cv::flann::LshIndexParams>(20, 10, 2));
vector<cv::DMatch> matches;
matcher.match(desp_object, desp_scene, matches);

cv::Mat img_matches;
cv::drawMatches(img_object, kp_object, img_scene, kp_scene, matches, img_matches);

vector<cv::Point2f> obj_points, scene;
for (size_t i = 0; i < matches.size(); i++) {
    obj_points.push_back(kp_object[matches[i].queryIdx].pt);
    scene.push_back(kp_scene[matches[i].trainIdx].pt);
}
if (obj_points.size() < 4) return 0;   // findHomography needs at least 4 point pairs
cv::Mat H = cv::findHomography(obj_points, scene, cv::RANSAC);
if (H.empty()) return 0;

vector<cv::Point2f> obj_corners(4), scene_corners(4);
obj_corners[0] = cv::Point2f(0, 0);
obj_corners[1] = cv::Point2f((float)img_object.cols, 0);
obj_corners[2] = cv::Point2f((float)img_object.cols, (float)img_object.rows);
obj_corners[3] = cv::Point2f(0, (float)img_object.rows);
cv::perspectiveTransform(obj_corners, scene_corners, H);

// The scene is drawn to the right of the object, so shift x by img_object.cols
cv::Point2f offset((float)img_object.cols, 0);
for (int i = 0; i < 4; i++)
    cv::line(img_matches, scene_corners[i] + offset, scene_corners[(i + 1) % 4] + offset,
             cv::Scalar(0, 255, 0), 4);

cv::Mat dstSize;
cv::resize(img_matches, dstSize, cv::Size(2 * h, w));
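ORB descriptors are binary strings (32 bytes each with the default settings), so they are compared with the Hamming distance, the number of differing bits, rather than L2. That is also why the fragment above gives `FlannBasedMatcher` an LSH index instead of its default KD-tree, which is meant for float data. A minimal stdlib-only sketch of the distance itself follows; the function name is hypothetical.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hamming distance between two binary descriptors of equal length:
// XOR each byte pair and count the bits that differ.
int hammingDistance(const std::vector<std::uint8_t>& a, const std::vector<std::uint8_t>& b) {
    int dist = 0;
    for (std::size_t i = 0; i < a.size() && i < b.size(); ++i) {
        dist += static_cast<int>(std::bitset<8>(a[i] ^ b[i]).count());
    }
    return dist;
}
```

Swapping this for the L2 distance in a brute-force loop gives the behavior of a brute-force matcher with Hamming distance; the LSH index instead trades exactness for speed by hashing descriptors into buckets first.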
