1. Comparison by accuracy
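For classifiers, scikit-learn's `score(X, y)` returns the mean accuracy: the fraction of samples whose predicted label equals the true label. Below is a minimal stdlib sketch of that quantity. The function name `accuracy` and the example labels are hypothetical and only illustrate the arithmetic.

```python
def accuracy(y_true, y_pred):
    """Fraction of positions where the predicted label equals the true label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("need at least one sample")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


# Hypothetical labels for illustration only (three wine classes: 0, 1, 2).
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
print(accuracy(y_true, y_pred))  # 4 of 6 correct → 0.6666666666666666
```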

```python
# -*- coding: utf-8 -*-
"""
Compare a decision tree and a random forest by accuracy
"""
from sklearn.tree import DecisionTreeClassifier
from sklearn.ensemble import RandomForestClassifier
from sklearn.datasets import load_wine
from sklearn.model_selection import train_test_split

# wine.data has shape (178, 13): 178 samples, 13 features
wine = load_wine()
# print(wine.data.shape)
print(wine.target)

# No random_state here, so the split (and the scores below) change from run to run
Xtrain, Xtest, Ytrain, Ytest = train_test_split(wine.data, wine.target, test_size=0.3)

clf = DecisionTreeClassifier(random_state=0)
rfc = RandomForestClassifier(random_state=0)
clf = clf.fit(Xtrain, Ytrain)
rfc = rfc.fit(Xtrain, Ytrain)

# For classifiers, score() returns accuracy
score_c = clf.score(Xtest, Ytest)
score_rfc = rfc.score(Xtest, Ytest)

print("Single Tree:{}".format(score_c),
      "Random Forest:{}".format(score_rfc))
```

Output: Single Tree:0.8703703703703703 Random Forest:1.0

2. Comparison by cross-validation

# -*-coding:utf-8-*-

"""

交叉熵来对比决策树和随机森林

"""

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import RandomForestClassifier

from sklearn.datasets import load_wine

from sklearn.model_selection import cross_val_score

import matplotlib.pyplot as plt

plt.switch_backend("TkAgg")

#(178, 13)

wine=load_wine()

# print(wine.data.shape)

print(wine.target)

from sklearn.model_selection import train_test_split

Xtrain,Xtest,Ytrain,Ytest=train_test_split(wine.data,wine.target,test_size=0.3)

rfc=RandomForestClassifier(n_estimators=25)

rfc_s=cross_val_score(rfc,wine.data,wine.target,cv=10)

clf=DecisionTreeClassifier()

clf_s=cross_val_score(clf,wine.data,wine.target,cv=10)

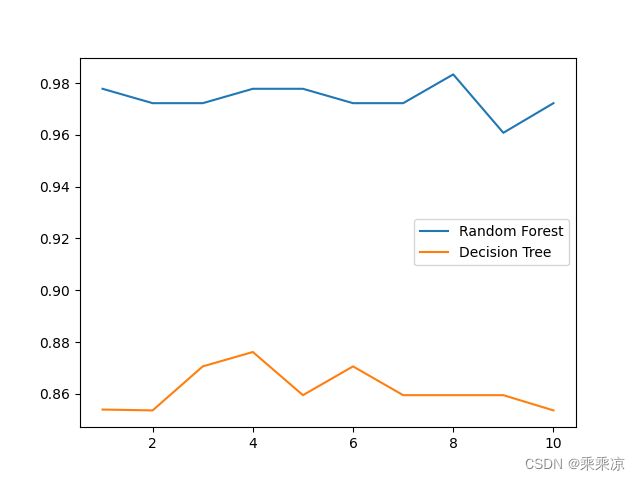

plt.plot(range(1,11),rfc_s,label="RandomForest")

plt.plot(range(1,11),clf_s,label="DecisionTree")

plt.legend()

plt.show()
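`cross_val_score(..., cv=10)` splits the data into 10 folds, trains on 9 of them, scores on the held-out one, and returns the 10 accuracies; those are the 10 points on each curve. Below is a minimal stdlib sketch of that loop, assuming plain unshuffled folds. Note that for classifiers scikit-learn actually uses stratified folds (`StratifiedKFold`) when `cv` is an integer, which matters here because `wine.target` is sorted by class. The helper names and the majority-class baseline are hypothetical.

```python
from collections import Counter


def kfold_indices(n_samples, k):
    """Yield (train_idx, test_idx) for plain, unshuffled k-fold CV.

    The first n_samples % k folds get one extra sample, so every
    sample appears in exactly one test fold.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must satisfy 2 <= k <= n_samples")
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


def cross_val_accuracy(fit_predict, X, y, k):
    """Return one accuracy per fold.

    fit_predict(X_train, y_train, X_test) must return predicted labels.
    """
    scores = []
    for train_idx, test_idx in kfold_indices(len(y), k):
        preds = fit_predict([X[i] for i in train_idx],
                            [y[i] for i in train_idx],
                            [X[i] for i in test_idx])
        truth = [y[i] for i in test_idx]
        scores.append(sum(p == t for p, t in zip(preds, truth)) / len(truth))
    return scores


def majority_fit_predict(X_train, y_train, X_test):
    """Hypothetical baseline model: always predict the most common training label."""
    label = Counter(y_train).most_common(1)[0][0]
    return [label] * len(X_test)


# Fold sizes for 178 samples (the wine data) and cv=10.
print([len(test) for _, test in kfold_indices(178, 10)])
# → [18, 18, 18, 18, 18, 18, 18, 18, 17, 17]

# Labels sorted by class, like wine.target: each unshuffled fold's training
# part contains only the other class, so the baseline gets every fold wrong.
print(cross_val_accuracy(majority_fit_predict, list(range(10)), [0] * 5 + [1] * 5, k=2))
# → [0.0, 0.0]
```

The second example shows why stratified or shuffled folds matter for class-sorted labels such as `wine.target`.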

3. Comparison by cross-validation averaged over multiple runs

```python
# -*- coding: utf-8 -*-
"""
Compare a decision tree and a random forest using cross-validation averaged over 10 runs
"""
from sklearn.tree import DecisionTreeClassifier
from sklearn.ensemble import RandomForestClassifier
from sklearn.datasets import load_wine
from sklearn.model_selection import cross_val_score
import matplotlib.pyplot as plt

plt.switch_backend("TkAgg")

# wine.data has shape (178, 13)
wine = load_wine()
# print(wine.data.shape)
print(wine.target)

rfc_mc = []  # mean 10-fold CV accuracy of the random forest on each run
clf_mc = []  # mean 10-fold CV accuracy of the decision tree on each run
for i in range(10):
    rfc = RandomForestClassifier(n_estimators=25)
    rfc_s = cross_val_score(rfc, wine.data, wine.target, cv=10).mean()
    rfc_mc.append(rfc_s)
    clf = DecisionTreeClassifier()
    clf_s = cross_val_score(clf, wine.data, wine.target, cv=10).mean()
    clf_mc.append(clf_s)

plt.plot(range(1, 11), rfc_mc, label="Random Forest")
plt.plot(range(1, 11), clf_mc, label="Decision Tree")
plt.legend()
plt.show()
```

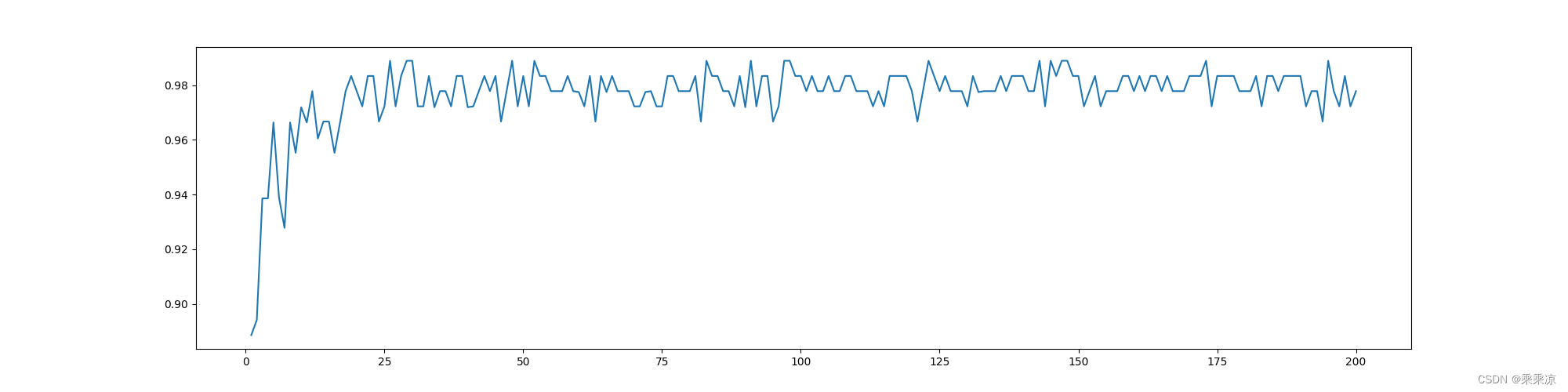
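Averaging the fold scores and repeating the whole run 10 times smooths out run-to-run noise. With an integer `cv` the folds are the same on every pass (no shuffling), so the variation across passes comes from the models themselves: neither is given a `random_state`. Below is a minimal stdlib sketch for summarizing the repeats and comparing the two models pass by pass. `summarize_repeats` and `paired_wins` are hypothetical helper names.

```python
from statistics import mean, stdev


def summarize_repeats(fold_scores_per_repeat):
    """Return (mean, stdev) of the per-repeat mean accuracies.

    fold_scores_per_repeat holds one list of fold accuracies per repeat,
    i.e. what cross_val_score(...) returns on each pass of the loop.
    """
    run_means = [mean(scores) for scores in fold_scores_per_repeat]
    spread = stdev(run_means) if len(run_means) > 1 else 0.0
    return mean(run_means), spread


def paired_wins(scores_a, scores_b):
    """Compare two models repeat by repeat.

    Returns (wins of a, ties, mean of a - b) over the paired repeats.
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty lists of equal length")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    wins = sum(d > 0 for d in diffs)
    ties = sum(d == 0 for d in diffs)
    return wins, ties, mean(diffs)
```

Since `rfc_mc` and `clf_mc` already hold one mean per pass, `paired_wins(rfc_mc, clf_mc)` would report in how many of the 10 passes the forest scored higher. `summarize_repeats` applies when the raw fold arrays are kept instead of their means.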

4. Choosing a suitable n_estimators

Choose a suitable number of decision trees for the random forest.

```python
# -*- coding: utf-8 -*-
from sklearn.ensemble import RandomForestClassifier
from sklearn.datasets import load_wine
from sklearn.model_selection import cross_val_score
import matplotlib.pyplot as plt

plt.switch_backend("TkAgg")

# wine.data has shape (178, 13)
wine = load_wine()
# print(wine.data.shape)
print(wine.target)

superpa = []  # mean 10-fold CV accuracy for n_estimators = 1..200
for i in range(200):
    # n_jobs=-1 builds the trees in parallel on all CPU cores
    rfc = RandomForestClassifier(n_estimators=i + 1, n_jobs=-1)
    rfc_s = cross_val_score(rfc, wine.data, wine.target, cv=10).mean()
    superpa.append(rfc_s)

# Best mean accuracy and the n_estimators that achieved it (index + 1, since n starts at 1)
print(max(superpa), superpa.index(max(superpa)) + 1)

plt.figure(figsize=[20, 5])
plt.plot(range(1, 201), superpa)
plt.show()
```

Output (best mean accuracy, n_estimators): 0.9888888888888889 26
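The loop above runs 200 × 10 = 2,000 cross-validation fits, and because each score comes from a single noisy run, the argmax is only an estimate. Below is a minimal sketch of a cheaper alternative that the original does not use. It scores a coarse grid such as `range(1, 201, 10)` first, then searches densely around the best coarse value. `best_setting` generalizes the `index + 1` lookup to any grid, so it still works when the step is not 1. Both function names and the refinement rule are hypothetical.

```python
def best_setting(grid, scores):
    """Return (best_score, best_param) for parallel lists grid and scores.

    max() keeps the first maximum it sees, so ties go to the earliest grid
    value, matching superpa.index(max(superpa)) in the script above.
    """
    if len(grid) != len(scores) or not grid:
        raise ValueError("grid and scores must be non-empty and equally long")
    best_idx = max(range(len(scores)), key=scores.__getitem__)
    return scores[best_idx], grid[best_idx]


def refine_grid(center, step, lo=1, hi=200):
    """Hypothetical second-stage grid: every integer strictly within one
    coarse step of the best coarse value, clipped to [lo, hi]."""
    return range(max(lo, center - step + 1), min(hi, center + step - 1) + 1)


# Coarse stage: 20 candidates instead of 200.
coarse_grid = list(range(1, 201, 10))  # 1, 11, 21, ..., 191
print(len(coarse_grid))  # → 20
```

With the script's own grid, `best_setting(list(range(1, 201)), superpa)` returns the same pair it prints.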
