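Deploying the MiniCPM-Llama3-V-2_5-int4 large model

Environment used:

Python 3.8 + CUDA 11.8. For the remaining requirements, install whatever the installation documentation specifies.

I ran this on an RTX 4090 on a GPU cloud platform. A GPU with 8 GB of memory is enough to deploy and run inference with the int4 quantized version; the non-quantized version needs more GPU memory.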
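As a rough sanity check on the 8 GB figure, weight memory can be estimated from the parameter count and the bits stored per parameter. The sketch below assumes roughly 8 billion parameters (an approximation, not a number given in this post) and counts weights only; activations, the KV cache, and framework overhead come on top.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed for the weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 1024**3


# Assumption: about 8 billion parameters; adjust for the real model size.
ASSUMED_PARAMS = 8e9

int4_gib = weight_memory_gib(ASSUMED_PARAMS, 4)   # 4 bits per weight
fp16_gib = weight_memory_gib(ASSUMED_PARAMS, 16)  # 16 bits per weight

print(f"int4 weights: ~{int4_gib:.1f} GiB")
print(f"fp16 weights: ~{fp16_gib:.1f} GiB")
```

Under that assumption the int4 weights come to under 4 GiB, which leaves headroom on an 8 GB card, while the fp16 weights alone are about four times larger.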

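MiniCPM-V repository download

openbmb/MiniCPM-Llama3-V-2_5-int4 model file download

MiniCPM-Llama3-V-2_5 non-quantized model file location

MiniCPM-V-2.5 deployment, applications, and more

Errors encountered on the AutoDL GPU cloud platform: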

root@autodl-container-cffc47b4c5-4a5f97c0:~/tf-logs# conda activate minicpmv2

CommandNotFoundError: Your shell has not been properly configured to use 'conda activate'. To initialize your shell, run

You need to run the following, then reopen the shell so the change takes effect:

conda init bash

Show file sizes

ls -lh example.zip

df -h /root/autodl-tmp

tar -xvf

Extract a rar file

# Update the package list
sudo apt-get update

# Install unrar
sudo apt-get install unrar

# Extract MiniCPM-Llama3-V-2_5-int4.rar
unrar x MiniCPM-Llama3-V-2_5-int4.rar

# List the extracted files
ls -l

unrar x xxx.rar

unrar x

Check disk space

df -h /dev/sda1

Installation

1. Clone the repository and change into its directory

git clone https://github.com/OpenBMB/MiniCPM-V.git
cd MiniCPM-V

2. Create a conda environment

conda create -n minicpmv2.5 python=3.8 -y
conda activate minicpmv2.5

3. Install the dependencies

pip install -r requirements.txt -i https://pypi.mirrors.ustc.edu.cn/simple
pip install gradio==3.40.0 -i https://pypi.mirrors.ustc.edu.cn/simple

Other requirements

Usage

Inference with Hugging Face transformers on NVIDIA GPUs. The requirements below were tested on Python 3.10:

Pillow==10.1.0

torch==2.1.2

torchvision==0.16.2

transformers==4.40.0

sentencepiece==0.1.99

test.py

# test.py
import torch
from PIL import Image
from transformers import AutoModel, AutoTokenizer

model = AutoModel.from_pretrained('openbmb/MiniCPM-Llama3-V-2_5', trust_remote_code=True, torch_dtype=torch.float16)
model = model.to(device='cuda')

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-Llama3-V-2_5', trust_remote_code=True)
model.eval()

image = Image.open('xx.jpg').convert('RGB')
question = 'What is in the image?'
msgs = [{'role': 'user', 'content': question}]

res = model.chat(
    image=image,
    msgs=msgs,
    tokenizer=tokenizer,
    sampling=True, # if sampling=False, beam_search will be used by default
    temperature=0.7,
    # system_prompt='' # pass system_prompt if needed
)
print(res)

## if you want to use streaming, please make sure sampling=True and stream=True
## the model.chat will return a generator
res = model.chat(
    image=image,
    msgs=msgs,
    tokenizer=tokenizer,
    sampling=True,
    temperature=0.7,
    stream=True
)

generated_text = ""
for new_text in res:
    generated_text += new_text
    print(new_text, flush=True, end='')
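The streaming loop at the end of test.py can be wrapped in a small helper so the full reply is available after printing. This is only a sketch: `collect_stream` and `fake_stream` are hypothetical names, and `fake_stream` stands in for the generator that `model.chat(..., stream=True)` returns, so the example runs without a GPU.

```python
from typing import Iterable, Iterator


def collect_stream(chunks: Iterable[str], echo: bool = True) -> str:
    """Print each streamed chunk as it arrives and return the full text."""
    parts = []
    for chunk in chunks:
        if echo:
            print(chunk, end="", flush=True)
        parts.append(chunk)
    if echo:
        print()  # finish the line once the stream is exhausted
    return "".join(parts)


def fake_stream() -> Iterator[str]:
    """Hypothetical stand-in for model.chat(..., stream=True)."""
    yield from ["A ", "cat ", "on ", "a ", "sofa."]


text = collect_stream(fake_stream())
```

With the real model, the call would be `collect_stream(res)` where `res` is the generator from the streaming example above.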

Error

ERROR: pip's dependency resolver does not currently take into account all the packages that are installed. This behaviour is the source of the following dependency conflicts.

spacy 3.7.2 requires typer<0.10.0,>=0.3.0, but you have typer 0.12.3 which is incompatible.

weasel 0.3.4 requires typer<0.10.0,>=0.3.0, but you have typer 0.12.3 which is incompatible.

Fix: adjusting the versions of a few packages resolved it.
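To see why the installed typer triggers the conflict, it helps to check a version against the range quoted in the error message. The sketch below is a simplified comparison that only handles plain numeric versions such as 0.12.3; `parse_version` and `satisfies` are illustrative helpers, not part of pip.

```python
def parse_version(text: str) -> tuple:
    """Turn '0.12.3' into (0, 12, 3); plain numeric versions only."""
    return tuple(int(part) for part in text.split("."))


def satisfies(version: str, lower: str, upper: str) -> bool:
    """True if lower <= version < upper."""
    return parse_version(lower) <= parse_version(version) < parse_version(upper)


# spacy 3.7.2 and weasel 0.3.4 both require typer>=0.3.0,<0.10.0
for candidate in ("0.12.3", "0.9.0"):
    ok = satisfies(candidate, "0.3.0", "0.10.0")
    print(f"typer {candidate}: {'compatible' if ok else 'conflict'}")
```

typer 0.12.3 falls outside the range, while 0.9.0 (the version in the working package list) falls inside it.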

Below are the package versions from my working environment, for reference.

Package Version

------------------------- -----------

accelerate 0.30.1

addict 2.4.0

aiofiles 23.2.1

aiohttp 3.9.5

aiosignal 1.3.1

altair 5.3.0

annotated-types 0.6.0

anyio 3.7.1

async-timeout 4.0.3

attrs 23.2.0

bitsandbytes 0.43.1

blis 0.7.11

catalogue 2.0.10

certifi 2024.2.2

charset-normalizer 3.3.2

click 8.1.7

cloudpathlib 0.16.0

colorama 0.4.6

confection 0.1.4

contourpy 1.1.1

cycler 0.12.1

cymem 2.0.8

dnspython 2.6.1

editdistance 0.6.2

einops 0.7.0

email_validator 2.1.1

et-xmlfile 1.1.0

exceptiongroup 1.2.1

fairscale 0.4.0

fastapi 0.70.0

fastapi-cli 0.0.1

ffmpy 0.3.2

filelock 3.14.0

fonttools 4.51.0

frozenlist 1.4.1

fsspec 2024.5.0

gradio 3.40.0

gradio_client 0.16.3

h11 0.14.0

httpcore 1.0.5

httptools 0.6.1

httpx 0.27.0

huggingface-hub 0.23.0

idna 3.7

importlib-metadata 1.7.0

importlib_resources 6.4.0

Jinja2 3.1.4

joblib 1.4.2

jsonlines 4.0.0

jsonschema 4.22.0

jsonschema-specifications 2023.12.1

kiwisolver 1.4.5

langcodes 3.4.0

language_data 1.2.0

linkify-it-py 2.0.3

lxml 5.2.2

marisa-trie 1.1.1

markdown-it-py 2.2.0

markdown2 2.4.10

MarkupSafe 2.1.5

matplotlib 3.7.4

mdit-py-plugins 0.3.3

mdurl 0.1.2

more-itertools 10.1.0

mpmath 1.3.0

multidict 6.0.5

murmurhash 1.0.10

networkx 3.1

nltk 3.8.1

numpy 1.24.4

nvidia-cublas-cu12 12.1.3.1

nvidia-cuda-cupti-cu12 12.1.105

nvidia-cuda-nvrtc-cu12 12.1.105

nvidia-cuda-runtime-cu12 12.1.105

nvidia-cudnn-cu12 8.9.2.26

nvidia-cufft-cu12 11.0.2.54

nvidia-curand-cu12 10.3.2.106

nvidia-cusolver-cu12 11.4.5.107

nvidia-cusparse-cu12 12.1.0.106

nvidia-nccl-cu12 2.18.1

nvidia-nvjitlink-cu12 12.4.127

nvidia-nvtx-cu12 12.1.105

opencv-python-headless 4.5.5.64

openpyxl 3.1.2

orjson 3.10.3

packaging 23.2

pandas 2.0.3

Pillow 10.1.0

pip 24.0

pkgutil_resolve_name 1.3.10

portalocker 2.8.2

preshed 3.0.9

protobuf 4.25.0

psutil 5.9.8

pydantic 1.10.15

pydantic_core 2.18.2

pydub 0.25.1

Pygments 2.18.0

pyparsing 3.1.2

python-dateutil 2.9.0.post0

python-dotenv 1.0.1

python-multipart 0.0.9

pytz 2024.1

PyYAML 6.0.1

referencing 0.35.1

regex 2024.5.15

requests 2.31.0

rich 13.7.1

rpds-py 0.18.1

sacrebleu 2.3.2

safetensors 0.4.3

seaborn 0.13.0

semantic-version 2.10.0

sentencepiece 0.1.99

setuptools 69.5.1

shellingham 1.5.4

shortuuid 1.0.11

six 1.16.0

smart-open 6.4.0

sniffio 1.3.1

spacy 3.7.2

spacy-legacy 3.0.12

spacy-loggers 1.0.5

srsly 2.4.8

starlette 0.16.0

sympy 1.12

tabulate 0.9.0

thinc 8.2.3

timm 0.9.10

tokenizers 0.19.1

toolz 0.12.1

torch 2.1.2

torchvision 0.16.2

tqdm 4.66.1

transformers 4.40.0

triton 2.1.0

typer 0.9.0

typing_extensions 4.8.0

tzdata 2024.1

uc-micro-py 1.0.3

ujson 5.10.0

urllib3 2.2.1

uvicorn 0.29.0

uvloop 0.19.0

wasabi 1.1.2

watchfiles 0.21.0

weasel 0.3.4

websockets 11.0.3

wheel 0.43.0

yarl 1.9.4

zipp 3.18.2
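To compare another environment against a list like the one above, the `pip list` text can be parsed and checked against what is actually installed. This is a sketch using only the standard library; `parse_pip_list` and `diff_against_installed` are hypothetical helper names, and the sample string is a three-line excerpt rather than the full list.

```python
from importlib import metadata


def parse_pip_list(text: str) -> dict:
    """Parse `pip list` output into {name: version}, skipping header rows."""
    pins = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) != 2 or fields[0] == "Package" or set(fields[0]) == {"-"}:
            continue
        pins[fields[0]] = fields[1]
    return pins


def diff_against_installed(pins: dict) -> dict:
    """Return {name: (wanted, installed_or_None)} for every mismatch."""
    mismatches = {}
    for name, wanted in pins.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None  # package is not installed at all
        if installed != wanted:
            mismatches[name] = (wanted, installed)
    return mismatches


sample = """Package Version
------------------------- -----------
torch 2.1.2
transformers 4.40.0
typer 0.9.0
"""
pins = parse_pip_list(sample)
print(diff_against_installed(pins))
```

An empty dictionary means every listed package is installed at the listed version.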

(minicpmv2.5) root@autodl-container-ff4a4e81ec-d3875dd0:~# conda list

# packages in environment at /root/miniconda3/envs/minicpmv2.5:

#

# Name Version Build Channel

_libgcc_mutex 0.1 main https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

_openmp_mutex 5.1 1_gnu https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

accelerate 0.30.1 pypi_0 pypi

addict 2.4.0 pypi_0 pypi

aiofiles 23.2.1 pypi_0 pypi

aiohttp 3.9.5 pypi_0 pypi

aiosignal 1.3.1 pypi_0 pypi

altair 5.3.0 pypi_0 pypi

annotated-types 0.6.0 pypi_0 pypi

anyio 3.7.1 pypi_0 pypi

async-timeout 4.0.3 pypi_0 pypi

attrs 23.2.0 pypi_0 pypi

bitsandbytes 0.43.1 pypi_0 pypi

blis 0.7.11 pypi_0 pypi

ca-certificates 2024.3.11 h06a4308_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

catalogue 2.0.10 pypi_0 pypi

certifi 2024.2.2 pypi_0 pypi

charset-normalizer 3.3.2 pypi_0 pypi

click 8.1.7 pypi_0 pypi

cloudpathlib 0.16.0 pypi_0 pypi

colorama 0.4.6 pypi_0 pypi

confection 0.1.4 pypi_0 pypi

contourpy 1.1.1 pypi_0 pypi

cycler 0.12.1 pypi_0 pypi

cymem 2.0.8 pypi_0 pypi

dnspython 2.6.1 pypi_0 pypi

editdistance 0.6.2 pypi_0 pypi

einops 0.7.0 pypi_0 pypi

email-validator 2.1.1 pypi_0 pypi

et-xmlfile 1.1.0 pypi_0 pypi

exceptiongroup 1.2.1 pypi_0 pypi

fairscale 0.4.0 pypi_0 pypi

fastapi 0.70.0 pypi_0 pypi

fastapi-cli 0.0.1 pypi_0 pypi

ffmpy 0.3.2 pypi_0 pypi

filelock 3.14.0 pypi_0 pypi

fonttools 4.51.0 pypi_0 pypi

frozenlist 1.4.1 pypi_0 pypi

fsspec 2024.5.0 pypi_0 pypi

gradio 3.40.0 pypi_0 pypi

gradio-client 0.16.3 pypi_0 pypi

h11 0.14.0 pypi_0 pypi

httpcore 1.0.5 pypi_0 pypi

httptools 0.6.1 pypi_0 pypi

httpx 0.27.0 pypi_0 pypi

huggingface-hub 0.23.0 pypi_0 pypi

idna 3.7 pypi_0 pypi

importlib-metadata 1.7.0 pypi_0 pypi

importlib-resources 6.4.0 pypi_0 pypi

jinja2 3.1.4 pypi_0 pypi

joblib 1.4.2 pypi_0 pypi

jsonlines 4.0.0 pypi_0 pypi

jsonschema 4.22.0 pypi_0 pypi

jsonschema-specifications 2023.12.1 pypi_0 pypi

kiwisolver 1.4.5 pypi_0 pypi

langcodes 3.4.0 pypi_0 pypi

language-data 1.2.0 pypi_0 pypi

ld_impl_linux-64 2.38 h1181459_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

libffi 3.4.4 h6a678d5_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

libgcc-ng 11.2.0 h1234567_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

libgomp 11.2.0 h1234567_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

libstdcxx-ng 11.2.0 h1234567_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

linkify-it-py 2.0.3 pypi_0 pypi

lxml 5.2.2 pypi_0 pypi

marisa-trie 1.1.1 pypi_0 pypi

markdown-it-py 2.2.0 pypi_0 pypi

markdown2 2.4.10 pypi_0 pypi

markupsafe 2.1.5 pypi_0 pypi

matplotlib 3.7.4 pypi_0 pypi

mdit-py-plugins 0.3.3 pypi_0 pypi

mdurl 0.1.2 pypi_0 pypi

more-itertools 10.1.0 pypi_0 pypi

mpmath 1.3.0 pypi_0 pypi

multidict 6.0.5 pypi_0 pypi

murmurhash 1.0.10 pypi_0 pypi

ncurses 6.4 h6a678d5_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

networkx 3.1 pypi_0 pypi

nltk 3.8.1 pypi_0 pypi

numpy 1.24.4 pypi_0 pypi

nvidia-cublas-cu12 12.1.3.1 pypi_0 pypi

nvidia-cuda-cupti-cu12 12.1.105 pypi_0 pypi

nvidia-cuda-nvrtc-cu12 12.1.105 pypi_0 pypi

nvidia-cuda-runtime-cu12 12.1.105 pypi_0 pypi

nvidia-cudnn-cu12 8.9.2.26 pypi_0 pypi

nvidia-cufft-cu12 11.0.2.54 pypi_0 pypi

nvidia-curand-cu12 10.3.2.106 pypi_0 pypi

nvidia-cusolver-cu12 11.4.5.107 pypi_0 pypi

nvidia-cusparse-cu12 12.1.0.106 pypi_0 pypi

nvidia-nccl-cu12 2.18.1 pypi_0 pypi

nvidia-nvjitlink-cu12 12.4.127 pypi_0 pypi

nvidia-nvtx-cu12 12.1.105 pypi_0 pypi

opencv-python-headless 4.5.5.64 pypi_0 pypi

openpyxl 3.1.2 pypi_0 pypi

openssl 3.0.13 h7f8727e_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

orjson 3.10.3 pypi_0 pypi

packaging 23.2 pypi_0 pypi

pandas 2.0.3 pypi_0 pypi

pillow 10.1.0 pypi_0 pypi

pip 24.0 py38h06a4308_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

pkgutil-resolve-name 1.3.10 pypi_0 pypi

portalocker 2.8.2 pypi_0 pypi

preshed 3.0.9 pypi_0 pypi

protobuf 4.25.0 pypi_0 pypi

psutil 5.9.8 pypi_0 pypi

pydantic 1.10.15 pypi_0 pypi

pydantic-core 2.18.2 pypi_0 pypi

pydub 0.25.1 pypi_0 pypi

pygments 2.18.0 pypi_0 pypi

pyparsing 3.1.2 pypi_0 pypi

python 3.8.19 h955ad1f_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

python-dateutil 2.9.0.post0 pypi_0 pypi

python-dotenv 1.0.1 pypi_0 pypi

python-multipart 0.0.9 pypi_0 pypi

pytz 2024.1 pypi_0 pypi

pyyaml 6.0.1 pypi_0 pypi

readline 8.2 h5eee18b_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

referencing 0.35.1 pypi_0 pypi

regex 2024.5.15 pypi_0 pypi

requests 2.31.0 pypi_0 pypi

rich 13.7.1 pypi_0 pypi

rpds-py 0.18.1 pypi_0 pypi

sacrebleu 2.3.2 pypi_0 pypi

safetensors 0.4.3 pypi_0 pypi

seaborn 0.13.0 pypi_0 pypi

semantic-version 2.10.0 pypi_0 pypi

sentencepiece 0.1.99 pypi_0 pypi

setuptools 69.5.1 py38h06a4308_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

shellingham 1.5.4 pypi_0 pypi

shortuuid 1.0.11 pypi_0 pypi

six 1.16.0 pypi_0 pypi

smart-open 6.4.0 pypi_0 pypi

sniffio 1.3.1 pypi_0 pypi

spacy 3.7.2 pypi_0 pypi

spacy-legacy 3.0.12 pypi_0 pypi

spacy-loggers 1.0.5 pypi_0 pypi

sqlite 3.45.3 h5eee18b_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

srsly 2.4.8 pypi_0 pypi

starlette 0.16.0 pypi_0 pypi

sympy 1.12 pypi_0 pypi

tabulate 0.9.0 pypi_0 pypi

thinc 8.2.3 pypi_0 pypi

timm 0.9.10 pypi_0 pypi

tk 8.6.14 h39e8969_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

tokenizers 0.19.1 pypi_0 pypi

toolz 0.12.1 pypi_0 pypi

torch 2.1.2 pypi_0 pypi

torchvision 0.16.2 pypi_0 pypi

tqdm 4.66.1 pypi_0 pypi

transformers 4.40.0 pypi_0 pypi

triton 2.1.0 pypi_0 pypi

typer 0.9.0 pypi_0 pypi

typing-extensions 4.8.0 pypi_0 pypi

tzdata 2024.1 pypi_0 pypi

uc-micro-py 1.0.3 pypi_0 pypi

ujson 5.10.0 pypi_0 pypi

urllib3 2.2.1 pypi_0 pypi

uvicorn 0.29.0 pypi_0 pypi

uvloop 0.19.0 pypi_0 pypi

wasabi 1.1.2 pypi_0 pypi

watchfiles 0.21.0 pypi_0 pypi

weasel 0.3.4 pypi_0 pypi

websockets 11.0.3 pypi_0 pypi

wheel 0.43.0 py38h06a4308_0 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

xz 5.4.6 h5eee18b_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

yarl 1.9.4 pypi_0 pypi

zipp 3.18.2 pypi_0 pypi

zlib 1.2.13 h5eee18b_1 https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

pip install typer==0.9.0 -i https://pypi.mirrors.ustc.edu.cn/simple

pip install fastapi-cli==0.0.1 -i https://pypi.mirrors.ustc.edu.cn/simple

pip install fastapi==0.70.0 -i https://pypi.mirrors.ustc.edu.cn/simple

Download the model files

git clone https://www.modelscope.cn/OpenBMB/MiniCPM-Llama3-V-2_5-int4.git

Use the following code as a reference for inference

# Run from the MiniCPM-V repository root so that chat.py can be imported
import json

import torch
from chat import OmniLMMChat, img2base64

torch.manual_seed(0)

chat_model = OmniLMMChat('openbmb/MiniCPM-Llama3-V-2_5')

im_64 = img2base64('./assets/airplane.jpeg')

# First round chat
msgs = [{"role": "user", "content": "Tell me the model of this aircraft."}]
inputs = {"image": im_64, "question": json.dumps(msgs)}
answer = chat_model.chat(inputs)
print(answer)

# Second round chat
# pass history context of multi-turn conversation
msgs.append({"role": "assistant", "content": answer})
msgs.append({"role": "user", "content": "Introduce something about Airbus A380."})
inputs = {"image": im_64, "question": json.dumps(msgs)}
answer = chat_model.chat(inputs)
print(answer)

python web_demo_2.5.py

Error

OSError: We couldn't connect to 'https://huggingface.co' to load this file, couldn't find it in the cached files and it looks like MiniCPM-Llama3-V-2_5 is not the path to a directory containing a file named config.json.

Checkout your internet connection or see how to run the library in offline mode at 'https://huggingface.co/docs/transformers/installation#offline-mode'.

Fix:
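The post does not record the exact fix. One plausible reading of the message, assuming the model was cloned from ModelScope into a local folder, is that the script was given a name that is neither reachable on Hugging Face nor a local directory containing config.json. The sketch below checks a local directory before it is handed to `from_pretrained`, which accepts a local path; `resolve_local_model_dir` is a hypothetical helper and the path in the comment is only an example.

```python
from pathlib import Path


def resolve_local_model_dir(model_dir: str) -> str:
    """Return an absolute path if model_dir holds a config.json, else raise."""
    path = Path(model_dir).expanduser().resolve()
    if not (path / "config.json").is_file():
        raise FileNotFoundError(f"no config.json under {path}")
    return str(path)


# Hypothetical location: wherever the ModelScope repository was cloned.
# model_path = resolve_local_model_dir("/root/autodl-tmp/MiniCPM-Llama3-V-2_5-int4")
# model = AutoModel.from_pretrained(model_path, trust_remote_code=True)
```

If the check raises, the clone is probably incomplete or the path points at the wrong folder.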

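The steps below are only needed for NAT traversal (tunneling). Skip them if you are deploying only on your own machine or exposing the service to the public internet some other way.

2.1 Install cpolar

Open a terminal on Ubuntu and run the commands below.

First, install curl:

sudo apt-get install curl

- Install from within mainland China (a one-line automatic install script is supported)

curl -L https://www.cpolar.com/static/downloads/install-release-cpolar.sh | sudo bash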

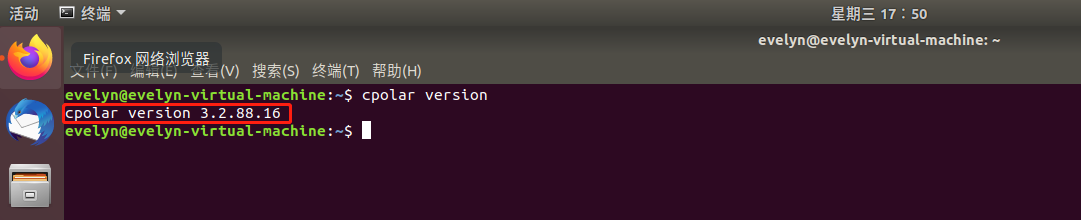

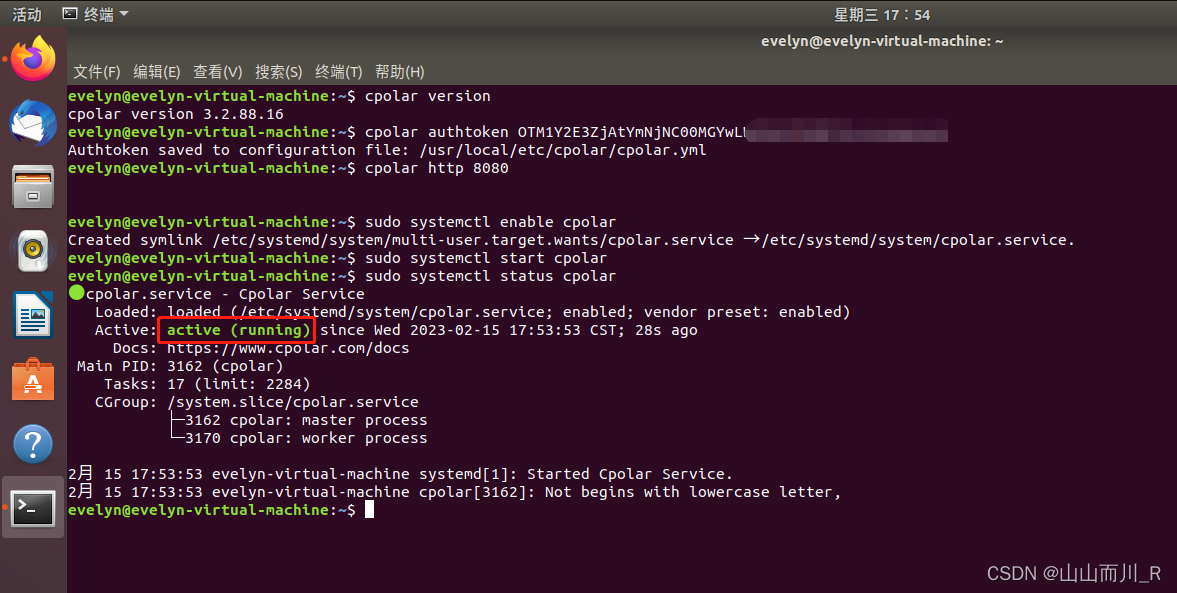

2.2 If the version number is displayed, the installation succeeded

cpolar version

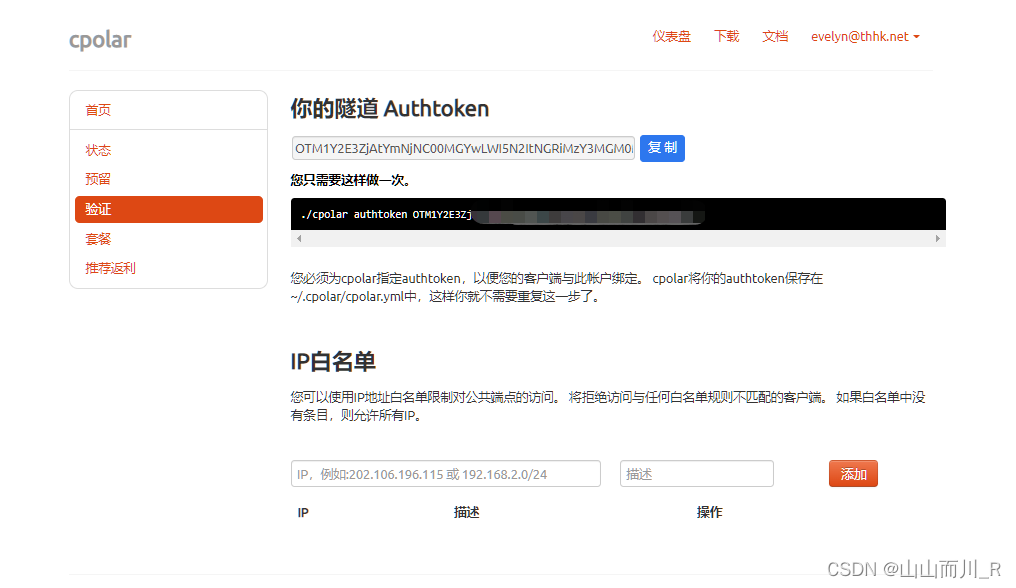

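2.3 Token authentication

Log in to the cpolar website dashboard, click "Verify" (验证) in the left sidebar to find your auth token, then paste the token into the command below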

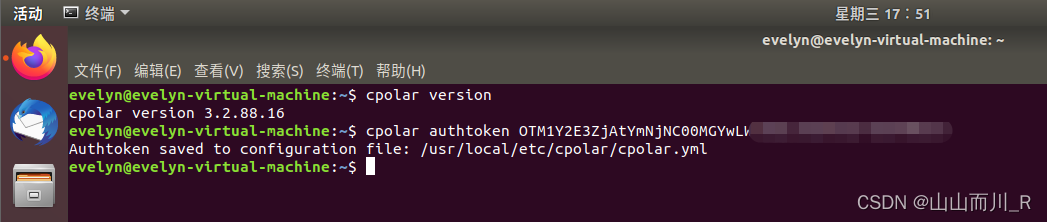

cpolar authtoken xxxxxxx

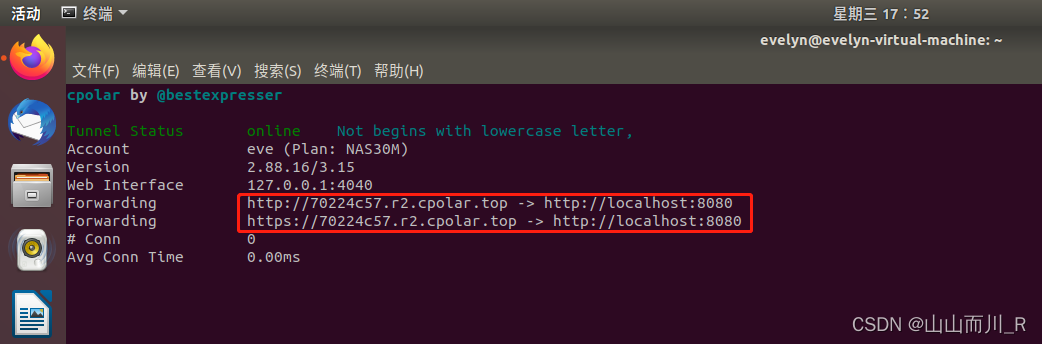

2.4 Run a quick tunnel test

cpolar http 8080
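The tunnel test only shows something if a service is listening on the local port. As a minimal sketch, a throwaway HTTP server from the Python standard library can stand in for the real demo; `HelloHandler` and `start_test_server` are hypothetical names, and the self-check below uses port 0 (any free port) instead of 8080 so it does not collide with a running service.

```python
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class HelloHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        body = b"tunnel test ok\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the console quiet


def start_test_server(port: int = 8080) -> HTTPServer:
    """Serve HelloHandler on 127.0.0.1:port in a background thread."""
    server = HTTPServer(("127.0.0.1", port), HelloHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


# Self-check: port 0 asks the OS for any free port.
server = start_test_server(port=0)
url = f"http://127.0.0.1:{server.server_port}/"
opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))  # bypass proxies
with opener.open(url, timeout=5) as resp:
    reply = resp.read().decode()
server.shutdown()
server.server_close()
print(reply, end="")
```

For an actual tunnel test, call `start_test_server(8080)`, keep the process alive, and run `cpolar http 8080` in another terminal.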

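2.5 Configure cpolar as a background service that starts on boot

sudo systemctl enable cpolar

2.6 Start the service

sudo systemctl start cpolar

2.7 Check the service status

sudo systemctl status cpolar

If the status shows active, the service is online and running normally.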
