朴素贝叶斯算法—预测新闻数据

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.naive_bayes import MultinomialNB

from sklearn.model_selection import train_test_split

def pusubeiyesi():

news=fetch_20newsgroups(subset="all") #获取数据

#进行数据分割

x_train,x_test,y_train,y_test=train_test_split(news.data,news.target,test_size=0.25)

# print("训练集特征值:",x_train)

#进行特征抽取

tf=TfidfVectorizer()

#以训练集当中的词的列表进行每篇文章的重要性统计

x_train=tf.fit_transform(x_train)

# print(tf.get_feature_names_out())

x_test=tf.transform(x_test)

#进行朴素贝叶斯算法的预测

mlt=MultinomialNB(alpha=1.0)

print(x_train.toarray())

mlt.fit(x_train,y_train)

y_predict=mlt.predict(x_test)

print("预测文章类别为:",y_predict)

#得出准确率

print("准确率为:",mlt.score(x_test,y_test))

return None

pusubeiyesi()

运行结果:

[[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]

...

[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]]

预测文章类别为: [12 3 11 ... 9 15 17]

准确率为: 0.8491086587436333

Process finished with exit code 0

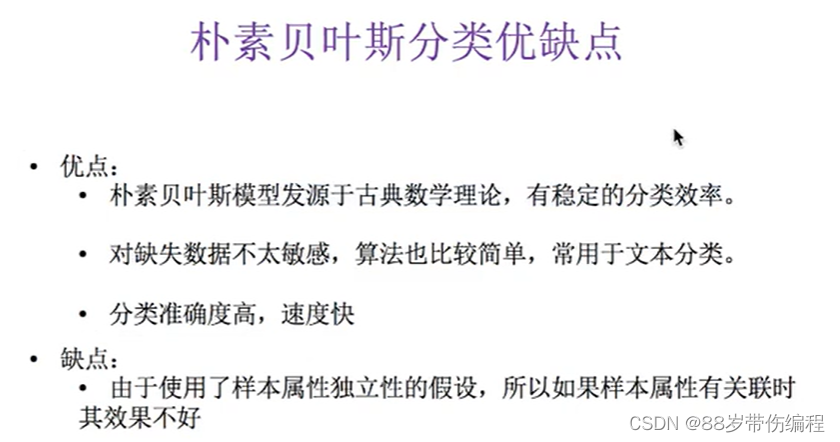

朴素贝叶斯算法优缺点

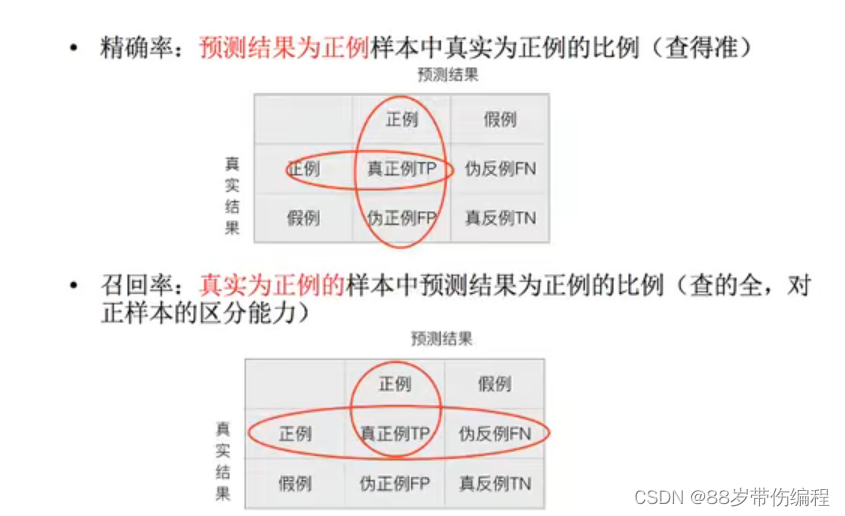

精确率与召回率

API:

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.naive_bayes import MultinomialNB

from sklearn.model_selection import train_test_split

from sklearn.metrics import classification_report

def pusubeiyesi():

news=fetch_20newsgroups(subset="all") #获取数据

#进行数据分割

x_train,x_test,y_train,y_test=train_test_split(news.data,news.target,test_size=0.25)

# print("训练集特征值:",x_train)

#进行特征抽取

tf=TfidfVectorizer()

#以训练集当中的词的列表进行每篇文章的重要性统计

x_train=tf.fit_transform(x_train)

# print(tf.get_feature_names_out())

x_test=tf.transform(x_test)

#进行朴素贝叶斯算法的预测

mlt=MultinomialNB(alpha=1.0)

print(x_train.toarray())

mlt.fit(x_train,y_train)

y_predict=mlt.predict(x_test)

print("预测文章类别为:",y_predict)

#得出准确率

print("准确率为:",mlt.score(x_test,y_test))

print("每个类别的精确率和召回率:", classification_report(y_test, y_predict, target_names=news.target_names))

return None

pusubeiyesi()

运行结果(添加后):

每个类别的精确率和召回率: precision recall f1-score support

alt.atheism 0.85 0.72 0.78 209

comp.graphics 0.88 0.76 0.82 234

comp.os.ms-windows.misc 0.81 0.85 0.83 233

comp.sys.ibm.pc.hardware 0.76 0.84 0.80 257

comp.sys.mac.hardware 0.93 0.84 0.88 254

comp.windows.x 0.94 0.87 0.90 245

misc.forsale 0.95 0.71 0.82 266

rec.autos 0.95 0.89 0.92 266

rec.motorcycles 0.91 0.96 0.93 245

rec.sport.baseball 0.90 0.94 0.92 232

rec.sport.hockey 0.94 0.98 0.96 239

sci.crypt 0.73 0.98 0.84 249

sci.electronics 0.92 0.80 0.86 244

sci.med 0.91 0.93 0.92 209

sci.space 0.91 0.92 0.92 254

soc.religion.christian 0.54 0.98 0.70 249

talk.politics.guns 0.80 0.95 0.87 236

talk.politics.mideast 0.91 0.98 0.94 231

talk.politics.misc 0.99 0.62 0.77 200

talk.religion.misc 1.00 0.17 0.29 160

accuracy 0.85 4712

macro avg 0.88 0.84 0.83 4712

weighted avg 0.87 0.85 0.84 4712

Process finished with exit code 0

依次是精确率,召回率

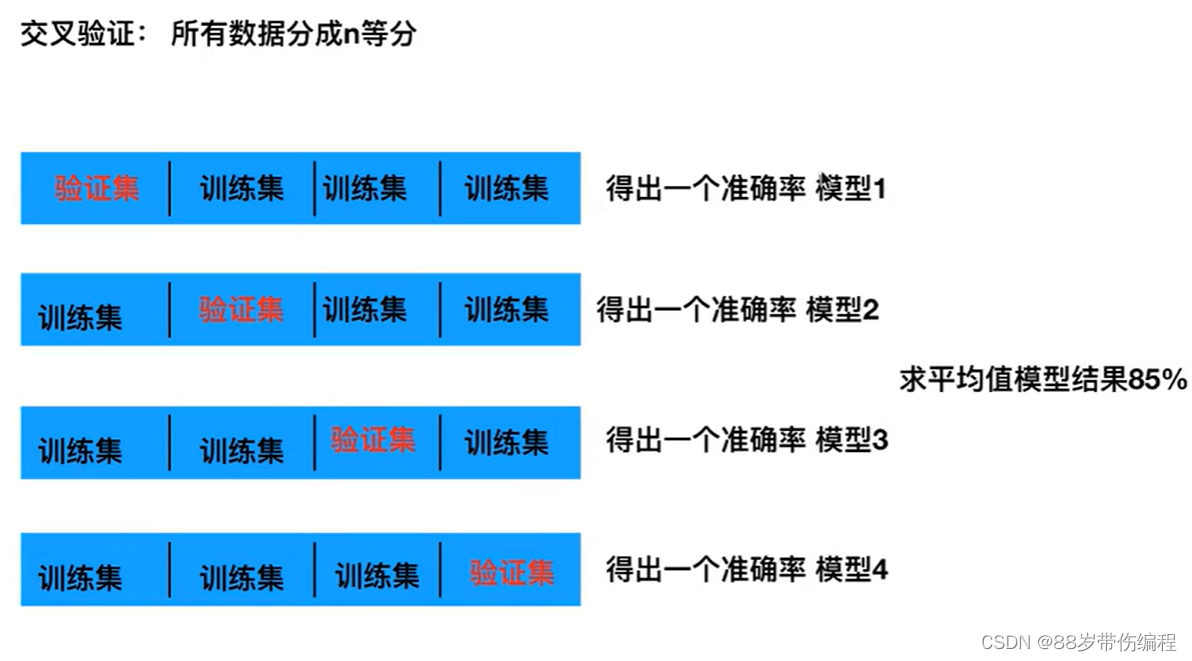

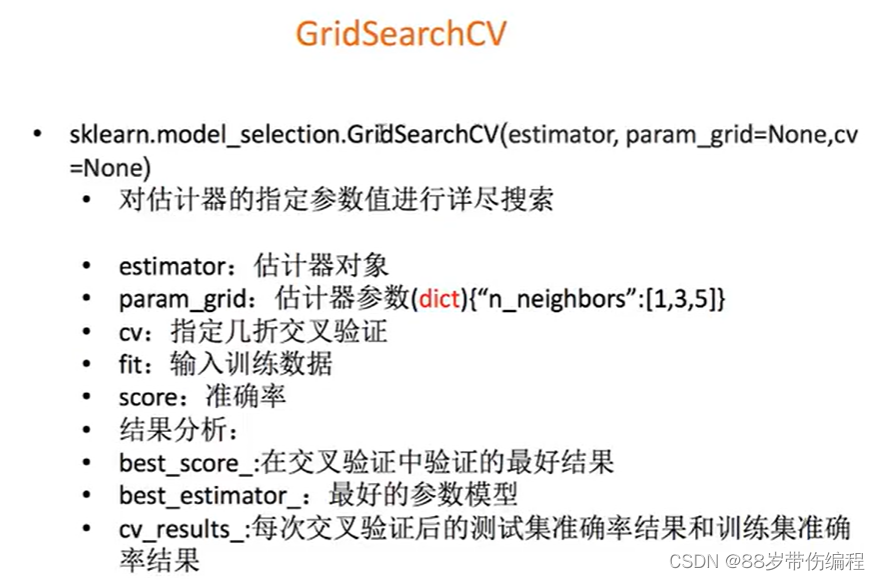

模型的选择与调优

交叉验证:

所有数据分成n等分

网格搜索(调参数):

from sklearn.model_selection import GridSearchCV

Knn=KNeighborsClassifier()

gc=GridSearchCV(Knn,param_grid={"n_neighbors":[1,3,5]},cv=2)

gc.fit(x_train,y_train)

#预测准确率

print("在测试集的准确率:",gc.score(x_test))

#把x_train分为验证集和训练集

print("在交叉验证中最好的结果:",gc.best_score_)

print("在交叉验证中最好的参数模型:",gc.best_estimator_)

print("每次交叉验证后的验证集准确率和训练集准确率",gc.cv_results_)

2万+

2万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?