Official documentation:

Segment - Ultralytics YOLOv8 Docs: https://docs.ultralytics.com/zh/tasks/segment/seg-predict

Example from the official documentation:

from ultralytics import YOLO

# Load a model
model = YOLO('yolov8n-seg.pt')  # load an official model
model = YOLO('path/to/best.pt')  # load a custom model

# Predict with the model
results = model('https://ultralytics.com/images/bus.jpg')  # predict on an image

results is a list. Each element contains boxes (class, bounding box, confidence, and other basic information), masks (the segmentation masks), keypoints (used for pose estimation; None for segmentation tasks), and probs (used for classification; None for segmentation tasks).

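From boxes, read each object's cls (class index). The value has to be extracted from the tensor and then mapped to a label by hand according to the yaml file (this step could be improved). Then, from masks, read the matching object's xy (the contour point coordinates) and compute its area with cv2.contourArea().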
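To make the area step concrete, here is a minimal pure-Python sketch of the shoelace formula, which gives the area enclosed by a polygon from its vertex coordinates. For a simple (non-self-intersecting) contour this is expected to agree with the value cv2.contourArea() returns, but that agreement is an assumption here and is not checked against OpenCV. The function name polygon_area and the sample points are illustrative only.

```python
def polygon_area(points):
    """Area of a closed polygon given as [(x, y), ...], via the shoelace formula."""
    n = len(points)
    if n < 3:
        return 0.0  # fewer than three vertices enclose no area
    twice_area = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the polygon
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


square = [(0, 0), (10, 0), (10, 10), (0, 10)]
print(polygon_area(square))  # 100.0
```

The result is in squared pixels when the points are pixel coordinates, as they are for the xy contours described above.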

Example script:

from ultralytics import YOLO
import cv2

# Load a model
model = YOLO("path/to/model.pt")  # replace with the path to your segmentation model

# Define path to the image file
source = "path/to/image.jpg"  # replace with the path to your image

# Predict with the model
results = model(source=source, save=False)  # predict on an image

for result in results:
    boxes = result.boxes  # Boxes object for bounding-box outputs
    masks = result.masks  # Masks object for segmentation-mask outputs
    if masks is None:  # nothing was detected in this image
        continue
    for box, mask in zip(boxes, masks):
        for cls, contour in zip(box.cls, mask.xy):
            class_id = cls.item()  # get the value out of the tensor
            if class_id == 0.0:  # change these to match your own yaml file
                print("embryo")
            elif class_id == 1.0:
                print("cell")
            print(cv2.contourArea(contour))  # compute the contour area

The .yaml file that goes with this model:

path: ../datasets/WSY-seg  # dataset root dir
train: images/train  # train images (relative to 'path') 128 images
val: images/train  # val images (relative to 'path') 128 images
test:  # test images (optional)

# Classes
names:
  0: embryo
  1: cell
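The if/elif chain in the script above can be replaced by a lookup table built from the names section of this yaml file. The sketch below hard-codes that mapping in a dictionary; the names CLASS_NAMES and label_for are hypothetical and chosen only for illustration. Ultralytics result objects also carry a names mapping, which may make the hand-written dictionary unnecessary, but that route is not shown here.

```python
# Class-index-to-label mapping copied by hand from the `names` section of the yaml file.
CLASS_NAMES = {0: "embryo", 1: "cell"}


def label_for(class_id):
    """Map a class index (possibly a float such as 1.0 from cls.item()) to its label."""
    return CLASS_NAMES.get(int(class_id), "unknown")


print(label_for(0.0))  # embryo
print(label_for(1.0))  # cell
```

Adding a class then only requires one new dictionary entry instead of another elif branch.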

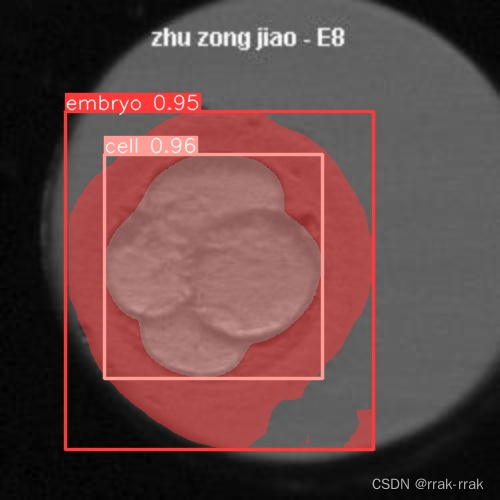

Output:

image 1/1 path/to/image.jpg: 640x640 1 embryo, 1 cell, 27.9ms

Speed: 4.7ms preprocess, 27.9ms inference, 8.0ms postprocess per image at shape (1, 3, 640, 640)

cell

34007.87353515625

embryo

76430.6640625

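Note that the embryo region here includes the cell: an annotation cannot be hollow, so the embryo mask cannot be labeled with a hole where the cell is.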
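Because the embryo mask also covers the cell, the area of the embryo outside the cell has to be obtained by subtraction. A small worked example using the two areas printed above, under the assumption that the cell contour lies entirely inside the embryo contour (the function name area_outside_cell is hypothetical):

```python
embryo_area = 76430.6640625    # contour area printed for "embryo"
cell_area = 34007.87353515625  # contour area printed for "cell"


def area_outside_cell(embryo_area, cell_area):
    """Embryo area excluding the cell; only valid if the cell is fully inside the embryo."""
    return embryo_area - cell_area


print(area_outside_cell(embryo_area, cell_area))
```

For this image the difference is roughly 42422.79 squared pixels.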

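Batch-processing images:

This example uses a different model, which segments the cell and segment classes in each image.

Here source is a folder path. The code builds a DataFrame (df) holding each image's file name and the corresponding areas, which can then be exported to Excel or CSV.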

import pandas as pd
from ultralytics import YOLO
import cv2


def get_last_part_of_string(string):
    """Return the part of a Windows path after the last backslash (the file name)."""
    last_backslash_index = string.rfind('\\')
    if last_backslash_index != -1:
        return string[last_backslash_index + 1:]
    else:
        return string


# Load a model
model = YOLO(r"E:\202306\segment\train_happy\weights\best.pt")

# Define path to the image folder
source = r"E:\202306\人工评价+问题(1)\人工评价+问题\embryo_dataset_F25数据集\JE577_EMB7_DISCARD"

# Predict with the model
results = model(source=source, save=False, conf=0.7)

data = []  # Initialize an empty list to store the data

for result in results:
    record = {}
    boxes = result.boxes
    masks = result.masks
    path = get_last_part_of_string(result.path)
    record["Path"] = path
    cell_areas = []       # mask (contour) area of each cell
    cell_areas_box = []   # bounding-box area of each cell (collected, not stored in the record)
    segment_areas = []    # mask area of each segment (collected, not stored in the record)
    if masks is not None:  # masks is None when nothing is detected in the image
        for box, mask in zip(boxes, masks):
            for cls, boxxyxy, maskContour in zip(box.cls, box.xyxy, mask.xy):
                class_id = cls.item()
                x1, y1, x2, y2 = [item.item() for item in boxxyxy]
                if class_id == 1.0:  # cell
                    boxArea = (x2 - x1) * (y2 - y1)
                    cell_areas_box.append(boxArea)
                    cell_areas.append(cv2.contourArea(maskContour))
                elif class_id == 2.0:  # segment
                    segmentArea = cv2.contourArea(maskContour)
                    segment_areas.append(segmentArea)
    record["len_cell_areas"] = len(cell_areas)
    record["cell_areas"] = cell_areas
    data.append(record)

# Create DataFrame
df = pd.DataFrame(data)
print(df)
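The helper get_last_part_of_string splits only on backslashes, so a path written with forward slashes comes back unchanged. As an alternative sketch, the standard-library pathlib.PureWindowsPath accepts both separators and works on any operating system. The function name file_name_of and the sample paths are hypothetical.

```python
from pathlib import PureWindowsPath


def file_name_of(path_string):
    """Return the final path component, treating both backslash and slash as separators."""
    return PureWindowsPath(path_string).name


print(file_name_of(r"E:\data\images\img01.png"))  # img01.png
print(file_name_of("data/images/img01.png"))      # img01.png
```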

Example output:

Path len_cell_areas cell_areas

0 图片01.png 1 [5982.572664737556]

1 图片02.png 1 [5917.78414849074]

2 图片03.png 1 [5838.779726962515]

3 图片04.png 1 [5920.048454688906]

4 图片05.png 1 [5870.545019034616]

5 图片06.png 1 [6221.850625191393]

6 图片07.png 1 [6003.519054066506]

7 图片08.png 2 [4716.300056756678, 6220.655430036932]

8 图片09.png 2 [2925.302606760233, 3053.55887752166]

9 图片10.png 2 [3267.8019318568986, 2970.969230435032]

10 图片11.png 2 [11576.5380859375, 12864.990234375]

11 图片12.png 2 [2546.697752431646, 2434.669943689063]

12 图片13.png 2 [3835.7404975981335, 2332.3920037686767]

13 图片14.png 1 [6029.749145191818]

14 图片15.png 3 [10395.81298828125, 9761.3525390625, 6403.8085...

15 图片16.png 3 [1893.9676402151235, 2125.634363901001, 996.73...

16 图片17.png 3 [1779.0463765645109, 2163.7527813625347, 940.5...

17 图片18.png 2 [2098.2091324186476, 2094.938419651953]

18 图片19.png 4 [1714.886724891694, 1636.82584444643, 762.3676...

19 图片20.png 4 [895.5303360021499, 907.3558746517228, 878.043...

20 图片21.png 5 [797.3409886407608, 1180.4745866096928, 742.86...
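Since cell_areas holds a list per image, exporting df directly would write each list as one text cell. One option, sketched below with only the standard library, is to flatten the records into one row per cell before writing CSV. The sample records are made up for illustration, and flatten_records and the column names image and cell_index are assumptions, not part of the original script. With pandas, DataFrame.to_csv could be used for the final write instead.

```python
import csv
import io


def flatten_records(records):
    """Turn one record per image into one row per detected cell."""
    rows = []
    for record in records:
        for index, area in enumerate(record["cell_areas"]):
            rows.append({"image": record["Path"], "cell_index": index, "area": area})
    return rows


# Made-up sample in the same shape as the `data` list built by the script.
records = [
    {"Path": "img01.png", "len_cell_areas": 1, "cell_areas": [100.0]},
    {"Path": "img02.png", "len_cell_areas": 2, "cell_areas": [250.5, 300.0]},
]

rows = flatten_records(records)
buffer = io.StringIO()  # swap for open("areas.csv", "w", newline="", encoding="utf-8")
writer = csv.DictWriter(buffer, fieldnames=["image", "cell_index", "area"])
writer.writeheader()
writer.writerows(rows)
print(buffer.getvalue())
```

One row per cell keeps every area in its own numeric column, which is easier to filter or plot in a spreadsheet than a stringified list.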

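This article shows how to use Ultralytics YOLOv8 for image segmentation: loading a model, running prediction, and processing the results, including reading the classes, bounding boxes, and segmentation masks and computing cell areas. It also gives an example of batch-processing images and storing the results.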
