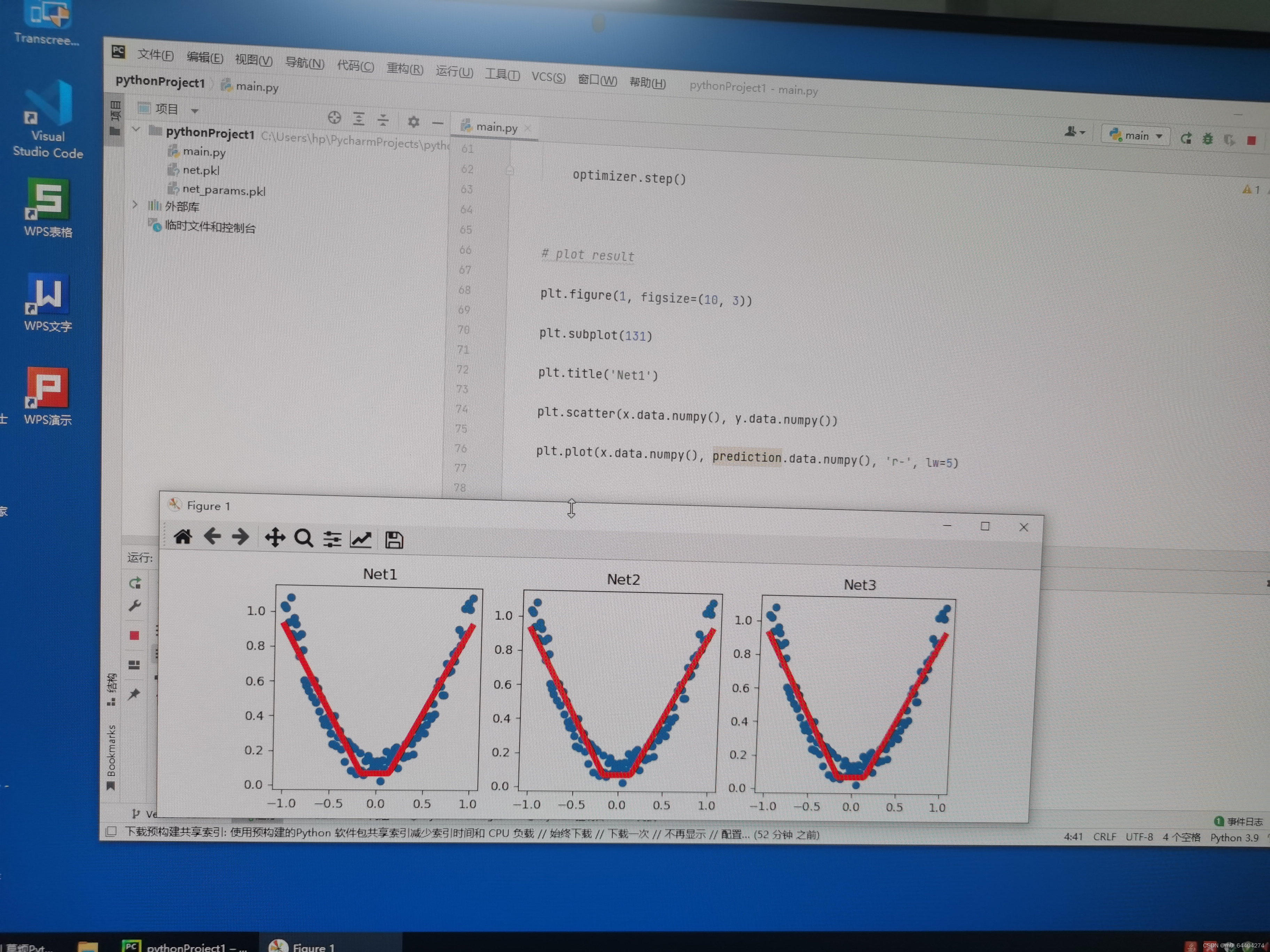

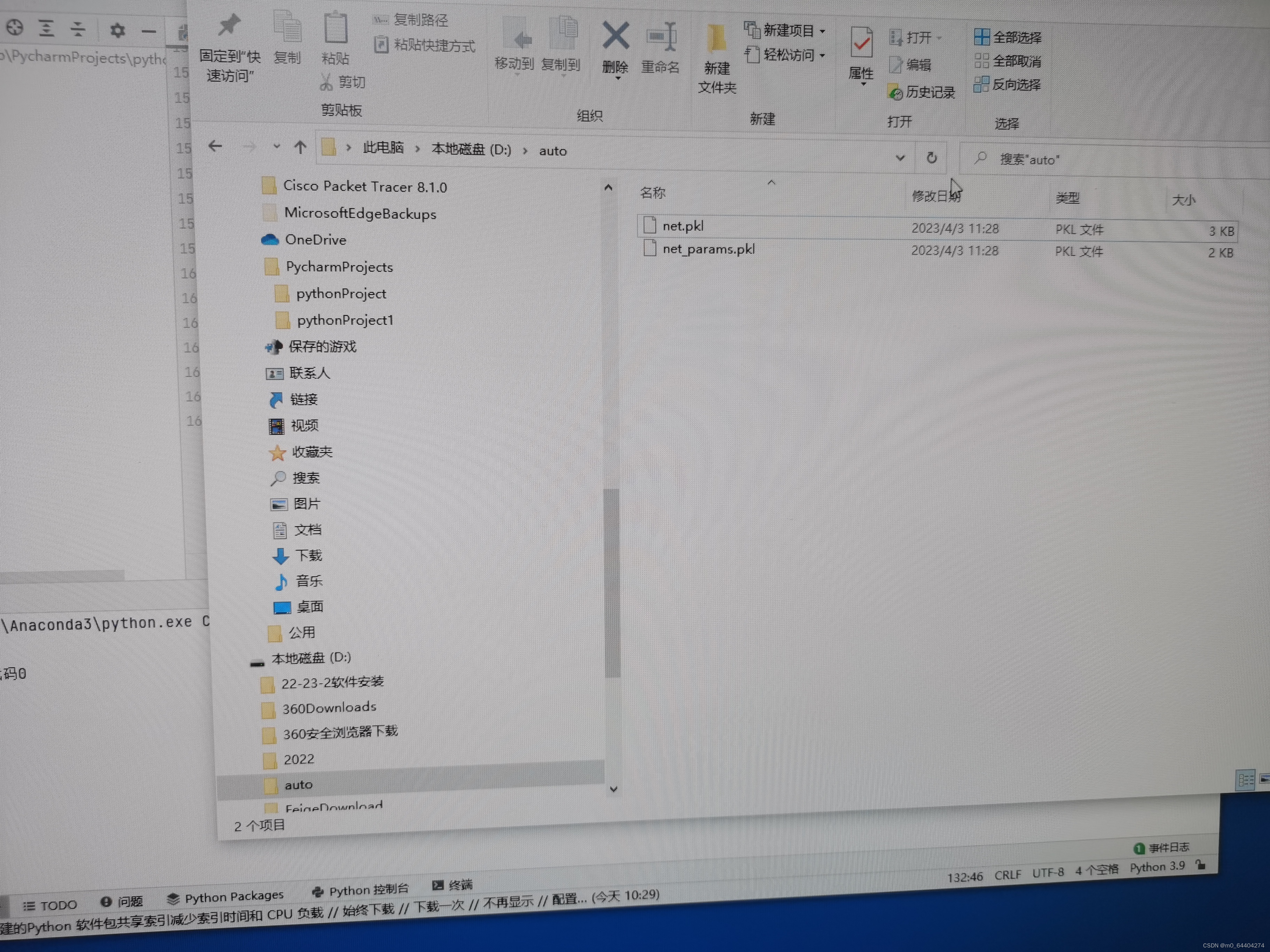

```python
import os
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

import torch
import matplotlib.pyplot as plt

# torch.manual_seed(1)    # reproducible

# fake data
x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1)  # x data (tensor), shape=(100, 1)
y = x.pow(2) + 0.2*torch.rand(x.size())                 # noisy y data (tensor), shape=(100, 1)

# The code below is deprecated in PyTorch 0.4. Now, autograd directly supports tensors
# x, y = Variable(x, requires_grad=False), Variable(y, requires_grad=False)


def save():
    # save net1
    net1 = torch.nn.Sequential(
        torch.nn.Linear(1, 10),
        torch.nn.ReLU(),
        torch.nn.Linear(10, 1)
    )
    optimizer = torch.optim.SGD(net1.parameters(), lr=0.5)
    loss_func = torch.nn.MSELoss()

    for t in range(100):
        prediction = net1(x)
        loss = loss_func(prediction, y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

    # plot result
    plt.figure(1, figsize=(10, 3))
    plt.subplot(131)
    plt.title('Net1')
    plt.scatter(x.data.numpy(), y.data.numpy())
    plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5)

    # 2 ways to save the net
    torch.save(net1, 'D:/auto/net.pkl')                      # save entire net
    torch.save(net1.state_dict(), 'D:/auto/net_params.pkl')  # save only the parameters


def restore_net():
    # restore entire net1 to net2
    # Note: on PyTorch 2.6 and later, torch.load defaults to weights_only=True,
    # so loading a whole pickled module needs torch.load(..., weights_only=False).
    net2 = torch.load('D:/auto/net.pkl')
    prediction = net2(x)

    # plot result
    plt.subplot(132)
    plt.title('Net2')
    plt.scatter(x.data.numpy(), y.data.numpy())
    plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5)


def restore_params():
    # restore only the parameters in net1 to net3
    net3 = torch.nn.Sequential(
        torch.nn.Linear(1, 10),
        torch.nn.ReLU(),
        torch.nn.Linear(10, 1)
    )

    # copy net1's parameters into net3
    net3.load_state_dict(torch.load('D:/auto/net_params.pkl'))
    prediction = net3(x)

    # plot result
    plt.subplot(133)
    plt.title('Net3')
    plt.scatter(x.data.numpy(), y.data.numpy())
    plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5)
    plt.show()


# save net1
save()

# restore entire net (may be slow)
restore_net()

# restore only the net parameters
restore_params()
```
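The script above compares the restored networks only visually, through the three subplots. A numeric check is also possible. The following is a minimal sketch, not part of the original script: the helpers `make_net` and `roundtrip_matches` and the temporary file name are hypothetical, and the network is left untrained because only the save/load round trip is being checked.

```python
import os
import tempfile

import torch


def make_net():
    # Same architecture as net1 in the script above.
    return torch.nn.Sequential(
        torch.nn.Linear(1, 10),
        torch.nn.ReLU(),
        torch.nn.Linear(10, 1),
    )


def roundtrip_matches(net, x):
    """Save net's state_dict to a temporary file, load it into a fresh
    network, and report whether both networks predict the same values for x."""
    with tempfile.TemporaryDirectory() as tmp_dir:
        path = os.path.join(tmp_dir, "net_params.pkl")  # hypothetical file name
        torch.save(net.state_dict(), path)
        restored = make_net()
        restored.load_state_dict(torch.load(path))
    with torch.no_grad():  # no gradients needed for a comparison
        return torch.allclose(net(x), restored(x))


x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1)  # shape=(100, 1)
net1 = make_net()
matches = roundtrip_matches(net1, x)
print(matches)
```

Using a temporary directory keeps the check independent of the `D:/auto/` paths used in the script, so it should run on any machine with PyTorch installed.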
