Keepalived High Availability


1. Introduction:

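Keepalived is an open-source tool that provides failover and high availability across multiple servers. It uses the VRRP protocol to provide a virtual IP address (VIP) and monitors the state of servers and services. When the master server fails, Keepalived automatically moves the VIP to a backup server, so the service stays available.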

2. Pros and cons:

Advantages:

- High availability: Keepalived switches the service over quickly by moving the VIP to a backup server, which keeps service downtime short.

- Simple to use: Keepalived is driven by a single, readable configuration file, so it is easy to set up and manage.

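- Redundancy and load balancing: besides failover, Keepalived can drive the kernel's LVS (IPVS) load balancer through its virtual_server blocks, spreading traffic across a pool of real servers.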

Disadvantages:

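- Failover is not seamless: when the master fails, the backup takes over only after it has missed the master's advertisements for the master-down interval (a few seconds by default), and connections in progress on the old master are lost. If the nodes stop seeing each other's VRRP advertisements while both are still running (for example, during a network partition or when a firewall blocks VRRP), both may claim the VIP at the same time (split-brain).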

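- Limited monitoring: compared with dedicated monitoring tools, Keepalived's checks are basic. It makes failover decisions from simple status checks, such as a TCP connection test or the exit status of a script.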

3. How it works:

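Keepalived uses the VRRP protocol to achieve high availability. It creates a virtual IP address (VIP) shared by a group of servers (at least two). One server is elected master and handles all requests and traffic sent to the VIP. The others act as backups.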

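The master periodically sends VRRP advertisements by multicast (to 224.0.0.18 by default) announcing that it is the current master, and it holds the VIP on its interface. The backups receive these advertisements and wait. If a backup receives no advertisements for longer than the master-down interval, it concludes that the master has failed. The backup with the highest priority then becomes the new master, takes over the VIP, and announces it with gratuitous ARP so that traffic is redirected to it.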
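The following sketch puts a number on "longer than the master-down interval". It computes the VRRPv2 Master_Down_Interval defined in RFC 3768, which is 3 × Advertisement_Interval + Skew_Time, where Skew_Time = (256 - Priority) / 256 seconds. The sketch assumes VRRPv2 timing (keepalived's default VRRP version) and works in whole milliseconds. master_down_ms is a hypothetical helper name, not a keepalived command.

```bash
#!/usr/bin/env bash
# Sketch: VRRPv2 (RFC 3768) Master_Down_Interval in integer milliseconds.
# Usage: master_down_ms ADVERT_INT_SECONDS PRIORITY   (hypothetical helper)
master_down_ms() {
    local advert_int=$1 priority=$2
    # Skew_Time = (256 - priority) / 256 seconds, truncated to whole ms
    local skew_ms=$(( (256 - priority) * 1000 / 256 ))
    # Master_Down_Interval = 3 * Advertisement_Interval + Skew_Time
    echo $(( 3 * advert_int * 1000 + skew_ms ))
}

# Backup node later in this article: advert_int 1, priority 80
master_down_ms 1 80   # prints 3687
```

With advert_int 1 and priority 80, the backup waits roughly 3.7 seconds without advertisements before it takes over.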

4. Workflow:

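- Installation and configuration: install Keepalived on several servers and configure it. The configuration defines the VIP, the role of each server, and any health-check scripts.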

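- VRRP communication: the servers exchange VRRP messages by multicast (or by unicast, if unicast peers are configured). The master sends advertisements announcing that it is the current master.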

- Health checks: Keepalived periodically runs the configured service checks or scripts to make sure the service is working.

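- Failure detection: the backups keep listening for the master's advertisements. Once the advertisements stop arriving, the backups treat the master as failed and the highest-priority backup takes over.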

- VIP transfer: after a backup takes over the VIP, it becomes the new master and serves the traffic.

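- Recovery: when the original master comes back, it resumes sending VRRP advertisements. If preemption is enabled (the default) and its priority is higher, it takes the VIP back and becomes master again.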
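The election and preemption rules above can be sketched as a small Bash function. It models the VRRP rules (highest priority wins, and on a tie the node with the higher primary IP address wins). It is not keepalived's actual code. vrrp_elect and ip_to_int are hypothetical names.

```bash
#!/usr/bin/env bash
# Sketch of VRRP master election. Input lines: "NAME PRIORITY IPV4".
# Highest priority wins; on a tie, the numerically higher IPv4 address wins.

ip_to_int() {
    local IFS=.            # split the dotted quad on "."
    local a b c d
    read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

vrrp_elect() {
    local name prio ip ip_int best_name="" best_prio=-1 best_ip=-1
    while read -r name prio ip; do
        [ -z "$name" ] && continue
        ip_int=$(ip_to_int "$ip")
        if (( prio > best_prio || (prio == best_prio && ip_int > best_ip) )); then
            best_name=$name best_prio=$prio best_ip=$ip_int
        fi
    done
    echo "$best_name"      # name of the node that becomes MASTER
}

# Both nodes alive (priorities from this article's configuration):
printf '%s\n' "haproxy1 100 192.168.195.133" "haproxy2 80 192.168.195.134" | vrrp_elect   # prints haproxy1
```

If you drop haproxy1's line (the master is down), haproxy2 wins. Adding the line back makes haproxy1 win again, which is the preemptive recovery described in the last step. With nopreempt, a real backup that is already MASTER keeps the role instead.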

5. Making the HAProxy load balancers highly available with Keepalived

Environment:

| 服务器类型 | IP地址 | 系统版本 |

|---|---|---|

| haproxy1(master) | 192.168.195.133 | centos 8 |

| haproxy2(slave) | 192.168.195.134 | centos 8 |

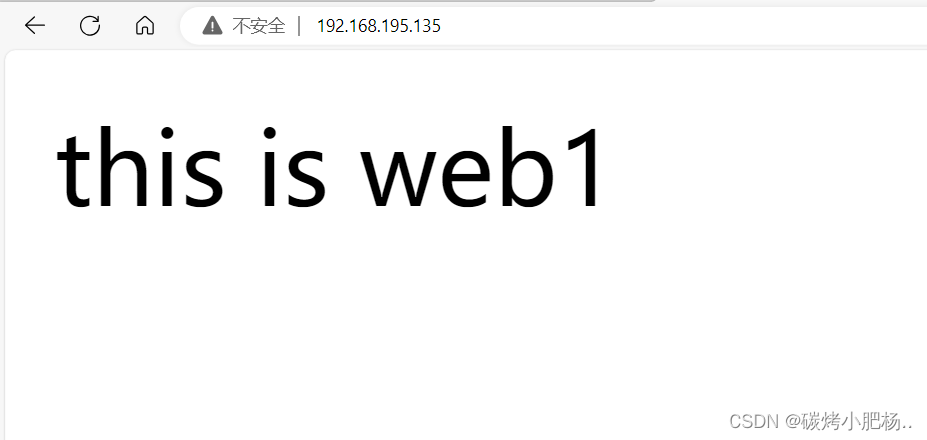

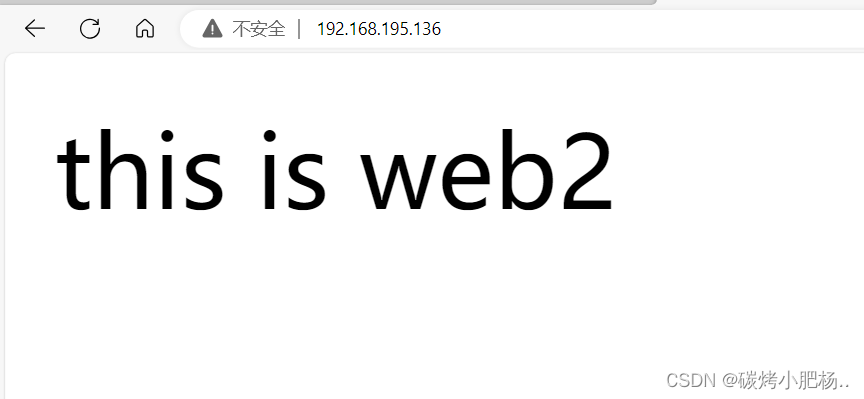

| web1 | 192.168.195.135 | centos 8 |

| web2 | 192.168.195.136 | centos 8 |

Note: the virtual IP (VIP) used for high availability in this setup is 192.168.195.100.

Prerequisite for HAProxy HTTP load balancing: deploy two real-server (RS) hosts.

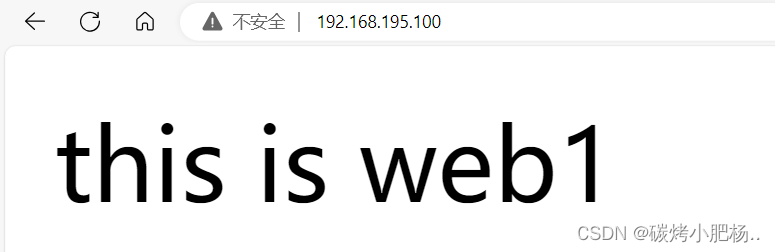

Prepare a test HTTP page on each backend server (hosts web1 and web2).

//Permanently disable the firewall and SELinux

[root@web1 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@web1 ~]# setenforce 0

[root@web1 ~]# vim /etc/selinux/config //set SELINUX=disabled

[root@web1 ~]# reboot //reboot for the change to take effect

[root@web1 ~]# getenforce

Disabled
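You can make the same change without opening /etc/selinux/config in vim. This is a minimal sketch that assumes the stock file, with a single active SELINUX= line. disable_selinux_config is a hypothetical helper name. It only edits the file, so you still need setenforce 0 and a reboot as shown above.

```bash
#!/usr/bin/env bash
# Non-interactive alternative to editing /etc/selinux/config in vim.
# Usage: disable_selinux_config [FILE]   (hypothetical helper; default /etc/selinux/config)
disable_selinux_config() {
    local file=${1:-/etc/selinux/config}
    # Rewrite only the SELINUX= line; SELINUXTYPE= does not match ^SELINUX=
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}
```

Run disable_selinux_config as root on each host. You can also pass a path to a copy of the file to try it out first.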

//Configure the yum repository

[root@web1 ~]# rm -rf /etc/yum.repos.d/*

[root@web1 ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-vault-8.5.2111.repo

//Install httpd with yum, start the service, and create the test page

[root@web1 ~]# yum -y install httpd

(output omitted)

[root@web1 ~]# systemctl enable --now httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

[root@web1 ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 *:80 *:*

LISTEN 0 128 [::]:22 [::]:*

[root@web1 ~]# echo "this is web1" > /var/www/html/index.html

Repeat the same steps on the other web host (web2):

//Permanently disable the firewall and SELinux

[root@web2 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@web2 ~]# setenforce 0

[root@web2 ~]# vim /etc/selinux/config //set SELINUX=disabled

[root@web2 ~]# reboot //reboot for the change to take effect

[root@web2 ~]# getenforce

Disabled

//Configure the yum repository

[root@web2 ~]# rm -rf /etc/yum.repos.d/*

[root@web2 ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-vault-8.5.2111.repo

//Install httpd with yum, start the service, and create the test page

[root@web2 ~]# yum -y install httpd

(output omitted)

[root@web2 ~]# systemctl enable --now httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

[root@web2 ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 *:80 *:*

LISTEN 0 128 [::]:22 [::]:*

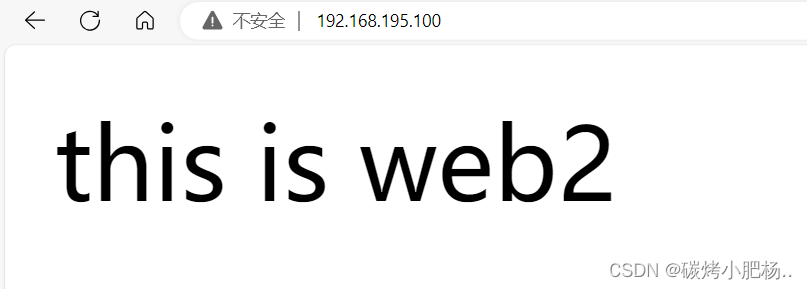

[root@web2 ~]# echo "this is web2" > /var/www/html/index.html

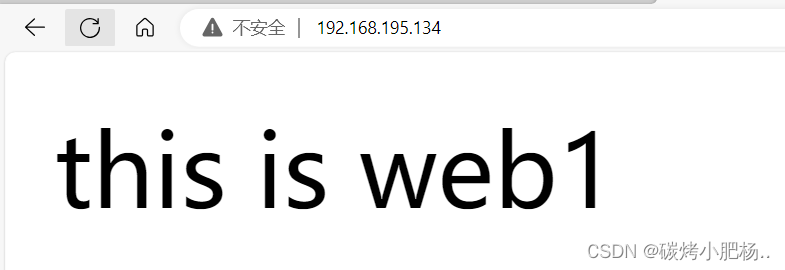

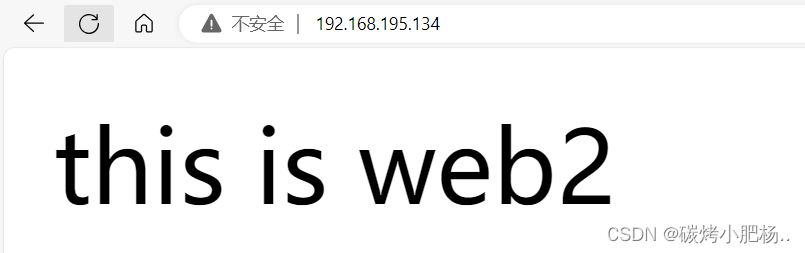

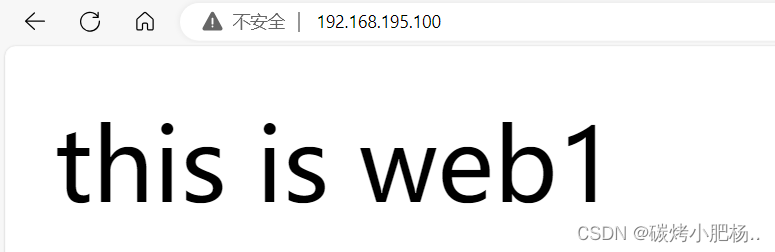

Access the web pages on the web hosts.

Deployment complete.

5.1. Installing keepalived

Install keepalived on the master (haproxy1):

//Permanently disable the firewall and SELinux

[root@haproxy1 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@haproxy1 ~]# setenforce 0

[root@haproxy1 ~]# vim /etc/selinux/config //set SELINUX=disabled

[root@haproxy1 ~]# reboot

[root@haproxy1 ~]# getenforce

Disabled

//Configure the yum repository

[root@haproxy1 ~]# rm -rf /etc/yum.repos.d/*

[root@haproxy1 ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-vault-8.5.2111.repo

[root@haproxy1 ~]# yum clean all

[root@haproxy1 yum.repos.d]# yum makecache

//Install keepalived

[root@haproxy1 ~]# yum -y install epel-release vim wget gcc gcc-c++

(output omitted)

[root@haproxy1 ~]# yum -y install keepalived

(output omitted)

//List the files installed by the package

[root@haproxy1 ~]# rpm -ql keepalived

/etc/keepalived //configuration directory

/etc/keepalived/keepalived.conf //main configuration file

/etc/sysconfig/keepalived

/usr/bin/genhash

/usr/lib/systemd/system/keepalived.service //systemd service unit

/usr/libexec/keepalived

/usr/sbin/keepalived

. . . . . (remaining lines omitted)

Install keepalived on the backup server (haproxy2) in the same way:

//Permanently disable the firewall and SELinux

[root@haproxy2 ~]# systemctl disable --now firewalld.service

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@haproxy2 ~]# setenforce 0

[root@haproxy2 ~]# vim /etc/selinux/config //set SELINUX=disabled

[root@haproxy2 ~]# reboot

[root@haproxy2 ~]# getenforce

Disabled

//Configure the yum repository

[root@haproxy2 ~]# rm -rf /etc/yum.repos.d/*

[root@haproxy2 ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-vault-8.5.2111.repo

[root@haproxy2 ~]# yum clean all

[root@haproxy2 yum.repos.d]# yum makecache

//Install keepalived

[root@haproxy2 ~]# yum -y install epel-release vim wget gcc gcc-c++

(output omitted)

[root@haproxy2 ~]# yum -y install keepalived

(output omitted)

//List the files installed by the package

[root@haproxy2 ~]# rpm -ql keepalived

/etc/keepalived //configuration directory

/etc/keepalived/keepalived.conf //main configuration file

/etc/sysconfig/keepalived

/usr/bin/genhash

/usr/lib/systemd/system/keepalived.service //systemd service unit

/usr/libexec/keepalived

/usr/sbin/keepalived

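. . . . . (remaining lines omitted)

5.2. Installing HAProxy on the master and the backup

Deploy HAProxy on both hosts to load-balance the two httpd servers.

Download the source package from the HAProxy website: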

HAProxy - The Reliable, High Perf. TCP/HTTP Load Balancer

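The steps below were performed on haproxy1. Repeat them exactly on haproxy2.

//Install the build dependencies (zlib-devel is needed for USE_ZLIB=1) and create the haproxy system user

[root@haproxy1 ~]# yum -y install make gcc pcre-devel bzip2-devel openssl-devel systemd-devel zlib-devel vim wget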

[root@haproxy1 ~]# useradd -r -M -s /sbin/nologin haproxy

//Download the HAProxy source tarball with wget

[root@haproxy1 ~]# wget https://www.haproxy.org/download/2.7/src/haproxy-2.7.10.tar.gz

--2023-10-12 14:46:05-- https://www.haproxy.org/download/2.7/src/haproxy-2.7.10.tar.gz

Resolving www.haproxy.org (www.haproxy.org)... 51.15.8.218, 2001:bc8:35ee:100::1

Connecting to www.haproxy.org (www.haproxy.org)|51.15.8.218|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 4191948 (4.0M) [application/x-tar]

Saving to: ‘haproxy-2.7.10.tar.gz’

haproxy-2.7.10.tar.gz 100%[===============================================>] 4.00M 51.0KB/s in 65s

2023-10-12 14:47:12 (63.1 KB/s) - ‘haproxy-2.7.10.tar.gz’ saved [4191948/4191948]

[root@haproxy1 ~]# ls

anaconda-ks.cfg haproxy-2.7.10.tar.gz

//Extract the archive, enter the source directory, and build

[root@haproxy1 ~]# tar xf haproxy-2.7.10.tar.gz

[root@haproxy1 ~]# cd haproxy-2.7.10/

[root@haproxy1 haproxy-2.7.10]# make clean

[root@haproxy1 haproxy-2.7.10]# make -j $(nproc) TARGET=linux-glibc USE_OPENSSL=1 USE_ZLIB=1 USE_PCRE=1 USE_SYSTEMD=1

//Install into the chosen prefix

[root@haproxy1 haproxy-2.7.10]# make install PREFIX=/usr/local/haproxy

//Put haproxy on the PATH (here with a symlink instead of editing environment variables)

[root@haproxy1 haproxy-2.7.10]# ln -s /usr/local/haproxy/sbin/* /usr/sbin/

[root@haproxy1 haproxy-2.7.10]# which haproxy

/usr/sbin/haproxy

//Print the HAProxy version; if it prints, the command works

[root@haproxy1 haproxy-2.7.10]# haproxy -v

HAProxy version 2.7.10-d796057 2023/08/09 - https://haproxy.org/

Status: stable branch - will stop receiving fixes around Q1 2024.

Known bugs: http://www.haproxy.org/bugs/bugs-2.7.10.html

Running on: Linux 4.18.0-193.el8.x86_64 #1 SMP Fri Mar 27 14:35:58 UTC 2020 x86_64

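//Set kernel parameters on each load balancer (ip_nonlocal_bind lets a process bind to an address, such as the VIP, that this host does not currently hold)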

[root@haproxy1 haproxy-2.7.10]# echo 'net.ipv4.ip_nonlocal_bind = 1' >> /etc/sysctl.conf

[root@haproxy1 haproxy-2.7.10]# echo 'net.ipv4.ip_forward = 1' >> /etc/sysctl.conf

[root@haproxy1 haproxy-2.7.10]# sysctl -p

net.ipv4.ip_nonlocal_bind = 1

net.ipv4.ip_forward = 1
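Appending with echo ... >> /etc/sysctl.conf adds a duplicate line every time you repeat the step. The hypothetical set_sysctl helper below sketches an idempotent alternative: it rewrites the key if it already exists and appends it otherwise. The key is used as a basic regular expression, so its dots match any character. That is harmless for ordinary sysctl names.

```bash
#!/usr/bin/env bash
# Idempotently set "KEY = VALUE" in a sysctl file (default /etc/sysctl.conf).
# Usage: set_sysctl KEY VALUE [FILE]   (hypothetical helper)
set_sysctl() {
    local key=$1 value=$2 file=${3:-/etc/sysctl.conf}
    touch "$file"
    if grep -q "^[[:space:]]*${key}[[:space:]]*=" "$file"; then
        # Key already present: rewrite its value in place
        sed -i "s|^[[:space:]]*${key}[[:space:]]*=.*|${key} = ${value}|" "$file"
    else
        # Key absent: append it
        echo "${key} = ${value}" >> "$file"
    fi
}

# Example (as root): set_sysctl net.ipv4.ip_nonlocal_bind 1; set_sysctl net.ipv4.ip_forward 1; sysctl -p
```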

//Write the haproxy.service unit file

[root@haproxy1 haproxy-2.7.10]# vim /usr/lib/systemd/system/haproxy.service

[root@haproxy1 haproxy-2.7.10]# cat /usr/lib/systemd/system/haproxy.service

[Unit]

Description=HAProxy Load Balancer

After=syslog.target network.target

[Service]

ExecStartPre=/usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -c -q

ExecStart=/usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /var/run/haproxy.pid

ExecReload=/bin/kill -USR2 $MAINPID

[Install]

WantedBy=multi-user.target

[root@haproxy1 haproxy-2.7.10]# systemctl daemon-reload

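//Configure logging (HAProxy sends syslog messages over UDP to 127.0.0.1, so the imudp module and input lines in rsyslog.conf must also be uncommented)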

[root@haproxy1 ~]# vim /etc/rsyslog.conf

[root@haproxy1 ~]# cat /etc/rsyslog.conf

# Save boot messages also to boot.log

local0.* /var/log/haproxy.log //add this line

local7.* /var/log/boot.log

//Restart the logging service

[root@haproxy1 ~]# systemctl restart rsyslog.service

//Write the HAProxy configuration file

[root@haproxy1 ~]# mkdir /etc/haproxy

[root@haproxy1 ~]# vim /etc/haproxy/haproxy.cfg

[root@haproxy1 ~]# cat /etc/haproxy/haproxy.cfg

#-------------- global settings ----------------

global

log 127.0.0.1 local0 info

#log loghost local0 info

maxconn 20480

#chroot /usr/local/haproxy

pidfile /var/run/haproxy.pid

#maxconn 4000

user haproxy

group haproxy

daemon

#---------------------------------------------------------------------

#common defaults that all the 'listen' and 'backend' sections will

#use if not designated in their block

#---------------------------------------------------------------------

defaults

mode http

log global

option dontlognull

option httpclose

option httplog

#option forwardfor

option redispatch

balance roundrobin

timeout connect 10s

timeout client 10s

timeout server 10s

timeout check 10s

maxconn 60000

retries 3

#-------------- statistics page ------------------

listen admin_stats

bind 0.0.0.0:8189

stats enable

mode http

log global

stats uri /haproxy_stats

stats realm Haproxy\ Statistics

stats auth admin:admin

#stats hide-version

stats admin if TRUE

stats refresh 30s

#--------------- web settings -----------------------

listen webcluster

bind 0.0.0.0:80

mode http

#option httpchk GET /index.html

log global

maxconn 3000

balance roundrobin

cookie SESSION_COOKIE insert indirect nocache

server web1 192.168.195.135:80 check inter 2000 fall 5

server web2 192.168.195.136:80 check inter 2000 fall 5

#server web1 192.168.179.1:80 cookie web01 check inter 2000 fall 5

[root@haproxy1 haproxy-2.7.10]#

//Start HAProxy now and enable it at boot

[root@haproxy1 haproxy-2.7.10]# systemctl enable --now haproxy.service

Created symlink /etc/systemd/system/multi-user.target.wants/haproxy.service → /usr/lib/systemd/system/haproxy.service.

[root@haproxy1 haproxy-2.7.10]# systemctl status haproxy.service

● haproxy.service - HAProxy Load Balancer

Loaded: loaded (/usr/lib/systemd/system/haproxy.service; disabled; vendor preset: disabled)

Active: active (running) since Tue 2023-10-10 01:09:07 CST; 9s ago

Process: 12021 ExecStartPre=/usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -c -q (code=exited, status=0/SUCCESS)

Main PID: 12024 (haproxy)

Tasks: 3 (limit: 11294)

//Check the listening ports

[root@haproxy1 haproxy-2.7.10]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:8189 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

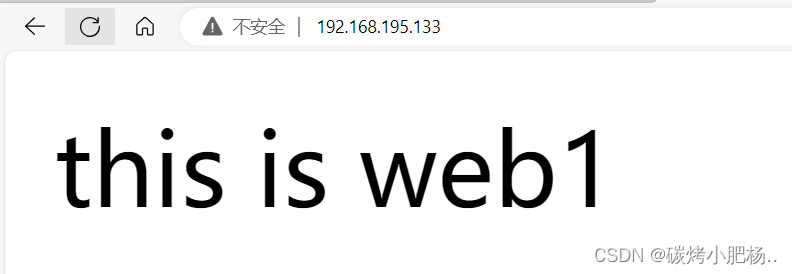

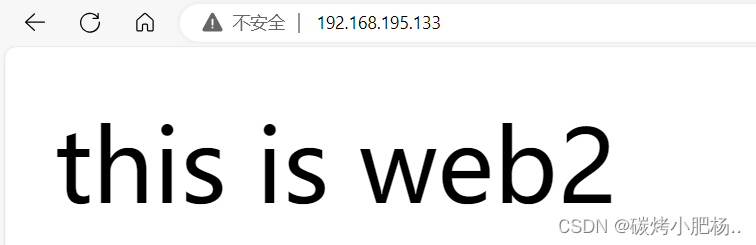

Try it in a browser to make sure the HAProxy service on both haproxy1 and haproxy2 is reachable.

Access through 192.168.195.133:

Access through 192.168.195.134:
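You can also check round-robin from any client with curl (assumed to be installed) instead of a browser. The sketch below tallies the response bodies. count_backends is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Tally response bodies read from stdin (one per line); print "COUNT BODY".
# count_backends is a hypothetical helper name.
count_backends() {
    sort | uniq -c | awk '{ n = $1; $1 = ""; sub(/^ +/, ""); print n, $0 }'
}

# Example: six requests through haproxy1
#   for i in 1 2 3 4 5 6; do curl -s http://192.168.195.133/; done | count_backends
```

With balance roundrobin, both "this is web1" and "this is web2" should appear with similar counts.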

5.3. Configuring keepalived

5.3.1. keepalived configuration options

The main keepalived configuration file is /etc/keepalived/keepalived.conf.

vrrp_instance section options

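nopreempt //Disable preemption. Preemption is on by default: when a higher-priority machine recovers, it takes the MASTER role back from the lower-priority machine. With nopreempt, the lower-priority machine stays MASTER even after the higher-priority machine is back online. To use this option, the initial state must be BACKUP.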

preempt_delay //Preemption delay in seconds, range 0-1000, default 0: how long to wait after detecting a lower-priority MASTER before preempting it.

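vrrp_script section options

//Purpose: define a script that runs periodically. Every VRRP instance that tracks the script records its exit status.

//Note: at least one VRRP instance must track it, and that instance's priority must not be 0 (the priority range is 1-254).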

vrrp_script <SCRIPT_NAME> {

...

}

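//Options:

script "/path/to/somewhere" //path of the script to run

interval <INTEGER> //interval between runs, in seconds (default 1)

timeout <INTEGER> //number of seconds after which a run is considered failed

weight <-254 --- 254> //priority adjustment (default 2)

rise <INTEGER> //number of successes required before the check is considered OK

fall <INTEGER> //number of failures required before the check is considered failed

user <USERNAME> [GROUPNAME] //user and group the script runs as

init_fail //assume the script starts in the failed state

//How weight works:

1. If the script succeeds (exit status 0) and weight is greater than 0, the priority is increased by weight.

2. If the script fails (non-zero exit status) and weight is less than 0, the priority is decreased by the absolute value of weight.

3. In all other cases, the priority is unchanged.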
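The three weight rules can be written out as a small function to show how a given weight plays out. This sketch follows the rules exactly as listed above for a single tracked script. It is not keepalived's code. effective_priority is a hypothetical name, and the function does not clamp the result to the valid priority range.

```bash
#!/usr/bin/env bash
# Sketch of the three weight rules for one tracked vrrp_script.
# Usage: effective_priority BASE_PRIORITY WEIGHT SCRIPT_EXIT_STATUS   (hypothetical helper)
# Clamping the result to the valid priority range is not modelled.
effective_priority() {
    local base=$1 weight=$2 status=$3
    if (( status == 0 && weight > 0 )); then
        echo $(( base + weight ))   # rule 1: success + positive weight raises priority
    elif (( status != 0 && weight < 0 )); then
        echo $(( base + weight ))   # rule 2: failure + negative weight lowers priority
    else
        echo "$base"                # rule 3: otherwise unchanged
    fi
}

# Master at priority 100 with weight -30 and a failing check:
effective_priority 100 -30 1   # prints 70
```

This article later uses priority 100 on the master and 80 on the backup. With those values, a weight of -30 drops a master whose check fails to 70, below the backup, so the backup takes over. A weight of -10 (giving 90) would not trigger a failover.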

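real_server section options

weight <INT> //server weight (default 1)

inhibit_on_failure //when the health check fails, set the server's weight to 0 instead of removing it from the virtual server

notify_up <STRING> //script to run when the server's health check succeeds

notify_down <STRING> //script to run when the server's health check fails

uthreshold <INT> //maximum number of connections to this server

lthreshold <INT> //minimum number of connections to this server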

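TCP_CHECK section options

connect_ip <IP ADDRESS> //IP address to connect to (default: the real server's IP address)

connect_port <PORT> //port to connect to (default: the real server's port)

bindto <IP ADDRESS> //local address to originate the connection from

bind_port <PORT> //source port of the connection

connect_timeout <INT> //connection timeout (default 5 s)

fwmark <INTEGER> //mark all outgoing check packets with this fwmark

warmup <INT> //random delay of up to N seconds before checking, to avoid a burst of simultaneous checks; 0 disables it

retry <INT> //number of retries (default 1)

delay_before_retry <INT> //delay in seconds before each retry (default 1)

5.3.2. Configuring the master keepalived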

[root@haproxy1 ~]# vim /etc/keepalived/keepalived.conf

[root@haproxy1 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id haproxy1

}

vrrp_instance VI_1 {

state MASTER

interface ens160

virtual_router_id 80

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 12345678

}

virtual_ipaddress {

192.168.195.100

}

}

virtual_server 192.168.195.100 80 {

delay_loop 6

lb_algo rr

lb_kind NAT

persistence_timeout 50

protocol TCP

real_server 192.168.195.133 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.195.134 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}
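As configured above, keepalived moves the VIP only when keepalived itself stops. If HAProxy crashes on the master, the master keeps advertising and keeps the VIP. A common remedy is a vrrp_script that tracks HAProxy. The sketch below assumes the pidfile /var/run/haproxy.pid set in haproxy.cfg above. The script path /etc/keepalived/check_haproxy.sh, the name chk_haproxy, interval 2, and weight -30 are illustrative assumptions. With weight -30, a failing check drops the master from 100 to 70, below the backup's 80.

```bash
#!/usr/bin/env bash
# Hypothetical health check for keepalived, e.g. saved as
# /etc/keepalived/check_haproxy.sh and made executable (chmod +x).
# Exit status 0 = the process named in the HAProxy pidfile is alive; non-zero = down.
#
# Assumed keepalived.conf additions (names and values are illustrative):
#   vrrp_script chk_haproxy {
#       script "/etc/keepalived/check_haproxy.sh"
#       interval 2
#       weight -30
#   }
#   ...and inside vrrp_instance VI_1:
#   track_script {
#       chk_haproxy
#   }

check_haproxy() {
    # Default path matches "pidfile /var/run/haproxy.pid" in haproxy.cfg
    local pidfile=${1:-/var/run/haproxy.pid} pid=""
    [ -r "$pidfile" ] || return 1                   # no pidfile: treat as down
    read -r pid < "$pidfile"
    # kill -0 sends no signal; it only tests whether the process exists
    [ -n "$pid" ] && kill -0 "$pid" 2>/dev/null
}

check_haproxy "$@"
```

Add the same vrrp_script and track_script to both nodes so the check behaves the same whichever node is MASTER.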

[root@haproxy1 ~]# systemctl enable --now keepalived.service //start keepalived and enable it at boot

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

[root@haproxy1 ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:8189 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

5.3.3. Configuring the backup keepalived

[root@haproxy2 ~]# vim /etc/keepalived/keepalived.conf

[root@haproxy2 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id haproxy2

}

vrrp_instance VI_1 {

state BACKUP

interface ens160

virtual_router_id 80

priority 80

advert_int 1

authentication {

auth_type PASS

auth_pass 12345678

}

virtual_ipaddress {

192.168.195.100

}

}

virtual_server 192.168.195.100 80 {

delay_loop 6

lb_algo rr

lb_kind NAT

persistence_timeout 50

protocol TCP

real_server 192.168.195.133 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.195.134 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@haproxy2 ~]# systemctl enable --now keepalived.service

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

[root@haproxy2 ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:8189 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

5.3.4. Checking which host holds the VIP

On the MASTER (haproxy1):

[root@haproxy1 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:1f:2f:75 brd ff:ff:ff:ff:ff:ff

inet 192.168.195.133/24 brd 192.168.195.255 scope global dynamic noprefixroute ens160

valid_lft 1011sec preferred_lft 1011sec

inet 192.168.195.100/32 scope global ens160

valid_lft forever preferred_lft forever

inet6 fe80::411e:cef7:14ab:7e28/64 scope link noprefixroute

valid_lft forever preferred_lft forever
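Instead of reading the whole ip a listing, a short filter can answer "does this host hold the VIP?". This is a sketch. holds_vip is a hypothetical helper name, and it simply looks for an "inet <VIP>/" entry in the text it is given.

```bash
#!/usr/bin/env bash
# Exit 0 if an `ip addr` listing read on stdin contains the given VIP, 1 otherwise.
# Usage: holds_vip VIP   (hypothetical helper)
holds_vip() {
    local vip=$1
    # -F matches literally; the trailing "/" stops 192.168.195.100 matching 192.168.195.1000
    grep -qF "inet ${vip}/"
}

# Example on either load balancer:
#   ip -4 addr show dev ens160 | holds_vip 192.168.195.100 && echo "VIP is on this host"
```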

On the SLAVE (haproxy2), run ip a in the same way. While haproxy1 is MASTER, ens160 on haproxy2 should show only its own address, 192.168.195.134/24, with no 192.168.195.100 entry.

5.4. Accessing the page through the VIP

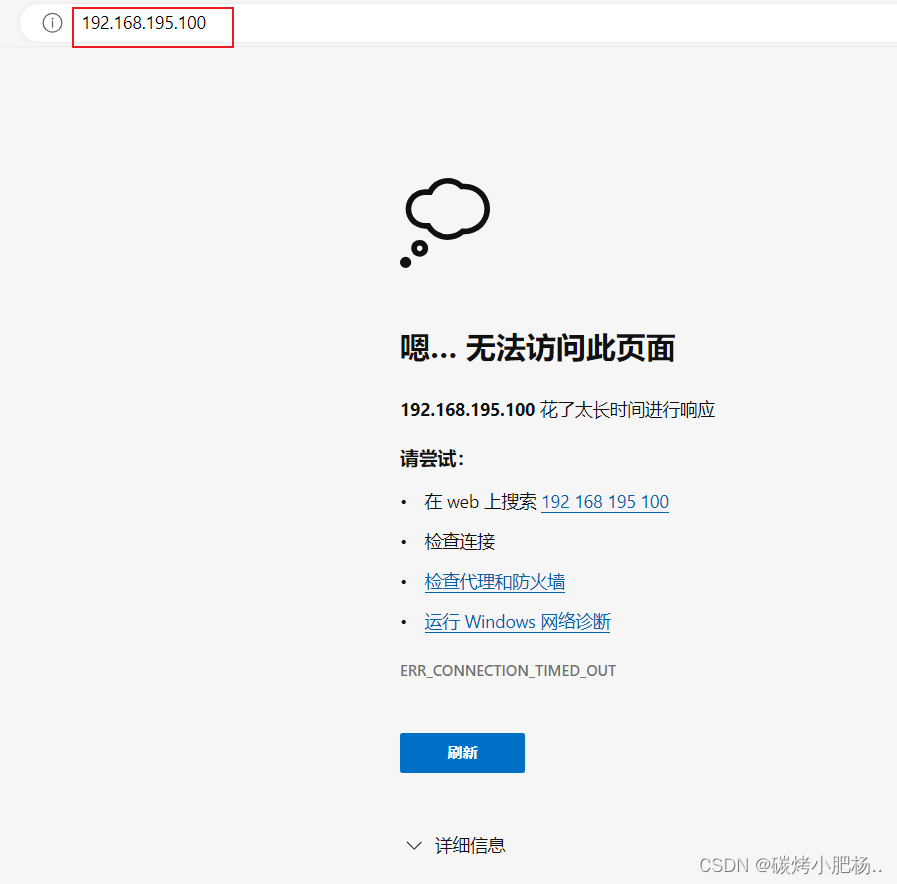

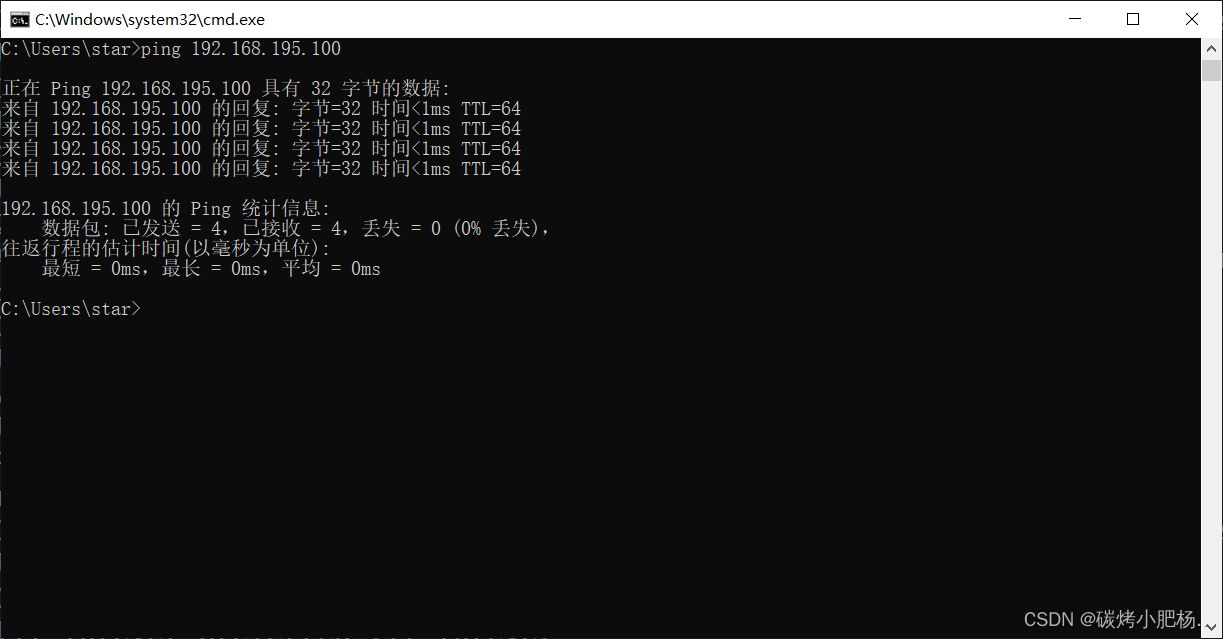

1. Fixing the page being unreachable through the VIP

The page cannot be loaded through the VIP, even though the VIP answers ping.

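Note: the backup node is interfering. A likely mechanism is the virtual_server block in keepalived.conf. It also makes the MASTER act as an LVS (IPVS) NAT load balancer for 192.168.195.100:80, so part of the requests sent to the VIP are forwarded to haproxy2 (192.168.195.134). haproxy2 answers those clients directly from its own address rather than through the master, so those connections fail. Stopping HAProxy on the slave (haproxy2) makes its TCP_CHECK fail, IPVS stops sending traffic there, and the page loads. Because HAProxy already balances traffic across web1 and web2, removing the virtual_server block from both keepalived.conf files is probably the cleaner fix.

In this test, starting HAProxy on the slave again afterwards did not break access.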

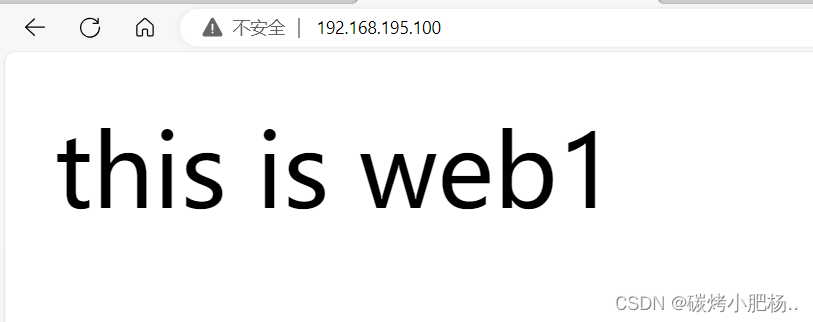

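2. Simulate the master (haproxy1) going down for an unknown reason, so that traffic fails over to HAProxy on the slave (haproxy2).

Note: here we stop keepalived on the master (haproxy1), which takes it out of the VRRP group. Requests to the VIP are then served through the slave (haproxy2), so there is no need to also stop HAProxy on the master.

//Stop keepalived on the master (haproxy1)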

[root@haproxy1 ~]# systemctl stop keepalived.service

[root@haproxy1 ~]# ip a //check again: the VIP is gone

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:1f:2f:75 brd ff:ff:ff:ff:ff:ff

inet 192.168.195.133/24 brd 192.168.195.255 scope global dynamic noprefixroute ens160

valid_lft 1392sec preferred_lft 1392sec

inet6 fe80::411e:cef7:14ab:7e28/64 scope link noprefixroute

valid_lft forever preferred_lft forever

//On the slave (haproxy2), check whether the VIP has moved over

[root@haproxy2 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:8b:9b:af brd ff:ff:ff:ff:ff:ff

inet 192.168.195.134/24 brd 192.168.195.255 scope global dynamic noprefixroute ens160

valid_lft 994sec preferred_lft 994sec

inet 192.168.195.100/32 scope global ens160 //the VIP is now here

valid_lft forever preferred_lft forever

inet6 fe80::3aa0:b2e5:ecf1:7bd1/64 scope link noprefixroute

valid_lft forever preferred_lft forever

Access the page through the VIP again.
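To time the failover rather than only check the end result, you can poll a condition once per second. This is a minimal sketch. wait_until is a hypothetical helper name, and the example commands in the comments assume the interface name ens160 and, for the client-side check, that curl is installed.

```bash
#!/usr/bin/env bash
# Poll CMD once per second until it succeeds or TIMEOUT seconds have passed.
# On success, prints how many seconds were waited; on timeout, returns 1.
# Usage: wait_until TIMEOUT CMD [ARGS...]   (hypothetical helper)
wait_until() {
    local timeout=$1 waited=0
    shift
    until "$@"; do
        if (( waited >= timeout )); then
            return 1
        fi
        sleep 1
        waited=$(( waited + 1 ))
    done
    echo "$waited"
}

# On haproxy2, right after stopping keepalived on haproxy1:
#   wait_until 10 sh -c 'ip -4 addr show dev ens160 | grep -qF "inet 192.168.195.100/"'
# From a client:
#   wait_until 10 curl -sf -o /dev/null http://192.168.195.100/
```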

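3. Start keepalived on the master (haproxy1) again. The keepalived configuration does not set nopreempt, so as soon as keepalived is running on the master (priority 100), it preempts the backup (priority 80) and takes the VIP back.

//On the master (haproxy1)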

[root@haproxy1 ~]# systemctl start keepalived.service

[root@haproxy1 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:1f:2f:75 brd ff:ff:ff:ff:ff:ff

inet 192.168.195.133/24 brd 192.168.195.255 scope global dynamic noprefixroute ens160

valid_lft 1736sec preferred_lft 1736sec

inet 192.168.195.100/32 scope global ens160

valid_lft forever preferred_lft forever

inet6 fe80::411e:cef7:14ab:7e28/64 scope link noprefixroute

valid_lft forever preferred_lft forever

//On the slave (haproxy2)

[root@haproxy2 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:8b:9b:af brd ff:ff:ff:ff:ff:ff

inet 192.168.195.134/24 brd 192.168.195.255 scope global dynamic noprefixroute ens160

valid_lft 1625sec preferred_lft 1625sec

inet6 fe80::3aa0:b2e5:ecf1:7bd1/64 scope link noprefixroute

valid_lft forever preferred_lft forever
