Just a record of the code from this experiment.

I. Building the dataset

1. The dataset construction function

import random

import numpy as np

# 固定随机种子

random.seed(0)

np.random.seed(0)

def generate_data(length, k, save_path):

if length < 3:

raise ValueError("The length of data should be greater than 2.")

if k == 0:

raise ValueError("k should be greater than 0.")

# 生成100条长度为length的数字序列,除前两个字符外,序列其余数字暂用0填充

base_examples = []

for n1 in range(0, 10):

for n2 in range(0, 10):

seq = [n1, n2] + [0] * (length - 2)

label = n1 + n2

base_examples.append((seq, label))

examples = []

# 数据增强:对base_examples中的每条数据,默认生成k条数据,放入examples

for base_example in base_examples:

for _ in range(k):

# 随机生成替换的元素位置和元素

idx = np.random.randint(2, length)

val = np.random.randint(0, 10)

# 对序列中的对应零元素进行替换

seq = base_example[0].copy()

label = base_example[1]

seq[idx] = val

examples.append((seq, label))

# 保存增强后的数据

with open(save_path, "w", encoding="utf-8") as f:

for example in examples:

# 将数据转为字符串类型,方便保存

seq = [str(e) for e in example[0]]

label = str(example[1])

line = " ".join(seq) + "\t" + label + "\n"

f.write(line)

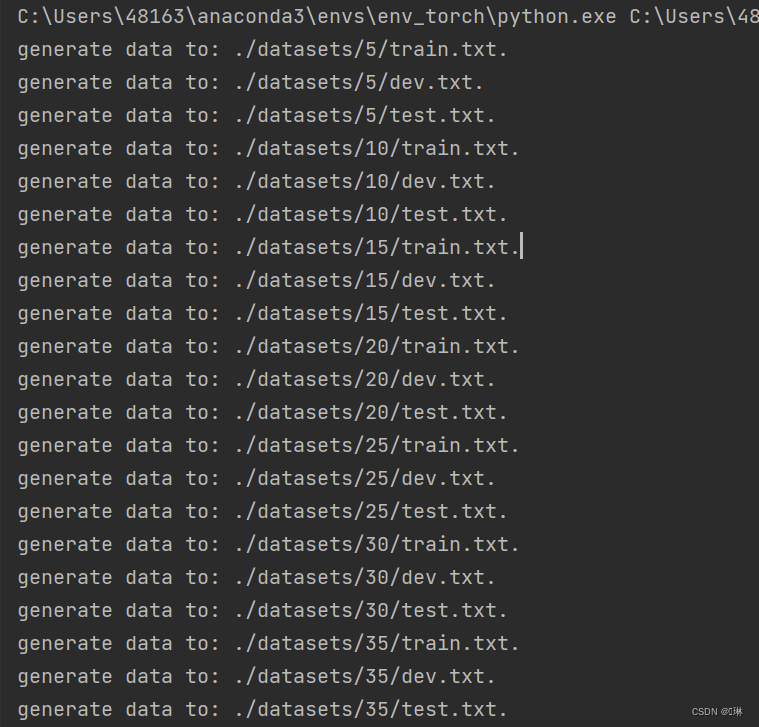

print(f"generate data to: {save_path}.")

# 定义生成的数字序列长度

lengths = [5, 10, 15, 20, 25, 30, 35]

for length in lengths:

# 生成长度为length的训练数据

save_path = f"./datasets/{length}/train.txt"

k = 3

generate_data(length, k, save_path)

# 生成长度为length的验证数据

save_path = f"./datasets/{length}/dev.txt"

k = 1

generate_data(length, k, save_path)

# 生成长度为length的测试数据

save_path = f"./datasets/{length}/test.txt"

k = 1

generate_data(length, k, save_path)
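To make the sample format concrete, here is a minimal sketch of the augmentation step for a single base example, using only the standard library. The helper name `augment_once` and the local `random.Random` generator are assumptions made for illustration; the function above draws from NumPy's global generator instead.

```python
import random


def augment_once(n1, n2, length, rng):
    """Build one augmented sample: the first two digits determine the label,
    and exactly one later position is overwritten with a random digit."""
    seq = [n1, n2] + [0] * (length - 2)
    idx = rng.randrange(2, length)  # position in [2, length - 1]
    val = rng.randrange(0, 10)      # digit in [0, 9]
    seq[idx] = val
    return seq, n1 + n2


rng = random.Random(0)  # hypothetical local generator, seeded for repeatability
seq, label = augment_once(3, 4, length=5, rng=rng)
print(seq, label)
```

Whatever digit lands in the tail, the label stays `n1 + n2`, so the model has to carry information about the first two positions through the whole sequence.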

2、加载数据并进行数据划分

def load_data(data_path):

# 加载训练集

train_examples = []

train_path = os.path.join(data_path, "train.txt")

with open(train_path, "r", encoding="utf-8") as f:

for line in f.readlines():

# 解析一行数据,将其处理为数字序列seq和标签label

items = line.strip().split("\t")

seq = [int(i) for i in items[0].split(" ")]

label = int(items[1])

train_examples.append((seq, label))

# 加载验证集

dev_examples = []

dev_path = os.path.join(data_path, "dev.txt")

with open(dev_path, "r", encoding="utf-8") as f:

for line in f.readlines():

# 解析一行数据,将其处理为数字序列seq和标签label

items = line.strip().split("\t")

seq = [int(i) for i in items[0].split(" ")]

label = int(items[1])

dev_examples.append((seq, label))

# 加载测试集

test_examples = []

test_path = os.path.join(data_path, "test.txt")

with open(test_path, "r", encoding="utf-8") as f:

for line in f.readlines():

# 解析一行数据,将其处理为数字序列seq和标签label

items = line.strip().split("\t")

seq = [int(i) for i in items[0].split(" ")]

label = int(items[1])

test_examples.append((seq, label))

return train_examples, dev_examples, test_examples

# 设定加载的数据集的长度

length = 5

# 该长度的数据集的存放目录

data_path = f"./datasets/{length}"

# 加载该数据集

train_examples, dev_examples, test_examples = load_data(data_path)

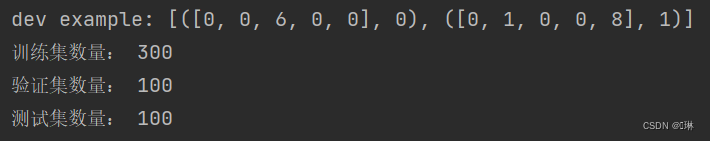

print("dev example:", dev_examples[:2])

print("训练集数量:", len(train_examples))

print("验证集数量:", len(dev_examples))

print("测试集数量:", len(test_examples))
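The on-disk format is one example per line: the digits separated by single spaces, then a tab, then the label. The sketch below shows a write/parse round trip for one line. The helper names `format_example` and `parse_line` are hypothetical and simply mirror the string handling in `generate_data` and `load_data`.

```python
def format_example(seq, label):
    """Serialize one example the way generate_data writes it."""
    return " ".join(str(d) for d in seq) + "\t" + str(label) + "\n"


def parse_line(line):
    """Parse one line the way load_data reads it."""
    items = line.strip().split("\t")
    seq = [int(tok) for tok in items[0].split(" ")]
    label = int(items[1])
    return seq, label


line = format_example([1, 2, 0, 0, 7], 3)
parsed = parse_line(line)
print(repr(line), parsed)
```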

3. Building the Dataset class

import torch
from torch.utils.data import Dataset


class DigitSumDataset(Dataset):
    def __init__(self, data):
        self.data = data

    def __getitem__(self, idx):
        example = self.data[idx]
        # Convert the digit sequence and its label to int64 tensors
        seq = torch.tensor(example[0], dtype=torch.int64)
        label = torch.tensor(example[1], dtype=torch.int64)
        return seq, label

    def __len__(self):
        return len(self.data)

II. Building the model

1. Embedding layer

import torch
import torch.nn as nn


class Embedding(nn.Module):
    def __init__(self, num_embeddings, embedding_dim):
        super(Embedding, self).__init__()
        # Wrap W in nn.Parameter so it is registered with the module
        # and updated by the optimizer during training
        self.W = nn.Parameter(
            nn.init.xavier_uniform_(torch.empty(num_embeddings, embedding_dim), gain=1.0)
        )

    def forward(self, inputs):
        # Look up the embedding vector for each index
        embs = self.W[inputs]
        return embs


emb_layer = Embedding(10, 5)
inputs = torch.tensor([0, 1, 2, 3])
emb_layer(inputs)
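Indexing the weight matrix with integer ids gives the same result as multiplying one-hot vectors by that matrix, which is why the layer can be read as a learned lookup table. The following is a small pure-Python sketch of that equivalence; the table values and the helper names `lookup` and `one_hot_matmul` are made up for illustration.

```python
def lookup(table, ids):
    """Embedding lookup: pick one row of the table per id."""
    return [table[i] for i in ids]


def one_hot_matmul(table, ids):
    """Same result computed as one_hot(id) @ table."""
    num_rows, dim = len(table), len(table[0])
    out = []
    for i in ids:
        one_hot = [1.0 if r == i else 0.0 for r in range(num_rows)]
        row = [sum(one_hot[r] * table[r][c] for r in range(num_rows)) for c in range(dim)]
        out.append(row)
    return out


table = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]  # 3 ids, embedding_dim = 2
ids = [2, 0]
print(lookup(table, ids))
print(one_hot_matmul(table, ids))
```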

2. SRN layer

import torch.nn.functional as F

torch.manual_seed(0)


class SRN(nn.Module):
    def __init__(self, input_size, hidden_size, W_attr=None, U_attr=None, b_attr=None):
        super(SRN, self).__init__()
        # Dimension of the embedding vectors
        self.input_size = input_size
        # Dimension of the hidden state
        self.hidden_size = hidden_size
        # Parameter W, with shape input_size x hidden_size
        if W_attr is None:
            W = torch.zeros(size=[input_size, hidden_size], dtype=torch.float32)
        else:
            W = torch.as_tensor(W_attr, dtype=torch.float32).detach().clone()
        self.W = torch.nn.Parameter(W)
        # Parameter U, with shape hidden_size x hidden_size
        if U_attr is None:
            U = torch.zeros(size=[hidden_size, hidden_size], dtype=torch.float32)
        else:
            U = torch.as_tensor(U_attr, dtype=torch.float32).detach().clone()
        self.U = torch.nn.Parameter(U)
        # Parameter b, with shape 1 x hidden_size
        if b_attr is None:
            b = torch.zeros(size=[1, hidden_size], dtype=torch.float32)
        else:
            b = torch.as_tensor(b_attr, dtype=torch.float32).detach().clone()
        self.b = torch.nn.Parameter(b)

    # Initialize the hidden state
    def init_state(self, batch_size):
        hidden_state = torch.zeros(size=[batch_size, self.hidden_size], dtype=torch.float32)
        return hidden_state

    # Forward computation
    def forward(self, inputs, hidden_state=None):
        # inputs: input data with shape batch_size x seq_len x input_size
        batch_size, seq_len, input_size = inputs.shape

        # Initial hidden state, with shape batch_size x hidden_size
        if hidden_state is None:
            hidden_state = self.init_state(batch_size)

        # Run the recurrence over the time steps
        for step in range(seq_len):
            # Input at the current step, with shape batch_size x input_size
            step_input = inputs[:, step, :]
            # Hidden state at the current step, with shape batch_size x hidden_size
            hidden_state = F.tanh(torch.matmul(step_input, self.W) + torch.matmul(hidden_state, self.U) + self.b)
        return hidden_state


# Initialize the parameters and run the layer
U_attr = [[0.0, 0.1], [0.1, 0.0]]
b_attr = [[0.1, 0.1]]
W_attr = [[0.1, 0.2], [0.1, 0.2]]

srn = SRN(2, 2, W_attr=W_attr, U_attr=U_attr, b_attr=b_attr)

inputs = torch.tensor([[[1, 0], [0, 2]]], dtype=torch.float32)
hidden_state = srn(inputs)
print("hidden_state", hidden_state)
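As a sanity check, the same two-step recurrence h_t = tanh(x_t W + h_{t-1} U + b) can be worked through by hand with the toy parameters above. The sketch below uses only the standard library; the helper names `matvec` and `srn_last_state` are assumptions for illustration and not part of the model code.

```python
import math


def matvec(x, M):
    """Row vector x (length n) times matrix M (n x m)."""
    return [sum(x[i] * M[i][j] for i in range(len(x))) for j in range(len(M[0]))]


def srn_last_state(seq, W, U, b):
    """Run h_t = tanh(x_t W + h_{t-1} U + b) from h_0 = 0 and return the last h."""
    h = [0.0] * len(b)
    for x in seq:
        xw = matvec(x, W)
        hu = matvec(h, U)
        h = [math.tanh(xw[j] + hu[j] + b[j]) for j in range(len(b))]
    return h


W = [[0.1, 0.2], [0.1, 0.2]]
U = [[0.0, 0.1], [0.1, 0.0]]
b = [0.1, 0.1]
seq = [[1.0, 0.0], [0.0, 2.0]]

h = srn_last_state(seq, W, U, b)
print([round(v, 4) for v in h])  # approximately [0.3177, 0.4775]
```

After the first step the state is tanh([0.2, 0.3]); the second step mixes that state through U before applying tanh again, which gives the values printed above.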


Print the shapes of the results returned by our own SRN and by the framework's built-in SRN (PyTorch's `nn.RNN` here).

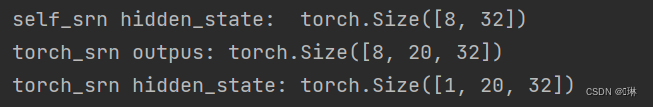

batch_size, seq_len, input_size = 8, 20, 32
inputs = torch.randn(size=[batch_size, seq_len, input_size])

# Set the hidden_size of the model
hidden_size = 32

# batch_first=True makes nn.RNN read inputs as batch_size x seq_len x input_size,
# the same layout that SRN expects (the nn.RNN default is seq_len x batch_size x input_size)
torch_srn = nn.RNN(input_size, hidden_size, batch_first=True)
self_srn = SRN(input_size, hidden_size)

self_hidden_state = self_srn(inputs)
torch_outputs, torch_hidden_state = torch_srn(inputs)

print("self_srn hidden_state: ", self_hidden_state.shape)
print("torch_srn outputs:", torch_outputs.shape)
print("torch_srn hidden_state:", torch_hidden_state.shape)

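In this experiment we first define the input data `inputs`, feed it to both PyTorch's built-in SRN and our own SRN, and then compare the hidden-state vectors the two produce.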

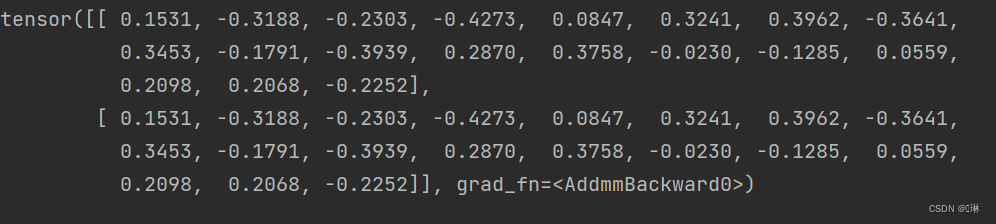

batch_size, seq_len, input_size, hidden_size = 2, 5, 10, 10
inputs = torch.randn(size=[batch_size, seq_len, input_size])

# Note: despite its name, paddle_srn is PyTorch's built-in nn.RNN.
# batch_first=True makes it read inputs as batch_size x seq_len x input_size, like SRN.
paddle_srn = nn.RNN(input_size, hidden_size, batch_first=True)

# Take the parameters of paddle_srn and use them to initialize SRN.
# nn.RNN stores its weights as hidden_size x input_size, hence the transpose.
W_attr = paddle_srn.weight_ih_l0.detach().T
U_attr = paddle_srn.weight_hh_l0.detach().T
# nn.RNN adds two bias vectors (bias_ih_l0 and bias_hh_l0); SRN has a single b, so use their sum
b_attr = (paddle_srn.bias_ih_l0 + paddle_srn.bias_hh_l0).detach()

self_srn = SRN(input_size, hidden_size, W_attr=W_attr, U_attr=U_attr, b_attr=b_attr)

# Run the forward pass, get the hidden-state vectors, and print them
self_hidden_state = self_srn(inputs)
paddle_outputs, paddle_hidden_state = paddle_srn(inputs)

print("torch SRN:\n", paddle_hidden_state.detach().numpy().squeeze(0))
print("self SRN:\n", self_hidden_state.detach().numpy())

Next, compare the two implementations in terms of running speed.

import time

# Create a random tensor as test data, with shape batch_size x seq_len x input_size
batch_size, seq_len, input_size, hidden_size = 2, 5, 10, 10
inputs = torch.randn(size=[batch_size, seq_len, input_size])

# Instantiate the models
self_srn = SRN(input_size, hidden_size)
torch_srn = nn.RNN(input_size, hidden_size, batch_first=True)

# Measure the speed of our own SRN
model_time = 0
for i in range(100):
    start_time = time.time()
    out = self_srn(inputs)
    # The first 10 runs are warm-up and are not counted
    if i < 10:
        continue
    end_time = time.time()
    model_time += (end_time - start_time)
avg_model_time = model_time / 90
print('self_srn speed:', avg_model_time, 's')

# Measure the speed of PyTorch's built-in SRN (nn.RNN)
model_time = 0
for i in range(100):
    start_time = time.time()
    out = torch_srn(inputs)
    # The first 10 runs are warm-up and are not counted
    if i < 10:
        continue
    end_time = time.time()
    model_time += (end_time - start_time)
avg_model_time = model_time / 90
print('torch_srn speed:', avg_model_time, 's')
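The two timing loops follow the same pattern: run the call a fixed number of times, discard the warm-up runs, and average the rest. A possible way to factor that out is sketched below. The helper name `average_runtime` is hypothetical, and `time.perf_counter` is used here instead of `time.time` because it is a monotonic, high-resolution clock intended for measuring short intervals.

```python
import time


def average_runtime(fn, total_runs=100, warmup_runs=10):
    """Call fn() total_runs times and return the mean wall-clock time
    of the runs that come after the warm-up phase."""
    elapsed = 0.0
    for i in range(total_runs):
        start = time.perf_counter()
        fn()
        end = time.perf_counter()
        if i >= warmup_runs:
            elapsed += end - start
    return elapsed / (total_runs - warmup_runs)


# Stand-in workload; in the experiment this would be lambda: self_srn(inputs)
calls = []
avg = average_runtime(lambda: calls.append(sum(range(1000))))
print("average seconds per call:", avg)
```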

3. Assembling the full model

class Model_RNN4SeqClass(nn.Module):
    def __init__(self, model, num_digits, input_size, hidden_size, num_classes):
        super(Model_RNN4SeqClass, self).__init__()
        # An already instantiated RNN layer, e.g. SRN
        self.rnn_model = model
        # Vocabulary size
        self.num_digits = num_digits
        # Dimension of the embedding vectors
        self.input_size = input_size
        # Embedding layer
        self.embedding = Embedding(num_digits, input_size)
        # Linear output layer
        self.linear = nn.Linear(hidden_size, num_classes)

    def forward(self, inputs):
        # Map the digit sequence to embedding vectors
        inputs_emb = self.embedding(inputs)
        # Run the RNN model
        hidden_state = self.rnn_model(inputs_emb)
        # Predict the sum from the hidden state of the last time step
        logits = self.linear(hidden_state)
        return logits


# Instantiate an SRN with input_size 4 and hidden_size 5
srn = SRN(4, 5)
# Build a digit-sum prediction model on top of srn
model = Model_RNN4SeqClass(srn, 10, 4, 5, 19)
# Create a batch of data with shape 2 x 3
inputs = torch.tensor([[1, 2, 3], [2, 3, 4]])
# Run the forward pass
logits = model(inputs)
print(logits)
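The value 19 passed as `num_classes` follows from the task: the label is the sum of two digits in 0..9, so it ranges over 0..18. A short sketch that enumerates the labels of the 100 base examples and counts how often each one occurs (the variable names are chosen for illustration):

```python
from collections import Counter

# Label of every base example (n1, n2) with n1, n2 in 0..9
label_counts = Counter(n1 + n2 for n1 in range(10) for n2 in range(10))

num_classes = len(label_counts)
print("num_classes:", num_classes)
print("rarest labels:", [s for s, c in label_counts.items() if c == 1])
print("most common label:", label_counts.most_common(1)[0])
```

The counts also show that the classes are not balanced: sums near 9 come from many digit pairs, while 0 and 18 each come from only one.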

III. Training the model

1. Training a digit-sum prediction model for a given sequence length

import os

import numpy as np
import torch
import torch.nn as nn
from torch.utils.data import DataLoader

from nndl.metric import Accuracy
from nndl.runner import RunnerV3

# Number of training epochs
num_epochs = 500
# Learning rate
lr = 0.001
# Number of distinct input digits
num_digits = 10
# Dimension of the vectors the digits are mapped to
input_size = 32
# Dimension of the hidden state
hidden_size = 32
# Number of classes to predict
num_classes = 19
# Batch size
batch_size = 8
# Directory for saved models
save_dir = "./checkpoints"


# Run the experiment on data of a given length
def train(length):
    print(f"\n====> Training SRN with data of length {length}.")
    # Load the data of this length
    data_path = f"./datasets/{length}"
    train_examples, dev_examples, test_examples = load_data(data_path)
    train_set = DigitSumDataset(train_examples)
    dev_set = DigitSumDataset(dev_examples)
    test_set = DigitSumDataset(test_examples)
    train_loader = DataLoader(train_set, batch_size=batch_size)
    dev_loader = DataLoader(dev_set, batch_size=batch_size)
    test_loader = DataLoader(test_set, batch_size=batch_size)
    # Instantiate the model
    base_model = SRN(input_size, hidden_size)
    model = Model_RNN4SeqClass(base_model, num_digits, input_size, hidden_size, num_classes)
    # Optimizer
    optimizer = torch.optim.Adam(lr=lr, params=model.parameters())
    # Evaluation metric
    metric = Accuracy()
    # Loss function
    loss_fn = nn.CrossEntropyLoss()

    # Build the Runner from the components above
    runner = RunnerV3(model, optimizer, loss_fn, metric)

    # Train the model
    model_save_path = os.path.join(save_dir, f"best_srn_model_{length}.pdparams")
    runner.train(train_loader, dev_loader, num_epochs=num_epochs, eval_steps=100, log_steps=100,
                 save_path=model_save_path)

    return runner

2. Training on several sequence lengths

srn_runners = {}

lengths = [10, 15, 20, 25, 30, 35]
for length in lengths:
    runner = train(length)
    srn_runners[length] = runner

====> Training SRN with data of length 30.

[Train] epoch: 0/500, step: 0/19000, loss: 2.89140

[Train] epoch: 2/500, step: 100/19000, loss: 2.63353

[Evaluate] dev score: 0.09000, dev loss: 2.85201

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.09000

[Train] epoch: 5/500, step: 200/19000, loss: 2.48649

[Evaluate] dev score: 0.10000, dev loss: 2.83415

[Evaluate] best accuracy performence has been updated: 0.09000 --> 0.10000

[Train] epoch: 7/500, step: 300/19000, loss: 2.47176

[Evaluate] dev score: 0.10000, dev loss: 2.82944

[Train] epoch: 10/500, step: 400/19000, loss: 2.41105

[Evaluate] dev score: 0.10000, dev loss: 2.82837

[Train] epoch: 13/500, step: 500/19000, loss: 2.46485

[Evaluate] dev score: 0.10000, dev loss: 2.82729

[Train] epoch: 15/500, step: 600/19000, loss: 2.36811

[Evaluate] dev score: 0.10000, dev loss: 2.82870

[Train] epoch: 18/500, step: 700/19000, loss: 2.51080

[Evaluate] dev score: 0.10000, dev loss: 2.82835

[Train] epoch: 21/500, step: 800/19000, loss: 2.68259

[Evaluate] dev score: 0.10000, dev loss: 2.82834

[Train] epoch: 23/500, step: 900/19000, loss: 2.64754

[Evaluate] dev score: 0.10000, dev loss: 2.82999

[Train] epoch: 26/500, step: 1000/19000, loss: 2.71052

[Evaluate] dev score: 0.10000, dev loss: 2.82973

[Train] epoch: 28/500, step: 1100/19000, loss: 3.46239

[Evaluate] dev score: 0.10000, dev loss: 2.83074

[Train] epoch: 31/500, step: 1200/19000, loss: 2.73092

[Evaluate] dev score: 0.11000, dev loss: 2.82986

[Evaluate] best accuracy performence has been updated: 0.10000 --> 0.11000

[Train] epoch: 34/500, step: 1300/19000, loss: 3.01139

[Evaluate] dev score: 0.11000, dev loss: 2.82793

[Train] epoch: 36/500, step: 1400/19000, loss: 2.95779

[Evaluate] dev score: 0.10000, dev loss: 2.82772

[Train] epoch: 39/500, step: 1500/19000, loss: 2.62417

[Evaluate] dev score: 0.12000, dev loss: 2.82301

[Evaluate] best accuracy performence has been updated: 0.11000 --> 0.12000

[Train] epoch: 42/500, step: 1600/19000, loss: 3.08947

[Evaluate] dev score: 0.11000, dev loss: 2.84262

[Train] epoch: 44/500, step: 1700/19000, loss: 2.38599

[Evaluate] dev score: 0.14000, dev loss: 2.82714

[Evaluate] best accuracy performence has been updated: 0.12000 --> 0.14000

[Train] epoch: 47/500, step: 1800/19000, loss: 2.54690

[Evaluate] dev score: 0.13000, dev loss: 2.82604

[Train] epoch: 50/500, step: 1900/19000, loss: 4.08309

[Evaluate] dev score: 0.12000, dev loss: 2.83105

[Train] epoch: 52/500, step: 2000/19000, loss: 2.61723

[Evaluate] dev score: 0.13000, dev loss: 2.83677

[Train] epoch: 55/500, step: 2100/19000, loss: 2.29881

[Evaluate] dev score: 0.12000, dev loss: 2.83485

[Train] epoch: 57/500, step: 2200/19000, loss: 2.29451

[Evaluate] dev score: 0.17000, dev loss: 2.83361

[Evaluate] best accuracy performence has been updated: 0.14000 --> 0.17000

[Train] epoch: 60/500, step: 2300/19000, loss: 2.06859

[Evaluate] dev score: 0.09000, dev loss: 2.85622

[Train] epoch: 63/500, step: 2400/19000, loss: 2.28708

[Evaluate] dev score: 0.10000, dev loss: 2.84263

[Train] epoch: 65/500, step: 2500/19000, loss: 2.07383

[Evaluate] dev score: 0.14000, dev loss: 2.86084

[Train] epoch: 68/500, step: 2600/19000, loss: 2.36320

[Evaluate] dev score: 0.11000, dev loss: 2.85338

[Train] epoch: 71/500, step: 2700/19000, loss: 2.66168

[Evaluate] dev score: 0.11000, dev loss: 2.86976

[Train] epoch: 73/500, step: 2800/19000, loss: 2.57861

[Evaluate] dev score: 0.11000, dev loss: 2.88931

[Train] epoch: 76/500, step: 2900/19000, loss: 2.62486

[Evaluate] dev score: 0.09000, dev loss: 2.87552

[Train] epoch: 78/500, step: 3000/19000, loss: 3.53559

[Evaluate] dev score: 0.11000, dev loss: 2.91478

[Train] epoch: 81/500, step: 3100/19000, loss: 2.45304

[Evaluate] dev score: 0.10000, dev loss: 2.91659

[Train] epoch: 84/500, step: 3200/19000, loss: 2.68421

[Evaluate] dev score: 0.09000, dev loss: 2.90763

[Train] epoch: 86/500, step: 3300/19000, loss: 2.54561

[Evaluate] dev score: 0.10000, dev loss: 2.95961

[Train] epoch: 89/500, step: 3400/19000, loss: 2.45495

[Evaluate] dev score: 0.10000, dev loss: 2.92800

[Train] epoch: 92/500, step: 3500/19000, loss: 2.92510

[Evaluate] dev score: 0.10000, dev loss: 2.93219

[Train] epoch: 94/500, step: 3600/19000, loss: 2.01391

[Evaluate] dev score: 0.11000, dev loss: 2.98736

[Train] epoch: 97/500, step: 3700/19000, loss: 2.69291

[Evaluate] dev score: 0.11000, dev loss: 2.94216

[Train] epoch: 100/500, step: 3800/19000, loss: 3.84634

[Evaluate] dev score: 0.11000, dev loss: 2.96470

[Train] epoch: 102/500, step: 3900/19000, loss: 2.21165

[Evaluate] dev score: 0.10000, dev loss: 2.96394

[Train] epoch: 105/500, step: 4000/19000, loss: 2.28277

[Evaluate] dev score: 0.13000, dev loss: 2.97002

[Train] epoch: 107/500, step: 4100/19000, loss: 2.31536

[Evaluate] dev score: 0.08000, dev loss: 2.94893

[Train] epoch: 110/500, step: 4200/19000, loss: 2.18310

[Evaluate] dev score: 0.10000, dev loss: 3.09379

[Train] epoch: 113/500, step: 4300/19000, loss: 2.07568

[Evaluate] dev score: 0.14000, dev loss: 2.88856

[Train] epoch: 115/500, step: 4400/19000, loss: 1.92610

[Evaluate] dev score: 0.08000, dev loss: 2.94792

[Train] epoch: 118/500, step: 4500/19000, loss: 1.95354

[Evaluate] dev score: 0.08000, dev loss: 3.01889

[Train] epoch: 121/500, step: 4600/19000, loss: 2.72078

[Evaluate] dev score: 0.10000, dev loss: 3.04175

[Train] epoch: 123/500, step: 4700/19000, loss: 2.47021

[Evaluate] dev score: 0.12000, dev loss: 2.91381

[Train] epoch: 126/500, step: 4800/19000, loss: 2.22428

[Evaluate] dev score: 0.08000, dev loss: 2.88290

[Train] epoch: 128/500, step: 4900/19000, loss: 3.48772

[Evaluate] dev score: 0.12000, dev loss: 2.91239

[Train] epoch: 131/500, step: 5000/19000, loss: 2.58595

[Evaluate] dev score: 0.12000, dev loss: 2.84817

[Train] epoch: 134/500, step: 5100/19000, loss: 1.98229

[Evaluate] dev score: 0.11000, dev loss: 2.87803

[Train] epoch: 136/500, step: 5200/19000, loss: 2.34322

[Evaluate] dev score: 0.09000, dev loss: 3.04079

[Train] epoch: 139/500, step: 5300/19000, loss: 2.20506

[Evaluate] dev score: 0.12000, dev loss: 2.75214

[Train] epoch: 142/500, step: 5400/19000, loss: 2.46105

[Evaluate] dev score: 0.14000, dev loss: 2.80240

[Train] epoch: 144/500, step: 5500/19000, loss: 1.70380

[Evaluate] dev score: 0.11000, dev loss: 2.84567

[Train] epoch: 147/500, step: 5600/19000, loss: 2.40838

[Evaluate] dev score: 0.13000, dev loss: 2.89123

[Train] epoch: 150/500, step: 5700/19000, loss: 3.55996

[Evaluate] dev score: 0.18000, dev loss: 2.74900

[Evaluate] best accuracy performence has been updated: 0.17000 --> 0.18000

[Train] epoch: 152/500, step: 5800/19000, loss: 1.94366

[Evaluate] dev score: 0.11000, dev loss: 2.77694

[Train] epoch: 155/500, step: 5900/19000, loss: 2.16266

[Evaluate] dev score: 0.13000, dev loss: 2.87541

[Train] epoch: 157/500, step: 6000/19000, loss: 1.96344

[Evaluate] dev score: 0.13000, dev loss: 2.67051

[Train] epoch: 160/500, step: 6100/19000, loss: 2.15139

[Evaluate] dev score: 0.12000, dev loss: 2.77823

[Train] epoch: 163/500, step: 6200/19000, loss: 2.05669

[Evaluate] dev score: 0.14000, dev loss: 2.64928

[Train] epoch: 165/500, step: 6300/19000, loss: 1.87417

[Evaluate] dev score: 0.17000, dev loss: 2.61312

[Train] epoch: 168/500, step: 6400/19000, loss: 1.80870

[Evaluate] dev score: 0.17000, dev loss: 2.55827

[Train] epoch: 171/500, step: 6500/19000, loss: 2.06816

[Evaluate] dev score: 0.11000, dev loss: 2.55843

[Train] epoch: 173/500, step: 6600/19000, loss: 2.12630

[Evaluate] dev score: 0.08000, dev loss: 3.16377

[Train] epoch: 176/500, step: 6700/19000, loss: 1.99488

[Evaluate] dev score: 0.18000, dev loss: 2.55298

[Train] epoch: 178/500, step: 6800/19000, loss: 2.40557

[Evaluate] dev score: 0.15000, dev loss: 2.51934

[Train] epoch: 181/500, step: 6900/19000, loss: 2.06669

[Evaluate] dev score: 0.18000, dev loss: 2.37826

[Train] epoch: 184/500, step: 7000/19000, loss: 1.43263

[Evaluate] dev score: 0.16000, dev loss: 2.35295

[Train] epoch: 186/500, step: 7100/19000, loss: 1.58488

[Evaluate] dev score: 0.17000, dev loss: 2.47752

[Train] epoch: 189/500, step: 7200/19000, loss: 1.52979

[Evaluate] dev score: 0.17000, dev loss: 2.30765

[Train] epoch: 192/500, step: 7300/19000, loss: 1.70468

[Evaluate] dev score: 0.19000, dev loss: 2.32297

[Evaluate] best accuracy performence has been updated: 0.18000 --> 0.19000

[Train] epoch: 194/500, step: 7400/19000, loss: 1.76281

[Evaluate] dev score: 0.14000, dev loss: 2.53191

[Train] epoch: 197/500, step: 7500/19000, loss: 2.09790

[Evaluate] dev score: 0.23000, dev loss: 2.25974

[Evaluate] best accuracy performence has been updated: 0.19000 --> 0.23000

[Train] epoch: 200/500, step: 7600/19000, loss: 2.66120

[Evaluate] dev score: 0.15000, dev loss: 2.38057

[Train] epoch: 202/500, step: 7700/19000, loss: 1.99124

[Evaluate] dev score: 0.14000, dev loss: 2.53635

[Train] epoch: 205/500, step: 7800/19000, loss: 1.79203

[Evaluate] dev score: 0.20000, dev loss: 2.23683

[Train] epoch: 207/500, step: 7900/19000, loss: 1.50086

[Evaluate] dev score: 0.17000, dev loss: 2.35432

[Train] epoch: 210/500, step: 8000/19000, loss: 1.80534

[Evaluate] dev score: 0.21000, dev loss: 2.25928

[Train] epoch: 213/500, step: 8100/19000, loss: 1.54931

[Evaluate] dev score: 0.16000, dev loss: 2.33652

[Train] epoch: 215/500, step: 8200/19000, loss: 1.46044

[Evaluate] dev score: 0.16000, dev loss: 2.37513

[Train] epoch: 218/500, step: 8300/19000, loss: 1.36323

[Evaluate] dev score: 0.20000, dev loss: 2.41325

[Train] epoch: 221/500, step: 8400/19000, loss: 1.77651

[Evaluate] dev score: 0.21000, dev loss: 2.18722

[Train] epoch: 223/500, step: 8500/19000, loss: 1.58329

[Evaluate] dev score: 0.23000, dev loss: 2.16207

[Train] epoch: 226/500, step: 8600/19000, loss: 1.33013

[Evaluate] dev score: 0.20000, dev loss: 2.05071

[Train] epoch: 228/500, step: 8700/19000, loss: 1.80897

[Evaluate] dev score: 0.23000, dev loss: 2.15144

[Train] epoch: 231/500, step: 8800/19000, loss: 1.51673

[Evaluate] dev score: 0.28000, dev loss: 2.07783

[Evaluate] best accuracy performence has been updated: 0.23000 --> 0.28000

[Train] epoch: 234/500, step: 8900/19000, loss: 1.33192

[Evaluate] dev score: 0.23000, dev loss: 2.12573

[Train] epoch: 236/500, step: 9000/19000, loss: 1.11562

[Evaluate] dev score: 0.20000, dev loss: 2.36624

[Train] epoch: 239/500, step: 9100/19000, loss: 1.60401

[Evaluate] dev score: 0.19000, dev loss: 2.16173

[Train] epoch: 242/500, step: 9200/19000, loss: 1.20686

[Evaluate] dev score: 0.22000, dev loss: 2.22325

[Train] epoch: 244/500, step: 9300/19000, loss: 1.55472

[Evaluate] dev score: 0.28000, dev loss: 2.08223

[Train] epoch: 247/500, step: 9400/19000, loss: 1.33528

[Evaluate] dev score: 0.23000, dev loss: 2.29607

[Train] epoch: 250/500, step: 9500/19000, loss: 2.20383

[Evaluate] dev score: 0.24000, dev loss: 2.09128

[Train] epoch: 252/500, step: 9600/19000, loss: 1.47973

[Evaluate] dev score: 0.24000, dev loss: 2.10758

[Train] epoch: 255/500, step: 9700/19000, loss: 1.06997

[Evaluate] dev score: 0.17000, dev loss: 2.07322

[Train] epoch: 257/500, step: 9800/19000, loss: 1.12165

[Evaluate] dev score: 0.27000, dev loss: 2.03426

[Train] epoch: 260/500, step: 9900/19000, loss: 1.70481

[Evaluate] dev score: 0.24000, dev loss: 2.49782

[Train] epoch: 263/500, step: 10000/19000, loss: 1.23637

[Evaluate] dev score: 0.23000, dev loss: 2.22058

[Train] epoch: 265/500, step: 10100/19000, loss: 1.24709

[Evaluate] dev score: 0.23000, dev loss: 1.93977

[Train] epoch: 268/500, step: 10200/19000, loss: 1.10016

[Evaluate] dev score: 0.25000, dev loss: 1.95701

[Train] epoch: 271/500, step: 10300/19000, loss: 1.01631

[Evaluate] dev score: 0.23000, dev loss: 2.00700

[Train] epoch: 273/500, step: 10400/19000, loss: 1.31807

[Evaluate] dev score: 0.33000, dev loss: 1.93509

[Evaluate] best accuracy performence has been updated: 0.28000 --> 0.33000

[Train] epoch: 276/500, step: 10500/19000, loss: 1.10099

[Evaluate] dev score: 0.25000, dev loss: 1.95030

[Train] epoch: 278/500, step: 10600/19000, loss: 1.42275

[Evaluate] dev score: 0.28000, dev loss: 1.88012

[Train] epoch: 281/500, step: 10700/19000, loss: 1.24542

[Evaluate] dev score: 0.32000, dev loss: 1.92783

[Train] epoch: 284/500, step: 10800/19000, loss: 1.14302

[Evaluate] dev score: 0.29000, dev loss: 1.96246

[Train] epoch: 286/500, step: 10900/19000, loss: 1.00825

[Evaluate] dev score: 0.25000, dev loss: 1.89334

[Train] epoch: 289/500, step: 11000/19000, loss: 1.88732

[Evaluate] dev score: 0.16000, dev loss: 2.54321

[Train] epoch: 292/500, step: 11100/19000, loss: 2.29179

[Evaluate] dev score: 0.16000, dev loss: 2.46154

[Train] epoch: 294/500, step: 11200/19000, loss: 1.41155

[Evaluate] dev score: 0.27000, dev loss: 2.16739

[Train] epoch: 297/500, step: 11300/19000, loss: 1.37701

[Evaluate] dev score: 0.26000, dev loss: 1.90036

[Train] epoch: 300/500, step: 11400/19000, loss: 1.37975

[Evaluate] dev score: 0.36000, dev loss: 1.79521

[Evaluate] best accuracy performence has been updated: 0.33000 --> 0.36000

[Train] epoch: 302/500, step: 11500/19000, loss: 1.06576

[Evaluate] dev score: 0.35000, dev loss: 1.79986

[Train] epoch: 305/500, step: 11600/19000, loss: 0.85137

[Evaluate] dev score: 0.18000, dev loss: 2.09030

[Train] epoch: 307/500, step: 11700/19000, loss: 1.47024

[Evaluate] dev score: 0.26000, dev loss: 1.99050

[Train] epoch: 310/500, step: 11800/19000, loss: 1.13850

[Evaluate] dev score: 0.35000, dev loss: 1.92296

[Train] epoch: 313/500, step: 11900/19000, loss: 0.83939

[Evaluate] dev score: 0.28000, dev loss: 1.96564

[Train] epoch: 315/500, step: 12000/19000, loss: 1.06862

[Evaluate] dev score: 0.36000, dev loss: 2.02571

[Train] epoch: 318/500, step: 12100/19000, loss: 0.49293

[Evaluate] dev score: 0.35000, dev loss: 1.81809

[Train] epoch: 321/500, step: 12200/19000, loss: 0.71738

[Evaluate] dev score: 0.32000, dev loss: 1.90492

[Train] epoch: 323/500, step: 12300/19000, loss: 1.13852

[Evaluate] dev score: 0.32000, dev loss: 1.85574

[Train] epoch: 326/500, step: 12400/19000, loss: 0.98937

[Evaluate] dev score: 0.34000, dev loss: 1.81281

[Train] epoch: 328/500, step: 12500/19000, loss: 2.65968

[Evaluate] dev score: 0.26000, dev loss: 2.03084

[Train] epoch: 331/500, step: 12600/19000, loss: 0.98626

[Evaluate] dev score: 0.29000, dev loss: 2.03959

[Train] epoch: 334/500, step: 12700/19000, loss: 0.84995

[Evaluate] dev score: 0.27000, dev loss: 2.27001

[Train] epoch: 336/500, step: 12800/19000, loss: 0.84074

[Evaluate] dev score: 0.29000, dev loss: 2.01113

[Train] epoch: 339/500, step: 12900/19000, loss: 1.55925

[Evaluate] dev score: 0.21000, dev loss: 2.67087

[Train] epoch: 342/500, step: 13000/19000, loss: 1.23020

[Evaluate] dev score: 0.23000, dev loss: 2.22787

[Train] epoch: 344/500, step: 13100/19000, loss: 1.30065

[Evaluate] dev score: 0.27000, dev loss: 1.95521

[Train] epoch: 347/500, step: 13200/19000, loss: 1.02801

[Evaluate] dev score: 0.37000, dev loss: 1.73946

[Evaluate] best accuracy performence has been updated: 0.36000 --> 0.37000

[Train] epoch: 350/500, step: 13300/19000, loss: 1.45356

[Evaluate] dev score: 0.38000, dev loss: 1.81108

[Evaluate] best accuracy performence has been updated: 0.37000 --> 0.38000

[Train] epoch: 352/500, step: 13400/19000, loss: 1.02183

[Evaluate] dev score: 0.38000, dev loss: 1.76576

[Train] epoch: 355/500, step: 13500/19000, loss: 0.72209

[Evaluate] dev score: 0.30000, dev loss: 1.89568

[Train] epoch: 357/500, step: 13600/19000, loss: 0.96432

[Evaluate] dev score: 0.32000, dev loss: 1.88867

[Train] epoch: 360/500, step: 13700/19000, loss: 0.61385

[Evaluate] dev score: 0.35000, dev loss: 1.87466

[Train] epoch: 363/500, step: 13800/19000, loss: 0.83263

[Evaluate] dev score: 0.35000, dev loss: 1.93427

[Train] epoch: 365/500, step: 13900/19000, loss: 0.94496

[Evaluate] dev score: 0.41000, dev loss: 1.71097

[Evaluate] best accuracy performence has been updated: 0.38000 --> 0.41000

[Train] epoch: 368/500, step: 14000/19000, loss: 0.40011

[Evaluate] dev score: 0.41000, dev loss: 1.78513

[Train] epoch: 371/500, step: 14100/19000, loss: 0.56881

[Evaluate] dev score: 0.35000, dev loss: 1.86246

[Train] epoch: 373/500, step: 14200/19000, loss: 0.86142

[Evaluate] dev score: 0.36000, dev loss: 1.87656

[Train] epoch: 376/500, step: 14300/19000, loss: 0.72850

[Evaluate] dev score: 0.43000, dev loss: 1.68163

[Evaluate] best accuracy performence has been updated: 0.41000 --> 0.43000

[Train] epoch: 378/500, step: 14400/19000, loss: 1.07902

[Evaluate] dev score: 0.44000, dev loss: 1.82957

[Evaluate] best accuracy performence has been updated: 0.43000 --> 0.44000

[Train] epoch: 381/500, step: 14500/19000, loss: 0.74099

[Evaluate] dev score: 0.41000, dev loss: 1.71232

[Train] epoch: 384/500, step: 14600/19000, loss: 0.99168

[Evaluate] dev score: 0.39000, dev loss: 1.80167

[Train] epoch: 386/500, step: 14700/19000, loss: 0.77459

[Evaluate] dev score: 0.41000, dev loss: 1.81092

[Train] epoch: 389/500, step: 14800/19000, loss: 0.73715

[Evaluate] dev score: 0.35000, dev loss: 1.94274

[Train] epoch: 392/500, step: 14900/19000, loss: 2.01447

[Evaluate] dev score: 0.19000, dev loss: 2.59065

[Train] epoch: 394/500, step: 15000/19000, loss: 1.15893

[Evaluate] dev score: 0.39000, dev loss: 1.82417

[Train] epoch: 397/500, step: 15100/19000, loss: 1.28561

[Evaluate] dev score: 0.38000, dev loss: 1.86641

[Train] epoch: 400/500, step: 15200/19000, loss: 1.94360

[Evaluate] dev score: 0.34000, dev loss: 2.03064

[Train] epoch: 402/500, step: 15300/19000, loss: 0.77416

[Evaluate] dev score: 0.41000, dev loss: 1.78454

[Train] epoch: 405/500, step: 15400/19000, loss: 0.54969

[Evaluate] dev score: 0.36000, dev loss: 1.89881

[Train] epoch: 407/500, step: 15500/19000, loss: 0.70231

[Evaluate] dev score: 0.38000, dev loss: 1.83338

[Train] epoch: 410/500, step: 15600/19000, loss: 0.94526

[Evaluate] dev score: 0.40000, dev loss: 1.90280

[Train] epoch: 413/500, step: 15700/19000, loss: 0.54051

[Evaluate] dev score: 0.39000, dev loss: 1.95081

[Train] epoch: 415/500, step: 15800/19000, loss: 0.42230

[Evaluate] dev score: 0.38000, dev loss: 2.07481

[Train] epoch: 418/500, step: 15900/19000, loss: 0.37952

[Evaluate] dev score: 0.41000, dev loss: 1.84083

[Train] epoch: 421/500, step: 16000/19000, loss: 0.55044

[Evaluate] dev score: 0.33000, dev loss: 2.18017

[Train] epoch: 423/500, step: 16100/19000, loss: 0.33902

[Evaluate] dev score: 0.33000, dev loss: 2.17286

[Train] epoch: 426/500, step: 16200/19000, loss: 1.16409

[Evaluate] dev score: 0.33000, dev loss: 1.91024

[Train] epoch: 428/500, step: 16300/19000, loss: 1.20286

[Evaluate] dev score: 0.31000, dev loss: 2.25520

[Train] epoch: 431/500, step: 16400/19000, loss: 0.88689

[Evaluate] dev score: 0.31000, dev loss: 2.08541

[Train] epoch: 434/500, step: 16500/19000, loss: 0.48423

[Evaluate] dev score: 0.36000, dev loss: 2.01878

[Train] epoch: 436/500, step: 16600/19000, loss: 0.60877

[Evaluate] dev score: 0.31000, dev loss: 2.16242

[Train] epoch: 439/500, step: 16700/19000, loss: 0.68841

[Evaluate] dev score: 0.35000, dev loss: 2.03878

[Train] epoch: 442/500, step: 16800/19000, loss: 2.38079

[Evaluate] dev score: 0.30000, dev loss: 2.25089

[Train] epoch: 444/500, step: 16900/19000, loss: 1.02631

[Evaluate] dev score: 0.38000, dev loss: 2.03126

[Train] epoch: 447/500, step: 17000/19000, loss: 0.56591

[Evaluate] dev score: 0.39000, dev loss: 1.93640

[Train] epoch: 450/500, step: 17100/19000, loss: 1.17256

[Evaluate] dev score: 0.40000, dev loss: 1.92226

[Train] epoch: 452/500, step: 17200/19000, loss: 0.97210

[Evaluate] dev score: 0.37000, dev loss: 1.94448

[Train] epoch: 455/500, step: 17300/19000, loss: 0.51179

[Evaluate] dev score: 0.44000, dev loss: 1.81071

[Train] epoch: 457/500, step: 17400/19000, loss: 0.65707

[Evaluate] dev score: 0.40000, dev loss: 1.97037

[Train] epoch: 460/500, step: 17500/19000, loss: 0.51341

[Evaluate] dev score: 0.36000, dev loss: 2.01482

[Train] epoch: 463/500, step: 17600/19000, loss: 0.49713

[Evaluate] dev score: 0.42000, dev loss: 1.93025

[Train] epoch: 465/500, step: 17700/19000, loss: 0.50930

[Evaluate] dev score: 0.48000, dev loss: 1.84325

[Evaluate] best accuracy performence has been updated: 0.44000 --> 0.48000

[Train] epoch: 468/500, step: 17800/19000, loss: 0.10676

[Evaluate] dev score: 0.46000, dev loss: 1.74837

[Train] epoch: 471/500, step: 17900/19000, loss: 0.40460

[Evaluate] dev score: 0.46000, dev loss: 1.82788

[Train] epoch: 473/500, step: 18000/19000, loss: 0.33236

[Evaluate] dev score: 0.43000, dev loss: 1.84958

[Train] epoch: 476/500, step: 18100/19000, loss: 0.23765

[Evaluate] dev score: 0.39000, dev loss: 2.14286

[Train] epoch: 478/500, step: 18200/19000, loss: 1.04484

[Evaluate] dev score: 0.38000, dev loss: 2.11414

[Train] epoch: 481/500, step: 18300/19000, loss: 0.56013

[Evaluate] dev score: 0.45000, dev loss: 1.81655

[Train] epoch: 484/500, step: 18400/19000, loss: 0.54174

[Evaluate] dev score: 0.47000, dev loss: 1.89915

[Train] epoch: 486/500, step: 18500/19000, loss: 0.59791

[Evaluate] dev score: 0.45000, dev loss: 2.01580

[Train] epoch: 489/500, step: 18600/19000, loss: 1.05052

[Evaluate] dev score: 0.41000, dev loss: 2.10556

[Train] epoch: 492/500, step: 18700/19000, loss: 0.40325

[Evaluate] dev score: 0.38000, dev loss: 2.14820

[Train] epoch: 494/500, step: 18800/19000, loss: 0.72360

[Evaluate] dev score: 0.51000, dev loss: 1.89030

[Evaluate] best accuracy performence has been updated: 0.48000 --> 0.51000

[Train] epoch: 497/500, step: 18900/19000, loss: 0.61484

[Evaluate] dev score: 0.37000, dev loss: 2.12140

[Evaluate] dev score: 0.40000, dev loss: 2.14802

[Train] Training done!

====> Training SRN with data of length 35.

[Train] epoch: 0/500, step: 0/19000, loss: 3.00431

[Train] epoch: 2/500, step: 100/19000, loss: 2.81409

[Evaluate] dev score: 0.10000, dev loss: 2.86038

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.10000

[Train] epoch: 5/500, step: 200/19000, loss: 2.48829

[Evaluate] dev score: 0.10000, dev loss: 2.83834

[Train] epoch: 7/500, step: 300/19000, loss: 2.50157

[Evaluate] dev score: 0.10000, dev loss: 2.83114

[Train] epoch: 10/500, step: 400/19000, loss: 2.42964

[Evaluate] dev score: 0.10000, dev loss: 2.82958

[Train] epoch: 13/500, step: 500/19000, loss: 2.45548

[Evaluate] dev score: 0.10000, dev loss: 2.82845

[Train] epoch: 15/500, step: 600/19000, loss: 2.37207

[Evaluate] dev score: 0.09000, dev loss: 2.82992

[Train] epoch: 18/500, step: 700/19000, loss: 2.51337

[Evaluate] dev score: 0.09000, dev loss: 2.82961

[Train] epoch: 21/500, step: 800/19000, loss: 2.69406

[Evaluate] dev score: 0.09000, dev loss: 2.83124

[Train] epoch: 23/500, step: 900/19000, loss: 2.64306

[Evaluate] dev score: 0.09000, dev loss: 2.83631

[Train] epoch: 26/500, step: 1000/19000, loss: 2.65695

[Evaluate] dev score: 0.08000, dev loss: 2.86136

[Train] epoch: 28/500, step: 1100/19000, loss: 3.27639

[Evaluate] dev score: 0.07000, dev loss: 2.88220

[Train] epoch: 31/500, step: 1200/19000, loss: 2.79157

[Evaluate] dev score: 0.08000, dev loss: 2.89781

[Train] epoch: 34/500, step: 1300/19000, loss: 3.04551

[Evaluate] dev score: 0.06000, dev loss: 2.93995

[Train] epoch: 36/500, step: 1400/19000, loss: 2.93477

[Evaluate] dev score: 0.08000, dev loss: 2.94270

[Train] epoch: 39/500, step: 1500/19000, loss: 2.77189

[Evaluate] dev score: 0.08000, dev loss: 2.96031

[Train] epoch: 42/500, step: 1600/19000, loss: 3.06350

[Evaluate] dev score: 0.05000, dev loss: 2.98653

[Train] epoch: 44/500, step: 1700/19000, loss: 2.72340

[Evaluate] dev score: 0.08000, dev loss: 2.97084

[Train] epoch: 47/500, step: 1800/19000, loss: 2.26065

[Evaluate] dev score: 0.08000, dev loss: 2.98128

[Train] epoch: 50/500, step: 1900/19000, loss: 3.51374

[Evaluate] dev score: 0.06000, dev loss: 3.05056

[Train] epoch: 52/500, step: 2000/19000, loss: 2.28991

[Evaluate] dev score: 0.09000, dev loss: 2.96934

[Train] epoch: 55/500, step: 2100/19000, loss: 2.21684

[Evaluate] dev score: 0.04000, dev loss: 3.05883

[Train] epoch: 57/500, step: 2200/19000, loss: 2.45989

[Evaluate] dev score: 0.09000, dev loss: 3.00637

[Train] epoch: 60/500, step: 2300/19000, loss: 2.22667

[Evaluate] dev score: 0.08000, dev loss: 3.03758

[Train] epoch: 63/500, step: 2400/19000, loss: 2.20506

[Evaluate] dev score: 0.04000, dev loss: 3.06743

[Train] epoch: 65/500, step: 2500/19000, loss: 2.32074

[Evaluate] dev score: 0.09000, dev loss: 3.04321

[Train] epoch: 68/500, step: 2600/19000, loss: 2.39051

[Evaluate] dev score: 0.08000, dev loss: 3.03114

[Train] epoch: 71/500, step: 2700/19000, loss: 2.44667

[Evaluate] dev score: 0.04000, dev loss: 3.09897

[Train] epoch: 73/500, step: 2800/19000, loss: 2.47564

[Evaluate] dev score: 0.08000, dev loss: 3.04176

[Train] epoch: 76/500, step: 2900/19000, loss: 2.47968

[Evaluate] dev score: 0.07000, dev loss: 3.09992

[Train] epoch: 78/500, step: 3000/19000, loss: 3.01808

[Evaluate] dev score: 0.06000, dev loss: 3.06690

[Train] epoch: 81/500, step: 3100/19000, loss: 2.60049

[Evaluate] dev score: 0.09000, dev loss: 3.06983

[Train] epoch: 84/500, step: 3200/19000, loss: 2.94721

[Evaluate] dev score: 0.03000, dev loss: 3.13936

[Train] epoch: 86/500, step: 3300/19000, loss: 2.56022

[Evaluate] dev score: 0.08000, dev loss: 3.08554

[Train] epoch: 89/500, step: 3400/19000, loss: 2.65393

[Evaluate] dev score: 0.07000, dev loss: 3.10632

[Train] epoch: 92/500, step: 3500/19000, loss: 3.06452

[Evaluate] dev score: 0.05000, dev loss: 3.11420

[Train] epoch: 94/500, step: 3600/19000, loss: 2.41711

[Evaluate] dev score: 0.05000, dev loss: 3.11503

[Train] epoch: 97/500, step: 3700/19000, loss: 1.93109

[Evaluate] dev score: 0.05000, dev loss: 3.14805

[Train] epoch: 100/500, step: 3800/19000, loss: 3.11701

[Evaluate] dev score: 0.05000, dev loss: 3.14282

[Train] epoch: 102/500, step: 3900/19000, loss: 2.41227

[Evaluate] dev score: 0.05000, dev loss: 3.13719

[Train] epoch: 105/500, step: 4000/19000, loss: 2.06184

[Evaluate] dev score: 0.04000, dev loss: 3.17650

[Train] epoch: 107/500, step: 4100/19000, loss: 2.33742

[Evaluate] dev score: 0.03000, dev loss: 3.16420

[Train] epoch: 110/500, step: 4200/19000, loss: 1.94291

[Evaluate] dev score: 0.03000, dev loss: 3.14704

[Train] epoch: 113/500, step: 4300/19000, loss: 1.99334

[Evaluate] dev score: 0.04000, dev loss: 3.18473

[Train] epoch: 115/500, step: 4400/19000, loss: 2.28772

[Evaluate] dev score: 0.04000, dev loss: 3.15456

[Train] epoch: 118/500, step: 4500/19000, loss: 2.22207

[Evaluate] dev score: 0.05000, dev loss: 3.16795

[Train] epoch: 121/500, step: 4600/19000, loss: 2.30175

[Evaluate] dev score: 0.03000, dev loss: 3.22080

[Train] epoch: 123/500, step: 4700/19000, loss: 2.50926

[Evaluate] dev score: 0.05000, dev loss: 3.16373

[Train] epoch: 126/500, step: 4800/19000, loss: 2.39664

[Evaluate] dev score: 0.02000, dev loss: 3.23268

[Train] epoch: 128/500, step: 4900/19000, loss: 2.99498

[Evaluate] dev score: 0.05000, dev loss: 3.20429

[Train] epoch: 131/500, step: 5000/19000, loss: 2.39326

[Evaluate] dev score: 0.02000, dev loss: 3.19307

[Train] epoch: 134/500, step: 5100/19000, loss: 2.84603

[Evaluate] dev score: 0.06000, dev loss: 3.22510

[Train] epoch: 136/500, step: 5200/19000, loss: 2.46238

[Evaluate] dev score: 0.10000, dev loss: 3.18917

[Train] epoch: 139/500, step: 5300/19000, loss: 2.56155

[Evaluate] dev score: 0.03000, dev loss: 3.22571

[Train] epoch: 142/500, step: 5400/19000, loss: 2.95418

[Evaluate] dev score: 0.06000, dev loss: 3.20950

[Train] epoch: 144/500, step: 5500/19000, loss: 2.27598

[Evaluate] dev score: 0.10000, dev loss: 3.20326

[Train] epoch: 147/500, step: 5600/19000, loss: 1.87571

[Evaluate] dev score: 0.04000, dev loss: 3.28482

[Train] epoch: 150/500, step: 5700/19000, loss: 3.15116

[Evaluate] dev score: 0.08000, dev loss: 3.20008

[Train] epoch: 152/500, step: 5800/19000, loss: 2.24573

[Evaluate] dev score: 0.11000, dev loss: 3.22793

[Evaluate] best accuracy performence has been updated: 0.10000 --> 0.11000

[Train] epoch: 155/500, step: 5900/19000, loss: 1.83945

[Evaluate] dev score: 0.04000, dev loss: 3.29700

[Train] epoch: 157/500, step: 6000/19000, loss: 2.40596

[Evaluate] dev score: 0.07000, dev loss: 3.23488

[Train] epoch: 160/500, step: 6100/19000, loss: 1.84838

[Evaluate] dev score: 0.11000, dev loss: 3.24570

[Train] epoch: 163/500, step: 6200/19000, loss: 2.09208

[Evaluate] dev score: 0.10000, dev loss: 3.28577

[Train] epoch: 165/500, step: 6300/19000, loss: 2.25885

[Evaluate] dev score: 0.11000, dev loss: 3.26718

[Train] epoch: 168/500, step: 6400/19000, loss: 2.18948

[Evaluate] dev score: 0.07000, dev loss: 3.28632

[Train] epoch: 171/500, step: 6500/19000, loss: 2.25166

[Evaluate] dev score: 0.11000, dev loss: 3.30716

[Train] epoch: 173/500, step: 6600/19000, loss: 2.44842

[Evaluate] dev score: 0.08000, dev loss: 3.26738

[Train] epoch: 176/500, step: 6700/19000, loss: 2.20460

[Evaluate] dev score: 0.08000, dev loss: 3.31942

[Train] epoch: 178/500, step: 6800/19000, loss: 2.96396

[Evaluate] dev score: 0.12000, dev loss: 3.31938

[Evaluate] best accuracy performence has been updated: 0.11000 --> 0.12000

[Train] epoch: 181/500, step: 6900/19000, loss: 2.38121

[Evaluate] dev score: 0.09000, dev loss: 3.29187

[Train] epoch: 184/500, step: 7000/19000, loss: 3.03378

[Evaluate] dev score: 0.10000, dev loss: 3.34054

[Train] epoch: 186/500, step: 7100/19000, loss: 2.33013

[Evaluate] dev score: 0.07000, dev loss: 3.36421

[Train] epoch: 189/500, step: 7200/19000, loss: 2.49606

[Evaluate] dev score: 0.11000, dev loss: 3.31687

[Train] epoch: 192/500, step: 7300/19000, loss: 2.67140

[Evaluate] dev score: 0.06000, dev loss: 3.45639

[Train] epoch: 194/500, step: 7400/19000, loss: 2.08948

[Evaluate] dev score: 0.09000, dev loss: 3.35918

[Train] epoch: 197/500, step: 7500/19000, loss: 1.85620

[Evaluate] dev score: 0.04000, dev loss: 3.56707

[Train] epoch: 200/500, step: 7600/19000, loss: 2.42474

[Evaluate] dev score: 0.07000, dev loss: 3.46748

[Train] epoch: 202/500, step: 7700/19000, loss: 2.21470

[Evaluate] dev score: 0.10000, dev loss: 3.37716

[Train] epoch: 205/500, step: 7800/19000, loss: 1.51413

[Evaluate] dev score: 0.08000, dev loss: 3.43916

[Train] epoch: 207/500, step: 7900/19000, loss: 1.84103

[Evaluate] dev score: 0.09000, dev loss: 3.42960

[Train] epoch: 210/500, step: 8000/19000, loss: 2.16340

[Evaluate] dev score: 0.06000, dev loss: 3.61552

[Train] epoch: 213/500, step: 8100/19000, loss: 2.11005

[Evaluate] dev score: 0.06000, dev loss: 3.52098

[Train] epoch: 215/500, step: 8200/19000, loss: 2.90220

[Evaluate] dev score: 0.04000, dev loss: 3.72608

[Train] epoch: 218/500, step: 8300/19000, loss: 2.12024

[Evaluate] dev score: 0.07000, dev loss: 3.42635

[Train] epoch: 221/500, step: 8400/19000, loss: 2.33284

[Evaluate] dev score: 0.08000, dev loss: 3.39700

[Train] epoch: 223/500, step: 8500/19000, loss: 2.46337

[Evaluate] dev score: 0.07000, dev loss: 3.44008

[Train] epoch: 226/500, step: 8600/19000, loss: 2.26763

[Evaluate] dev score: 0.10000, dev loss: 3.41867

[Train] epoch: 228/500, step: 8700/19000, loss: 2.81310

[Evaluate] dev score: 0.09000, dev loss: 3.39319

[Train] epoch: 231/500, step: 8800/19000, loss: 2.22531

[Evaluate] dev score: 0.08000, dev loss: 3.47192

[Train] epoch: 234/500, step: 8900/19000, loss: 2.94576

[Evaluate] dev score: 0.09000, dev loss: 3.41143

[Train] epoch: 236/500, step: 9000/19000, loss: 2.05171

[Evaluate] dev score: 0.11000, dev loss: 3.44928

[Train] epoch: 239/500, step: 9100/19000, loss: 2.87318

[Evaluate] dev score: 0.09000, dev loss: 3.49551

[Train] epoch: 242/500, step: 9200/19000, loss: 2.46142

[Evaluate] dev score: 0.12000, dev loss: 3.48500

[Train] epoch: 244/500, step: 9300/19000, loss: 2.07170

[Evaluate] dev score: 0.09000, dev loss: 3.46349

[Train] epoch: 247/500, step: 9400/19000, loss: 1.97844

[Evaluate] dev score: 0.12000, dev loss: 3.43405

[Train] epoch: 250/500, step: 9500/19000, loss: 2.21456

[Evaluate] dev score: 0.14000, dev loss: 3.50427

[Evaluate] best accuracy performence has been updated: 0.12000 --> 0.14000

[Train] epoch: 252/500, step: 9600/19000, loss: 2.00247

[Evaluate] dev score: 0.08000, dev loss: 3.47073

[Train] epoch: 255/500, step: 9700/19000, loss: 1.58534

[Evaluate] dev score: 0.11000, dev loss: 3.44047

[Train] epoch: 257/500, step: 9800/19000, loss: 1.88306

[Evaluate] dev score: 0.12000, dev loss: 3.51736

[Train] epoch: 260/500, step: 9900/19000, loss: 2.07824

[Evaluate] dev score: 0.05000, dev loss: 3.63262

[Train] epoch: 263/500, step: 10000/19000, loss: 1.98986

[Evaluate] dev score: 0.08000, dev loss: 3.60623

[Train] epoch: 265/500, step: 10100/19000, loss: 3.02486

[Evaluate] dev score: 0.07000, dev loss: 4.00362

[Train] epoch: 268/500, step: 10200/19000, loss: 1.98225

[Evaluate] dev score: 0.13000, dev loss: 3.38930

[Train] epoch: 271/500, step: 10300/19000, loss: 2.13870

[Evaluate] dev score: 0.10000, dev loss: 3.52621

[Train] epoch: 273/500, step: 10400/19000, loss: 1.93393

[Evaluate] dev score: 0.14000, dev loss: 3.47569

[Train] epoch: 276/500, step: 10500/19000, loss: 1.93391

[Evaluate] dev score: 0.12000, dev loss: 3.49054

[Train] epoch: 278/500, step: 10600/19000, loss: 3.01760

[Evaluate] dev score: 0.07000, dev loss: 3.51724

[Train] epoch: 281/500, step: 10700/19000, loss: 2.27679

[Evaluate] dev score: 0.09000, dev loss: 3.56385

[Train] epoch: 284/500, step: 10800/19000, loss: 2.85812

[Evaluate] dev score: 0.12000, dev loss: 3.54293

[Train] epoch: 286/500, step: 10900/19000, loss: 1.71791

[Evaluate] dev score: 0.11000, dev loss: 3.58159

[Train] epoch: 289/500, step: 11000/19000, loss: 2.66969

[Evaluate] dev score: 0.10000, dev loss: 3.65050

[Train] epoch: 292/500, step: 11100/19000, loss: 2.42285

[Evaluate] dev score: 0.11000, dev loss: 3.66876

[Train] epoch: 294/500, step: 11200/19000, loss: 2.13112

[Evaluate] dev score: 0.11000, dev loss: 3.51758

[Train] epoch: 297/500, step: 11300/19000, loss: 1.57486

[Evaluate] dev score: 0.09000, dev loss: 3.61839

[Train] epoch: 300/500, step: 11400/19000, loss: 2.15850

[Evaluate] dev score: 0.16000, dev loss: 3.63911

[Evaluate] best accuracy performence has been updated: 0.14000 --> 0.16000

[Train] epoch: 302/500, step: 11500/19000, loss: 1.85429

[Evaluate] dev score: 0.14000, dev loss: 3.59516

[Train] epoch: 305/500, step: 11600/19000, loss: 1.70082

[Evaluate] dev score: 0.09000, dev loss: 3.70437

[Train] epoch: 307/500, step: 11700/19000, loss: 1.56460

[Evaluate] dev score: 0.14000, dev loss: 3.67066

[Train] epoch: 310/500, step: 11800/19000, loss: 1.59756

[Evaluate] dev score: 0.10000, dev loss: 3.68991

[Train] epoch: 313/500, step: 11900/19000, loss: 2.06244

[Evaluate] dev score: 0.11000, dev loss: 3.73349

[Train] epoch: 315/500, step: 12000/19000, loss: 1.84967

[Evaluate] dev score: 0.09000, dev loss: 3.72864

[Train] epoch: 318/500, step: 12100/19000, loss: 2.00136

[Evaluate] dev score: 0.05000, dev loss: 3.81271

[Train] epoch: 321/500, step: 12200/19000, loss: 2.08392

[Evaluate] dev score: 0.13000, dev loss: 3.58693

[Train] epoch: 323/500, step: 12300/19000, loss: 1.88450

[Evaluate] dev score: 0.09000, dev loss: 3.69639

[Train] epoch: 326/500, step: 12400/19000, loss: 1.95728

[Evaluate] dev score: 0.13000, dev loss: 3.69149

[Train] epoch: 328/500, step: 12500/19000, loss: 2.31034

[Evaluate] dev score: 0.12000, dev loss: 3.72813

[Train] epoch: 331/500, step: 12600/19000, loss: 2.28758

[Evaluate] dev score: 0.07000, dev loss: 3.72696

[Train] epoch: 334/500, step: 12700/19000, loss: 2.50280

[Evaluate] dev score: 0.10000, dev loss: 3.66609

[Train] epoch: 336/500, step: 12800/19000, loss: 1.97075

[Evaluate] dev score: 0.08000, dev loss: 3.85751

[Train] epoch: 339/500, step: 12900/19000, loss: 2.38283

[Evaluate] dev score: 0.12000, dev loss: 3.92110

[Train] epoch: 342/500, step: 13000/19000, loss: 3.11025

[Evaluate] dev score: 0.06000, dev loss: 3.80147

[Train] epoch: 344/500, step: 13100/19000, loss: 2.22414

[Evaluate] dev score: 0.12000, dev loss: 3.88601

[Train] epoch: 347/500, step: 13200/19000, loss: 1.65477

[Evaluate] dev score: 0.09000, dev loss: 3.84104

[Train] epoch: 350/500, step: 13300/19000, loss: 2.06503

[Evaluate] dev score: 0.13000, dev loss: 3.81716

[Train] epoch: 352/500, step: 13400/19000, loss: 1.74867

[Evaluate] dev score: 0.11000, dev loss: 3.89316

[Train] epoch: 355/500, step: 13500/19000, loss: 1.20198

[Evaluate] dev score: 0.08000, dev loss: 3.97099

[Train] epoch: 357/500, step: 13600/19000, loss: 1.97768

[Evaluate] dev score: 0.10000, dev loss: 3.77641

[Train] epoch: 360/500, step: 13700/19000, loss: 1.54542

[Evaluate] dev score: 0.12000, dev loss: 3.80024

[Train] epoch: 363/500, step: 13800/19000, loss: 1.80397

[Evaluate] dev score: 0.11000, dev loss: 3.89743

[Train] epoch: 365/500, step: 13900/19000, loss: 1.65752

[Evaluate] dev score: 0.06000, dev loss: 3.96979

[Train] epoch: 368/500, step: 14000/19000, loss: 1.76249

[Evaluate] dev score: 0.10000, dev loss: 3.84691

[Train] epoch: 371/500, step: 14100/19000, loss: 1.93570

[Evaluate] dev score: 0.06000, dev loss: 3.92212

[Train] epoch: 373/500, step: 14200/19000, loss: 2.00120

[Evaluate] dev score: 0.07000, dev loss: 3.80000

[Train] epoch: 376/500, step: 14300/19000, loss: 1.82584

[Evaluate] dev score: 0.08000, dev loss: 3.97911

[Train] epoch: 378/500, step: 14400/19000, loss: 2.07816

[Evaluate] dev score: 0.13000, dev loss: 4.00881

[Train] epoch: 381/500, step: 14500/19000, loss: 2.55730

[Evaluate] dev score: 0.10000, dev loss: 3.85067

[Train] epoch: 384/500, step: 14600/19000, loss: 2.52722

[Evaluate] dev score: 0.10000, dev loss: 4.03586

[Train] epoch: 386/500, step: 14700/19000, loss: 1.51734

[Evaluate] dev score: 0.10000, dev loss: 4.04365

[Train] epoch: 389/500, step: 14800/19000, loss: 2.16044

[Evaluate] dev score: 0.12000, dev loss: 3.91848

[Train] epoch: 392/500, step: 14900/19000, loss: 2.39587

[Evaluate] dev score: 0.09000, dev loss: 4.06027

[Train] epoch: 394/500, step: 15000/19000, loss: 2.08945

[Evaluate] dev score: 0.09000, dev loss: 3.96779

[Train] epoch: 397/500, step: 15100/19000, loss: 1.62046

[Evaluate] dev score: 0.10000, dev loss: 4.16261

[Train] epoch: 400/500, step: 15200/19000, loss: 1.80772

[Evaluate] dev score: 0.09000, dev loss: 4.07835

[Train] epoch: 402/500, step: 15300/19000, loss: 2.10772

[Evaluate] dev score: 0.04000, dev loss: 4.07414

[Train] epoch: 405/500, step: 15400/19000, loss: 1.19625

[Evaluate] dev score: 0.09000, dev loss: 3.99218

[Train] epoch: 407/500, step: 15500/19000, loss: 1.29747

[Evaluate] dev score: 0.05000, dev loss: 4.15454

[Train] epoch: 410/500, step: 15600/19000, loss: 1.47176

[Evaluate] dev score: 0.05000, dev loss: 4.07975

[Train] epoch: 413/500, step: 15700/19000, loss: 1.52952

[Evaluate] dev score: 0.10000, dev loss: 4.01823

[Train] epoch: 415/500, step: 15800/19000, loss: 1.44208

[Evaluate] dev score: 0.05000, dev loss: 4.09251

[Train] epoch: 418/500, step: 15900/19000, loss: 1.63375

[Evaluate] dev score: 0.09000, dev loss: 4.00943

[Train] epoch: 421/500, step: 16000/19000, loss: 1.98549

[Evaluate] dev score: 0.08000, dev loss: 4.23662

[Train] epoch: 423/500, step: 16100/19000, loss: 1.68825

[Evaluate] dev score: 0.08000, dev loss: 3.99106

[Train] epoch: 426/500, step: 16200/19000, loss: 1.52688

[Evaluate] dev score: 0.07000, dev loss: 4.01947

[Train] epoch: 428/500, step: 16300/19000, loss: 1.94006

[Evaluate] dev score: 0.08000, dev loss: 4.03054

[Train] epoch: 431/500, step: 16400/19000, loss: 2.21154

[Evaluate] dev score: 0.06000, dev loss: 4.09608

[Train] epoch: 434/500, step: 16500/19000, loss: 2.00266

[Evaluate] dev score: 0.04000, dev loss: 4.19250

[Train] epoch: 436/500, step: 16600/19000, loss: 1.43209

[Evaluate] dev score: 0.09000, dev loss: 4.05459

[Train] epoch: 439/500, step: 16700/19000, loss: 1.79468

[Evaluate] dev score: 0.08000, dev loss: 4.20954

[Train] epoch: 442/500, step: 16800/19000, loss: 2.28426

[Evaluate] dev score: 0.09000, dev loss: 4.20930

[Train] epoch: 444/500, step: 16900/19000, loss: 2.26806

[Evaluate] dev score: 0.12000, dev loss: 4.03653

[Train] epoch: 447/500, step: 17000/19000, loss: 1.56902

[Evaluate] dev score: 0.09000, dev loss: 4.09475

[Train] epoch: 450/500, step: 17100/19000, loss: 3.10300

[Evaluate] dev score: 0.10000, dev loss: 4.39859

[Train] epoch: 452/500, step: 17200/19000, loss: 1.91385

[Evaluate] dev score: 0.09000, dev loss: 3.98219

[Train] epoch: 455/500, step: 17300/19000, loss: 0.94746

[Evaluate] dev score: 0.11000, dev loss: 4.19855

[Train] epoch: 457/500, step: 17400/19000, loss: 1.49264

[Evaluate] dev score: 0.11000, dev loss: 4.13229

[Train] epoch: 460/500, step: 17500/19000, loss: 1.31355

[Evaluate] dev score: 0.08000, dev loss: 4.11570

[Train] epoch: 463/500, step: 17600/19000, loss: 1.46964

[Evaluate] dev score: 0.08000, dev loss: 4.14099

[Train] epoch: 465/500, step: 17700/19000, loss: 1.29687

[Evaluate] dev score: 0.06000, dev loss: 4.08282

[Train] epoch: 468/500, step: 17800/19000, loss: 1.41345

[Evaluate] dev score: 0.10000, dev loss: 4.11643

[Train] epoch: 471/500, step: 17900/19000, loss: 1.87349

[Evaluate] dev score: 0.10000, dev loss: 4.08407

[Train] epoch: 473/500, step: 18000/19000, loss: 1.47560

[Evaluate] dev score: 0.07000, dev loss: 4.26525

[Train] epoch: 476/500, step: 18100/19000, loss: 1.45131

[Evaluate] dev score: 0.09000, dev loss: 4.16154

[Train] epoch: 478/500, step: 18200/19000, loss: 2.13642

[Evaluate] dev score: 0.10000, dev loss: 4.08857

[Train] epoch: 481/500, step: 18300/19000, loss: 2.29550

[Evaluate] dev score: 0.09000, dev loss: 4.35599

[Train] epoch: 484/500, step: 18400/19000, loss: 2.55872

[Evaluate] dev score: 0.11000, dev loss: 4.27496

[Train] epoch: 486/500, step: 18500/19000, loss: 1.34361

[Evaluate] dev score: 0.11000, dev loss: 4.19306

[Train] epoch: 489/500, step: 18600/19000, loss: 1.85293

[Evaluate] dev score: 0.09000, dev loss: 4.19850

[Train] epoch: 492/500, step: 18700/19000, loss: 2.05553

[Evaluate] dev score: 0.06000, dev loss: 4.08807

[Train] epoch: 494/500, step: 18800/19000, loss: 2.19286

[Evaluate] dev score: 0.09000, dev loss: 3.84456

[Train] epoch: 497/500, step: 18900/19000, loss: 1.95186

[Evaluate] dev score: 0.03000, dev loss: 3.84246

[Evaluate] dev score: 0.05000, dev loss: 3.85738

[Train] Training done!
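The log above is long, so it can help to summarize it programmatically. The following is a minimal sketch, assuming the log has been saved as plain text in exactly the `[Evaluate] dev score: ..., dev loss: ...` format shown above. `parse_dev_records` is a hypothetical helper and is not part of the original experiment code.

```python
import re

# Matches lines such as "[Evaluate] dev score: 0.16000, dev loss: 3.63911".
# The "best accuracy ... has been updated" lines do not match and are skipped.
DEV_LINE = re.compile(r"\[Evaluate\] dev score: ([0-9.]+), dev loss: ([0-9.]+)")


def parse_dev_records(log_text):
    """Return a list of (dev_score, dev_loss) tuples in log order."""
    records = []
    for line in log_text.splitlines():
        match = DEV_LINE.search(line)
        if match:
            records.append((float(match.group(1)), float(match.group(2))))
    return records


# A few lines copied from the length-35 log above.
sample_log = """
[Train] epoch: 2/500, step: 100/19000, loss: 2.81409
[Evaluate] dev score: 0.10000, dev loss: 2.86038
[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.10000
[Train] epoch: 300/500, step: 11400/19000, loss: 2.15850
[Evaluate] dev score: 0.16000, dev loss: 3.63911
[Train] epoch: 497/500, step: 18900/19000, loss: 1.95186
[Evaluate] dev score: 0.03000, dev loss: 3.84246
"""

records = parse_dev_records(sample_log)
best_score = max(score for score, _ in records)
print(records)
print(best_score)
```

In the length-35 run, the best dev score recorded in the log is 0.16 (at step 11400), while the dev loss rises from about 2.86 to around 4. This suggests the SRN fits the training set without generalizing at this sequence length.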

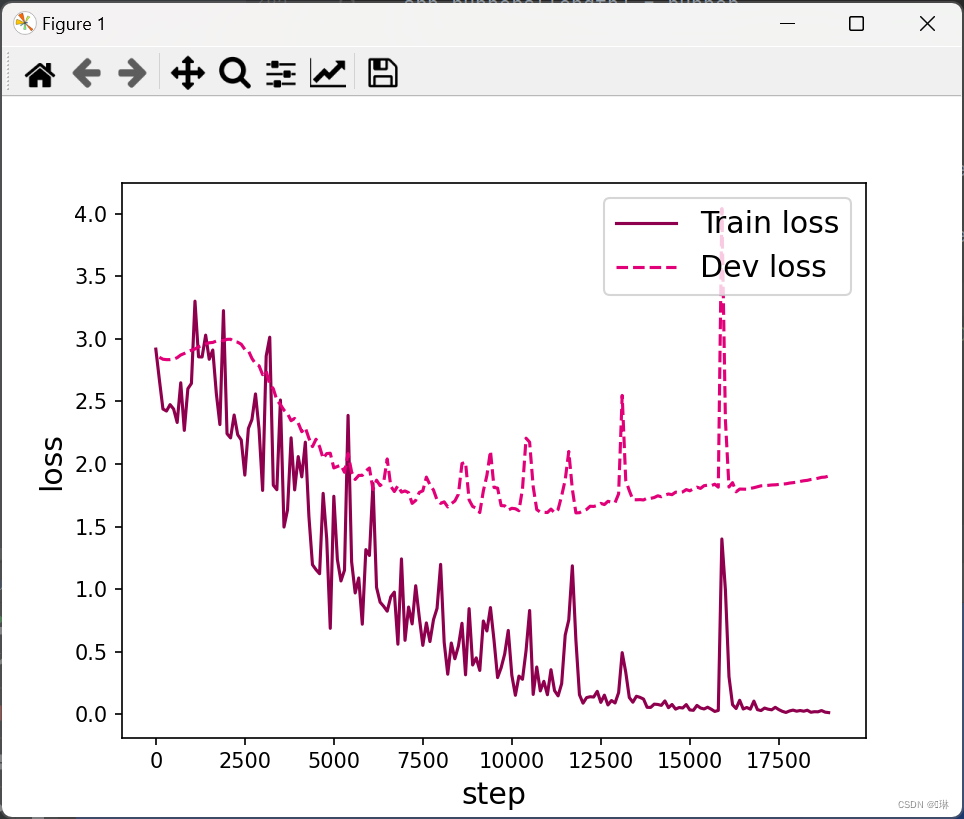

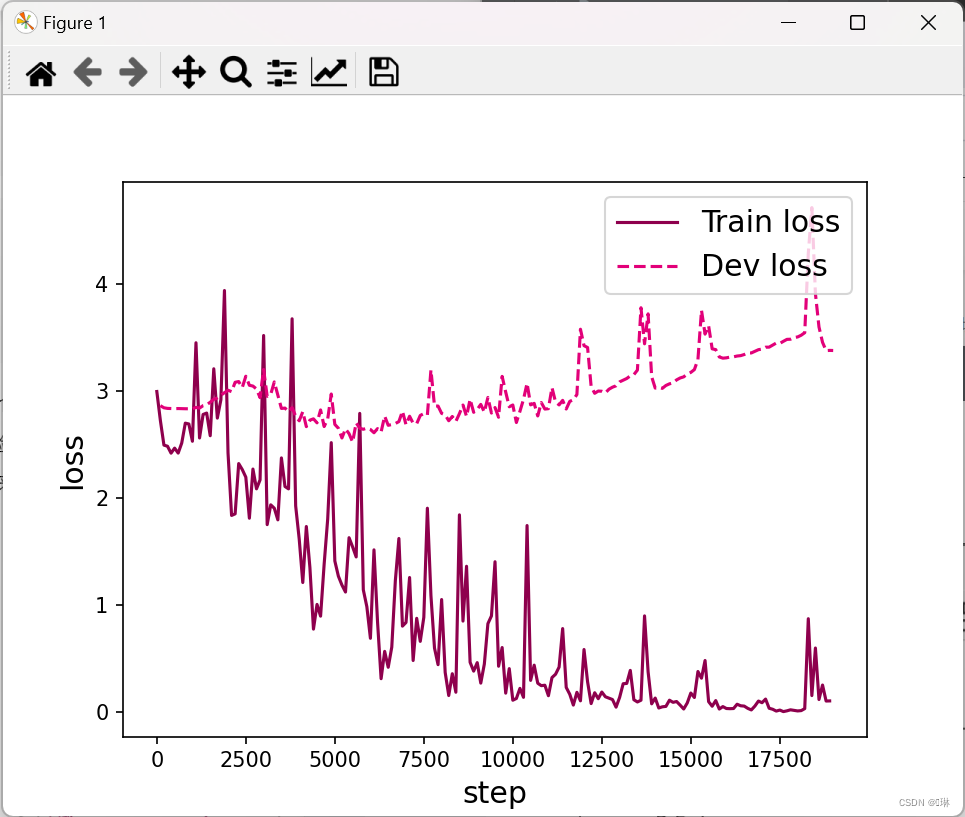

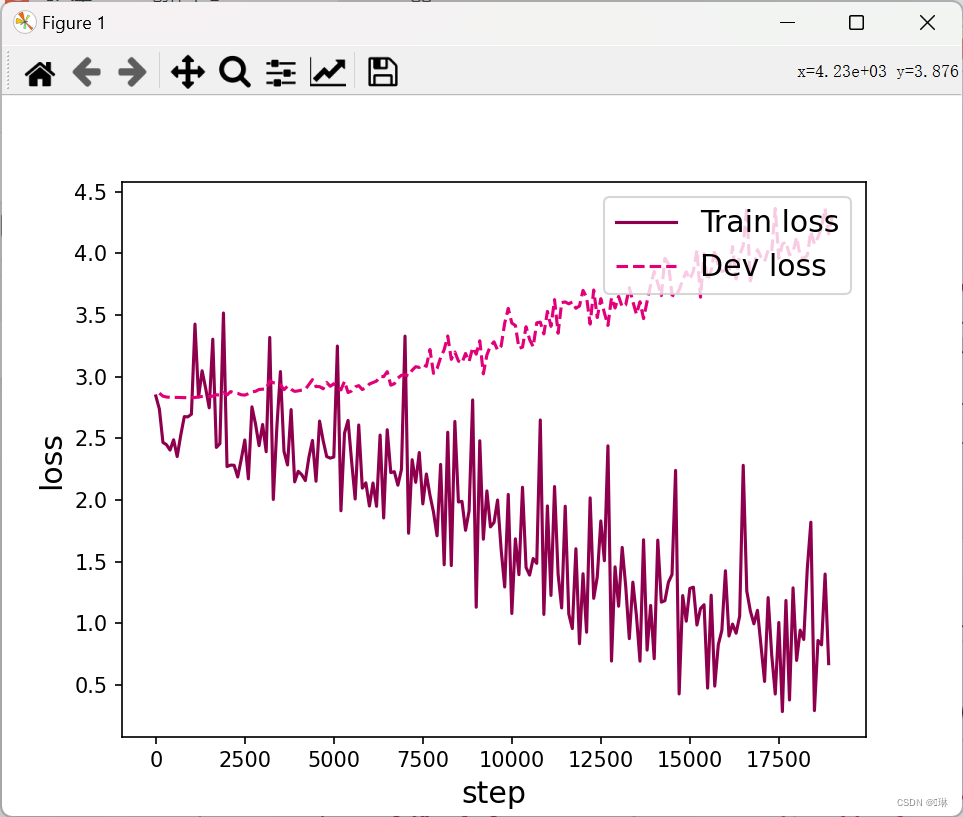

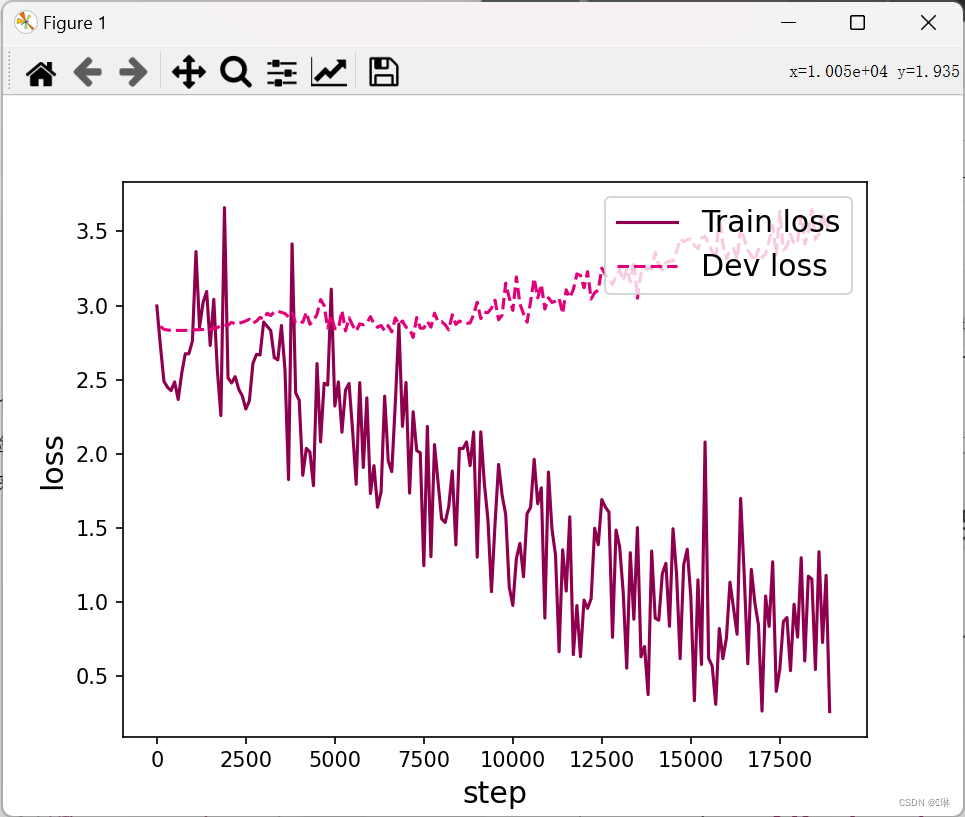

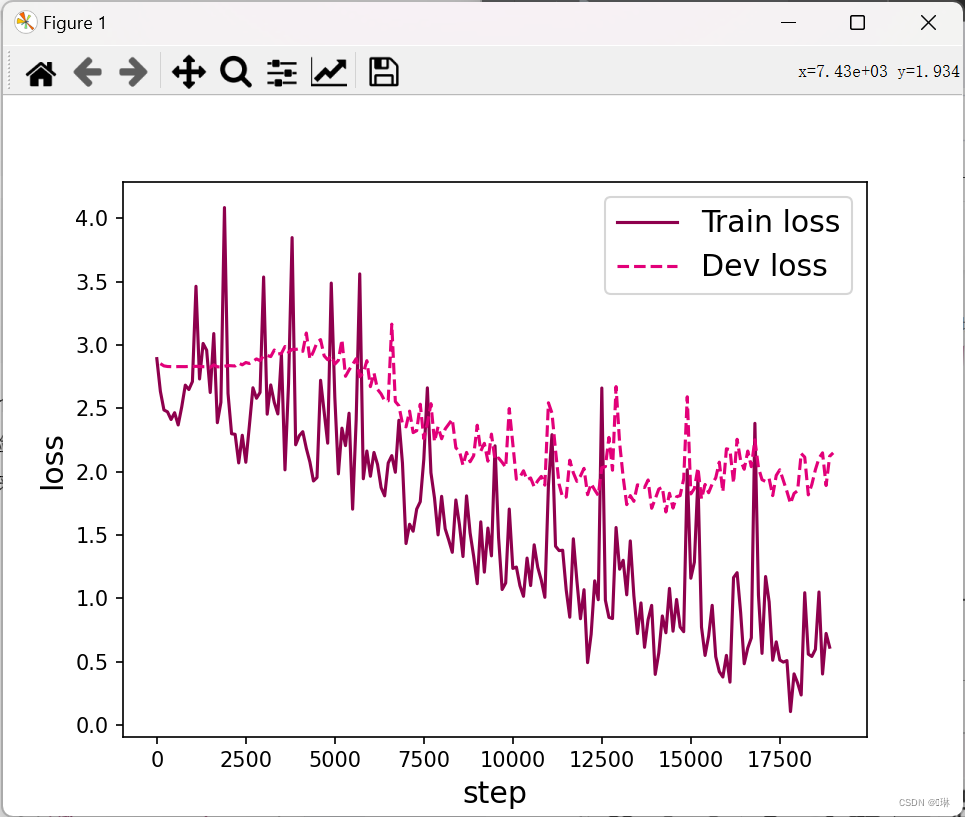

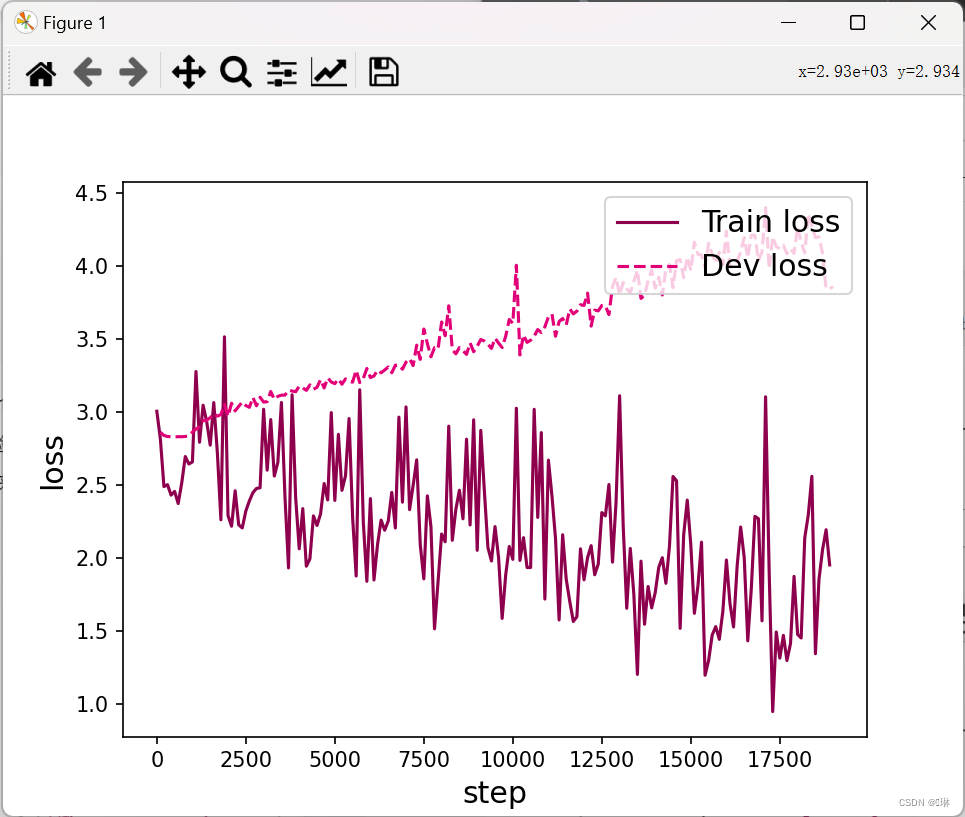

3. Plotting the loss curves

import matplotlib.pyplot as plt

def plot_training_loss(runner, fig_name, sample_step):
    plt.figure()
    # Keep one (step, loss) record out of every sample_step training records
    train_items = runner.train_step_losses[::sample_step]
    train_steps = [x[0] for x in train_items]
    train_losses = [x[1] for x in train_items]
    plt.plot(train_steps, train_losses, color='#8E004D', label="Train loss")

    # Dev losses are recorded less often, so they are plotted without subsampling
    dev_steps = [x[0] for x in runner.dev_losses]
    dev_losses = [x[1] for x in runner.dev_losses]
    plt.plot(dev_steps, dev_losses, color='#E20079', linestyle='--', label="Dev loss")

    # Label the axes and draw the legend
    plt.ylabel("loss", fontsize='x-large')
    plt.xlabel("step", fontsize='x-large')
    plt.legend(loc='upper right', fontsize='x-large')

    plt.savefig(fig_name)
    plt.show()

# Plot the loss curves recorded during training, one figure per sequence length
for length in lengths:
    runner = srn_runners[length]
    fig_name = f"./images/6.6_{length}.pdf"
    plot_training_loss(runner, fig_name, sample_step=100)
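`plot_training_loss` indexes each record with `x[0]` and `x[1]`, which implies that `runner.train_step_losses` and `runner.dev_losses` are lists of `(step, loss)` pairs. Here is a minimal sketch of the subsampling step under that assumption. The values are made up for illustration and are not taken from the experiment.

```python
# Hypothetical per-step records in the (step, loss) layout that
# plot_training_loss indexes with x[0] and x[1]; the values are made up.
train_step_losses = [(step, 3.0 - 0.001 * step) for step in range(1000)]

sample_step = 100
# Slicing with a stride keeps every 100th record: steps 0, 100, ..., 900.
train_items = train_step_losses[::sample_step]

train_steps = [x[0] for x in train_items]
train_losses = [x[1] for x in train_items]

print(train_steps)
print(len(train_losses))
```

Subsampling only thins out the plotted curve. It does not smooth it, so the train loss can still look noisy from one plotted point to the next.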

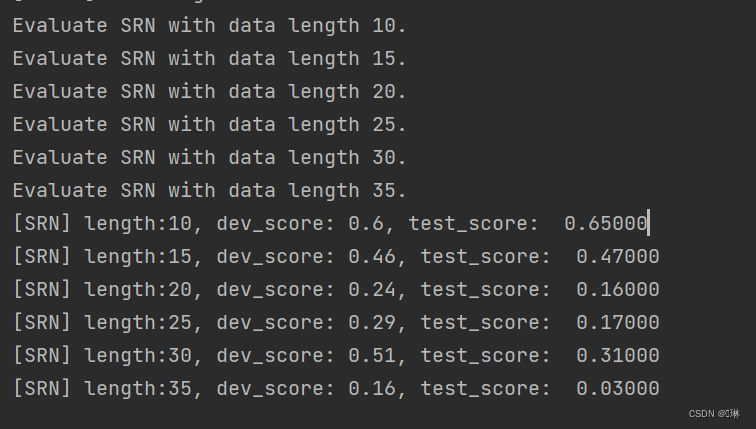

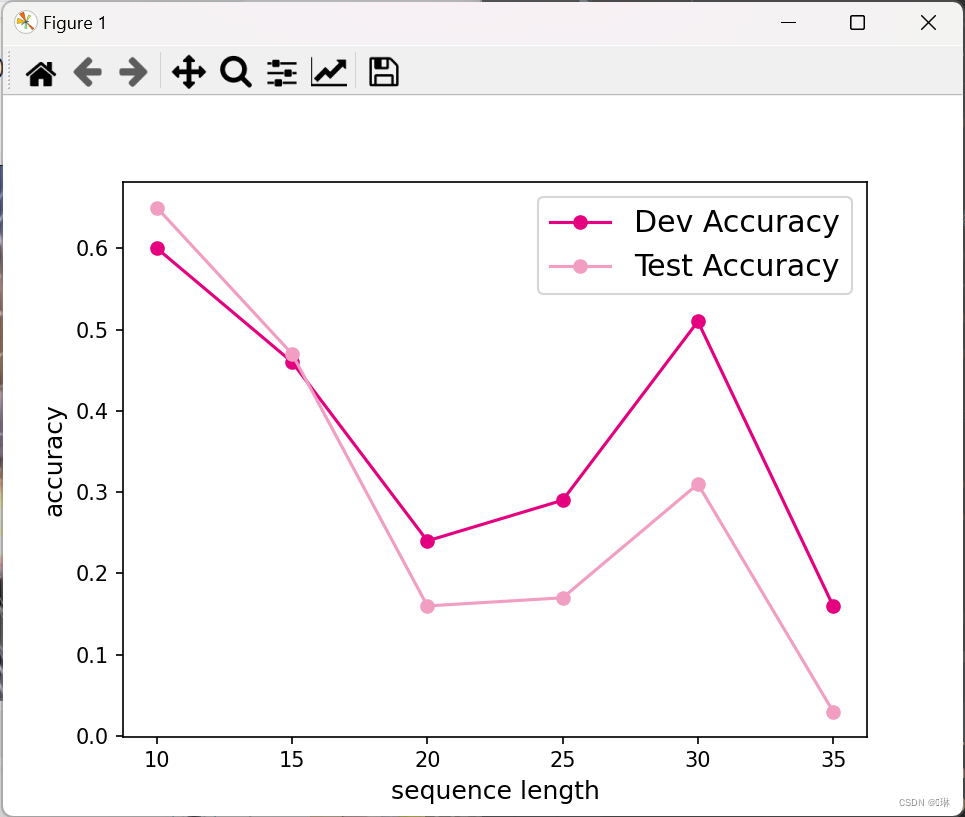

IV. Model evaluation

srn_dev_scores = []
srn_test_scores = []
for length in lengths:
    print(f"Evaluate SRN with data length {length}.")
    runner = srn_runners[length]
    # Load the model that performed best during training
    model_path = os.path.join(save_dir, f"best_srn_model_{length}.pdparams")
    runner.load_model(model_path)

    # Load the data with sequence length `length`
    data_path = f"./datasets/{length}"
    train_examples, dev_examples, test_examples = load_data(data_path)
    test_set = DigitSumDataset(test_examples)
    test_loader = DataLoader(test_set, batch_size=batch_size)

    # Evaluate the model on the test set to get its prediction accuracy
    score, _ = runner.evaluate(test_loader)
    srn_test_scores.append(score)
    srn_dev_scores.append(max(runner.dev_scores))

for length, dev_score, test_score in zip(lengths, srn_dev_scores, srn_test_scores):
    print(f"[SRN] length:{length}, dev_score: {dev_score}, test_score: {test_score: .5f}")
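The `score` returned by `runner.evaluate` is the prediction accuracy on the test set. As a rough illustration of what such a score measures, here is a framework-free sketch. `accuracy` is a hypothetical helper; the metric actually used by the runner is defined elsewhere and may differ in detail.

```python
def accuracy(logits, labels):
    """Fraction of samples whose highest-scoring class equals the label."""
    if len(logits) != len(labels):
        raise ValueError("logits and labels must have the same length")
    if not labels:
        return 0.0
    correct = 0
    for scores, label in zip(logits, labels):
        # argmax over the class scores of one sample
        predicted = max(range(len(scores)), key=lambda i: scores[i])
        if predicted == label:
            correct += 1
    return correct / len(labels)


# Three samples, three classes (illustrative values only).
logits = [[0.1, 0.7, 0.2], [0.9, 0.05, 0.05], [0.2, 0.3, 0.5]]
labels = [1, 0, 1]
print(accuracy(logits, labels))
```

For reference, 10 of the 100 digit pairs sum to 9, so always predicting 9 already gives 0.10 on the 100-example dev set. The length-35 dev scores in the log (roughly 0.03 to 0.16) stay close to that trivial baseline.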

import matplotlib.pyplot as plt

# Dev and test accuracy of the SRN as a function of sequence length
plt.plot(lengths, srn_dev_scores, '-o', color='#e4007f', label="Dev Accuracy")
plt.plot(lengths, srn_test_scores, '-o', color='#f19ec2', label="Test Accuracy")

# Label the axes and draw the legend
plt.ylabel("accuracy", fontsize='large')
plt.xlabel("sequence length", fontsize='large')
plt.legend(loc='upper right', fontsize='x-large')

fig_name = "./images/6.7.pdf"
plt.savefig(fig_name)
plt.show()

CSDN is really convenient for saving code!!!
