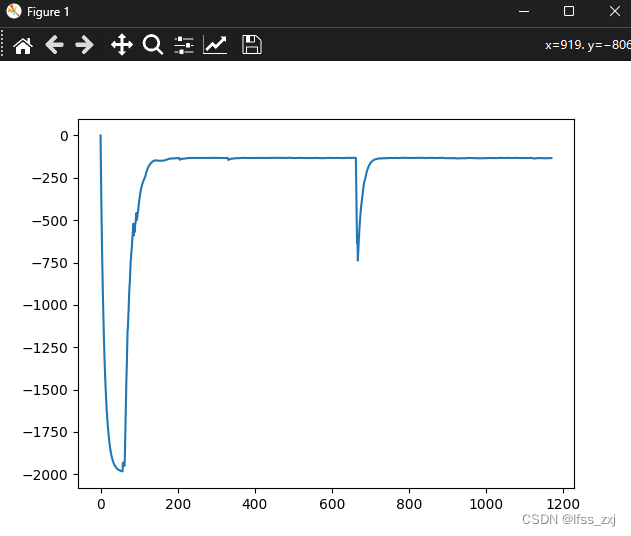

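This is essentially the most basic DDPG algorithm with a slightly modified reward scheme (without the modification it really does not converge). It converges after roughly 200 episodes. I end an episode early if the car still has not reached the goal after more than 1000 actions.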
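The 1000-action cutoff mentioned above can be sketched as follows. This is only a minimal illustration, not the code used for the results: `ToyEnv`, `run_episode`, and the `(next_state, reward, done)` return shape are hypothetical stand-ins for whatever environment and agent you actually use.

```python
MAX_STEPS = 1000  # per-episode action cap described in the text


class ToyEnv:
    """Hypothetical stand-in for the real environment (assumption, not Gym)."""

    def __init__(self, goal_after=None):
        # goal_after: step count at which the toy episode "reaches the goal";
        # None means the goal is never reached.
        self.goal_after = goal_after
        self.t = 0

    def reset(self):
        self.t = 0
        return [-0.5, 0.0]  # [position, velocity]

    def step(self, action):
        self.t += 1
        done = self.goal_after is not None and self.t >= self.goal_after
        return [-0.5, 0.0], 0.0, done


def run_episode(env, policy, max_steps=MAX_STEPS):
    """Run one episode, stopping at the goal or after max_steps actions."""
    state = env.reset()
    steps, done = 0, False
    for steps in range(1, max_steps + 1):
        action = policy(state)
        state, _reward, done = env.step(action)
        if done:  # reached the goal, stop early
            break
    return steps, done


# Usage: a policy that always outputs zero force.
steps_capped, reached_capped = run_episode(ToyEnv(), lambda s: 0.0)
steps_goal, reached_goal = run_episode(ToyEnv(goal_after=5), lambda s: 0.0)
```

With the cap in place, an episode that never reaches the goal still terminates, so training can move on to the next episode.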

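First we need to understand what the state contains. state[0] is the mountain car's position, which starts at about -0.5; state[1] is its velocity, where a negative value means the car is moving left and a positive value means it is moving right. The reward can be designed from these two values.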

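1. The first reward scheme is based on state[0]: reward = abs(state[0] + 0.5), so the farther the car is from the starting point, the higher the reward. In practice, however, it only works moderately well.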

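2. The second reward scheme is based on state[1]: reward = abs(state[1]), so the higher the speed, the larger the reward. It works reasonably well early in training, but later on the agent rarely reaches the goal (most likely because it figures out that staying away from the goal lets it collect more reward).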

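3. The third reward scheme is also based on state[1], with a small change of perspective: reward = abs(state[1]) - 2. Every step now yields a negative reward, so the longer an episode drags on, the lower total_reward becomes. As a result, once the agent has reached the goal for the first time, it is more willing to head there again, which fixes the flaw of the second scheme.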
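The three schemes above can be written as small functions. This is a sketch under assumptions: the function names and the `episode_return` helper are mine, and the constant-state episodes are made up purely to compare short and long episodes. It also assumes abs(state[1]) stays well below 2, which should hold in Gym's MountainCarContinuous, where the speed is capped at 0.07.

```python
def reward_position(state):
    """Scheme 1: reward grows with distance from the start position (-0.5)."""
    return abs(state[0] + 0.5)


def reward_speed(state):
    """Scheme 2: reward grows with speed, regardless of direction."""
    return abs(state[1])


def reward_speed_with_penalty(state):
    """Scheme 3: speed reward minus a constant per-step penalty of 2."""
    return abs(state[1]) - 2


def episode_return(reward_fn, states):
    """Sum the shaped reward over the states visited in one episode."""
    return sum(reward_fn(s) for s in states)


# Made-up episodes: the car moves at a constant speed of 0.05 the whole time.
state = [0.0, 0.05]
short_episode = [state] * 100    # reaches the goal quickly
long_episode = [state] * 1000    # wanders until the 1000-action cap

# Scheme 2: the longer episode collects MORE total reward.
speed_short = episode_return(reward_speed, short_episode)
speed_long = episode_return(reward_speed, long_episode)

# Scheme 3: every step is negative, so the longer episode collects LESS.
penalty_short = episode_return(reward_speed_with_penalty, short_episode)
penalty_long = episode_return(reward_speed_with_penalty, long_episode)
```

Comparing `speed_long > speed_short` with `penalty_long < penalty_short` shows why the second scheme can reward stalling while the third one rewards finishing early.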

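Beyond these three, there are many other ways to shape the reward (for example, combining state[0] and state[1]); I will leave those for you to explore. Overall, the third scheme is the one I have found easiest to get to converge so far.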
