Code Walkthrough

A walkthrough of the code for the paper BERT for Joint Intent Classification and Slot Filling

Loading the Model and Processing the Data

import argparse

# MODEL_CLASSES and main() are defined in the project's own modules (both are shown below)
if __name__ == '__main__':
    parser = argparse.ArgumentParser()

    parser.add_argument("--task", default=None, required=True, type=str, help="The name of the task to train")
    parser.add_argument("--model_dir", default=None, required=True, type=str, help="Path to save, load model")
    parser.add_argument("--data_dir", default="./data", type=str, help="The input data dir")
    parser.add_argument("--intent_label_file", default="intent_label.txt", type=str, help="Intent Label file")
    parser.add_argument("--slot_label_file", default="slot_label.txt", type=str, help="Slot Label file")
    parser.add_argument("--model_type", default="bert", type=str, help="Model type selected in the list: " + ", ".join(MODEL_CLASSES.keys()))

    parser.add_argument('--seed', type=int, default=1234, help="random seed for initialization")
    parser.add_argument("--train_batch_size", default=32, type=int, help="Batch size for training.")
    parser.add_argument("--eval_batch_size", default=64, type=int, help="Batch size for evaluation.")
    parser.add_argument("--max_seq_len", default=50, type=int, help="The maximum total input sequence length after tokenization.")
    parser.add_argument("--learning_rate", default=5e-5, type=float, help="The initial learning rate for Adam.")
    parser.add_argument("--num_train_epochs", default=10.0, type=float, help="Total number of training epochs to perform.")
    parser.add_argument("--weight_decay", default=0.0, type=float, help="Weight decay if we apply some.")
    parser.add_argument('--gradient_accumulation_steps', type=int, default=1,
                        help="Number of updates steps to accumulate before performing a backward/update pass.")
    parser.add_argument("--adam_epsilon", default=1e-8, type=float, help="Epsilon for Adam optimizer.")
    parser.add_argument("--max_grad_norm", default=1.0, type=float, help="Max gradient norm.")
    parser.add_argument("--max_steps", default=-1, type=int, help="If > 0: set total number of training steps to perform. Override num_train_epochs.")
    parser.add_argument("--warmup_steps", default=0, type=int, help="Linear warmup over warmup_steps.")
    parser.add_argument("--dropout_rate", default=0.1, type=float, help="Dropout for fully-connected layers")

    parser.add_argument('--logging_steps', type=int, default=200, help="Log every X updates steps.")
    parser.add_argument('--save_steps', type=int, default=200, help="Save checkpoint every X updates steps.")

    parser.add_argument("--do_train", action="store_true", help="Whether to run training.")
    parser.add_argument("--do_eval", action="store_true", help="Whether to run eval on the test set.")
    parser.add_argument("--no_cuda", action="store_true", help="Avoid using CUDA when available")

    parser.add_argument("--ignore_index", default=0, type=int,
                        help='Specifies a target value that is ignored and does not contribute to the input gradient')
    parser.add_argument('--slot_loss_coef', type=float, default=1.0, help='Coefficient for the slot loss.')

    # CRF option
    parser.add_argument("--use_crf", action="store_true", help="Whether to use CRF")
    parser.add_argument("--slot_pad_label", default="PAD", type=str, help="Pad token for slot label pad (to be ignored when calculating loss)")

    args = parser.parse_args()

    # args.model_name_or_path = MODEL_PATH_MAP[args.model_type]
    args.model_name_or_path = "C:\\Users\\acer-pc\\Desktop\\JointBERT-master\\bert-base-uncased/"

    main(args)

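First, the parameters are set. Training, evaluation and CRF are all enabled (--do_train, --do_eval, --use_crf), CUDA is disabled (--no_cuda), the pretrained model type is bert, and the dataset is snips.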
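These settings are passed as command-line flags. Because do_train, do_eval, use_crf and no_cuda are declared with action="store_true", each one is False unless its flag is present. The sketch below rebuilds only a subset of the parser to show this. The argument list passed to parse_args is an assumed example, and the model_dir value snips_model is a hypothetical output directory.

```python
import argparse


def build_parser():
    # A reduced copy of the parser above: only the flags discussed in the text
    parser = argparse.ArgumentParser()
    parser.add_argument("--task", default=None, required=True, type=str)
    parser.add_argument("--model_dir", default=None, required=True, type=str)
    parser.add_argument("--model_type", default="bert", type=str)
    parser.add_argument("--do_train", action="store_true")
    parser.add_argument("--do_eval", action="store_true")
    parser.add_argument("--no_cuda", action="store_true")
    parser.add_argument("--use_crf", action="store_true")
    return parser


# Same as passing these flags on the command line ("snips_model" is hypothetical)
args = build_parser().parse_args(
    ["--task", "snips", "--model_dir", "snips_model",
     "--do_train", "--do_eval", "--use_crf", "--no_cuda"]
)
print(args.task, args.model_type, args.do_train, args.do_eval, args.use_crf, args.no_cuda)
# prints: snips bert True True True True

# Without the flags, every store_true option stays False
defaults = build_parser().parse_args(["--task", "snips", "--model_dir", "snips_model"])
print(defaults.do_train, defaults.use_crf)
# prints: False False
```

Note that --task and --model_dir are required=True, so parse_args exits with an error if either one is missing.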

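Another important parameter is model_name_or_path, which must point to the directory of the pretrained model. The pretrained model has to be downloaded first from https://huggingface.co/models. Do not use the BERT model from Google's official GitHub repository, or any other version of the pretrained model. Their config.json files are different, and the models are packaged differently.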

Next, we step into the main function.

def main(args):
    init_logger()
    set_seed(args)
    tokenizer = load_tokenizer(args)

    train_dataset = load_and_cache_examples(args, tokenizer, mode="train")
    dev_dataset = load_and_cache_examples(args, tokenizer, mode="dev")
    test_dataset = load_and_cache_examples(args, tokenizer, mode="test")

    trainer = Trainer(args, train_dataset, dev_dataset, test_dataset)

    if args.do_train:
        trainer.train()

    if args.do_eval:
        trainer.load_model()
        trainer.evaluate("test")

Let's take a closer look at tokenizer = load_tokenizer(args) by stepping into load_tokenizer:

def load_tokenizer(args):
    # MODEL_CLASSES[model_type] is a (config, model, tokenizer) tuple; index 2 is the tokenizer class
    return MODEL_CLASSES[args.model_type][2].from_pretrained(args.model_name_or_path)

Stepping in once more, to MODEL_CLASSES:

MODEL_CLASSES = {
    'bert': (BertConfig, JointBERT, BertTokenizer),
    'distilbert': (DistilBertConfig, JointDistilBERT, DistilBertTokenizer),
    'albert': (AlbertConfig, JointAlbert, AlbertTokenizer)
}
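Each value in MODEL_CLASSES is a (config class, model class, tokenizer class) tuple. load_tokenizer takes index 2, and the Trainer shown later unpacks the first two. The sketch below replaces the real transformers and JointBERT classes with empty stand-in classes, only to show the indexing.

```python
# Empty stand-ins for the real classes; only their position in the tuple matters here
class BertConfig:
    pass


class JointBERT:
    pass


class BertTokenizer:
    pass


MODEL_CLASSES = {
    'bert': (BertConfig, JointBERT, BertTokenizer),
}

model_type = 'bert'

# What load_tokenizer does: index 2 of the tuple is the tokenizer class
tokenizer_class = MODEL_CLASSES[model_type][2]

# What Trainer.__init__ does: unpack config and model classes, discard the tokenizer
config_class, model_class, _ = MODEL_CLASSES[model_type]

print(tokenizer_class.__name__, config_class.__name__, model_class.__name__)
# prints: BertTokenizer BertConfig JointBERT
```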

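from_pretrained is a method from the transformers library and is the standard way to load a pretrained BERT model. It must be called separately for the tokenizer and for the model. This means the path in args.model_name_or_path must contain an already downloaded pretrained model. For PyTorch, the model weights file is pytorch_model.bin, and it must sit in the bert-base-uncased directory. The tokenizer needs vocab.txt. When running the code, this file seemed to be downloaded automatically.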

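For the steps involved in loading a pretrained model, see the post "Huggingface简介及BERT代码浅析" (An introduction to Huggingface and a brief analysis of the BERT code).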

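If args.model_name_or_path does not point to a Huggingface model, or the bert-base-uncased directory does not exist at all, the code raises an error.

The error message either asks you to check whether bert-base-uncased is a correct model identifier, or says that the pretrained model cannot be found.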
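A missing or incomplete model directory is the usual cause of this error, so a quick check before training can help. The helper below is hypothetical and not part of the project. It only checks for the three files mentioned above (config.json, pytorch_model.bin and vocab.txt) and does not validate their contents.

```python
import os

# Files named in the text: model config, PyTorch weights, tokenizer vocabulary
REQUIRED_FILES = ("config.json", "pytorch_model.bin", "vocab.txt")


def check_model_dir(path, required=REQUIRED_FILES):
    """Return the required files missing from `path` (hypothetical helper)."""
    if not os.path.isdir(path):
        # The directory itself is missing, so every file counts as missing
        return list(required)
    return [name for name in required
            if not os.path.isfile(os.path.join(path, name))]
```

For example, check_model_dir(args.model_name_or_path) returns an empty list when all three files are present. If your setup downloads vocab.txt automatically, pass a shorter required tuple.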

Next, step into load_and_cache_examples:

def load_and_cache_examples(args, tokenizer, mode):
    processor = processors[args.task](args)

    # Load data features from cache or dataset file
    cached_features_file = os.path.join(
        args.data_dir,
        'cached_{}_{}_{}_{}'.format(
            mode,
            args.task,
            # Hard-coded model name: filter() iterates over the characters of the string, so this evaluates to 'd'
            list(filter(None, "bert-base-uncased")).pop(),
            args.max_seq_len
        )
    )

    if os.path.exists(cached_features_file):
        logger.info("Loading features from cached file %s", cached_features_file)
        features = torch.load(cached_features_file)
    else:
        # Load data features from dataset file
        logger.info("Creating features from dataset file at %s", args.data_dir)
        if mode == "train":
            examples = processor.get_examples("train")
        elif mode == "dev":
            examples = processor.get_examples("dev")
        elif mode == "test":
            examples = processor.get_examples("test")
        else:
            raise Exception("For mode, Only train, dev, test is available")

        # Use cross entropy ignore index as padding label id so that only real label ids contribute to the loss later
        pad_token_label_id = args.ignore_index
        features = convert_examples_to_features(examples, args.max_seq_len, tokenizer,
                                                pad_token_label_id=pad_token_label_id)
        logger.info("Saving features into cached file %s", cached_features_file)
        torch.save(features, cached_features_file)

    # Convert to Tensors and build dataset
    all_input_ids = torch.tensor([f.input_ids for f in features], dtype=torch.long)
    all_attention_mask = torch.tensor([f.attention_mask for f in features], dtype=torch.long)
    all_token_type_ids = torch.tensor([f.token_type_ids for f in features], dtype=torch.long)
    all_intent_label_ids = torch.tensor([f.intent_label_id for f in features], dtype=torch.long)
    all_slot_labels_ids = torch.tensor([f.slot_labels_ids for f in features], dtype=torch.long)

    dataset = TensorDataset(all_input_ids, all_attention_mask,
                            all_token_type_ids, all_intent_label_ids, all_slot_labels_ids)
    return dataset
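The caching in load_and_cache_examples follows a simple pattern: load the cached file if it exists, otherwise build the features and save them. Below is a minimal stdlib sketch of that pattern. pickle stands in for torch.save/torch.load, and build_features is a hypothetical callback standing in for the convert_examples_to_features step.

```python
import os
import pickle


def load_or_build(cache_path, build_features):
    """Load cached features if the file exists; otherwise build and cache them.

    pickle replaces torch.save/torch.load in this sketch; build_features is a
    hypothetical zero-argument callable that performs the expensive conversion.
    """
    if os.path.exists(cache_path):
        with open(cache_path, "rb") as f:
            return pickle.load(f)
    features = build_features()
    with open(cache_path, "wb") as f:
        pickle.dump(features, f)
    return features
```

The cache file name only encodes the mode, the task, the model-name part and max_seq_len. A stale cache is therefore reused even if another preprocessing setting (for example ignore_index) changes. In that case, delete the cached_* files by hand.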

This is the most important step of the data preprocessing. Let's look at processors:

processors = {
    "atis": JointProcessor,
    "snips": JointProcessor
}

Step into JointProcessor:

class JointProcessor(object):
    """Processor for the JointBERT data set """

    def __init__(self, args):
        self.args = args
        self.intent_labels = get_intent_labels(args)
        self.slot_labels = get_slot_labels(args)

        self.input_text_file = 'seq.in'
        self.intent_label_file = 'label'
        self.slot_labels_file = 'seq.out'

    @classmethod
    def _read_file(cls, input_file, quotechar=None):
        """Reads a text file and returns its lines with surrounding whitespace stripped."""
        with open(input_file, "r", encoding="utf-8") as f:
            lines = []
            for line in f:
                lines.append(line.strip())
            return lines

    def _create_examples(self, texts, intents, slots, set_type):
        """Creates examples for the training and dev sets."""
        examples = []
        for i, (text, intent, slot) in enumerate(zip(texts, intents, slots)):
            guid = "%s-%s" % (set_type, i)
            # 1. input_text
            words = text.split()  # Some are spaced twice
            # 2. intent
            intent_label = self.intent_labels.index(intent) if intent in self.intent_labels else self.intent_labels.index("UNK")
            # 3. slot
            slot_labels = []
            for s in slot.split():
                slot_labels.append(self.slot_labels.index(s) if s in self.slot_labels else self.slot_labels.index("UNK"))

            assert len(words) == len(slot_labels)
            examples.append(InputExample(guid=guid, words=words, intent_label=intent_label, slot_labels=slot_labels))
        return examples

    def get_examples(self, mode):
        """
        Args:
            mode: train, dev, test
        """
        data_path = os.path.join(self.args.data_dir, self.args.task, mode)
        logger.info("LOOKING AT {}".format(data_path))
        return self._create_examples(texts=self._read_file(os.path.join(data_path, self.input_text_file)),
                                     intents=self._read_file(os.path.join(data_path, self.intent_label_file)),
                                     slots=self._read_file(os.path.join(data_path, self.slot_labels_file)),
                                     set_type=mode)
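The three files are line-aligned. Line i of seq.in, label and seq.out holds the words, the intent and the space-separated slot tags (one per word) of the same utterance. The stdlib sketch below mimics _create_examples on lines that have already been read. The utterances and label lists are made up, and plain dicts stand in for InputExample.

```python
def create_examples(texts, intents, slots, intent_labels, slot_labels, set_type):
    """Mimic JointProcessor._create_examples on already-read lines."""
    examples = []
    for i, (text, intent, slot) in enumerate(zip(texts, intents, slots)):
        words = text.split()  # split() also collapses double spaces
        # Unknown intents and slot tags fall back to the "UNK" label id
        intent_id = (intent_labels.index(intent) if intent in intent_labels
                     else intent_labels.index("UNK"))
        slot_ids = [slot_labels.index(s) if s in slot_labels
                    else slot_labels.index("UNK") for s in slot.split()]
        # Exactly one slot tag per word, otherwise the files are misaligned
        assert len(words) == len(slot_ids)
        examples.append({"guid": "%s-%d" % (set_type, i), "words": words,
                         "intent_label": intent_id, "slot_labels": slot_ids})
    return examples


# Made-up label lists and utterances for illustration
intent_labels = ["UNK", "PlayMusic", "GetWeather"]
slot_labels = ["PAD", "UNK", "O", "B-genre", "I-genre"]

examples = create_examples(
    texts=["play some  jazz", "book a table"],
    intents=["PlayMusic", "BookRestaurant"],
    slots=["O O B-genre", "O O O"],
    intent_labels=intent_labels, slot_labels=slot_labels, set_type="train")

print(examples[0])
# {'guid': 'train-0', 'words': ['play', 'some', 'jazz'], 'intent_label': 1, 'slot_labels': [2, 2, 3]}
print(examples[1]["intent_label"])  # "BookRestaurant" is not in the list, so UNK: 0
```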

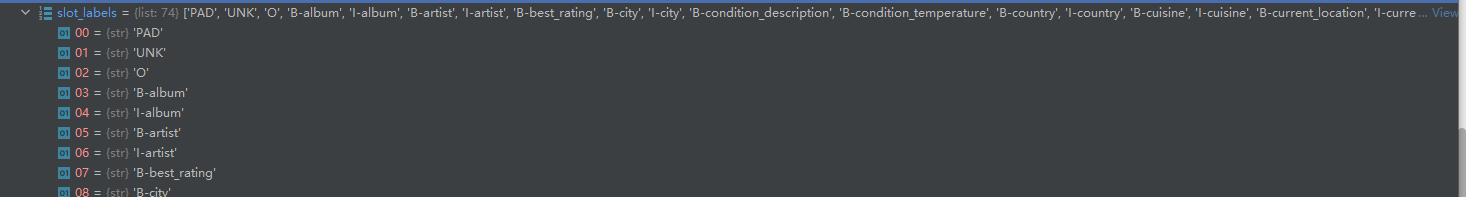

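The get_intent_labels function loads the intent labels from the intent label file (args.intent_label_file, intent_label.txt by default) into a list. An intent's id is its index in that list, as the self.intent_labels.index(intent) call in _create_examples shows.

Likewise, get_slot_labels loads the slot labels from the slot label file (args.slot_label_file, slot_label.txt by default) into a list.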
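Below is a minimal sketch of a label loader that behaves as described above. The function name get_labels is hypothetical. The sketch assumes the label file holds one label per line and sits in data_dir/task, next to the train/dev/test folders that get_examples reads. Unlike a plain line reader, it skips blank lines.

```python
import os


def get_labels(data_dir, task, label_file):
    """Read a label file (one label per line) into a list; hypothetical helper.

    Assumes the file lives at data_dir/task/label_file. Blank lines are skipped.
    A label's id is simply its index in the returned list.
    """
    path = os.path.join(data_dir, task, label_file)
    with open(path, "r", encoding="utf-8") as f:
        return [line.strip() for line in f if line.strip()]
```

Under these assumptions, get_labels(args.data_dir, args.task, args.intent_label_file) would play the role of get_intent_labels(args).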

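Back in load_and_cache_examples.

The next step builds the path cached_features_file and saves the features to that local file, so later runs can load them directly.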

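A short explanation of filter in list(filter(None, "bert-base-uncased")).pop(). filter takes a function (or None) as its first argument and an iterable as its second. It filters the iterable, keeping only the elements that satisfy the condition.

Each element of the iterable is passed to the function, which returns True or False, and the elements for which it returns True are kept. When the first argument is None, filter keeps the elements that are themselves truthy. In Python 3, filter returns an iterator rather than a list, which is why the result is wrapped in list().

Note that a string is iterated character by character, so this expression evaluates to 'd', the last character of "bert-base-uncased". Applied instead to a path split on "/", as in args.model_name_or_path.split("/"), the same expression yields the last path component.

Based on the article "python中的filter()函数" (The filter() function in Python).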
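The behaviour of filter described above can be checked directly. The first case is the expression used in the code. The second applies the same idiom to a slash-separated path. The third shows that a Windows path written with backslashes is not split by "/" at all.

```python
# A string is iterated character by character; every character is truthy,
# so filter(None, ...) keeps them all and pop() returns the last one
chars = list(filter(None, "bert-base-uncased"))
last_char = chars.pop()
print(last_char)  # d

# Splitting on "/" first gives path components; filter(None, ...) drops the
# empty string left by the trailing "/", so pop() returns the directory name
parts = list(filter(None, "models/bert-base-uncased/".split("/")))
print(parts)  # ['models', 'bert-base-uncased']
model_name = parts.pop()
print(model_name)  # bert-base-uncased

# A Windows path uses backslashes, so split("/") leaves it in one piece
win = "C:\\Users\\acer-pc\\Desktop\\JointBERT-master\\bert-base-uncased/"
win_name = list(filter(None, win.split("/"))).pop()
print(win_name)  # C:\Users\acer-pc\Desktop\JointBERT-master\bert-base-uncased

# filter with an ordinary function: keep only the even numbers
evens = list(filter(lambda n: n % 2 == 0, [1, 2, 3, 4]))
print(evens)  # [2, 4]
```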

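The 50 at the end of the cache file name is max_seq_len = 50. Sequences shorter than 50 are padded with 0. The steps inside convert_examples_to_features are fairly involved: the words have to be tokenized and converted to ids (word2id), and then padded.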
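Below is a simplified, stdlib-only sketch of that conversion for a single example. It assumes a toy vocabulary and hypothetical word-piece splits in place of the real BERT tokenizer. Each word is split into sub-tokens. The word's slot label goes on its first sub-token, and the padding label (0, the default ignore_index) goes on the remaining sub-tokens and on [CLS]/[SEP]. Every sequence is then padded with 0 up to max_seq_len. The ids and the special-token handling are assumptions for illustration, not the project's convert_examples_to_features.

```python
# Toy vocabulary with made-up ids; the padding id is 0
VOCAB = {"[PAD]": 0, "[UNK]": 1, "[CLS]": 2, "[SEP]": 3,
         "play": 4, "some": 5, "ja": 6, "##zz": 7}

# Hypothetical word-piece splits; a real WordPiece tokenizer computes these
SPLITS = {"jazz": ["ja", "##zz"]}


def tokenize(word):
    return SPLITS.get(word, [word])


def convert_example(words, intent_id, slot_ids, max_seq_len, pad_label_id=0):
    tokens, labels = [], []
    for word, slot_id in zip(words, slot_ids):
        pieces = tokenize(word)
        tokens.extend(pieces)
        # Real slot label on the first piece, padding label on the rest
        labels.extend([slot_id] + [pad_label_id] * (len(pieces) - 1))

    # Truncate to leave room for [CLS] and [SEP], then add them
    tokens = ["[CLS]"] + tokens[:max_seq_len - 2] + ["[SEP]"]
    labels = [pad_label_id] + labels[:max_seq_len - 2] + [pad_label_id]

    input_ids = [VOCAB.get(t, VOCAB["[UNK]"]) for t in tokens]  # word2id
    attention_mask = [1] * len(input_ids)
    token_type_ids = [0] * len(input_ids)

    # Pad every sequence field with 0 up to max_seq_len
    pad = max_seq_len - len(input_ids)
    return {
        "input_ids": input_ids + [VOCAB["[PAD]"]] * pad,
        "attention_mask": attention_mask + [0] * pad,
        "token_type_ids": token_type_ids + [0] * pad,
        "intent_label_id": intent_id,  # a single integer, not a sequence
        "slot_labels_ids": labels + [pad_label_id] * pad,
    }


feature = convert_example(["play", "some", "jazz"], intent_id=1,
                          slot_ids=[2, 2, 3], max_seq_len=8)
print(feature["input_ids"])        # [2, 4, 5, 6, 7, 3, 0, 0]
print(feature["slot_labels_ids"])  # [0, 2, 2, 3, 0, 0, 0, 0]
```

max_seq_len is 8 here only to keep the output short. The text uses 50.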

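In the end, features is a list with one element per example.

Each element is a feature object whose attributes (the debugger displays them as a dictionary) are input_ids, attention_mask, token_type_ids, intent_label_id and slot_labels_ids.

Each sequence field (input_ids, attention_mask, token_type_ids and slot_labels_ids) holds 50 values because max_seq_len is 50, with 0 as the padding value. intent_label_id is a single integer.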

Next, list comprehensions collect all_input_ids through all_slot_labels_ids from features and convert each one into a tensor of dtype torch.long.

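These tensors are then passed to TensorDataset (see the article "PyTorch 小功能之 TensorDataset", on PyTorch's TensorDataset utility).

TensorDataset bundles tensors together, much like Python's zip. It indexes every tensor along its first dimension, so all the tensors must have the same size in the first dimension.

In short, it packs the first-dimension elements of all_input_ids through all_slot_labels_ids together. This gives 13084 samples for the SNIPS training set, and each sample holds the corresponding row of each of the five tensors.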
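With PyTorch, TensorDataset(all_input_ids, ...)[i] returns a tuple of the i-th slice of each tensor. The pure-Python stand-in below only illustrates that zip-like indexing on nested lists. It is not PyTorch's implementation, and ZipDataset is a hypothetical name.

```python
class ZipDataset:
    """Pure-Python stand-in for TensorDataset's indexing behaviour."""

    def __init__(self, *columns):
        # TensorDataset also requires every tensor to share the first dimension
        if any(len(c) != len(columns[0]) for c in columns):
            raise ValueError("all columns must have the same length")
        self.columns = columns

    def __len__(self):
        return len(self.columns[0])

    def __getitem__(self, index):
        # Sample `index` is the tuple of row `index` from every column
        return tuple(c[index] for c in self.columns)


input_ids = [[2, 4, 3, 0], [2, 5, 6, 3]]
attention_mask = [[1, 1, 1, 0], [1, 1, 1, 1]]
intent_label_ids = [1, 0]

dataset = ZipDataset(input_ids, attention_mask, intent_label_ids)
print(len(dataset))  # 2
print(dataset[1])    # ([2, 5, 6, 3], [1, 1, 1, 1], 0)
```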

Back in main:

train_dataset = load_and_cache_examples(args, tokenizer, mode="train")
dev_dataset = load_and_cache_examples(args, tokenizer, mode="dev")
test_dataset = load_and_cache_examples(args, tokenizer, mode="test")

trainer = Trainer(args, train_dataset, dev_dataset, test_dataset)

The preprocessing above is applied to all three datasets, and then the trainer object is created:

class Trainer(object):
    def __init__(self, args, train_dataset=None, dev_dataset=None, test_dataset=None):
        self.args = args
        self.train_dataset = train_dataset
        self.dev_dataset = dev_dataset
        self.test_dataset = test_dataset

        self.intent_label_lst = get_intent_labels(args)
        self.slot_label_lst = get_slot_labels(args)
        # Use cross entropy ignore index as padding label id so that only real label ids contribute to the loss later
        self.pad_token_label_id = args.ignore_index

        self.config_class, self.model_class, _ = MODEL_CLASSES[args.model_type]
        self.config = self.config_class.from_pretrained(args.model_name_or_path, finetuning_task=args.task)
        self.model = self.model_class.from_pretrained(args.model_name_or_path,
                                                      config=self.config,
                                                      args=args,
                                                      intent_label_lst=self.intent_label_lst,
                                                      slot_label_lst=self.slot_label_lst)

        # GPU or CPU
        self.device = "cuda" if torch.cuda.is_available() and not args.no_cuda else "cpu"
        self.model.to(self.device)

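The Trainer constructor is called. Note the line self.pad_token_label_id = args.ignore_index. It means the padding label value 0 is ignored when the cross-entropy loss is computed, so padded positions add nothing to the loss and contribute no gradient.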
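The effect of ignore_index can be checked with a pure-Python version of mean cross-entropy, written here without PyTorch. It follows the documented behaviour of nn.CrossEntropyLoss(ignore_index=...) with the default mean reduction: ignored targets are excluded from both the sum and the divisor. It is a sketch, not the project's loss code. Unlike PyTorch, it returns 0.0 when every position is ignored.

```python
import math


def cross_entropy(logits, targets, ignore_index=0):
    """Mean cross-entropy over positions whose target is not ignore_index."""
    total, count = 0.0, 0
    for row, target in zip(logits, targets):
        if target == ignore_index:
            continue  # padded position: no loss, hence no gradient
        # -log softmax(row)[target], using the max-shift for numerical stability
        m = max(row)
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[target]
        count += 1
    return total / count if count else 0.0


logits = [[0.0, 0.0],    # real token, target 1
          [0.0, 0.0],    # real token, target 1
          [-5.0, 5.0]]   # padded position, target 0 (= ignore_index)
targets = [1, 1, 0]

print(cross_entropy(logits, targets))                     # 0.6931471805599453, i.e. log(2)
print(cross_entropy(logits, targets, ignore_index=-100))  # about 3.80: the padded row now counts
```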

The pretrained model is then loaded with from_pretrained. At this point, loading of the pretrained model is complete.

Back in main, training and evaluation are run:

if args.do_train:
    trainer.train()

if args.do_eval:
    trainer.load_model()
    trainer.evaluate("test")

The train and evaluate functions are not covered here.
