1 Building basic functions with numpy

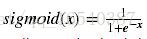

1.1 Sigmoid function, np.exp()

# GRADED FUNCTION: basic_sigmoid

import math

def basic_sigmoid(x):
    """
    Compute sigmoid of x.

    Arguments:
    x -- A scalar

    Return:
    s -- sigmoid(x)
    """
    ### START CODE HERE ### (≈ 1 line of code)
    s = 1/(1+math.exp(-x))
    ### END CODE HERE ###

    return s

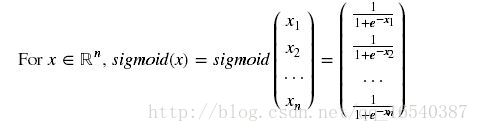

basic_sigmoid(3)

In practice, we rarely use the "math" library in deep learning, because we mostly work with vectors and matrices. This is why "numpy" is so useful.
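To make the limitation concrete, here is a minimal sketch of what happens when a multi-element array is passed to `math.exp`, compared with `np.exp`. The variable names `math_accepts_array` and `y` are introduced only for this illustration and are not part of the original exercise.

```python
import math
import numpy as np

x = np.array([1, 2, 3])

# math.exp expects a single real number, so a multi-element array is rejected.
try:
    math.exp(x)
    math_accepts_array = True
except TypeError:
    math_accepts_array = False

# np.exp is applied element by element and returns an array of the same shape.
y = np.exp(x)

print(math_accepts_array)  # False
print(y.shape)             # (3,)
```

The same reasoning applies to `basic_sigmoid`: because it calls `math.exp` internally, it only works on scalars.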

import numpy as np

# example of np.exp
x = np.array([1, 2, 3])
print(np.exp(x)) # result is (exp(1), exp(2), exp(3))

Output: [ 2.71828183 7.3890561 20.08553692]

# GRADED FUNCTION: sigmoid

import numpy as np # this means you can access numpy functions by writing np.function() instead of numpy.function()

def sigmoid(x):
    """
    Compute the sigmoid of x

    Arguments:
    x -- A scalar or numpy array of any size

    Return:
    s -- sigmoid(x)
    """
    ### START CODE HERE ### (≈ 1 line of code)
    s = 1/(1+np.exp(-x))
    ### END CODE HERE ###

    return s

x = np.array([1, 2, 3])
sigmoid(x)

Output: array([ 0.73105858, 0.88079708, 0.95257413])
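The docstring says the input may be "a scalar or numpy array of any size". As a small sketch of what that means, the snippet below applies the same formula to a scalar and to a 2x2 matrix. The inputs `0.0` and `matrix_in` are arbitrary values chosen for illustration, not data from the original exercise.

```python
import numpy as np

def sigmoid(x):
    """Element-wise sigmoid, same formula as in the graded function."""
    return 1 / (1 + np.exp(-x))

# A scalar input gives a single number.
scalar_out = sigmoid(0.0)

# A 2-D input gives an array of the same shape, computed element by element.
matrix_in = np.array([[-1.0, 0.0], [1.0, 2.0]])  # illustrative values only
matrix_out = sigmoid(matrix_in)

print(scalar_out)        # 0.5
print(matrix_out.shape)  # (2, 2)
```

No loop is needed because `np.exp` and the arithmetic operators already work element-wise on arrays.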

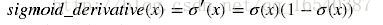

1.2 Gradient of the sigmoid function

# GRADED FUNCTION: sigmoid_derivative

def sigmoid_derivative(x):
    """
    Compute the gradient (also called the slope or derivative) of the sigmoid function with respect to its input x.
    You can store the output of the sigmoid function into variables and then use it to calculate the gradient.

    Arguments:
    x -- A scalar or numpy array

    Return:
    ds -- Your computed gradient.
    """
    ### START CODE HERE ### (≈ 2 lines of code)
    s = sigmoid(x)
    ds = s * (1 - s)
    ### END CODE HERE ###

    return ds

x = np.array([1, 2, 3])
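One way to convince yourself that `s * (1 - s)` really is the slope of the sigmoid is to compare it with a numerical approximation. The sketch below uses a central difference; the step size `eps` and the names `numeric` and `analytic` are assumptions made for this illustration and do not come from the original exercise.

```python
import numpy as np

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def sigmoid_derivative(x):
    s = sigmoid(x)
    return s * (1 - s)

x = np.array([1.0, 2.0, 3.0])
eps = 1e-6  # hypothetical step size, chosen only for illustration

# Central-difference approximation of the slope at each element of x.
numeric = (sigmoid(x + eps) - sigmoid(x - eps)) / (2 * eps)

# Closed-form gradient from the function above.
analytic = sigmoid_derivative(x)

print(np.allclose(numeric, analytic))  # True
```

The two results agree to within floating-point tolerance, which is a quick sanity check rather than a proof.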

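This article covers implementing basic neural-network functions with Numpy, including the sigmoid function and its gradient, array reshaping, row normalization, broadcasting, and the softmax function. It shows the output of each function in detail and stresses the importance of vectorization in deep learning, in particular the efficient built-in functions of the numpy library.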
