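Introduction

R packages for Chinese word segmentation include Rwordseg and jiebaR. Of the two, jiebaR currently offers more features and runs faster. This article shows how to use jiebaR for Chinese word segmentation.

The worker() function

worker() creates a jiebaR worker object, such as a segmenter, a query worker, or a keyword extractor, which then does the actual work.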

worker(type = "mix", dict = DICTPATH, hmm = HMMPATH,

user = USERPATH, idf = IDFPATH, stop_word = STOPPATH, write = T,

qmax = 20, topn = 5, encoding = "UTF-8", detect = T,

symbol = F, lines = 1e+05, output = NULL, bylines = F,

user_weight = "max")

Parameters

- type: The type of jiebaR worker: mix, mp, hmm, full, query, tag, simhash, or keywords.
- dict: Path to the main dictionary. The default is DICTPATH. Used by mix, mp, query, full, tag, simhash, and keywords workers.
- hmm: Path to the Hidden Markov Model. The default is HMMPATH. Used by mix, hmm, query, tag, simhash, and keywords workers.
- user: Path to the user dictionary. The default is USERPATH. Used by mix, full, tag, and mp workers.
- idf: Path to the inverse document frequency file. The default is IDFPATH. Used by simhash and keywords workers.
- stop_word: Path to the stop word dictionary. The default is STOPPATH. Used by simhash, keywords, tagger, and segment workers. The encoding of this file is checked by file_coding, and it should be UTF-8. For segment workers the default STOPPATH is not used, so you must provide another file path.
- write: Whether to write the output to a file or return the result as an object. Only used when the input is a file path. The default is TRUE. Used by segment and speech tagging workers.
- qmax: Maximum query length of words. Used by query workers.
- topn: The number of keywords. Used by simhash and keywords workers.
- encoding: The encoding of the input file. If encoding detection is enabled, this value is ignored.
- detect: Whether to detect the encoding of the input file with the file_coding function. If encoding detection is enabled, the value of encoding is ignored.
- symbol: Whether to keep symbols in the sentence.
- lines: The maximum number of lines to read at one time when the input is a file. Used by segmentation and speech tagging workers.
- output: Path to the output file. By default the worker generates a file name from the system time stamp. Used by segmentation and speech tagging workers.
- bylines: Whether to return the result line by line for input files.
- user_weight: The weight of user dictionary words: "min", "max", or "median".
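To make the parameter list more concrete, here is a minimal sketch that builds two workers differing only in the symbol argument and segments the same sentence with each. The sample sentence is an excerpt of the text used later in this article, and the variable names are illustrative. No expected output is shown because the exact tokens depend on the installed dictionary.

```r
library(jiebaR)

# Default worker: type = "mix", symbols are dropped (symbol = FALSE).
plain_engine <- worker()

# Same segmentation mode, but keep symbols such as punctuation.
symbol_engine <- worker(type = "mix", symbol = TRUE)

text <- "本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例。"

without_symbols <- segment(text, plain_engine)
with_symbols <- segment(text, symbol_engine)

# Compare the token counts; the second vector should also carry punctuation.
length(without_symbols)
length(with_symbols)
```

The other arguments in the list are passed the same way, for example topn for keywords workers or user for a custom dictionary path.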

Usage

When segmenting text, the following three forms are equivalent:

segment(words,worker)

worker<=words

worker[words]

Here, words is the text to segment and worker is an object created by worker().
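The equivalence of the three forms can be checked directly. The sketch below uses a short sample sentence (an assumption, not taken from the article) and compares the three results with identical().

```r
library(jiebaR)

engine <- worker()
text <- "今天北京天气很好"

# Three ways to run the same segmentation.
by_function <- segment(text, engine)
by_operator <- (engine <= text)
by_bracket <- engine[text]

# All three should return the same character vector.
identical(by_function, by_operator)
identical(by_operator, by_bracket)
```

Which form to use is a matter of taste; segment() reads most clearly in scripts, while the operator forms are convenient at the console.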

The new_user_word() function

This function adds user-defined words to a worker.

new_user_word(worker, words, tags = rep("n", length(words)))

Parameters

- worker: a jiebaR worker
- words: the new words, given as a character vector
- tags: the tags for the new words; the default is "n"

The freq() function

freq(x) counts word frequencies in a character vector x. The result can then be used to draw a word cloud.
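As a small illustration, the sketch below runs freq() on a hand-written token vector and then sorts the result. It assumes the result is a data frame with the columns char and freq, which matches the output printed later in this article; the explicit sort is added because that printed output is not ordered by frequency.

```r
library(jiebaR)

# A small token vector, shaped like the result of segment().
tokens <- c("肺炎", "例", "北京市", "例", "肺炎", "例")

counts <- freq(tokens)

# Sort by descending frequency so the most common words come first.
counts <- counts[order(-counts$freq), ]
counts
```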

Examples

Segmenting with the default dictionary

library(jiebaR)

engine<-worker()

words<-"4月28日,在北京市新型冠状病毒肺炎疫情防控工作第318场新闻发布会上,市疾控中心副主任、全国新型冠状病毒肺炎专家组成员庞星火通报,4月27日15时至28日15时,本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例、无症状感染者3例;房山区20例、朝阳区14例、顺义区8例、通州区6例、海淀区3例、丰台区2例、东城区1例、石景山区1例、大兴区1例。社区筛查6例、主动就诊2例、风险人员48例。"

segment(words,engine)

# or: engine<=words

# or: engine[words]

# [1] "4" "月" "28" "日" "在" "北京市" "新型"

# [8] "冠状病毒" "肺炎" "疫情" "防控" "工作" "第" "318"

# [15] "场" "新闻" "发布会" "上" "市" "疾控中心" "副"

# [22] "主任" "全国" "新型" "冠状病毒" "肺炎" "专家" "组成员"

# [29] "庞" "星火" "通报" "4" "月" "27" "日"

# [36] "15" "时至" "28" "日" "15" "时" "本市"

# [43] "新增" "本土" "新冠" "肺炎" "病毒感染者" "56" "例"

# [50] "其中" "确诊" "病例" "53" "例" "无症状" "感染者"

# [57] "3" "例" "房山区" "20" "例" "朝阳区" "14"

# [64] "例" "顺义区" "8" "例" "通州区" "6" "例"

# [71] "海淀区" "3" "例" "丰台区" "2" "例" "东城区"

# [78] "1" "例" "石景山区" "1" "例" "大兴区" "1"

# [85] "例" "社区" "筛查" "6" "例" "主动" "就诊"

# [92] "2" "例" "风险" "人员" "48" "例"

Segmenting with user-defined words

library(jiebaR)

words<-"4月28日,在北京市新型冠状病毒肺炎疫情防控工作第318场新闻发布会上,市疾控中心副主任、全国新型冠状病毒肺炎专家组成员庞星火通报,4月27日15时至28日15时,本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例、无症状感染者3例;房山区20例、朝阳区14例、顺义区8例、通州区6例、海淀区3例、丰台区2例、东城区1例、石景山区1例、大兴区1例。社区筛查6例、主动就诊2例、风险人员48例。"

engine_new_word<-worker()

new_user_word(engine_new_word, c("新闻发布会","新型冠状病毒"))

segment(words,engine_new_word)

# [1] "4" "月" "28" "日" "在" "北京市"

# [7] "新型冠状病毒" "肺炎" "疫情" "防控" "工作" "第"

# [13] "318" "场" "新闻发布会" "上" "市" "疾控中心"

# [19] "副" "主任" "全国" "新型冠状病毒" "肺炎" "专家"

# [25] "组成员" "庞" "星火" "通报" "4" "月"

# [31] "27" "日" "15" "时至" "28" "日"

# [37] "15" "时" "本市" "新增" "本土" "新冠"

# [43] "肺炎" "病毒感染者" "56" "例" "其中" "确诊"

# [49] "病例" "53" "例" "无症状" "感染者" "3"

# [55] "例" "房山区" "20" "例" "朝阳区" "14"

# [61] "例" "顺义区" "8" "例" "通州区" "6"

# [67] "例" "海淀区" "3" "例" "丰台区" "2"

# [73] "例" "东城区" "1" "例" "石景山区" "1"

# [79] "例" "大兴区" "1" "例" "社区" "筛查"

# [85] "6" "例" "主动" "就诊" "2" "例"

# [91] "风险" "人员" "48" "例"

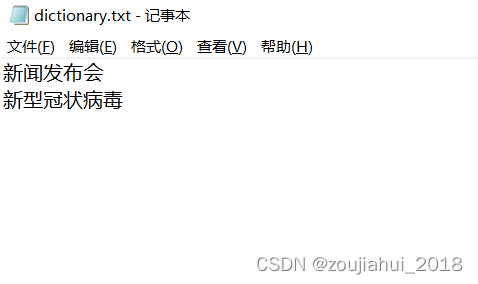

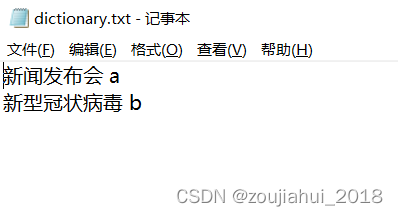

Adding a user dictionary from a text file

library(jiebaR)

words<-"4月28日,在北京市新型冠状病毒肺炎疫情防控工作第318场新闻发布会上,市疾控中心副主任、全国新型冠状病毒肺炎专家组成员庞星火通报,4月27日15时至28日15时,本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例、无症状感染者3例;房山区20例、朝阳区14例、顺义区8例、通州区6例、海淀区3例、丰台区2例、东城区1例、石景山区1例、大兴区1例。社区筛查6例、主动就诊2例、风险人员48例。"

engine_user<-worker(user='dictionary.txt')

segment(words,engine_user)

# [1] "4" "月" "28" "日" "在" "北京市"

# [7] "新型冠状病毒" "肺炎" "疫情" "防控" "工作" "第"

# [13] "318" "场" "新闻发布会" "上" "市" "疾控中心"

# [19] "副" "主任" "全国" "新型冠状病毒" "肺炎" "专家"

# [25] "组成员" "庞" "星火" "通报" "4" "月"

# [31] "27" "日" "15" "时至" "28" "日"

# [37] "15" "时" "本市" "新增" "本土" "新冠"

# [43] "肺炎" "病毒感染者" "56" "例" "其中" "确诊"

# [49] "病例" "53" "例" "无症状" "感染者" "3"

# [55] "例" "房山区" "20" "例" "朝阳区" "14"

# [61] "例" "顺义区" "8" "例" "通州区" "6"

# [67] "例" "海淀区" "3" "例" "丰台区" "2"

# [73] "例" "东城区" "1" "例" "石景山区" "1"

# [79] "例" "大兴区" "1" "例" "社区" "筛查"

# [85] "6" "例" "主动" "就诊" "2" "例"

# [91] "风险" "人员" "48" "例"

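Notes

1. If you write your dictionary in Notepad, the file is sometimes not saved as UTF-8, which can cause all sorts of errors or even crash the software. It is safer to edit the file in Notepad++, set the encoding to UTF-8, and save it as a .txt file.

2. To use Sogou cell dictionaries (搜狗细胞词库), install the cidian package, which converts them into dictionaries that jiebaR can use.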
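Another way to avoid the encoding problem in note 1 is to write the dictionary from R itself. The sketch below is one possible approach using base R only. The entries, the temporary file location, and the "word tag" line layout are assumptions for illustration; the article does not show the contents of dictionary.txt.

```r
# Hypothetical dictionary entries: one word per line, optionally followed
# by a space and a part-of-speech tag.
entries <- c("新闻发布会 n", "新型冠状病毒 n")

# Write to a temporary location so the sketch does not touch real files.
dict_path <- file.path(tempdir(), "dictionary.txt")

# enc2utf8() plus useBytes = TRUE writes the UTF-8 bytes as they are,
# regardless of the session's native encoding.
writeLines(enc2utf8(entries), dict_path, useBytes = TRUE)

# Read the file back as UTF-8 to confirm the round trip.
read_back <- readLines(dict_path, encoding = "UTF-8")
all(read_back == entries)
```

The resulting path can then be passed to worker() through the user argument, in the same way as 'dictionary.txt' above.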

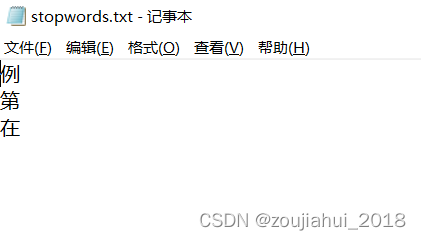

Custom stop words

library(jiebaR)

words<-"4月28日,在北京市新型冠状病毒肺炎疫情防控工作第318场新闻发布会上,市疾控中心副主任、全国新型冠状病毒肺炎专家组成员庞星火通报,4月27日15时至28日15时,本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例、无症状感染者3例;房山区20例、朝阳区14例、顺义区8例、通州区6例、海淀区3例、丰台区2例、东城区1例、石景山区1例、大兴区1例。社区筛查6例、主动就诊2例、风险人员48例。"

engine_user<-worker(user='dictionary.txt',stop_word = "stopwords.txt")

segment(words,engine_user)

# [1] "4" "月" "28" "日" "北京市" "新型冠状病毒"

# [7] "肺炎" "疫情" "防控" "工作" "318" "场"

# [13] "新闻发布会" "上" "市" "疾控中心" "副" "主任"

# [19] "全国" "新型冠状病毒" "肺炎" "专家" "组成员" "庞"

# [25] "星火" "通报" "4" "月" "27" "日"

# [31] "15" "时至" "28" "日" "15" "时"

# [37] "本市" "新增" "本土" "新冠" "肺炎" "病毒感染者"

# [43] "56" "其中" "确诊" "病例" "53" "无症状"

# [49] "感染者" "3" "房山区" "20" "朝阳区" "14"

# [55] "顺义区" "8" "通州区" "6" "海淀区" "3"

# [61] "丰台区" "2" "东城区" "1" "石景山区" "1"

# [67] "大兴区" "1" "社区" "筛查" "6" "主动"

# [73] "就诊" "2" "风险" "人员" "48"
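Comparing this output with the earlier ones, the tokens 在, 第, and 例 have disappeared, which suggests that stopwords.txt lists them one per line (the file itself is not shown, so this is an inference). The effect can be imitated in base R, which may help when checking what a stop-word file should contain. The sketch below filters a hand-copied slice of the earlier result.

```r
# Tokens copied by hand from the earlier segmentation output.
tokens <- c("4", "月", "28", "日", "在", "北京市", "工作", "第", "318", "场")

# Hypothetical stop-word list, inferred from the tokens that vanished.
stop_words <- c("在", "第", "例")

# Keep every token that is not a stop word.
filtered <- tokens[!tokens %in% stop_words]
filtered
```

This is only an after-the-fact filter; passing stop_word to worker(), as shown above, removes the words during segmentation.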

Segmentation with word-frequency counting

library(jiebaR)

words<-"4月28日,在北京市新型冠状病毒肺炎疫情防控工作第318场新闻发布会上,市疾控中心副主任、全国新型冠状病毒肺炎专家组成员庞星火通报,4月27日15时至28日15时,本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例、无症状感染者3例;房山区20例、朝阳区14例、顺义区8例、通州区6例、海淀区3例、丰台区2例、东城区1例、石景山区1例、大兴区1例。社区筛查6例、主动就诊2例、风险人员48例。"

engine_user<-worker(user='dictionary.txt',stop_word = "stopwords.txt")

freq(segment(words,engine_user))

# char freq

# 1 就诊 1

# 2 主动 1

# 3 社区 1

# 4 大兴区 1

# 5 1 3

# 6 丰台区 1

# 7 通州区 1

# 8 筛查 1

# 9 14 1

# 10 3 2

# 11 无症状 1

# 12 病例 1

# ...

Part-of-speech tagging

library(jiebaR)

words<-"4月28日,在北京市新型冠状病毒肺炎疫情防控工作第318场新闻发布会上,市疾控中心副主任、全国新型冠状病毒肺炎专家组成员庞星火通报,4月27日15时至28日15时,本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例、无症状感染者3例;房山区20例、朝阳区14例、顺义区8例、通州区6例、海淀区3例、丰台区2例、东城区1例、石景山区1例、大兴区1例。社区筛查6例、主动就诊2例、风险人员48例。"

engine_user<-worker(user='dictionary.txt',stop_word = "stopwords.txt",type="tag")

segment(words,engine_user)

# x m m m ns b

# "4" "月" "28" "日" "北京市" "新型冠状病毒"

# n n vn vn m q

# "肺炎" "疫情" "防控" "工作" "318" "场"

# a f n n b b

# "新闻发布会" "上" "市" "疾控中心" "副" "主任"

# n b n n l nr

# "全国" "新型冠状病毒" "肺炎" "专家" "组成员" "庞"

# n n x m m m

# "星火" "通报" "4" "月" "27" "日"

# m x m m m n

# "15" "时至" "28" "日" "15" "时"

# n v n x n n

# "本市" "新增" "本土" "新冠" "肺炎" "病毒感染者"

# m r v n m i

# "56" "其中" "确诊" "病例" "53" "无症状"

# n x ns m ns m

# "感染者" "3" "房山区" "20" "朝阳区" "14"

# ns x ns x ns x

# "顺义区" "8" "通州区" "6" "海淀区" "3"

# ns x ns x ns x

# "丰台区" "2" "东城区" "1" "石景山区" "1"

# ns x n vn x b

# "大兴区" "1" "社区" "筛查" "6" "主动"

# v x n n m

# "就诊" "2" "风险" "人员" "48"

Notes

If a word in the user dictionary has no tag specified, it is tagged x in the output.
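The printed result suggests that a tag worker returns a named character vector, with the tags as names and the words as values. Under that assumption, the sketch below rebuilds the first six tokens by hand and shows how to turn them into a table and filter by tag using base R.

```r
# First six tokens of the tagged output, rebuilt by hand.
tagged <- c(x = "4", m = "月", m = "28", m = "日",
            ns = "北京市", b = "新型冠状病毒")

# The tags live in names(); the words are the values.
tag_table <- data.frame(word = unname(tagged), tag = names(tagged),
                        stringsAsFactors = FALSE)

# Keep only the words tagged "ns" (place names).
places <- unname(tagged[names(tagged) == "ns"])
places

# Count how often each tag occurs.
tag_counts <- table(names(tagged))
tag_counts
```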

Keyword extraction

library(jiebaR)

words<-"4月28日,在北京市新型冠状病毒肺炎疫情防控工作第318场新闻发布会上,市疾控中心副主任、全国新型冠状病毒肺炎专家组成员庞星火通报,4月27日15时至28日15时,本市新增本土新冠肺炎病毒感染者56例,其中确诊病例53例、无症状感染者3例;房山区20例、朝阳区14例、顺义区8例、通州区6例、海淀区3例、丰台区2例、东城区1例、石景山区1例、大兴区1例。社区筛查6例、主动就诊2例、风险人员48例。"

engine_user<-worker(user='dictionary.txt',stop_word = "stopwords.txt",type="keywords",topn=10)

engine_user<=words

# 234.784 35.2176 35.2176 26.4953 23.4784 23.4784

# " " "1" "日" "肺炎" "15" "28"

# 23.4784 23.4784 23.4784 23.4784

# "3" "新型冠状病毒" "2" "6"
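In this output the weights appear as the names of the result and the keywords as its values, and several of the top entries are a blank or a bare number. Assuming that structure, the sketch below rebuilds the first four entries by hand, converts them to a data frame, and drops the blank and numeric keywords. The filtering rule is an illustrative choice, not part of jiebaR.

```r
# First four entries of the keyword output, rebuilt by hand.
kw <- c("234.784" = " ", "35.2176" = "1", "35.2176" = "日", "26.4953" = "肺炎")

scores <- data.frame(
  keyword = unname(kw),
  weight = as.numeric(names(kw)),
  stringsAsFactors = FALSE
)

# Drop keywords that consist only of whitespace or digits.
useful <- scores[!grepl("^[[:space:][:digit:]]*$", scores$keyword), ]
useful
```

Adding such tokens to the stop-word file would be another way to keep them out of the keyword list.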
