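Zhihu: Cassie

Link: https://zhuanlan.zhihu.com/p/721908386

Preface

The Qwen team open-sourced the Qwen2-VL-72B model on September 19, 2024 and released a technical report alongside it. This post briefly walks through the technical details of Qwen2-VL-72B, a standout among Chinese-built models.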

1. Contributions

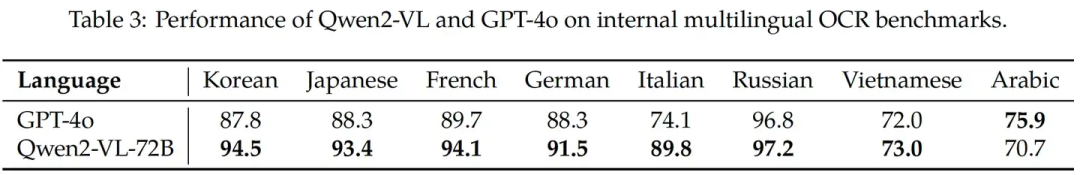

(1) Multilingual model

Qwen's performance on multilingual OCR tasks.

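(2) Supports inputs of arbitrary resolution and aspect ratio (image + video + text), achieving SoTA results on visual benchmarks (DocVQA, InfoVQA, RealWorldQA, MTVQA, MathVista, etc.)

Current LVLMs resize every input image to a fixed size, for example 224×224. The authors argue that this limits the model's ability to capture information at different scales and discards too much information from high-resolution images;

The vision encoder is undertrained; some studies have improved model performance by fine-tuning the vision encoder;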
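To make the fixed-size problem concrete, here is a small arithmetic sketch of how much a square 224×224 resize throws away. The function name `fixed_resize_stats` and the sample image sizes are hypothetical, chosen only for illustration; they do not come from the technical report.

```python
def fixed_resize_stats(width, height, target=224):
    """Return (fraction of pixels kept, x/y scale ratio) for a square resize."""
    # Fraction of the original pixel count that survives the resize (capped at 1).
    kept = min(1.0, (target * target) / (width * height))
    # Horizontal scale divided by vertical scale; 1.0 means no aspect distortion.
    distortion = (target / width) / (target / height)
    return kept, distortion


# Hypothetical inputs: a square thumbnail, a landscape photo, a tall document scan.
for w, h in [(224, 224), (1920, 1080), (800, 2400)]:
    kept, distortion = fixed_resize_stats(w, h)
    print(f"{w}x{h}: keeps {kept:.2%} of pixels, x/y scale ratio {distortion:.2f}")
```

The further an image is from the target size and shape, the fewer pixels survive and the more its aspect ratio is distorted, which is the loss the authors describe for high-resolution inputs.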

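To improve the model's handling of images at different resolutions, the authors removed the absolute position embedding (PE) and introduced 2D Rotary Position Embedding.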
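A minimal sketch of the idea behind a 2D rotary position embedding, under stated assumptions: the feature vector is split in half, one half is rotated by the patch's row index and the other by its column index. This is one common formulation, not necessarily the exact layout used in Qwen2-VL; the function names and the `base` value are illustrative.

```python
import math


def rope_1d(vec, pos, base=10000.0):
    """Rotate the pairs (vec[i], vec[i + half]) by an angle proportional to pos."""
    half = len(vec) // 2
    out = [0.0] * len(vec)
    for i in range(half):
        angle = pos * base ** (-i / half)  # lower frequency for higher i
        c, s = math.cos(angle), math.sin(angle)
        a, b = vec[i], vec[i + half]
        out[i] = a * c - b * s
        out[i + half] = a * s + b * c
    return out


def rope_2d(vec, row, col):
    """Encode a 2D patch position: first half uses the row, second half the column."""
    assert len(vec) % 4 == 0, "dimension must be divisible by 4"
    h = len(vec) // 2
    return rope_1d(vec[:h], row) + rope_1d(vec[h:], col)


def attention_score(q, k, q_pos, k_pos):
    """Dot product of a query and a key after applying the 2D rotation to each."""
    q_rot = rope_2d(q, *q_pos)
    k_rot = rope_2d(k, *k_pos)
    return sum(x * y for x, y in zip(q_rot, k_rot))


q = [0.3, -1.2, 0.7, 0.5, 1.1, -0.4, 0.9, 0.2]
k = [1.0, 0.4, -0.6, 0.8, -0.3, 0.5, 0.1, -1.5]

# Same relative offset (+1 row, +2 columns) at two different absolute positions.
near_origin = attention_score(q, k, (0, 0), (1, 2))
shifted = attention_score(q, k, (3, 4), (4, 6))
print(abs(near_origin - shifted) < 1e-9)
```

Because the score depends only on the row and column offsets between two patches, no fixed-size table of absolute positions is needed, so the same weights apply to a patch grid of any height and width.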
