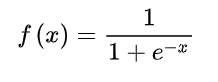

torch.sigmoid()

torch.nn.Sigmoid()

CODE

torch.sigmoid()

import torch

a = torch.randn(4)

print(a)

print(torch.sigmoid(a))

tensor([ 1.2622, -0.3935, -0.0859, 1.0130])

tensor([0.7794, 0.4029, 0.4785, 0.7336])
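The printed values can be checked by hand against the definition sigmoid(x) = 1 / (1 + e^(-x)). The following is a minimal sketch in plain Python. The helper name `sigmoid` and the hard-coded inputs (copied from the printed tensor above) are illustrative, not part of PyTorch.

```python
import math

def sigmoid(x: float) -> float:
    """Logistic sigmoid: maps any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

# Inputs copied from the tensor printed above (rounded to 4 decimals).
inputs = [1.2622, -0.3935, -0.0859, 1.0130]

# Round to 4 decimals to match how PyTorch prints tensors by default.
outputs = [round(sigmoid(x), 4) for x in inputs]
print(outputs)  # [0.7794, 0.4029, 0.4785, 0.7336]
```

Negative inputs map below 0.5 and positive inputs above 0.5, which matches the tensor returned by torch.sigmoid.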

torch.nn.Sigmoid()

import torch

a = torch.tensor([1.2622, -0.3935, -0.0859, 1.0130])

m = torch.nn.Sigmoid()

b = m(a)

print(a)

print(b)

tensor([ 1.2622, -0.3935, -0.0859, 1.0130])

tensor([0.7794, 0.4029, 0.4785, 0.7336])

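torch.nn.Sigmoid, with an uppercase S, is a class (an nn.Module): instantiate it first, then call the instance on a tensor.

torch.sigmoid, with a lowercase s, is a function: call it directly on a tensor.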
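The difference between the two forms can be sketched as follows. The variable names (`sigmoid_layer`, `model`) and the layer sizes in the nn.Sequential example are assumptions chosen only for illustration.

```python
import torch
import torch.nn as nn

x = torch.tensor([1.2622, -0.3935, -0.0859, 1.0130])

# Function form: call it directly on a tensor.
y_func = torch.sigmoid(x)

# Class form: create the module once, then call the instance.
sigmoid_layer = nn.Sigmoid()
y_module = sigmoid_layer(x)

# Both forms compute the same values.
print(torch.allclose(y_func, y_module))

# Because nn.Sigmoid is an nn.Module, it can sit inside a container
# such as nn.Sequential next to other layers.
# The 4 -> 1 linear layer is arbitrary and only for illustration.
model = nn.Sequential(nn.Linear(4, 1), nn.Sigmoid())
out = model(x)
print(out.shape)
```

As a rule of thumb, the class form tends to be convenient when defining a network as a stack of layers, while the function form is convenient inside a forward pass or a loss function.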

Example (torch.sigmoid used inside a loss function):

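import torch
import torch.nn.functional as F

def structure_loss(pred, mask):
    # pred: raw logits, mask: ground truth in [0, 1], both shaped (N, C, H, W)
    # Pixels that differ from their 31x31 neighborhood average get larger weights
    weit = 1 + 5 * torch.abs(F.avg_pool2d(mask, kernel_size=31, stride=1, padding=15) - mask)
    # Weighted BCE, computed on logits (the sigmoid is applied internally)
    wbce = F.binary_cross_entropy_with_logits(pred, mask, reduction='none')
    wbce = (weit * wbce).sum(dim=(2, 3)) / weit.sum(dim=(2, 3))
    # The weighted IoU term needs probabilities, so apply the sigmoid explicitly
    pred = torch.sigmoid(pred)
    inter = ((pred * mask) * weit).sum(dim=(2, 3))
    union = ((pred + mask) * weit).sum(dim=(2, 3))
    wiou = 1 - (inter + 1) / (union - inter + 1)
    return (wbce + wiou).mean()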
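In the function above, pred is passed to F.binary_cross_entropy_with_logits as raw logits and is only converted with torch.sigmoid for the IoU term. The sketch below, using made-up random tensors (the shapes and seed are assumptions), checks that the logits version of BCE agrees with applying torch.sigmoid first and then F.binary_cross_entropy.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

# Made-up data: raw network outputs (logits) and a binary ground-truth mask.
logits = torch.randn(2, 1, 8, 8)
mask = (torch.rand(2, 1, 8, 8) > 0.5).float()

# Variant 1: BCE computed directly on logits (sigmoid applied internally).
bce_from_logits = F.binary_cross_entropy_with_logits(logits, mask, reduction='none')

# Variant 2: explicit sigmoid first, then plain BCE on probabilities.
probs = torch.sigmoid(logits)
bce_from_probs = F.binary_cross_entropy(probs, mask, reduction='none')

# The two variants should agree up to floating-point error.
max_diff = (bce_from_logits - bce_from_probs).abs().max().item()
print(max_diff)
```

The logits variant is generally preferred for numerical stability, which is one plausible reason the sigmoid is applied only after the BCE term has been computed.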

