前置知识

平移不变性

卷积核考虑到图像的某个部分,卷积核本身都是不变得

局部性

考虑图像的某个部分

卷积层

填充

在上述卷积的过程稿中,输入为33但是输出变成22,输出的维度小于输入的维度。而通常我们都需要做许多层的卷积,总不能把数据越搞越小,所以要将数据进行填充,通常填充0。

在卷积后输出通常为如下:

(

n

h

−

k

n

+

1

)

×

(

n

w

−

k

w

+

1

)

(n_h-k_n+1) ×(n_w-k_w+1)

(nh−kn+1)×(nw−kw+1) k为卷积核,h为高,w为宽

如果填充

p

h

p_h

ph行

p

w

p_w

pw列则输出为:

(

n

h

−

k

n

+

1

+

p

h

)

×

(

n

w

−

k

w

+

1

+

p

w

)

(n_h-k_n+1+p_h) ×(n_w-k_w+1+p_w)

(nh−kn+1+ph)×(nw−kw+1+pw),通常为了让输入输出相等,填充行数和列数通常取

p

h

=

k

h

−

1

,

p

w

=

k

w

−

1

p_h=k_h-1,p_w=k_w-1

ph=kh−1,pw=kw−1。

所以通常情况下会取卷积核的size为奇数,使得填充为偶数,这样上下填充的就比较对称了。

步幅

步幅就是每次卷积核移动的数目

输入和输出通道输入 X :

输入:

c

i

×

n

h

×

n

w

c_i×n_h×n_w

ci×nh×nw

卷积核 W

:

c

i

×

c

o

×

k

h

×

k

w

:c_i×c_o×k_h×k_w

:ci×co×kh×kw

偏差 B :

c

i

×

c

o

c_i×c_o

ci×co

输出 Y :

c

o

×

m

h

×

m

w

c_o×m_h×m_w

co×mh×mw

1×1卷积层

这个卷积层将卷积核的高和宽都设置为1,这样在卷积的时候不用考虑元素周围的东西,输入数据和输出数据的高宽是相等的,只是通道的维度改变。因此通常使用1×1卷积层来改变数据的通道维数。这个操作也就相当于一个 c i × c o c_i×c_o ci×co的全连接层。

池化层

卷积层我们学习的结果就是kernel和bias,而池化层就不用学习kernel因为它已经给kernel定义好了,分别为sum,avg,max。与卷积层相比,我觉得池化层更起到了一个数据处理的作用。

基础架构

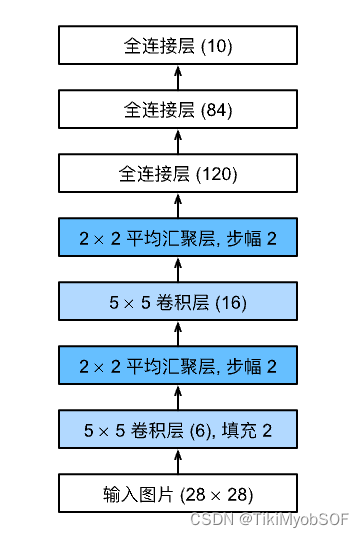

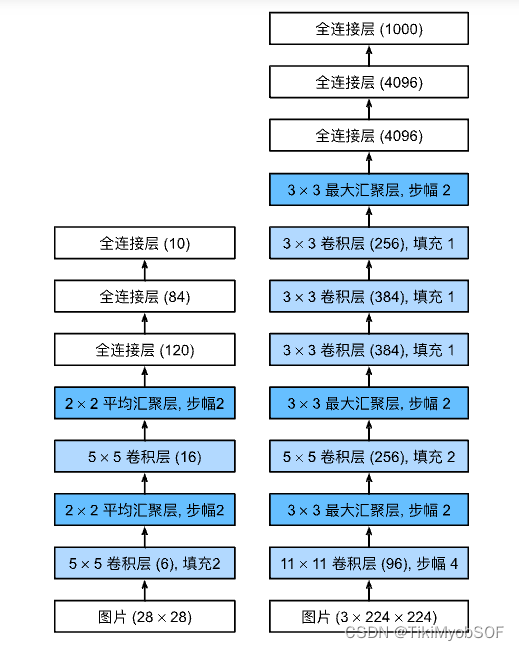

LeNet

是最早发布的卷积申请网络之一,它的组成为:

import torch

from torch import nn

from d2l import torch as d2l

net = nn.Sequential(

nn.Conv2d(1, 6, kernel_size=5, padding=2), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2),

nn.Conv2d(6, 16, kernel_size=5), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2),

nn.Flatten(),

nn.Linear(16 * 5 * 5, 120), nn.Sigmoid(),

nn.Linear(120, 84), nn.Sigmoid(),

nn.Linear(84, 10))

AlexNet

import torch

from torch import nn

from d2l import torch as d2l

net = nn.Sequential(

# 这里,我们使用一个11*11的更大窗口来捕捉对象。

# 同时,步幅为4,以减少输出的高度和宽度。

# 另外,输出通道的数目远大于LeNet

nn.Conv2d(1, 96, kernel_size=11, stride=4, padding=1), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2),

# 减小卷积窗口,使用填充为2来使得输入与输出的高和宽一致,且增大输出通道数

nn.Conv2d(96, 256, kernel_size=5, padding=2), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2),

# 使用三个连续的卷积层和较小的卷积窗口。

# 除了最后的卷积层,输出通道的数量进一步增加。

# 在前两个卷积层之后,汇聚层不用于减少输入的高度和宽度

nn.Conv2d(256, 384, kernel_size=3, padding=1), nn.ReLU(),

nn.Conv2d(384, 384, kernel_size=3, padding=1), nn.ReLU(),

nn.Conv2d(384, 256, kernel_size=3, padding=1), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2),

nn.Flatten(),

# 这里,全连接层的输出数量是LeNet中的好几倍。使用dropout层来减轻过拟合

nn.Linear(6400, 4096), nn.ReLU(),

nn.Dropout(p=0.5),

nn.Linear(4096, 4096), nn.ReLU(),

nn.Dropout(p=0.5),

# 最后是输出层。由于这里使用Fashion-MNIST,所以用类别数为10,而非论文中的1000

nn.Linear(4096, 10))

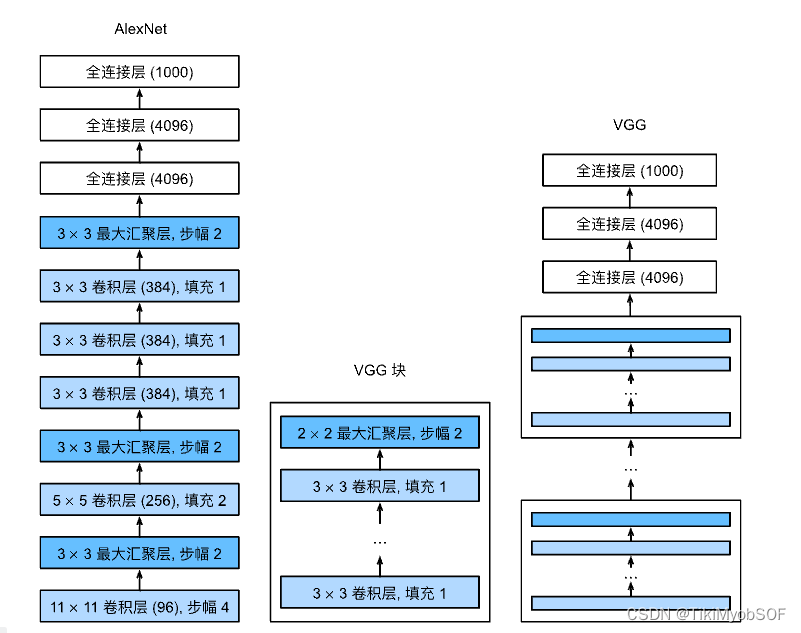

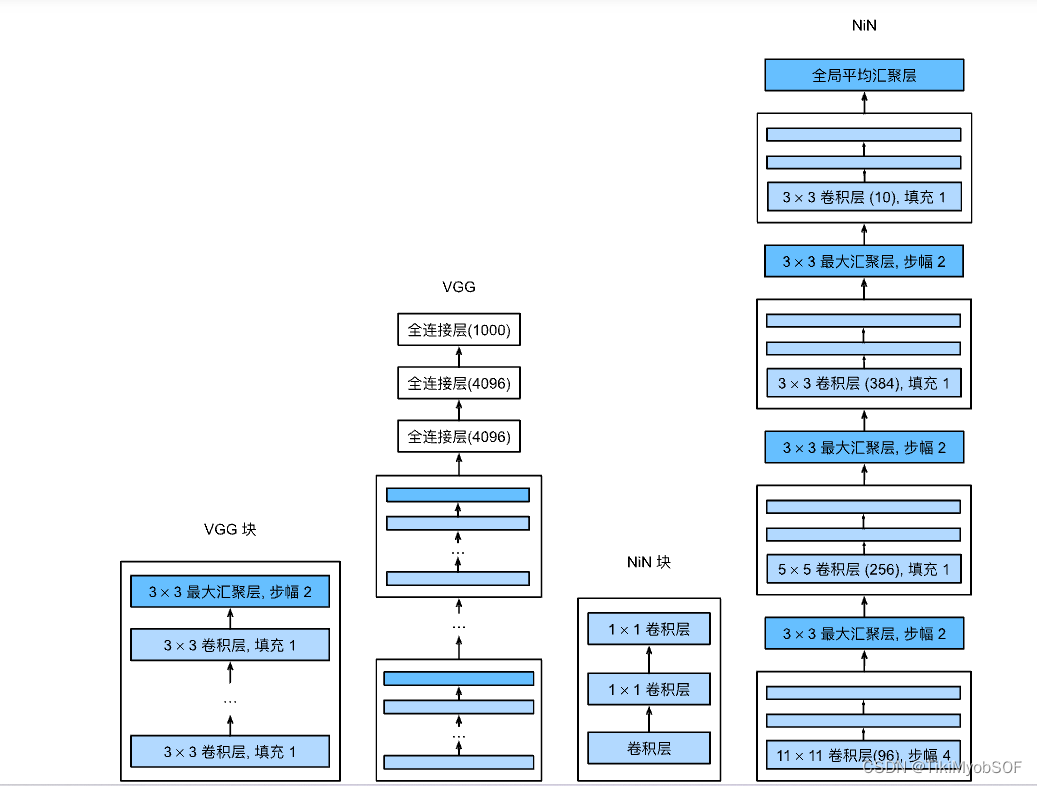

VGG

vgg将若干个网络封装成一个块,而vgg网络是由这些块和若干个全连接组成

由上述AlexNet在0-7层是若干个pooling和cov交替组成组成而vgg网络则是由若干个vgg块组成。

import torch

from torch import nn

from d2l import torch as d2l

conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))

def vgg_block(num_convs, in_channels, out_channels):

layers = []

for _ in range(num_convs):

layers.append(nn.Conv2d(in_channels, out_channels,

kernel_size=3, padding=1))

layers.append(nn.ReLU())

in_channels = out_channels

layers.append(nn.MaxPool2d(kernel_size=2,stride=2))

return nn.Sequential(*layers)

def vgg11(conv_arch):

conv_blks = []

in_channels = 1

# 卷积层部分

for (num_convs, out_channels) in conv_arch:

conv_blks.append(vgg_block(num_convs, in_channels, out_channels))

in_channels = out_channels

return nn.Sequential(

*conv_blks, nn.Flatten(),

# 全连接层部分

nn.Linear(out_channels * 7 * 7, 4096), nn.ReLU(), nn.Dropout(0.5),

nn.Linear(4096, 4096), nn.ReLU(), nn.Dropout(0.5),

nn.Linear(4096, 10))

net = vgg11(conv_arch)

NIN

NiN块的是由一个卷积层个两个1*1的卷积层构成,其卷积层可以有不同的高宽和通道。NiN网络用**全局平均汇聚层(就是一个窗口大小为数据本身的pooling层,会将单个通道内的所有数据计算为一个标量,这个网络最后特征维数就是通道数)**代替了全连接层

import torch

from torch import nn

from d2l import torch as d2l

def nin_block(in_channels, out_channels, kernel_size, strides, padding):

return nn.Sequential(

nn.Conv2d(in_channels, out_channels, kernel_size, strides, padding),

nn.ReLU(),

nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU(),

nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU())

net = nn.Sequential(

nin_block(1, 96, kernel_size=11, strides=4, padding=0),

nn.MaxPool2d(3, stride=2),

nin_block(96, 256, kernel_size=5, strides=1, padding=2),

nn.MaxPool2d(3, stride=2),

nin_block(256, 384, kernel_size=3, strides=1, padding=1),

nn.MaxPool2d(3, stride=2),

nn.Dropout(0.5),

# 标签类别数是10

nin_block(384, 10, kernel_size=3, strides=1, padding=1),

nn.AdaptiveAvgPool2d((1, 1)),

# 将四维的输出转成二维的输出,其形状为(批量大小,10)

nn.Flatten())

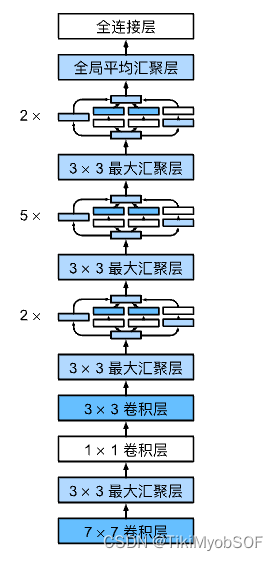

GoogLeNet

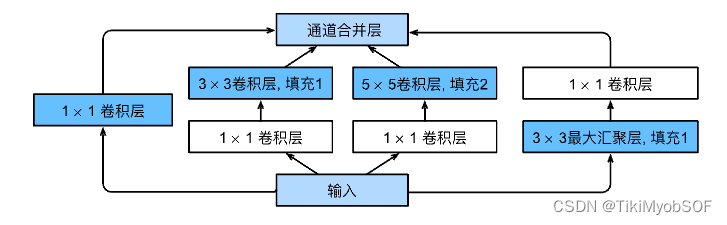

Inception块

这个块的意图就在于对不同网络进行尝试,哪个path优秀就用哪个。而最左边就的1*1conv层就是对通道数进行适应,该path就是防止其他三个path都不优秀,那性能最起码可以维持不变,有些残差块的意味。

架构

import torch

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

class Inception(nn.Module):

# c1--c4是每条路径的输出通道数

def __init__(self, in_channels, c1, c2, c3, c4, **kwargs):

super(Inception, self).__init__(**kwargs)

# 线路1,单1x1卷积层

self.p1_1 = nn.Conv2d(in_channels, c1, kernel_size=1)

# 线路2,1x1卷积层后接3x3卷积层

self.p2_1 = nn.Conv2d(in_channels, c2[0], kernel_size=1)

self.p2_2 = nn.Conv2d(c2[0], c2[1], kernel_size=3, padding=1)

# 线路3,1x1卷积层后接5x5卷积层

self.p3_1 = nn.Conv2d(in_channels, c3[0], kernel_size=1)

self.p3_2 = nn.Conv2d(c3[0], c3[1], kernel_size=5, padding=2)

# 线路4,3x3最大汇聚层后接1x1卷积层

self.p4_1 = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)

self.p4_2 = nn.Conv2d(in_channels, c4, kernel_size=1)

def forward(self, x):

p1 = F.relu(self.p1_1(x))

p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))

p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))

p4 = F.relu(self.p4_2(self.p4_1(x)))

# 在通道维度上连结输出

return torch.cat((p1, p2, p3, p4), dim=1)

b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),

nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

b2 = nn.Sequential(nn.Conv2d(64, 64, kernel_size=1),

nn.ReLU(),

nn.Conv2d(64, 192, kernel_size=3, padding=1),

nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

b3 = nn.Sequential(Inception(192, 64, (96, 128), (16, 32), 32),

Inception(256, 128, (128, 192), (32, 96), 64),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

b4 = nn.Sequential(Inception(480, 192, (96, 208), (16, 48), 64),

Inception(512, 160, (112, 224), (24, 64), 64),

Inception(512, 128, (128, 256), (24, 64), 64),

Inception(512, 112, (144, 288), (32, 64), 64),

Inception(528, 256, (160, 320), (32, 128), 128),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

b5 = nn.Sequential(Inception(832, 256, (160, 320), (32, 128), 128),

Inception(832, 384, (192, 384), (48, 128), 128),

nn.AdaptiveAvgPool2d((1,1)),

nn.Flatten())

net = nn.Sequential(b1, b2, b3, b4, b5, nn.Linear(1024, 10))

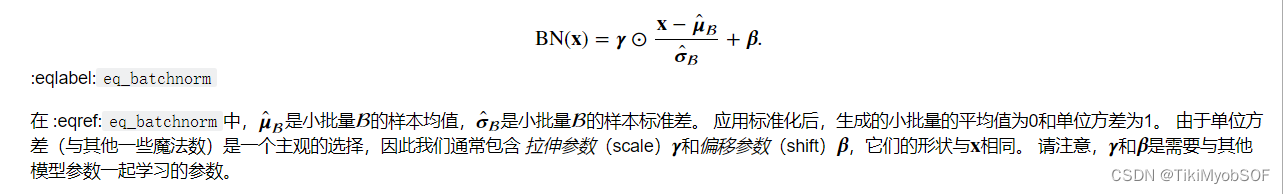

batchNorm

在训练神经网络的时候会有一个现象:由于比较神经网络在梯度下降的时候,随着loss到数据的梯度下降,梯度是慢慢变小的,通常就会使得靠近loss的网络被先训练好,而靠近data的网络由于梯度比较少,通常训练的比较慢。这就导致考虑loss的网络已经被训练好了,但是因为靠近data的网络被改变导致考虑loss的网络白训练了。如果使data在特征维度保持规范化,就可以避免这一问题。

要注意,batchNorm层要在激活函数之前

import torch

from torch import nn

from d2l import torch as d2l

def batch_norm(X, gamma, beta, moving_mean, moving_var, eps, momentum):

# 通过is_grad_enabled来判断当前模式是训练模式还是预测模式

if not torch.is_grad_enabled():

# 如果是在预测模式下,直接使用传入的移动平均所得的均值和方差

X_hat = (X - moving_mean) / torch.sqrt(moving_var + eps)

else:

assert len(X.shape) in (2, 4)

if len(X.shape) == 2:

# 使用全连接层的情况,计算特征维上的均值和方差

mean = X.mean(dim=0)

var = ((X - mean) ** 2).mean(dim=0)

else:

# 使用二维卷积层的情况,计算通道维上(axis=1)的均值和方差。

# 这里我们需要保持X的形状以便后面可以做广播运算

mean = X.mean(dim=(0, 2, 3), keepdim=True)

var = ((X - mean) ** 2).mean(dim=(0, 2, 3), keepdim=True)

# 训练模式下,用当前的均值和方差做标准化

X_hat = (X - mean) / torch.sqrt(var + eps)

# 更新移动平均的均值和方差

moving_mean = momentum * moving_mean + (1.0 - momentum) * mean

moving_var = momentum * moving_var + (1.0 - momentum) * var

Y = gamma * X_hat + beta # 缩放和移位

return Y, moving_mean.data, moving_var.data

# batchnorm层

class BatchNorm(nn.Module):

# num_features:完全连接层的输出数量或卷积层的输出通道数。

# num_dims:2表示完全连接层,4表示卷积层

def __init__(self, num_features, num_dims):

super().__init__()

if num_dims == 2:

shape = (1, num_features)

else:

shape = (1, num_features, 1, 1)

# 参与求梯度和迭代的拉伸和偏移参数,分别初始化成1和0

self.gamma = nn.Parameter(torch.ones(shape))

self.beta = nn.Parameter(torch.zeros(shape))

# 非模型参数的变量初始化为0和1

self.moving_mean = torch.zeros(shape)

self.moving_var = torch.ones(shape)

def forward(self, X):

# 如果X不在内存上,将moving_mean和moving_var

# 复制到X所在显存上

if self.moving_mean.device != X.device:

self.moving_mean = self.moving_mean.to(X.device)

self.moving_var = self.moving_var.to(X.device)

# 保存更新过的moving_mean和moving_var

Y, self.moving_mean, self.moving_var = batch_norm(

X, self.gamma, self.beta, self.moving_mean,

self.moving_var, eps=1e-5, momentum=0.9)

return Y

# 使用案例

net = nn.Sequential(

nn.Conv2d(1, 6, kernel_size=5), BatchNorm(6, num_dims=4), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2),

nn.Conv2d(6, 16, kernel_size=5), BatchNorm(16, num_dims=4), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2), nn.Flatten(),

nn.Linear(16*4*4, 120), BatchNorm(120, num_dims=2), nn.Sigmoid(),

nn.Linear(120, 84), BatchNorm(84, num_dims=2), nn.Sigmoid(),

nn.Linear(84, 10))

# 如何调包

net = nn.Sequential(

nn.Conv2d(1, 6, kernel_size=5), nn.BatchNorm2d(6), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2),

nn.Conv2d(6, 16, kernel_size=5), nn.BatchNorm2d(16), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2), nn.Flatten(),

nn.Linear(256, 120), nn.BatchNorm1d(120), nn.Sigmoid(),

nn.Linear(120, 84), nn.BatchNorm1d(84), nn.Sigmoid(),

nn.Linear(84, 10))

ResNet(残差网络)

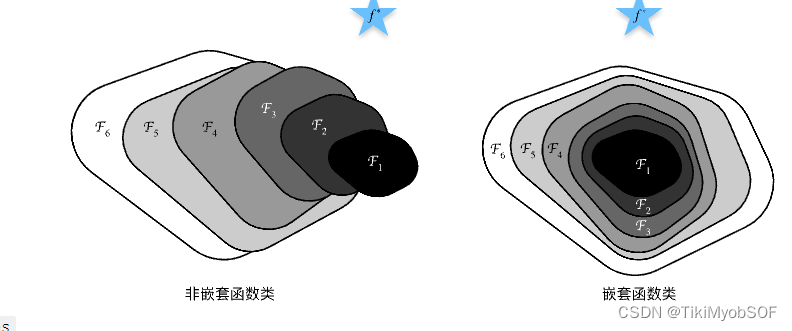

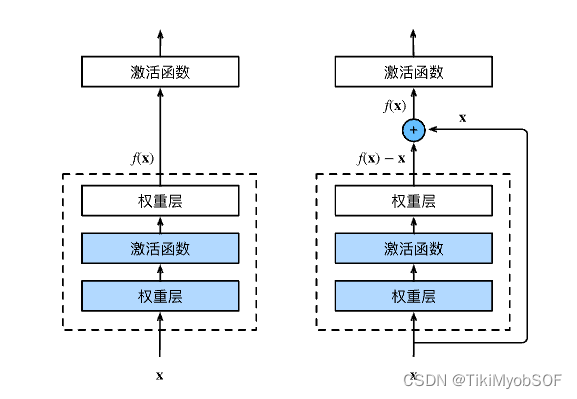

越深的网络一定越好嘛?

不一定,随着网络层数的加深网络训练有可能会朝向不是问题最优解方向走去。

上图的

f

f

f为target,但是随着网络深度的增加,从

F

1

F_1

F1到

F

6

F_6

F6左图里target先近后远,右图里target越来越近。

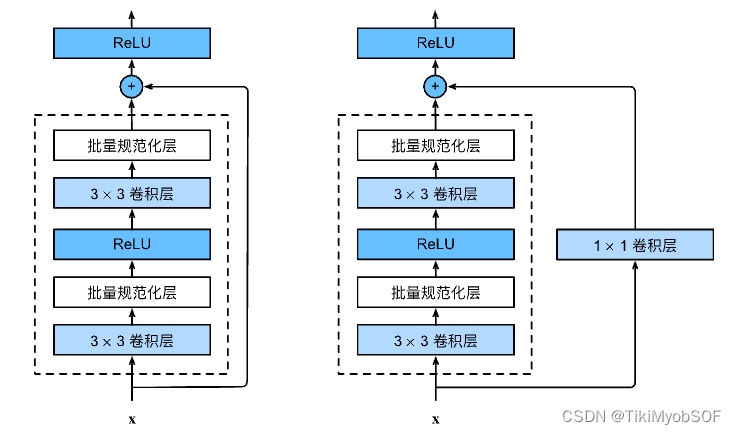

残差块

右图就是残差块,在残差块保证了如果这个层的网络训练不是很好,那训练效果最起码为维持x不动,而不会下降。

右图加了1*1cov层,为了适应通道数。

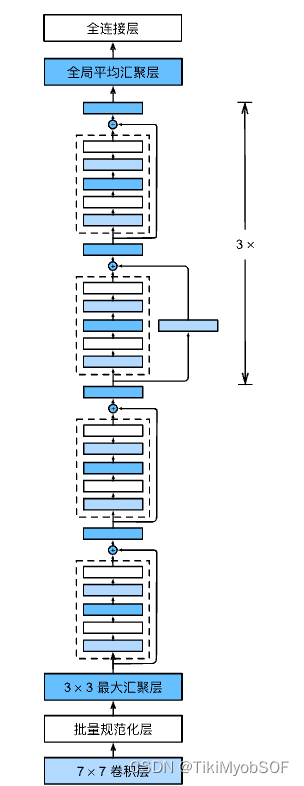

ResNet模型就是使用残差块来构造的模型

import torch

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

# 构造残差块

class Residual(nn.Module): #@save

def __init__(self, input_channels, num_channels,

use_1x1conv=False, strides=1):

super().__init__()

self.conv1 = nn.Conv2d(input_channels, num_channels,

kernel_size=3, padding=1, stride=strides)

self.conv2 = nn.Conv2d(num_channels, num_channels,

kernel_size=3, padding=1)

if use_1x1conv:

self.conv3 = nn.Conv2d(input_channels, num_channels,

kernel_size=1, stride=strides)

else:

self.conv3 = None

self.bn1 = nn.BatchNorm2d(num_channels)

self.bn2 = nn.BatchNorm2d(num_channels)

def forward(self, X):

Y = F.relu(self.bn1(self.conv1(X)))

Y = self.bn2(self.conv2(Y))

if self.conv3:

X = self.conv3(X)

Y += X

return F.relu(Y)

# 由num_residuals个残差块组成的模块

def resnet_(input_channels, num_channels, num_residuals,

first_block=False):

blk = []

for i in range(num_residuals):

if i == 0 and not first_block:

blk.append(Residual(input_channels, num_channels,

use_1x1conv=True, strides=2))

else:

blk.append(Residual(num_channels, num_channels))

return blk

# 残差网络

b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))

b3 = nn.Sequential(*resnet_block(64, 128, 2))

b4 = nn.Sequential(*resnet_block(128, 256, 2))

b5 = nn.Sequential(*resnet_block(256, 512, 2))

net = nn.Sequential(b1, b2, b3, b4, b5,

nn.AdaptiveAvgPool2d((1,1)),# 使用Linear的时候要将n维的数据flatten成2维,其中第一维是batchsize

nn.Flatten(), nn.Linear(512, 10))

1462

1462

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?