先给出个链接,如果对SSD算法原理不了解的可以看下我在bilibili上的视频讲解:

之前看了SSD的论文,但也只是仅仅停留在论文层面,这几天在github上找到了一位大神在一年前用Tensorflow实现了SSD算法。这几天也抽空阅读了下代码,主要分析了下几个重要的模块,接下来做一个简单的总结。

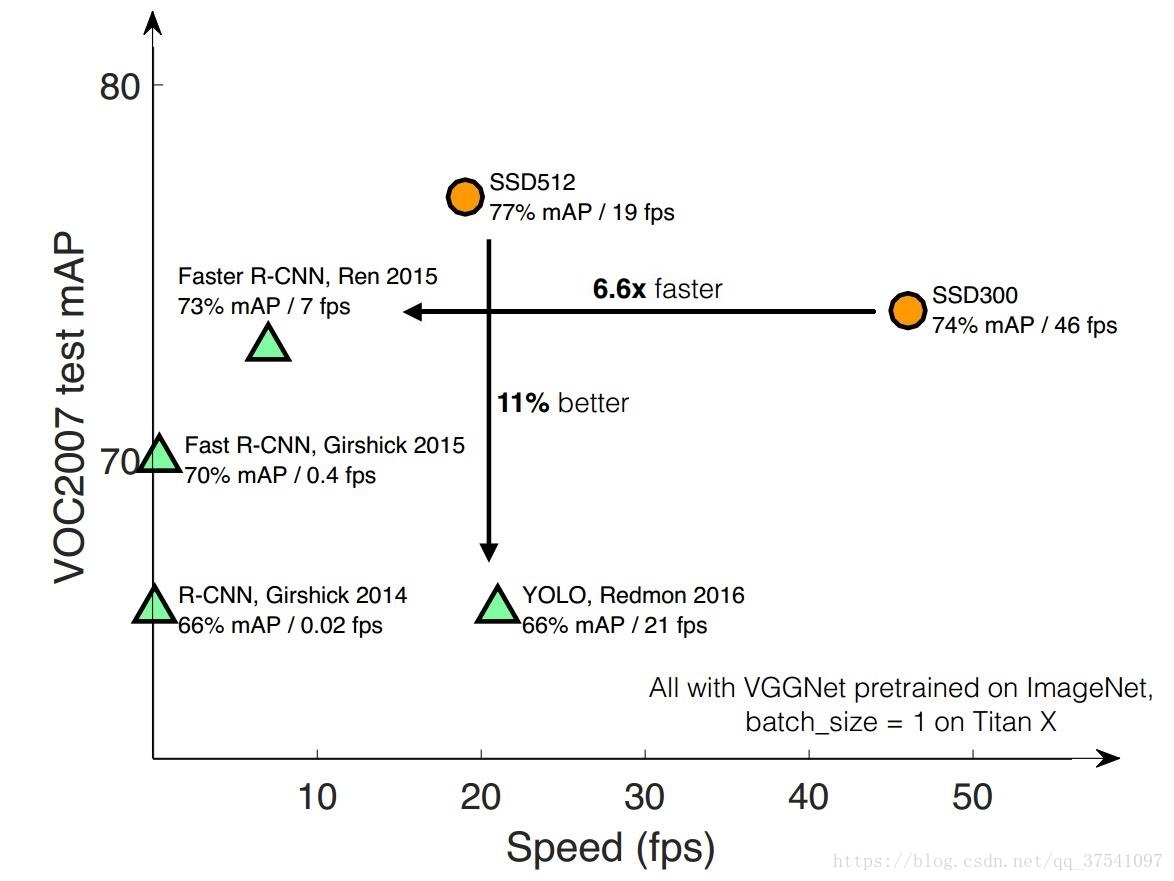

SSD(Single Shot MultiBox Detector)是大神Wei Liu在 ECCV 2016上发表的一种的目标检测算法。对于输入图像大小300x300的版本在VOC2007数据集上达到了72.1%mAP的准确率并且检测速度达到了惊人的58FPS( Faster RCNN:73.2%mAP,7FPS; YOLOv1: 63.4%mAP,45FPS ),500x500的版本达到了75.1%mAP的准确率。当然算法YOLOv2已经赶上了SSD,YOLOv3已经超越SSD,但SSD算法依旧值得研究。

首先放出Tensorflow版的SSD代码,链接。

接下来,通过5个方面(SSD重要参数设置、SSD网络结构、默认框(default box)的生成、默认框与GT的匹配以及偏差计算、Loss函数计算)来分析ssd300x300版本的代码。

SSD重要参数设置

在ssd_vgg_300.py文件中初始化重要的网络参数,主要有用于生成默认框的特征层,每层默认框的默认尺寸以及长宽比例:

default_params = SSDParams(

img_shape=(300, 300), # 图片输入尺寸

num_classes=21, # 预测类别20+1(背景)

no_annotation_label=21,

feat_layers=['block4', 'block7', 'block8', 'block9', 'block10', 'block11'], # 用于生成default box的特征层

feat_shapes=[(38, 38), (19, 19), (10, 10), (5, 5), (3, 3), (1, 1)], # 对应特征层的特征图尺寸

anchor_size_bounds=[0.15, 0.90], # Smin = 0.15, Smax = 0.9

# anchor_size_bounds=[0.20, 0.90],

anchor_sizes=[(21., 45.), # 当前层与下一层的预测默认矩形边框尺寸,即Sk的值,与论文中的计算公式并不对应

(45., 99.),

(99., 153.),

(153., 207.),

(207., 261.),

(261., 315.)],

anchor_ratios=[[2, .5], # 生成默认框的形状比例,不包含1:1的比例

[2, .5, 3, 1./3],

[2, .5, 3, 1./3],

[2, .5, 3, 1./3],

[2, .5],

[2, .5]],

anchor_steps=[8, 16, 32, 64, 100, 300], # 特征图上一步对应在原图上的跨度 anchor_step*feat_shapey与等于300

anchor_offset=0.5, # 偏移

normalizations=[20, -1, -1, -1, -1, -1], # 特征层是否正则处理

prior_scaling=[0.1, 0.1, 0.2, 0.2] # 默认框与真实框的差异缩放比例

)

除了以上参数需要注意外,还要留意下各个种类及其对应的Label

在pascalvoc_common.py文件中给出了相应信息,注意0代表着背景的概率。

VOC_LABELS = {

'none': (0, 'Background'),

'aeroplane': (1, 'Vehicle'),

'bicycle': (2, 'Vehicle'),

'bird': (3, 'Animal'),

'boat': (4, 'Vehicle'),

'bottle': (5, 'Indoor'),

'bus': (6, 'Vehicle'),

'car': (7, 'Vehicle'),

'cat': (8, 'Animal'),

'chair': (9, 'Indoor'),

'cow': (10, 'Animal'),

'diningtable': (11, 'Indoor'),

'dog': (12, 'Animal'),

'horse': (13, 'Animal'),

'motorbike': (14, 'Vehicle'),

'person': (15, 'Person'),

'pottedplant': (16, 'Indoor'),

'sheep': (17, 'Animal'),

'sofa': (18, 'Indoor'),

'train': (19, 'Vehicle'),

'tvmonitor': (20, 'Indoor'),

}

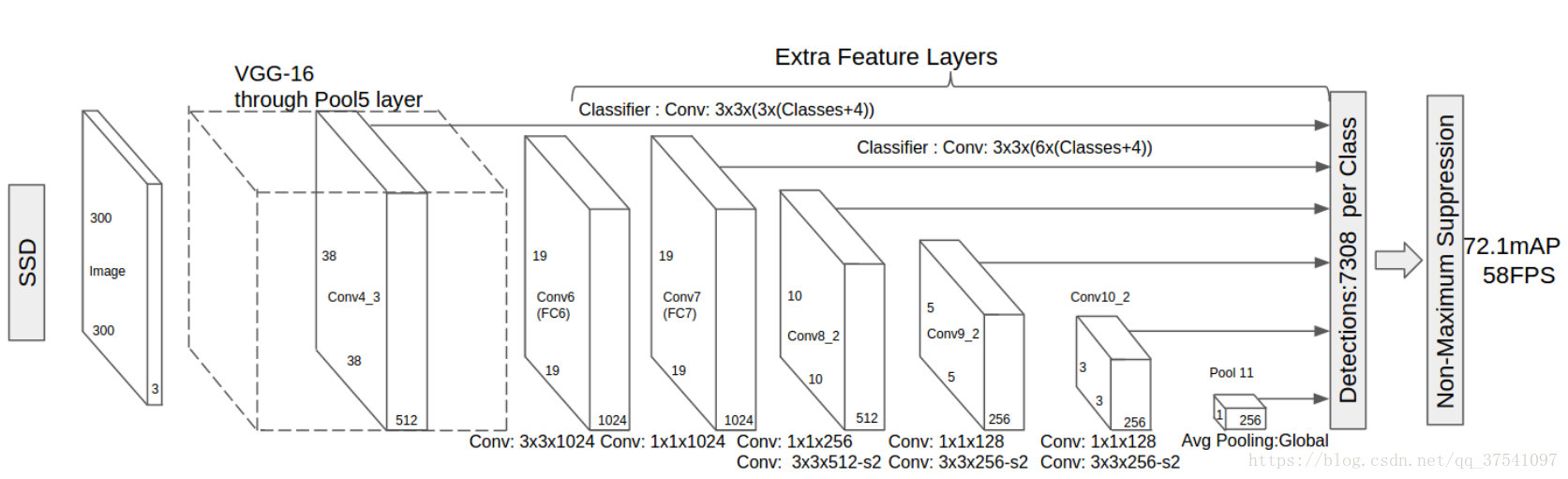

SSD网络结构

上图是SSD原论文中的网络结构图,特征提取网络(前置网络)为VGG-16,可结合SSD中的一些重要参数进行理解。

上图是SSD原论文中的网络结构图,特征提取网络(前置网络)为VGG-16,可结合SSD中的一些重要参数进行理解。

在代码中作者主要利用了Tensorflow的Slim框架搭建的网络。

# 建立SSD网络

def ssd_net(inputs,

num_classes=SSDNet.default_params.num_classes,

feat_layers=SSDNet.default_params.feat_layers,

anchor_sizes=SSDNet.default_params.anchor_sizes,

anchor_ratios=SSDNet.default_params.anchor_ratios,

normalizations=SSDNet.default_params.normalizations,

is_training=True,

dropout_keep_prob=0.5,

prediction_fn=slim.softmax,

reuse=None,

scope='ssd_300_vgg'):

"""SSD net definition.

"""

# if data_format == 'NCHW':

# inputs = tf.transpose(inputs, perm=(0, 3, 1, 2))

# End_points collect relevant activations for external use.

# 用于收集每一层的输出

end_points = {}

with tf.variable_scope(scope, 'ssd_300_vgg', [inputs], reuse=reuse):

# Original VGG-16 blocks.

net = slim.repeat(inputs, 2, slim.conv2d, 64, [3, 3], scope='conv1')

end_points['block1'] = net

net = slim.max_pool2d(net, [2, 2], scope='pool1')

# Block 2.

net = slim.repeat(net, 2, slim.conv2d, 128, [3, 3], scope='conv2')

end_points['block2'] = net

net = slim.max_pool2d(net, [2, 2], scope='pool2')

# Block 3.

net = slim.repeat(net, 3, slim.conv2d, 256, [3, 3], scope='conv3')

end_points['block3'] = net

net = slim.max_pool2d(net, [2, 2], scope='pool3')

# Block 4.

net = slim.repeat(net, 3, slim.conv2d, 512, [3, 3], scope='conv4')

end_points['block4'] = net

net = slim.max_pool2d(net, [2, 2], scope='pool4')

# Block 5.

net = slim.repeat(net, 3, slim.conv2d, 512, [3, 3], scope='conv5')

end_points['block5'] = net

net = slim.max_pool2d(net, [3, 3], stride=1, scope='pool5')

# Additional SSD blocks.

# Block 6: let's dilate the hell out of it!

net = slim.conv2d(net, 1024, [3, 3], rate=6, scope='conv6')

end_points['block6'] = net

net = tf.layers.dropout(net, rate=dropout_keep_prob, training=is_training)

# Block 7: 1x1 conv. Because the fuck.

net = slim.conv2d(net, 1024, [1, 1], scope='conv7')

end_points['block7'] = net

net = tf.layers.dropout(net, rate=dropout_keep_prob, training=is_training)

# Block 8/9/10/11: 1x1 and 3x3 convolutions stride 2 (except lasts).

end_point = 'block8'

with tf.variable_scope(end_point):

net = slim.conv2d(net, 256, [1, 1], scope='conv1x1')

net = custom_layers.pad2d(net, pad=(1, 1))

net = slim.conv2d(net, 512, [3, 3], stride=2, scope='conv3x3', padding='VALID')

end_points[end_point] = net

end_point = 'block9'

with tf.variable_scope(end_point):

net = slim.conv2d(net, 128, [1, 1], scope='conv1x1')

net = custom_layers.pad2d(net, pad=(1, 1))

net = slim.conv2d(net, 256, [3, 3], stride=2, scope='conv3x3', padding='VALID')

end_points[end_point] = net

end_point = 'block10'

with tf.variable_scope(end_point):

net = slim.conv2d(net, 128, [1, 1], scope='conv1x1')

net = slim.conv2d(net, 256, [3, 3], scope='conv3x3', padding='VALID')

end_points[end_point] = net

end_point = 'block11'

with tf.variable_scope(end_point):

net = slim.conv2d(net, 128, [1, 1], scope='conv1x1')

net = slim.conv2d(net, 256, [3, 3], scope='conv3x3', padding='VALID')

end_points[end_point] = net

# Prediction and localisations layers.

# 预测类别和位置调整

predictions = []

logits = []

localisations = []

for i, layer in enumerate(feat_layers):

with tf.variable_scope(layer + '_box'):

# 接受特征层的输出,生成类别和位置预测

p, l = ssd_multibox_layer(end_points[layer],

num_classes,

anchor_sizes[i],

anchor_ratios[i],

normalizations[i])

# 收集每一层的预测结果

predictions.append(prediction_fn(p)) # prediction_fc为softmax函数,预测类别

logits.append(p) # 概率

localisations.append(l) # 预测位置偏移

return predictions, localisations, logits, end_points

网络结构的搭建比较简单,这里在简单分析下接在每个用于预测的特征层后的卷积层(用于生成默认框对应目标类别以及中心点偏移量和长宽调整比例),ssd_multibox_layer函数:

def ssd_multibox_layer(inputs, # 输入的特征层

num_classes,

sizes, # 当前层与下一层的预测默认矩形边框尺寸,即Sk的值

ratios=[1], # 矩形框长宽比

normalization=-1, # 是否正则化

bn_normalization=False):

"""Construct a multibox layer, return a class and localization predictions.

生成预测中心偏移量和宽高调整比例

"""

net = inputs

if normalization > 0:

net = custom_layers.l2_normalization(net, scaling=True)

# Number of anchors.

num_anchors = len(sizes) + len(ratios)

# Location.默认框位置偏移量预测

num_loc_pred = num_anchors * 4

loc_pred = slim.conv2d(net, num_loc_pred, [3, 3], activation_fn=None,

scope='conv_loc')

loc_pred = custom_layers.channel_to_last(loc_pred)

loc_pred = tf.reshape(loc_pred,

tensor_shape(loc_pred, 4)[:-1]+[num_anchors, 4])

# Class prediction.默认框内目标类别预测

num_cls_pred = num_anchors * num_classes

cls_pred = slim.conv2d(net, num_cls_pred, [3, 3], activation_fn=None,

scope='conv_cls')

cls_pred = custom_layers.channel_to_last(cls_pred)

cls_pred = tf.reshape(cls_pred,

tensor_shape(cls_pred, 4)[:-1]+[num_anchors, num_classes])

return cls_pred, loc_pred

默认框(default box)的生成

对与每个用来预测的特征图,按照不同的大小(scale)和长宽比(ratio)生成k个默认框(default box),k的值由scale和ratio共同决定。例如对于特征层'block4',特征图尺寸为38x38,默认框size为(21., 45.)(注意45是下一特征层默认框的大小),默认框ratio为(2., 0.5),故k=4(尺寸21的有1:1, 1:2, 2:1三个默认框,以及论文中额外添加的一个尺寸为sqrt(21, 45)的1:1一个默认框),该层总共会生成38x38x4个默认框。

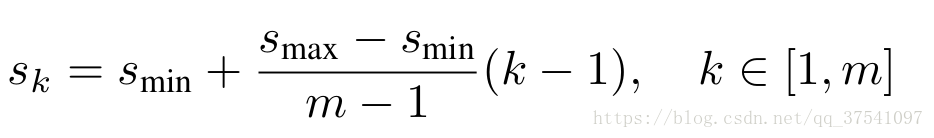

对于默认框的大小论文由给出计算公式:

Smin论文中为0.2代码中为1.5, Smax论文中代码中都为0.9。m是用于预测的特征图个数,k代表层数。但代码中每层默认框大小并不与计算公式对应。

# 生成一层anchor box

def ssd_anchor_one_layer(img_shape, # 原始图像shape

feat_shape, # 特征图shape

sizes, # 默认box大小

ratios, # 默认box长宽比

step, # 特征图上一步对应在原图上的跨度

offset=0.5,

dtype=np.float32):

"""Computer SSD default anchor boxes for one feature layer.

Determine the relative position grid of the centers, and the relative

width and height.

Arguments:

feat_shape: Feature shape, used for computing relative position grids;

size: Absolute reference sizes;

ratios: Ratios to use on these features;

img_shape: Image shape, used for computing height, width relatively to the

former;

offset: Grid offset.

Return:

y, x, h, w: Relative x and y grids, and height and width.

"""

# Compute the position grid: simple way.

# y, x = np.mgrid[0:feat_shape[0], 0:feat_shape[1]]

# y = (y.astype(dtype) + offset) / feat_shape[0]

# x = (x.astype(dtype) + offset) / feat_shape[1]

# Weird SSD-Caffe computation using steps values...

# 计算默认框中心坐标(相对原图)

y, x = np.mgrid[0:feat_shape[0], 0:feat_shape[1]]

y = (y.astype(dtype) + offset) * step / img_shape[0]

x = (x.astype(dtype) + offset) * step / img_shape[1]

# Expand dims to support easy broadcasting.

y = np.expand_dims(y, axis=-1)

x = np.expand_dims(x, axis=-1)

# Compute relative height and width.

# Tries to follow the original implementation of SSD for the order.

num_anchors = len(sizes) + len(ratios) # 默认框的个数

h = np.zeros((num_anchors, ), dtype=dtype) # 初始化高

w = np.zeros((num_anchors, ), dtype=dtype) # 初始化宽

# Add first anchor boxes with ratio=1.

h[0] = sizes[0] / img_shape[0] # 添加长宽比为1的默认框

w[0] = sizes[0] / img_shape[1]

di = 1

if len(sizes) > 1:

h[1] = math.sqrt(sizes[0] * sizes[1]) / img_shape[0] # 添加一组特殊的默认框,长宽比为1,大小为sqrt(s(i) + s(i+1))

w[1] = math.sqrt(sizes[0] * sizes[1]) / img_shape[1]

di += 1

for i, r in enumerate(ratios): # 添加不同比例的默认框(ratios中不含1)

h[i+di] = sizes[0] / img_shape[0] / math.sqrt(r)

w[i+di] = sizes[0] / img_shape[1] * math.sqrt(r)

return y, x, h, w

默认框与GT的匹配、以及偏差计算

该部分主要是默认框的匹配策略(和原论文中的Matching strategy有些不同,该部分仅仅是寻找与之IOU最大的GTbox,并没有通过阈值0.5去筛选正样本),将每个默认框与ground truth box进行匹配,寻找与之IOU(交并比)最大的ground truth box,并计算每个默认框与之匹配的ground truth box的偏差(矩形框中心坐标x、y方向偏移量,以及高h宽w的缩放比例)。

根据程序的运行流程首先是ssd_vgg_300.py文件中的bboxes_encode函数:

def bboxes_encode(self, labels, bboxes, anchors, # lables是GT box对应的标签, bboxes是GT box对应的坐标信息

scope=None): # anchors是生成的默认框

"""Encode labels and bounding boxes.

"""

return ssd_common.tf_ssd_bboxes_encode(

labels, bboxes, anchors,

self.params.num_classes,

self.params.no_annotation_label,

ignore_threshold=0.5,

prior_scaling=self.params.prior_scaling,

scope=scope)接下来我们在分析bboxes_encode函数中的tf_ssd_bboxes_encode函数(位于ssd_common.py文件中):

def tf_ssd_bboxes_encode(labels, # 真实标签

bboxes, # 真实bbox

anchors, # 存放每一个预测层生成的默认框

num_classes,

no_annotation_label,

ignore_threshold=0.5,

prior_scaling=[0.1, 0.1, 0.2, 0.2],

dtype=tf.float32,

scope='ssd_bboxes_encode'):

"""Encode groundtruth labels and bounding boxes using SSD net anchors.

Encoding boxes for all feature layers.

Arguments:

labels: 1D Tensor(int64) containing groundtruth labels;

bboxes: Nx4 Tensor(float) with bboxes relative coordinates;

anchors: List of Numpy array with layer anchors;

matching_threshold: Threshold for positive match with groundtruth bboxes;

prior_scaling: Scaling of encoded coordinates.

Return:

(target_labels, target_localizations, target_scores):

Each element is a list of target Tensors.

"""

with tf.name_scope(scope):

target_labels = [] # 存放匹配到的GTbox的label的容器

target_localizations = [] # 存放匹配到的GTbox的位置信息的容器

target_scores = [] # 存放默认框与匹配到的GTbox的IOU(交并比)

for i, anchors_layer in enumerate(anchors): # 遍历每个预测层的默认框

with tf.name_scope('bboxes_encode_block_%i' % i):

t_labels, t_loc, t_scores = \

tf_ssd_bboxes_encode_layer(labels, bboxes, anchors_layer, # 匹配默认框的ground truth box并计算偏差

num_classes, no_annotation_label,

ignore_threshold,

prior_scaling, dtype)

target_labels.append(t_labels) # 匹配到的ground truth box对应标签

target_localizations.append(t_loc) # 默认框与匹配到的ground truth box的坐标差异

target_scores.append(t_scores) # 默认框与匹配到的ground truth box的IOU(交并比)

return target_labels, target_localizations, target_scores在tf_ssd_bboxes_encode函数中最主要的就是tf_ssd_bboxes_encode_layer函数(需要注意的是:在该函数中仅仅只是寻找与每个默认框最匹配的GTbox,并没有进行筛选正负样本,关于正负样本的选取会在下一部分losses计算中讲述),接下来我们在仔细分析该函数:

def tf_ssd_bboxes_encode_layer(labels, # GTbox类别

bboxes, # GTbox的位置信息

anchors_layer, # 默认框坐标信息(中心点坐标以及宽、高)

num_classes,

no_annotation_label,

ignore_threshold=0.5,

prior_scaling=[0.1, 0.1, 0.2, 0.2],

dtype=tf.float32):

"""Encode groundtruth labels and bounding boxes using SSD anchors from

one layer.

Arguments:

labels: 1D Tensor(int64) containing groundtruth labels;

bboxes: Nx4 Tensor(float) with bboxes relative coordinates;

anchors_layer: Numpy array with layer anchors;

matching_threshold: Threshold for positive match with groundtruth bboxes;

prior_scaling: Scaling of encoded coordinates.

Return:

(target_labels, target_localizations, target_scores): Target Tensors.

"""

# Anchors coordinates and volume.

yref, xref, href, wref = anchors_layer

ymin = yref - href / 2. # 转换到默认框的左上角坐标以及右下角坐标

xmin = xref - wref / 2.

ymax = yref + href / 2.

xmax = xref + wref / 2.

vol_anchors = (xmax - xmin) * (ymax - ymin) # 默认框的面积

# Initialize tensors...

# 初始化各参数

shape = (yref.shape[0], yref.shape[1], href.size)

feat_labels = tf.zeros(shape, dtype=tf.int64) # 存放默认框匹配的GTbox标签

feat_scores = tf.zeros(shape, dtype=dtype) # 存放默认框与匹配的GTbox的IOU(交并比)

feat_ymin = tf.zeros(shape, dtype=dtype) # 存放默认框匹配到的GTbox的坐标信息

feat_xmin = tf.zeros(shape, dtype=dtype)

feat_ymax = tf.ones(shape, dtype=dtype)

feat_xmax = tf.ones(shape, dtype=dtype)

def jaccard_with_anchors(bbox): # 计算重叠度函数

"""Compute jaccard score between a box and the anchors.

"""

int_ymin = tf.maximum(ymin, bbox[0])

int_xmin = tf.maximum(xmin, bbox[1])

int_ymax = tf.minimum(ymax, bbox[2])

int_xmax = tf.minimum(xmax, bbox[3])

h = tf.maximum(int_ymax - int_ymin, 0.)

w = tf.maximum(int_xmax - int_xmin, 0.)

# Volumes.

inter_vol = h * w

union_vol = vol_anchors - inter_vol \

+ (bbox[2] - bbox[0]) * (bbox[3] - bbox[1])

jaccard = tf.div(inter_vol, union_vol)

return jaccard

def intersection_with_anchors(bbox):

"""Compute intersection between score a box and the anchors.

"""

int_ymin = tf.maximum(ymin, bbox[0])

int_xmin = tf.maximum(xmin, bbox[1])

int_ymax = tf.minimum(ymax, bbox[2])

int_xmax = tf.minimum(xmax, bbox[3])

h = tf.maximum(int_ymax - int_ymin, 0.)

w = tf.maximum(int_xmax - int_xmin, 0.)

inter_vol = h * w

scores = tf.div(inter_vol, vol_anchors)

return scores

def condition(i, feat_labels, feat_scores, # 循环条件

feat_ymin, feat_xmin, feat_ymax, feat_xmax):

"""Condition: check label index.

"""

r = tf.less(i, tf.shape(labels)) # tf.shape(labels)GTbox的个数,当i<=tf.shape(labels)是返回True

return r[0]

def body(i, feat_labels, feat_scores, # 循环执行主体

feat_ymin, feat_xmin, feat_ymax, feat_xmax):

"""Body: update feature labels, scores and bboxes.

Follow the original SSD paper for that purpose:

- assign values when jaccard > 0.5;

- only update if beat the score of other bboxes.

寻找该层所有默认框匹配满足条件的GTbox

"""

# Jaccard score.

label = labels[i]

bbox = bboxes[i]

jaccard = jaccard_with_anchors(bbox) # 计算该层所有的默认框与该真实框的交并比

# Mask: check threshold + scores + no annotations + num_classes.

mask = tf.greater(jaccard, feat_scores) # 交并比是否比之前匹配的GTbox大

# mask = tf.logical_and(mask, tf.greater(jaccard, matching_threshold))

mask = tf.logical_and(mask, feat_scores > -0.5) # 暂不清楚意义,但这里并不是为了获取正样本所以并不是大于0.5

mask = tf.logical_and(mask, label < num_classes) # 感觉没有任何意义真实标签label肯定小于num_classes,防止出错?

imask = tf.cast(mask, tf.int64) # 转型

fmask = tf.cast(mask, dtype) # dtype float32

# Update values using mask. 根据mask更新标签和交并比

feat_labels = imask * label + (1 - imask) * feat_labels # 当imask为1时更新标签

feat_scores = tf.where(mask, jaccard, feat_scores) # 当mask为true时更新为jaccard,否则为feat_score

feat_ymin = fmask * bbox[0] + (1 - fmask) * feat_ymin # 当fmask为1.0时更新坐标信息

feat_xmin = fmask * bbox[1] + (1 - fmask) * feat_xmin

feat_ymax = fmask * bbox[2] + (1 - fmask) * feat_ymax

feat_xmax = fmask * bbox[3] + (1 - fmask) * feat_xmax

# Check no annotation label: ignore these anchors...

# interscts = intersection_with_anchors(bbox)

# mask = tf.logical_and(interscts > ignore_threshold,

# label == no_annotation_label)

# # Replace scores by -1.

# feat_scores = tf.where(mask, -tf.cast(mask, dtype), feat_scores)

return [i+1, feat_labels, feat_scores,

feat_ymin, feat_xmin, feat_ymax, feat_xmax]

# Main loop definition.

i = 0

[i, feat_labels, feat_scores,

feat_ymin, feat_xmin,

feat_ymax, feat_xmax] = tf.while_loop(condition, body, # tf.while_loop是一个循环函数condition是循环条件,body是循环体

[i, feat_labels, feat_scores, # 第三项是参数

feat_ymin, feat_xmin,

feat_ymax, feat_xmax])

# Transform to center / size. 转换回中心坐标以及宽高

feat_cy = (feat_ymax + feat_ymin) / 2.

feat_cx = (feat_xmax + feat_xmin) / 2.

feat_h = feat_ymax - feat_ymin

feat_w = feat_xmax - feat_xmin

# Encode features.

feat_cy = (feat_cy - yref) / href / prior_scaling[0] # 默认框中心与匹配的真实框中心坐标偏差

feat_cx = (feat_cx - xref) / wref / prior_scaling[1]

feat_h = tf.log(feat_h / href) / prior_scaling[2] # 高和宽的偏差

feat_w = tf.log(feat_w / wref) / prior_scaling[3]

# Use SSD ordering: x / y / w / h instead of ours.

feat_localizations = tf.stack([feat_cx, feat_cy, feat_w, feat_h], axis=-1)

return feat_labels, feat_localizations, feat_scores

Loss函数计算

SSD的Loss函数包含两项:(1)预测类别损失(2)预测位置偏移量损失:

Loss中的N代表着被挑选出来的默认框个数(包括正样本和负样本),L(los)即位置偏移量损失是Smooth L1 loss(是默认框与GTbox之间的位置偏移与网络预测出的位置偏移量之间的损失),L(conf)即预测类别损失是多类别softmax loss,α的值设置为1. Smooth L1 loss定义为:

L(los)损失函数的定义为:

根据函数定义我们可以看到L(los)损失函数主要有四部分:中心坐标cx的偏移量损失,中心点坐标cy的偏移损失,宽度w的缩放损失以及高度h的缩放损失。式中的l表示的是预测的坐标偏移量,g表示的是默认框与之匹配的GTbox的坐标偏移量。

L(conf)多类别softmax loss损失定义为:

根据函数定义我们可以看到L(conf)损失由两部分组成:正样本(Pos)损失和负样本(Neg)损失。

接下来我们来分析下ssd_vgg_300.py文件中的ssd_losses函数,需要注意的是负样本的选取(论文中Hard negative mining部分),什么是hard negative mining,主要是为了降低假阳性即背景被识别成目标,粘一段百度的回答:对于目标检测中我们会事先标记处ground truth,然后再算法中会生成一系列proposal,这些proposal有跟标记的ground truth重合的也有没重合的,那么重合度(IOU)超过一定阈值(通常0.5)的则认定为是正样本,以下的则是负样本。然后扔进网络中训练。However,这也许会出现一个问题那就是正样本的数量远远小于负样本,这样训练出来的分类器的效果总是有限的,会出现许多false positive,把其中得分较高的这些false positive当做所谓的Hard negative,既然mining出了这些Hard negative,就把这些扔进网络再训练一次,从而加强分类器判别假阳性的能力。

def ssd_losses(logits, localisations, # logits预测类别 localisation预测偏移位置

gclasses, glocalisations, gscores, # gclasses正确类别 glocalisation实际偏移位置 gscores与GT的交并比

match_threshold=0.5,

negative_ratio=3.,

alpha=1.,

label_smoothing=0.,

device='/cpu:0',

scope=None):

with tf.name_scope(scope, 'ssd_losses'):

lshape = tfe.get_shape(logits[0], 5)

num_classes = lshape[-1]

batch_size = lshape[0]

# Flatten out all vectors! 展平所有向量

flogits = []

fgclasses = []

fgscores = []

flocalisations = []

fglocalisations = []

for i in range(len(logits)):

flogits.append(tf.reshape(logits[i], [-1, num_classes]))

fgclasses.append(tf.reshape(gclasses[i], [-1]))

fgscores.append(tf.reshape(gscores[i], [-1]))

flocalisations.append(tf.reshape(localisations[i], [-1, 4]))

fglocalisations.append(tf.reshape(glocalisations[i], [-1, 4]))

# And concat the crap!

logits = tf.concat(flogits, axis=0)

gclasses = tf.concat(fgclasses, axis=0)

gscores = tf.concat(fgscores, axis=0)

localisations = tf.concat(flocalisations, axis=0)

glocalisations = tf.concat(fglocalisations, axis=0)

dtype = logits.dtype

# Compute positive matching mask... 计算正样本数目

pmask = gscores > match_threshold # 交并比是否大于0.5

fpmask = tf.cast(pmask, dtype)

n_positives = tf.reduce_sum(fpmask) # 正样本数目

# Hard negative mining...

no_classes = tf.cast(pmask, tf.int32)

predictions = slim.softmax(logits)

nmask = tf.logical_and(tf.logical_not(pmask), # 交并比小于0.5并大于-0.5的负样本

gscores > -0.5)

fnmask = tf.cast(nmask, dtype) # 转成float型

nvalues = tf.where(nmask, # True时为背景概率,False时为1.0

predictions[:, 0], # 0 是 background

1. - fnmask)

nvalues_flat = tf.reshape(nvalues, [-1])

# Number of negative entries to select.

max_neg_entries = tf.cast(tf.reduce_sum(fnmask), tf.int32) # 所有供选择的负样本数目

n_neg = tf.cast(negative_ratio * n_positives, tf.int32) + batch_size

n_neg = tf.minimum(n_neg, max_neg_entries) # 负样本的个数

val, idxes = tf.nn.top_k(-nvalues_flat, k=n_neg) # 按顺序排获取前k个值,以及对应id

max_hard_pred = -val[-1] # 负样本的背景概率阈值

# Final negative mask.

nmask = tf.logical_and(nmask, nvalues < max_hard_pred) # 交并比小于0.5并大于-0.5的负样本,且概率小于max_hard_pred

fnmask = tf.cast(nmask, dtype)

# Add cross-entropy loss.

with tf.name_scope('cross_entropy_pos'):

loss = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=logits,

labels=gclasses)

loss = tf.div(tf.reduce_sum(loss * fpmask), batch_size, name='value') # fpmask是正样本的mask,正1,负0

tf.losses.add_loss(loss)

with tf.name_scope('cross_entropy_neg'):

loss = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=logits,

labels=no_classes)

loss = tf.div(tf.reduce_sum(loss * fnmask), batch_size, name='value') # fnmask是负样本的mask,负为1,正为0

tf.losses.add_loss(loss)

# Add localization loss: smooth L1, L2, ...

with tf.name_scope('localization'):

# Weights Tensor: positive mask + random negative.

weights = tf.expand_dims(alpha * fpmask, axis=-1)

loss = custom_layers.abs_smooth(localisations - glocalisations)

loss = tf.div(tf.reduce_sum(loss * weights), batch_size, name='value')

tf.losses.add_loss(loss)

SSD算法解析

SSD算法解析

本文深入解析SSD目标检测算法,涵盖重要参数配置、网络结构、默认框生成等关键环节,并探讨损失函数计算及正负样本匹配策略。

本文深入解析SSD目标检测算法,涵盖重要参数配置、网络结构、默认框生成等关键环节,并探讨损失函数计算及正负样本匹配策略。

5221

5221

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?