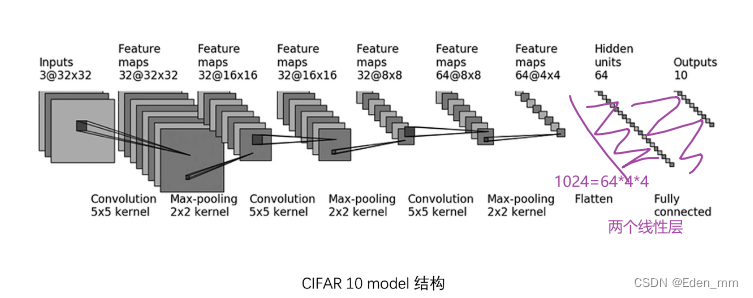

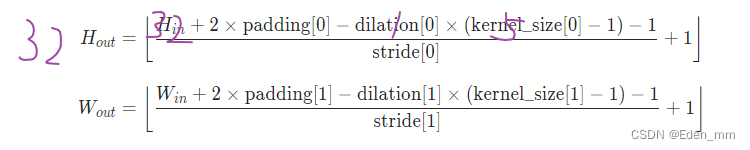

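Using the output-size formula in the official Conv2d documentation (https://pytorch.org/docs/stable/generated/torch.nn.Conv2d.html#torch.nn.Conv2d), we can work out that padding = 2 and stride = 1 keep the spatial size unchanged for these 5×5 convolutions.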
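The Conv2d docs give the output height as H_out = floor((H_in + 2·padding − dilation·(kernel_size − 1) − 1) / stride + 1), and width follows the same rule. The small sketch below solves for the padding. It assumes dilation = 1 (the default), and the helper names `conv2d_out_size` and `same_padding` are hypothetical, chosen only for illustration:

```python
import math


def conv2d_out_size(h_in, kernel_size, stride=1, padding=0, dilation=1):
    """Output height (or width) of torch.nn.Conv2d, per the formula in its docs."""
    return math.floor((h_in + 2 * padding - dilation * (kernel_size - 1) - 1) / stride + 1)


def same_padding(h_in, kernel_size, stride=1, dilation=1, max_padding=10):
    """Smallest padding that keeps the spatial size unchanged, or None if none is found."""
    for padding in range(max_padding + 1):
        if conv2d_out_size(h_in, kernel_size, stride, padding, dilation) == h_in:
            return padding
    return None


# First convolution of the model: 32x32 input, 5x5 kernel, stride 1
print(same_padding(32, kernel_size=5, stride=1))                # 2
print(conv2d_out_size(32, kernel_size=5, stride=1, padding=2))  # 32
```

With stride 1, the size changes by 2·padding − 4, so padding = 2 also preserves the 16×16 and 8×8 inputs of the later convolutions.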

```python
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Linear, Flatten


class Lixinyu(nn.Module):
    def __init__(self):
        super(Lixinyu, self).__init__()
        self.conv1 = Conv2d(3, 32, 5, stride=1, padding=2)
        self.maxpool1 = MaxPool2d(2)
        self.conv2 = Conv2d(32, 32, 5, padding=2)
        self.maxpool2 = MaxPool2d(2)
        self.conv3 = Conv2d(32, 64, 5, padding=2)
        self.maxpool3 = MaxPool2d(2)
        self.flatten = Flatten()
        self.linear1 = Linear(1024, 64)
        self.linear2 = Linear(64, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = self.maxpool1(x)
        x = self.conv2(x)
        x = self.maxpool2(x)
        x = self.conv3(x)
        x = self.maxpool3(x)
        x = self.flatten(x)
        x = self.linear1(x)
        x = self.linear2(x)
        return x


lixinyu = Lixinyu()
print(lixinyu)
```

```text
D:\anaconda\python.exe C:/Users/ASUS/Desktop/tudui/nn_sequential.py
Lixinyu(
  (conv1): Conv2d(3, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
  (maxpool1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (conv2): Conv2d(32, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
  (maxpool2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (conv3): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
  (maxpool3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (flatten): Flatten(start_dim=1, end_dim=-1)
  (linear1): Linear(in_features=1024, out_features=64, bias=True)
  (linear2): Linear(in_features=64, out_features=10, bias=True)
)

Process finished with exit code 0
```
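The printout lists the layers but not the tensor shapes. One way to see where `in_features=1024` of `linear1` comes from is to push a dummy image through the layers one at a time and print each output shape. The sketch below rebuilds the same layers in forward order. The single-image batch and the `layers` list are illustrative choices, not part of the original model:

```python
import torch
from torch import nn

# Same convolution/pooling/flatten layers as Lixinyu, in forward order
layers = [
    nn.Conv2d(3, 32, 5, stride=1, padding=2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 32, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Flatten(),
]

x = torch.ones(1, 3, 32, 32)  # one dummy 3-channel 32x32 image
with torch.no_grad():
    for layer in layers:
        x = layer(x)
        print(f"{layer.__class__.__name__:<10} -> {tuple(x.shape)}")
```

Each padded convolution keeps the height and width, and each `MaxPool2d(2)` halves them (32 → 16 → 8 → 4). `Flatten` therefore yields 64 × 4 × 4 = 1024 features per image, which matches `Linear(1024, 64)`.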

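Next, check that the network you built is really the one you intended.

If you only construct and print the model without passing any data through it, changing `self.linear1 = Linear(1024, 64)` to `self.linear1 = Linear(102400, 64)` raises no error! With the following code, which actually feeds a batch through the network, such a mistake does raise an error: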

```python
import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Linear, Flatten


class Lixinyu(nn.Module):
    def __init__(self):
        super(Lixinyu, self).__init__()
        self.conv1 = Conv2d(3, 32, 5, stride=1, padding=2)
        self.maxpool1 = MaxPool2d(2)
        self.conv2 = Conv2d(32, 32, 5, padding=2)
        self.maxpool2 = MaxPool2d(2)
        self.conv3 = Conv2d(32, 64, 5, padding=2)
        self.maxpool3 = MaxPool2d(2)
        self.flatten = Flatten()
        self.linear1 = Linear(1024, 64)
        self.linear2 = Linear(64, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = self.maxpool1(x)
        x = self.conv2(x)
        x = self.maxpool2(x)
        x = self.conv3(x)
        x = self.maxpool3(x)
        x = self.flatten(x)
        x = self.linear1(x)
        x = self.linear2(x)
        return x


lixinyu = Lixinyu()
print(lixinyu)

# New: a dummy batch of 64 images (3 channels, 32x32).
# Running a forward pass is what exposes a wrong in_features in linear1.
input = torch.ones(64, 3, 32, 32)
output = lixinyu(input)
print(output.shape)
```

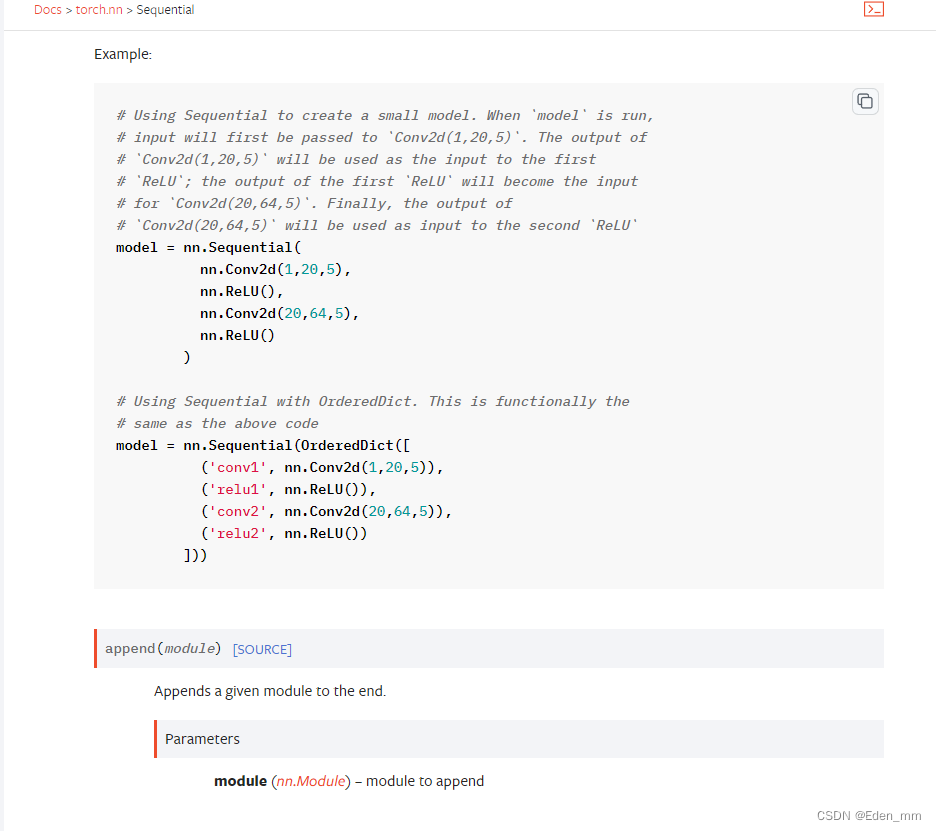
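The wrong `Linear(102400, 64)` is accepted at construction time because `Linear` only allocates a weight of shape (out_features, in_features). Nothing compares that shape with the previous layer's output until `forward` runs. The minimal sketch below reproduces this with a standalone layer. The variable names are illustrative, and the exact error text may differ between PyTorch versions:

```python
import torch
from torch import nn

# Creating the layer only allocates a (64, 102400) weight; no shape check happens here
linear1 = nn.Linear(102400, 64)
print(linear1)  # Linear(in_features=102400, out_features=64, bias=True)

# What Flatten really produces for a batch of 64 images: 64 x (64 * 4 * 4)
flattened = torch.ones(64, 1024)

try:
    linear1(flattened)
    failed = False
except RuntimeError as err:
    # The matrix multiply fails because 1024 != 102400
    failed = True
    print("RuntimeError:", err)
```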

Defining the network with Sequential

```python
import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Linear, Flatten, Sequential


class Lixinyu(nn.Module):
    def __init__(self):
        super(Lixinyu, self).__init__()
        self.model1 = Sequential(Conv2d(3, 32, 5, stride=1, padding=2),
                                 MaxPool2d(2),
                                 Conv2d(32, 32, 5, padding=2),
                                 MaxPool2d(2),
                                 Conv2d(32, 64, 5, padding=2),
                                 MaxPool2d(2),
                                 Flatten(),
                                 Linear(1024, 64),
                                 Linear(64, 10))

    def forward(self, x):
        x = self.model1(x)
        return x


lixinyu = Lixinyu()
print(lixinyu)

input = torch.ones(64, 3, 32, 32)
output = lixinyu(input)
print(output.shape)
```

```text
D:\anaconda\python.exe C:/Users/ASUS/Desktop/tudui/nn_sequential.py
Lixinyu(
  (model1): Sequential(
    (0): Conv2d(3, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
    (2): Conv2d(32, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
    (4): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (5): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
    (6): Flatten(start_dim=1, end_dim=-1)
    (7): Linear(in_features=1024, out_features=64, bias=True)
    (8): Linear(in_features=64, out_features=10, bias=True)
  )
)
torch.Size([64, 10])

Process finished with exit code 0
```
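`Sequential` only changes how the layers are registered: they become `model1.0`, `model1.1`, … instead of `conv1`, `maxpool1`, …, while the computation stays the same. The sketch below checks this. It assumes the two versions are renamed to the hypothetical `LixinyuExplicit` and `LixinyuSeq` so that both fit in one file. Both register their parameters in the same order, so the weights of one can be copied into the other pairwise and the outputs compared:

```python
import torch
from torch import nn


class LixinyuExplicit(nn.Module):
    """First version: one attribute per layer."""
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 32, 5, stride=1, padding=2)
        self.maxpool1 = nn.MaxPool2d(2)
        self.conv2 = nn.Conv2d(32, 32, 5, padding=2)
        self.maxpool2 = nn.MaxPool2d(2)
        self.conv3 = nn.Conv2d(32, 64, 5, padding=2)
        self.maxpool3 = nn.MaxPool2d(2)
        self.flatten = nn.Flatten()
        self.linear1 = nn.Linear(1024, 64)
        self.linear2 = nn.Linear(64, 10)

    def forward(self, x):
        x = self.maxpool1(self.conv1(x))
        x = self.maxpool2(self.conv2(x))
        x = self.maxpool3(self.conv3(x))
        return self.linear2(self.linear1(self.flatten(x)))


class LixinyuSeq(nn.Module):
    """Second version: the same layers wrapped in a Sequential."""
    def __init__(self):
        super().__init__()
        self.model1 = nn.Sequential(
            nn.Conv2d(3, 32, 5, stride=1, padding=2), nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, padding=2), nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, padding=2), nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(1024, 64),
            nn.Linear(64, 10),
        )

    def forward(self, x):
        return self.model1(x)


explicit, seq = LixinyuExplicit(), LixinyuSeq()
print([name for name, _ in seq.named_parameters()][:2])  # ['model1.0.weight', 'model1.0.bias']

# Same parameter order and shapes, only the names differ, so copy them pairwise
with torch.no_grad():
    for p_src, p_dst in zip(explicit.parameters(), seq.parameters()):
        p_dst.copy_(p_src)

x = torch.randn(8, 3, 32, 32)
print(torch.allclose(explicit(x), seq(x)))  # True
```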

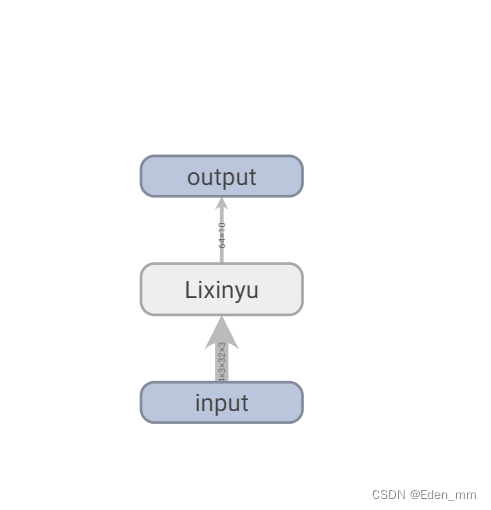

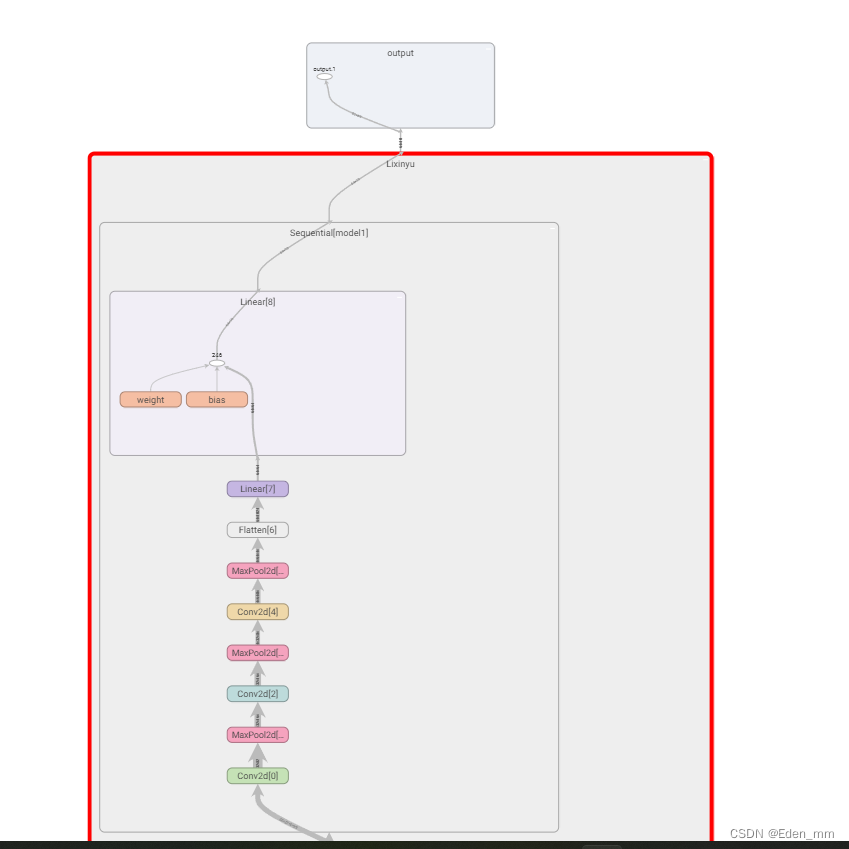

TensorBoard can display the network's layers as a graph:

```python
import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Linear, Flatten, Sequential
from torch.utils.tensorboard import SummaryWriter


class Lixinyu(nn.Module):
    def __init__(self):
        super(Lixinyu, self).__init__()
        self.model1 = Sequential(Conv2d(3, 32, 5, stride=1, padding=2),
                                 MaxPool2d(2),
                                 Conv2d(32, 32, 5, padding=2),
                                 MaxPool2d(2),
                                 Conv2d(32, 64, 5, padding=2),
                                 MaxPool2d(2),
                                 Flatten(),
                                 Linear(1024, 64),
                                 Linear(64, 10))

    def forward(self, x):
        x = self.model1(x)
        return x


lixinyu = Lixinyu()
print(lixinyu)

input = torch.ones(64, 3, 32, 32)
output = lixinyu(input)
print(output.shape)

writer = SummaryWriter("p22")
# New: trace the model with the sample input and write its graph to the "p22" log directory
writer.add_graph(lixinyu, input)
writer.close()
```

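Start TensorBoard with `tensorboard --logdir=p22`, open the Graphs tab, and double-click a node to expand it and see the layers inside.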
