YOLOv8: Object Detection, Segmentation, Pose Estimation, and Tracking


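Preface

After its open-source release, the computer vision model YOLOv8 quickly became a new reference point for object detection, image segmentation, human pose estimation, and keypoint detection. This article runs each task on both images and videos using the ultralytics Python package.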

I. Object Detection

1. Detecting objects in an image

Example code:

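```python
from ultralytics import YOLO
import cv2

# Load a pretrained model
model = YOLO("yolov8n.pt", task='detect')
# model = YOLO("yolov8n.pt")  # the task argument is optional; it is inferred from the model weights

# Run detection on an image
results = model("./images/multi-person.jpeg")

# Draw the detections; plot() returns the annotated image as a numpy array
res = results[0].plot()
cv2.imshow("YOLOv8 Inference", res)
cv2.waitKey(0)  # wait for a key press
cv2.destroyAllWindows()
```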

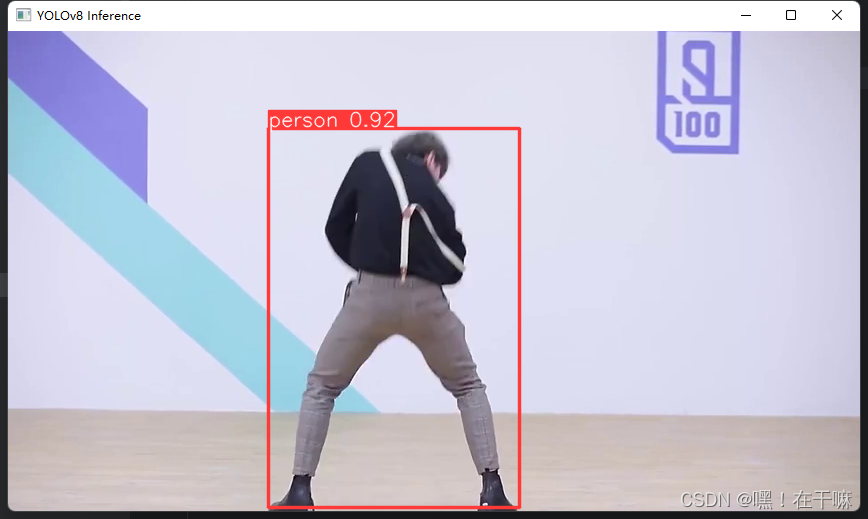
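Besides drawing the results, you often need the raw detections. The following is a minimal sketch that assumes the ultralytics `Results` attributes `boxes.xyxy`, `boxes.conf`, `boxes.cls` and `names`. The helper `summarize_detections` and the demo function `run_demo` are hypothetical names and are not part of ultralytics. The helper converts those values into plain records and drops low-confidence boxes. It works on plain Python lists, so you can check it without downloading a model.

```python
def summarize_detections(xyxy, conf, cls, names, min_conf=0.5):
    """Turn parallel lists of boxes, scores and class ids into readable records.

    xyxy: list of [x1, y1, x2, y2] pixel boxes
    conf: list of confidence scores
    cls: list of class ids (floats or ints)
    names: dict mapping class id -> class name
    """
    records = []
    for box, score, class_id in zip(xyxy, conf, cls):
        if score < min_conf:
            continue  # skip low-confidence detections
        records.append({
            "label": names[int(class_id)],
            "conf": round(float(score), 2),
            "box": [round(v) for v in box],  # whole-pixel coordinates
        })
    records.sort(key=lambda r: r["conf"], reverse=True)  # most confident first
    return records


def run_demo(image_path="./images/multi-person.jpeg"):
    """Print the detections for one image (requires ultralytics; not run automatically)."""
    from ultralytics import YOLO

    r = YOLO("yolov8n.pt")(image_path)[0]
    for rec in summarize_detections(
        r.boxes.xyxy.tolist(), r.boxes.conf.tolist(), r.boxes.cls.tolist(), r.names
    ):
        print(rec)
```

Call `run_demo()` to apply the helper to the article's test image. The 0.5 threshold is an arbitrary choice. Adjust it to match how many false positives you can tolerate.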

2. Detecting objects in a video

Example code:

```python
import cv2
from ultralytics import YOLO

model = YOLO('yolov8n.pt')

# Open the video file
video_path = "./videos/cxk.mp4"
cap = cv2.VideoCapture(video_path)

# Loop over the video frames
while cap.isOpened():
    # Read the next frame
    success, frame = cap.read()

    if success:
        # Run YOLOv8 on the frame
        results = model(frame)

        # Visualize the results
        annotated_frame = results[0].plot()
        cv2.imshow("YOLOv8 Inference", annotated_frame)

        # Press q to stop playback
        if cv2.waitKey(1) & 0xFF == ord("q"):
            break
    else:
        # End of the video: exit the loop
        break

# Release the capture and close the window
cap.release()
cv2.destroyAllWindows()
```

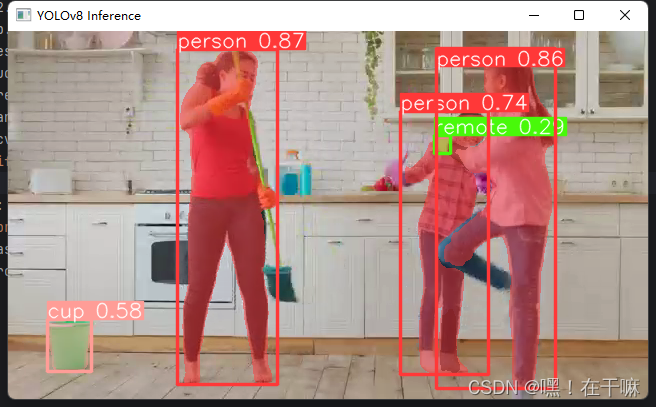
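In the loop above, OpenCV decodes each frame and the model runs once per frame. Ultralytics can also read the video itself. Passing `stream=True` makes `model(source, stream=True)` return a generator that yields one `Results` object per frame, instead of collecting every frame's results in memory. The sketch below is built on that behaviour. `annotate_stream`, its `on_frame` callback and `run_demo` are hypothetical names. The model is passed in as an argument so the loop can be exercised with a stand-in object.

```python
def annotate_stream(model, source, on_frame, max_frames=None):
    """Run `model` over `source` frame by frame and pass each annotated frame to `on_frame`.

    Stops early when `on_frame` returns False or after `max_frames` frames.
    Returns the number of frames handled.
    """
    count = 0
    for result in model(source, stream=True):  # generator: one Results object per frame
        frame = result.plot()  # annotated image
        count += 1
        if on_frame(frame) is False or (max_frames is not None and count >= max_frames):
            break
    return count


def run_demo(video_path="./videos/cxk.mp4"):
    """Show the annotated video; press q to stop (requires ultralytics and OpenCV)."""
    import cv2
    from ultralytics import YOLO

    def show(frame):
        cv2.imshow("YOLOv8 Inference", frame)
        return not (cv2.waitKey(1) & 0xFF == ord("q"))  # False when q is pressed

    annotate_stream(YOLO("yolov8n.pt"), video_path, show)
    cv2.destroyAllWindows()
```

Keeping the display logic in a callback separates inference from presentation. For example, the same loop could write frames to a file instead of showing them.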

II. Segmentation

1. Segmenting an image

Example code:

```python
from ultralytics import YOLO
import cv2

# Load the segmentation model
model = YOLO('yolov8n-seg.pt')

results = model('./images/multi-person.jpeg')
res = results[0].plot(boxes=False)  # boxes=False draws only the masks; True also draws the bounding boxes
cv2.imshow("YOLOv8 Inference", res)
cv2.waitKey(0)
cv2.destroyAllWindows()
```

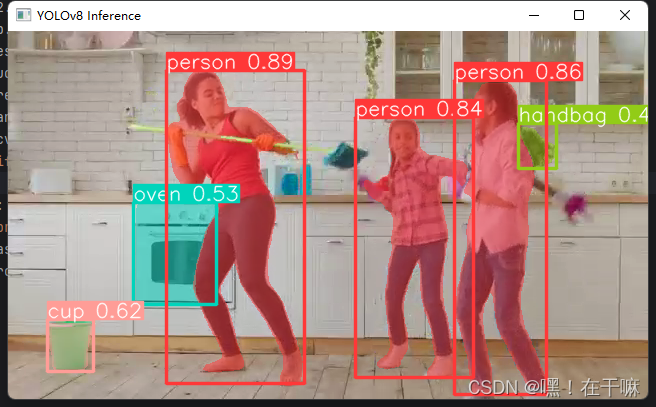
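The segmentation result also exposes the masks themselves. In ultralytics, `results[0].masks` is `None` when nothing was segmented. Otherwise `masks.xy` is a list with one polygon (an array of pixel coordinates) per object. As one way to use that output, the sketch below applies the shoelace formula to compute each object's area in pixels. `polygon_area` and `run_demo` are illustrative names, not library functions.

```python
def polygon_area(points):
    """Area of a simple polygon given as [(x, y), ...], via the shoelace formula."""
    n = len(points)
    if n < 3:
        return 0.0  # fewer than three vertices enclose no area
    s = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the polygon
        s += x1 * y2 - x2 * y1
    return abs(s) / 2.0


def run_demo(image_path="./images/multi-person.jpeg"):
    """Print the pixel area of every segmented object (requires ultralytics)."""
    from ultralytics import YOLO

    r = YOLO("yolov8n-seg.pt")(image_path)[0]
    if r.masks is None:
        print("no objects segmented")
        return
    for class_id, poly in zip(r.boxes.cls.tolist(), r.masks.xy):
        print(r.names[int(class_id)], polygon_area(poly.tolist()))
```

The polygon is an outline of the mask, so its area only approximates the mask's pixel count. That approximation is usually fine for comparing object sizes.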

2. Segmenting a video

Example code:

```python
import cv2
from ultralytics import YOLO

model = YOLO('yolov8n-seg.pt', task='segment')

video_path = "./videos/mother_wx.mp4"
cap = cv2.VideoCapture(video_path)

while cap.isOpened():
    success, frame = cap.read()

    if success:
        results = model(frame)
        annotated_frame = results[0].plot()
        cv2.imshow("YOLOv8 Inference", annotated_frame)

        if cv2.waitKey(1) & 0xFF == ord("q"):
            break
    else:
        break

cap.release()
cv2.destroyAllWindows()
```

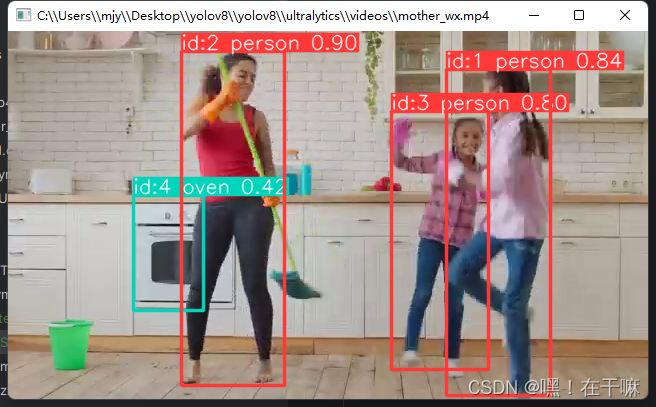

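III. Tracking

1. Tracking objects in a video

Example code (the only difference from plain detection is that each object also gets a tracking ID):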

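```python
from ultralytics import YOLO

model = YOLO('yolov8n.pt', task='detect')

# track() runs detection plus a multi-object tracker that assigns an ID to each object;
# show=True displays the annotated video while it is processed
results = model.track(source="./videos/mother_wx.mp4", show=True)
```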

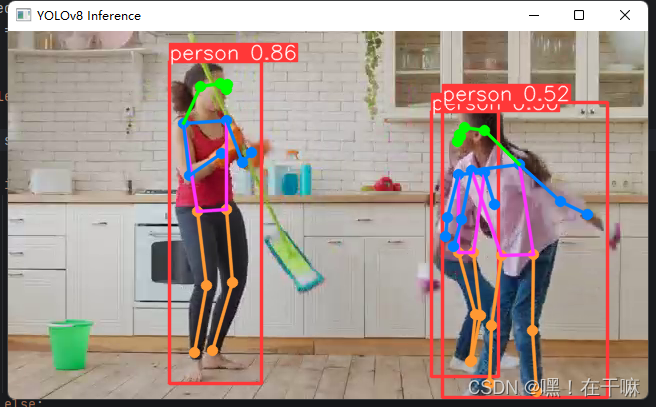
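When `show=True` is not enough, you can read the IDs from the results. For results returned by `model.track()`, `boxes.id` holds one ID per box. It can be `None` on frames where the tracker has not assigned any IDs. `boxes.xywh` holds box centers and sizes. The sketch below uses the hypothetical helper `update_tracks` to collect each ID's center points over time. That history is the raw material for drawing trajectories or counting distinct IDs. `run_demo` is likewise an illustrative name.

```python
from collections import defaultdict


def update_tracks(history, ids, xywh):
    """Append this frame's box centers to each track's history.

    history: dict mapping track id -> list of (cx, cy) centers
    ids: list of track ids for this frame, or None if no ids were assigned
    xywh: list of [cx, cy, w, h] boxes aligned with `ids`
    """
    if ids is None:
        return history  # tracker produced no ids on this frame
    for track_id, (cx, cy, _w, _h) in zip(ids, xywh):
        history[int(track_id)].append((cx, cy))
    return history


def run_demo(video_path="./videos/mother_wx.mp4"):
    """Track a video and report how many distinct ids appeared (requires ultralytics)."""
    from ultralytics import YOLO

    model = YOLO("yolov8n.pt")
    history = defaultdict(list)
    for r in model.track(source=video_path, stream=True):
        ids = None if r.boxes.id is None else r.boxes.id.int().tolist()
        update_tracks(history, ids, r.boxes.xywh.tolist())
    print("distinct track ids:", len(history))
```

The number of distinct IDs can exceed the number of real objects, because a tracker may assign a new ID after an occlusion.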

IV. Pose Estimation

1. Pose estimation on an image

Example code:

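```python
from ultralytics import YOLO
import cv2

# Load the pose estimation model
model = YOLO('yolov8n-pose.pt')

results = model('./images/multi-person.jpeg')
res = results[0].plot()  # draws boxes and skeleton keypoints
cv2.imshow("YOLOv8 Inference", res)
cv2.waitKey(0)
cv2.destroyAllWindows()
```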

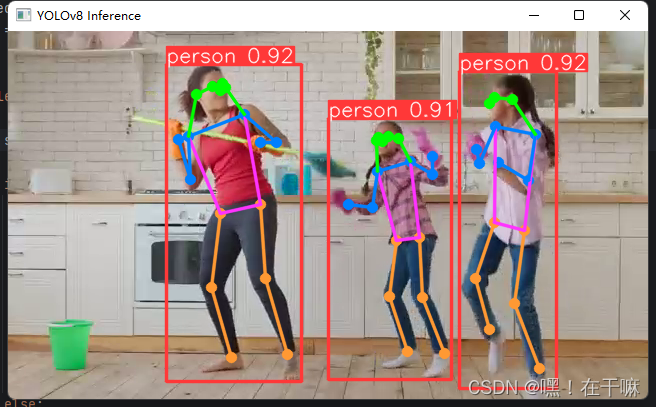
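The pose model returns the keypoints as well as the drawing. `results[0].keypoints.xy` holds 17 (x, y) points for each person. The sketch below assumes the COCO keypoint order used by the YOLOv8 pose weights: indices 5, 7 and 9 are the left shoulder, elbow and wrist. The hypothetical helper `joint_angle` computes the angle at a joint from three keypoints, for example the left elbow from the shoulder, elbow and wrist. `run_demo` is also an illustrative name.

```python
import math

# COCO keypoint indices (assumed order of the YOLOv8 pose model)
LEFT_SHOULDER, LEFT_ELBOW, LEFT_WRIST = 5, 7, 9


def joint_angle(a, b, c):
    """Angle ABC in degrees (0..180) at vertex b, for 2-D points a, b, c."""
    bax, bay = a[0] - b[0], a[1] - b[1]
    bcx, bcy = c[0] - b[0], c[1] - b[1]
    dot = bax * bcx + bay * bcy
    cross = bax * bcy - bay * bcx
    return math.degrees(math.atan2(abs(cross), dot))  # robust even near 0 or 180 degrees


def run_demo(image_path="./images/multi-person.jpeg"):
    """Print the left elbow angle of every detected person (requires ultralytics)."""
    from ultralytics import YOLO

    r = YOLO("yolov8n-pose.pt")(image_path)[0]
    if r.keypoints is None:
        return
    for person in r.keypoints.xy.tolist():
        angle = joint_angle(person[LEFT_SHOULDER], person[LEFT_ELBOW], person[LEFT_WRIST])
        print(f"left elbow angle: {angle:.1f} degrees")
```

Using `atan2` of the cross and dot products avoids the rounding problems that `acos` of a normalized dot product has near 0 and 180 degrees.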

2. Pose estimation on a video

Example code:

```python
import cv2
from ultralytics import YOLO

model = YOLO('yolov8n-pose.pt', task='pose')

video_path = "./videos/mother_wx.mp4"
cap = cv2.VideoCapture(video_path)

while cap.isOpened():
    success, frame = cap.read()

    if success:
        results = model(frame)
        annotated_frame = results[0].plot()
        cv2.imshow("YOLOv8 Inference", annotated_frame)

        if cv2.waitKey(1) & 0xFF == ord("q"):
            break
    else:
        break

cap.release()
cv2.destroyAllWindows()
```
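In video, joints that are occluded or out of frame tend to be the least reliable keypoints. `keypoints.conf` holds a per-keypoint confidence; it is `None` when the model provides none. You can use it to skip weak keypoints before measuring anything. This is a minimal sketch: `visible_keypoints` and `run_demo` are hypothetical names, and the 0.5 threshold is an arbitrary assumption to tune.

```python
def visible_keypoints(xy, conf, threshold=0.5):
    """Return [(index, x, y), ...] for keypoints whose confidence reaches `threshold`.

    xy: list of [x, y] for one person
    conf: list of scores aligned with xy, or None if no per-keypoint confidence exists
    """
    if conf is None:
        return [(i, x, y) for i, (x, y) in enumerate(xy)]  # nothing to filter on
    return [(i, x, y) for i, ((x, y), c) in enumerate(zip(xy, conf)) if c >= threshold]


def run_demo(video_path="./videos/mother_wx.mp4"):
    """Print how many keypoints per person pass the threshold on each frame."""
    from ultralytics import YOLO

    model = YOLO("yolov8n-pose.pt")
    for frame_idx, r in enumerate(model(video_path, stream=True)):
        if r.keypoints is None or r.keypoints.conf is None:
            continue
        for xy, conf in zip(r.keypoints.xy.tolist(), r.keypoints.conf.tolist()):
            print(frame_idx, len(visible_keypoints(xy, conf)))
```

Keeping the original keypoint index in each tuple lets later code still tell which joint a surviving point belongs to.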
