Preface

Stochastic gradient descent (SGD) is an extension of gradient descent.

I. How It Differs from Gradient Descent

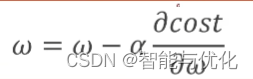

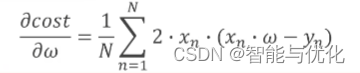

Gradient descent uses the following formula, which is based on the average loss over all of the data:

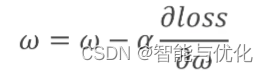

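Stochastic gradient descent instead updates the weight using the loss of a single sample only. The noise in these per-sample updates helps keep training from getting stuck at saddle points.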
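For reference, the two update rules can be written out for the linear model $\hat{y} = x \cdot w$ with squared-error loss, which is the model the example in this post uses. The symbols $\alpha$ (learning rate) and $N$ (number of samples) are notation assumed here for illustration and are not taken from the original figures.

$$
\text{Gradient descent:}\quad w \leftarrow w - \alpha\,\frac{\partial\,\mathrm{cost}}{\partial w},
\qquad
\frac{\partial\,\mathrm{cost}}{\partial w} = \frac{1}{N}\sum_{n=1}^{N} 2\,x_n\,(x_n w - y_n)
$$

$$
\text{SGD:}\quad w \leftarrow w - \alpha\,\frac{\partial\,\mathrm{loss}_n}{\partial w},
\qquad
\frac{\partial\,\mathrm{loss}_n}{\partial w} = 2\,x_n\,(x_n w - y_n)
$$

The only difference is whether the gradient is averaged over all $N$ samples before each update or taken from one sample $n$ at a time.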

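II. A Worked Example

1. What each function does

forward returns the predicted value.

loss returns the loss for one sample (a single x, y pair).

gradient returns the gradient of that loss with respect to w for one sample.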
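To make the three roles concrete, here is a minimal sketch of one update step on a single sample. It assumes the linear model y = x * w used in this post. Passing w as an explicit argument and the name lr for the learning rate are choices made only for this illustration.

```python
def forward(x, w):
    """Prediction of the linear model y_hat = x * w."""
    return x * w


def loss(x, y, w):
    """Squared error for a single sample (x, y)."""
    return (forward(x, w) - y) ** 2


def gradient(x, y, w):
    """Derivative of the single-sample loss with respect to w."""
    return 2 * x * (forward(x, w) - y)


w = 1.0          # initial weight
lr = 0.01        # learning rate (hypothetical name for this sketch)
x, y = 1.0, 2.0  # one training sample

grad = gradient(x, y, w)  # 2 * 1.0 * (1.0 - 2.0) = -2.0
w_new = w - lr * grad     # the step moves w from 1.0 towards 2.0
print(grad, w_new, loss(x, y, w), loss(x, y, w_new))
```

Because the gradient is negative, the update increases w, and the loss on this sample is smaller after the step than before it.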

2. Code example

The code is as follows (example):

    import matplotlib.pyplot as plt

    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]

    w = 1.0  # initial guess for the weight

    def forward(x):
        return x * w

    # squared-error loss for a single sample
    def loss(x, y):
        y_pred = forward(x)
        return (y_pred - y) ** 2

    # gradient of the single-sample loss with respect to w (used by SGD)
    def gradient(x, y):
        return 2 * x * (x * w - y)

    epoch_list = []
    loss_list = []

    print('predict (before training)', 4, forward(4))

    for epoch in range(100):
        for x, y in zip(x_data, y_data):
            grad = gradient(x, y)
            w = w - 0.01 * grad  # update the weight after every training sample
            l = loss(x, y)
            print("\tgrad:", x, y, grad, l)
        # record the loss of the last sample seen in this epoch
        print("progress:", epoch, "w=", w, "loss=", l)
        epoch_list.append(epoch)
        loss_list.append(l)

    print('predict (after training)', 4, forward(4))

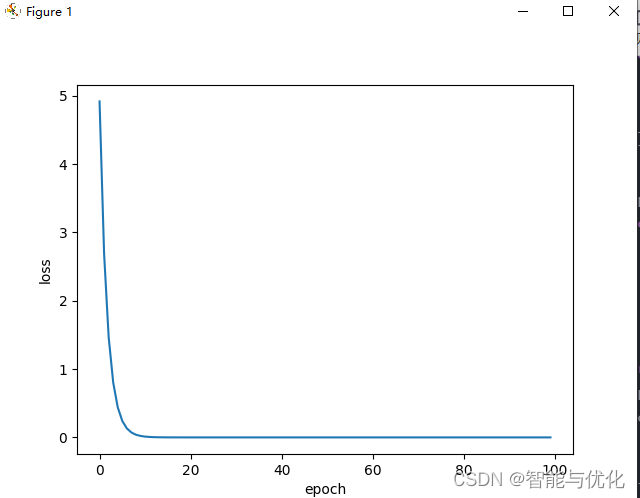

    plt.plot(epoch_list, loss_list)
    plt.ylabel('loss')
    plt.xlabel('epoch')
    plt.show()

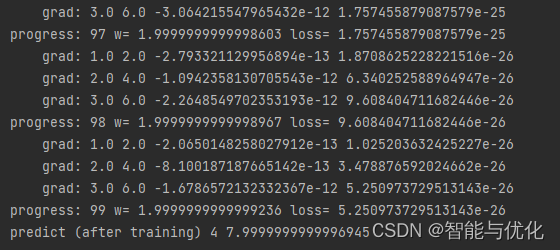

Running the code gives the results shown above.
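As a point of comparison, the sketch below runs batch gradient descent and stochastic gradient descent side by side on the same three data points. The function names batch_gd, sgd, and sample_gradient and the variable lr are hypothetical names introduced for this illustration. The learning rate 0.01 and the 100 epochs match the example in this post.

```python
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]
lr = 0.01  # learning rate


def sample_gradient(x, y, w):
    """Gradient of the squared error (x * w - y) ** 2 with respect to w."""
    return 2 * x * (x * w - y)


def batch_gd(w, epochs):
    """One update per epoch, using the gradient averaged over all samples."""
    n = len(x_data)
    for _ in range(epochs):
        grad = sum(sample_gradient(x, y, w) for x, y in zip(x_data, y_data)) / n
        w -= lr * grad
    return w


def sgd(w, epochs):
    """One update per sample, so len(x_data) updates per epoch."""
    for _ in range(epochs):
        for x, y in zip(x_data, y_data):
            w -= lr * sample_gradient(x, y, w)
    return w


w_gd = batch_gd(1.0, epochs=100)
w_sgd = sgd(1.0, epochs=100)
print("batch GD:", w_gd, "SGD:", w_sgd)
```

On this tiny, noise-free dataset both variants approach w = 2. SGD performs three updates per epoch where batch gradient descent performs one, and each of its updates depends on the weight left by the previous sample, so the per-sample updates cannot be computed in parallel.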

Summary

PyTorch Study Notes 2: Gradient Descent Algorithm 2 (Stochastic Gradient Descent)
