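I. Problem Description

Perform ETL on the logs. (ETL is the process of extracting data from a source, transforming it, and loading it into a destination: Extract, Transform, Load.)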

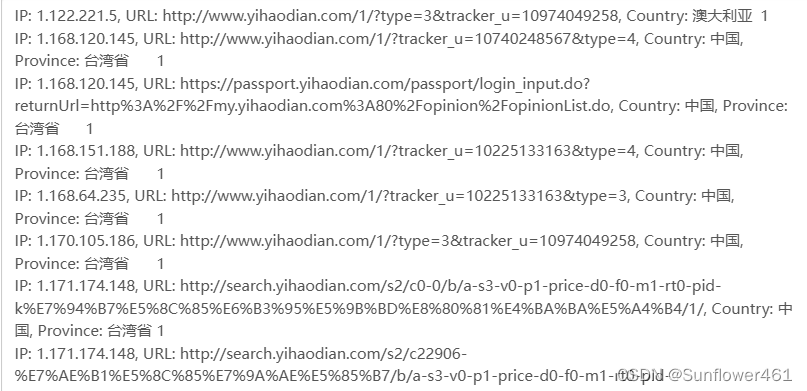

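Why ETL: there is no need to parse every field in the logs; only the fields that carry value need to be extracted. This project needs to extract: ip, url, pageId (the page ID corresponding to topicId), country, province, and city.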
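To make the field selection concrete, here is a minimal sketch of such a parser. The column positions (`IP_INDEX`, `URL_INDEX`), the `topicId=` URL parameter pattern, and the class name `LogFieldParser` are all assumptions made for illustration, since the log layout is not given here. The country, province, and city fields would normally come from an IP lookup, which this sketch leaves out.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class LogFieldParser {
    // Hypothetical column positions; adjust them to the real log layout.
    static final int IP_INDEX = 0;
    static final int URL_INDEX = 1;
    // Hypothetical URL shape: the page ID travels as a "topicId=<digits>" parameter.
    static final Pattern TOPIC_ID = Pattern.compile("topicId=(\\d+)");

    /** Returns the extracted fields, or an empty map when the line is malformed. */
    static Map<String, String> parse(String line) {
        Map<String, String> fields = new LinkedHashMap<>();
        // Fields are separated by the ASCII SOH control character (0x01).
        String[] parts = line.split("\\x01");
        if (parts.length < 2) {
            return fields;
        }
        String url = parts[URL_INDEX];
        fields.put("ip", parts[IP_INDEX]);
        fields.put("url", url);
        Matcher m = TOPIC_ID.matcher(url);
        // An empty pageId marks a URL that carries no topicId parameter.
        fields.put("pageId", m.find() ? m.group(1) : "");
        return fields;
    }

    public static void main(String[] args) {
        String line = "10.0.0.1\u0001http://example.com/page?topicId=42";
        System.out.println(parse(line));
    }
}
```

Returning an empty map for malformed lines keeps the caller's skip logic to a single `isEmpty()` check.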

II. Implementation Steps and Code

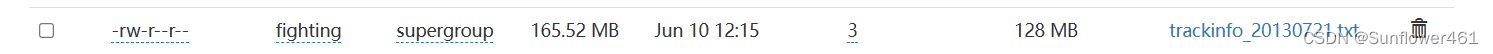

(1) Upload the file to HDFS.

(2) Code

Map phase:

    package page;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    import java.io.IOException;

    /** Emits ("count", 1) for every well-formed log line so the reducer can total them. */
    public class PageViewMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

        private static final IntWritable one = new IntWritable(1);
        // Every valid line is counted under the same constant key.
        private final Text word = new Text("count");

        @Override
        protected void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            String line = value.toString();
            // Fields are separated by the ASCII SOH control character (0x01).
            String[] parts = line.split("\\x01");

            if (parts.length >= 2) { // Make sure the line has at least two fields.
                context.write(word, one);
            } else {
                // Skip malformed lines.
                System.err.println("Skipping invalid line: " + line);
            }
        }
    }
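The mapper's validity check depends on how `String.split` treats the separator. The sketch below runs without Hadoop and applies the same split to a few sample lines; the class name `SplitCheck` and the sample lines are made up for illustration.

```java
public class SplitCheck {
    /** Number of fields the mapper would see for this line. */
    static int fieldCount(String line) {
        // "\\x01" is a regex escape that matches the SOH character (U+0001).
        return line.split("\\x01").length;
    }

    /** Mirrors the mapper's test: a line is valid when it has at least two fields. */
    static boolean isValid(String line) {
        return fieldCount(line) >= 2;
    }

    public static void main(String[] args) {
        String[] samples = {
            "1.2.3.4\u0001http://example.com/", // two fields
            "no separator here",                // one field
            "1.2.3.4\u0001",                    // trailing empty field is dropped by split
            ""                                  // empty line yields a single empty field
        };
        for (String s : samples) {
            System.out.println(fieldCount(s) + " field(s), valid=" + isValid(s));
        }
    }
}
```

Note that `split` discards trailing empty strings, so a line whose only content after the separator is empty counts as one field and is skipped.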

Reduce phase:

    package page;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    import java.io.IOException;

    /** Sums the 1s emitted by the mapper for each key. */
    public class PageViewReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

        // Reused for every key to avoid allocating a new object per output record.
        private final IntWritable result = new IntWritable();

        @Override
        protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            int sum = 0;
            for (IntWritable val : values) {
                sum += val.get();
            }
            result.set(sum);
            context.write(key, result);
        }
    }
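To see what the two phases compute together, the following sketch reproduces the same logic in plain Java, without Hadoop. The class name `LocalPageViewCount` and the sample input are hypothetical; this is only a local illustration of the counting, not a replacement for the job.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class LocalPageViewCount {
    /** Applies the map step (emit "count" -> 1 per valid line) and the reduce step (sum per key). */
    static Map<String, Integer> run(List<String> lines) {
        Map<String, Integer> totals = new TreeMap<>();
        for (String line : lines) {
            // Map step: only lines with at least two SOH-separated fields are counted.
            if (line.split("\\x01").length >= 2) {
                // Reduce step: add this line's 1 to the running total for the key.
                totals.merge("count", 1, Integer::sum);
            }
        }
        return totals;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
            "1.2.3.4\u0001http://example.com/a",
            "malformed line",
            "5.6.7.8\u0001http://example.com/b");
        System.out.println(run(lines));
    }
}
```

Because every valid line is emitted under the single key `count`, the job's output is one record holding the total number of valid lines.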

ETLDriver:

    package page;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    /** Configures and submits the job that counts valid log lines. */
    public class LogETLDriver {

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            Job job = Job.getInstance(conf, "Log ETL");
            job.setJarByClass(LogETLDriver.class);

            // Use the mapper and reducer defined above. Summation is associative,
            // so the reducer can also serve as the combiner.
            job.setMapperClass(PageViewMapper.class);
            job.setCombinerClass(PageViewReducer.class);
            job.setReducerClass(PageViewReducer.class);

            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            // Replace the placeholders with the real HDFS paths.
            // The output directory must not already exist.
            FileInputFormat.addInputPath(job, new Path("input file path"));
            FileOutputFormat.setOutputPath(job, new Path("output file path"));

            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

III. Results

8106

