High Availability for nginx Load Balancers with keepalived


环境说明

| 系统信息 | 主机名 | IP |

|---|---|---|

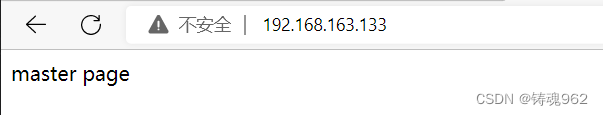

| rhel8.5 | master | 192.168.163.133 |

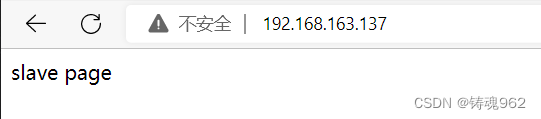

| rhel8.5 | slave | 192.168.163.137 |

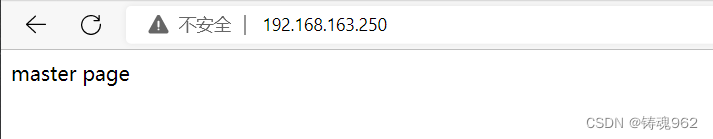

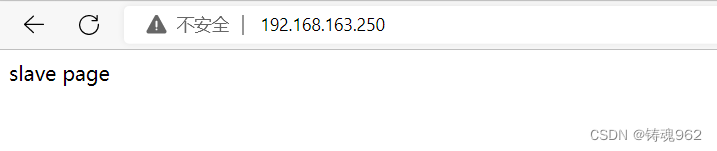

The virtual IP (VIP) for this high-availability setup is provisionally 192.168.163.250.

1. Install nginx on both the master and the backup

Install nginx on master

[root@localhost ~]# hostnamectl set-hostname master.example.com

[root@localhost ~]# bash

[root@master ~]# dnf module list|grep nginx

nginx 1.14 [d] common [d] nginx webserver

nginx 1.16 common [d] nginx webserver

nginx 1.18 common [d] nginx webserver

nginx 1.20 common [d] nginx webserver

nginx mainline common, minimal nginx webserver

[root@master ~]# dnf -y module install nginx:1.20

[root@master ~]# cd /usr/share/nginx/html/

[root@master html]# ls

404.html icons nginx-logo.png

50x.html index.html poweredby.png

[root@master html]# ll index.html

lrwxrwxrwx. 1 root root 25 Aug 26 2021 index.html -> ../../testpage/index.html

[root@master html]# cd /usr/share/testpage/

[root@master testpage]# ls

index.html

[root@master testpage]# echo 'master page'>index.html

[root@master testpage]# cat index.html

master page

[root@master testpage]# pwd

/usr/share/testpage

[root@master ~]# systemctl start nginx

[root@master ~]# systemctl enable nginx --now

Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

[root@master ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:PortProcess

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:80 [::]:*

LISTEN 0 128 [::]:22 [::]:*

//Disable the firewall and SELinux

[root@master ~]# systemctl stop firewalld

[root@master ~]# systemctl disable firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@master ~]# setenforce 0

[root@master ~]# sed -ri 's/^(SELINUX=).*/\1disabled/g' /etc/selinux/config
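The sed expression above rewrites whatever follows `SELINUX=` at the start of a line, while `setenforce 0` only switches the running system to permissive mode until the next reboot. The following is a minimal sketch for previewing the substitution on a scratch copy before touching /etc/selinux/config. The function name `set_selinux_disabled` and the scratch file are illustrative assumptions, not part of the original steps.

```shell
#!/bin/bash
# Hypothetical helper: apply the article's sed substitution to the given file.
set_selinux_disabled() {
    # ^(SELINUX=).* matches the whole "SELINUX=..." line; \1 keeps the key.
    sed -ri 's/^(SELINUX=).*/\1disabled/g' "$1"
}

# Preview on a scratch copy instead of the real /etc/selinux/config.
scratch=$(mktemp)
printf '# comment\nSELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$scratch"
set_selinux_disabled "$scratch"
cat "$scratch"
```

Only the `SELINUX=` line changes; `SELINUXTYPE=targeted` is left alone because the pattern requires `=` directly after `SELINUX`.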

Install nginx on slave

[root@localhost ~]# hostnamectl set-hostname slave.example.com

[root@localhost ~]# bash

[root@slave ~]# dnf -y module install nginx:1.20

[root@slave ~]# echo 'slave page'>/usr/share/testpage/index.html

[root@slave ~]# cat /usr/share/testpage/index.html

slave page

[root@slave ~]# systemctl start nginx

[root@slave ~]# systemctl enable nginx --now

Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

//Disable the firewall and SELinux

[root@slave ~]# systemctl stop firewalld

[root@slave ~]# systemctl disable firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@slave ~]# setenforce 0

[root@slave ~]# sed -ri 's/^(SELINUX=).*/\1disabled/g' /etc/selinux/config

2. Install keepalived

Install keepalived on master

//Install keepalived

[root@master ~]# dnf list all|grep keepalived

keepalived.x86_64 2.1.5-6.el8 AppStream

[root@master ~]# dnf -y install keepalived

//List the files installed by the package

[root@master ~]# rpm -ql keepalived

/etc/keepalived //configuration directory

/etc/keepalived/keepalived.conf //main configuration file

/etc/sysconfig/keepalived

/usr/bin/genhash

/usr/lib/systemd/system/keepalived.service //systemd unit file

/usr/libexec/keepalived

/usr/sbin/keepalived

..... (N lines omitted)

Install keepalived on the backup server in the same way.

3. Configure keepalived

3.1 Configure keepalived on master

[root@master ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_instance VI_1 {

state MASTER

interface ens160

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 12345

}

virtual_ipaddress {

192.168.163.250

}

}

virtual_server 192.168.163.250 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.163.133 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.163.137 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@master ~]# systemctl enable keepalived --now

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

3.2 Configure keepalived on the backup

[root@slave ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

}

vrrp_instance VI_1 {

state BACKUP

interface ens160

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 12345

}

virtual_ipaddress {

192.168.163.250

}

}

virtual_server 192.168.163.250 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.163.133 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.163.137 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@slave ~]# systemctl enable keepalived --now

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

3.3 Check which node holds the VIP

On master

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:34:b3:73 brd ff:ff:ff:ff:ff:ff

inet 192.168.163.133/24 brd 192.168.163.255 scope global dynamic noprefixroute ens160

valid_lft 1643sec preferred_lft 1643sec

inet 192.168.163.250/32 scope global ens160 //the VIP is visible here

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe34:b373/64 scope link noprefixroute

valid_lft forever preferred_lft forever

On slave

[root@slave ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:ed:37:de brd ff:ff:ff:ff:ff:ff

inet 192.168.163.137/24 brd 192.168.163.255 scope global dynamic noprefixroute ens160

valid_lft 1634sec preferred_lft 1634sec

inet6 fe80::20c:29ff:feed:37de/64 scope link noprefixroute

valid_lft forever preferred_lft forever
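Reading the full `ip a` output by eye works for two nodes, but the same check can be scripted. The following is a rough sketch that reports which interface carries the VIP, assuming the one-line-per-address format printed by `ip -o -4 addr show`. The function name `vip_iface` and the sample text are illustrative assumptions.

```shell
#!/bin/bash
# Hypothetical helper: read `ip -o -4 addr show` style lines on stdin and
# print the interface name of every line whose address equals the given VIP.
vip_iface() {
    # Fields: $2 = interface, $3 = "inet", $4 = address/prefix
    awk -v vip="$1" '$3 == "inet" { split($4, a, "/"); if (a[1] == vip) print $2 }'
}

# Sample input modelled on the master output above.
sample='2: ens160    inet 192.168.163.133/24 brd 192.168.163.255 scope global dynamic noprefixroute ens160\       valid_lft 1643sec preferred_lft 1643sec
2: ens160    inet 192.168.163.250/32 scope global ens160\       valid_lft forever preferred_lft forever'

printf '%s\n' "$sample" | vip_iface 192.168.163.250
```

On a live node this could be run as `ip -o -4 addr show | vip_iface 192.168.163.250`; empty output means the node does not currently hold the VIP.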

4. Have keepalived monitor the nginx load balancer

keepalived monitors the state of the nginx load balancer through a script.

Write the scripts on master

[root@master ~]# mkdir /scripts

[root@master ~]# cd /scripts/

[root@master scripts]# vim check_n.sh

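#!/bin/bash

# Count running nginx processes, ignoring grep and this script itself
nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

# If nginx is down, stop keepalived so the VIP moves to the backup
if [ "$nginx_status" -lt 1 ];then

systemctl stop keepalived

fi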

[root@master scripts]# chmod +x check_n.sh

[root@master scripts]# ll

total 4

-rwxr-xr-x. 1 root root 143 Aug 31 18:41 check_n.sh

[root@master scripts]# vim notify.sh

#!/bin/bash

VIP=$2

sendmail (){

subject="${VIP}'s server keepalived state has changed"

content="`date +'%F %T'`: `hostname`'s state changed to master"

echo "$content" | mail -s "$subject" 1470044516@qq.com

}

case "$1" in

master)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -lt 1 ];then

systemctl start nginx

fi

sendmail

;;

backup)

nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)

if [ $nginx_status -gt 0 ];then

systemctl stop nginx

fi

;;

*)

echo "Usage:$0 master|backup VIP"

;;

esac

[root@master scripts]# chmod +x notify.sh

[root@master scripts]# ll

total 8

-rwxr-xr-x. 1 root root 143 Aug 31 18:41 check_n.sh

-rwxr-xr-x. 1 root root 663 Aug 31 18:45 notify.sh

Write the scripts on slave

[root@slave ~]# mkdir /scripts

[root@slave ~]# cd /scripts/

[root@slave scripts]# chmod +x notify.sh

[root@slave scripts]# ll

total 8

-rwxr-xr-x 1 root root 168 Oct 19 23:38 check_n.sh

-rwxr-xr-x 1 root root 594 Oct 20 03:24 notify.sh

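Avoid giving the script the same name as the service. Using the service's initial is recommended, for example check_n rather than check_nginx, so that a plain search of the process list for the service name is less likely to match the script itself.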
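To see how the process filter in check_n.sh behaves, the pipeline can be exercised against a canned process list. This is a small sketch under stated assumptions: the function name `count_service_procs` and the sample `ps` lines are made up for illustration, and the filter mirrors the one used in the script above.

```shell
#!/bin/bash
# Hypothetical helper mirroring the check in check_n.sh: read `ps -ef` style
# lines on stdin and count those that mention the service as a whole word,
# ignoring grep itself and the calling script.
count_service_procs() {
    local service="$1" self="$2"
    grep -Ev "grep|${self}" | grep -c "\b${service}\b"
}

# Illustrative process list (made up for this sketch).
ps_sample='root  1001     1 nginx: master process /usr/sbin/nginx
nginx 1002  1001 nginx: worker process
root  2001   900 /bin/bash /scripts/check_n.sh
root  2002  2001 grep nginx'

# Prints the number of real nginx processes in the sample.
printf '%s\n' "$ps_sample" | count_service_procs nginx /scripts/check_n.sh
```

The first `grep -Ev` drops the grep and script lines before the count, which is why the script's own name should not be something the second pattern could pick up.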

5. Add the monitoring scripts to the keepalived configuration

Configure keepalived on master

[root@master ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_script nginx_check {

script "/scripts/check_n.sh"

interval 1

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface ens160

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 12345

}

virtual_ipaddress {

192.168.163.250

}

track_script {

nginx_check

}

notify_master "/scripts/notify.sh master 192.168.163.250"

notify_backup "/scripts/notify.sh backup 192.168.163.250"

}

virtual_server 192.168.163.250 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.163.133 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

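}

real_server 192.168.163.137 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}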

[root@master ~]# systemctl restart keepalived

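Configure keepalived on the backup

The backup does not need to check whether nginx is healthy: it starts nginx when promoted to MASTER and stops it when demoted to BACKUP.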

[root@slave ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

}

vrrp_instance VI_1 {

state BACKUP

interface ens160

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 12345

}

virtual_ipaddress {

192.168.163.250

}

notify_master "/scripts/notify.sh master 192.168.163.250"

notify_backup "/scripts/notify.sh backup 192.168.163.250"

}

virtual_server 192.168.163.250 80 {

delay_loop 6

lb_algo rr

lb_kind DR

persistence_timeout 50

protocol TCP

real_server 192.168.163.133 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 192.168.163.137 80 {

weight 1

TCP_CHECK {

connect_port 80

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

[root@slave ~]# systemctl restart keepalived

Backup up, master down

[root@slave ~]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

Loaded: loaded (/usr/lib/systemd/system/keepalived.service; e>

Active: active (running) since Thu 2022-09-01 12:34:05 CST; 1>

......

[root@slave ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:PortProcess

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 128 [::]:80 [::]:*

[root@slave ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:ed:37:de brd ff:ff:ff:ff:ff:ff

inet 192.168.163.137/24 brd 192.168.163.255 scope global dynamic noprefixroute ens160

valid_lft 1040sec preferred_lft 1040sec

inet 192.168.163.250/32 scope global ens160

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:feed:37de/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[root@master ~]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

Loaded: loaded (/usr/lib/systemd/system/keepalived.service; e>

Active: inactive (dead) since Thu 2022-09-01 01:11:14 CST; 11>

......

[root@master ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:PortProcess

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:34:b3:73 brd ff:ff:ff:ff:ff:ff

inet 192.168.163.133/24 brd 192.168.163.255 scope global dynamic noprefixroute ens160

valid_lft 1049sec preferred_lft 1049sec

inet6 fe80::20c:29ff:fe34:b373/64 scope link noprefixroute

valid_lft forever preferred_lft forever

Backup down, master up

[root@slave ~]# systemctl stop keepalived nginx

[root@slave ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:PortProcess

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:22 [::]:*

[root@slave ~]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

Loaded: loaded (/usr/lib/systemd/system/keepalived.service; e>

Active: inactive (dead) since Thu 2022-09-01 12:48:43 CST; 26>

Process: 2921 ExecStart=/usr/sbin/keepalived $KEEPALIVED_OPTIO>

Main PID: 2924 (code=exited, status=0/SUCCESS)

Sep 01 12:34:14 slave.example.com Keepalived_vrrp[2927]: Sending>

Sep 01 12:48:42 slave.example.com systemd[1]: Stopping LVS and V>

Sep 01 12:48:42 slave.example.com Keepalived[2924]: Stopping

Sep 01 12:48:42 slave.example.com Keepalived_vrrp[2927]: (VI_1) >

Sep 01 12:48:42 slave.example.com Keepalived_vrrp[2927]: (VI_1) >

Sep 01 12:48:43 slave.example.com Keepalived_vrrp[2927]: Stopped>

Sep 01 12:48:43 slave.example.com Keepalived[2924]: CPU usage (s>

Sep 01 12:48:43 slave.example.com Keepalived[2924]: Stopped Keep>

Sep 01 12:48:43 slave.example.com systemd[1]: keepalived.service>

Sep 01 12:48:43 slave.example.com systemd[1]: Stopped LVS and VR>

[root@slave ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:ed:37:de brd ff:ff:ff:ff:ff:ff

inet 192.168.163.137/24 brd 192.168.163.255 scope global dynamic noprefixroute ens160

valid_lft 1269sec preferred_lft 1269sec

inet6 fe80::20c:29ff:feed:37de/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[root@master ~]# systemctl restart nginx keepalived

[root@master ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:PortProcess

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:80 [::]:*

LISTEN 0 128 [::]:22 [::]:*

[root@master ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:34:b3:73 brd ff:ff:ff:ff:ff:ff

inet 192.168.163.133/24 brd 192.168.163.255 scope global dynamic noprefixroute ens160

valid_lft 1124sec preferred_lft 1124sec

inet 192.168.163.250/32 scope global ens160

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe34:b373/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[root@master ~]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

Loaded: loaded (/usr/lib/systemd/system/keepalived.service; e>

Active: active (running) since Sat 2022-09-03 16:38:12 CST; 2>

......

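6. Split-brain

In a high-availability (HA) system, when the "heartbeat link" connecting the two nodes is broken, what was a single, coordinated HA system splits into two independent nodes. Having lost contact, each node assumes the other has failed. The HA software on both nodes then behaves like a "split-brain patient", fighting over shared resources and both trying to start the application service, with serious consequences: either the shared resources are carved up and the service comes up on neither side, or the service comes up on both sides and both read and write the shared storage at the same time, corrupting data (a common example is errors in a database's rotating online logs).

The generally agreed countermeasures against split-brain in HA systems are roughly the following:

- Add redundant heartbeat links, for example two heartbeat lines (so the heartbeat itself is HA), to minimize the chance of split-brain;

- Enable disk locking. The node currently serving locks the shared disk so that, when split-brain occurs, the other node cannot take the shared disk away. Disk locking has a significant drawback, though: if the node holding the shared disk does not release the lock, the other node can never obtain the disk. In practice, if the serving node suddenly hangs or crashes, it cannot run the unlock command, so the standby node cannot take over the shared resources and the application service. For this reason some HA designs use a "smart" lock: the serving node enables the disk lock only when it finds that all heartbeat links are down (it cannot see its peer), and leaves the disk unlocked otherwise.

- Set up an arbitration mechanism. For example, configure a reference IP (such as the gateway IP). When the heartbeat links are completely down, each node pings the reference IP. If the ping fails, the break is on the local side: not only the heartbeat but also the local network link that carries the service is down, so starting (or continuing) the application service is pointless. That node should give up the contest and let the node that can reach the reference IP run the service. To be safer, the node that cannot ping the reference IP can simply reboot itself to fully release any shared resources it may still hold.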
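The arbitration idea in the last item can be sketched as a small decision function. This is only an illustration under stated assumptions: the function name `arbitrate`, the "serve"/"release" labels, and the gateway address in the comment are hypothetical, and a real deployment would wire the result into whatever stops or starts the service.

```shell
#!/bin/bash
# Hypothetical arbitration helper: run a reachability probe (passed as the
# arguments) and print which action this node should take.
arbitrate() {
    if "$@" >/dev/null 2>&1; then
        echo "serve"      # reference IP reachable: keep or start the service
    else
        echo "release"    # local link is suspect: give up the resources
    fi
}

# On a real node the probe could be a ping to the gateway, for example:
#   arbitrate ping -c 2 -W 1 192.168.163.2   # gateway address is an assumption
# Here `true` and `false` stand in for a reachable and an unreachable gateway.
arbitrate true
arbitrate false
```

Passing the probe as arguments keeps the decision logic separate from the network check, so the same function can be tried out without touching the network.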

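6.1 Causes of split-brain

In general, split-brain has the following causes:

- The heartbeat link between the HA server pair fails, so the nodes cannot communicate normally:

  - the heartbeat cable is faulty (broken or aged)

  - the NIC or its driver is faulty, or there is an IP misconfiguration or conflict (NICs directly connected)

  - a device on the heartbeat path is faulty (NIC or switch)

  - the arbitration machine has a problem (when an arbitration scheme is used)

- An iptables firewall enabled on the HA servers blocks heartbeat messages

- The heartbeat NIC address or related settings on the HA servers are wrong, so heartbeats fail to be sent

- Other misconfiguration, such as mismatched heartbeat methods, heartbeat broadcast conflicts, or software bugs

Note:

In the keepalived configuration, split-brain also occurs if the two ends of the same VRRP instance are configured with different virtual_router_id values.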
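A mismatch in virtual_router_id is easy to catch before starting the service by comparing the two configuration files. The following is a minimal sketch, assuming both files have been copied to one machine. The function names `get_vrid` and `same_vrid` and the scratch files are illustrative, and only the first virtual_router_id in each file is examined.

```shell
#!/bin/bash
# Hypothetical helper: print the first virtual_router_id found in a config.
get_vrid() {
    awk '$1 == "virtual_router_id" { print $2; exit }' "$1"
}

# Succeed only when both configuration files use the same virtual_router_id.
same_vrid() {
    [ "$(get_vrid "$1")" = "$(get_vrid "$2")" ]
}

# Scratch files standing in for the master and backup keepalived.conf.
master_conf=$(mktemp); backup_conf=$(mktemp)
printf 'vrrp_instance VI_1 {\n    virtual_router_id 51\n}\n' > "$master_conf"
printf 'vrrp_instance VI_1 {\n    virtual_router_id 52\n}\n' > "$backup_conf"

if same_vrid "$master_conf" "$backup_conf"; then
    echo "virtual_router_id matches"
else
    echo "virtual_router_id differs: $(get_vrid "$master_conf") vs $(get_vrid "$backup_conf")"
fi
```

With the deliberately mismatched scratch files above, the sketch reports the difference; with the configurations from this article, both sides use 51.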

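6.2 Common solutions to split-brain

In production, split-brain can be guarded against in the following ways:

- Connect the nodes with both a serial cable and an Ethernet cable, so there are two heartbeat links at once; if one fails, the other still carries heartbeat messages

- When split-brain is detected, forcibly shut down one of the nodes (this requires special equipment such as STONITH or fence devices). In effect, when the backup node stops receiving heartbeat messages, it sends a power-off command over a separate line to cut power to the master node

- Monitor and alert on split-brain (email, SMS, or an on-call rota), so that a person can step in and arbitrate as soon as the problem occurs, reducing the loss. For example, Baidu's alert SMS distinguishes uplink and downlink messages: alerts are sent to the administrator's phone, and the administrator can reply with a digit or a short string that is passed back to the server, which then handles the fault automatically according to the instruction, shortening the time to resolution.

Of course, when implementing an HA solution you must decide from the actual business requirements whether such a loss can be tolerated. For ordinary website workloads, it is tolerable.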

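6.3 Monitoring for split-brain

Split-brain monitoring should run on the backup server, implemented as a custom Zabbix check.

What should be monitored? Whether the VIP is present on the backup.

The VIP appears on the backup in two situations:

- split-brain has occurred

- a normal master/backup switchover

The check therefore only signals that split-brain is possible; it cannot prove that split-brain has occurred, because a normal switchover also moves the VIP to the backup.

The monitoring script is as follows: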

[root@slave ~]# mkdir -p /scripts && cd /scripts

[root@slave scripts]# vim check_keepalived.sh

#!/bin/bash

# Report an error if the VIP is present on this (backup) node
if [ "$(ip a show ens160 |grep -c 192.168.163.250)" -ne 0 ]

then

echo "keepalived is error!"

else

echo "keepalived is OK !"

fi

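When writing the script, change the NIC name and the VIP to your own. Finally, remember to make the script executable and to change the owner and group of the /scripts directory to zabbix.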
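Monitoring systems usually find a number easier to alert on than a sentence. The following is a hedged variant of the check that prints 1 when the VIP is present and 0 otherwise. The function name `vip_flag` is an assumption for illustration, and it reads `ip a` style text from stdin so it can be tried without a live interface.

```shell
#!/bin/bash
# Hypothetical variant of the monitoring check: print 1 when the VIP shows up
# as an IPv4 address in `ip a` style text on stdin, otherwise 0.
vip_flag() {
    local vip="$1"
    # Match "inet <vip>/" so a shorter address cannot match by prefix.
    if grep -qF "inet ${vip}/"; then
        echo 1
    else
        echo 0
    fi
}

# Sample input without the VIP; on the backup the input would come from:
#   ip a show ens160 | vip_flag 192.168.163.250
printf 'inet 192.168.163.137/24 brd 192.168.163.255 scope global ens160\n' | vip_flag 192.168.163.250
```

A custom Zabbix item could then trigger on the value 1, for instance through a `UserParameter` entry in the agent configuration with a key name of your choosing (an assumption here, not something the original configures).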
