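This series is aimed at deep learning practitioners. It uses Image Caption Generation, an interesting concrete task, to introduce deep learning in an accessible way. The series covers many popular deep learning models, such as CNN, RNN/LSTM, and Attention. This is part 10.

Author: Li Li

He currently works at 环信 (Easemob), an instant messaging cloud platform and omnichannel intelligent customer service platform, where he works on intelligent customer service and chatbots and focuses on using deep learning to improve chatbot performance. Related articles: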

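Li Li: Understanding Deep Learning through Image Caption Generation (part I)

Li Li: Understanding Deep Learning through Image Caption Generation (part II)

Li Li: Understanding Deep Learning through Image Caption Generation (part III)

Li Li: Automatic Gradient Computation: Another View of the Backpropagation Algorithm

Li Li: Automatic Gradient Computation: the cs231n notes

Li Li: Automatic Gradient Computation: Implementing a Multi-Layer Neural Network with Automatic Differentiation

Li Li: Convolutional Neural Networks Explained in Detail

Li Li: Theano tutorial and a Theano Implementation of Convolutional Neural Networks, Part1

Li Li: Theano tutorial and a Theano Implementation of Convolutional Neural Networks, Part2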

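1. Overview

The previous parts covered how convolutional neural networks work and how to implement them with Theano's automatic differentiation. This article continues with further topics on convolutional neural networks.

We first introduce Dropout and Batch Normalization. Dropout improves a model's ability to generalize, while Batch Normalization is a very simple but practical technique for speeding up training convergence. We implement both through assignment 2 of cs231n.

We then complete another part of assignment 2: implementing a convolutional neural network by decomposing it into a computation graph.

Next comes a brief introduction to training on ImageNet with caffe and to using caffe from Python; these techniques are used later in the series.

Finally we briefly cover some of the latest techniques in image classification, including the very deep ResNet (the 152-layer ResNet) and Inception.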

2. Batch Normalization

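2.1 Introduction

As discussed earlier, neural networks are usually trained with mini-batch SGD. One advantage of using a mini-batch rather than a single example is that, as a sample of the whole training set, the "stochastic" gradient of a mini-batch is closer in direction to the full-batch gradient (relative to the gradient of a single training example, of course). Another advantage is that a mini-batch can exploit the data parallelism of the hardware. Batch sizes typically range from a few dozen to a few hundred, and to make good use of parallel libraries such as BLAS or of the GPU they are usually multiples of 8, such as 16 or 128.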
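The claim about gradient direction can be checked numerically. The sketch below is only an illustration under assumed conditions: a hypothetical toy linear regression problem (not from the cs231n assignment), with names such as `mean_cosine` invented here. It compares the average cosine similarity to the full-batch gradient for batch sizes 1 and 64.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy problem: linear regression with squared loss.
N, D = 2000, 10
X = rng.standard_normal((N, D))
w_true = rng.standard_normal(D)
y = X @ w_true + 0.1 * rng.standard_normal(N)
w = np.zeros(D)  # current parameters

def gradient(idx):
    """Mean gradient of 0.5 * (x.w - y)^2 over the examples in idx."""
    residual = X[idx] @ w - y[idx]
    return X[idx].T @ residual / len(idx)

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return a @ b / (np.linalg.norm(a) * np.linalg.norm(b))

full_grad = gradient(np.arange(N))

def mean_cosine(batch_size, trials=200):
    """Average cosine similarity between random mini-batch gradients and the full gradient."""
    sims = []
    for _ in range(trials):
        idx = rng.choice(N, size=batch_size, replace=False)
        sims.append(cosine(gradient(idx), full_grad))
    return float(np.mean(sims))

cos_single = mean_cosine(1)
cos_batch = mean_cosine(64)
print(f"batch size  1: mean cosine = {cos_single:.3f}")
print(f"batch size 64: mean cosine = {cos_batch:.3f}")
```

On this toy problem the mini-batch gradient should point much closer to the full-batch direction than a single-example gradient does; the exact numbers depend on the data and the seed.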

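From our earlier experience training fully connected networks (readers who have forgotten can revisit the earlier article, in which we trained a 5-layer fully connected network on cifar10 and tuned it to above 50% accuracy; see "Automatic Gradient Computation: Implementing a Multi-Layer Neural Network with Automatic Differentiation"), getting training to converge depends heavily on choosing suitable hyperparameters such as learning_rate and on the parameter initialization. With poorly chosen values, training may well fail to converge. Better optimizers such as momentum or adam make convergence easier, but very deep networks remain hard to train.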

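The reason is that the more layers there are, the smaller the error becomes as it propagates back toward the early layers, and many neurons saturate. For example, the sigmoid activation is nearly linear when |x| is small, which is where its gradient is largest (0.25 at x = 0; for tanh the curve is close to the line y = x, with gradient close to 1). As |x| grows the gradient becomes very small, so the parameter delta is tiny and the parameters barely change. Activations such as ReLU alleviate saturation, but the "internal covariate shift" problem (introduced below) remains hard to solve. Batch Normalization addresses this problem and also helps with saturation.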
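To make saturation concrete, here is a small sketch that evaluates the sigmoid derivative, sigmoid(x) * (1 - sigmoid(x)), at a few points. The helper names `sigmoid` and `sigmoid_grad` are chosen for this illustration only.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def sigmoid_grad(x):
    """Derivative of the sigmoid: s(x) * (1 - s(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

xs = np.array([0.0, 2.0, 5.0, 10.0])
grads = sigmoid_grad(xs)
for x, g in zip(xs, grads):
    print(f"x = {x:5.1f}  sigmoid'(x) = {g:.6f}")
```

The derivative peaks at 0.25 at x = 0 and is already below 1e-4 at x = 10, so a unit whose pre-activation drifts far from 0 passes almost no gradient back.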

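Batch Normalization was proposed by Sergey Ioffe and Christian Szegedy at Google. With the same network architecture, it reaches the same accuracy with 14 times fewer training steps than the original network. Using an ensemble of several models, the authors lowered the top-5 classification error on ImageNet to 4.8%.

2.2 covariate shift and internal covariate shift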

2.2.1 covariate shift

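Suppose a model's input is X (for MNIST, X is a 784-dimensional vector) and its output is Y (for MNIST, the 10 classes 0-9). Many discriminative models learn P(Y|X), and neural networks are such models. Covariate shift arises when the input distribution of the training data, Ps(X), differs from that of the test data, Pt(X). The subscripts s and t stand for source and target, i.e. training and test.

At first glance this should not be a problem. Our goal is classification, so as long as Ps(Y|X) and Pt(Y|X) are the same, why would the distributions of X, Ps(X) and Pt(X), matter?

The key is that the models we train are usually parametric, Ps(Y|X;θ): we use a parameterized model to learn the relationship between X and Y, and we choose the best θ∗ according to the loss on the training data. In many cases we cannot learn a perfect model, so there will always be some X for which P(Y|X)≠P(Y|X;θ). That is where P(X) comes into play.

Say the model makes errors at both X1 and X2, i.e. it incurs loss at both. We can adjust the parameters, and ideally the adjustment would reduce the loss at both points. Suppose that is not possible and we can only reduce one at the cost of increasing the other. Which should we favor? Clearly it depends on which of P(X1) and P(X2) is larger. If P(X1) is larger, i.e. X1 occurs more often, we should make the loss at X1 smaller (so that correct classification is more likely; this matters even more for regression).

Now the problem: if Ps(X) in the training data differs from Pt(X) in the test data, things go wrong. Take an extreme example. Suppose X takes only two values, data 1 and data 2, which belong to different classes, but our features are so poor that they cannot tell the two apart, i.e. X1=X2. The model certainly cannot classify both correctly, yet it must make a choice. How? By looking at which of the two is more probable and trying to get the more probable one right. If data 1 is more probable during training, the classifier assigns this input to the class of data 1. But if data 2 turns out to be the more probable one at test time, the model performs poorly.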
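The extreme example can be worked through with a few lines of arithmetic. The sketch below assumes illustrative probabilities (0.8 for data 1 in training, 0.3 at test time) that are not from the original article; the function names are likewise hypothetical.

```python
def majority_prediction(p_data1_train):
    """With indistinguishable features, the best the model can do is
    always predict the class that was more frequent during training."""
    return 1 if p_data1_train >= 0.5 else 2

def expected_accuracy(prediction, p_data1):
    """Accuracy of a constant prediction when data 1 occurs with probability p_data1."""
    return p_data1 if prediction == 1 else 1.0 - p_data1

p_train = 0.8  # assumed: data 1 dominates the training set
p_test = 0.3   # assumed: data 2 dominates at test time

pred = majority_prediction(p_train)
train_acc = expected_accuracy(pred, p_train)
test_acc = expected_accuracy(pred, p_test)
print(f"predicts class of data {pred}: train acc = {train_acc:.2f}, test acc = {test_acc:.2f}")
```

P(Y|X) is unchanged between training and test here; only P(X) moved, and that alone takes the expected accuracy from 0.8 to 0.3.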

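There are many ways to address this. One approach is to retrain a new model with the training data "reweighted". This has little to do with Batch Normalization, so we do not go into it here. The point of mentioning it is to make you aware that the problem exists: if in practice the training distribution differs from the test distribution, consider whether that will cause trouble.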

2.2.2 internal covariate shift

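From the analysis above we know that a change in the input distribution between training and test causes problems for a model. In everyday applications this is usually not very noticeable, because the distributions generally do not differ much (P(Y|X) in particular does not; otherwise the earlier training data would be useless and we would effectively have two different tasks). In a very deep network, however, it does become a problem and slows down convergence. The outputs of earlier layers are passed on to later layers, and the more layers there are, the more a slight change early on is amplified into a large change later. If the input distribution of a layer keeps changing, the layer cannot settle and its parameters are hard to tune. We usually "whiten" the input data so that it has mean 0 and variance 1, but nothing guarantees this for later layers, because their inputs change whenever the parameters of earlier layers are updated. In a bad case, the last layer adjusts its parameters on one mini-batch and becomes slightly better than before, but the parameters of all the layers before it have changed as well, so in the next iteration the range of its inputs has shifted and it again struggles to classify correctly. This is the so-called internal covariate shift.

2.3 The solution: Batch Normalization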
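The amplification through depth can be seen in a small experiment. The sketch below is an illustration under artificial assumptions: a hypothetical 10-layer ReLU network without biases, and a uniform 5% scaling of every weight matrix standing in for a parameter update (a real gradient step is not a uniform scaling).

```python
import numpy as np

rng = np.random.default_rng(0)
n_layers, width = 10, 64

# He-style initialization, chosen here only to keep activations at a reasonable scale.
weights = [rng.standard_normal((width, width)) * np.sqrt(2.0 / width)
           for _ in range(n_layers)]
x = rng.standard_normal((256, width))  # whitened input batch

def last_layer_input(x, weights):
    """Forward through all layers except the last; return what the last layer sees."""
    h = x
    for W in weights[:-1]:
        h = np.maximum(0.0, h @ W)  # linear layer followed by ReLU
    return h

before = last_layer_input(x, weights)
# Artificial stand-in for one update step: every weight matrix grows by 5%.
after = last_layer_input(x, [1.05 * W for W in weights])

ratio = after.std() / before.std()
print(f"std of the last layer's input changed by a factor of {ratio:.3f}")
```

Because ReLU is positively homogeneous, the 5% change compounds across the nine preceding layers into a factor of 1.05^9, roughly 1.55, in the scale of the last layer's input, even though the network input itself did not change.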

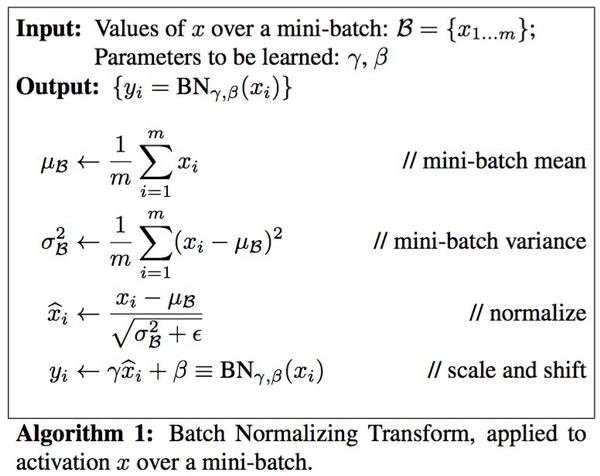

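How can we solve this? If we can ensure that in every mini-batch the inputs to each layer have mean 0 and variance 1, the problem goes away. So we add a batch normalization layer that normalizes the data of the mini-batch. But this creates a new problem: forcing a layer's output to have mean 0 and variance 1 weakens the network's expressive power. The authors therefore relax the constraint by giving the batch normalization layer two learnable parameters, β and γ, which shift and scale the normalized data; both the shift β and the scale γ are learned. In the extreme case where β equals the mini-batch mean and γ equals the mini-batch standard deviation, the output of batch normalization is identical to its input; in general it is not.

The Batch Normalization algorithm itself is simple and is shown in the figure.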
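The training-time computation can be sketched in NumPy as follows. This is a minimal sketch, not the cs231n reference solution: the function name `batchnorm_forward_train`, the `eps` value, and the toy input are assumptions made for illustration. It normalizes each feature over the mini-batch and then applies the learnable scale γ and shift β.

```python
import numpy as np

def batchnorm_forward_train(x, gamma, beta, eps=1e-5):
    """Batch normalization over a mini-batch x of shape (N, D).

    eps is a small constant for numerical stability (value assumed here).
    """
    mu = x.mean(axis=0)                    # per-feature mini-batch mean
    var = x.var(axis=0)                    # per-feature mini-batch variance
    x_hat = (x - mu) / np.sqrt(var + eps)  # normalize to mean 0, variance ~1
    out = gamma * x_hat + beta             # learnable scale and shift
    return out, mu, var

rng = np.random.default_rng(0)
x = 3.0 * rng.standard_normal((128, 4)) + 5.0  # toy activations: mean ~5, std ~3

# gamma = 1, beta = 0: plain normalization.
out, mu, var = batchnorm_forward_train(x, np.ones(4), np.zeros(4))
print("mean after BN:", out.mean(axis=0))
print("std after BN: ", out.std(axis=0))

# gamma = mini-batch std, beta = mini-batch mean: the layer reproduces its input.
out_identity, _, _ = batchnorm_forward_train(x, np.sqrt(var + 1e-5), mu)
print("identity recovered:", np.allclose(out_identity, x))
```

The second call shows the extreme case mentioned above: with γ set to the mini-batch standard deviation and β to the mini-batch mean, the layer undoes the normalization, so adding it does not reduce what the network can represent.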

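2.4 Batch Normalization at prediction time

During training we have a whole mini-batch, so we can compute its mean and variance. At prediction time there is often only one example at a time, and mini-batch statistics are then meaningless: the mean of a single example is the example itself and its variance is 0, so normalizing with them would map every input to the same output. The model was trained on normalized activations, so prediction has to normalize with comparable statistics or the results will be wrong.

The solution is to use statistics computed over all of the training data, the so-called population statistics. The original paper uses this approach, although it requires one extra step after training is finished. Another common approach is an exponentially decaying average controlled by momentum, which is the algorithm we implement in the assignment below.

The formulas are: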

running_mean = momentum * running_mean + (1 - momentum) * sample_mean

running_var = momentum * running_var + (1 - momentum) * sample_var
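A minimal sketch of how these running statistics might be maintained and then used at prediction time is given below. It assumes the variance is tracked with the same update rule as the mean; the function names, `momentum=0.9`, `eps`, the zero initialization, and the toy data are all illustrative choices rather than the assignment's reference code.

```python
import numpy as np

def update_running_stats(running_mean, running_var, x, momentum=0.9):
    """Exponentially decaying average of the mini-batch mean and variance."""
    sample_mean = x.mean(axis=0)
    sample_var = x.var(axis=0)
    running_mean = momentum * running_mean + (1 - momentum) * sample_mean
    running_var = momentum * running_var + (1 - momentum) * sample_var
    return running_mean, running_var

def batchnorm_forward_test(x, gamma, beta, running_mean, running_var, eps=1e-5):
    """At prediction time, normalize with the running statistics, not batch statistics."""
    x_hat = (x - running_mean) / np.sqrt(running_var + eps)
    return gamma * x_hat + beta

rng = np.random.default_rng(0)
D = 3
running_mean, running_var = np.zeros(D), np.zeros(D)

# Simulated training: mini-batches drawn from a distribution with mean 1 and std 2.
for _ in range(500):
    batch = 2.0 * rng.standard_normal((64, D)) + 1.0
    running_mean, running_var = update_running_stats(running_mean, running_var, batch)

# Prediction on a single example works because no batch statistics are needed.
single = np.array([[1.0, 3.0, -1.0]])
out = batchnorm_forward_test(single, np.ones(D), np.zeros(D), running_mean, running_var)
print("running_mean:", running_mean)
print("running_var: ", running_var)
print("normalized single example:", out)
```

With momentum close to 1 the running values change slowly and average over many mini-batches, so after enough steps they approximate the population mean and variance without a separate pass over the training set.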

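This article examines the role of Batch Normalization in deep learning, in particular its ability to speed up training and to address internal covariate shift. Through the cs231n assignment it covers the principle, the implementation, and the prediction-time behavior of Batch Normalization. Experiments show that Batch Normalization can markedly speed up training and reduce sensitivity to parameter initialization.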
