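The idea behind Bayesian classification: compute the probability that a sample belongs to each label, then choose the label with the highest probability as the classification result.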

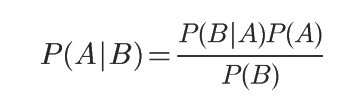

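First, recall Bayes' formula for conditional probability:

P(A|B) = P(A) × P(B|A) / P(B)

P(A) is the prior probability: the probability of A before B is observed.

P(A|B) is the posterior probability: the probability of A after B has been observed.

P(B|A)/P(B) is the adjustment factor: it rescales the prior P(A) once B has been observed. A factor greater than 1 raises the probability of A; a factor below 1 lowers it.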
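To make the roles of the prior, the adjustment factor and the posterior concrete, here is a small sketch. The events and all three probabilities are made up for illustration, and `bayes_posterior` is a hypothetical helper name, not something from the listings in this post.

```python
def bayes_posterior(prior, likelihood, evidence):
    """Return (adjustment factor, posterior) for P(A|B) = P(A) * P(B|A) / P(B)."""
    adjustment = likelihood / evidence
    return adjustment, prior * adjustment

# Hypothetical numbers, for illustration only:
# A = "the post is abusive", B = "the post contains the word 'stupid'".
p_a = 0.5          # prior P(A)
p_b_given_a = 0.6  # likelihood P(B|A)
p_b = 0.4          # evidence P(B)

factor, p_a_given_b = bayes_posterior(p_a, p_b_given_a, p_b)
print("adjustment factor: %.2f" % factor)
print("posterior P(A|B):  %.2f" % p_a_given_b)
```

With these numbers the adjustment factor is above 1, so observing B revises the probability of A upwards from the prior.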

Application to classification

Without further ado, here is the derivation as a picture. Please excuse the messy handwriting.
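In case the handwritten figure is hard to read or unavailable, the standard naive Bayes decision rule can be written out as follows. This is a sketch of the textbook form, not a transcription of the figure. The symbols are assumptions chosen to match the code comments: $x_1,\dots,x_n$ are the words present in a document and $y_k$ is a class label (here $y_0$ normal, $y_1$ abusive).

```latex
\begin{aligned}
P(y_k \mid x_1,\dots,x_n)
  &= \frac{P(x_1,\dots,x_n \mid y_k)\,P(y_k)}{P(x_1,\dots,x_n)} \\
  &\propto P(y_k)\prod_{i=1}^{n} P(x_i \mid y_k)
  \qquad \text{(words assumed independent given the class)} \\
\hat{y}
  &= \arg\max_{k}\Big[\log P(y_k) + \sum_{i=1}^{n}\log P(x_i \mid y_k)\Big]
\end{aligned}
```

The denominator $P(x_1,\dots,x_n)$ is the same for every class, so it can be dropped when only the largest posterior is needed.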

Code:

from numpy import *

def loadDataSet():
    # Six toy posts, already split into tokens
    postingList = [['my', 'dog', 'has', 'flea', 'problems', 'help', 'please'],
                   ['maybe', 'not', 'take', 'him', 'to', 'dog', 'park', 'stupid'],
                   ['my', 'dalmation', 'is', 'so', 'cute', 'I', 'love', 'him'],
                   ['stop', 'posting', 'stupid', 'worthless', 'garbage'],
                   ['mr', 'licks', 'ate', 'my', 'steak', 'how', 'to', 'stop', 'him'],
                   ['quit', 'buying', 'worthless', 'dog', 'food', 'stupid']]
    classVec = [0, 1, 0, 1, 0, 1]  # 1 = abusive post, 0 = normal post
    return postingList, classVec

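# Build the vocabulary: the unique words across all documents (a set removes duplicates)
def createVocabList(dataSet):
    # Start from an empty set
    vocabSet = set()
    for document in dataSet:
        # Take the union with the words of this document
        vocabSet = vocabSet | set(document)
    # Return the unique words as a list
    return list(vocabSet)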

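# Convert a document into a set-of-words vector over the vocabulary
def setOfWords2Vec(vocabList, inputSet):
    # Start with an all-zero vector of the same length as the vocabulary
    returnVec = [0] * len(vocabList)
    for word in inputSet:
        # If the word is in the vocabulary, set the entry at its index to 1
        if word in vocabList:
            returnVec[vocabList.index(word)] = 1
        else:
            # %s, not %d: word is a string
            print("the word: %s is not in vocabulary" % word)
    return returnVec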

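# Training. trainMatrix: matrix of document vectors; trainCategory: class label of each document
def trainNB0(trainMatrix, trainCategory):
    # Number of training documents
    numTrainDocs = len(trainMatrix)
    # Length of each document vector, i.e. the vocabulary size
    numWords = len(trainMatrix[0])
    # Fraction of abusive documents, i.e. P(y1), where y1 is the abusive class
    pAbusive = sum(trainCategory) / float(numTrainDocs)
    # p0* accumulate counts for normal documents, p1* for abusive documents.
    # Counts start at 1 and denominators at 2.0 so that no word gets probability 0.
    p0Num = ones(numWords); p1Num = ones(numWords)
    p0Denom = 2.0; p1Denom = 2.0
    # For each training document
    for i in range(numTrainDocs):
        # If the document is abusive
        if trainCategory[i] == 1:
            # Add its word occurrences to the per-word counts
            p1Num += trainMatrix[i]
            # Add its number of words to the class total
            p1Denom += sum(trainMatrix[i])
        else:
            # Do the same for normal documents
            p0Num += trainMatrix[i]
            p0Denom += sum(trainMatrix[i])
    # Log probability of each word in abusive documents, log P(xi|y1)
    p1Vect = log(p1Num / p1Denom)
    # Log probability of each word in normal documents, log P(xi|y0)
    p0Vect = log(p0Num / p0Denom)
    return p0Vect, p1Vect, pAbusive

"""vec2Classify: word vector of the document to classify
p0Vec: log conditional probabilities for the normal class, log P(xi|y0)
p1Vec: log conditional probabilities for the abusive class, log P(xi|y1)
pClass1: prior probability of the abusive class, P(y1)
"""
def classifyNB(vec2Classify, p0Vec, p1Vec, pClass1):
    # Score each class as log P(y) + the sum of log P(xi|y) over the words present.
    # Working with logs turns the product into a sum and avoids underflow.
    p1 = sum(vec2Classify * p1Vec) + log(pClass1)
    p0 = sum(vec2Classify * p0Vec) + log(1.0 - pClass1)
    # These are log scores, not normalised probabilities
    print("log score, abusive class:", p1)
    print("log score, normal class:", p0)
    if p1 > p0:
        return 1
    else:
        return 0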

def testingNB():
    # Load the data
    listOposts, listClasses = loadDataSet()
    myVocabList = createVocabList(listOposts)
    trainMat = []
    # Build the training matrix
    for postinDoc in listOposts:
        trainMat.append(setOfWords2Vec(myVocabList, postinDoc))
    # Get the conditional log probabilities and the prior of the abusive class
    p0v, p1v, pAb = trainNB0(array(trainMat), array(listClasses))
    testEntry = ['love', 'my', 'dalmation']
    # Convert the test entry into a vector that can be classified
    thisDoc = array(setOfWords2Vec(myVocabList, testEntry))
    print(testEntry, 'classified as:', classifyNB(thisDoc, p0v, p1v, pAb))
    testEntry = ['stupid', 'garbage']
    thisDoc = array(setOfWords2Vec(myVocabList, testEntry))
    print(testEntry, 'classified as:', classifyNB(thisDoc, p0v, p1v, pAb))

testingNB()

Experimental results:
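Two details of `trainNB0` and `classifyNB` are worth isolating: counts start at 1 (with denominators at 2.0), and the scores are sums of logarithms rather than products of probabilities. The sketch below uses only the standard `math` module and made-up numbers to show what goes wrong without each choice. The variable names and values are illustrative assumptions.

```python
import math

# Hypothetical long document: 500 words, each with probability 0.01 under some class.
probs = [0.01] * 500

# Multiplying the probabilities directly underflows in double precision.
product = 1.0
for p in probs:
    product *= p

# Summing logarithms keeps the score finite and still comparable between classes.
log_score = sum(math.log(p) for p in probs)

print("product of probabilities:", product)
print("sum of log probabilities:", log_score)

# Smoothing: a word never seen in a class has count 0, and log(0) is undefined.
# Starting the count at 1 and the denominator at 2.0 keeps the estimate positive.
count, total = 0, 10
smoothed = (count + 1) / (total + 2.0)
print("smoothed estimate:", smoothed, "log:", math.log(smoothed))
```

Because the logarithm is monotonic, comparing log scores picks the same class as comparing the underlying products would, as long as those products do not underflow.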

Example: filtering spam with naive Bayes

Code:

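# Prepare the data: split a text into tokens
def textParse(bigString):
    import re
    # Split on runs of non-word characters (\W+, upper-case W)
    listOfTokens = re.split(r'\W+', bigString)
    # Return the tokens in lower case, dropping those shorter than 3 characters
    return [tok.lower() for tok in listOfTokens if len(tok) > 2]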

# Test the algorithm: cross-validation with naive Bayes (a single random hold-out split)
def spamTest():
    docList = []; classList = []; fullText = []
    for i in range(1, 26):
        # Load the texts under the spam and ham folders as word lists
        wordList = textParse(open('email/spam/%d.txt' % i).read())
        docList.append(wordList)
        fullText.extend(wordList)
        # Class label: 1 = spam
        classList.append(1)
        wordList = textParse(open('email/ham/%d.txt' % i).read())
        docList.append(wordList)
        fullText.extend(wordList)
        # Class label: 0 = ham
        classList.append(0)
    # Remove duplicate words to build the vocabulary
    vocabList = createVocabList(docList)
    # Indices of all 50 emails (a list, so that entries can be deleted)
    trainingSet = list(range(50)); testSet = []
    # Randomly move 10 of the 50 emails into the test set
    for i in range(10):
        randIndex = int(random.uniform(0, len(trainingSet)))
        testSet.append(trainingSet[randIndex])
        del trainingSet[randIndex]
    trainMat = []; trainClasses = []
    # The remaining 40 emails form the training set
    for docIndex in trainingSet:
        trainMat.append(setOfWords2Vec(vocabList, docList[docIndex]))
        trainClasses.append(classList[docIndex])
    # Train to obtain the conditional log probabilities
    p0V, p1V, pSpam = trainNB0(array(trainMat), array(trainClasses))
    errorCount = 0.0
    # Compute the error rate on the test set
    for docIndex in testSet:
        wordVector = setOfWords2Vec(vocabList, docList[docIndex])
        if classifyNB(array(wordVector), p0V, p1V, pSpam) != classList[docIndex]:
            errorCount += 1
    print("the error rate is:", float(errorCount) / len(testSet))

spamTest()

Experimental results:
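The tokenizer is the step most worth checking in isolation, since everything downstream depends on the word lists it produces. The sketch below assumes the intended split pattern is `\W+` (runs of non-word characters). `text_parse` is a standalone copy written for this illustration, and the sample sentence is made up.

```python
import re

def text_parse(big_string):
    """Split on runs of non-word characters, lower-case, drop tokens shorter than 3."""
    tokens = re.split(r'\W+', big_string)
    return [tok.lower() for tok in tokens if len(tok) > 2]

# Hypothetical sample text, not taken from the email data set.
sample = "Hi Peter, the Python book is AWESOME! Buy it at www.example.com"
tokens = text_parse(sample)
print(tokens)
```

Short tokens such as "Hi", "is", "it" and "at" are dropped by the length filter, and the URL is broken into its dot-separated parts, which may or may not be desirable for a spam filter.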
