Paper: https://arxiv.org/pdf/1903.00834.pdf

Project Page: http://web.eecs.utk.edu/~zzhang61/project_page/SRNTT/SRNTT.html

Adobe, University of Tennessee

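When the Ref image is extremely dissimilar to the LR image, the method suppresses the dissimilar regions while recovering details, which also avoids fabricating false details.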

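1. Contributions of the paper (three in total):

- Explores a more general reference-based super-resolution (RefSR) setting, which breaks the performance barrier of single-image SR (lack of texture detail) and relaxes the alignment assumption of existing RefSR methods

- Proposes SRNTT, an end-to-end deep model that super-resolves the LR image conditioned on any given reference through multi-scale neural texture transfer

- Builds a benchmark dataset, CUFED5, for evaluating RefSR with references at different levels of similarity to the LR input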

2. Algorithm overview

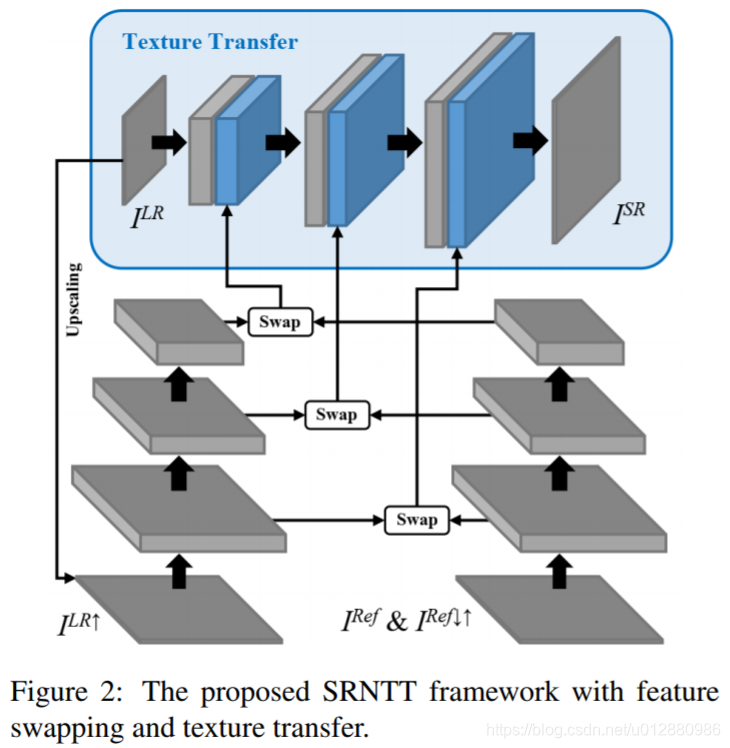

SRNTT structure

processes

- Feature Swapping

First, apply bicubic up-sampling on ILR to get an upscaled LR image ILR↑.

Then, sequentially apply bicubic down-sampling and up-sampling with the same factor on IRef to obtain a blurry Ref image IRef↓↑ that matches the frequency band of ILR↑.
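The down-then-up step can be sketched in a few lines. This is only an illustration: it assumes a single-channel image stored as a NumPy array, and it swaps bicubic interpolation for a box filter (average pooling) and nearest-neighbour repetition so the example needs nothing beyond NumPy. The function names and `factor=4` are hypothetical choices, not taken from the paper's code.

```python
import numpy as np

def downsample_box(img, factor):
    """Average-pool by `factor` (a simple stand-in for bicubic down-sampling)."""
    h, w = img.shape
    h, w = h - h % factor, w - w % factor      # crop to a multiple of factor
    blocks = img[:h, :w].reshape(h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(1, 3))

def upsample_nearest(img, factor):
    """Repeat each pixel `factor` times per axis (stand-in for bicubic up-sampling)."""
    return np.repeat(np.repeat(img, factor, axis=0), factor, axis=1)

def blur_reference(ref, factor=4):
    """Return a blurry Ref (IRef↓↑): same size as the cropped Ref, high frequencies removed."""
    return upsample_nearest(downsample_box(ref, factor), factor)

rng = np.random.default_rng(0)
ref = rng.random((16, 16))                     # toy stand-in for IRef
ref_blur = blur_reference(ref, factor=4)
```

The blurred image keeps the spatial size of the reference but loses the fine detail, which is what lets it be compared fairly against the up-sampled LR image.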

Drawing on CrossNet [41]: instead of estimating a global transformation or optical flow, we match the local patches in ILR↑ and IRef↓↑, so that there is no constraint on the global structure of the Ref image.

Pi(·) denotes sampling the i-th patch from neural feature map, and si,j is the similarity between the i-th LR patch and the j-th Ref patch.

Sj is the similarity map for the j-th Ref patch, and ∗ denotes the correlation operation

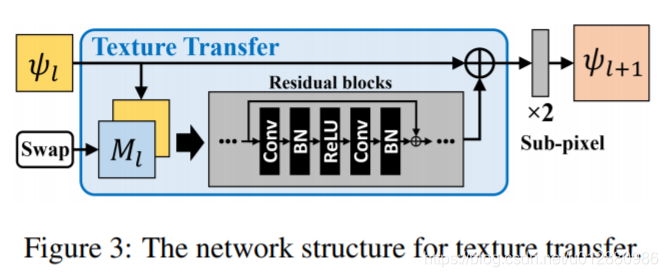
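The patch matching described above can be sketched with plain NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: feature maps are `(C, H, W)` arrays, patches are 3×3 with stride 1, only the Ref patch is normalized in the inner product, and the best match for each LR patch is taken as the arg-max of the similarity. All function names are hypothetical.

```python
import numpy as np

def extract_patches(feat, size=3):
    """Densely sample size x size patches (stride 1) from a (C, H, W) feature map.
    Returns an array of shape (num_patches, C * size * size)."""
    c, h, w = feat.shape
    oh, ow = h - size + 1, w - size + 1
    patches = np.empty((oh * ow, c * size * size))
    for y in range(oh):
        for x in range(ow):
            patches[y * ow + x] = feat[:, y:y + size, x:x + size].ravel()
    return patches

def match_patches(feat_lr, feat_ref, size=3, eps=1e-8):
    """For every LR patch i, find the most similar Ref patch j.
    s[i, j] is the inner product of LR patch i with the unit-normalized Ref patch j."""
    lr_p = extract_patches(feat_lr, size)
    ref_p = extract_patches(feat_ref, size)
    ref_n = ref_p / (np.linalg.norm(ref_p, axis=1, keepdims=True) + eps)
    s = lr_p @ ref_n.T                 # similarity matrix, shape (num_lr, num_ref)
    return s.argmax(axis=1), s.max(axis=1)

rng = np.random.default_rng(0)
feat = rng.random((2, 6, 6))           # toy feature map: 2 channels, 6 x 6
best, score = match_patches(feat, feat)
```

Computing one row of `s` for every position at once is equivalent to correlating the LR feature map with the normalized Ref patch used as a kernel, which is how the similarity map Sj is described. The explicit loops here are for readability; a real implementation would use a convolution routine.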

- Neural Texture Transfer

Losses

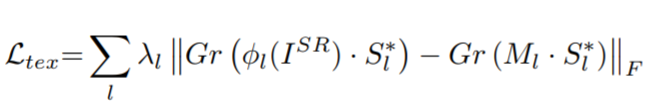

- texture loss

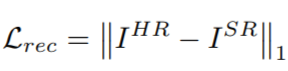

- Reconstruction loss

- Perceptual loss

where V and C indicate the volume and channel number of the feature maps, respectively, and φi denotes the i-th channel of the feature maps extracted from the hidden layer (relu5_1) of the VGG19 model. || · ||F denotes the Frobenius norm.
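As a rough illustration of these symbols, the sketch below computes a perceptual loss of the form (1/V) Σi ||φi(ISR) − φi(IHR)||F on precomputed feature maps. It assumes the features are already extracted into `(C, H, W)` NumPy arrays and that V is the total element count C·H·W; the function name is hypothetical and no VGG19 model is loaded here.

```python
import numpy as np

def perceptual_loss(feat_sr, feat_hr):
    """(1/V) * sum over channels of the Frobenius norm of the per-channel difference."""
    if feat_sr.shape != feat_hr.shape:
        raise ValueError("feature maps must have the same shape")
    c = feat_sr.shape[0]               # C: number of channels
    v = feat_sr.size                   # V: volume, assumed to be C * H * W
    diff = (feat_sr - feat_hr).reshape(c, -1)
    per_channel = np.sqrt((diff ** 2).sum(axis=1))   # Frobenius norm of each channel
    return per_channel.sum() / v

feat_hr = np.zeros((2, 2, 2))
feat_sr = np.ones((2, 2, 2))
loss = perceptual_loss(feat_sr, feat_hr)   # each channel norm is 2, so (2 + 2) / 8 = 0.5
```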

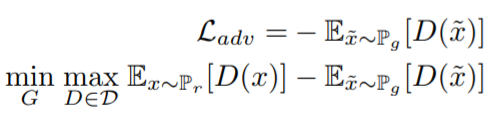

- Adversarial loss

3. Experiments

Settings

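Features are extracted from different VGG layers: relu1_1, relu2_1, and relu3_1.

The loss weights are 1, 1e-4, 1e-6, and 1e-4 (for the reconstruction, perceptual, adversarial, and texture losses, respectively).

The learning rate is set to 1e-4, and the optimizer is Adam.

The network is first trained for 2 epochs with the reconstruction loss only, and then for 20 epochs with all losses.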
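The two-stage schedule and the loss weighting can be written down as a small sketch. The mapping of the four weights to the reconstruction, perceptual, adversarial, and texture losses is an assumption, as are the dictionary keys and the function name; the per-term loss values are taken as already computed numbers.

```python
# Loss weights from the settings; the mapping to loss names is assumed.
WEIGHTS = {"rec": 1.0, "per": 1e-4, "adv": 1e-6, "tex": 1e-4}
WARMUP_EPOCHS = 2     # stage 1: reconstruction loss only
FULL_EPOCHS = 20      # stage 2: all losses
LEARNING_RATE = 1e-4  # used with the Adam optimizer

def total_loss(losses, epoch):
    """Combine per-term loss values for a 0-based epoch index."""
    if epoch < WARMUP_EPOCHS:
        return WEIGHTS["rec"] * losses["rec"]
    return sum(WEIGHTS[name] * losses[name] for name in WEIGHTS)

losses = {"rec": 2.0, "per": 100.0, "adv": 1000.0, "tex": 10.0}
warmup_value = total_loss(losses, epoch=0)   # reconstruction term only
full_value = total_loss(losses, epoch=2)     # weighted sum of all four terms
```

With these toy numbers the warm-up value is just the reconstruction term, while the full objective adds small weighted contributions from the other three terms.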

The paper also applies data augmentation to IRef, generating scaled and rotated versions of it.

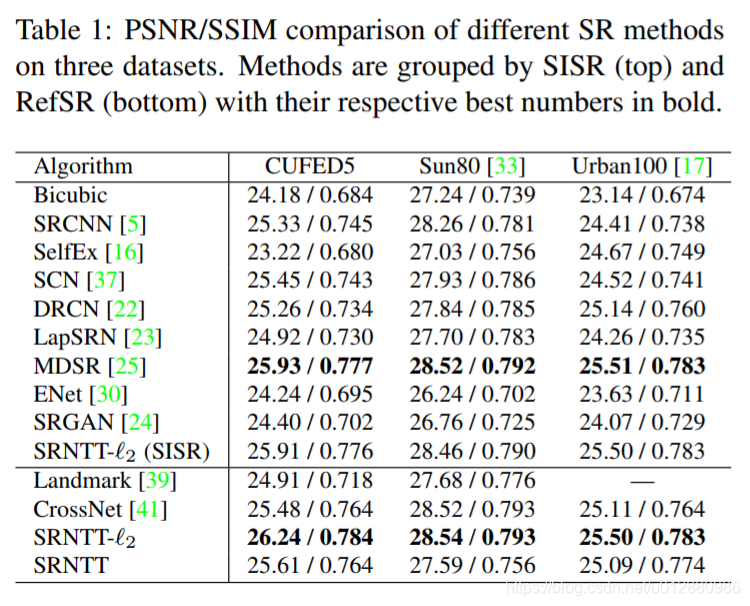

Results

SRNTT-L2 is the variant trained with MSE.
