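OpenCV 4.5.4 added deep-learning-based face detection and recognition to the objdetect module. The feature was contributed by the teams of Shiqi Yu and Weihong Deng at OpenCV China.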

Test project download link: FaceDetector face detection and recognition DNN model demo.

1. Introduction

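Deep-learning-based face recognition is generally done in two steps:

- Face detection and alignment: detect the face and its landmarks, then align the face. Alignment can be 2D or 3D.

- Face feature extraction and recognition: extract a feature vector (anywhere from 128 to 2048 dimensions, depending on the model), then recognize the face from that feature.

Recognition falls into two cases by application. The first is 1:1, called comparison or verification, which decides whether two faces belong to the same person. The second is 1:N, called identification or recognition, which finds the closest match among N faces.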
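To make the difference between 1:1 and 1:N concrete, here is a minimal sketch on plain feature vectors. It does not use OpenCV; the function names (`cosineSimilarity`, `verify`, `identify`) and the threshold passed in are hypothetical and chosen only for illustration.

```cpp
#include <cmath>
#include <cstddef>
#include <map>
#include <string>
#include <vector>

// Cosine similarity between two feature vectors of equal length.
double cosineSimilarity(const std::vector<float>& a, const std::vector<float>& b) {
    double dot = 0.0, na = 0.0, nb = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        dot += a[i] * b[i];
        na += a[i] * a[i];
        nb += b[i] * b[i];
    }
    return dot / (std::sqrt(na) * std::sqrt(nb) + 1e-12);
}

// 1:1 verification: do these two features belong to the same person?
bool verify(const std::vector<float>& a, const std::vector<float>& b, double threshold) {
    return cosineSimilarity(a, b) > threshold;
}

// 1:N identification: return the best-matching enrolled name,
// or "Unknown" when no entry scores above the threshold.
std::string identify(const std::vector<float>& query,
                     const std::map<std::string, std::vector<float>>& gallery,
                     double threshold) {
    std::string best = "Unknown";
    double bestScore = threshold;
    for (const auto& entry : gallery) {
        const double score = cosineSimilarity(query, entry.second);
        if (score > bestScore) {
            bestScore = score;
            best = entry.first;
        }
    }
    return best;
}
```

Verification answers a yes/no question about one pair, while identification scans the whole gallery and keeps the best score, so its cost grows with N.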

The sample sources are at sources\samples\dnn\face_detect.cpp and \sources\samples\dnn\face_match.cpp. The model files they use can be downloaded from:

https://github.com/ShiqiYu/libfacedetection.train/blob/master/tasks/task1/onnx/yunet.onnx

https://drive.google.com/file/d/1ClK9WiB492c5OZFKveF3XiHCejoOxINW/view

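The model files are also included in the test project provided later in this article.

SeetaFace2 is a similar solution. For convenience, this article wraps 1:N face recognition in a single class.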

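1.1 Detection

YuNet is an anchor-based face detector. With a 320*320 input it generates square anchors at four scales and can detect faces from a minimum of 10*10 up to a maximum of 256*256. Following MobileNet, it replaces some of the convolutions with depth-wise convolutions to reduce the parameter count and model size. It is trained and tested on WIDER FACE, and uses an EIoU loss during training to improve face localization. Besides the bounding box, each detection also carries five facial landmarks (left eye, right eye, nose tip, left mouth corner, right mouth corner).

In OpenCV code, the static Ptr<FaceDetectorYN> create() method creates a detector object, and int detect() then runs inference.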

class CV_EXPORTS_W FaceDetectorYN

{

public:

virtual ~FaceDetectorYN() {};

CV_WRAP virtual void setInputSize(const Size& input_size) = 0;

CV_WRAP virtual Size getInputSize() = 0;

CV_WRAP virtual void setScoreThreshold(float score_threshold) = 0;

CV_WRAP virtual float getScoreThreshold() = 0;

CV_WRAP virtual void setNMSThreshold(float nms_threshold) = 0;

CV_WRAP virtual float getNMSThreshold() = 0;

CV_WRAP virtual void setTopK(int top_k) = 0;

CV_WRAP virtual int getTopK() = 0;

CV_WRAP virtual int detect(InputArray image, OutputArray faces) = 0;

CV_WRAP static Ptr<FaceDetectorYN> create(const String& model,

const String& config,

const Size& input_size,

float score_threshold = 0.9f,

float nms_threshold = 0.3f,

int top_k = 5000,

int backend_id = 0,

int target_id = 0);

};

The detection results are stored row by row in a Mat. Each detected face is a 15-dimensional vector, defined as

(tl_x, tl_y, w, h, re_x, re_y, le_x, le_y, nt_x, nt_y, rcm_x, rcm_y, lcm_x, lcm_y, score)

'tl': top left point of the bounding box

're': right eye

'le': left eye

'nt': nose tip

'rcm': right corner of mouth

'lcm': left corner of mouth
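The row layout above can be unpacked into named fields, which is easier to read than raw indices. The sketch below is a minimal, OpenCV-free illustration; `Point2f`, `Detection` and `decodeRow` are hypothetical names introduced here, not part of the OpenCV API.

```cpp
#include <array>

struct Point2f {
    float x;
    float y;
};

// One decoded detection, mirroring the 15-value row layout described above.
struct Detection {
    float x, y, w, h;      // bounding box: top-left corner, width, height
    Point2f rightEye;      // re
    Point2f leftEye;       // le
    Point2f noseTip;       // nt
    Point2f rightMouth;    // rcm
    Point2f leftMouth;     // lcm
    float score;           // detection confidence
};

// Map the 15 floats of one row onto the named fields.
Detection decodeRow(const std::array<float, 15>& r) {
    Detection d;
    d.x = r[0];
    d.y = r[1];
    d.w = r[2];
    d.h = r[3];
    d.rightEye = {r[4], r[5]};
    d.leftEye = {r[6], r[7]};
    d.noseTip = {r[8], r[9]};
    d.rightMouth = {r[10], r[11]};
    d.leftMouth = {r[12], r[13]};
    d.score = r[14];
    return d;
}
```

With OpenCV, the 15 values of row i would typically be read from the output Mat, for example through `faces.ptr<float>(i)`, assuming the Mat holds 32-bit floats.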

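1.2 Recognition

Recognition uses the SFace model; see its github repository and paper.

Recognition builds on the face detection from the previous subsection and takes three steps: face alignment, feature extraction, and feature comparison. The extracted face feature has 128 dimensions, and the comparison can use either Cosine (the default) or L2 Norm as the metric.

With the recommended thresholds, Threshold = 1.128 for L2 Norm and Threshold = 0.363 for cosine, accuracy on the evaluation dataset reaches 99.6%.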
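The two recommended thresholds are not independent if the features are scaled to unit length before comparison, which is an assumption of this sketch rather than something stated above. For unit vectors, the squared L2 distance d and the cosine similarity s satisfy d^2 = 2 - 2s. The helper names below are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Scale a feature vector to unit length (assumes a non-zero vector).
std::vector<double> l2Normalize(const std::vector<double>& v) {
    double norm = 0.0;
    for (double x : v) norm += x * x;
    norm = std::sqrt(norm);
    std::vector<double> out(v.size());
    for (std::size_t i = 0; i < v.size(); ++i) out[i] = v[i] / norm;
    return out;
}

// Cosine similarity: dot product of the normalized features.
double cosineScore(const std::vector<double>& a, const std::vector<double>& b) {
    const std::vector<double> na = l2Normalize(a);
    const std::vector<double> nb = l2Normalize(b);
    double dot = 0.0;
    for (std::size_t i = 0; i < na.size(); ++i) dot += na[i] * nb[i];
    return dot;
}

// L2 distance between the normalized features.
double l2Distance(const std::vector<double>& a, const std::vector<double>& b) {
    const std::vector<double> na = l2Normalize(a);
    const std::vector<double> nb = l2Normalize(b);
    double sum = 0.0;
    for (std::size_t i = 0; i < na.size(); ++i) {
        const double diff = na[i] - nb[i];
        sum += diff * diff;
    }
    return std::sqrt(sum);
}

// For unit vectors d^2 = 2 - 2*s, so a cosine threshold maps to an L2 threshold.
double l2ThresholdFromCosine(double cosineThreshold) {
    return std::sqrt(2.0 - 2.0 * cosineThreshold);
}
```

Under that assumption, a cosine threshold of 0.363 corresponds to an L2 distance of sqrt(2 - 2 * 0.363), roughly 1.129, which is in line with the recommended 1.128.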

class CV_EXPORTS_W FaceRecognizerSF

{

public:

virtual ~FaceRecognizerSF() {};

enum DisType { FR_COSINE=0, FR_NORM_L2=1 };

// Align and crop the face using the detected face location info

CV_WRAP virtual void alignCrop(InputArray src_img, InputArray face_box, OutputArray aligned_img) const = 0;

// Extract the feature of the cropped face region

CV_WRAP virtual void feature(InputArray aligned_img, OutputArray face_feature) = 0;

// Distance between two 128-dimensional face features

CV_WRAP virtual double match(InputArray _face_feature1, InputArray _face_feature2, int dis_type = FaceRecognizerSF::FR_COSINE) const = 0;

CV_WRAP static Ptr<FaceRecognizerSF> create(const String& model, const String& config, int backend_id = 0, int target_id = 0);

};

2. Face recognition (1:N) solution

The FaceSolution class wraps the detection and recognition functions.

2.1 FaceSolution.hpp

#pragma once

#include "opencv2/core.hpp"

#include "opencv2/imgcodecs.hpp"

#include "opencv2/imgproc.hpp"

#include "opencv2/objdetect.hpp"

#include "opencv2/dnn.hpp"

#include <map>

#include <string>

#include <vector>

struct FaceResult {

std::string name; // defaults to "Unknown"

cv::Matx<float, 1, 15> info; // face info, 1*15

};

class FaceSolution {

public:

void initFaceModels(const std::string& detectModelPath, // detection model

const std::string& recogModelPath, // recognition model

const std::string& faceDatabaseDir, // face database directory

bool useCUDA = false); // use the CUDA backend and target when true

// Detect faces and their location info

void detectFace(const cv::Mat &frame, std::vector<FaceResult> &results);

// Recognize faces (1:N comparison for every detected face)

void matchFace(const cv::Mat &frame, std::vector<FaceResult> &results, bool l2 = false);

// Register a face into faceDatabase

void registFace(const cv::Mat &faceRoi, const std::string& name);

private:

cv::Ptr<cv::FaceDetectorYN> faceDetector;

cv::Ptr<cv::FaceRecognizerSF> faceRecognizer;

std::map<std::string, cv::Mat> faceDatabase; // stands in for a database of registered faces

};

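2.2 FaceSolution.cpp

Face feature similarity can be measured with normL2 or cosine. The thresholds for deciding whether two faces are the same person are 1.128 and 0.363 respectively: with normL2, a distance below 1.128 means the same person; with cosine, a similarity above 0.363 means the same person.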
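The two metrics compare in opposite directions, which is easy to get wrong. A small helper can keep the rule in one place. This is only a sketch: `Metric`, `Thresholds` and `isSamePerson` are hypothetical names, and the default values are the thresholds quoted above.

```cpp
// Which similarity metric a score was computed with.
enum class Metric { Cosine, L2 };

// Decision thresholds; the defaults are the values quoted in the text.
struct Thresholds {
    double cosine = 0.363;
    double l2 = 1.128;
};

// Cosine is a similarity (higher means closer), L2 is a distance (lower means closer).
bool isSamePerson(double score, Metric metric, const Thresholds& t = Thresholds{}) {
    return metric == Metric::Cosine ? score > t.cosine : score < t.l2;
}
```

For example, a cosine score of 0.5 and an L2 distance of 0.9 would both be accepted, while a cosine score of 0.2 and an L2 distance of 1.3 would both be rejected.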

#include "FaceSolution.hpp"

#include <iostream>

void FaceSolution::initFaceModels(const std::string& detectModelPath,

const std::string& recogModelPath,

const std::string& faceDatabaseDir,

bool useCUDA)

{

int backend_id = 0;

int target_id = 0;

if(useCUDA) {

backend_id = cv::dnn::DNN_BACKEND_CUDA;

target_id = cv::dnn::DNN_TARGET_CUDA;

}

this->faceDetector = cv::FaceDetectorYN::create(detectModelPath, "", cv::Size(320, 320), 0.9f, 0.3f, 500, backend_id, target_id);

this->faceRecognizer = cv::FaceRecognizerSF::create(recogModelPath, "", backend_id, target_id);

std::vector<std::string> fileNames;

if(faceDatabaseDir.length()) {

std::cout << "Register faces:" << std::endl;

cv::glob(faceDatabaseDir, fileNames);

for(const auto& file_path : fileNames) {

cv::Mat image = cv::imread(file_path);

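// Accept both backslash and '/' as path separators so this also works outside Windows

const std::size_t posSlash = file_path.find_last_of("/\\");

const std::size_t nameStart = (posSlash == std::string::npos) ? 0 : posSlash + 1;

const std::size_t posDot = file_path.rfind('.');

// Strip the directory and the extension to get the person's name

const std::size_t nameLen = (posDot == std::string::npos || posDot < nameStart) ? std::string::npos : posDot - nameStart;

std::string image_name = file_path.substr(nameStart, nameLen);

this->registFace(image, image_name);

std::cout << " file name: " << file_path.substr(nameStart) << std::endl;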

}

}

}

void FaceSolution::detectFace(const cv::Mat &image, std::vector<FaceResult> &results)

{

// Set input size before inference

this->faceDetector->setInputSize(image.size());

// Inference

cv::Mat faces;

//double t = (double)cv::getTickCount();

this->faceDetector->detect(image, faces);

//t = cv::getTickFrequency()/(cv::getTickCount() - t);

//std::cout << cv::format("FPS: %.2f", t) << std::endl;

for(int i = 0; i < faces.rows; i++) {

FaceResult fr;

fr.name = "Unknown";

faces.row(i).copyTo(fr.info);

results.emplace_back(fr);

}

}

void FaceSolution::matchFace(const cv::Mat &frame, std::vector<FaceResult> &results, bool l2)

{

const double cosine_similar_thresh = 0.363;

const double l2norm_similar_thresh = 1.128;

for(auto & face : results) {

cv::Mat aligned_face, feature;

faceRecognizer->alignCrop(frame, face.info, aligned_face);

faceRecognizer->feature(aligned_face, feature);

double min_dist = 100.0;

double max_cosine = 0.0;

std::string matchedName = "Unknown";

for(const auto& item : this->faceDatabase) {

if(l2) {

double L2_score = faceRecognizer->match(feature, item.second, cv::FaceRecognizerSF::DisType::FR_NORM_L2);

if(L2_score < min_dist) {

min_dist = L2_score;

matchedName = item.first;

}

}

else {

double cos_score = faceRecognizer->match(feature, item.second, cv::FaceRecognizerSF::DisType::FR_COSINE);

if(cos_score > max_cosine) {

max_cosine = cos_score;

matchedName = item.first;

}

}

}

// L2 distance must fall below its threshold; cosine similarity must exceed its threshold

const bool matched = l2 ? (min_dist < l2norm_similar_thresh) : (max_cosine > cosine_similar_thresh);

if(matched) {

face.name = matchedName;

}

}

}

void FaceSolution::registFace(const cv::Mat &frame, const std::string& name)

{

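if(frame.empty()) return; // unreadable image, nothing to register

this->faceDetector->setInputSize(frame.size());

// Inference

cv::Mat faces;

this->faceDetector->detect(frame, faces);

if(faces.rows < 1) return; // no face detected, skip registration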

cv::Mat aligned_face, feature;

faceRecognizer->alignCrop(frame, faces.row(0), aligned_face);

faceRecognizer->feature(aligned_face, feature);

this->faceDatabase.emplace(name, feature.clone()); // deep copy

}
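initFaceModels above derives each person's name from the image file name. If C++17 is available, `std::filesystem` offers a shorter way to do the same thing. The sketch below is an alternative, not the code used in this article, and `labelFromPath` is a hypothetical helper name.

```cpp
#include <filesystem>
#include <string>

// Derive the identity label from an image path by dropping the directory
// part and the last extension, e.g. "face_db/alice.jpg" gives "alice".
std::string labelFromPath(const std::string& filePath) {
    return std::filesystem::path(filePath).stem().string();
}
```

Note that `std::filesystem::path` follows the platform's separator rules, so a path written with backslashes is only split correctly on Windows.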

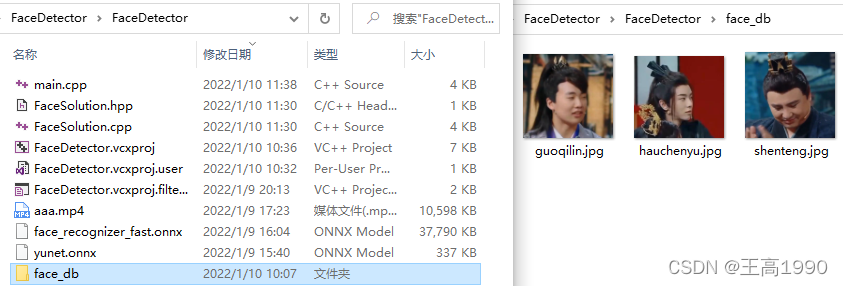

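3. Face recognition test

Using the FaceSolution class from the previous section, this test runs detection and recognition on the video aaa.mp4. Three face images were cropped from the video to build the face database. The files are laid out as follows: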


#include "opencv2/core.hpp"

#include "opencv2/highgui.hpp"

#include "opencv2/videoio.hpp"

#include "opencv2/imgcodecs.hpp"

#include "opencv2/imgproc.hpp"

#include "FaceSolution.hpp"

#ifdef _DEBUG

#define OPENCV_VERSION CVAUX_STR(CV_VERSION_MAJOR) "" CVAUX_STR(CV_VERSION_MINOR) "" CVAUX_STR(CV_VERSION_REVISION) "d"

#else

#define OPENCV_VERSION CVAUX_STR(CV_VERSION_MAJOR) "" CVAUX_STR(CV_VERSION_MINOR) "" CVAUX_STR(CV_VERSION_REVISION) ""

#endif

#pragma comment(lib, "opencv_core" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_imgcodecs" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_imgproc" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_videoio" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_highgui" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_objdetect" OPENCV_VERSION ".lib")

//#pragma comment(lib, "opencv_world" OPENCV_VERSION ".lib")

void drawResult(cv::Mat& image, const std::vector<FaceResult>& faces);

int main()

{

std::string detect_model_path = "yunet.onnx";

std::string recog_model_path = "face_recognizer_fast.onnx";

cv::Ptr<FaceSolution> faceSolution = cv::makePtr<FaceSolution>();

faceSolution->initFaceModels(detect_model_path, recog_model_path, "./face_db", true);

cv::VideoCapture cap("aaa.mp4");

//cv::VideoCapture cap(0);

if(!cap.isOpened()) {

return 0;

}

cv::namedWindow("face",0);

cv::Mat frame;

while(cap.read(frame)) {

// Downscale first to cut detection inference time

if(frame.cols > 720)

cv::resize(frame, frame, frame.size() / 2);

// Detect and recognize

std::vector<FaceResult> faceResult;

faceSolution->detectFace(frame, faceResult);

faceSolution->matchFace(frame, faceResult);

// Draw the results

drawResult(frame, faceResult);

cv::imshow("face", frame);

if(cv::waitKey(1) == 27)

break;

}

}

void drawResult(cv::Mat& image, const std::vector<FaceResult>& faces)

{

for(auto& face : faces) {

// (tl_x, tl_y, w, h, re_x, re_y, le_x, le_y, nt_x, nt_y, rcm_x, rcm_y, lcm_x, lcm_y, score)

// 'tl': top left point of the bounding box

// 're': right eye, 'le': left eye

// 'nt': nose tip

// 'rcm': right corner of mouth, 'lcm': left corner of mouth

cv::Rect faceRect(face.info(0), face.info(1), face.info(2), face.info(3));

cv::Point rightEys(face.info(4), face.info(5));

cv::Point leftEys(face.info(6), face.info(7));

cv::Point noseTip(face.info(8), face.info(9));

cv::Point rightMouthCorner(face.info(10), face.info(11));

cv::Point leftMouthCorner(face.info(12), face.info(13));

float score = face.info(14);

cv::rectangle(image, faceRect, {0,0,255});

cv::circle(image, rightEys, 2, {0,0,255}, -1);

cv::circle(image, leftEys, 2, {0,0,255}, -1);

cv::circle(image, noseTip, 2, {0,0,255}, -1);

cv::circle(image, rightMouthCorner, 2, {0,0,255}, -1);

cv::circle(image, leftMouthCorner, 2, {0,0,255}, -1);

cv::putText(image, cv::format("%s: %.2f", face.name.c_str(), score), cv::Point(faceRect.x, faceRect.y - 16), 2, 1, {0,0,255});

}

}

A screenshot of the recognition result:
