%% Clear the environment

warning off % suppress warning messages

close all % close any open figure windows

clear % clear workspace variables

clc % clear the command window
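
%% Load data and set the split (sketch; not part of the original listing)
% The code below uses res, num_train_s and f_, which this listing never defines.
% The file name, the 70/30 split and the single target column are assumptions.

res = readmatrix('data.xlsx'); % hypothetical file: one sample per row, target in the last column

num_samples = size(res, 1); % number of samples (rows)

f_ = size(res, 2) - 1; % number of input features, assuming one output column

num_train_s = round(0.7 * num_samples); % assumed: first 70% of rows used for training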

%% Split into training and test sets (transposed so that each column is one sample)

P_train = res(1: num_train_s, 1: f_)';

T_train = res(1: num_train_s, f_ + 1: end)';

M = size(P_train, 2);

P_test = res(num_train_s + 1: end, 1: f_)';

T_test = res(num_train_s + 1: end, f_ + 1: end)';

N = size(P_test, 2);

% Normalize the data to [0, 1]; the test set reuses the training-set scaling

[p_train, ps_input] = mapminmax(P_train, 0, 1);

p_test = mapminmax('apply', P_test, ps_input);

[t_train, ps_output] = mapminmax(T_train, 0, 1);

t_test = mapminmax('apply', T_test, ps_output);

%% Reshape each sample into a numFeatures-by-1-by-1 array, one cell per sample

for i = 1:size(P_train,2)

trainD{i,:} = (reshape(p_train(:,i),size(p_train,1),1,1));

end

for i = 1:size(p_test,2)

testD{i,:} = (reshape(p_test(:,i),size(p_test,1),1,1));

end

targetD = t_train;

targetD_test = t_test;

numFeatures = size(p_train,1);

layers0 = [ ...

% Input features

sequenceInputLayer([numFeatures,1,1],'name','input') % input layer

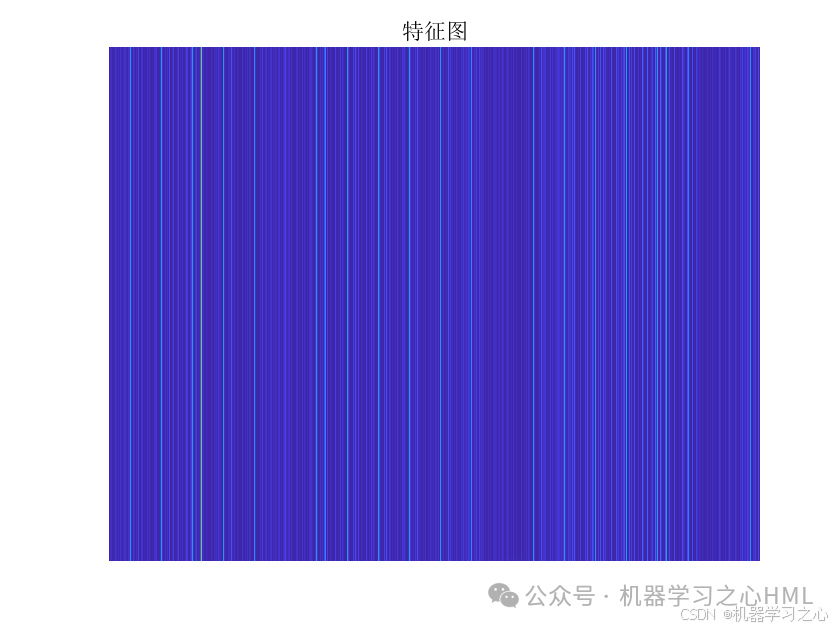

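sequenceFoldingLayer('name','fold') % sequence folding layer: apply the convolutions to each time step independently

% CNN feature extraction

convolution2dLayer([3,1],16,'Stride',[1,1],'name','conv1') % convolution layer: [3,1] filter size, 16 filters; Stride is the vertical and horizontal step

batchNormalizationLayer('name','batchnorm1') % batch normalization: speeds up training and helps against vanishing or exploding gradients

reluLayer('name','relu1') % ReLU activation: adds nonlinearity and helps keep gradients well behaved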

% Pooling layer

maxPooling2dLayer([2,1],'Stride',2,'Padding','same','name','maxpool') % max pooling: [2,1] window, stride 2, 'same' padding

% Unfolding layer

sequenceUnfoldingLayer('name','unfold') % restore the sequence structure after the per-step convolutions

% Flatten layer

flattenLayer('name','flatten')

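bilstmLayer(25,'Outputmode','last','name','hidden1') % BiLSTM layer with 25 hidden units; outputs only the last time step

dropoutLayer(0.1,'name','dropout_1') % dropout layer: drops inputs with probability 0.1

selfAttentionLayer(2,16,"Name","selfattention") % multi-head self-attention layer: 2 heads, 16 key/query channels

fullyConnectedLayer(1,'name','fullconnect') % fully connected layer; its size sets the output dimension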

regressionLayer('Name','output') ];

lgraph0 = layerGraph(layers0);

lgraph0 = connectLayers(lgraph0,'fold/miniBatchSize','unfold/miniBatchSize');
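
%% Training and evaluation (sketch; not part of the original listing)
% All option values below are assumptions chosen for illustration, not the author's settings.
% selfAttentionLayer needs Deep Learning Toolbox R2023a or later; whether this
% layer graph trains unchanged may depend on the installed release.

options0 = trainingOptions('adam', ... % Adam optimizer
    'MaxEpochs', 100, ... % assumed number of epochs
    'InitialLearnRate', 0.01, ... % assumed learning rate
    'Shuffle', 'every-epoch', ... % reshuffle the training data each epoch
    'Verbose', false); % no progress output in the command window

net = trainNetwork(trainD, targetD', lgraph0, options0); % responses passed as an M-by-1 vector

t_sim = predict(net, testD); % normalized predictions, N-by-1

T_sim = mapminmax('reverse', t_sim', ps_output); % back to the original scale, 1-by-N

rmse_test = sqrt(sum((T_sim - T_test).^2) / N); % root mean squared error on the test set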
