Paper Review

Uplift model with multiple treatments

1. Estimation and Inference of Heterogeneous Treatment Effects using Random Forest

二元干预情形下估计 τ ( x ) = E [ Y 1 − Y 0 ∣ X = x ] \tau(x)=E[Y^1-Y^0|X=x] τ(x)=E[Y1−Y0∣X=x]

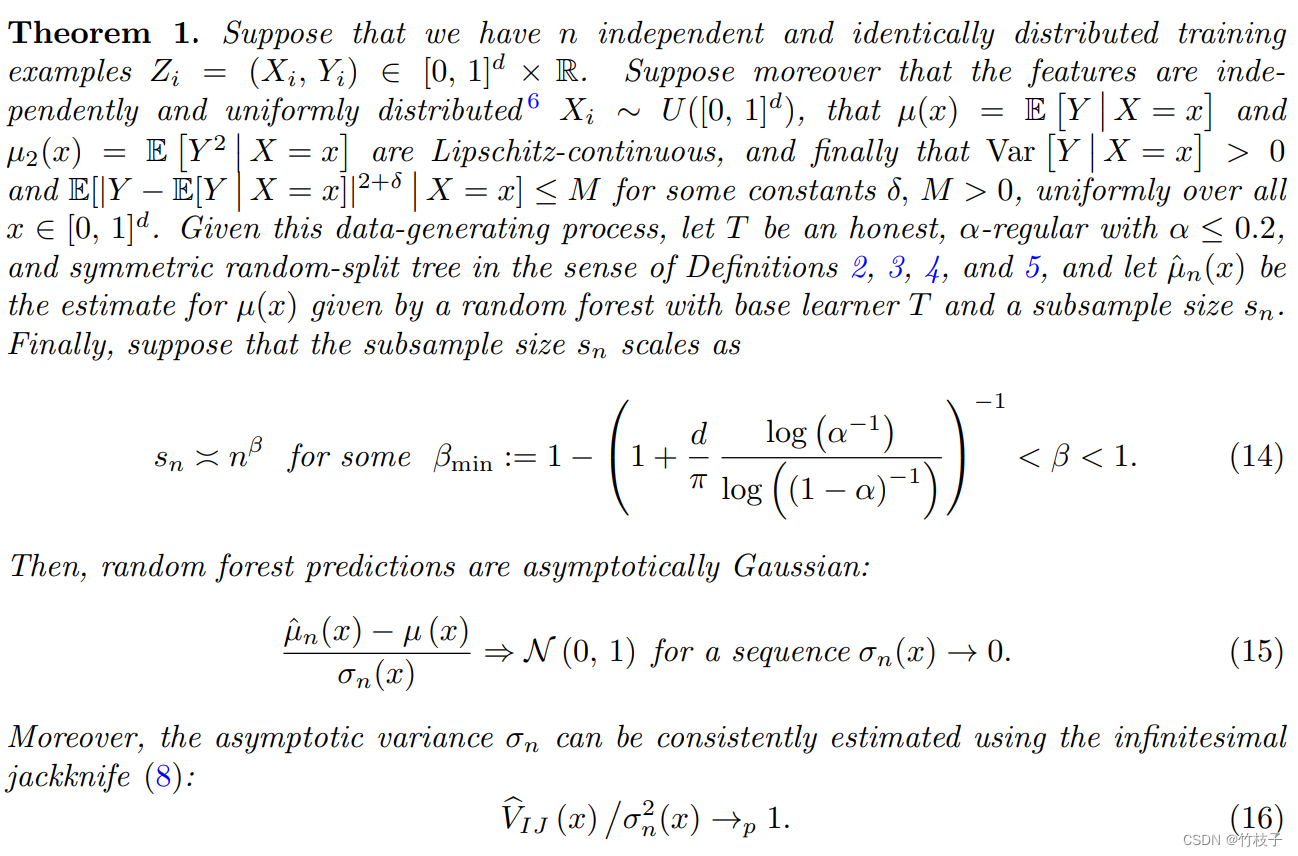

1.1 Asymptotic analysis

-

Under some condition,

( τ ^ ( x ) − τ ( x ) ) / Var [ τ ^ ( x ) ] ⇒ N ( 0 , 1 ) (\hat{\tau}(x)-\tau(x)) / \sqrt{\operatorname{Var}[\hat{\tau}(x)]} \Rightarrow \mathcal{N}(0,1) (τ^(x)−τ(x))/Var[τ^(x)]⇒N(0,1) -

Var [ τ ^ ( x ) ] \operatorname{Var}[\hat{\tau}(x)] Var[τ^(x)]可以用infinitesimal jackknife估计 V ^ I J ( x ) / Var [ τ ^ ( x ) ] → 1 \widehat{V}_{I J}(x) / \operatorname{Var}[\hat{\tau}(x)] \rightarrow 1 V IJ(x)/Var[τ^(x)]→1

V ^ I J ( x ) = n − 1 n ( n n − s ) 2 ∑ i = 1 n Cov ∗ [ τ ^ b ∗ ( x ) , N i b ∗ ] 2 \widehat{V}_{I J}(x)=\frac{n-1}{n}\left(\frac{n}{n-s}\right)^2 \sum_{i=1}^n \operatorname{Cov}_*\left[\hat{\tau}_b^*(x), N_{i b}^*\right]^2 V IJ(x)=nn−1(n−sn)2i=1∑nCov∗[τ^b∗(x),Nib∗]2

其中,系数项 ( n − 1 ) n / ( n − s ) 2 (n-1)n/(n-s)^2 (n−1)n/(n−s)2只能对无放回的子抽样做修正

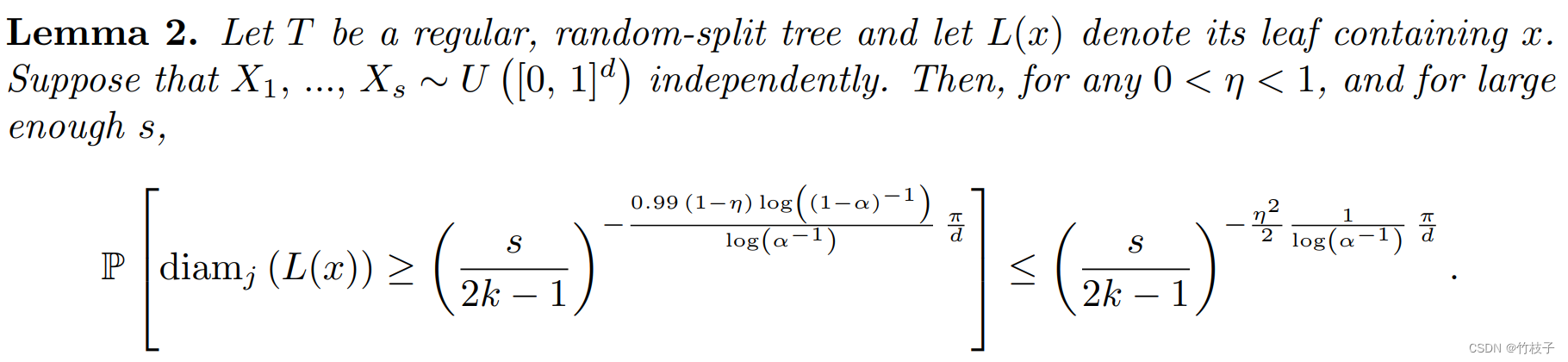

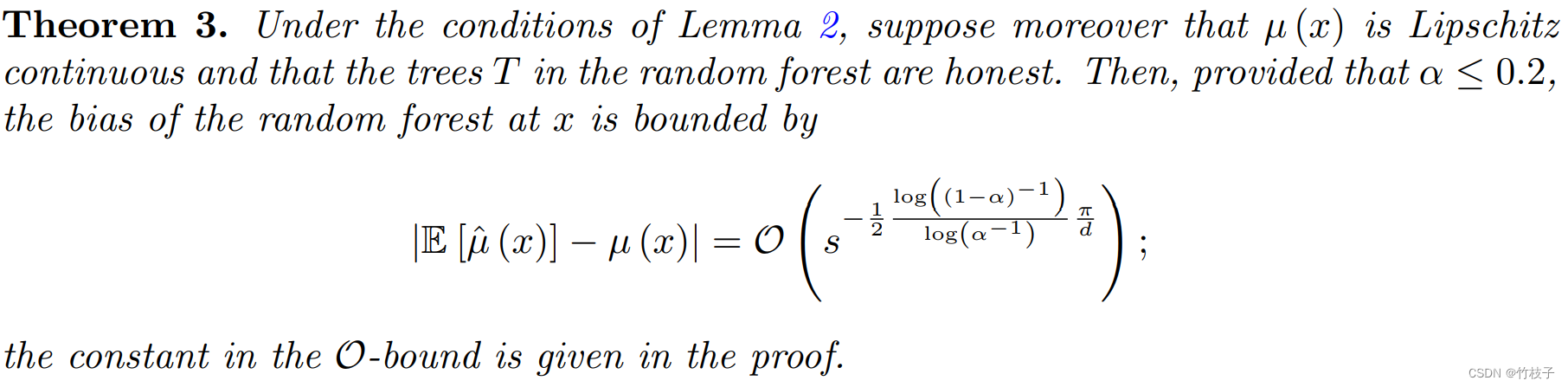

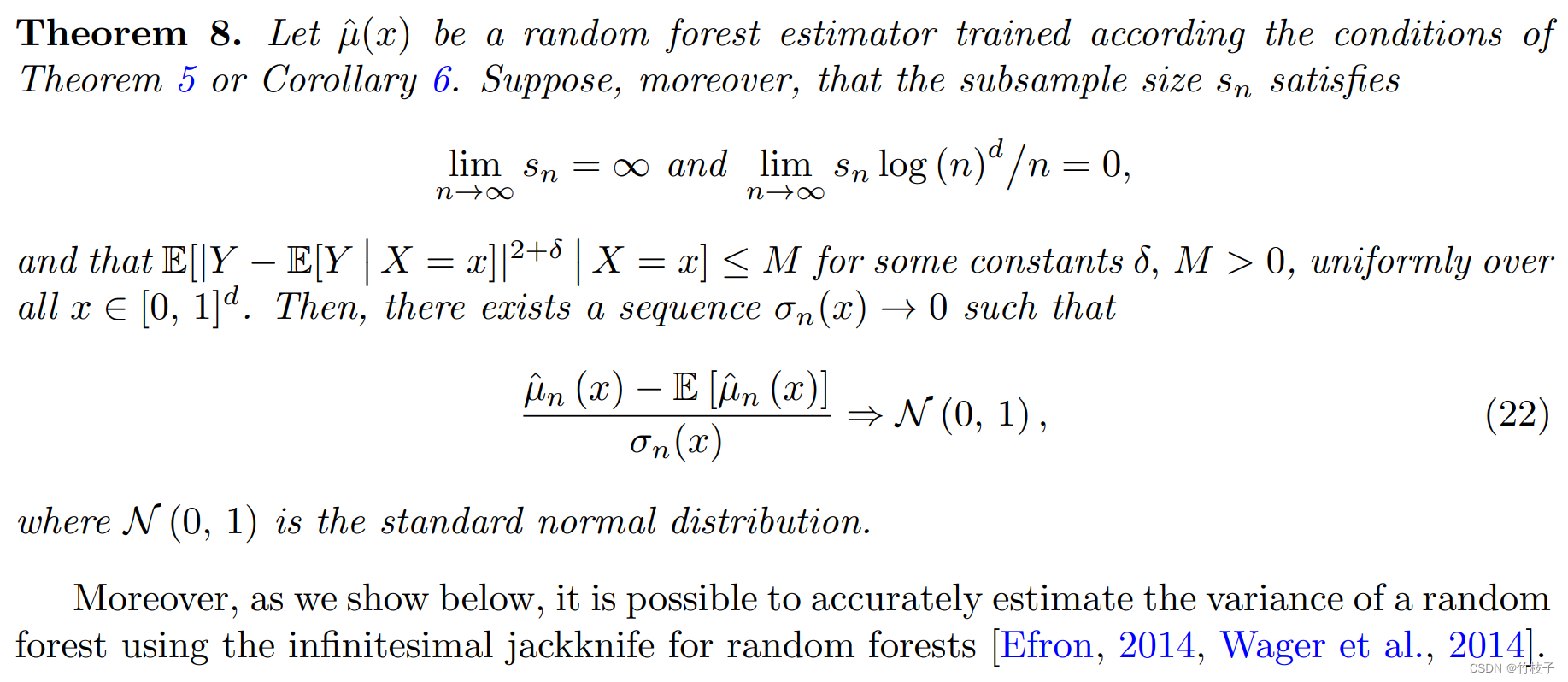

证明过程分为两步:

- 先证明偏差 E [ μ ^ n ( x ) − μ ( x ) ] E[\hat \mu_n(x)-\mu(x)] E[μ^n(x)−μ(x)]的bound

- 再证明 μ ^ n ( x ) − E [ μ ^ n ( x ) ] \hat \mu_n(x)-E[\hat \mu_n(x)] μ^n(x)−E[μ^n(x)]近似正态

利用Hajek projection和k-PNN先证明T is ν-incremental

T ∘ = E [ T ] + ∑ i = 1 n ( E [ T ∣ Z i ] − E [ T ] ) \stackrel{\circ}{T}=\mathbb{E}[T]+\sum_{i=1}^n\left(\mathbb{E}\left[T \mid Z_i\right]-\mathbb{E}[T]\right) T∘=E[T]+i=1∑n(E[T∣Zi]−E[T])

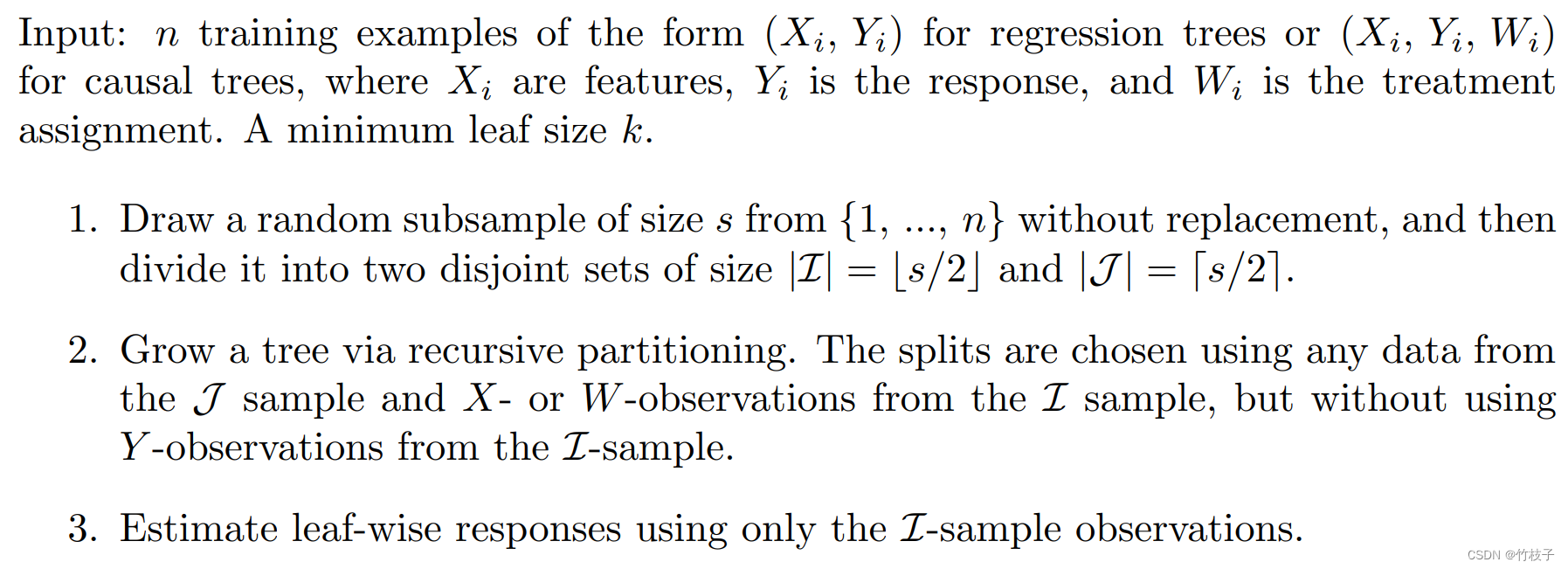

1.2 Double-Sample Trees

回归树T分裂准则为最小化MSE, μ ^ ( x ) = 1 ∣ { i : X i ∈ L ( x ) } ∣ ∑ { i : X i ∈ L ( x ) } Y i = Y ˉ L \hat{\mu}(x)=\frac{1}{\left|\left\{i: X_i \in L(x)\right\}\right|} \sum_{\left\{i: X_i \in L(x)\right\}} Y_i=\bar Y_L μ^(x)=∣{ i:Xi∈L(x)}∣1∑{ i:Xi∈L(x)}Yi=YˉL

∑ i ∈ J ( μ ^ ( X i ) − Y i ) 2 = ∑ i ∈ J Y i 2 − ∑ i ∈ J μ ^ ( X i ) 2 \sum_{i \in \mathcal{J}}\left(\hat{\mu}\left(X_i\right)-Y_i\right)^2=\sum_{i \in \mathcal{J}} Y_i^2-\sum_{i \in \mathcal{J}} \hat{\mu}\left(X_i\right)^2 i∈J∑(μ^(Xi)−Yi)2=i∈J∑Yi2−i∈J∑μ^(Xi)2

考虑到 ∑ i ∈ J μ ^ ( X i ) = ∑ i ∈ J Y i \sum_{i \in \mathcal{J}} \hat{\mu}\left(X_i\right)=\sum_{i \in \mathcal{J}} Y_i ∑i∈Jμ^(Xi)=∑i∈JYi,上式等价于最大化 μ ^ ( X i ) \hat{\mu}(X_i) μ^(Xi)的方差

2. Generalized Random Forests

2.1 Algorithm

1. Forest-based local estimation

目的:给定 ( O i , X i ) (O_i, X_i) (Oi,Xi), 估计 θ ( ⋅ ) \theta(\cdot) θ(⋅),如估计HTE时, O i = ( Y i , W i ) O_i=(Y_i, W_i) Oi=(Yi,Wi)。

方法:求解方程 E [ ψ θ ( x ) , v ( x ) ( O i ) ∣ X i = x ] = 0 \mathbb{E}\left[\psi_{\theta(x), v(x)}\left(O_i\right) \mid X_i=x\right]=0 E[ψθ(x),v(x)(Oi)∣Xi=x]=0,其中, θ ( x ) , v ( x ) \theta(x), v(x) θ(x),v(x)分别是感兴趣的参数和无关参数

- 权重估计阶段: α i ( x ) \alpha_i(x) αi(x)衡量 x i x_i xi和 x x x的相似程度,将同一叶子结点中的"“共现频率”"作为其权重

α b i ( x ) = 1 ( { X i ∈ L b ( x ) } ) ∣ L b ( x ) ∣ , α i ( x ) = 1 B ∑ b = 1 B α b i ( x ) \alpha_{b i}(x)=\frac{\mathbf{1}\left(\left\{X_i \in L_b(x)\right\}\right)}{\left|L_b(x)\right|}, \quad \alpha_i(x)=\frac{1}{B} \sum_{b=1}^B \alpha_{b i}(x) αbi(x)=∣Lb(x)∣1({ Xi∈Lb(x)}),αi(x)=B1b=1∑Bαbi(x)

其中 L b ( x ) L_b(x) Lb(x)为第b棵树 x x x所在叶子结点的所有数据 - 加权求解

( θ ^ ( x ) , v ^ ( x ) ) ∈ argmin θ , v { ∥ ∑ i = 1 n α i ( x ) ψ θ , v ( O i ) ∥ 2 } (\hat{\theta}(x), \hat{v}(x)) \in \underset{\theta, v}{\operatorname{argmin}}\left\{\left\|\sum_{i=1}^n \alpha_i(x) \psi_{\theta, v}\left(O_i\right)\right\|_2\right\} (θ^(x),v^(x))∈θ,vargmin{ i=1∑nαi(x)ψθ,v(Oi) 2}

例子:求解 μ ( x ) = E [ Y i ∣ X i = x ] = 0 \mu(x)=\mathbb{E}\left[Y_i \mid X_i=x\right]=0 μ(x)=E[Yi∣Xi=x]=0,取 ψ u ( x ) ( Y i ) = Y i − μ ( x ) \psi_{u(x)}\left(Y_i\right)=Y_i-\mu(x) ψu(x)(Yi)=Yi−μ(x),则有 ∑ i = 1 n 1 B ∑ α b i ( x ) ( Y i − μ ^ ( x ) ) = 0 \sum_{i=1}^n \frac{1}{B} \sum \alpha_{b i}(x)\left(Y_i-\hat{\mu}(x)\right)=0 ∑i=1nB1∑αbi(

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

779

779

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?