- This post is a translation of the WildML blog post "Implementing a CNN for Text Classification in TensorFlow".

- Blog post: http://www.wildml.com/2015/12/implementing-a-cnn-for-text-classification-in-tensorflow/

- GitHub repository: https://github.com/dennybritz/cnn-text-classification-tf

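In this post we will implement a model similar to Yoon Kim's Convolutional Neural Networks for Sentence Classification. The model presented in that paper achieves good classification performance across a range of text classification tasks (such as sentiment analysis) and has become a standard baseline for new text classification architectures.

I assume you are already familiar with the basics of convolutional neural networks applied to NLP. If not, I recommend first reading Understanding Convolutional Neural Networks for NLP to get the necessary background.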

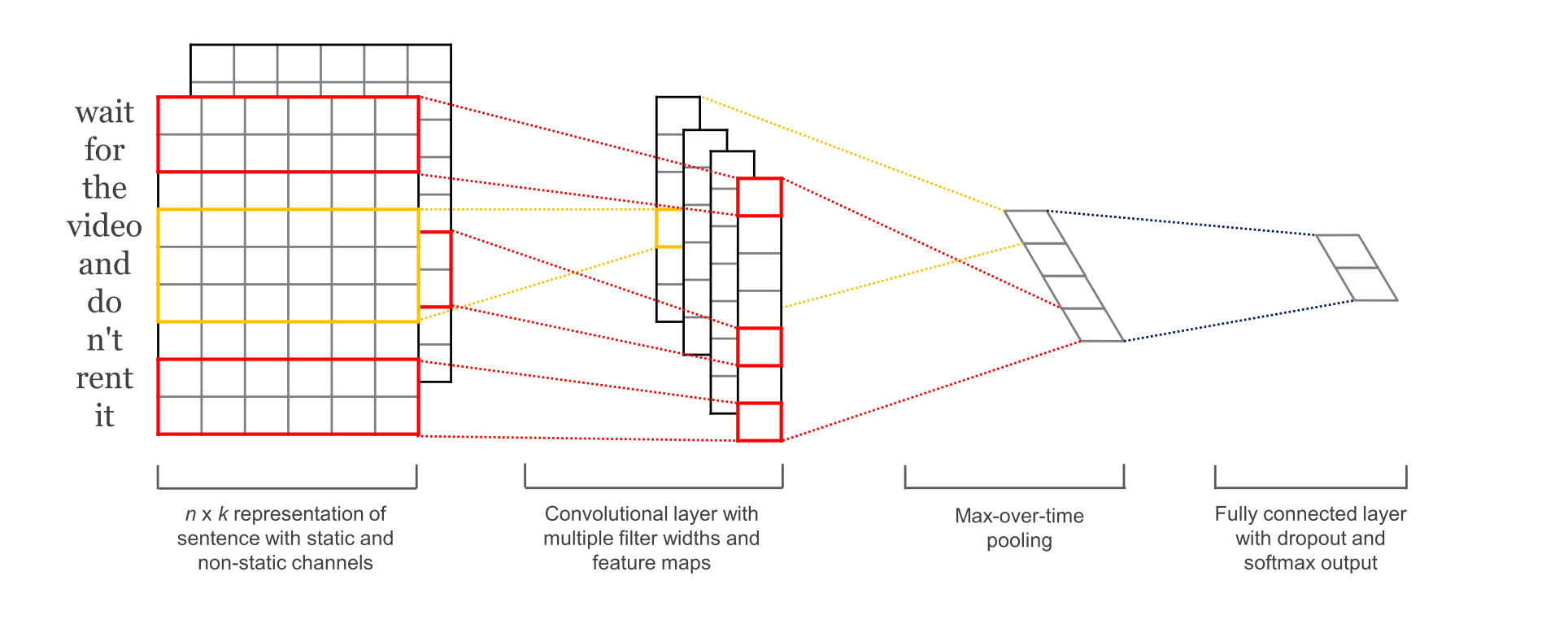

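The network we will build in this post looks roughly as follows:

The first layer embeds words into low-dimensional vectors. The next layer performs convolutions over the embedded word vectors using multiple filter sizes, for example sliding over 3, 4 or 5 words at a time. Next, we max-pool the result of each convolution and concatenate the pooled values into one long feature vector, add dropout regularization, and classify the result using a softmax layer.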
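To make the convolution and pooling steps concrete, here is a minimal pure-Python sketch on a toy sentence. It is not the TensorFlow implementation: the function name `narrow_conv_max_pool`, the toy sizes and the random weights are all assumptions chosen for illustration. It shows one filter per filter size sliding over the sentence without padding, a ReLU, and max-over-time pooling that keeps a single number per filter.

```python
import random


def narrow_conv_max_pool(embedded, filt, bias=0.0):
    """Slide one filter over a sentence (no padding), apply ReLU, max-pool over time.

    embedded: word vectors, shape [sequence_length][embedding_size]
    filt:     filter weights, shape [filter_size][embedding_size]
    Returns (feature_map, pooled_value).
    """
    seq_len, filter_size = len(embedded), len(filt)
    feature_map = []
    for start in range(seq_len - filter_size + 1):
        window = embedded[start:start + filter_size]
        activation = bias + sum(
            w * x
            for filt_row, word in zip(filt, window)
            for w, x in zip(filt_row, word)
        )
        feature_map.append(max(0.0, activation))  # ReLU
    return feature_map, max(feature_map)


# Toy data (hypothetical sizes): a 7-word sentence with 4-dimensional embeddings.
random.seed(0)
sequence_length, embedding_size = 7, 4
sentence = [[random.uniform(-1, 1) for _ in range(embedding_size)]
            for _ in range(sequence_length)]

features = []
for filter_size in (3, 4, 5):
    filt = [[random.uniform(-1, 1) for _ in range(embedding_size)]
            for _ in range(filter_size)]
    feature_map, pooled = narrow_conv_max_pool(sentence, filt)
    # Feature map length is sequence_length - filter_size + 1: 5, 4, 3.
    print(filter_size, len(feature_map))
    features.append(pooled)

# One pooled value per filter; the real model has num_filters of them per size.
print(len(features))
```

With `num_filters` filters per size instead of one, the concatenated vector would have `num_filters * len(filter_sizes)` entries, which is the feature vector fed to dropout and the softmax layer.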

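Because this is an educational post, I decided to simplify the model from the original paper:

- We will not use pre-trained word2vec vectors for our word embeddings. Instead, we learn the embeddings from scratch.

- We will not enforce L2 norm constraints on the weight vectors. A Sensitivity Analysis of (and Practitioners' Guide to) Convolutional Neural Networks for Sentence Classification found that the constraints had little effect on the end result.

- The original paper experiments with two input data channels: static and non-static word vectors. We use only one channel.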
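For readers unfamiliar with the L2 norm constraint that this simplified model leaves out, the following is a rough sketch of the idea: after an update, a weight vector whose L2 norm exceeds a threshold is rescaled back onto that threshold. The function name `clip_l2_norm` and the threshold values are illustrative assumptions, not code from the original post or repository.

```python
import math


def clip_l2_norm(weights, max_norm):
    """Rescale `weights` so that its L2 norm is at most `max_norm`."""
    norm = math.sqrt(sum(w * w for w in weights))
    if norm <= max_norm:
        return list(weights)  # already inside the constraint, leave unchanged
    scale = max_norm / norm
    return [w * scale for w in weights]


# A vector of norm 5 is shrunk to norm 1; a vector of norm 0.5 is untouched.
print(clip_l2_norm([3.0, 4.0], 1.0))
print(clip_l2_norm([0.3, 0.4], 3.0))
```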

Defining the network layers

# Note: this code is written against the TensorFlow 1.x API (tf.placeholder, tf.contrib).
import tensorflow as tf
import numpy as np


class TextCNN(object):
    """
    A CNN for text classification.
    Uses an embedding layer, followed by a convolutional, max-pooling and softmax layer.
    """

    def __init__(
            self, sequence_length, num_classes, vocab_size, embedding_size, filter_sizes, num_filters,
            l2_reg_lambda=0.0):
        '''
        :param sequence_length: The length of our sentences. Remember that we padded all our sentences to have the same length (59 for our data set).
        :param num_classes: Number of classes in the output layer, two in our case (positive and negative).
        :param vocab_size: The size of our vocabulary. This is needed to define the size of our embedding layer, which will have shape [vocabulary_size, embedding_size].
        :param embedding_size: The dimensionality of our embeddings.
        :param filter_sizes: The number of words we want our convolutional filters to cover. We will have num_filters for each size specified here.
            For example, [3, 4, 5] means that we will have filters that slide over 3, 4 and 5 words respectively, for a total of 3 * num_filters filters.
        :param num_filters: The number of filters per filter size (see above).
        :param l2_reg_lambda: Weight of the L2 regularization term added to the loss (0.0 disables it).
        '''
        # Placeholders for input, output and dropout
        self.input_x = tf.placeholder(tf.int32, [None, sequence_length], name="input_x")
        self.input_y = tf.placeholder(tf.float32, [None, num_classes], name="input_y")
        self.dropout_keep_prob = tf.placeholder(tf.float32, name="dropout_keep_prob")

        # Keeping track of l2 regularization loss (optional)
        l2_loss = tf.constant(0.0)

        # Embedding layer
        # W is the embedding matrix that we learn during training. We initialize it
        # from a random uniform distribution. tf.nn.embedding_lookup creates the
        # actual embedding operation.
        # The result of the embedding operation is a 3-dimensional tensor of shape
        # [None, sequence_length, embedding_size].
        # TensorFlow's conv2d operation expects a 4-dimensional tensor with dimensions
        # corresponding to batch, height, width and channel.
        # The result of our embedding has no channel dimension, so we add it manually,
        # leaving us with a tensor of shape [None, sequence_length, embedding_size, 1].
        with tf.device('/cpu:0'), tf.name_scope('embedding'):
            self.W = tf.Variable(tf.random_uniform([vocab_size, embedding_size], -1.0, 1.0))
            self.embedded_chars = tf.nn.embedding_lookup(self.W, self.input_x)
            self.embedded_chars_expanded = tf.expand_dims(self.embedded_chars, -1)

        # Create a convolution + maxpool layer for each filter size
        # We use filters of different sizes. Because each convolution produces a tensor
        # of a different shape, we iterate over the sizes, create a layer for each of
        # them, and then merge the results into one big feature vector.
        pooled_outputs = []
        for i, filter_size in enumerate(filter_sizes):
            with tf.name_scope('conv-maxpool-%s' % filter_size):
                # Convolution Layer
                filter_shape = [filter_size, embedding_size, 1, num_filters]
                W = tf.Variable(tf.truncated_normal(filter_shape, stddev=0.1), name='W')
                b = tf.Variable(tf.constant(0.1, shape=[num_filters]), name='b')
                # "VALID" padding means that we slide the filter over the sentence without
                # padding the edges, performing a narrow convolution. The output has shape
                # [batch_size, sequence_length - filter_size + 1, 1, num_filters].
                conv = tf.nn.conv2d(
                    self.embedded_chars_expanded,
                    W,
                    strides=[1, 1, 1, 1],
                    padding='VALID',
                    name='conv')
                # Apply nonlinearity
                h = tf.nn.relu(tf.nn.bias_add(conv, b), name='relu')
                # Maxpooling over the outputs
                # Max-pooling over the output of a specific filter size leaves us with a
                # tensor of shape [batch_size, 1, 1, num_filters]. This is essentially a
                # feature vector whose last dimension corresponds to our features.
                pooled = tf.nn.max_pool(
                    h,
                    ksize=[1, sequence_length - filter_size + 1, 1, 1],
                    strides=[1, 1, 1, 1],
                    padding='VALID',
                    name='pool'
                )
                pooled_outputs.append(pooled)

        # Combine all the pooled features
        num_filters_total = num_filters * len(filter_sizes)
        self.h_pool = tf.concat(pooled_outputs, 3)
        # Once we have the pooled output tensors from every filter size, we combine them
        # into one long feature vector of shape [batch_size, num_filters_total].
        # Using -1 in tf.reshape tells TensorFlow to infer that dimension (here, the batch size).
        self.h_pool_flat = tf.reshape(self.h_pool, [-1, num_filters_total])

        # Add dropout
        with tf.name_scope('dropout'):
            self.h_drop = tf.nn.dropout(self.h_pool_flat, self.dropout_keep_prob)

        # Final (unnormalized) scores and predictions
        # tf.nn.xw_plus_b is a convenience wrapper that performs the xW + b matrix multiplication.
        with tf.name_scope('output'):
            W = tf.get_variable('W', shape=[num_filters_total, num_classes],
                                initializer=tf.contrib.layers.xavier_initializer())
            b = tf.Variable(tf.constant(0.1, shape=[num_classes]), name='b')
            l2_loss += tf.nn.l2_loss(W)
            l2_loss += tf.nn.l2_loss(b)
            self.scores = tf.nn.xw_plus_b(self.h_drop, W, b, name='scores')
            self.predictions = tf.argmax(self.scores, 1, name='predictions')

        # Calculate mean cross-entropy loss
        with tf.name_scope('loss'):
            losses = tf.nn.softmax_cross_entropy_with_logits(logits=self.scores, labels=self.input_y)
            self.loss = tf.reduce_mean(losses) + l2_reg_lambda * l2_loss

        # Accuracy
        with tf.name_scope('accuracy'):
            correct_predictions = tf.equal(self.predictions, tf.argmax(self.input_y, 1))
            self.accuracy = tf.reduce_mean(tf.cast(correct_predictions, 'float'), name='accuracy')
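
The tensor shapes are the easiest part of this class to get wrong, so the following sketch traces them by hand. It does not run TensorFlow; the helper name `trace_shapes` is hypothetical, and the numbers plugged in (batch size 64, sentence length 59, embedding size 128, 128 filters of sizes 3, 4 and 5, two classes) are taken from the docstring above and the default flags of the training script.

```python
def trace_shapes(batch_size, sequence_length, embedding_size,
                 filter_sizes, num_filters, num_classes):
    """Return the expected shape of each tensor in TextCNN, computed by hand."""
    shapes = {}
    shapes["input_x"] = (batch_size, sequence_length)
    shapes["embedded_chars"] = (batch_size, sequence_length, embedding_size)
    shapes["embedded_chars_expanded"] = (batch_size, sequence_length, embedding_size, 1)
    for fs in filter_sizes:
        # VALID padding with stride 1: output = input - filter + 1 along each axis.
        conv_height = sequence_length - fs + 1
        conv_width = embedding_size - embedding_size + 1  # filter spans the full embedding
        shapes["conv-%d" % fs] = (batch_size, conv_height, conv_width, num_filters)
        # The pooling window covers the whole conv_height axis, leaving one value per filter.
        shapes["pool-%d" % fs] = (batch_size, 1, 1, num_filters)
    num_filters_total = num_filters * len(filter_sizes)
    shapes["h_pool"] = (batch_size, 1, 1, num_filters_total)  # concat on axis 3
    shapes["h_pool_flat"] = (batch_size, num_filters_total)
    shapes["scores"] = (batch_size, num_classes)
    return shapes


shapes = trace_shapes(batch_size=64, sequence_length=59, embedding_size=128,
                      filter_sizes=[3, 4, 5], num_filters=128, num_classes=2)
for name, shape in shapes.items():
    print(name, shape)
```

For these values the three convolution outputs have heights 57, 56 and 55, and the flattened feature vector has 3 * 128 = 384 entries per example.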

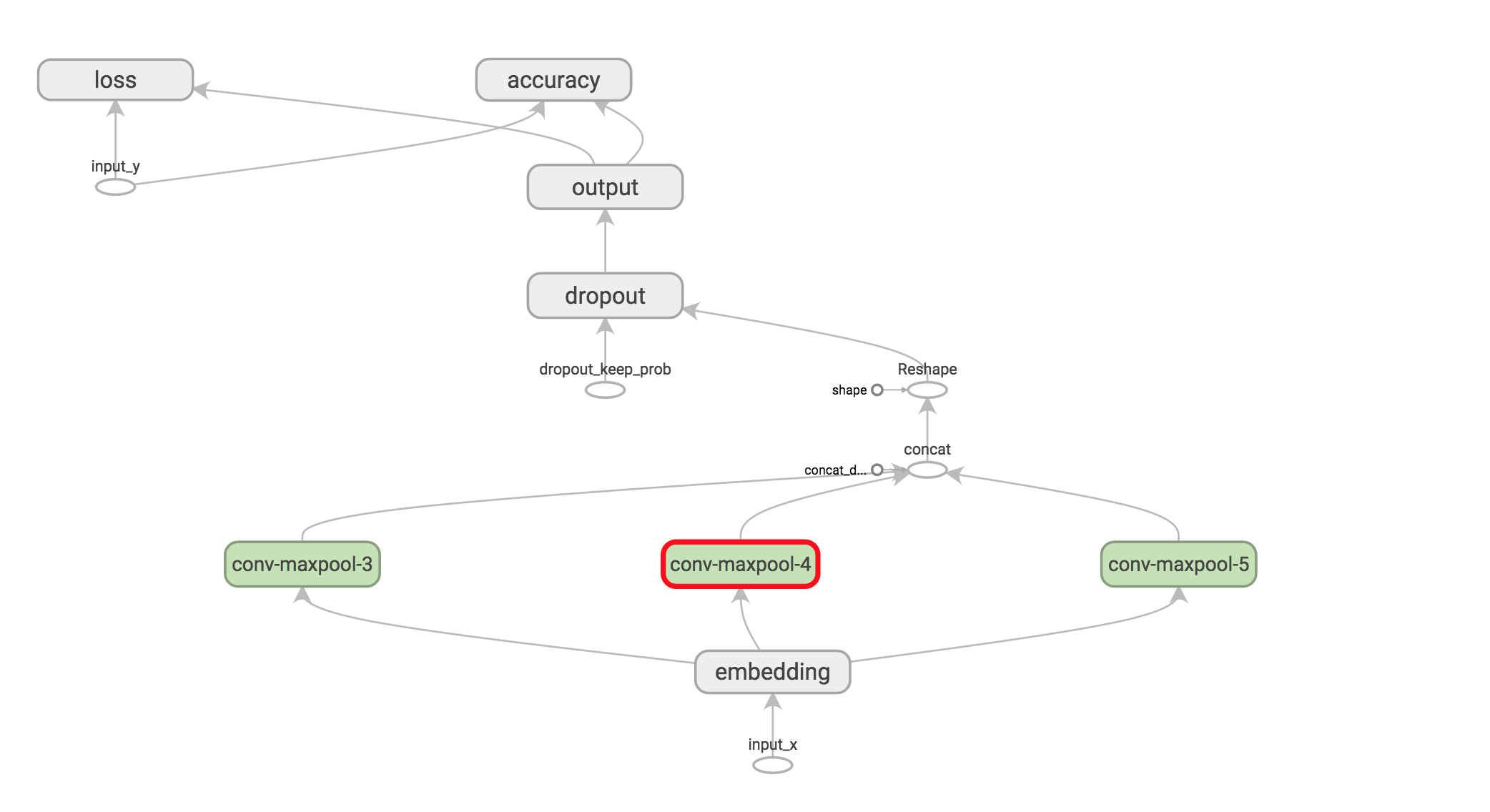

Visualizing the network

The training procedure
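
Training minimizes the mean softmax cross-entropy defined in `TextCNN` and reports accuracy alongside it. As a rough illustration of what those two numbers measure, here is a pure-Python sketch on made-up logits and one-hot labels. The helper names and the toy values are assumptions for illustration; the real computation is done by `tf.nn.softmax_cross_entropy_with_logits` and `tf.argmax`.

```python
import math


def softmax_cross_entropy(logits, one_hot_label):
    """Cross-entropy between softmax(logits) and a one-hot label for one example."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    log_probs = [z - log_sum_exp for z in logits]
    return -sum(y * lp for y, lp in zip(one_hot_label, log_probs))


def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=lambda i: values[i])


# Made-up scores for three examples and two classes.
scores = [[2.0, 0.5], [0.1, 1.2], [0.3, 0.2]]
labels = [[1, 0], [0, 1], [0, 1]]

losses = [softmax_cross_entropy(s, y) for s, y in zip(scores, labels)]
mean_loss = sum(losses) / len(losses)
accuracy = sum(argmax(s) == argmax(y) for s, y in zip(scores, labels)) / len(labels)

print(mean_loss)
print(accuracy)  # two of the three predictions match their labels
```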

import tensorflow as tf
import numpy as np
import os
import time
import datetime
import data_helpers
from text_cnn import TextCNN
from tensorflow.contrib import learn

# Parameters
# ==================================================

# Data loading params
tf.flags.DEFINE_float("dev_sample_percentage", .1, "Percentage of the training data to use for validation")
tf.flags.DEFINE_string("positive_data_file", "./data/rt-polaritydata/rt-polarity.pos", "Data source for the positive data.")
tf.flags.DEFINE_string("negative_data_file", "./data/rt-polaritydata/rt-polarity.neg", "Data source for the negative data.")

# Model Hyperparameters
tf.flags.DEFINE_integer("embedding_dim", 128, "Dimensionality of character embedding (default: 128)")
tf.flags.DEFINE_string("filter_sizes", "3,4,5", "Comma-separated filter sizes (default: '3,4,5')")
tf.flags.DEFINE_integer("num_filters", 128, "Number of filters per filter size (default: 128)")
tf.flags.DEFINE_float("dropout_keep_prob", 0.5, "Dropout keep probability (default: 0.5)")
tf.flags.DEFINE_float("l2_reg_lambda", 0.0, "L2 regularization lambda (default: 0.0)")

# Training parameters
tf.flags.DEFINE_integer("batch_size", 64, "Batch Size (default: 64)")
tf.flags.DEFINE_integer("num_epochs", 200, "Number of training epochs (default: 200)")
tf.flags.DEFINE_integer("evaluate_every", 100, "Evaluate model on dev set after this many steps (default: 100)")
tf.flags.DEFINE_integer("checkpoint_every", 100, "Save model after this many steps (default: 100)")
tf.flags.DEFINE_integer("num_checkpoints", 5, "Number of checkpoints to store (default: 5)")

# Misc Parameters
tf.flags.DEFINE_boolean("allow_soft_placement", True, "Allow device soft device placement")
tf.flags.DEFINE_boolean("log_device_placement", False, "Log placement of ops on devices")

FLAGS = tf.flags.FLAGS
# FLAGS._parse_flags()
# print("\nParameters:")
# for attr, value in sorted(FLAGS.__flags.items()):
#     print("{}={}".format(attr.upper(), value))
# print("")

def preprocess():
    # Data Preparation
    # ==================================================

    # Load data
    print("Loading data...")
    x_text, y = data_helpers.load_data_and_labels(FLAGS.positive_data_file, FLAGS.negative_data_file)

    # Build vocabulary
    max_document_length = max([len(x.split(" ")) for x in x_text])
    vocab_processor = learn.preprocessing.VocabularyProcessor(max_document_length)
    x = np.array(list(vocab_processor.fit_transform(x_text)))

    # Randomly shuffle data
    np.random.seed(10)
    shuffle_indices = np.random.permutation(np.arange(len(y)))
    x_shuffled = x[shuffle_indices]
    y_shuffled = y[shuffle_indices]

    # Split train/test set
    # TODO: This is very crude, should use cross-validation
    dev_sample_index = -1 * int(FLAGS.dev_sample_percentage * float(len(y)))
    x_train, x_dev = x_shuffled[:dev_sample_index], x_shuffled[dev_sample_index:]
    y_train, y_dev = y_shuffled[:dev_sample_index], y_shuffled[dev_sample_index:]

    del x, y, x_shuffled, y_shuffled

    print("Vocabulary Size: {:d}".format(len(vocab_processor.vocabulary_)))
    print("Train/Dev split: {:d}/{:d}".format(len(y_train), len(y_dev)))
    return x_train, y_train, vocab_processor, x_dev, y_dev

def train(x_train, y_train, vocab_processor, x_dev, y_dev):
    # Training
    # ==================================================

    with tf.Graph().as_default():
        session_conf = tf.ConfigProto(
            allow_soft_placement=FLAGS.allow_soft_placement,
            log_device_placement=FLAGS.log_device_placement)
        sess = tf.Session(config=session_conf)
        with sess.as_default():
            cnn = TextCNN(
                sequence_length=x_train.shape[1],
                num_classes=y_train.shape[1],
                vocab_size=len(vocab_processor.vocabulary_),
                embedding_size=FLAGS.embedding_dim,
                filter_sizes=list(map(int, FLAGS.filter_sizes.split(","))),
                num_filters=FLAGS.num_filters,
                l2_reg_lambda=FLAGS.l2_reg_lambda)

            # Define Training procedure
            global_step = tf.Variable(0, name="global_step", trainable=False)
            optimizer = tf.train.AdamOptimizer(1e-3)
            grads_and_vars = optimizer.compute_gradients(cnn.loss)
            train_op = optimizer.apply_gradients(grads_and_vars, global_step=global_step)

            # Keep track of gradient values and sparsity (optional)
            grad_summaries = []
            for g, v in grads_and_vars:
                if g is not None:
                    grad_hist_summary = tf.summary.histogram("{}/grad/hist".format(v.name), g)
                    sparsity_summary = tf.summary.scalar("{}/grad/sparsity".format(v.name), tf.nn.zero_fraction(g))
                    grad_summaries.append(grad_hist_summary)
                    grad_summaries.append(sparsity_summary)
            grad_summaries_merged = tf.summary.merge(grad_summaries)

            # Output directory for models and summaries
            timestamp = str(int(time.time()))
            out_dir = os.path.abspath(os.path.join(os.path.curdir, "runs", timestamp))
            print("Writing to {}\n".format(out_dir))

            # Summaries for loss and accuracy
            loss_summary = tf.summary.scalar("loss", cnn.loss)
            acc_summary = tf.summary.scalar("accuracy", cnn.accuracy)

            # Train Summaries
            train_summary_op = tf.summary.merge([loss_summary, acc_summary, grad_summaries_merged])
            train_summary_dir = os.path.join(out_dir, "summaries", "train")
            train_summary_writer = tf.summary.FileWriter(train_summary_dir, sess.graph)

            # Dev summaries
            dev_summary_op = tf.summary.merge([loss_summary, acc_summary])
            dev_summary_dir = os.path.join(out_dir, "summaries", "dev")
            dev_summary_writer = tf.summary.FileWriter(dev_summary_dir, sess.graph)

            # Checkpoint directory. Tensorflow assumes this directory already exists so we need to create it
            checkpoint_dir = os.path.abspath(os.path.join(out_dir, "checkpoints"))
            checkpoint_prefix = os.path.join(checkpoint_dir, "model")
            if not os.path.exists(checkpoint_dir):
                os.makedirs(checkpoint_dir)
            saver = tf.train.Saver(tf.global_variables(), max_to_keep=FLAGS.num_checkpoints)

            # Write vocabulary
            vocab_processor.save(os.path.join(out_dir, "vocab"))

            # Initialize all variables
            sess.run(tf.global_variables_initializer())

            def train_step(x_batch, y_batch):
                """
                A single training step
                """
                feed_dict = {
                    cnn.input_x: x_batch,
                    cnn.input_y: y_batch,
                    cnn.dropout_keep_prob: FLAGS.dropout_keep_prob
                }
                _, step, summaries, loss, accuracy = sess.run(
                    [train_op, global_step, train_summary_op, cnn.loss, cnn.accuracy],
                    feed_dict)
                time_str = datetime.datetime.now().isoformat()
                print("{}: step {}, loss {:g}, acc {:g}".format(time_str, step, loss, accuracy))
                train_summary_writer.add_summary(summaries, step)

            def dev_step(x_batch, y_batch, writer=None):
                """
                Evaluates model on a dev set
                """
                feed_dict = {
                    cnn.input_x: x_batch,
                    cnn.input_y: y_batch,
                    cnn.dropout_keep_prob: 1.0
                }
                step, summaries, loss, accuracy = sess.run(
                    [global_step, dev_summary_op, cnn.loss, cnn.accuracy],
                    feed_dict)
                time_str = datetime.datetime.now().isoformat()
                print("{}: step {}, loss {:g}, acc {:g}".format(time_str, step, loss, accuracy))
                if writer:
                    writer.add_summary(summaries, step)

            # Generate batches
            batches = data_helpers.batch_iter(
                list(zip(x_train, y_train)), FLAGS.batch_size, FLAGS.num_epochs)
            # Training loop. For each batch...
            for batch in batches:
                x_batch, y_batch = zip(*batch)
                train_step(x_batch, y_batch)
                current_step = tf.train.global_step(sess, global_step)
                if current_step % FLAGS.evaluate_every == 0:
                    print("\nEvaluation:")
                    dev_step(x_dev, y_dev, writer=dev_summary_writer)
                    print("")
                if current_step % FLAGS.checkpoint_every == 0:
                    path = saver.save(sess, checkpoint_prefix, global_step=current_step)
                    print("Saved model checkpoint to {}\n".format(path))

def main(argv=None):
    x_train, y_train, vocab_processor, x_dev, y_dev = preprocess()
    train(x_train, y_train, vocab_processor, x_dev, y_dev)


if __name__ == '__main__':
    tf.app.run()
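
The training script relies on `data_helpers.batch_iter`, whose source is not shown in this post. The sketch below is one plausible way such a helper could work, together with the negative-index train/dev split used in `preprocess()`. The names `batch_iter_sketch` and `split_train_dev`, the `seed` argument and the toy data are assumptions for illustration, not the actual `data_helpers` code.

```python
import random


def split_train_dev(examples, dev_fraction):
    """Hold out the last dev_fraction of the (already shuffled) examples.

    Mirrors the negative-index slicing in preprocess(). Note that if the
    fraction rounds down to zero examples, the index becomes 0 and the
    training slice ends up empty.
    """
    dev_index = -1 * int(dev_fraction * float(len(examples)))
    return examples[:dev_index], examples[dev_index:]


def batch_iter_sketch(data, batch_size, num_epochs, shuffle=True, seed=None):
    """Yield mini-batches for num_epochs passes, reshuffling at each epoch."""
    rng = random.Random(seed)
    data = list(data)
    for _ in range(num_epochs):
        order = list(range(len(data)))
        if shuffle:
            rng.shuffle(order)
        for start in range(0, len(data), batch_size):
            # The last batch of an epoch may be smaller than batch_size.
            yield [data[i] for i in order[start:start + batch_size]]


# Toy (x, y) pairs standing in for the padded sentences and one-hot labels.
pairs = list(zip(range(10), "abcdefghij"))
train_pairs, dev_pairs = split_train_dev(pairs, 0.2)

batches = list(batch_iter_sketch(train_pairs, batch_size=3, num_epochs=2, seed=0))
print(len(train_pairs), len(dev_pairs))  # 8 2
print([len(b) for b in batches])         # [3, 3, 2, 3, 3, 2]

# Each batch is unpacked the same way as in the training loop.
x_batch, y_batch = zip(*batches[0])
```

Because the generator runs through every epoch in sequence, the total number of training steps is the number of batches per epoch times `num_epochs`, which is why the training loop can simply iterate over it once.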

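This post, translated from the WildML blog, shows how to implement a CNN for text classification in TensorFlow. It follows Yoon Kim's model, which performs well on text classification tasks. The network we build consists of word embeddings, convolutional filters of different sizes, max-pooling and a softmax layer. Although it simplifies the original model (no pre-trained word2vec vectors, no L2 norm constraints on the weights, a single input channel), it still demonstrates how CNNs can be applied to NLP.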
