Introduction

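This is a simple three-layer fully connected network. In a fully connected network, every node in a layer is connected to every node in the previous layer, as shown below:

$h_1$ and $h_2$ are each connected to $i_1$ and $i_2$; $o_1$ and $o_2$ are each connected to $h_1$ and $h_2$.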
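Because every hidden node sees every input, the first-layer computation can be written as a single matrix-vector product. Below is a minimal NumPy sketch. It assumes the weights are stored row-wise as `W1 = [[w1, w2], [w3, w4]]`; that layout and the name `W1` are our own choices, not the author's. It uses the initial values from the code section:

```python
import numpy as np

# Inputs i1, i2 (values from the code section)
x = np.array([0.05, 0.10])
# Assumed layout: row k holds the weights into hidden node k,
# so h1 <- (w1, w2) and h2 <- (w3, w4)
W1 = np.array([[0.15, 0.20],
               [0.25, 0.30]])
b1 = 0.35  # one bias shared by both hidden nodes, as in the text

# Each entry of net_h is a weighted sum over *all* inputs: that is what "fully connected" means
net_h = W1 @ x + b1
print(net_h)  # [0.3775 0.3925]
```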

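Calculation steps

Initial state: inputs $i_1 = 0.05$, $i_2 = 0.10$; target outputs $0.01$ and $0.99$; weights $w_1 = 0.15$, $w_2 = 0.20$, $w_3 = 0.25$, $w_4 = 0.30$, $w_5 = 0.40$, $w_6 = 0.45$, $w_7 = 0.50$, $w_8 = 0.55$; biases $b_1 = 0.35$, $b_2 = 0.60$ (the same values are used in the code below).

Forward pass:

From the initial-state diagram we obtain the following formulas.

From the first layer (input) to the second layer (hidden):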

$$
\left\{ \begin{array}{l} net_{h1}=w_1\times i_1+w_2\times i_2+b_1\\ net_{h2}=w_3\times i_1+w_4\times i_2+b_1 \end{array} \right.
$$

Applying the sigmoid activation function gives $out_{h1}$ and $out_{h2}$:

$$
\left\{ \begin{array}{l} out_{h1}=\frac{1}{1+e^{-net_{h1}}}\\ out_{h2}=\frac{1}{1+e^{-net_{h2}}} \end{array} \right.
$$
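The activation used here is the logistic sigmoid. The backward pass later relies on its derivative in the form $\sigma'(x)=\sigma(x)(1-\sigma(x))$, which shows up as the `o1 * (1 - o1)` and `h1 * (1 - h1)` terms in the code section. The sketch below implements both functions and checks the derivative against a finite difference. The function names are our own illustrative choices:

```python
import math

def sigmoid(x: float) -> float:
    """Logistic sigmoid 1 / (1 + e^(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad_from_output(s: float) -> float:
    """Sigmoid derivative written in terms of its output s = sigmoid(x)."""
    return s * (1.0 - s)

# Compare the closed form with a central finite difference at x = net_h1 = 0.3775
x, eps = 0.3775, 1e-6
numeric = (sigmoid(x + eps) - sigmoid(x - eps)) / (2 * eps)
analytic = sigmoid_grad_from_output(sigmoid(x))
print(sigmoid(x), analytic, numeric)
```

Expressing the derivative through the already computed output is why the backward pass never needs to call `exp` again.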

From the second layer (hidden) to the third layer (output):

$$
\left\{ \begin{array}{l} net_{o1}=w_5\times out_{h1}+w_6\times out_{h2}+b_2\\ net_{o2}=w_7\times out_{h1}+w_8\times out_{h2}+b_2 \end{array} \right.
$$

Applying the activation function again gives $out_{o1}$ and $out_{o2}$:

$$
\left\{ \begin{array}{l} out_{o1}=\frac{1}{1+e^{-net_{o1}}}\\ out_{o2}=\frac{1}{1+e^{-net_{o2}}} \end{array} \right.
$$
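Putting the four formulas together, the whole forward pass takes only a few lines. Below is a sketch in plain Python. The function name `forward` and the dictionary layout of the weights are illustrative choices, not taken from the author's code:

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def forward(i1, i2, w, b1, b2):
    """Forward pass of the 2-2-2 network; w maps 'w1'..'w8' to weights."""
    net_h1 = w["w1"] * i1 + w["w2"] * i2 + b1
    net_h2 = w["w3"] * i1 + w["w4"] * i2 + b1
    out_h1, out_h2 = sigmoid(net_h1), sigmoid(net_h2)
    net_o1 = w["w5"] * out_h1 + w["w6"] * out_h2 + b2
    net_o2 = w["w7"] * out_h1 + w["w8"] * out_h2 + b2
    return out_h1, out_h2, sigmoid(net_o1), sigmoid(net_o2)

weights = {"w1": 0.15, "w2": 0.20, "w3": 0.25, "w4": 0.30,
           "w5": 0.40, "w6": 0.45, "w7": 0.50, "w8": 0.55}
result = forward(0.05, 0.10, weights, b1=0.35, b2=0.60)
print(result)
```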

Substituting the initial values into these formulas gives the following values.

Taking $out_{h1}$ as an example:

$$
\left\{ \begin{array}{l} net_{h1}=w_1\times i_1+w_2\times i_2+b_1 =0.15\times 0.05+0.2\times 0.1+0.35 = 0.3775\\ out_{h1}=\frac{1}{1+e^{-0.3775}} = 0.593269992 \end{array} \right.
$$

Similarly, $out_{h2} = 0.596884378$.
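The text skips the arithmetic for $out_{h2}$. With $w_3 = 0.25$ and $w_4 = 0.30$ (the values from the code section), it works out as:

$$
\left\{ \begin{array}{l} net_{h2}=w_3\times i_1+w_4\times i_2+b_1 =0.25\times 0.05+0.3\times 0.1+0.35 = 0.3925\\ out_{h2}=\frac{1}{1+e^{-0.3925}} = 0.596884378 \end{array} \right.
$$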

Taking $out_{o1}$ as an example:

$$
\left\{ \begin{array}{l} net_{o1}=w_5\times out_{h1}+w_6\times out_{h2}+b_2 =0.4\times 0.593269992+0.45\times 0.596884378+0.6 = 1.105905967\\ out_{o1}=\frac{1}{1+e^{-1.105905967}} = 0.75136507 \end{array} \right.
$$

Similarly, $out_{o2} = 0.772928465$.
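The arithmetic for $out_{o2}$ is also skipped. With $w_7 = 0.5$ and $w_8 = 0.55$ (the values from the code section), it works out as:

$$
\left\{ \begin{array}{l} net_{o2}=w_7\times out_{h1}+w_8\times out_{h2}+b_2 =0.5\times 0.593269992+0.55\times 0.596884378+0.6 = 1.224921404\\ out_{o2}=\frac{1}{1+e^{-1.224921404}} = 0.772928465 \end{array} \right.
$$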

The results differ noticeably from the expected outputs (0.01 and 0.99), so the parameters need to be updated.
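The text does not spell out how this gap is measured. The code section uses the total squared error `E_total`, which for this first forward pass evaluates to:

$$
E_{total}=\frac{1}{2}(0.01-out_{o1})^2+\frac{1}{2}(0.99-out_{o2})^2 =\frac{1}{2}(0.01-0.75136507)^2+\frac{1}{2}(0.99-0.772928465)^2 \approx 0.274811083+0.023560026 = 0.298371109
$$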

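Backward pass (updating the parameters):

$\eta$ is the learning rate, and its value has to be chosen with care. If it is too large, the model may fail to converge. If it is too small, the model converges very slowly or barely learns at all.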
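To show why the learning rate matters, here is a toy illustration that does not use the network itself. It runs plain gradient descent on $f(x)=x^2$, where each step multiplies $x$ by $(1-2\eta)$. The loss function, the step count, and the three $\eta$ values are assumptions chosen only to expose the three regimes:

```python
def descend(lr: float, steps: int = 20, x0: float = 1.0) -> float:
    """Gradient descent on the toy loss f(x) = x**2, whose gradient is 2x."""
    x = x0
    for _ in range(steps):
        x = x - lr * 2 * x  # x_{t+1} = x_t - lr * f'(x_t)
    return x

for lr in (1.1, 0.4, 0.001):
    print(lr, descend(lr))
```

With $\eta = 1.1$ the iterate grows in magnitude every step (divergence). With $\eta = 0.4$ it reaches the minimum at 0 almost immediately. With $\eta = 0.001$ it has barely moved after 20 steps.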

Here the learning rate is set to $\eta = 0.5$.
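The text does not write out the update formulas for the output-layer weights. The derivation below reconstructs them from the code section (`Etotal_O1 * Eo1_neto1 * neto1_w5`). The chain rule for $w_5$ gives the following, and $w_6$, $w_7$, $w_8$ follow the same pattern:

$$
\begin{aligned}
\frac{\partial E_{total}}{\partial w_5} &= \frac{\partial E_{total}}{\partial out_{o1}}\times\frac{\partial out_{o1}}{\partial net_{o1}}\times\frac{\partial net_{o1}}{\partial w_5} = (out_{o1}-0.01)\times out_{o1}(1-out_{o1})\times out_{h1}\\
&= 0.74136507\times 0.186815602\times 0.593269992 \approx 0.082167041\\
w_5^{+} &= w_5-\eta\frac{\partial E_{total}}{\partial w_5} = 0.4-0.5\times 0.082167041 \approx 0.35891648
\end{aligned}
$$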

Updating parameters $w_1$, $w_2$, $w_3$, $w_4$
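The hidden-layer formulas are also missing from the text; the derivation below follows the code section. Because $out_{h1}$ feeds both outputs, its error term sums a contribution from $o_1$ (through $w_5$) and one from $o_2$ (through $w_7$). Both use the weights from before the update. For $w_1$:

$$
\begin{aligned}
\frac{\partial E_{total}}{\partial out_{h1}} &= (out_{o1}-0.01)\,out_{o1}(1-out_{o1})\,w_5+(out_{o2}-0.99)\,out_{o2}(1-out_{o2})\,w_7 \approx 0.055399425-0.019049119 = 0.036350306\\
\frac{\partial E_{total}}{\partial w_1} &= \frac{\partial E_{total}}{\partial out_{h1}}\times out_{h1}(1-out_{h1})\times i_1 \approx 0.036350306\times 0.241300709\times 0.05 \approx 0.000438568\\
w_1^{+} &= w_1-\eta\frac{\partial E_{total}}{\partial w_1} = 0.15-0.5\times 0.000438568 \approx 0.149780716
\end{aligned}
$$

For $w_3$ and $w_4$, replace $out_{h1}$ with $out_{h2}$ and replace $w_5$, $w_7$ with $w_6$, $w_8$.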

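This completes one round of backpropagation. Running the forward pass again with the updated parameters gives the new actual output: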

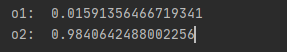

After repeating this process 10,000 times, the output is [0.01591356466719341, 0.9840642488002256], which is already very close to the expected output [0.01, 0.99].

Code

```python
import numpy as np

# Inputs:          i1 = 0.05; i2 = 0.10
# Target outputs:  out1 = 0.01; out2 = 0.99
# Input -> hidden: w1 = 0.15; w2 = 0.20; w3 = 0.25; w4 = 0.30; b1 = 0.35
# Hidden -> output: w5 = 0.4; w6 = 0.45; w7 = 0.5; w8 = 0.55; b2 = 0.6


def cacu():
    i1 = 0.05; i2 = 0.10
    out1 = 0.01; out2 = 0.99
    w1 = 0.15; w2 = 0.20; w3 = 0.25; w4 = 0.30; b1 = 0.35
    w5 = 0.4; w6 = 0.45; w7 = 0.5; w8 = 0.55; b2 = 0.6
    # The biases b1 and b2 stay fixed; only w1..w8 are trained (learning rate 0.5).

    for i in range(10000):
        # Forward pass: input layer -> hidden layer (sigmoid)
        net_h1 = w1 * i1 + w2 * i2 + b1
        net_h2 = w3 * i1 + w4 * i2 + b1
        h1 = 1 / (np.exp(-net_h1) + 1)
        h2 = 1 / (np.exp(-net_h2) + 1)

        # Forward pass: hidden layer -> output layer (sigmoid)
        net_o1 = w5 * h1 + w6 * h2 + b2
        net_o2 = w7 * h1 + w8 * h2 + b2
        o1 = 1 / (np.exp(-net_o1) + 1)
        o2 = 1 / (np.exp(-net_o2) + 1)
        print("o1, o2:", o1, o2)

        # Total squared error
        E_total = (o1 - out1) * (o1 - out1) * 0.5 + (o2 - out2) * (o2 - out2) * 0.5
        print("total:", E_total)

        # Output-layer weights w5, w6 (both feed o1)
        Etotal_O1 = (out1 - o1) * -1   # dE_total / d out_o1
        Eo1_neto1 = o1 * (1 - o1)      # d out_o1 / d net_o1 (sigmoid derivative)
        neto1_w5 = h1                  # d net_o1 / d w5
        change_w5 = Etotal_O1 * Eo1_neto1 * neto1_w5
        w5_new = w5 - change_w5 * 0.5
        print("w5: ", w5_new)

        neto1_w6 = h2                  # d net_o1 / d w6
        change_w6 = Etotal_O1 * Eo1_neto1 * neto1_w6
        w6_new = w6 - change_w6 * 0.5
        print("w6: ", w6_new)

        # Output-layer weights w7, w8 (both feed o2)
        Etotal_O2 = (out2 - o2) * -1   # dE_total / d out_o2
        Eo2_neto2 = o2 * (1 - o2)      # d out_o2 / d net_o2
        neto2_w7 = h1
        change_w7 = Etotal_O2 * Eo2_neto2 * neto2_w7
        w7_new = w7 - change_w7 * 0.5
        print("w7: ", w7_new)

        neto2_w8 = h2
        change_w8 = Etotal_O2 * Eo2_neto2 * neto2_w8
        w8_new = w8 - change_w8 * 0.5
        print("w8: ", w8_new)

        # Hidden-layer weights w1, w2: dE_total / d out_h1 sums the error
        # arriving from o1 (via the old w5) and from o2 (via the old w7)
        E01_outh1 = Etotal_O1 * Eo1_neto1 * w5
        print("E01_outh1 : ", E01_outh1)
        E02_outh1 = Etotal_O2 * Eo2_neto2 * w7
        print("E02_outh1 : ", E02_outh1)
        Etotal_outh1 = E01_outh1 + E02_outh1
        print("Etotal_outh1 : ", Etotal_outh1)

        outh1_neth1 = h1 * (1 - h1)    # d out_h1 / d net_h1
        print("outh1_neth1: ", outh1_neth1)

        neth1_w1 = i1                  # d net_h1 / d w1
        Etotal_w1 = Etotal_outh1 * outh1_neth1 * neth1_w1
        print("Etotal_w1:", Etotal_w1)
        w1_new = w1 - 0.5 * Etotal_w1
        print("w1_new:", w1_new)

        neth1_w2 = i2                  # d net_h1 / d w2
        Etotal_w2 = Etotal_outh1 * outh1_neth1 * neth1_w2
        print("Etotal_w2:", Etotal_w2)
        w2_new = w2 - 0.5 * Etotal_w2
        print("w2_new:", w2_new)

        # Hidden-layer weights w3, w4: same idea through out_h2 (via the old w6, w8)
        E01_outh2 = Etotal_O1 * Eo1_neto1 * w6
        print("E01_outh2 : ", E01_outh2)
        E02_outh2 = Etotal_O2 * Eo2_neto2 * w8
        print("E02_outh2 : ", E02_outh2)
        Etotal_outh2 = E01_outh2 + E02_outh2
        print("Etotal_outh2 : ", Etotal_outh2)

        outh2_neth2 = h2 * (1 - h2)    # d out_h2 / d net_h2
        print("outh2_neth2: ", outh2_neth2)

        neth2_w3 = i1                  # d net_h2 / d w3
        Etotal_w3 = Etotal_outh2 * outh2_neth2 * neth2_w3
        print("Etotal_w3:", Etotal_w3)
        w3_new = w3 - 0.5 * Etotal_w3
        print("w3_new:", w3_new)

        neth2_w4 = i2                  # d net_h2 / d w4
        Etotal_w4 = Etotal_outh2 * outh2_neth2 * neth2_w4
        print("Etotal_w4:", Etotal_w4)
        w4_new = w4 - 0.5 * Etotal_w4
        print("w4_new:", w4_new)

        # Apply all updates together, after every gradient has been computed
        w1 = w1_new; w2 = w2_new; w3 = w3_new; w4 = w4_new
        w5 = w5_new; w6 = w6_new; w7 = w7_new; w8 = w8_new

    print("o1: ", o1)
    print("o2: ", o2)


def main():
    cacu()


if __name__ == "__main__":
    main()
```
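The same algorithm can also be written in matrix form, which scales to wider layers without adding a variable per weight. Below is a vectorized NumPy sketch. The function name `train` and the row-wise weight layout (`W1[j, m]` is the weight from input `m` to hidden node `j`) are our own assumptions. As in `cacu()`, the biases are not trained:

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train(steps=10000, lr=0.5):
    x = np.array([0.05, 0.10])          # i1, i2
    t = np.array([0.01, 0.99])          # target outputs
    W1 = np.array([[0.15, 0.20],        # rows: h1 <- (w1, w2), h2 <- (w3, w4)
                   [0.25, 0.30]])
    W2 = np.array([[0.40, 0.45],        # rows: o1 <- (w5, w6), o2 <- (w7, w8)
                   [0.50, 0.55]])
    b1, b2 = 0.35, 0.60                 # biases stay fixed, as in cacu()
    for _ in range(steps):
        h = sigmoid(W1 @ x + b1)        # out_h1, out_h2
        o = sigmoid(W2 @ h + b2)        # out_o1, out_o2
        delta_o = (o - t) * o * (1 - o)              # dE/d net_o per output
        delta_h = (W2.T @ delta_o) * h * (1 - h)     # dE/d net_h, using the old W2
        W2 -= lr * np.outer(delta_o, h)  # dE/dW2[k, j] = delta_o[k] * h[j]
        W1 -= lr * np.outer(delta_h, x)  # dE/dW1[j, m] = delta_h[j] * x[m]
    o = sigmoid(W2 @ sigmoid(W1 @ x + b1) + b2)      # output with the final weights
    return W1, W2, o

W1, W2, o = train()
print(o)
```

`train(steps=1)` returns the weights after a single backward pass. Those can be compared with the `w1_new` and `w5_new` values that `cacu()` prints in its first iteration. `delta_h` is computed before `W2` is modified, which matches `cacu()` using the old `w5`..`w8` for the hidden-layer gradients.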

Sample output:
