文章目录

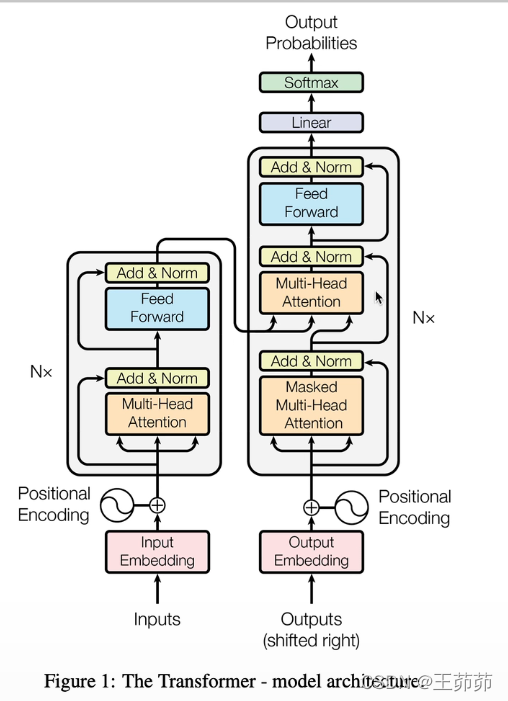

模型架构

Encoder:

N个block组成,每个block由一个自注意层和+一个FFN层组成

Decoder:

N个block组成,每个block由一个masked自注意层+交叉注意层+FFN层组成

为什么以第一个自注意层需要对输入的右侧进行mask? decoder的query的输入是串行的,在测试时前面的query输入时其实是看不见后面的序列,因此应该对其进行mask,以保证当前的判断仅依赖于此前的序列。

交叉注意层——q来自decoder;k,v来自encoder的输出

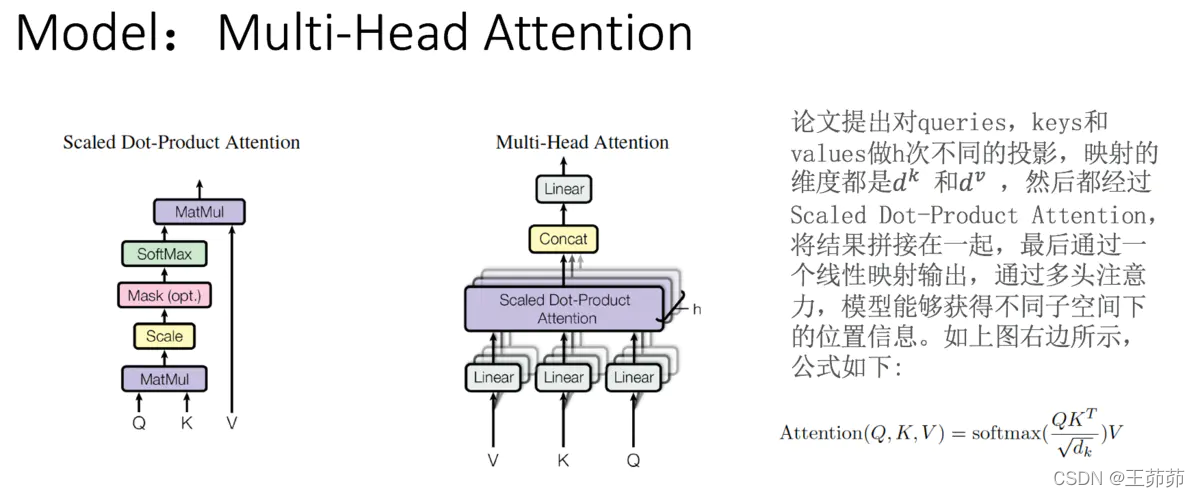

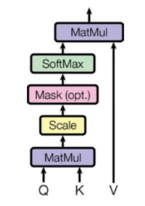

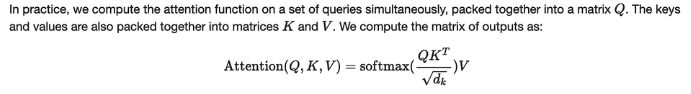

使用的是点乘注意力得分计算方法:

这里把attention抽象为对 value() 的每个 token进行加权,而加权的weight就是 attentionweight,而 attention weight 就是根据 query和 key 计算得到,其意义为:为了用 value求出 query的结果, 根据 query和 key 来决定注意力应该放在value的哪部分。

这里把attention抽象为对 value() 的每个 token进行加权,而加权的weight就是 attentionweight,而 attention weight 就是根据 query和 key 计算得到,其意义为:为了用 value求出 query的结果, 根据 query和 key 来决定注意力应该放在value的哪部分。

为什么要除以√d_k? 是因为如果d_k太大,点乘的值太大,如果不做scaling,结果就没有加法注意力好。另外,点乘的结果过大,这使得经过softmax之后的梯度很小,不利于反向传播的进行,所以我们通过对点乘的结果进行尺度化。

多头注意力,就是将多个上述部分的结果拼接起来:

位置编码:

位置编码会随着残差计算向后传播,

本文采用的是sin/cos位置编码,计算公式如下图所示:

源码

有五个相关类:

- Transformer

- TransformerEncoder

- TransformerDecoder

- TransformerEncoderLayer

- TransformerDecoderLayer

torch.nn.Transformer

import copy

from typing import Optional, Any, Union, Callable

import torch

from torch import Tensor

from .. import functional as F

from .module import Module

from .activation import MultiheadAttention

from .container import ModuleList

from ..init import xavier_uniform_

from .dropout import Dropout

from .linear import Linear

from .normalization import LayerNorm

class Transformer(Module):

r"""A transformer model. User is able to modify the attributes as needed. The architecture

is based on the paper "Attention Is All You Need". Ashish Vaswani, Noam Shazeer,

Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Lukasz Kaiser, and

Illia Polosukhin. 2017. Attention is all you need. In Advances in Neural Information

Processing Systems, pages 6000-6010. Users can build the BERT(https://arxiv.org/abs/1810.04805)

model with corresponding parameters.

Args:

d_model: the number of expected features in the encoder/decoder inputs (default=512).

nhead: the number of heads in the multiheadattention models (default=8).

num_encoder_layers: the number of sub-encoder-layers in the encoder (default=6).

num_decoder_layers: the number of sub-decoder-layers in the decoder (default=6).

dim_feedforward: the dimension of the feedforward network model (default=2048).

dropout: the dropout value (default=0.1).

activation: the activation function of encoder/decoder intermediate layer, can be a string

("relu" or "gelu") or a unary callable. Default: relu

custom_encoder: custom encoder (default=None).

custom_decoder: custom decoder (default=None).

layer_norm_eps: the eps value in layer normalization components (default=1e-5).

batch_first: If ``True``, then the input and output tensors are provided

as (batch, seq, feature). Default: ``False`` (seq, batch, feature).

norm_first: if ``True``, encoder and decoder layers will perform LayerNorms before

other attention and feedforward operations, otherwise after. Default: ``False`` (after).

Examples::

>>> transformer_model = nn.Transformer(nhead=16, num_encoder_layers=12)

>>> src = torch.rand((10, 32, 512))

>>> tgt = torch.rand((20, 32, 512))

>>> out = transformer_model(src, tgt)

Note: A full example to apply nn.Transformer module for the word language model is available in

https://github.com/pytorch/examples/tree/master/word_language_model

"""

def __init__(self, d_model: int = 512, nhead: int = 8, num_encoder_layers: int = 6,

num_decoder_layers: int = 6, dim_feedforward: int = 2048, dropout: float = 0.1,

activation: Union[str, Callable[[Tensor], Tensor]] = F.relu,

custom_encoder: Optional[Any] = None, custom_decoder: Optional[Any] = None,

layer_norm_eps: float = 1e-5, batch_first: bool = False, norm_first: bool = False,

device=None, dtype=None) -> None:

pass

def forward(self, src: Tensor, tgt: Tensor, src_mask: Optional[Tensor] = None, tgt_mask: Optional[Tensor] = None,

memory_mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None,

tgt_key_padding_mask: Optional[Tensor] = None, memory_key_padding_mask: Optional[Tensor] = None) -> Tensor:

pass

@staticmethod

def generate_square_subsequent_mask(sz: int) -> Tensor:

pass

def _reset_parameters(self):

pass

init

调用及参数

torch.nn.Transformer(d_model=512, nhead=8, num_encoder_layers=6, num_decoder_layers=6,

dim_feedforward=2048, dropout=0.1, activation='relu',

custom_encoder=None, custom_decoder=None)

参数:

d_model –编码器/解码器输入大小(默认 512)。

nhead –多头注意力模型的头数(默认为8)。

num_encoder_layers –编码器中子编码器层(block)的数量(默认为6)。

num_decoder_layers –解码器中子解码器层(block)的数量(默认为6)。

dim_feedforward –前馈网络模型的中间层维度(默认= 2048)。

dropout –默认值= 0.1。

activation–编码器/解码器中间层的激活函数,relu或gelu(默认值= relu)。

custom_encoder –自定义编码器(默认=None)。

custom_decoder –自定义解码器(默认=None)。

源码

def __init__(self, d_model: int = 512, nhead: int = 8, num_encoder_layers: int = 6,

num_decoder_layers: int = 6, dim_feedforward: int = 2048, dropout: float = 0.1,

activation: Union[str, Callable[[Tensor], Tensor]] = F.relu,

custom_encoder: Optional[Any] = None, custom_decoder: Optional[Any] = None,

layer_norm_eps: float = 1e-5, batch_first: bool = False, norm_first: bool = False,

device=None, dtype=None) -> None:

factory_kwargs = {'device': device, 'dtype': dtype}

super(Transformer, self).__init__()

if custom_encoder is not None: //是否自定义编码器

self.encoder = custom_encoder

else:

encoder_layer = TransformerEncoderLayer(d_model, nhead, dim_feedforward, dropout,

activation, layer_norm_eps, batch_first, norm_first,

**factory_kwargs)

encoder_norm = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)

self.encoder = TransformerEncoder(encoder_layer, num_encoder_layers, encoder_norm)

if custom_decoder is not None:

self.decoder = custom_decoder

else:

decoder_layer = TransformerDecoderLayer(d_model, nhead, dim_feedforward, dropout,

activation, layer_norm_eps, batch_first, norm_first,

**factory_kwargs)

decoder_norm = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)

self.decoder = TransformerDecoder(decoder_layer, num_decoder_layers, decoder_norm)

self._reset_parameters()

self.d_model = d_model

self.nhead = nhead

self.batch_first = batch_first

forward

调用及参数

forward(src, tgt, src_mask=None, tgt_mask=None, memory_mask=None,

src_key_padding_mask=None, tgt_key_padding_mask=None, memory_key_padding_mask=None)

参数:

src – the sequence to the encoder (required).

tgt – the sequence to the decoder (required).

src_mask – the additive mask for the src sequence (optional).

tgt_mask – the additive mask for the tgt sequence (optional).

memory_mask – the additive mask for the encoder output (optional).

src_key_padding_mask – the ByteTensor mask for src keys per batch (optional).

tgt_key_padding_mask – the ByteTensor mask for tgt keys per batch (optional).

memory_key_padding_mask – the ByteTensor mask for memory keys per batch (optional).

shape:

// S is the source sequence length, T is the target sequence length, N is the batch size, E is the feature number

src: (S, E)(S,E) for unbatched input, (S, N, E)(S,N,E) if batch_first=False or (N, S, E) if batch_first=True.

tgt: (T, E)(T,E) for unbatched input, (T, N, E)(T,N,E) if batch_first=False or (N, T, E) if batch_first=True.

src_mask: (S, S)(S,S).

tgt_mask: (T, T)(T,T).

memory_mask: (T, S)(T,S).

src_key_padding_mask: (S)(S) for unbatched input otherwise (N, S)(N,S).

tgt_key_padding_mask: (T)(T) for unbatched input otherwise (N, T)(N,T).

memory_key_padding_mask: (S)(S) for unbatched input otherwise (N, S)(N,S).

源码

def forward(self, src: Tensor, tgt: Tensor, src_mask: Optional[Tensor] = None, tgt_mask: Optional[Tensor] = None,

memory_mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None,

tgt_key_padding_mask: Optional[Tensor] = None, memory_key_padding_mask: Optional[Tensor] = None) -> Tensor:

r"""Take in and process masked source/target sequences.

Args:

src: the sequence to the encoder (required).

tgt: the sequence to the decoder (required).

src_mask: the additive mask for the src sequence (optional).

tgt_mask: the additive mask for the tgt sequence (optional).

memory_mask: the additive mask for the encoder output (optional).

src_key_padding_mask: the ByteTensor mask for src keys per batch (optional).

tgt_key_padding_mask: the ByteTensor mask for tgt keys per batch (optional).

memory_key_padding_mask: the ByteTensor mask for memory keys per batch (optional).

Shape:

- src: :math:`(S, E)` for unbatched input, :math:`(S, N, E)` if `batch_first=False` or

`(N, S, E)` if `batch_first=True`.

- tgt: :math:`(T, E)` for unbatched input, :math:`(T, N, E)` if `batch_first=False` or

`(N, T, E)` if `batch_first=True`.

- src_mask: :math:`(S, S)`.

- tgt_mask: :math:`(T, T)`.

- memory_mask: :math:`(T, S)`.

- src_key_padding_mask: :math:`(S)` for unbatched input otherwise :math:`(N, S)`.

- tgt_key_padding_mask: :math:`(T)` for unbatched input otherwise :math:`(N, T)`.

- memory_key_padding_mask: :math:`(S)` for unbatched input otherwise :math:`(N, S)`.

Note: [src/tgt/memory]_mask ensures that position i is allowed to attend the unmasked

positions. If a ByteTensor is provided, the non-zero positions are not allowed to attend

while the zero positions will be unchanged. If a BoolTensor is provided, positions with ``True``

are not allowed to attend while ``False`` values will be unchanged. If a FloatTensor

is provided, it will be added to the attention weight.

[src/tgt/memory]_key_padding_mask provides specified elements in the key to be ignored by

the attention. If a ByteTensor is provided, the non-zero positions will be ignored while the zero

positions will be unchanged. If a BoolTensor is provided, the positions with the

value of ``True`` will be ignored while the position with the value of ``False`` will be unchanged.

- output: :math:`(T, E)` for unbatched input, :math:`(T, N, E)` if `batch_first=False` or

`(N, T, E)` if `batch_first=True`.

Note: Due to the multi-head attention architecture in the transformer model,

the output sequence length of a transformer is same as the input sequence

(i.e. target) length of the decode.

where S is the source sequence length, T is the target sequence length, N is the

batch size, E is the feature number

Examples:

>>> output = transformer_model(src, tgt, src_mask=src_mask, tgt_mask=tgt_mask)

"""

is_batched = src.dim() == 3

if not self.batch_first and src.size(1) != tgt.size(1) and is_batched:

raise RuntimeError("the batch number of src and tgt must be equal")

elif self.batch_first and src.size(0) != tgt.size(0) and is_batched:

raise RuntimeError("the batch number of src and tgt must be equal")

if src.size(-1) != self.d_model or tgt.size(-1) != self.d_model:

raise RuntimeError("the feature number of src and tgt must be equal to d_model")

memory = self.encoder(src, mask=src_mask, src_key_padding_mask=src_key_padding_mask)

output = self.decoder(tgt, memory, tgt_mask=tgt_mask, memory_mask=memory_mask,

tgt_key_padding_mask=tgt_key_padding_mask,

memory_key_padding_mask=memory_key_padding_mask)

return output

torch.nn.TransformerEncoderLayer

class TransformerEncoderLayer(Module):

r"""TransformerEncoderLayer is made up of self-attn and feedforward network.

This standard encoder layer is based on the paper "Attention Is All You Need".

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez,

Lukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in

Neural Information Processing Systems, pages 6000-6010. Users may modify or implement

in a different way during application.

Args:

d_model: the number of expected features in the input (required).

nhead: the number of heads in the multiheadattention models (required).

dim_feedforward: the dimension of the feedforward network model (default=2048).

dropout: the dropout value (default=0.1).

activation: the activation function of the intermediate layer, can be a string

("relu" or "gelu") or a unary callable. Default: relu

layer_norm_eps: the eps value in layer normalization components (default=1e-5).

batch_first: If ``True``, then the input and output tensors are provided

as (batch, seq, feature). Default: ``False`` (seq, batch, feature).

norm_first: if ``True``, layer norm is done prior to attention and feedforward

operations, respectivaly. Otherwise it's done after. Default: ``False`` (after).

Examples::

>>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8)

>>> src = torch.rand(10, 32, 512)

>>> out = encoder_layer(src)

Alternatively, when ``batch_first`` is ``True``:

>>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8, batch_first=True)

>>> src = torch.rand(32, 10, 512)

>>> out = encoder_layer(src)

"""

__constants__ = ['batch_first', 'norm_first']

def __init__(self, d_model: int, nhead: int, dim_feedforward: int = 2048, dropout: float = 0.1,

activation: Union[str, Callable[[Tensor], Tensor]] = F.relu,

layer_norm_eps: float = 1e-5, batch_first: bool = False, norm_first: bool = False,

device=None, dtype=None) -> None:

def __setstate__(self, state):

def forward(self, src: Tensor, src_mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None) -> Tensor:

# self-attention block

def _sa_block(self, x: Tensor,attn_mask: Optional[Tensor],

key_padding_mask: Optional[Tensor]) -> Tensor:

init

调用及参数

torch.nn.TransformerEncoderLayer(d_model, nhead,

dim_feedforward=2048, dropout=0.1, activation='relu')

参数:

d_model – the number of expected features in the input (required).

nhead – the number of heads in the multiheadattention models (required).

dim_feedforward – the dimension of the feedforward network model (default=2048).

dropout – the dropout value (default=0.1).

activation – the activation function of intermediate layer, relu or gelu (default=relu).

源码

def __init__(self, d_model: int, nhead: int, dim_feedforward: int = 2048, dropout: float = 0.1,

activation: Union[str, Callable[[Tensor], Tensor]] = F.relu,

layer_norm_eps: float = 1e-5, batch_first: bool = False, norm_first: bool = False,

device=None, dtype=None) -> None:

factory_kwargs = {'device': device, 'dtype': dtype}

super(TransformerEncoderLayer, self).__init__()

self.self_attn = MultiheadAttention(d_model, nhead, dropout=dropout, batch_first=batch_first,

**factory_kwargs)

# Implementation of Feedforward model

self.linear1 = Linear(d_model, dim_feedforward, **factory_kwargs)

self.dropout = Dropout(dropout)

self.linear2 = Linear(dim_feedforward, d_model, **factory_kwargs)

self.norm_first = norm_first

self.norm1 = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)

self.norm2 = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)

self.dropout1 = Dropout(dropout)

self.dropout2 = Dropout(dropout)

# Legacy string support for activation function.

if isinstance(activation, str):

self.activation = _get_activation_fn(activation)

else:

self.activation = activation

forward

调用及参数

forward(src, src_mask=None, src_key_padding_mask=None)

参数:

src – the sequnce to the encoder layer (required).

src_mask – the mask for the src sequence (optional).

src_key_padding_mask – the mask for the src keys per batch (optional).

源码

def forward(self, src: Tensor, src_mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None) -> Tensor:

r"""Pass the input through the encoder layer.

Args:

src: the sequence to the encoder layer (required).

src_mask: the mask for the src sequence (optional).

src_key_padding_mask: the mask for the src keys per batch (optional).

Shape:

see the docs in Transformer class.

"""

# see Fig. 1 of https://arxiv.org/pdf/2002.04745v1.pdf

x = src

if self.norm_first:

x = x + self._sa_block(self.norm1(x), src_mask, src_key_padding_mask)

x = x + self._ff_block(self.norm2(x))

else:

x = self.norm1(x + self._sa_block(x, src_mask, src_key_padding_mask)) //self-attention

x = self.norm2(x + self._ff_block(x)) //FFN

return x

# self-attention block

def _sa_block(self, x: Tensor,

attn_mask: Optional[Tensor], key_padding_mask: Optional[Tensor]) -> Tensor:

//self.self_attn==>MultiheadAttention

x = self.self_attn(x, x, x,

attn_mask=attn_mask,

key_padding_mask=key_padding_mask,

need_weights=False)[0]

return self.dropout1(x)

# feed forward block

def _ff_block(self, x: Tensor) -> Tensor:

x = self.linear2(self.dropout(self.activation(self.linear1(x))))

return self.dropout2(x)

torch.nn.TransformerEncoder

TransformerEncoder is a stack of N encoder layers

class TransformerEncoder(Module):

r"""TransformerEncoder is a stack of N encoder layers

Args:

encoder_layer: an instance of the TransformerEncoderLayer() class (required).

num_layers: the number of sub-encoder-layers in the encoder (required).

norm: the layer normalization component (optional).

Examples::

>>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8)

>>> transformer_encoder = nn.TransformerEncoder(encoder_layer, num_layers=6)

>>> src = torch.rand(10, 32, 512)

>>> out = transformer_encoder(src)

"""

__constants__ = ['norm']

def __init__(self, encoder_layer, num_layers, norm=None):

def forward(self, src: Tensor, mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None) -> Tensor:

init

调用及参数

torch.nn.TransformerEncoder(encoder_layer, num_layers, norm=None)

参数:

coder_layer – TransformerEncoderLayer类的实例(必需)。

num_layers –编码器中的子编码器层数(必填)。

norm –层归一化组件(可选)。

源码

def __init__(self, encoder_layer, num_layers, norm=None):

super(TransformerEncoder, self).__init__()

self.layers = _get_clones(encoder_layer, num_layers)

self.num_layers = num_layers

self.norm = norm

forward

调用及参数

forward(src, mask=None, src_key_padding_mask=None)

参数:

src – the sequnce to the encoder (required).

mask – the mask for the src sequence (optional).

src_key_padding_mask – the mask for the src keys per batch (optional).

源码

def forward(self, src: Tensor, mask: Optional[Tensor] = None, src_key_padding_mask: Optional[Tensor] = None) -> Tensor:

r"""Pass the input through the encoder layers in turn.

Args:

src: the sequence to the encoder (required).

mask: the mask for the src sequence (optional).

src_key_padding_mask: the mask for the src keys per batch (optional).

Shape:

see the docs in Transformer class.

"""

output = src

for mod in self.layers:

output = mod(output, src_mask=mask, src_key_padding_mask=src_key_padding_mask)

if self.norm is not None:

output = self.norm(output)

return output

torch.nn.TransformerDecoderLayer

TransformerDecoderLayer is made up of self-attn, multi-head-attn and feedforward network

class TransformerDecoderLayer(Module):

r"""TransformerDecoderLayer is made up of self-attn, multi-head-attn and feedforward network.

This standard decoder layer is based on the paper "Attention Is All You Need".

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez,

Lukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in

Neural Information Processing Systems, pages 6000-6010. Users may modify or implement

in a different way during application.

Args:

d_model: the number of expected features in the input (required).

nhead: the number of heads in the multiheadattention models (required).

dim_feedforward: the dimension of the feedforward network model (default=2048).

dropout: the dropout value (default=0.1).

activation: the activation function of the intermediate layer, can be a string

("relu" or "gelu") or a unary callable. Default: relu

layer_norm_eps: the eps value in layer normalization components (default=1e-5).

batch_first: If ``True``, then the input and output tensors are provided

as (batch, seq, feature). Default: ``False`` (seq, batch, feature).

norm_first: if ``True``, layer norm is done prior to self attention, multihead

attention and feedforward operations, respectivaly. Otherwise it's done after.

Default: ``False`` (after).

Examples::

>>> decoder_layer = nn.TransformerDecoderLayer(d_model=512, nhead=8)

>>> memory = torch.rand(10, 32, 512)

>>> tgt = torch.rand(20, 32, 512)

>>> out = decoder_layer(tgt, memory)

Alternatively, when ``batch_first`` is ``True``:

>>> decoder_layer = nn.TransformerDecoderLayer(d_model=512, nhead=8, batch_first=True)

>>> memory = torch.rand(32, 10, 512)

>>> tgt = torch.rand(32, 20, 512)

>>> out = decoder_layer(tgt, memory)

"""

__constants__ = ['batch_first', 'norm_first']

def __init__(self, d_model: int, nhead: int, dim_feedforward: int = 2048, dropout: float = 0.1,

activation: Union[str, Callable[[Tensor], Tensor]] = F.relu,

layer_norm_eps: float = 1e-5, batch_first: bool = False, norm_first: bool = False,

device=None, dtype=None) -> None:

def __setstate__(self, state):

def forward(self, tgt: Tensor, memory: Tensor, tgt_mask: Optional[Tensor] = None, memory_mask: Optional[Tensor] = None,

tgt_key_padding_mask: Optional[Tensor] = None, memory_key_padding_mask: Optional[Tensor] = None) -> Tensor:

# self-attention block

def _sa_block(self, x: Tensor,

attn_mask: Optional[Tensor], key_padding_mask: Optional[Tensor]) -> Tensor:

# multihead attention block

def _mha_block(self, x: Tensor, mem: Tensor,

attn_mask: Optional[Tensor], key_padding_mask: Optional[Tensor]) -> Tensor:

# feed forward block

def _ff_block(self, x: Tensor) -> Tensor:

init

调用及参数

torch.nn.TransformerDecoderLayer(d_model, nhead, dim_feedforward=2048, dropout=0.1, activation='relu')

参数:

d_model – the number of expected features in the input (required).

nhead – the number of heads in the multiheadattention models (required).

dim_feedforward – the dimension of the feedforward network model (default=2048).

dropout – the dropout value (default=0.1).

activation – the activation function of intermediate layer, relu or gelu (default=relu).

源码

def __init__(self, d_model: int, nhead: int, dim_feedforward: int = 2048, dropout: float = 0.1,

activation: Union[str, Callable[[Tensor], Tensor]] = F.relu,

layer_norm_eps: float = 1e-5, batch_first: bool = False, norm_first: bool = False,

device=None, dtype=None) -> None:

factory_kwargs = {'device': device, 'dtype': dtype}

super(TransformerDecoderLayer, self).__init__()

## 在Decoder中有两个MultiheadAttention,一个是masked self-Attention;一个是cross Attention

self.self_attn = MultiheadAttention(d_model, nhead, dropout=dropout, batch_first=batch_first,

**factory_kwargs)

self.multihead_attn = MultiheadAttention(d_model, nhead, dropout=dropout, batch_first=batch_first,

**factory_kwargs)

# Implementation of Feedforward model

self.linear1 = Linear(d_model, dim_feedforward, **factory_kwargs)

self.dropout = Dropout(dropout)

self.linear2 = Linear(dim_feedforward, d_model, **factory_kwargs)

self.norm_first = norm_first

## 因为有三个模块,所以此处定义三对,norm、dropout

self.norm1 = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)

self.norm2 = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)

self.norm3 = LayerNorm(d_model, eps=layer_norm_eps, **factory_kwargs)

self.dropout1 = Dropout(dropout)

self.dropout2 = Dropout(dropout)

self.dropout3 = Dropout(dropout)

# Legacy string support for activation function.

if isinstance(activation, str):

self.activation = _get_activation_fn(activation)

else:

self.activation = activation

forward

调用及参数

forward(tgt, memory, tgt_mask=None, memory_mask=None,

tgt_key_padding_mask=None, memory_key_padding_mask=None)

参数:

tgt – the sequence to the decoder layer (required).

memory – the sequnce from the last layer of the encoder (required).

tgt_mask – the mask for the tgt sequence (optional).

memory_mask – the mask for the memory sequence (optional).

tgt_key_padding_mask – the mask for the tgt keys per batch (optional).

memory_key_padding_mask – the mask for the memory keys per batch (optional).

源码

def forward(self, tgt: Tensor, memory: Tensor, tgt_mask: Optional[Tensor] = None, memory_mask: Optional[Tensor] = None,

tgt_key_padding_mask: Optional[Tensor] = None, memory_key_padding_mask: Optional[Tensor] = None) -> Tensor:

r"""Pass the inputs (and mask) through the decoder layer.

Args:

tgt: the sequence to the decoder layer (required).

memory: the sequence from the last layer of the encoder (required).

tgt_mask: the mask for the tgt sequence (optional).

memory_mask: the mask for the memory sequence (optional).

tgt_key_padding_mask: the mask for the tgt keys per batch (optional).

memory_key_padding_mask: the mask for the memory keys per batch (optional).

Shape:

see the docs in Transformer class.

"""

# see Fig. 1 of https://arxiv.org/pdf/2002.04745v1.pdf

x = tgt

if self.norm_first:

x = x + self._sa_block(self.norm1(x), tgt_mask, tgt_key_padding_mask)

x = x + self._mha_block(self.norm2(x), memory, memory_mask, memory_key_padding_mask)

x = x + self._ff_block(self.norm3(x))

else: ## 默认是执行这里

x = self.norm1(x + self._sa_block(x, tgt_mask, tgt_key_padding_mask))

x = self.norm2(x + self._mha_block(x, memory, memory_mask, memory_key_padding_mask))

x = self.norm3(x + self._ff_block(x))

return x

# self-attention block

def _sa_block(self, x: Tensor,

attn_mask: Optional[Tensor], key_padding_mask: Optional[Tensor]) -> Tensor:

x = self.self_attn(x, x, x, ## 自注意层,因此query, key, value都是x

attn_mask=attn_mask,

key_padding_mask=key_padding_mask,

need_weights=False)[0]

return self.dropout1(x)

# multihead attention block

def _mha_block(self, x: Tensor, mem: Tensor,

attn_mask: Optional[Tensor], key_padding_mask: Optional[Tensor]) -> Tensor:

x = self.multihead_attn(x, mem, mem, ## 交叉注意层,query是x; key, value是encoder的输出

attn_mask=attn_mask,

key_padding_mask=key_padding_mask,

need_weights=False)[0]

return self.dropout2(x)

# feed forward block

def _ff_block(self, x: Tensor) -> Tensor:

x = self.linear2(self.dropout(self.activation(self.linear1(x))))

return self.dropout3(x)

torch.nn.TransformerDecoder

transformerDecoder是N个TransformerDecoderLayer的堆叠

class TransformerDecoder(Module):

r"""TransformerDecoder is a stack of N decoder layers

Args:

decoder_layer: an instance of the TransformerDecoderLayer() class (required).

num_layers: the number of sub-decoder-layers in the decoder (required).

norm: the layer normalization component (optional).

Examples::

>>> decoder_layer = nn.TransformerDecoderLayer(d_model=512, nhead=8)

>>> transformer_decoder = nn.TransformerDecoder(decoder_layer, num_layers=6)

>>> memory = torch.rand(10, 32, 512)

>>> tgt = torch.rand(20, 32, 512)

>>> out = transformer_decoder(tgt, memory)

"""

__constants__ = ['norm']

def __init__(self, decoder_layer, num_layers, norm=None):

def forward(self, tgt: Tensor, memory: Tensor, tgt_mask: Optional[Tensor] = None,

memory_mask: Optional[Tensor] = None, tgt_key_padding_mask: Optional[Tensor] = None,

memory_key_padding_mask: Optional[Tensor] = None) -> Tensor:

init

调用及参数

torch.nn.TransformerDecoder(decoder_layer, num_layers, norm=None)

参数:

decoder_layer – TransformerDecoderLayer()类的实例(必需)。

num_layers –解码器中子解码器层的数量(必需)。

norm –层归一化组件(可选)。

源码

def __init__(self, decoder_layer, num_layers, norm=None):

super(TransformerDecoder, self).__init__()

self.layers = _get_clones(decoder_layer, num_layers)

self.num_layers = num_layers

self.norm = norm

forward

调用及参数

forward(tgt, memory, tgt_mask=None, memory_mask=None,

tgt_key_padding_mask=None, memory_key_padding_mask=None)

参数:

tgt – the sequence to the decoder (required).

memory – the sequnce from the last layer of the encoder (required).

tgt_mask – the mask for the tgt sequence (optional).

memory_mask – the mask for the memory sequence (optional).

tgt_key_padding_mask – the mask for the tgt keys per batch (optional).

memory_key_padding_mask – the mask for the memory keys per batch (optional).

源码

def forward(self, tgt: Tensor, memory: Tensor, tgt_mask: Optional[Tensor] = None,

memory_mask: Optional[Tensor] = None, tgt_key_padding_mask: Optional[Tensor] = None,

memory_key_padding_mask: Optional[Tensor] = None) -> Tensor:

r"""Pass the inputs (and mask) through the decoder layer in turn.

Args:

tgt: the sequence to the decoder (required).

memory: the sequence from the last layer of the encoder (required).

tgt_mask: the mask for the tgt sequence (optional).

memory_mask: the mask for the memory sequence (optional).

tgt_key_padding_mask: the mask for the tgt keys per batch (optional).

memory_key_padding_mask: the mask for the memory keys per batch (optional).

Shape:

see the docs in Transformer class.

"""

output = tgt

for mod in self.layers:

output = mod(output, memory, tgt_mask=tgt_mask,

memory_mask=memory_mask,

tgt_key_padding_mask=tgt_key_padding_mask,

memory_key_padding_mask=memory_key_padding_mask)

if self.norm is not None:

output = self.norm(output)

return output

其它相关函数

def _get_clones(module, N):

return ModuleList([copy.deepcopy(module) for i in range(N)])

def _get_activation_fn(activation):

if activation == "relu":

return F.relu

elif activation == "gelu":

return F.gelu

raise RuntimeError("activation should be relu/gelu, not {}".format(activation))

Attention部分讲解

注意力函数可以描述为:将query和一组键值(key,value)对映射为输出output,其中query、key、value和output都是向量。output由value的加权和计算得到,其中分配给每个value的权重由query与相应key的兼容函数计算得到。

在本文中计算权重的方法就是 “Scaled Dot-Product Attention"

简单实现:

在Encoder中的自注意层:

K,Q,V都是input embedding+pos embedding经过三个映射得到的。

在Decoder中:

- 第一个自注意层:

K,Q,V都是input embedding+pos embedding经过三个映射得到的。 - 第二个交叉注意层:

Q是Decoder前面的输出经过映射得到的;K,V分别是是encoder的输出经过两种映射得到的。

294

294

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?