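Operating system: Windows 10

ML-Agents version: 0.10.0

Unity version: 2017.4.13

Reference: https://github.com/Unity-Technologies/ml-agents/blob/latest_release/docs/Learning-Environment-Create-New.md

Goal: a box rests on a plane of finite size, and a ball has to roll into the randomly placed box without falling off the plane.

The detailed steps follow: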

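Step 1: Create the Unity project

(1) In Unity, open the UnitySDK folder inside the ml-agents-0.10.0 folder, then create a BallBox folder inside the Examples folder under Assets, as shown below:

(2) In the Hierarchy, add a Plane, a Cube, and a Sphere, and name them Floor, Target, and RollerAgent respectively, as shown below:

Step 2: Create the Brain

Create RollerBallBrain as shown below

Step 3: Create the Academy

Create an empty GameObject and name it Academy. In the Inspector window, click Add Component, choose New Script, and name the script RollerAcademy. The code is: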

    using MLAgents;

    public class RollerAcademy : Academy { }

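The Inspector then looks as shown below

Choose Add Brain to Broadcast Hub and select RollerBallBrain. After the selection it looks as shown below:

Step 4: Create the Agent

Add a Rigidbody component to the RollerAgent object, then add a new script named RollerAgent with the following content: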

    using System.Collections.Generic;
    using UnityEngine;
    using MLAgents;

    public class RollerAgent : Agent
    {
        Rigidbody rBody;

        void Start()
        {
            // Cache the Rigidbody so that AgentAction can apply forces to it
            rBody = GetComponent<Rigidbody>();
        }

        public Transform Target;

        public override void AgentReset()
        {
            if (this.transform.position.y < 0)
            {
                // If the Agent fell, zero its momentum
                this.rBody.angularVelocity = Vector3.zero;
                this.rBody.velocity = Vector3.zero;
                this.transform.position = new Vector3(0, 0.5f, 0);
            }

            // Move the target to a new spot
            Target.position = new Vector3(Random.value * 8 - 4,
                                          0.5f,
                                          Random.value * 8 - 4);
        }

        public float speed = 10;

        // Some ML-Agents releases declare this method as
        // AgentAction(float[] vectorAction, string textAction);
        // match the signature of the Agent base class you have installed.
        public override void AgentAction(float[] vectorAction)
        {
            // Actions, size = 2
            Vector3 controlSignal = Vector3.zero;
            controlSignal.x = vectorAction[0];
            controlSignal.z = vectorAction[1];
            rBody.AddForce(controlSignal * speed);

            // Rewards
            float distanceToTarget = Vector3.Distance(this.transform.position,
                                                      Target.position);

            // Reached target
            if (distanceToTarget < 1.42f)
            {
                SetReward(1.0f);
                Done();
            }

            // Fell off platform
            if (this.transform.position.y < 0)
            {
                Done();
            }
        }
    }
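
The RollerAgent script above does not override CollectObservations, so as written the agent appears to send no vector observations to the Brain. The official tutorial linked at the top has the agent observe the target position, its own position, and its x and z velocity, 8 floats in total. Below is a minimal sketch of that method. It assumes the AddVectorObs helpers of the Agent class in ML-Agents 0.10.0 and a Brain whose Vector Observation Space Size is 8 (an assumption, since the Brain settings are only shown in a figure). It is a fragment meant to be placed inside the RollerAgent class, not a standalone script:

```csharp
// Sketch only: add this method inside the RollerAgent class.
// It relies on the rBody and Target fields that the script already declares.
// Assumption: the Brain's Vector Observation Space Size is set to 8.
public override void CollectObservations()
{
    // Target and Agent positions (3 floats each)
    AddVectorObs(Target.position);
    AddVectorObs(this.transform.position);

    // Agent velocity along the plane (2 floats)
    AddVectorObs(rBody.velocity.x);
    AddVectorObs(rBody.velocity.z);
}
```

If the number of values added here differs from the observation size configured on the Brain, training is likely to fail with a size mismatch, so the two should be kept in sync.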

After adding the script, remember to assign its Brain and Target fields, as shown below:

Step 5: Train the network

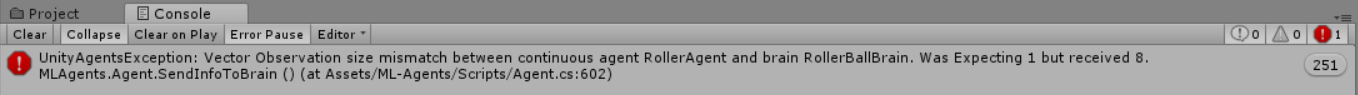

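(1) Select the RollerBallBrain created in Step 2 and configure it as shown below:

If this setting is skipped, later runs produce the following problem:

(2) Select the Academy and tick Control

(3) Open Anaconda Prompt and enter the following commands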

cd C:\Users\MI\ml-agents-0.10.0

mlagents-learn config\trainer_config.yaml --run-id=ballbox001 --train

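Part of the resulting output is shown below:

(4) Return to Unity and click the Play button to train the network. Part of the training screen is shown below: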

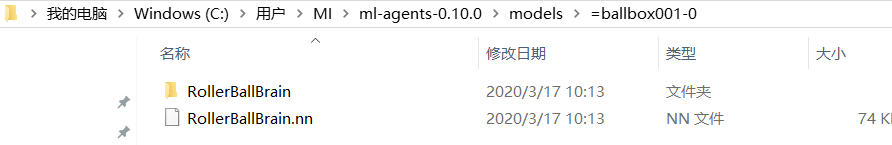

(5) The trained network is saved in

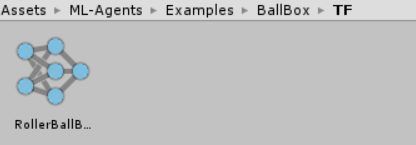

Drag RollerBallBrain.nn to

and add it to

Finally, click Play to run the scene and watch the trained result!
