Deploying yolov5-6.x with OpenVINO 2022.1 C++ on Ubuntu 20.04

Model conversion pipeline:

pt --> onnx --> IR (.xml, .bin) --> OpenVINO runtime (C++)

1. Preparation

- Download the yolov5 project from GitHub

- Install the dependencies, such as PyTorch: pip install -r requirements.txt

- Train a model to obtain the .pt weights

2. pt --> onnx

- Install openvino-dev[onnx] as described on the official site: Intel® Distribution of OpenVINO™ Toolkit

- Use the export script (export.py) in the yolov5 project to convert the .pt model to ONNX:

python export.py --data xxx.yaml --weights xxx.pt --img xxx(size) --batch 1 --include onnx

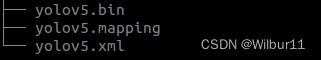
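It helps to know the exported output shape before writing the C++ parser. With --batch 1 and a square --img size S, a yolov5 6.x P5 model (e.g. yolov5s; this assumes three detection levels at strides 8, 16 and 32 with 3 anchors per grid cell) produces an "output" tensor of shape [1, N, 5 + nc], where N = 3 × ((S/8)² + (S/16)² + (S/32)²) and nc is the number of classes. A small sketch of that arithmetic (the function names are hypothetical):

```cpp
#include <cassert>
#include <cstddef>

// Number of prediction rows in a yolov5 P5 model's ONNX "output" tensor,
// assuming three detection levels (strides 8, 16, 32) with 3 anchors each
// and a square input of side img_size (a multiple of 32).
std::size_t yolov5_output_rows(std::size_t img_size) {
    const std::size_t strides[] = {8, 16, 32};
    const std::size_t anchors_per_cell = 3;
    std::size_t rows = 0;
    for (std::size_t s : strides) {
        const std::size_t cells = img_size / s;  // grid side length at this level
        rows += anchors_per_cell * cells * cells;
    }
    return rows;
}

// Each row holds cx, cy, w, h, objectness, then one score per class.
std::size_t yolov5_output_cols(std::size_t num_classes) {
    return 5 + num_classes;
}
```

For S = 640 and the 80 COCO classes this gives [1, 25200, 85]. P6 models (e.g. yolov5s6) add a fourth level at stride 64, so the formula would need an extra term for them.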

3. onnx --> IR (.xml, .bin)

- Use Model Optimizer to convert the ONNX model to the Intermediate Representation (IR), which consists of a .xml file and a .bin file. For other conversion parameters, see Converting an ONNX Model.

mo --input_model xxx.onnx

4. IR(.xml, .bin) --> OpenVINO runtime(C++)

-

为了用C++跑模型,首先安装OpenVINO runtime,配置环境(安装),添加source,官方安装步骤:Install and Configure Intel® Distribution of OpenVINO™ Toolkit for Linux¶

-

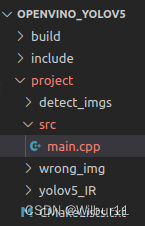

编写C++程序,写CmakeList.txt,用cmake编译:

Appendix

- main.cpp

- Header:

#include <openvino/openvino.hpp>  // OpenVINO >= 2022.1

- Create the OpenVINO Core, compile the IR model, and create an inference request:

ov::Core core;

ov::CompiledModel compiled_model = core.compile_model("xxx.xml", "AUTO");  // "AUTO" lets the runtime select the device

ov::InferRequest infer_request = compiled_model.create_infer_request();

- Get the input and output tensors by name:

ov::Tensor input_image_tensor = infer_request.get_tensor("images");

ov::Tensor output_tensor = infer_request.get_tensor("output");
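The "images" tensor is typically f32 with shape [1, 3, S, S] (NCHW), and yolov5 expects RGB values scaled to [0, 1], whereas cv::imread returns interleaved 8-bit BGR pixels. The fill_tensor_data_image helper used in the next step is not shown in the original; the following is a minimal, hypothetical sketch of the pixel conversion such a helper would need, written against raw buffers so it depends on neither OpenCV nor OpenVINO:

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical helper: converts an interleaved 8-bit BGR image (HWC, as
// stored by a continuous CV_8UC3 cv::Mat) into planar float RGB (CHW)
// scaled to [0, 1], matching yolov5's [1, 3, H, W] "images" input.
// dst must have room for 3 * height * width floats.
void bgr_hwc_to_rgb_chw(const std::uint8_t* src, int height, int width, float* dst) {
    const std::size_t plane = static_cast<std::size_t>(height) * static_cast<std::size_t>(width);
    for (std::size_t i = 0; i < plane; ++i) {
        const std::uint8_t b = src[3 * i + 0];
        const std::uint8_t g = src[3 * i + 1];
        const std::uint8_t r = src[3 * i + 2];
        dst[0 * plane + i] = r / 255.0f;  // channel 0: R
        dst[1 * plane + i] = g / 255.0f;  // channel 1: G
        dst[2 * plane + i] = b / 255.0f;  // channel 2: B
    }
}
```

Assuming the image has first been resized to S × S (e.g. with cv::resize) and is continuous in memory, it could be called as bgr_hwc_to_rgb_chw(resized.data, S, S, input_image_tensor.data<float>()). yolov5's own Python pipeline uses letterbox padding rather than a plain resize, so results from a plain resize may differ somewhat from the Python ones.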

- Read the image, copy the cv::Mat into the input tensor, run inference with the OpenVINO runtime, then fetch and parse the result:

cv::Mat img = cv::imread("xxx.jpg");

fill_tensor_data_image(input_image_tensor, img);  // user-defined helper (not part of the OpenVINO API)

infer_request.infer();

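ov::Shape out_shape = output_tensor.get_shape();  // e.g. [1, 25200, 85] for a 640x640 input and 80 classes
int out_rows = static_cast<int>(out_shape[1]);
int out_cols = static_cast<int>(out_shape[2]);
cv::Mat det_output(out_rows, out_cols, CV_32F, output_tensor.data<float>());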
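The original does not show how boxes and confidences are extracted from det_output before NMS. One common approach, sketched here on a raw float buffer (Box, Detections and decode_yolov5 are hypothetical names), treats each row as [cx, cy, w, h, objectness, class scores...] and uses objectness × best class score as the confidence, which follows yolov5's own conf = obj_conf × cls_conf convention:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Axis-aligned box as top-left corner plus size, like cv::Rect.
struct Box {
    float x, y, w, h;
};

struct Detections {
    std::vector<Box> boxes;
    std::vector<float> confidences;
    std::vector<int> class_ids;
};

// Decodes a yolov5 output buffer of rows x cols floats, each row being
// [cx, cy, w, h, objectness, class_0, ..., class_{cols-6}] in network-input
// pixels. x_factor / y_factor map back to the original image
// (orig_w / S and orig_h / S if the image was plainly resized to S x S).
Detections decode_yolov5(const float* data, int rows, int cols, float conf_threshold,
                         float x_factor, float y_factor) {
    Detections det;
    for (int r = 0; r < rows; ++r) {
        const float* row = data + static_cast<std::size_t>(r) * static_cast<std::size_t>(cols);
        const float objectness = row[4];
        // Class scores are at most 1, so a low objectness cannot pass the threshold.
        if (objectness < conf_threshold) continue;
        int best_class = 0;
        float best_score = row[5];
        for (int c = 1; c < cols - 5; ++c) {
            if (row[5 + c] > best_score) {
                best_score = row[5 + c];
                best_class = c;
            }
        }
        const float conf = objectness * best_score;
        if (conf < conf_threshold) continue;
        const float cx = row[0], cy = row[1], w = row[2], h = row[3];
        // Convert center/size to top-left/size and rescale to the original image.
        det.boxes.push_back({(cx - 0.5f * w) * x_factor, (cy - 0.5f * h) * y_factor,
                             w * x_factor, h * y_factor});
        det.confidences.push_back(conf);
        det.class_ids.push_back(best_class);
    }
    return det;
}
```

With the tensors above, a call might look like decode_yolov5(output_tensor.data<float>(), out_rows, out_cols, 0.25f, img.cols / 640.0f, img.rows / 640.0f), assuming a 640 × 640 input and a plain resize; the resulting boxes can then be copied into a std::vector<cv::Rect> for cv::dnn::NMSBoxes.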

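- NMS over the boxes (std::vector<cv::Rect>) and confidences (std::vector<float>) parsed from det_output; 0.25 is the score threshold and 0.45 the IoU threshold:

std::vector<int> indexes;

cv::dnn::NMSBoxes(boxes, confidences, 0.25, 0.45, indexes);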
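cv::dnn::NMSBoxes performs greedy non-maximum suppression. To show the idea without OpenCV, here is a plain-C++ sketch of the same technique; it is not OpenCV's actual implementation, and the names are hypothetical:

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

struct Box {
    float x, y, w, h;  // top-left corner plus size, like cv::Rect
};

// Intersection over union of two boxes.
float iou(const Box& a, const Box& b) {
    const float x1 = std::max(a.x, b.x);
    const float y1 = std::max(a.y, b.y);
    const float x2 = std::min(a.x + a.w, b.x + b.w);
    const float y2 = std::min(a.y + a.h, b.y + b.h);
    const float inter = std::max(0.0f, x2 - x1) * std::max(0.0f, y2 - y1);
    const float uni = a.w * a.h + b.w * b.h - inter;
    return uni > 0.0f ? inter / uni : 0.0f;
}

// Greedy NMS: drop boxes scoring at or below score_threshold, then walk the
// rest from highest to lowest score, keeping a box only if it overlaps no
// already-kept box by more than iou_threshold. Returns kept indices, best first.
std::vector<int> nms(const std::vector<Box>& boxes, const std::vector<float>& scores,
                     float score_threshold, float iou_threshold) {
    std::vector<int> order;
    for (int i = 0; i < static_cast<int>(boxes.size()); ++i)
        if (scores[i] > score_threshold) order.push_back(i);
    std::stable_sort(order.begin(), order.end(),
                     [&scores](int a, int b) { return scores[a] > scores[b]; });
    std::vector<int> kept;
    for (int idx : order) {
        bool suppressed = false;
        for (int k : kept) {
            if (iou(boxes[idx], boxes[k]) > iou_threshold) {
                suppressed = true;
                break;
            }
        }
        if (!suppressed) kept.push_back(idx);
    }
    return kept;
}
```

Like the NMSBoxes call above, this is class-agnostic: overlapping boxes of different classes suppress each other. yolov5's Python non_max_suppression separates classes by default, so running NMS per class id would be closer to its behavior.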
