Configuring OpenCV 3.4.1 and opencv_contrib 3.4.1 in VS2019

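I've been working on my graduation project and wanted to use SIFT from OpenCV. It turns out SIFT lives in xfeatures2d, one of OpenCV's extra modules, so OpenCV has to be built together with opencv_contrib. It was painful.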

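"Configuring Libraries"

When I write code

I think

the most painful thing in the world is writing code

When I write my thesis

I think

the most painful thing in the world is writing a thesis

When I configure libraries

I finally know

the most painful thing in the world

is configuring libraries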

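Update!

The SIFT patent has expired. If SIFT is all you need, OpenCV 4.5 and later ship it in the main features2d module (it was moved there in 4.4.0), so you no longer have to build the contrib modules for it.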

1. Preparation

1. Visual Studio 2019

2. OpenCV 3.4.1 source code

3. opencv_contrib 3.4.1 (the version must match the OpenCV version)

Download link: https://github.com/opencv/opencv_contrib

4. CMake
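Since the two source trees have to carry the same version, a quick sanity check before running CMake can save a failed build. The sketch below assumes the folders keep the names GitHub archives unpack to (`opencv-<version>` and `opencv_contrib-<version>`); `versionSuffix` and `sameVersion` are hypothetical helpers written for this post, not part of OpenCV.

```cpp
#include <string>

// Returns the text after the last '-' in a folder name, e.g. "3.4.1" for
// "opencv-3.4.1". Returns an empty string when the name contains no '-'.
// Assumption: the folder still has the name the GitHub archive unpacked to.
std::string versionSuffix(const std::string& folderName) {
    const std::string::size_type dash = folderName.rfind('-');
    if (dash == std::string::npos) return "";
    return folderName.substr(dash + 1);
}

// True only when both folders carry the same, non-empty version suffix.
bool sameVersion(const std::string& opencvDir, const std::string& contribDir) {
    const std::string version = versionSuffix(opencvDir);
    return !version.empty() && version == versionSuffix(contribDir);
}
```

For example, `sameVersion("opencv-3.4.1", "opencv_contrib-3.4.12")` is false, which is exactly the kind of mismatch worth catching before Configure.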

2. Steps

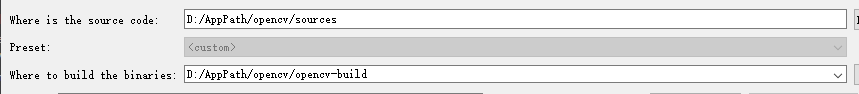

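1. Create a new folder named opencv-build, open CMake, and fill in the two paths first: the OpenCV source directory, and opencv-build as the build directory.

Then click Configure. A whole block of entries will show up in red.

That's fine. Click Configure again and they all turn white.

2. Now find OPENCV_EXTRA_MODULES_PATH and set it to the modules folder inside opencv_contrib. Also check OPENCV_ENABLE_NONFREE (otherwise SIFT is unavailable), as shown below:

3. Check BUILD_opencv_world. This builds everything into a single library, so a project only has to link one DLL instead of picking modules by feature.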

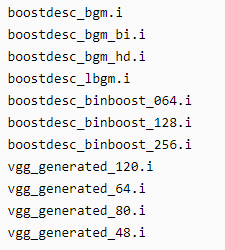
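Once the library is built, it is possible to confirm that the non-free option really took effect by looking at OpenCV's build report. The sketch below is a plain string check, assuming the report contains a line of the form `Non-free algorithms: YES`; `nonFreeEnabled` is a hypothetical helper, not an OpenCV function.

```cpp
#include <sstream>
#include <string>

// Returns true if the build-information text contains a line mentioning
// "Non-free algorithms" and that line reports YES.
// Assumption: the report uses that wording; other wordings yield false.
bool nonFreeEnabled(const std::string& buildInfo) {
    std::istringstream lines(buildInfo);
    std::string line;
    while (std::getline(lines, line)) {
        if (line.find("Non-free algorithms") != std::string::npos) {
            return line.find("YES") != std::string::npos;
        }
    }
    return false;  // line not present: treat as not enabled
}
```

In a project linked against OpenCV, the text to pass in would come from `cv::getBuildInformation()` (declared in `opencv2/core/utility.hpp`).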

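Configure again at this point. Unfortunately I ran into a problem: CMake's output pane showed a block of red messages saying that the files in the screenshot failed to download. The fix is to download them yourself and put them in the right folder.

A copy I found online:

Baidu Cloud link: https://pan.baidu.com/s/1BeYF8kqEZLAJYQj-MvxpmA

Extraction code: e1wc

After downloading, copy the files into opencv_contrib/modules/xfeatures2d/src and Configure again. CMake still reports the download failures, but you can ignore them. If nothing else is wrong, click Generate.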

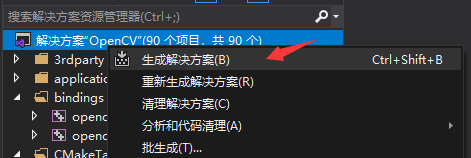

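4. Once CMake reports that generating is done, click Open Project to open the solution in VS. Set the configuration to Debug and the platform to x64, right-click the solution, and choose Build Solution. The build takes quite a while.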

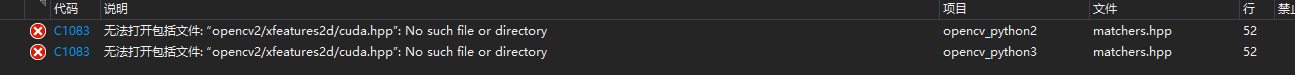

5. Another problem: the build stopped with an error.

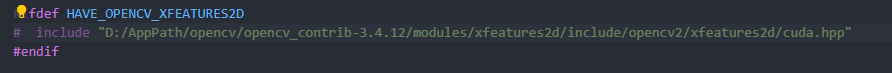

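Double-click the error to jump to the offending file, find the line # include "opencv2/xfeatures2d/cuda.hpp", and change it to an absolute path: # include "D:/AppPath/opencv/opencv_contrib-3.4.12/modules/xfeatures2d/include/opencv2/xfeatures2d/cuda.hpp" (adjust the path to wherever your opencv_contrib lives).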

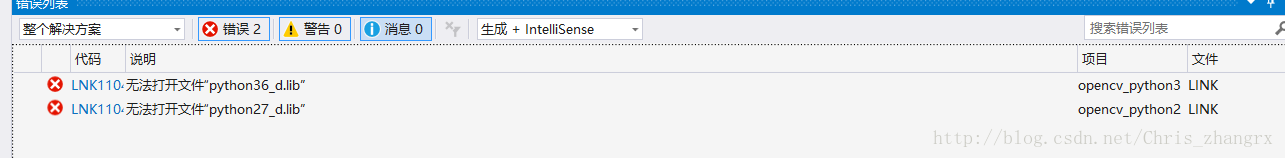

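6. Then yet another problem came up. I was worn out by now.

For a fix, see: https://blog.csdn.net/caomin1hao/article/details/80388136

You can also just ignore it. I did, because by this point I was on the verge of giving up.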

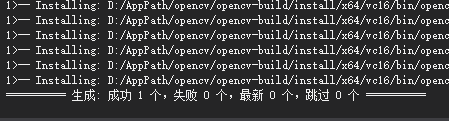

7. Under CMakeTargets, right-click INSTALL and choose to build only INSTALL (Project Only > Build Only INSTALL).

The build succeeded.

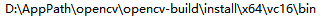

8. Update the environment variables:

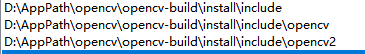
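If a program later fails to start because a DLL cannot be found, the first thing worth checking is whether the directory from the screenshot actually made it into Path. Here is a minimal sketch of that check; `pathContains` is a hypothetical helper, and `D:\opencv\bin` in the usage note is only a placeholder directory.

```cpp
#include <string>

// Returns true if `dir` appears as one complete entry of a PATH-style list
// whose entries are separated by `sep` (';' on Windows).
// Note: the comparison is exact, so differences in letter case or a
// trailing backslash count as a mismatch.
bool pathContains(const std::string& pathValue, const std::string& dir, char sep = ';') {
    std::string::size_type start = 0;
    while (start <= pathValue.size()) {
        std::string::size_type end = pathValue.find(sep, start);
        if (end == std::string::npos) end = pathValue.size();
        if (pathValue.compare(start, end - start, dir) == 0) return true;
        start = end + 1;
    }
    return false;
}
```

A typical call would read the variable with `std::getenv("PATH")` (from `<cstdlib>`), check the result for null, and pass it in together with the directory you added, for example `pathContains(value, "D:\\opencv\\bin")`. Remember that VS has to be restarted before it sees a changed environment variable.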

9. Create a new VS project. In the project properties, under Include Directories, add:

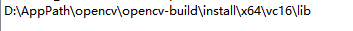

Under Library Directories, add:

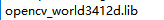

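Under Linker > Input, add the .lib file produced by the build (with BUILD_opencv_world checked, that is the single opencv_world library).

10. That's it. Find a test case and try it out.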

3. Test case

    #include <iostream>
    #include <vector>
    #include <opencv2/opencv.hpp>
    #include <opencv2/xfeatures2d.hpp>

    using namespace std;
    using namespace cv;
    using namespace cv::xfeatures2d;

    int main()
    {
        Mat firstImage = imread("26.jpg");
        Mat secondImage = imread("27.jpg");
        if (firstImage.empty() || secondImage.empty())
        {
            cout << "error: could not read 26.jpg or 27.jpg" << endl;
            return 1;
        }
        //resize(firstImage,firstImage,Size(800,1000),0,0,1);
        //resize(secondImage,secondImage,Size(800,1000),0,0,1);

        // Step 1: extract SIFT features
        // Define a SIFT detector
        Ptr<SIFT> sift = SIFT::create();

        // Detect keypoints in both images
        vector<KeyPoint> firstKeypoint, secondKeypoint;
        sift->detect(firstImage, firstKeypoint);
        sift->detect(secondImage, secondKeypoint);

        // Draw the keypoints on output images and show them
        Mat firstOutImage, secondOutImage;
        drawKeypoints(firstImage, firstKeypoint, firstOutImage, Scalar(255, 0, 0));
        drawKeypoints(secondImage, secondKeypoint, secondOutImage, Scalar(0, 255, 0));
        imshow("first", firstOutImage);
        imshow("second", secondOutImage);

        // Compute descriptors and match them by brute force
        Mat firstDescriptor, secondDescriptor;
        sift->compute(firstImage, firstKeypoint, firstDescriptor);
        sift->compute(secondImage, secondKeypoint, secondDescriptor);
        Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce");
        Mat masks;
        vector<DMatch> matches;
        matcher->match(firstDescriptor, secondDescriptor, matches, masks);

        // Step 2: reject outliers with RANSAC
        // findFundamentalMat with FM_RANSAC needs at least 8 point pairs
        const int matchCount = static_cast<int>(matches.size());
        if (matchCount < 8)
        {
            cout << "error: too few matches for RANSAC" << endl;
            return 1;
        }

        Mat matcheImage;
        // Copy the matched keypoint coordinates from the vectors into Mats
        Mat firstKeypointMat(matchCount, 2, CV_32F), secondKeypointMat(matchCount, 2, CV_32F);
        for (int i = 0; i < matchCount; i++)
        {
            firstKeypointMat.at<float>(i, 0) = firstKeypoint[matches[i].queryIdx].pt.x;
            firstKeypointMat.at<float>(i, 1) = firstKeypoint[matches[i].queryIdx].pt.y;
            secondKeypointMat.at<float>(i, 0) = secondKeypoint[matches[i].trainIdx].pt.x;
            secondKeypointMat.at<float>(i, 1) = secondKeypoint[matches[i].trainIdx].pt.y;
        }

        // Estimate the fundamental matrix; ransacStatus[i] == 0 marks an outlier
        vector<uchar> ransacStatus;
        Mat fundamentalMat = findFundamentalMat(firstKeypointMat, secondKeypointMat, ransacStatus, FM_RANSAC);
        cout << fundamentalMat << endl;

        // Count the outliers
        int outlinerCount = 0;
        for (int i = 0; i < matchCount; i++)
        {
            if (ransacStatus[i] == 0)
            {
                outlinerCount++;
            }
        }

        // Collect the inliers
        vector<Point2f> firstInliner;
        vector<Point2f> secondInliner;
        vector<DMatch> inlinerMatches;
        int inlinerCount = matchCount - outlinerCount;
        firstInliner.resize(inlinerCount);
        secondInliner.resize(inlinerCount);
        inlinerMatches.resize(inlinerCount);
        int index = 0;
        for (int i = 0; i < matchCount; i++)
        {
            if (ransacStatus[i] != 0)
            {
                firstInliner[index].x = firstKeypointMat.at<float>(i, 0);
                firstInliner[index].y = firstKeypointMat.at<float>(i, 1);
                secondInliner[index].x = secondKeypointMat.at<float>(i, 0);
                secondInliner[index].y = secondKeypointMat.at<float>(i, 1);
                // Inlier k in the first image pairs with inlier k in the second
                inlinerMatches[index].queryIdx = index;
                inlinerMatches[index].trainIdx = index;
                index++;
            }
        }

        // Convert the inlier points back to keypoints for drawing
        vector<KeyPoint> inlinerFirstKeypoint(inlinerCount);
        vector<KeyPoint> inlinerSecondKeypoint(inlinerCount);
        KeyPoint::convert(firstInliner, inlinerFirstKeypoint);
        KeyPoint::convert(secondInliner, inlinerSecondKeypoint);

        // Optionally keep only the first 50 matches
        //matches.erase(matches.begin()+50,matches.end());
        //inlinerMatches.erase(inlinerMatches.begin()+50,inlinerMatches.end());

        // Show the matches after and before RANSAC filtering
        drawMatches(firstImage, inlinerFirstKeypoint, secondImage, inlinerSecondKeypoint, inlinerMatches, matcheImage);
        imshow("ransacMatches", matcheImage);
        drawMatches(firstImage, firstKeypoint, secondImage, secondKeypoint, matches, matcheImage);
        imshow("matches", matcheImage);

        waitKey(0);
        return 0;
    }
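The test case spends three loops counting and copying the RANSAC inliers. The same filtering can be sketched as a single pass over the status flags. This is only an illustration in plain C++ with no OpenCV dependency; `keepInliers` is a hypothetical helper, not something OpenCV provides.

```cpp
#include <cstddef>
#include <vector>

// Keeps items[i] wherever status[i] is non-zero (the RANSAC "inlier" flag).
// Elements beyond the shorter of the two vectors are ignored.
template <typename T>
std::vector<T> keepInliers(const std::vector<T>& items,
                           const std::vector<unsigned char>& status) {
    std::vector<T> kept;
    const std::size_t n = items.size() < status.size() ? items.size() : status.size();
    for (std::size_t i = 0; i < n; ++i) {
        if (status[i] != 0) kept.push_back(items[i]);
    }
    return kept;
}
```

Applied to the test case, `keepInliers(matches, ransacStatus)` would return the surviving DMatch entries with their original queryIdx and trainIdx, so they could be passed to drawMatches together with the original keypoint vectors, without rebuilding separate inlier keypoint lists.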

4. Reference blogs

1. https://blog.csdn.net/chentravelling/article/details/59540828

2. https://blog.csdn.net/weixin_41695564/article/details/79925379
