Fixing RuntimeError: CUDA out of memory when reserved memory is >> allocated memory

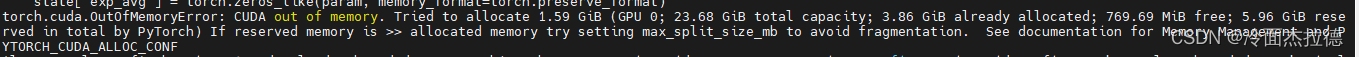

Error message: torch.cuda.OutOfMemoryError: CUDA out of memory. Tried to allocate 1.59 GiB (GPU 0; 23.68 GiB total capacity; 3.86 GiB already allocated; 769.69 MiB free; 5.96 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

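Solution:

Note that this fix applies when your error shows reserved memory much larger than allocated memory (reserved memory is >> allocated memory), and even then it is not guaranteed to help. If nothing else has worked, it is worth trying as a last resort (the value 128 can be adjusted).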

The code is as follows:

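import os

# Set this before PyTorch makes its first CUDA allocation
# (placing it before "import torch" is the safest option).
# 128 is the maximum block size, in MiB, that the allocator will split; adjust as needed.
os.environ["PYTORCH_CUDA_ALLOC_CONF"] = "max_split_size_mb:128"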
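
To judge whether your own error matches the "reserved memory is >> allocated memory" case, it can help to pull the numbers out of the message and compare them. The following is a minimal sketch, not part of PyTorch: the helper names `parse_oom_message` and `cached_but_unused_mib` are hypothetical, and the regular expressions assume the exact wording of the message quoted above (other PyTorch versions may phrase it differently).

```python
import re

# Unit multipliers, expressed in MiB, for the sizes printed in the message.
_UNIT_TO_MIB = {"MiB": 1.0, "GiB": 1024.0}


def parse_oom_message(message):
    """Extract the sizes (in MiB) reported in a CUDA OOM message.

    Hypothetical helper: assumes the wording quoted in this post.
    Fields that cannot be found are simply left out of the result.
    """
    patterns = {
        "requested": r"Tried to allocate ([\d.]+) (MiB|GiB)",
        "allocated": r"([\d.]+) (MiB|GiB) already allocated",
        "free": r"([\d.]+) (MiB|GiB) free",
        "reserved": r"([\d.]+) (MiB|GiB) reserved",
    }
    sizes = {}
    for name, pattern in patterns.items():
        match = re.search(pattern, message)
        if match:
            sizes[name] = float(match.group(1)) * _UNIT_TO_MIB[match.group(2)]
    return sizes


def cached_but_unused_mib(sizes):
    """Memory PyTorch has reserved but is not currently using, in MiB."""
    return sizes["reserved"] - sizes["allocated"]


message = (
    "CUDA out of memory. Tried to allocate 1.59 GiB (GPU 0; 23.68 GiB total "
    "capacity; 3.86 GiB already allocated; 769.69 MiB free; 5.96 GiB reserved "
    "in total by PyTorch)"
)
sizes = parse_oom_message(message)
gap = cached_but_unused_mib(sizes)
print(f"reserved - allocated = {gap:.0f} MiB, requested = {sizes['requested']:.0f} MiB")
```

For the message in this post, the gap between reserved and allocated memory is larger than the requested allocation. That is consistent with fragmentation (enough cached memory in total, but possibly no single block large enough), which is the situation where max_split_size_mb may help. If the gap is small, the GPU is more likely simply full, and reducing batch size or model size is the more promising direction.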
