Bilibili 刘二大人 course notes, lecture 03: gradient descent

Course URL

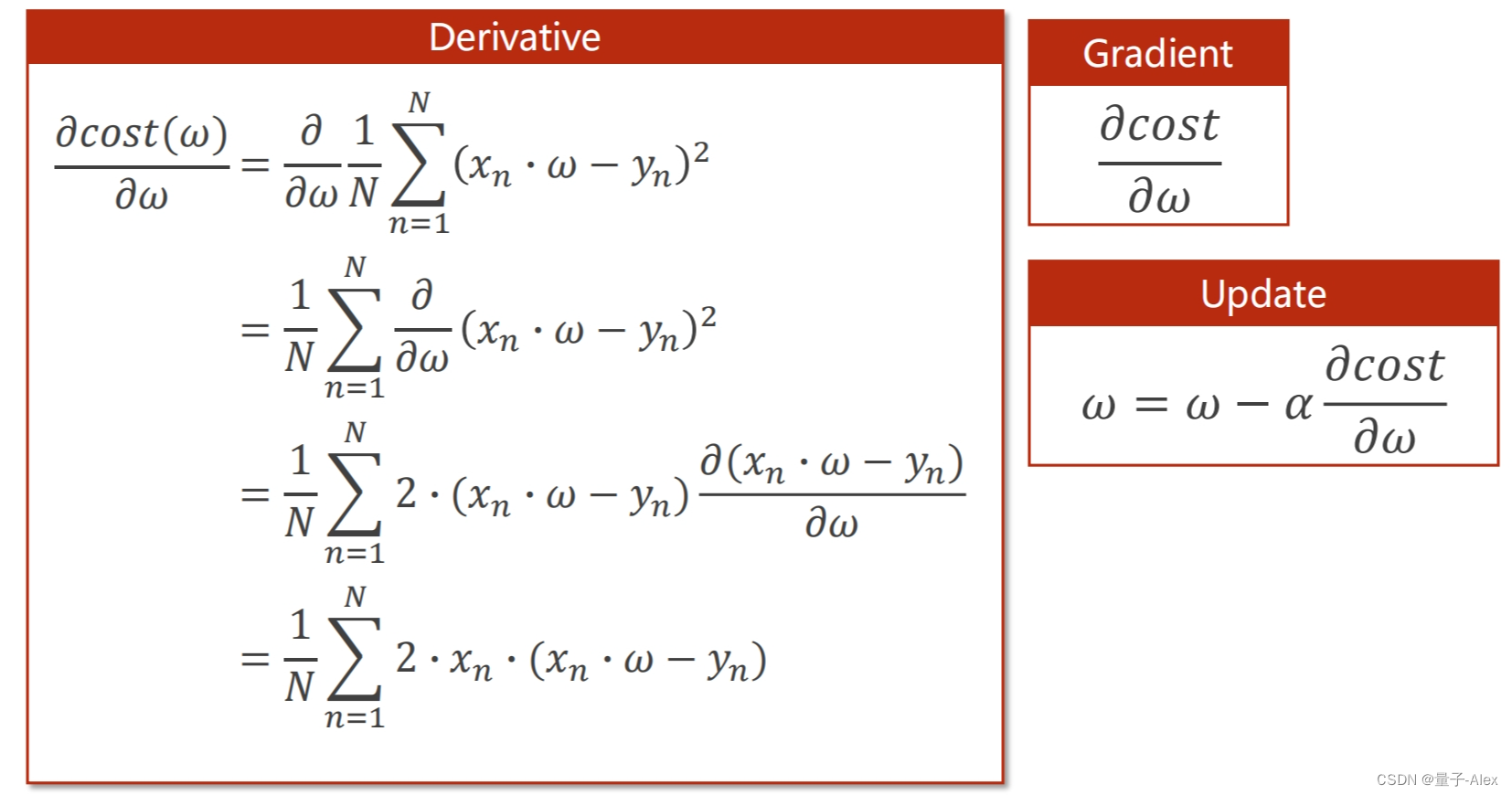

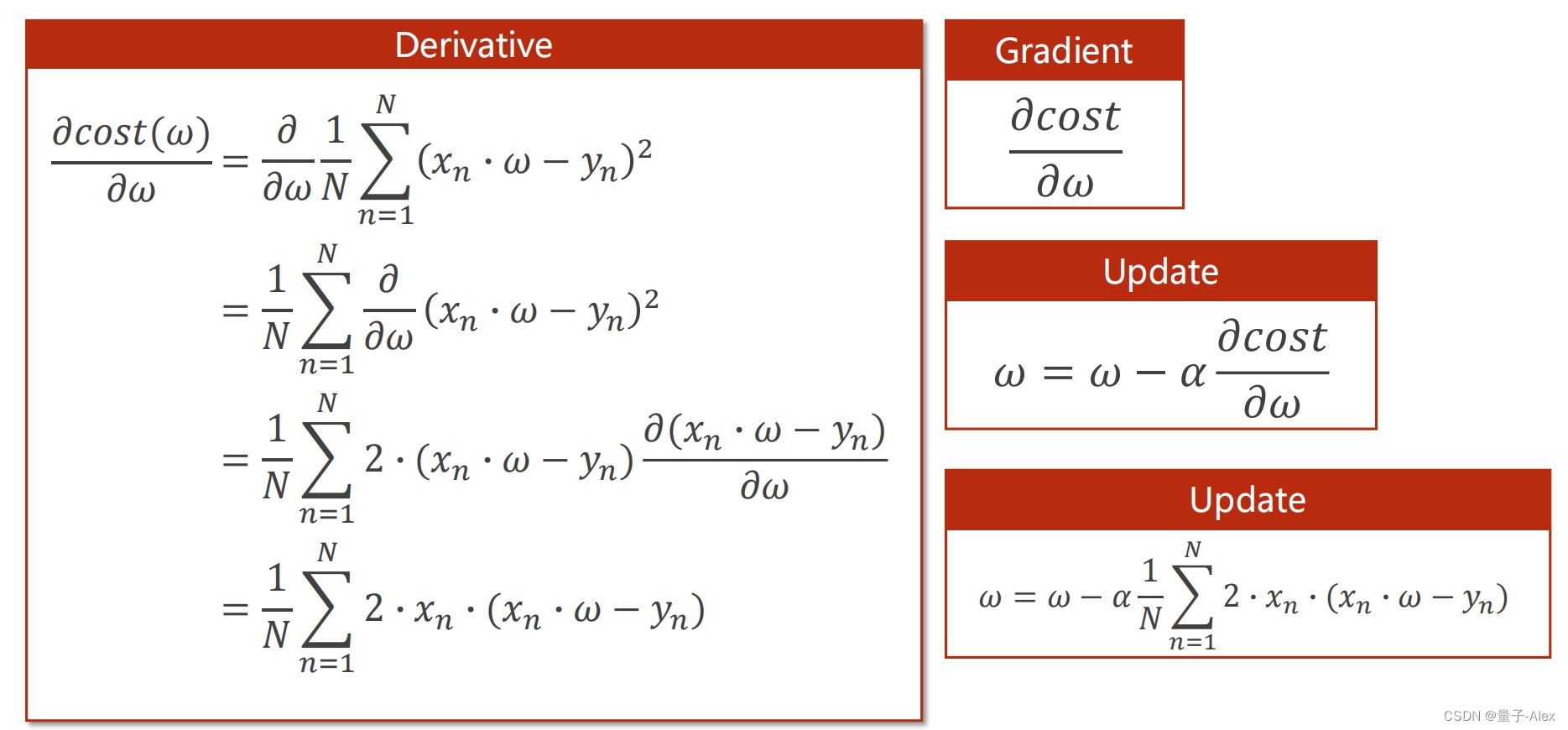

Selected slides:

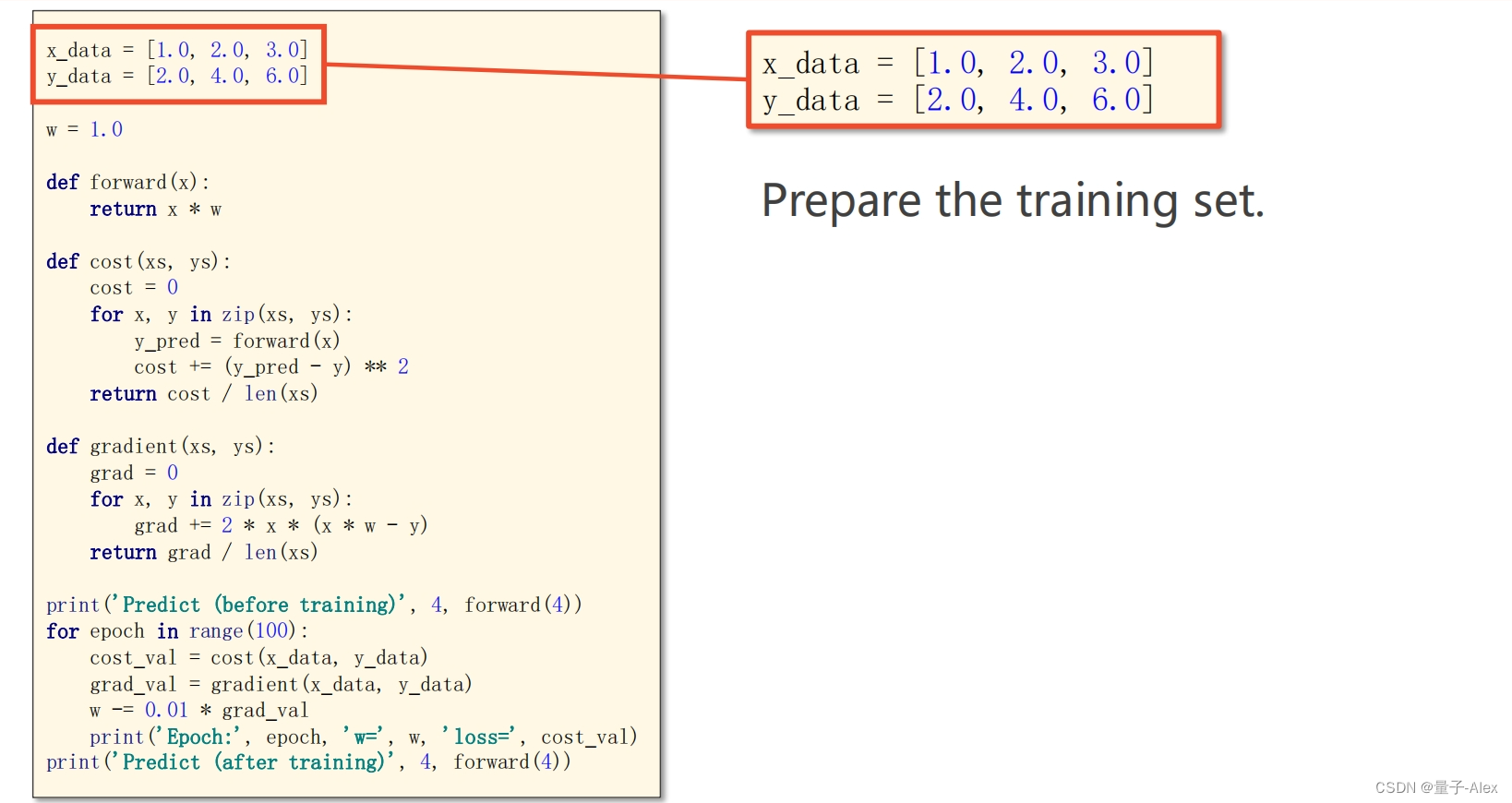

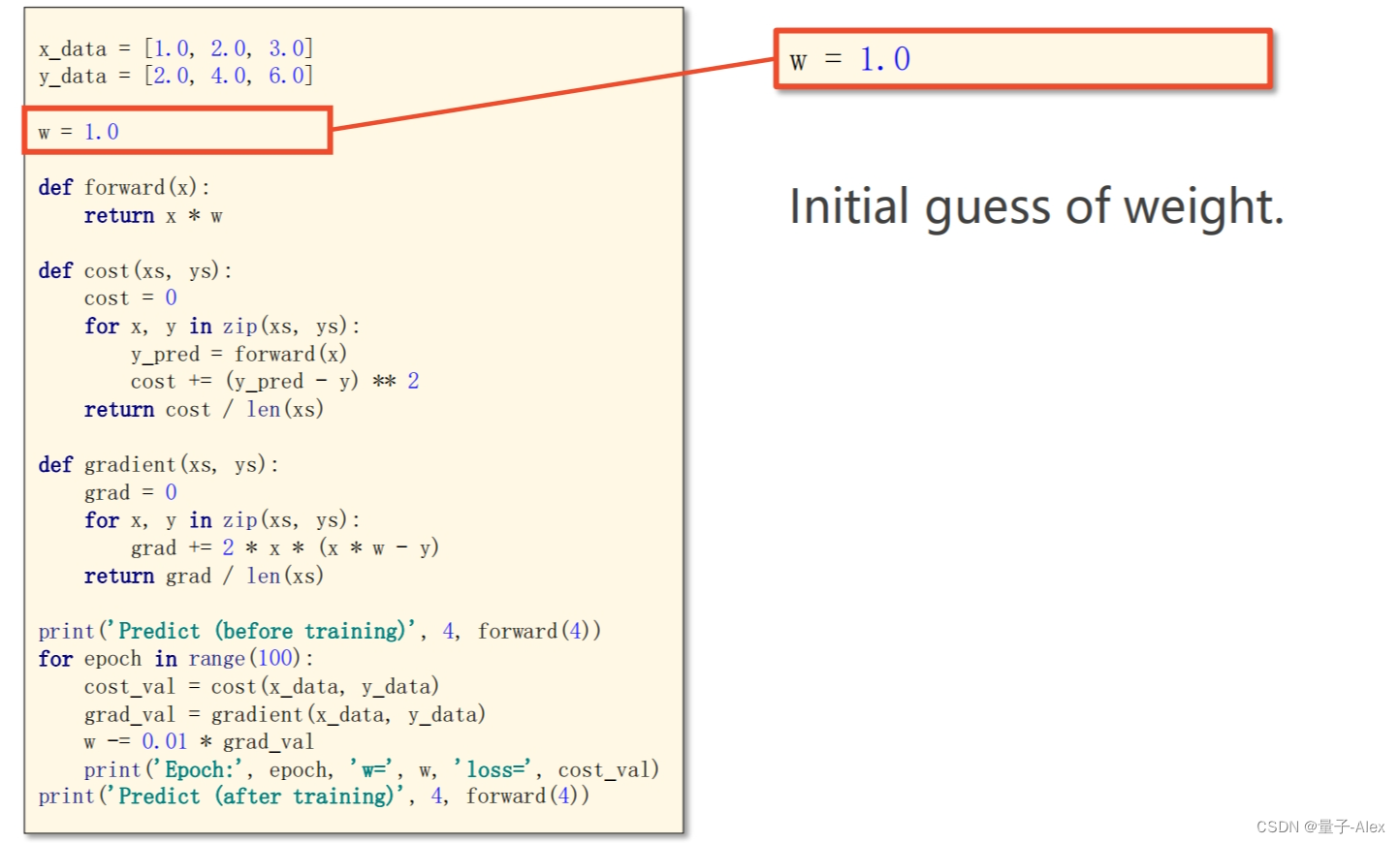

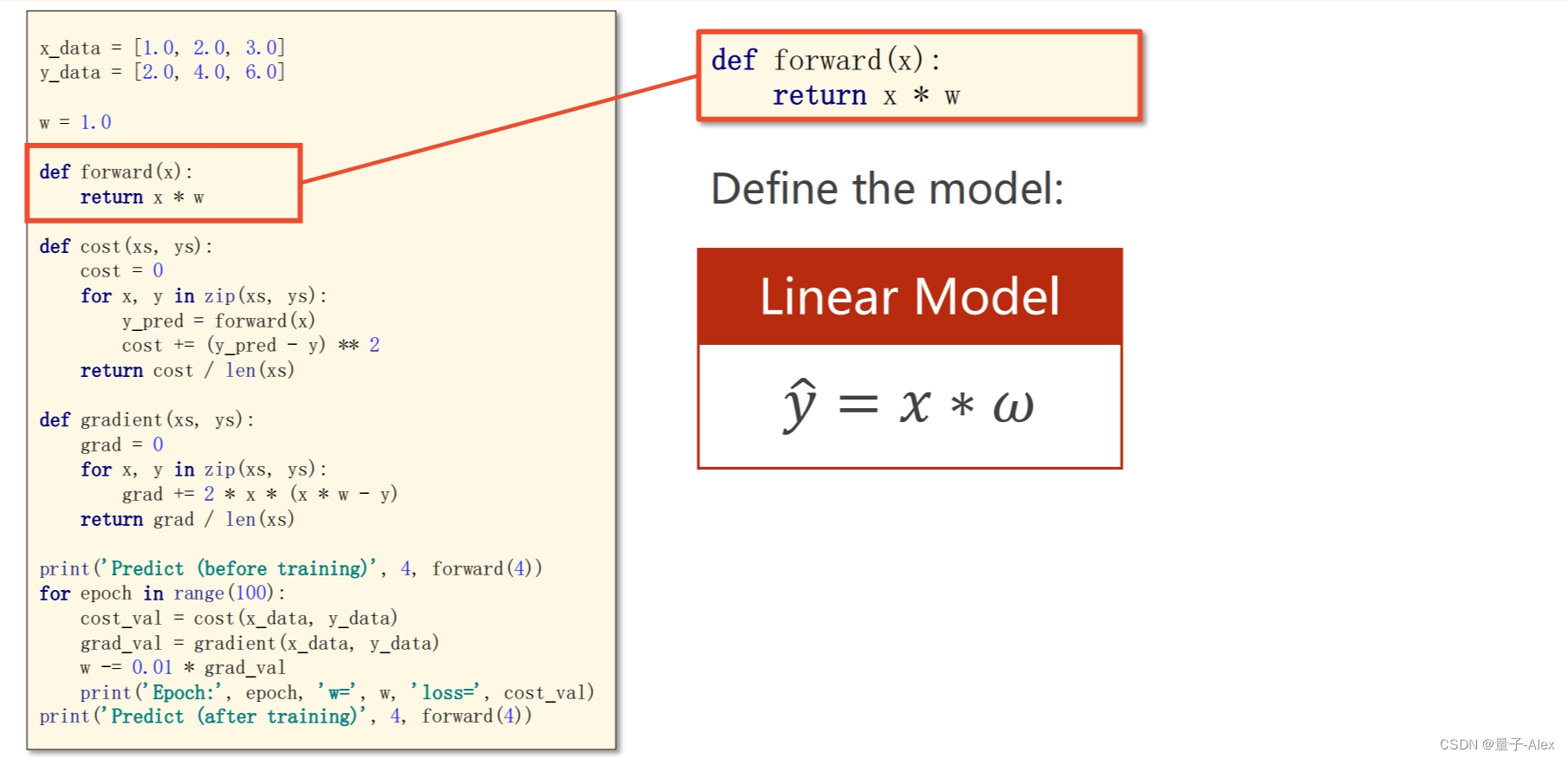

The gradient descent code is as follows:

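    import numpy as np
    import matplotlib.pyplot as plt

    # Training data generated by y = 2x
    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]

    # Initial guess for the weight
    w = 1.0

    def forward(x):
        """Linear model: predict y as x * w."""
        return x * w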

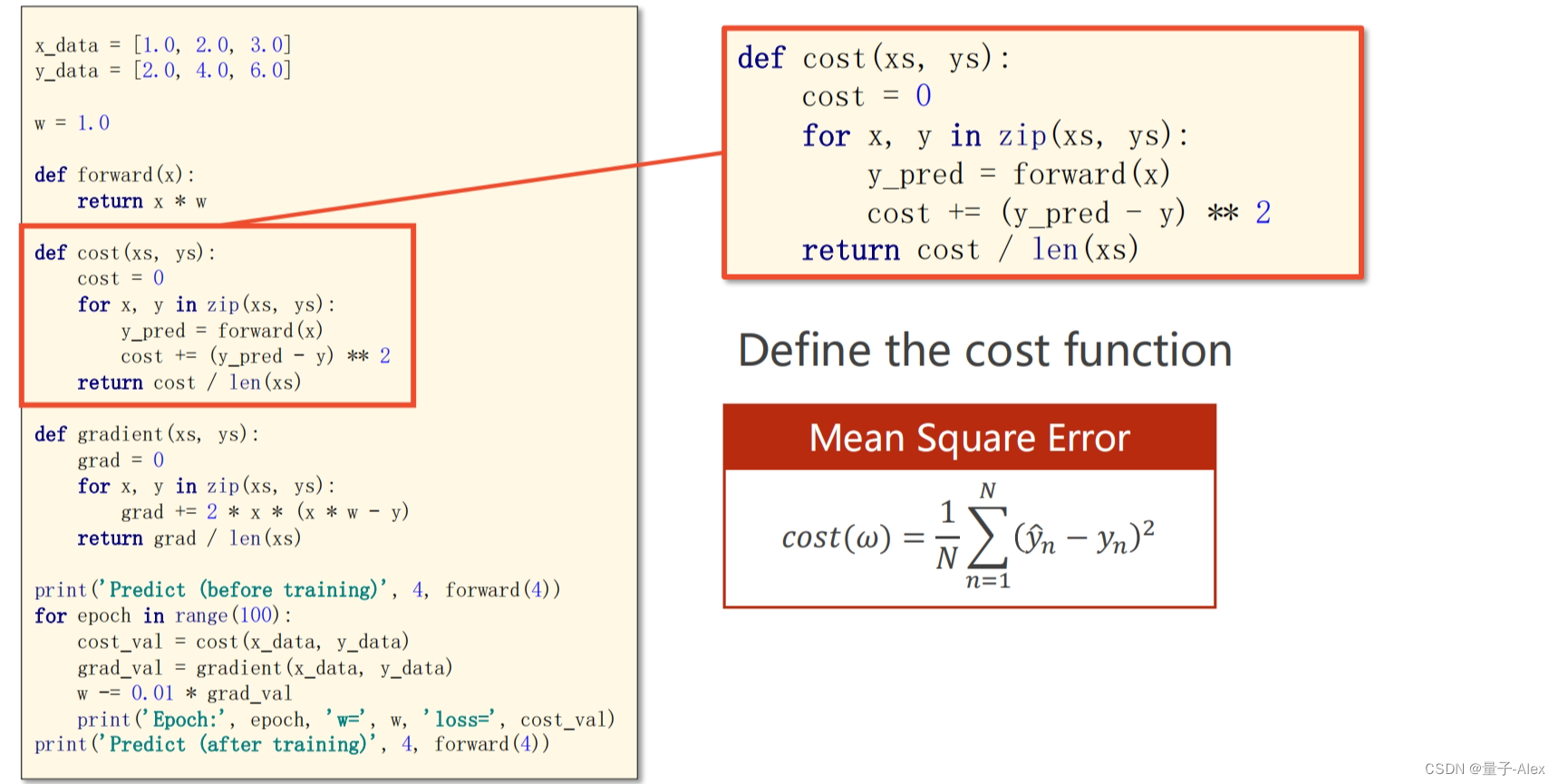

    def cost(xs, ys):
        """Mean squared error over the whole training set."""
        total = 0.0
        for x, y in zip(xs, ys):
            y_pred = forward(x)
            total += (y_pred - y) ** 2
        return total / len(xs)

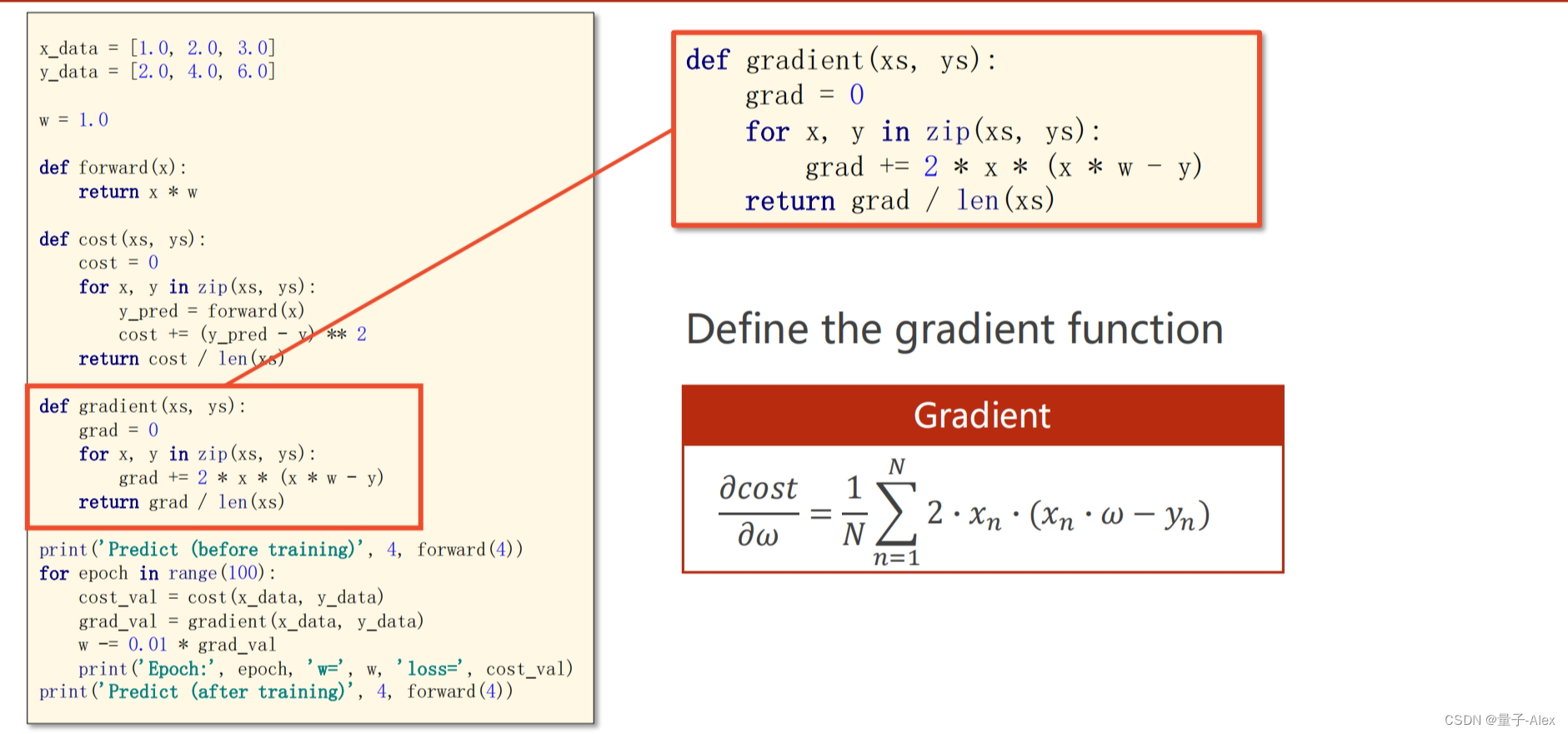

    def gradient(xs, ys):
        """Derivative of the mean squared error with respect to w."""
        grad = 0.0
        for x, y in zip(xs, ys):
            grad += 2 * (x * w - y) * x
        return grad / len(xs)

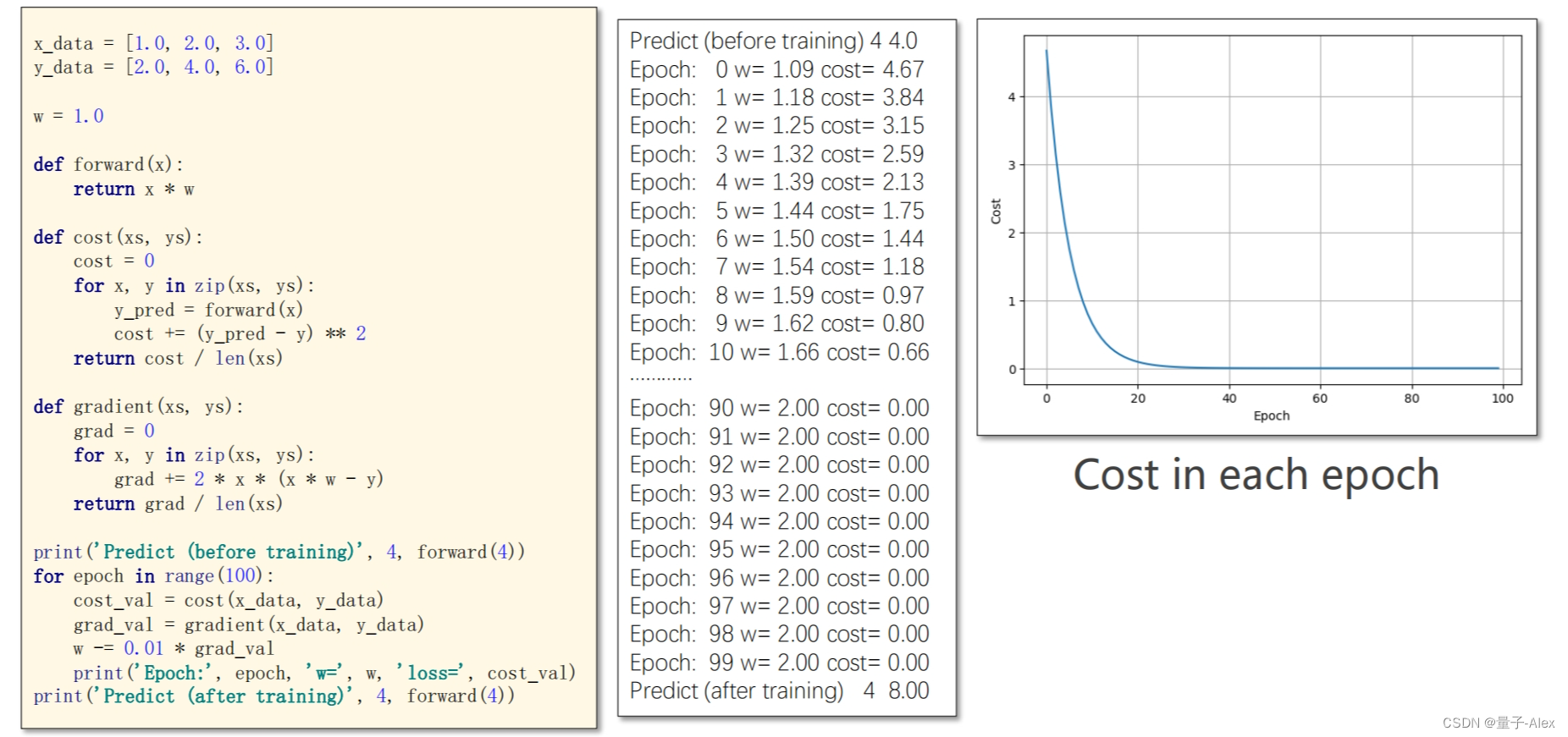
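For reference, the `gradient` function above can be read as the derivative of the mean squared error used in `cost`. The derivation below is a short sketch; the symbols $N$ (number of samples) and $\alpha$ (learning rate, 0.01 in the training loop) are notation introduced here and do not appear in the code.

$$
\begin{aligned}
\mathrm{cost}(w) &= \frac{1}{N}\sum_{n=1}^{N}\left(x_n w - y_n\right)^2 \\
\frac{\partial\,\mathrm{cost}}{\partial w} &= \frac{1}{N}\sum_{n=1}^{N} 2\,x_n\left(x_n w - y_n\right) \\
w &\leftarrow w - \alpha\,\frac{\partial\,\mathrm{cost}}{\partial w}
\end{aligned}
$$

The second line matches the loop body `grad += 2 * (x * w - y) * x` followed by the division by `len(xs)`.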

    print('Predicted(before training)', 4, forward(4))

    for epoch in range(100):
        cost_val = cost(x_data, y_data)
        grad_val = gradient(x_data, y_data)
        # Update step with learning rate 0.01
        w = w - 0.01 * grad_val
        print('Epoch:', epoch, 'w:', w, 'Loss:', cost_val)

    print('Predicted(after training)', 4, forward(4))

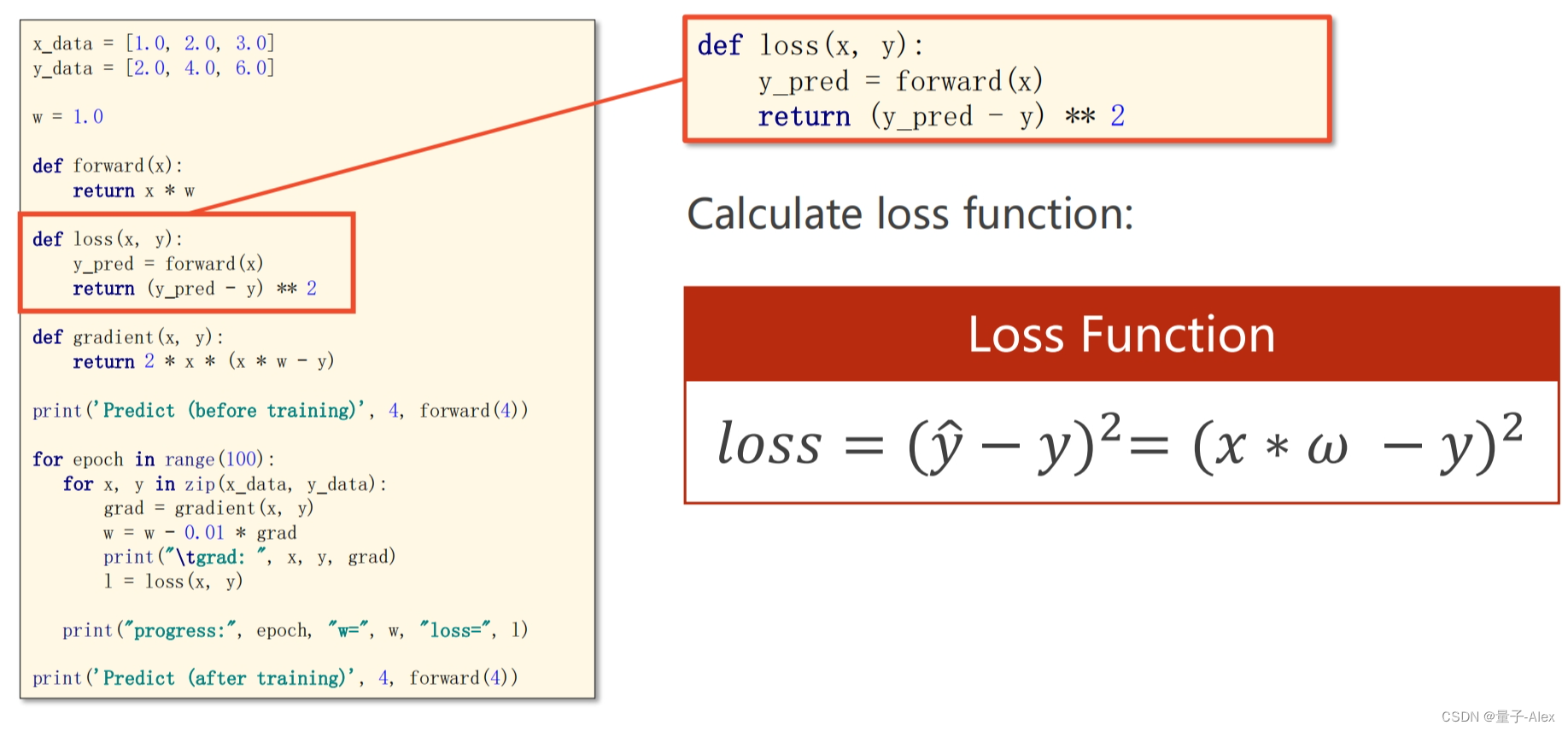

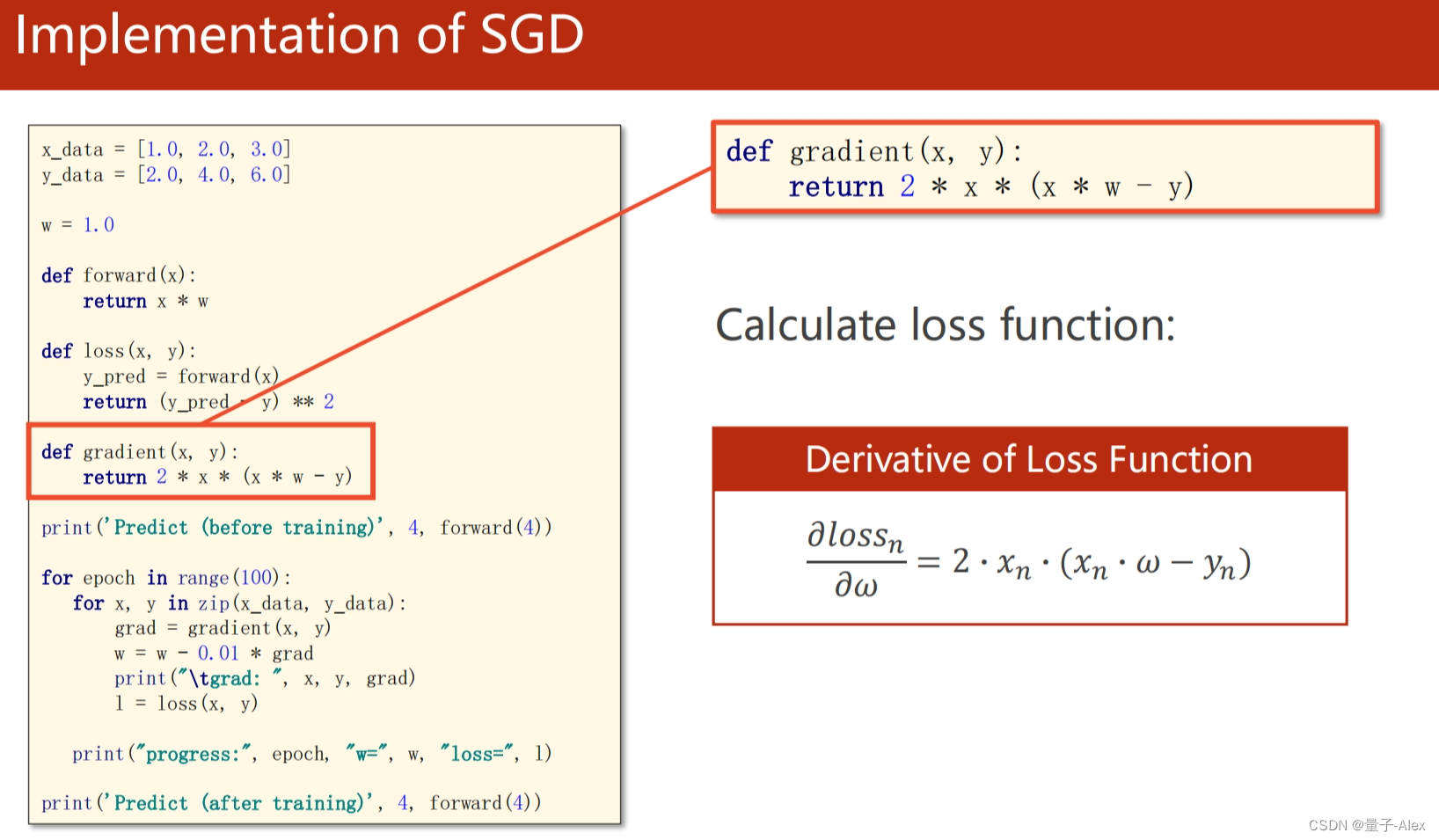
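The training loop above relies on a global `w`, which makes it awkward to rerun with a different learning rate or starting point. Below is a minimal sketch of the same batch update wrapped in a function. The name `train_batch_gd` and its parameters `w0`, `lr`, and `epochs` are hypothetical and not part of the course code; the defaults simply mirror the values used above.

```python
def train_batch_gd(xs, ys, w0=1.0, lr=0.01, epochs=100):
    """Fit y = x * w by full-batch gradient descent.

    Hypothetical helper (not from the course code).
    Returns the final weight and the cost recorded before each update.
    """
    w = w0
    n = len(xs)
    history = []
    for _ in range(epochs):
        # Mean squared error over the whole training set at the current w.
        cost = sum((x * w - y) ** 2 for x, y in zip(xs, ys)) / n
        # Analytic gradient of that cost with respect to w.
        grad = sum(2 * x * (x * w - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad
        history.append(cost)
    return w, history


w_final, costs = train_batch_gd([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
print("w:", w_final, "first cost:", costs[0], "last cost:", costs[-1])
```

Returning the cost history rather than printing it inside the loop makes it straightforward to plot the curve afterwards, for example with `plt.plot(costs)`.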

The stochastic gradient descent code is as follows:

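    import numpy as np
    import matplotlib.pyplot as plt

    # Training data generated by y = 2x
    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]

    # Initial guess for the weight
    w = 1.0

    def forward(x):
        """Linear model: predict y as x * w."""
        return x * w

    def loss(x, y):
        """Squared error for a single sample."""
        y_pred = forward(x)
        return (y_pred - y) ** 2

    def gradient(x, y):
        """Derivative of the single-sample loss with respect to w."""
        return 2 * (x * w - y) * x

    print('Predicted(before training)', 4, forward(4))

    for epoch in range(100):
        for x, y in zip(x_data, y_data):
            # Update w after every sample instead of after the whole batch
            grad = gradient(x, y)
            w = w - 0.01 * grad
            print("\tgrad:", x, y, grad)
            loss_val = loss(x, y)
        print('Epoch:', epoch, 'w:', w, 'Loss:', loss_val)

    print('Predicted(after training)', 4, forward(4))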

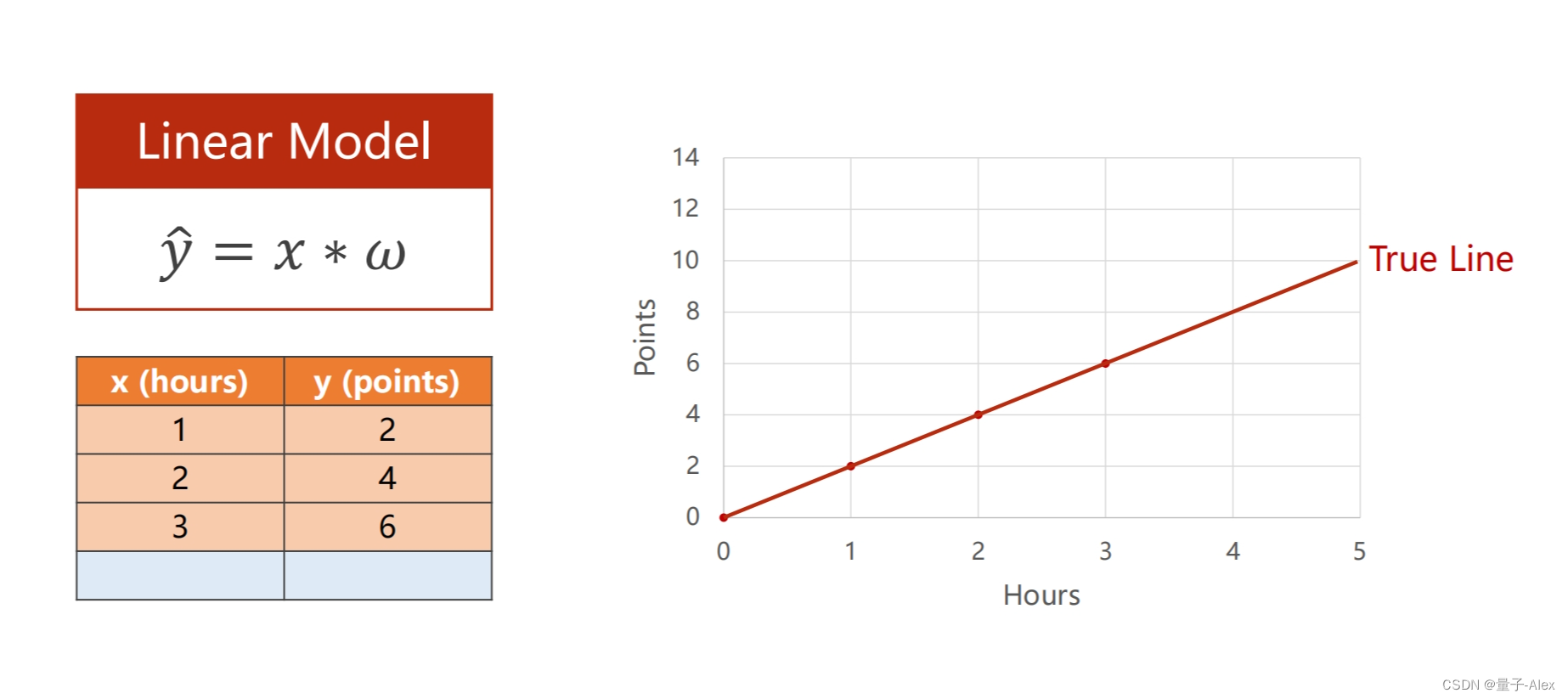

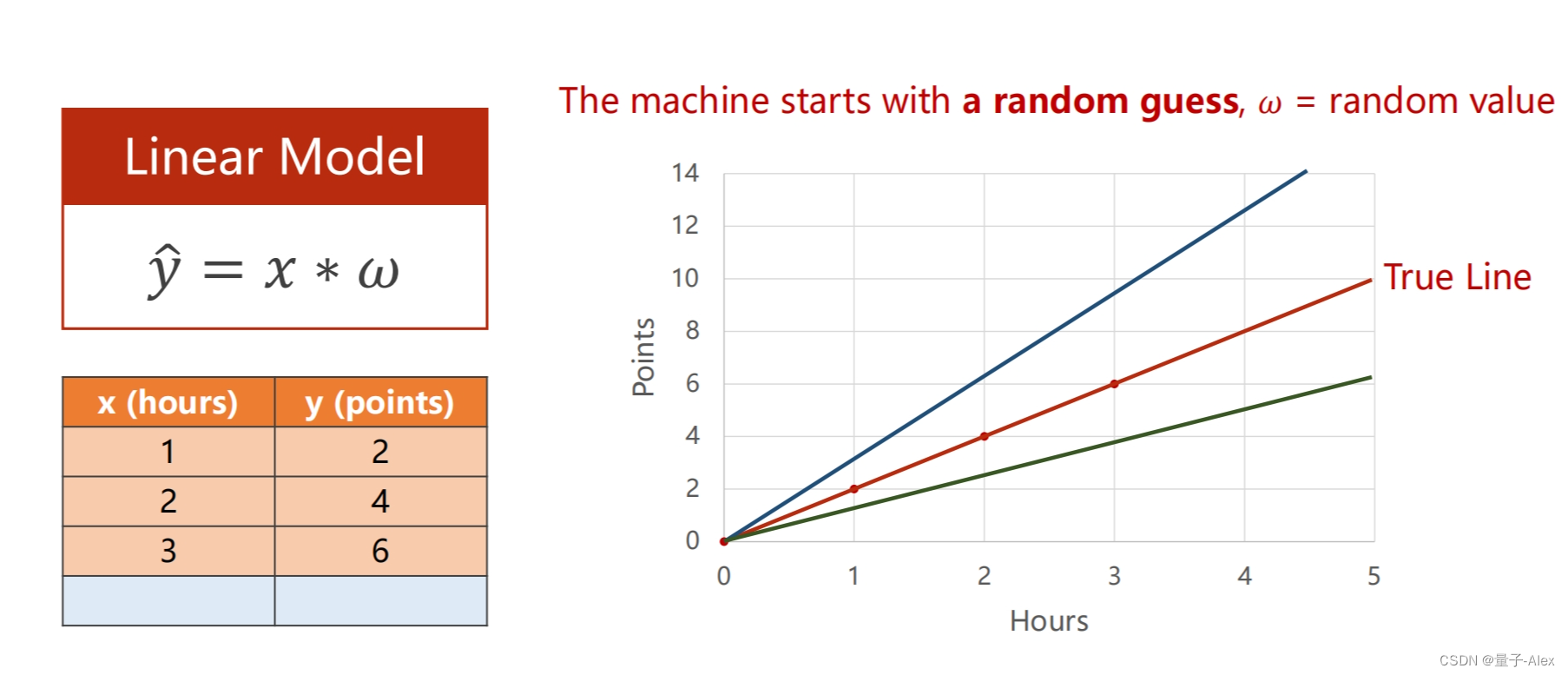

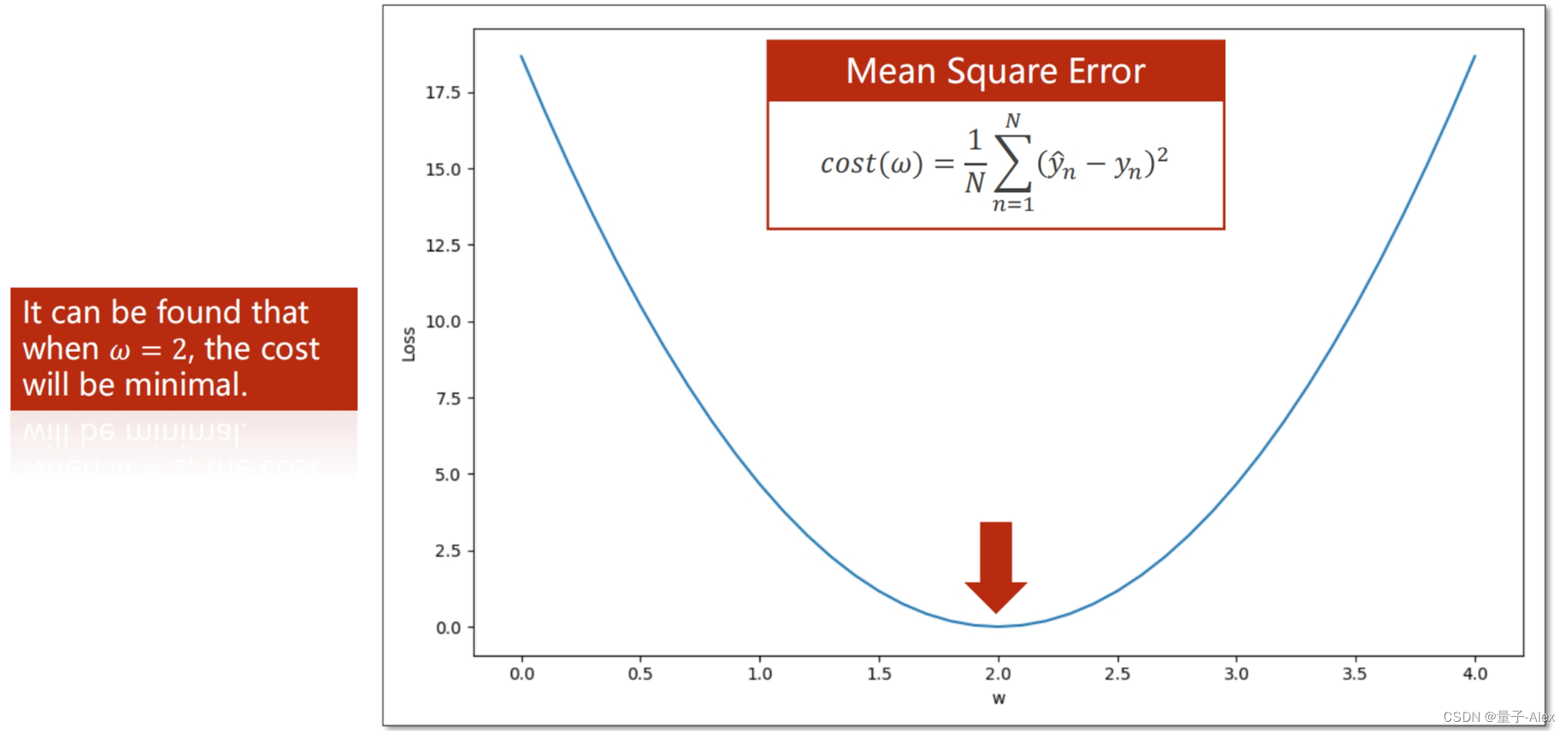

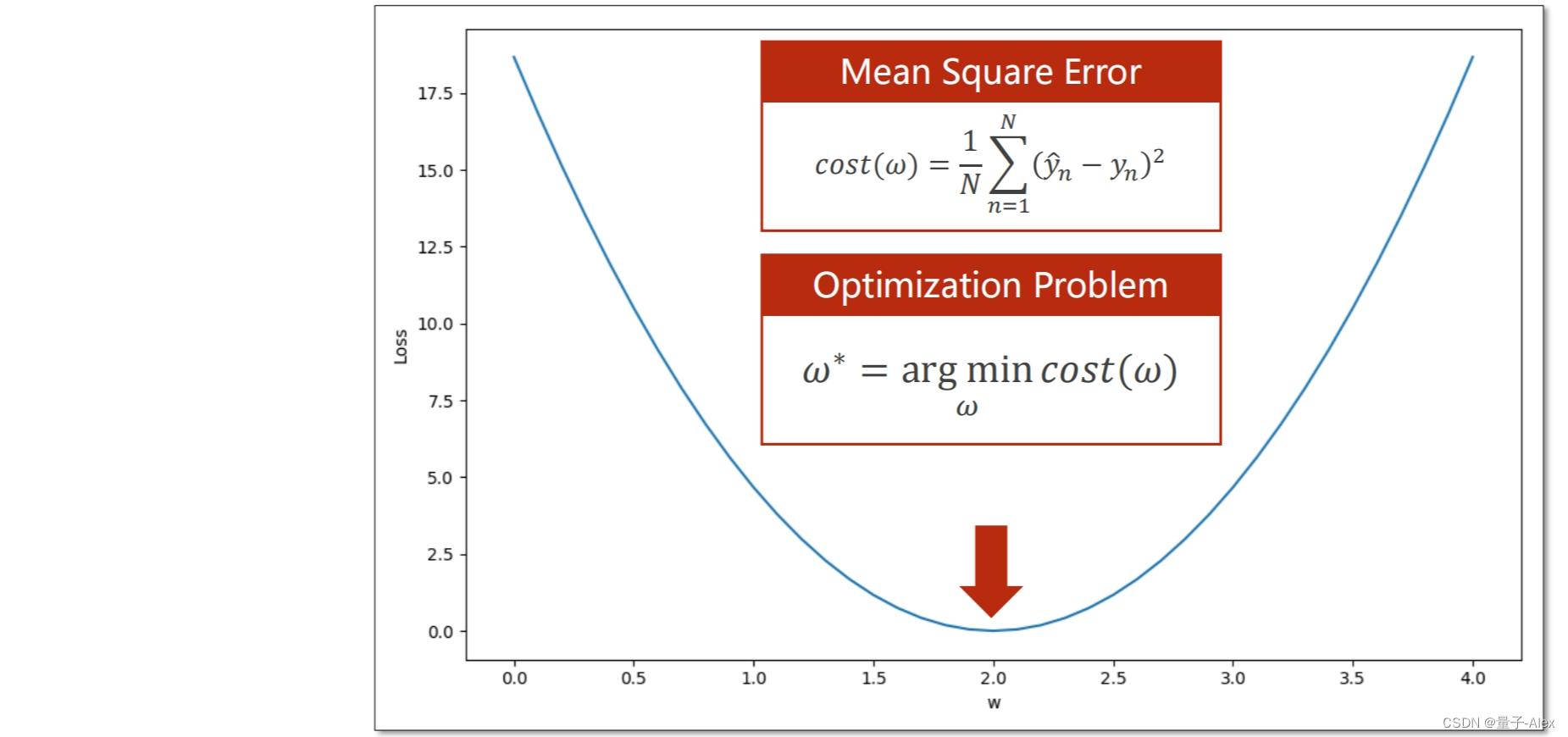

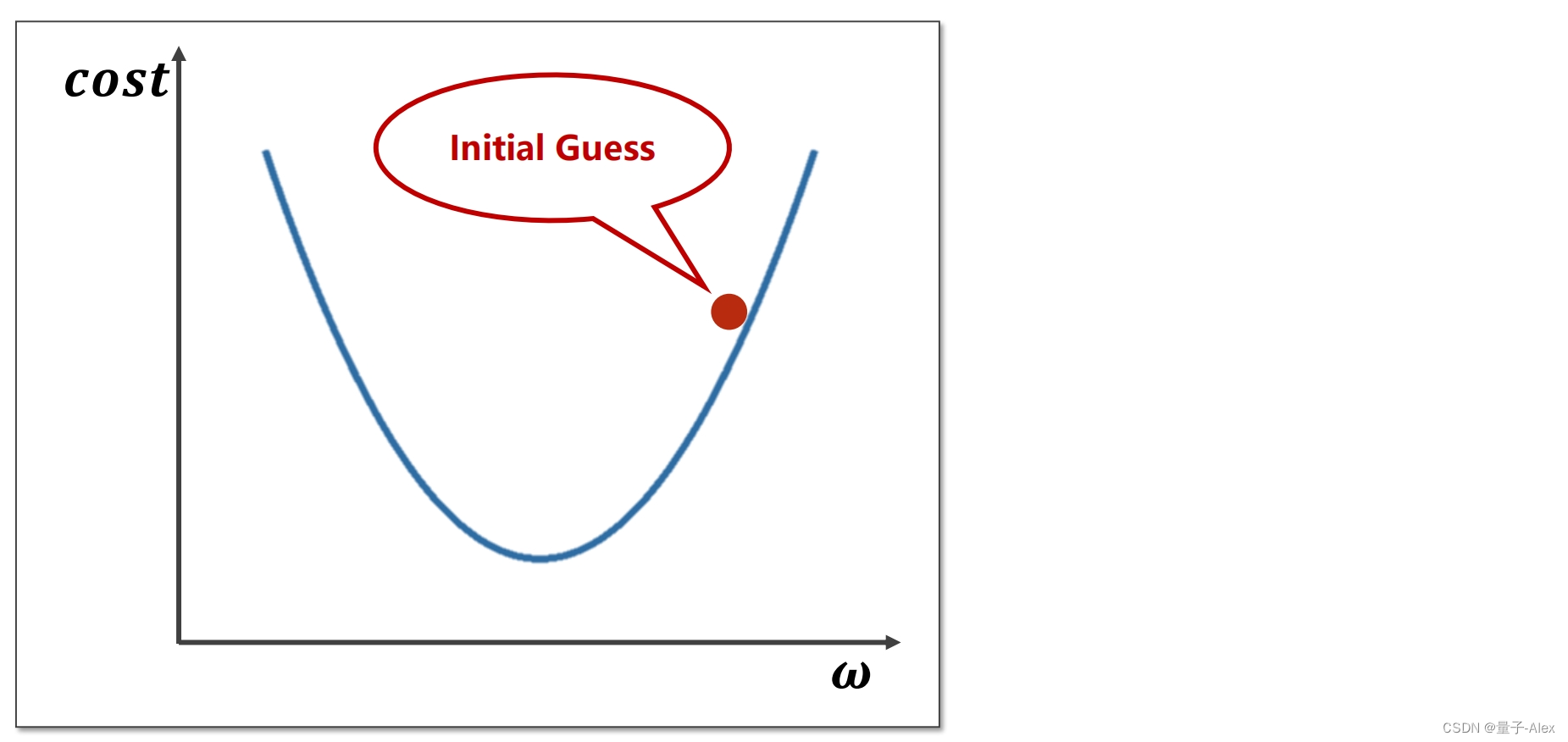

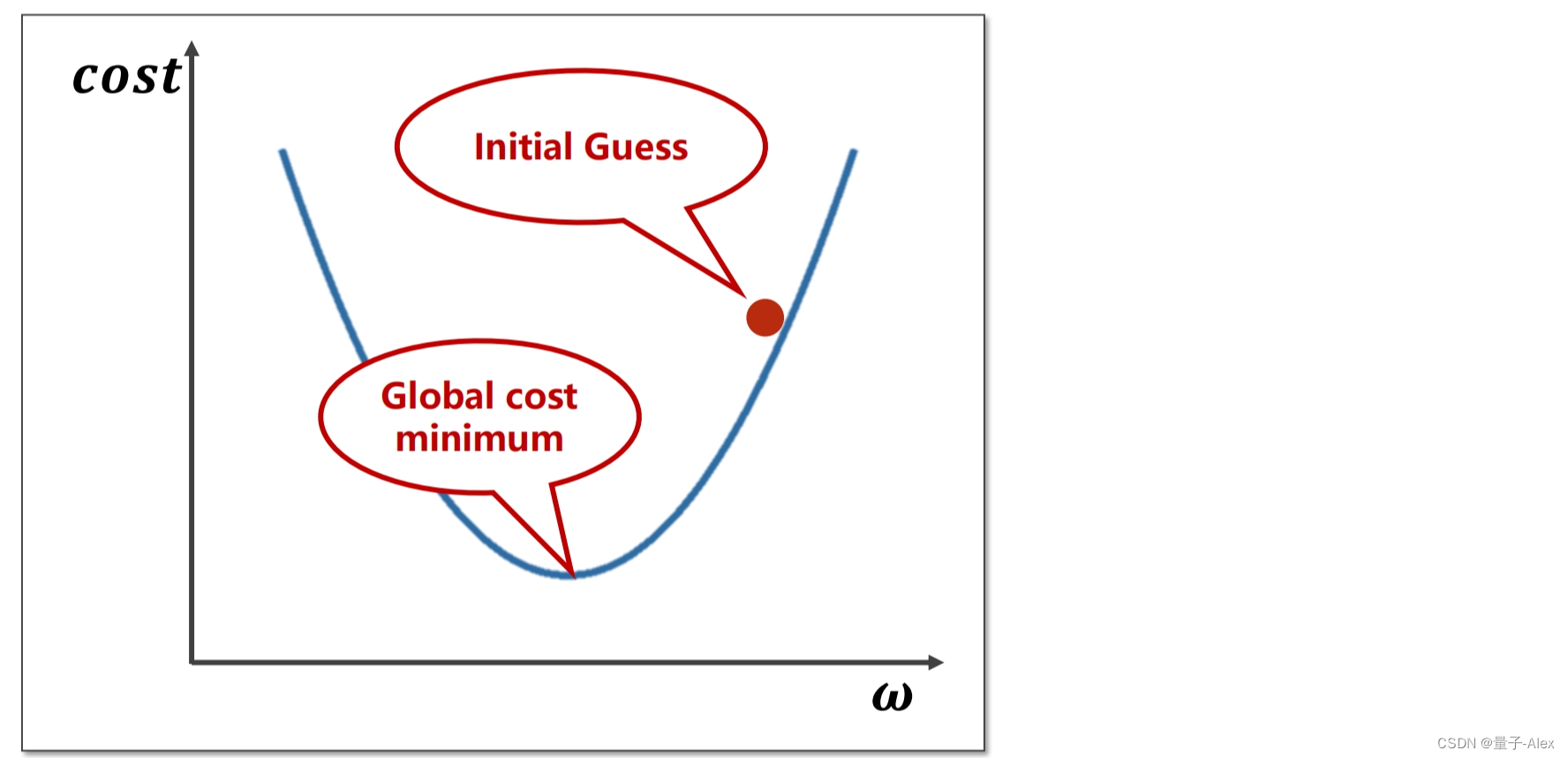

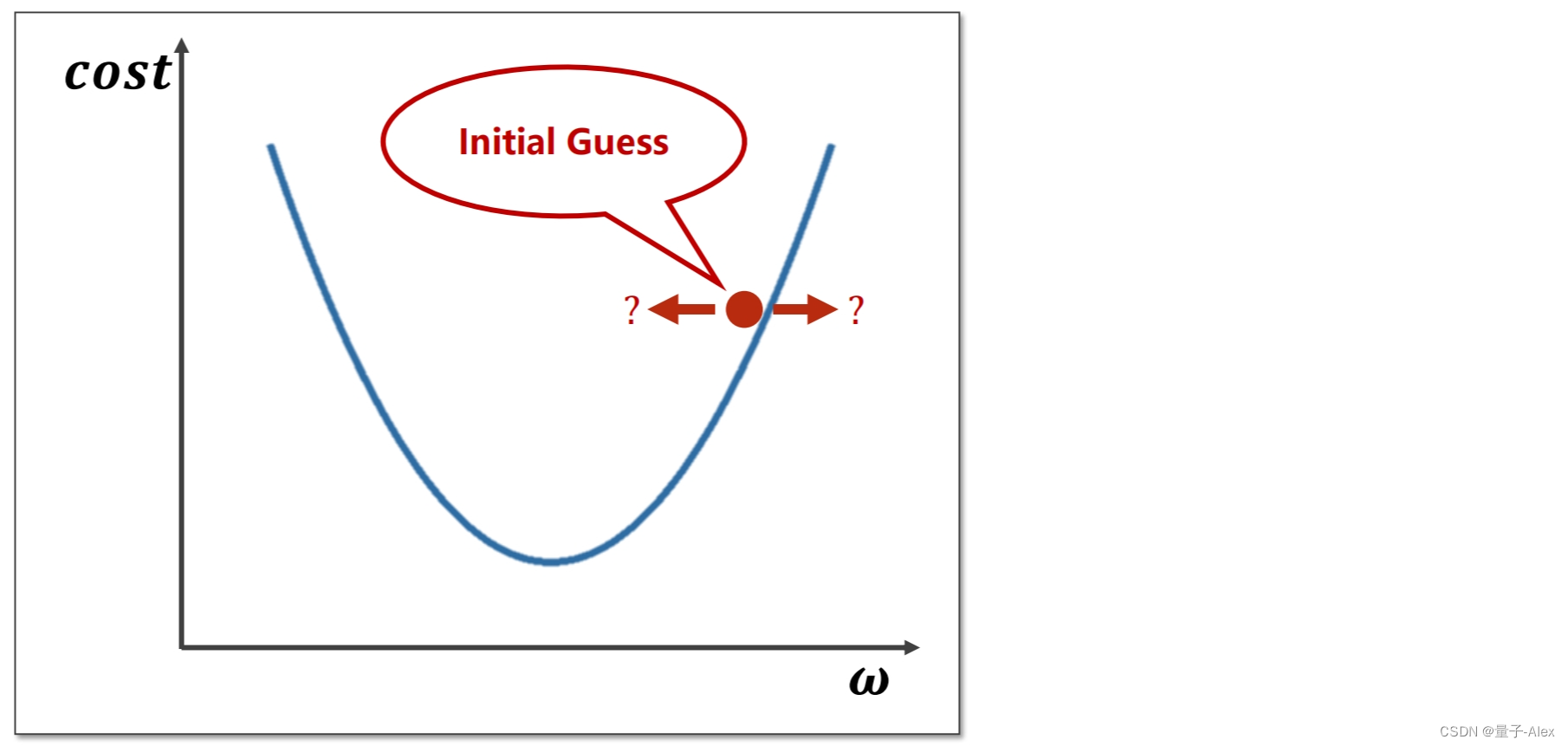

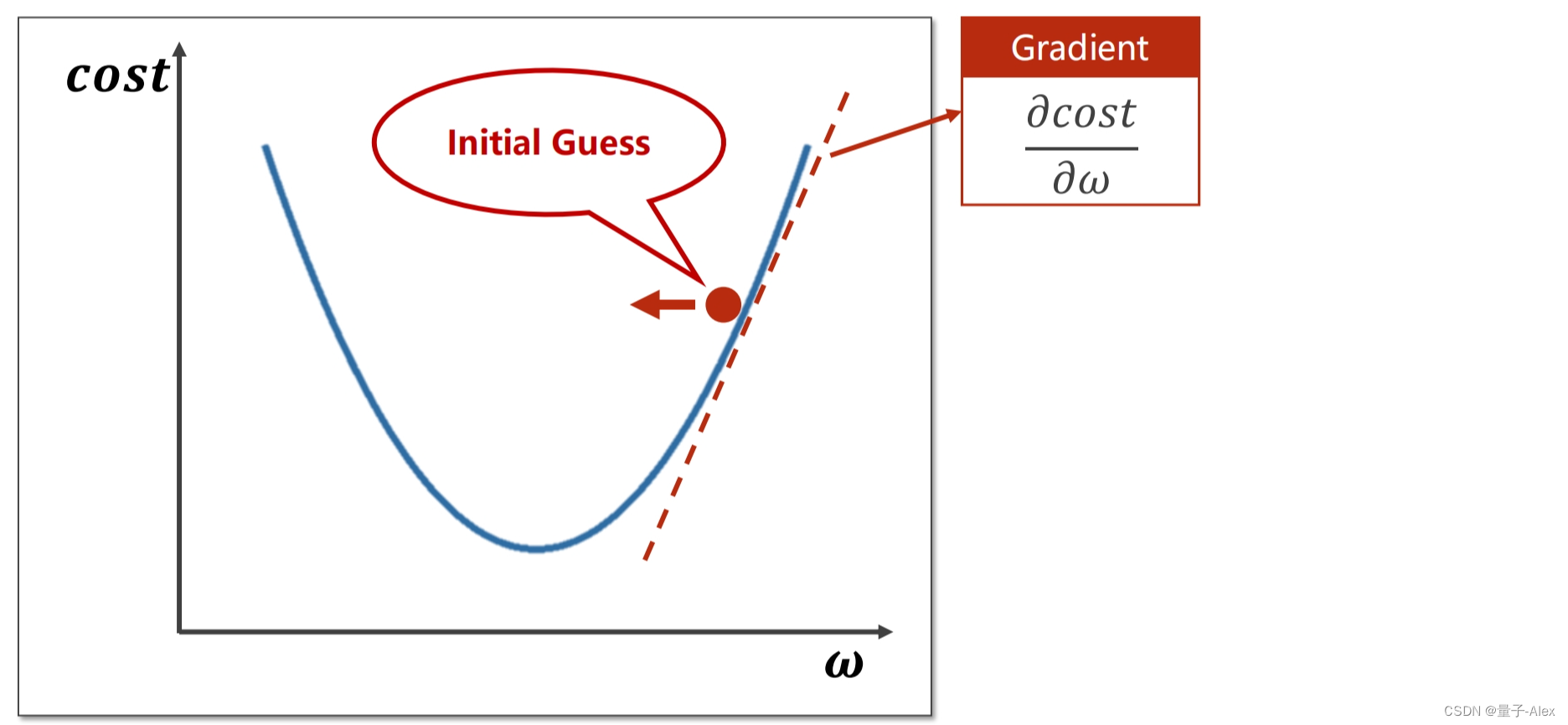

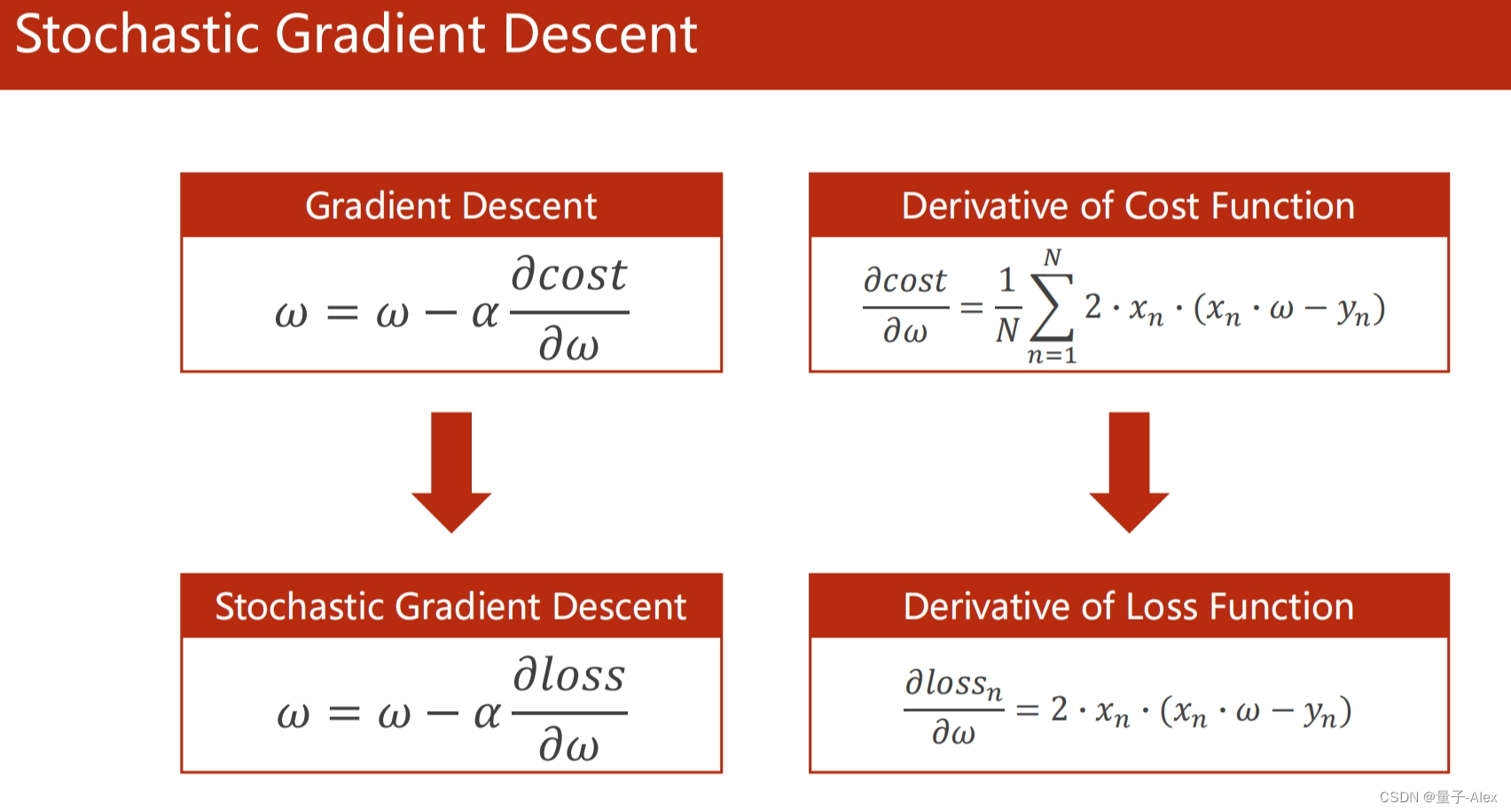
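The stochastic gradient descent loop above visits the three samples in the same order every epoch. In practice the sample order is often shuffled each epoch. The sketch below shows that variant; the shuffling is an addition made here, not something the course code does, and the name `train_sgd` and the parameters `w0`, `lr`, `epochs`, and `seed` are hypothetical.

```python
import random


def train_sgd(xs, ys, w0=1.0, lr=0.01, epochs=100, seed=0):
    """Fit y = x * w with per-sample updates and a shuffled sample order.

    Hypothetical helper (not from the course code).
    """
    w = w0
    rng = random.Random(seed)  # seeded so that runs are reproducible
    samples = list(zip(xs, ys))
    for _ in range(epochs):
        rng.shuffle(samples)  # assumption: reshuffle at the start of each epoch
        for x, y in samples:
            # Gradient of the single-sample loss (x * w - y) ** 2 w.r.t. w.
            grad = 2 * x * (x * w - y)
            w -= lr * grad
    return w


w_sgd = train_sgd([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
print("w after SGD:", w_sgd)
```

On this noise-free dataset every sample pulls `w` toward 2, so the visiting order has little effect on the result; shuffling matters more on larger, noisier datasets.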

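These notes cover the gradient descent algorithm and its implementation in Python, including standard (batch) gradient descent and stochastic gradient descent, using linear regression as the example.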
