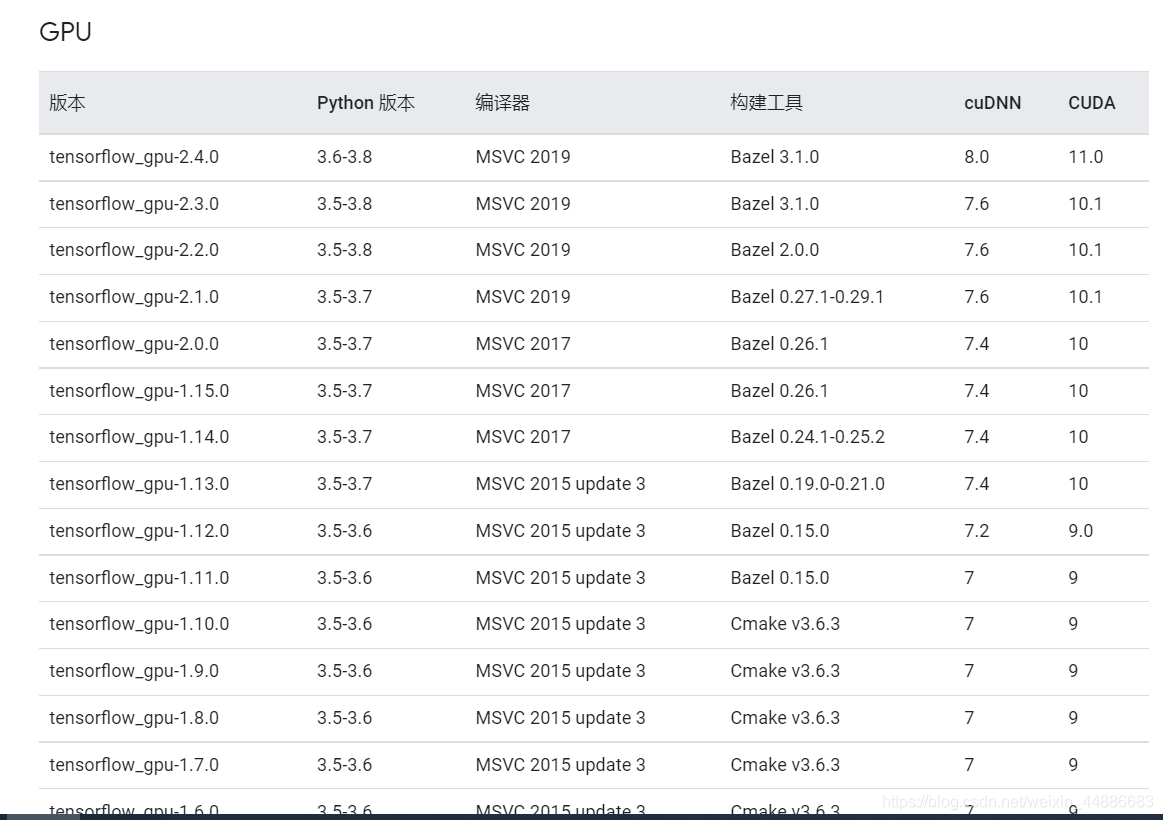

https://tensorflow.google.cn/install/source_windows

The table at the URL above is kept up to date.

The table above does not cover CUDA 11.1.

CUDA 11.1 works with tensorflow-gpu 2.5.0 and keras 2.4.3.
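After installing the pinned packages (for example with `pip install tensorflow-gpu==2.5.0 keras==2.4.3`), it can help to confirm that the environment really contains those versions. The following is a minimal sketch using only the Python standard library; the helper names `installed_version` and `check_pins` are hypothetical and introduced here for illustration, and the script only compares pip package versions, it does not detect the CUDA toolkit itself.

```python
from importlib import metadata

# Pins taken from the text above: CUDA 11.1 with tensorflow-gpu 2.5.0 and keras 2.4.3.
PINS = {"tensorflow-gpu": "2.5.0", "keras": "2.4.3"}


def installed_version(package):
    """Return the installed version string of a pip package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_pins(pins):
    """Map each package name to a tuple (expected, installed, matches)."""
    report = {}
    for name, expected in pins.items():
        found = installed_version(name)
        report[name] = (expected, found, found == expected)
    return report


if __name__ == "__main__":
    # Print one line per pinned package so mismatches are easy to spot.
    for name, (expected, found, ok) in check_pins(PINS).items():
        status = "OK" if ok else "MISMATCH"
        print(f"{name}: expected {expected}, installed {found} [{status}]")
```

A package that is not installed is reported with `None` as its installed version, so a missing install and a wrong version both show up as a mismatch.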

CUDA and tensorflow-gpu version compatibility (updated 2021.08, CUDA 11.1 solution)


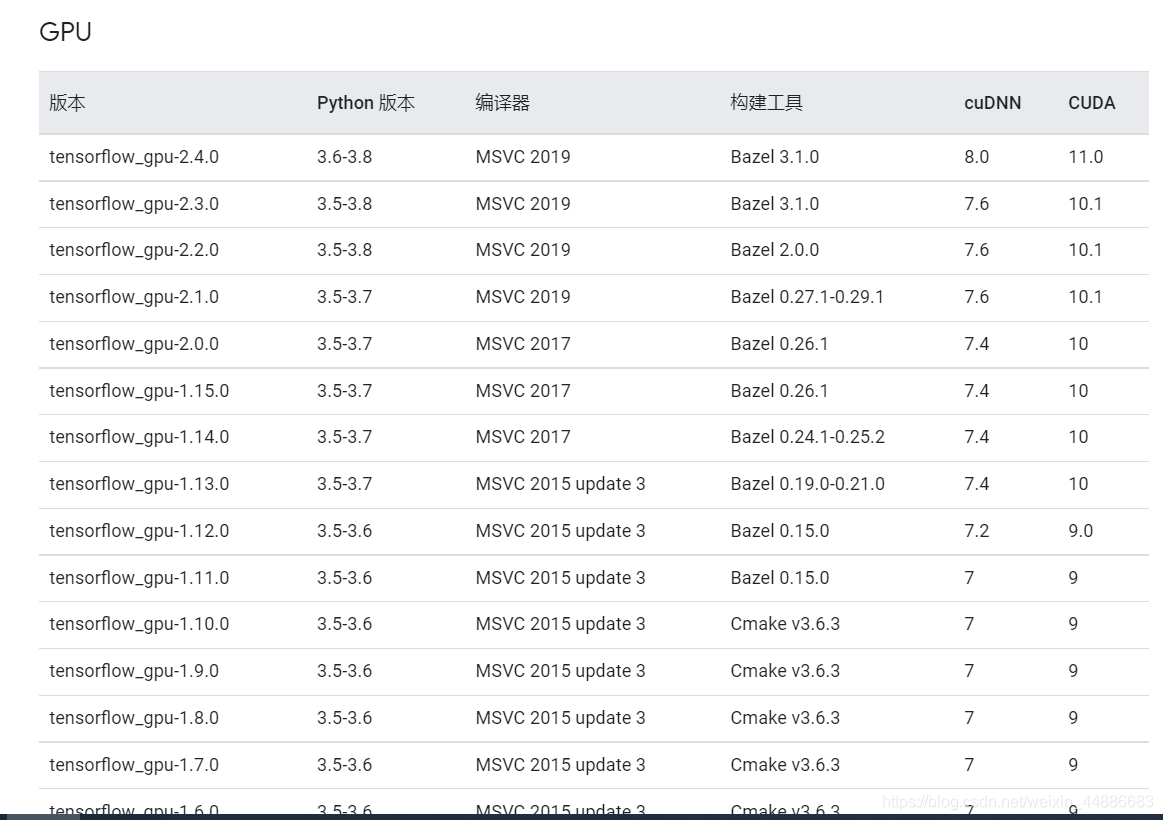

https://tensorflow.google.cn/install/source_windows

在上面这个网址会更新

上表没有涵盖cuda11.1

cuda11.1 适配 tensorflow-gpu 2.5.0 keras 2.4.3
