int adreno_ringbuffer_init(struct adreno_device *adreno_dev)
{
	struct kgsl_device *device = KGSL_DEVICE(adreno_dev);
	int i;
	int status = -ENOMEM;

	/* Every target except A3xx gets a global scratch page */
	if (!adreno_is_a3xx(adreno_dev)) {
		unsigned int priv =
			KGSL_MEMDESC_RANDOM | KGSL_MEMDESC_PRIVILEGED;

		/* Allocate only if no valid scratch buffer exists yet */
		if (IS_ERR_OR_NULL(device->scratch)) {
			device->scratch = kgsl_allocate_global(device,
				PAGE_SIZE, 0, 0, priv, "scratch");
			if (IS_ERR(device->scratch))
				return PTR_ERR(device->scratch);
		}
	}

	/* Without preemption only a single ringbuffer is used */
	if (ADRENO_FEATURE(adreno_dev, ADRENO_PREEMPTION))
		adreno_dev->num_ringbuffers =
			ARRAY_SIZE(adreno_dev->ringbuffers);
	else
		adreno_dev->num_ringbuffers = 1;

	/* Set up each ringbuffer; close all of them on the first failure */
	for (i = 0; i < adreno_dev->num_ringbuffers; i++) {
		status = _adreno_ringbuffer_init(adreno_dev, i);
		if (status) {
			adreno_ringbuffer_close(adreno_dev);
			return status;
		}
	}

	/* Ringbuffer 0 is the current ringbuffer to begin with */
	adreno_dev->cur_rb = &(adreno_dev->ringbuffers[0]);

	if (ADRENO_FEATURE(adreno_dev, ADRENO_PREEMPTION)) {
		const struct adreno_gpudev *gpudev = ADRENO_GPU_DEVICE(adreno_dev);
		struct adreno_preemption *preempt = &adreno_dev->preempt;
		int ret;

		timer_setup(&preempt->timer, adreno_preemption_timer, 0);

		ret = gpudev->preemption_init(adreno_dev);

		/* WARN fires only when ret is non-zero; init still returns 0 */
		WARN(ret, "adreno GPU preemption is disabled\n");
	}

	return 0;
}
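The control flow of the loop above (choose the ringbuffer count, set each one up, tear everything down on the first error) can be tried outside the kernel. The following is a minimal userspace sketch, not the driver's code: every `demo_*` name, `DEMO_NUM_RB`, and the `fail_at` field are hypothetical and exist only for illustration.

```c
#include <assert.h>
#include <errno.h>

#define DEMO_NUM_RB 4	/* hypothetical stand-in for ARRAY_SIZE(ringbuffers) */

struct demo_dev {
	int num_rb;			/* how many ringbuffers are in use */
	int ready[DEMO_NUM_RB];		/* 1 once a ringbuffer is set up */
	int fail_at;			/* index whose setup fails, or -1 */
};

/* Stand-in for the per-ringbuffer init; fails for one chosen index. */
static int demo_rb_setup(struct demo_dev *dev, int id)
{
	assert(id >= 0 && id < DEMO_NUM_RB);
	if (id == dev->fail_at)
		return -ENOMEM;
	dev->ready[id] = 1;
	return 0;
}

/* Stand-in for the close routine: undo the setup of every ringbuffer. */
static void demo_close(struct demo_dev *dev)
{
	for (int i = 0; i < DEMO_NUM_RB; i++)
		dev->ready[i] = 0;
}

/* All ringbuffers with preemption, otherwise one; unwind on first error. */
static int demo_init(struct demo_dev *dev, int preemption)
{
	dev->num_rb = preemption ? DEMO_NUM_RB : 1;

	for (int i = 0; i < dev->num_rb; i++) {
		int status = demo_rb_setup(dev, i);

		if (status) {
			demo_close(dev);
			return status;
		}
	}
	return 0;
}
```

With `fail_at = 2` and preemption enabled, `demo_init()` returns `-ENOMEM` and leaves no ringbuffer marked ready, because the already initialized entries 0 and 1 are closed again before returning.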

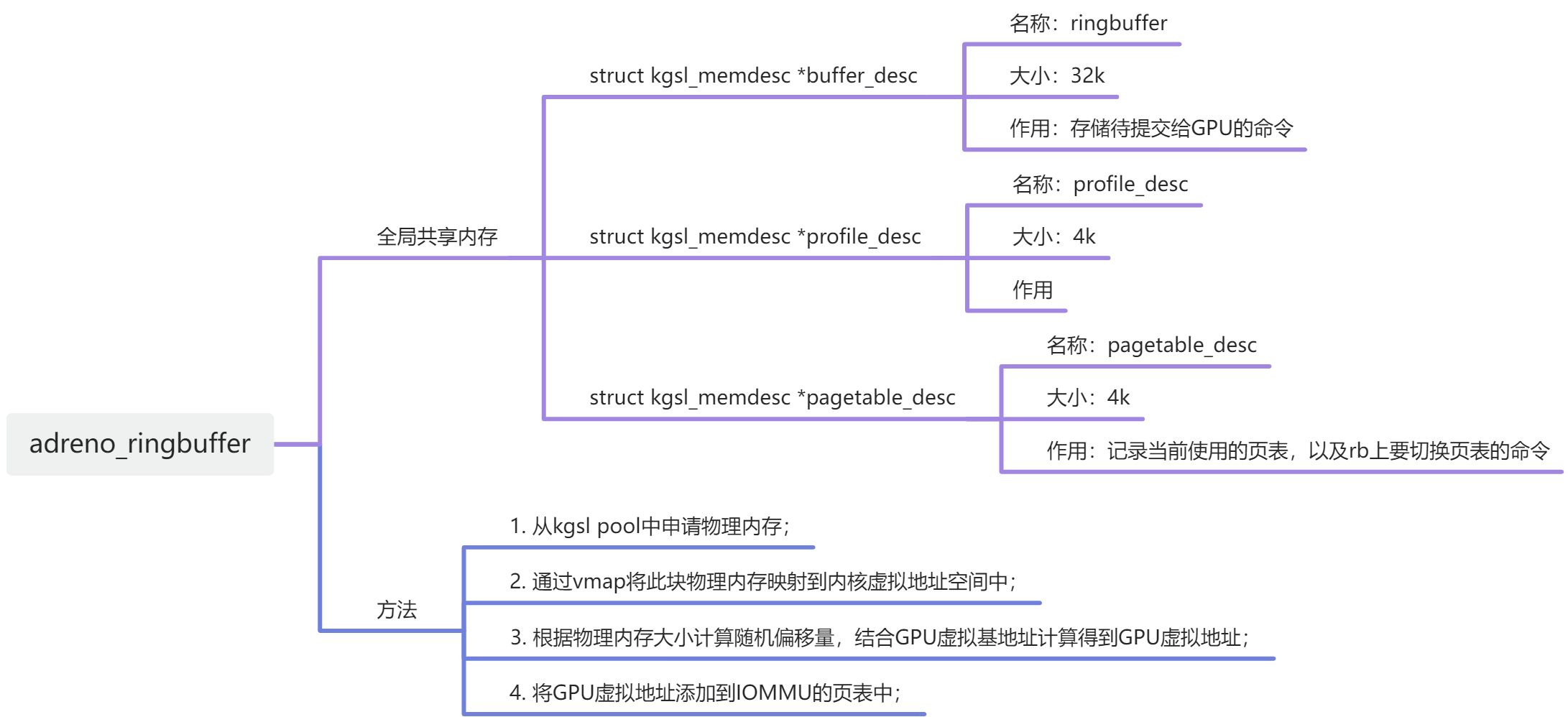

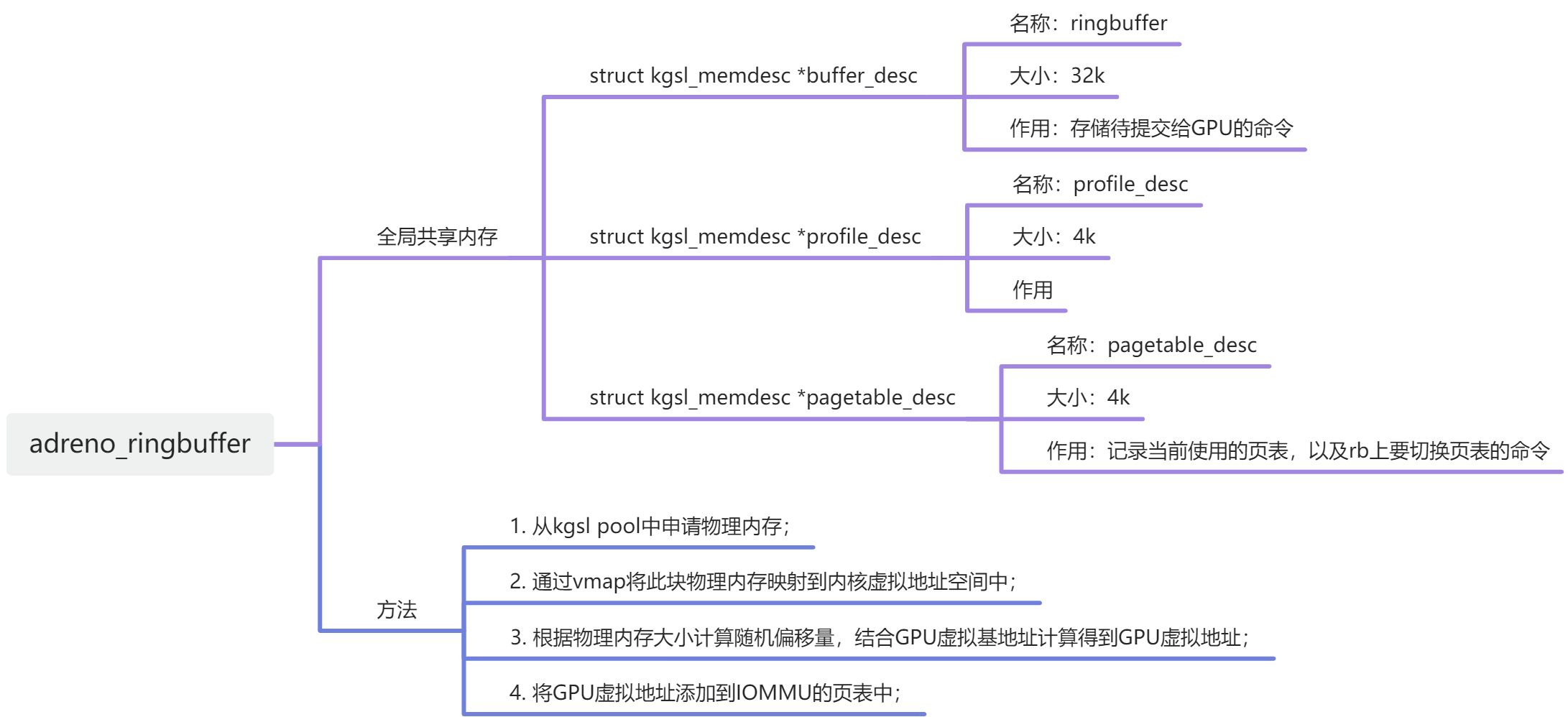

1. _adreno_ringbuffer_init

static int _adreno_ringbuffer_init(struct adreno_device *adreno_dev,
		int id)
{
	struct kgsl_device *device = KGSL_DEVICE(adreno_dev);
	struct adreno_ringbuffer *rb = &adreno_dev->ringbuffers[id];
	unsigned int priv = 0;

	/* Allocate the pagetable descriptor only if none exists yet */
	if (IS_ERR_OR_NULL(rb->pagetable_desc)) {
		rb->pagetable_desc = kgsl_allocate_global(device, PAGE_SIZE,
			SZ_16K, 0, KGSL_MEMDESC_PRIVILEGED, "pagetable_desc");
		if (IS_ERR(rb->pagetable_desc))
			return PTR_ERR(rb->pagetable_desc);
	}

	/* The result is not checked, so a failure here does not abort init */
	if (IS_ERR_OR_NULL(rb->profile_desc))
		rb->profile_desc = kgsl_allocate_global(device, PAGE_SIZE,
			0, KGSL_MEMFLAGS_GPUREADONLY, 0, "profile_desc");

	/* With APRIV the ringbuffer memory itself is marked privileged */
	if (ADRENO_FEATURE(adreno_dev, ADRENO_APRIV))
		priv |= KGSL_MEMDESC_PRIVILEGED;

	if (IS_ERR_OR_NULL(rb->buffer_desc)) {
		rb->buffer_desc = kgsl_allocate_global(device, KGSL_RB_SIZE,
			SZ_4K, KGSL_MEMFLAGS_GPUREADONLY, priv, "ringbuffer");
		if (IS_ERR(rb->buffer_desc))
			return PTR_ERR(rb->buffer_desc);
	}

	/* Event group already registered: an earlier call did the rest */
	if (!list_empty(&rb->events.group))
		return 0;

	rb->id = id;
	kgsl_add_event_group(device, &rb->events, NULL, _rb_readtimestamp, rb,
		"rb_events-%d", id);

	rb->timestamp = 0;
	init_waitqueue_head(&rb->ts_expire_waitq);

	spin_lock_init(&rb->preempt_lock);

	return 0;
}
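Each allocation in this function follows the same shape: skip it when a valid pointer already exists, otherwise allocate and turn an error pointer into an errno with `PTR_ERR()`. The sketch below imitates that in userspace. The `ERR_PTR` helpers here are simplified re-implementations written for this example (an assumption, not the kernel's own definitions from `<linux/err.h>`), and `demo_alloc`, `demo_rb`, and `demo_rb_init` are hypothetical names.

```c
#include <assert.h>
#include <errno.h>
#include <stdint.h>
#include <stdlib.h>

/* Simplified userspace stand-ins for the kernel's error-pointer helpers. */
#define MAX_ERRNO 4095

static inline void *ERR_PTR(long error) { return (void *)error; }
static inline long PTR_ERR(const void *ptr) { return (long)ptr; }

/* Error codes live in the top MAX_ERRNO values of the address space. */
static inline int IS_ERR(const void *ptr)
{
	return (uintptr_t)ptr >= (uintptr_t)-MAX_ERRNO;
}

static inline int IS_ERR_OR_NULL(const void *ptr)
{
	return !ptr || IS_ERR(ptr);
}

/* Hypothetical allocator: returns a buffer or an encoded errno, never NULL. */
static int demo_alloc_calls;

static void *demo_alloc(size_t size, int fail)
{
	void *p;

	demo_alloc_calls++;
	if (fail)
		return ERR_PTR(-ENOMEM);
	p = calloc(1, size);
	return p ? p : ERR_PTR(-ENOMEM);
}

struct demo_rb {
	void *buffer_desc;
};

/* Allocate on first use; a later call sees a valid pointer and skips it. */
static int demo_rb_init(struct demo_rb *rb, int fail)
{
	assert(rb != NULL);
	if (IS_ERR_OR_NULL(rb->buffer_desc)) {
		rb->buffer_desc = demo_alloc(4096, fail);
		if (IS_ERR(rb->buffer_desc))
			return (int)PTR_ERR(rb->buffer_desc);
	}
	return 0;
}
```

Checking with `IS_ERR_OR_NULL()` rather than a plain NULL test matters here: after a failed attempt the field holds an error pointer, not NULL, so a retry of `demo_rb_init()` still reallocates instead of treating the stale error value as a usable buffer.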
