P13 Loss Functions and Backpropagation

Preface

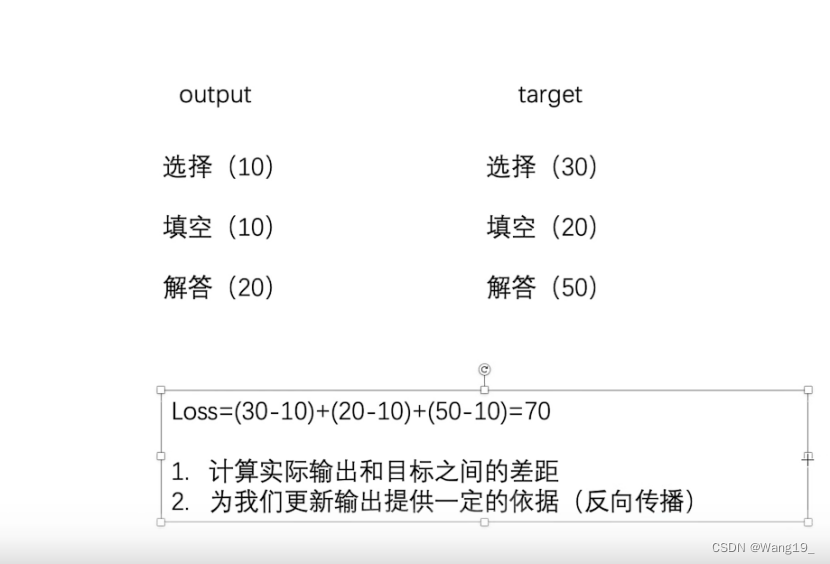

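Loss function

- Measures the gap between the actual output and the target

- Provides the basis for updating the model during training (backpropagation)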

I. L1Loss

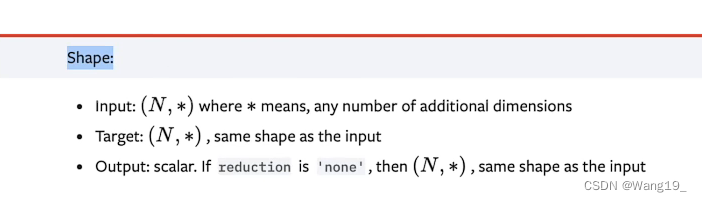

1. Parameter format

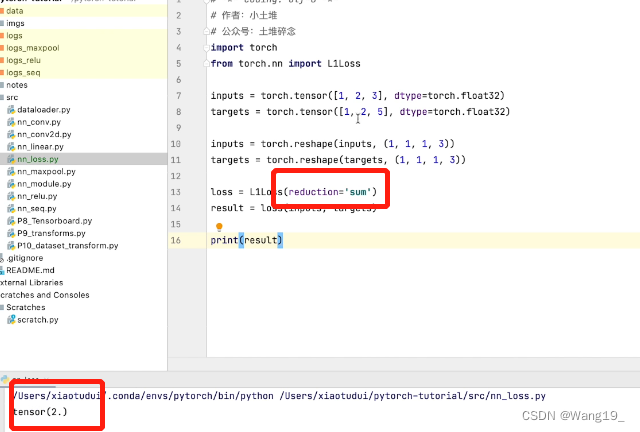

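2. Example and fixing the error

An error is raised when the tensors hold integer data. Solution: create them with a float dtype: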

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)

targets = torch.tensor([1, 2, 5], dtype=torch.float32)

Note the reshaping of the input data:

inputs = torch.reshape(inputs, (1, 1, 1, 3))  # batch_size, channel, 1 row, 3 columns

targets = torch.reshape(targets, (1, 1, 1, 3))

Output:

Setting reduction:

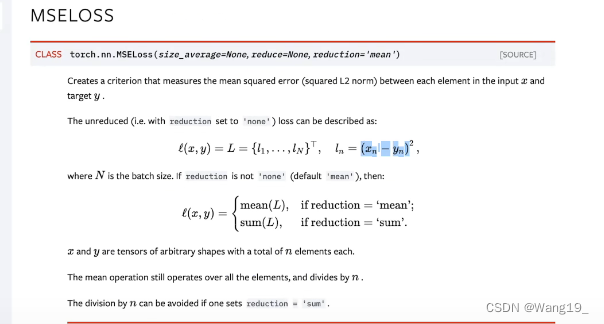

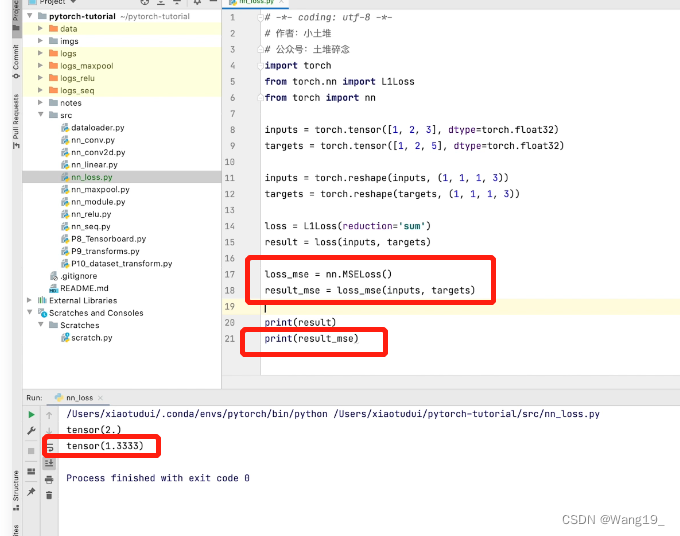
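The effect of `reduction` can be checked with a minimal sketch that reuses the tensors from the example above. The names `loss_mean` and `loss_sum` are chosen here only for illustration.

```python
import torch
from torch.nn import L1Loss

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)
targets = torch.tensor([1, 2, 5], dtype=torch.float32)

# Element-wise absolute differences: |1-1|, |2-2|, |3-5| = 0, 0, 2
loss_mean = L1Loss(reduction="mean")(inputs, targets)  # average: 2 / 3
loss_sum = L1Loss(reduction="sum")(inputs, targets)    # total: 2

print(loss_mean)  # tensor(0.6667)
print(loss_sum)   # tensor(2.)
```

`reduction="mean"` is the default, so `L1Loss()` with no arguments gives the averaged value.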

II. MSELoss

1. Formula
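For reference, with the default `reduction='mean'`, `nn.MSELoss` averages the squared element-wise differences. The symbols x_i, y_i and n (input, target, number of elements) are notation chosen here; the worked numbers use the same tensors as the L1Loss example.

```latex
\ell(x, y) = \frac{1}{n} \sum_{i=1}^{n} (x_i - y_i)^2
\qquad\text{e.g.}\qquad
\frac{(1-1)^2 + (2-2)^2 + (3-5)^2}{3} = \frac{4}{3} \approx 1.3333
```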

2. Example

The code is as follows:

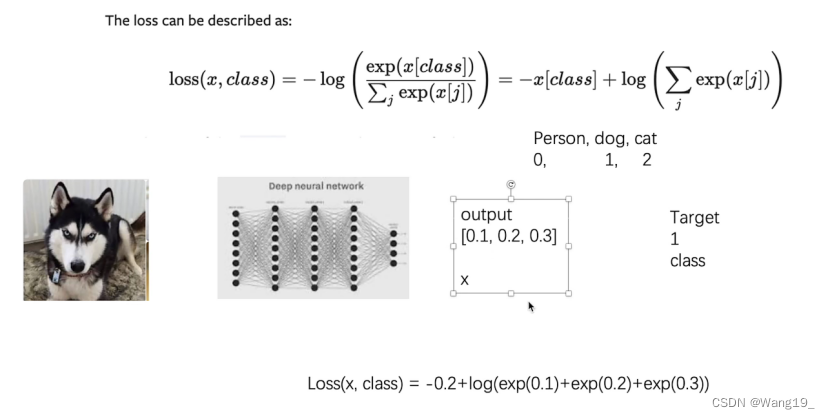

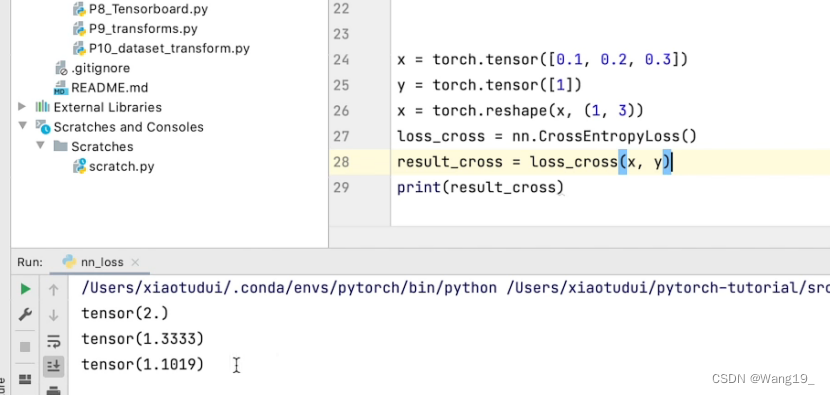
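As a quick check, the value returned by `nn.MSELoss` can be compared with the same quantity computed by hand. This is a small sketch; `manual_mse` is a name introduced here for illustration.

```python
import torch
from torch import nn

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)
targets = torch.tensor([1, 2, 5], dtype=torch.float32)

result_mse = nn.MSELoss()(inputs, targets)
# Same quantity by hand: mean of squared differences, (0 + 0 + 4) / 3
manual_mse = ((inputs - targets) ** 2).mean()

print(result_mse)  # tensor(1.3333)
print(manual_mse)  # tensor(1.3333)
```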

III. Cross-entropy: CrossEntropyLoss

1. Formula
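For reference, for a single sample with raw scores x and correct class index c (and no class weights), the loss computed by `nn.CrossEntropyLoss` can be written as follows. The symbols x, c and j are notation chosen here.

```latex
\mathrm{loss}(x, c) = -\log\frac{\exp(x_c)}{\sum_{j}\exp(x_j)} = -x_c + \log\sum_{j}\exp(x_j)
```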

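2. Key code

test1 = test()
loss = nn.CrossEntropyLoss()

for data in dataloader:
    imgs, t = data
    # output holds one raw score (logit) per class for the image, not a probability
    output = test1(imgs)
    print(output)
    print(t)
    # Gap between the actual output and the target
    result_loss = loss(output, t)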

Output:

# tensor([[-0.0928, -0.0330, 0.0529, 0.1399, -0.1088, 0.0506, -0.0798, 0.0198,
#          0.1653, -0.0215]], grad_fn=<AddmmBackward>)
# tensor([7])
# tensor(2.2961, grad_fn=<NllLossBackward>)
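To see what `CrossEntropyLoss` computes, the sketch below compares it with a hand-written version on the small three-class tensor that also appears in the complete code later in this post. The name `manual` is introduced here for illustration.

```python
import torch
from torch import nn

x = torch.tensor([[0.1, 0.2, 0.3]])  # shape (N=1, C=3): raw scores, not probabilities
y = torch.tensor([1])                # shape (N=1,): index of the correct class

result_cross = nn.CrossEntropyLoss()(x, y)

# Same value by hand: -x[class] + log(sum_j exp(x[j]))
manual = -x[0, 1] + torch.log(torch.exp(x[0]).sum())

print(result_cross)  # tensor(1.1019)
print(manual)        # tensor(1.1019)
```

With ten classes and scores that are all close to zero, the loss is close to ln(10) ≈ 2.30, which is consistent with the 2.2961 printed above for an untrained network.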

IV. Combining a loss function with the neural network written earlier

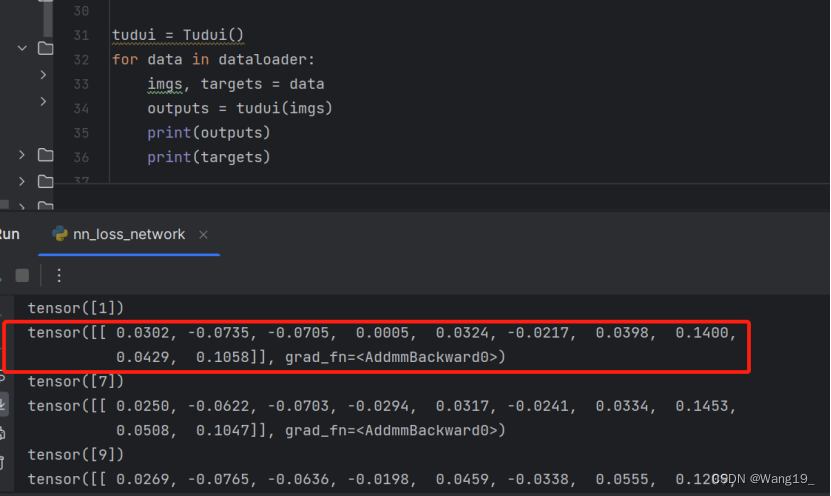

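1. Inspecting outputs and targets

For each input image the network produces 10 output values, one per class. These are raw scores (logits) rather than probabilities; CrossEntropyLoss applies softmax internally.

2. Using cross-entropy for a classification problem

This gives the error between the network's output and the ground-truth target.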

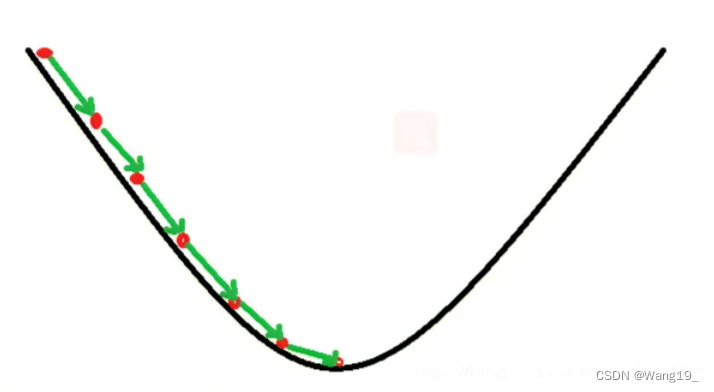
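If probabilities are wanted for inspection, the scores can be passed through softmax. The sketch below reuses the scores and target from the printed output in section III; the names `probs` and `predicted` are introduced here for illustration.

```python
import torch
from torch import nn

# Scores copied from the printed output in section III (one image, 10 classes)
outputs = torch.tensor([[-0.0928, -0.0330, 0.0529, 0.1399, -0.1088,
                         0.0506, -0.0798, 0.0198, 0.1653, -0.0215]])
targets = torch.tensor([7])

probs = torch.softmax(outputs, dim=1)  # each row now sums to 1
predicted = probs.argmax(dim=1)        # index of the largest score

# CrossEntropyLoss takes the raw scores directly, not the softmax output
result_loss = nn.CrossEntropyLoss()(outputs, targets)

print(probs.sum(dim=1))
print(predicted)    # tensor([8])
print(result_loss)  # should be close to the 2.2961 shown in section III
```

Here the untrained network's highest score is for class 8 while the target is class 7, so the prediction is wrong and the loss is large.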

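V. Gradient descent

1. Overview

Gradient descent is widely used in machine learning, in linear regression and logistic regression alike. Its main purpose is to find the minimum of the objective function through iteration, or to converge toward it. Calling backward() on the loss computes the gradient of the loss with respect to every parameter, and those gradients are what the update step uses.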

loss = nn.CrossEntropyLoss()
tudui = Tudui()

for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    result_loss = loss(outputs, targets)
    result_loss.backward()
    print("ok")
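The loop above only computes gradients; it does not update anything yet. As a minimal sketch of the idea behind gradient descent, the example below minimizes a hypothetical one-variable objective f(w) = (w - 3)^2 by hand. The objective, the learning rate `lr` and the step count are assumptions made for illustration and are not part of the original network.

```python
import torch

# Hypothetical objective for illustration: f(w) = (w - 3)^2, minimized at w = 3
w = torch.tensor(0.0, requires_grad=True)
lr = 0.1  # learning rate (assumed value)

for step in range(100):
    loss = (w - 3) ** 2
    loss.backward()        # fills w.grad with df/dw = 2 * (w - 3)
    with torch.no_grad():
        w -= lr * w.grad   # gradient descent update: step against the gradient
    w.grad.zero_()         # clear the gradient before the next iteration

print(w.item())  # very close to 3.0
```

In a real training loop this manual update is normally delegated to an optimizer from `torch.optim`.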

VI. Complete code

#!/usr/bin/env python3

# -*- coding:utf-8 -*-

"""

author :24nemo

date :2021-07-07

"""

import torch

from torch import nn

from torch.nn import L1Loss

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)

targets = torch.tensor([1, 2, 5], dtype=torch.float32)

inputs = torch.reshape(inputs, (1, 1, 1, 3))  # batch_size, channel, 1 row, 3 columns

targets = torch.reshape(targets, (1, 1, 1, 3))

loss = L1Loss(reduction='sum')

result = loss(inputs, targets)

loss_mse = nn.MSELoss()

result_mse = loss_mse(inputs, targets)

print(result)

print(result_mse)

x = torch.tensor([0.1, 0.2, 0.3])

y = torch.tensor([1])

x = torch.reshape(x, (1, 3))

loss_cross = nn.CrossEntropyLoss()

result_cross = loss_cross(x, y)

print(result_cross)

'''

When using L1Loss, pay close attention to the shapes and check that the input and the target sizes match:

Input: (N, *), where * means any number of additional dimensions

Target: (N, *), same shape as the input

'''

import torch

from torch import nn

from torch.nn import L1Loss

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)

targets = torch.tensor([1, 2, 5], dtype=torch.float32)

inputs = torch.reshape(inputs, (1, 1, 1, 3))  # batch_size, channel, 1 row, 3 columns

targets = torch.reshape(targets, (1, 1, 1, 3))

loss = L1Loss()

result = loss(inputs, targets)

print("result:", result)

'''

MSELoss

'''

loss_mse = nn.MSELoss()

result_mse = loss_mse(inputs, targets)

print("result_mse:", result_mse)

'''

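Cross-entropy, used for classification problems

The video's uploader explains this part in considerable detail

'''

Combined with the neural network: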

import torchvision
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10("../dataset", train=False, transform=torchvision.transforms.ToTensor(),
                                       download=True)
dataloader = DataLoader(dataset, batch_size=1)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            Linear(64, 10)
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()
tudui = Tudui()

for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    result_loss = loss(outputs, targets)
    # Backpropagation. Call backward() on result_loss (the value returned by the loss
    # function), not on loss (the loss function object itself).
    result_loss.backward()
    print("ok")
