1) IMDB movie review dataset

The IMDB movie review dataset is also one of the datasets built into Keras.

import keras

from keras import layers

import matplotlib.pyplot as plt

data = keras.datasets.imdb

max_word = 10000  # keep only the 10,000 most frequent words to limit the network size

# 1) Load the dataset

(x_train,y_train),(x_test,y_test) = data.load_data(num_words=max_word)

# Inspect the data

x_train.shape, y_train.shape

x_train[0]

word_index = data.get_word_index()

word_index

index_word = dict((value, key) for key,value in word_index.items())

index_word

[index_word.get(index-3, '?') for index in x_train[0]]

[len(seq) for seq in x_train]

max([max(seq) for seq in x_train])

# Vectorize the text

import numpy as np

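def k_hot(seqs, dim=10000):
    # One row per review, one column per vocabulary index
    result = np.zeros((len(seqs), dim))
    for i, seq in enumerate(seqs):
        # Set every index that occurs in the review to 1
        result[i, seq] = 1
    return result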

x_train = k_hot(x_train)

x_train.shape

x_test = k_hot(x_test)

y_train

model = keras.Sequential()

model.add(layers.Dense(32, input_dim=10000, activation='relu'))

model.add(layers.Dense(32, activation='relu'))

model.add(layers.Dense(1, activation='sigmoid'))

model.summary()

model.compile(optimizer='adam',
              loss='binary_crossentropy',
              metrics=['acc'])

history = model.fit(x_train, y_train, epochs=15, batch_size=256,
                    validation_data=(x_test, y_test))

plt.plot(history.epoch, history.history.get('loss'), c='r', label='loss')

plt.plot(history.epoch, history.history.get('val_loss'), c='b', label='val_loss')

plt.legend()

plt.figure()  # start a new figure so the accuracy curves are not drawn over the loss curves

plt.plot(history.epoch, history.history.get('acc'), c='r', label='acc')

plt.plot(history.epoch, history.history.get('val_acc'), c='b', label='val_acc')

plt.legend()

2) Code walkthrough:

1. Inspect the data

x_train.shape, y_train.shape

x_train[0]

This is the first review, and it turns out to be a sequence of integers. How do we turn those integers back into a readable review?

word_index = data.get_word_index()

word_index

index_word = dict((value, key) for key,value in word_index.items())

This inverts word_index (word -> index) into index_word (index -> word).

[index_word.get(index-3, '?') for index in x_train[0]]

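This prints the words of the first review by looking each index up in the dictionary. The index - 3 offset is needed because load_data shifts every word index by 3 by default (index_from=3): 0, 1 and 2 are reserved for padding, the start-of-sequence marker and out-of-vocabulary words.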
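The lookup above can be wrapped in a small helper. The sketch below is an illustration only: `decode_review` and `toy_word_index` are hypothetical names, not part of Keras, and it assumes the default `load_data` settings (`index_from=3`, with 0, 1 and 2 reserved). A toy vocabulary stands in for `data.get_word_index()` so the example runs without downloading anything.

```python
def decode_review(seq, word_index, index_from=3, unknown="?"):
    """Turn a list of integer indices back into a space-separated string."""
    # Invert the word -> index mapping into index -> word.
    index_word = {index: word for word, index in word_index.items()}
    # Stored indices are shifted by index_from, so undo the shift before the lookup.
    return " ".join(index_word.get(i - index_from, unknown) for i in seq)


# Toy vocabulary standing in for data.get_word_index() (hypothetical values).
toy_word_index = {"the": 1, "movie": 2, "great": 3}

# 1 is the start marker and 2 marks an out-of-vocabulary word; both decode to "?".
print(decode_review([1, 4, 5, 2, 6], toy_word_index))  # prints "? the movie ? great"
```

With the real data, the equivalent call would be `decode_review(x_train[0], data.get_word_index())`.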

[len(seq) for seq in x_train]

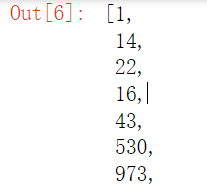

max([max(seq) for seq in x_train])


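Printing the length of every review shows that the reviews vary in length.

The maximum index stays below 10000, which is consistent with the max_word = 10000 limit set at the start.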
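Because the reviews differ in length, they cannot be stacked into a single array as they are; the `k_hot` function in the listing maps each review to a fixed-length 0/1 vector instead. A minimal sketch on toy data, where the sequences and `dim=8` are made up for illustration:

```python
import numpy as np


def k_hot(seqs, dim=10000):
    """Encode each index sequence as a 0/1 vector of length dim."""
    result = np.zeros((len(seqs), dim))
    for i, seq in enumerate(seqs):
        # Integer-array indexing sets every position listed in seq to 1;
        # a repeated index still produces a single 1.
        result[i, seq] = 1
    return result


# Toy sequences of different lengths (made-up values, not IMDB data).
toy_seqs = [[1, 3, 3], [0, 2, 5, 7]]
encoded = k_hot(toy_seqs, dim=8)
print(encoded.shape)  # (2, 8)
print(encoded[0])
```

Both rows now have the same length regardless of how long the original sequence was. This encoding discards word order and repetition counts, which is acceptable for the simple dense network used here.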
